More on Personal Growth

Suzie Glassman

3 years ago

How I Stay Fit Despite Eating Fast Food and Drinking Alcohol

Here's me. Perfectionism is unnecessary.

This post isn't for people who gag at the prospect of eating french fries. I've been ridiculed for stating you can lose weight eating carbs and six-pack abs aren't good.

My family eats frozen processed meals and fast food most weeks, sometimes more than once. Clean eaters may think I'm unqualified to give fitness advice. I get it.

Hear me out, though. I'm a 44-year-old raising two busy kids with a husband who travels weekly. Tutoring, dance, and guitar classes fill our weeknights. I'm also juggling my job and freelancing.

I'm as worried and tired as my clients. I wish I ate only kale smoothies and salads. I can’t. Despite my mistakes, I'm fit. I won't promise you something just because it worked for me. But here’s a look at how I manage.

What I largely get right about eating

I have a flexible diet and track my daily intake. I count protein, fat, and carbs. The only times I don't track are vacations and special occasions.

My protein goal is 1 gram per pound of body weight. I eat a lot of chicken breasts, eggs, turkey, and lean ground beef. I also occasionally drink protein shakes.

I eat 220–240 grams of carbs daily. My carb count depends on training volume and goals. Right now I'm trying to lose weight slowly. If I want to lose weight faster, I cut carbs to 150–180 grams.

My carbs include white rice, Dave's Killer Bread, fruit, pasta, and veggies. I don't eat enough vegetables, so I take Athletic Greens. I also drink V8.

Keeping fat above 50 grams helps me regulate my hormones. Recently, I've been eating 70–80 grams. I cook with olive oil and eat dark chocolate daily. Eggs, butter, milk, and cheese contribute the rest.
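As a rough illustration of how macro targets like these translate into calories, here is a minimal Python sketch. It uses the standard 4/4/9 kcal-per-gram factors for protein, carbs, and fat. The 140 lb body weight is a hypothetical placeholder, not the author's, and the function names are invented for this example.

```python
# Standard Atwater factors: kilocalories per gram of each macronutrient.
KCAL_PER_GRAM = {"protein": 4, "carbs": 4, "fat": 9}


def daily_calories(protein_g: float, carbs_g: float, fat_g: float) -> float:
    """Return total kilocalories for a day's macronutrient grams."""
    return (
        protein_g * KCAL_PER_GRAM["protein"]
        + carbs_g * KCAL_PER_GRAM["carbs"]
        + fat_g * KCAL_PER_GRAM["fat"]
    )


def protein_goal(body_weight_lb: float, grams_per_lb: float = 1.0) -> float:
    """Protein target in grams, using the 1 g per lb rule from the text."""
    return body_weight_lb * grams_per_lb


if __name__ == "__main__":
    weight_lb = 140  # hypothetical body weight, not taken from the article
    protein = protein_goal(weight_lb)
    low = daily_calories(protein, carbs_g=220, fat_g=70)
    high = daily_calories(protein, carbs_g=240, fat_g=80)
    print(f"Protein goal: {protein:.0f} g")
    print(f"Estimated intake: {low:.0f}-{high:.0f} kcal per day")
```

Swapping in your own weight and carb range gives a quick estimate of where a day of tracked macros lands.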

Those frozen meals? What can I say? Stouffer’s lasagna is sometimes needed. I order the healthiest fast food I can find (although I can never bring myself to order the salad). That's a chicken sandwich or a kid's hamburger. I rarely order fries. I eat slowly and savor each bite to feel full.

Potato chips and sugary cereals are in the pantry, but I'm not tempted. My kids eat them because I'd rather teach them moderation than total avoidance. If I eat them, I only eat one portion.

If you're not hungry and eating enough protein and fat, you won't want to eat everything in sight.

I drink once or twice a week, and I rarely overdo it.

Food tracking is tedious and frustrating for many. Taking breaks and using estimates when eating out help. Not perfect, but realistic.

I practice a prolonged fast to enhance metabolic flexibility

Metabolic flexibility is the ability to switch between fuel sources (fat and carbs) based on activity intensity and time since eating. At rest or during low to moderate exertion, your body burns fat. Your body burns carbs after eating and during intense exercise.

Our metabolic flexibility can be hampered by lack of exercise, overeating, and stress. Our bodies become lousy fat burners, making weight loss difficult.

Once a week, I fast from one dinner to the next (usually around 24 hours). Extended fasting teaches my body to burn fat. It also gives me one low-calorie day a week (I break the fast with a normal-sized dinner).

The fasting day helps me maintain my weight despite weekends, when I typically overeat and drink.

Ease into an extended fast. Delay breakfast by two hours. The next week, add two more hours, and so on. It takes practice to go that long without biting off your arm. I also suggest consulting your doctor.

I stay active.

I've always been active. As a child, I danced many nights a week. I was on the high school dance team, and I ran marathons in my 20s.

Often, I feel driven by an internal engine. Working from home makes it easy to exercise. If that’s not you, I get it. Everyone can benefit from raising their baseline.

First thing in the morning, after taking the kids to school, I walk two miles around the neighborhood. When I need to think, I switch off the podcasts.

I lift weights on Monday, Wednesday, and Friday. Sessions typically last 45 minutes. I run for 45–90 minutes on Tuesday and Thursday. I'm slow but reliable. On Saturdays and Sundays, I walk and add a short spin class if I'm not too tired.

I almost never forgo sleep.

I rarely stay up past 10 p.m., much to my night-owl husband's dismay. My 7–8-hour nights help me recover from workouts and handle stress. Without them, I'm grumpy.

I suspect sleep duration matters more than bedtime. Some people just can't fall asleep early. Your internal clock and genetics determine your sleep and wake hours.

Prioritize sleep.

Last thoughts

Fitness and diet advice is often useless. Some of the advice is inaccurate, dangerous, or difficult to follow if you have a life. I want to throw a shoe at my screen when I see headlines promising to speed up my metabolism or help me lose fat.

I studied exercise physiology for years. No shortcuts exist. No medications or cleanses reset metabolism. I play the hand I'm dealt. I realize that just because something works for me doesn't mean it will work for you.

If I wanted 15% body fat and ripped abs, I'd have to be stricter. I occasionally think I’d like to get there. But then I remember I’m happy with my life. I like fast food and beer. Pizza and margaritas are favorites (not every day).

You can get it mostly right and live a healthy life.

Jari Roomer

3 years ago

After 240 articles and 2.5M views on Medium, 9 Raw Writing Tips

Late in 2018, I published my first Medium article, but I didn't start writing seriously until 2019. Since then, I've written more than 240 articles, earned over $50,000 through Medium's Partner Program, and had over 2.5 million page views.

Write A Lot

Most people don't have the patience and persistence for this simple writing secret:

Write + Write + Write = possible success

Writing more improves your skills.

The more articles you publish, the more likely one will go viral.

If you only publish once a month, you'll get few views. If you publish 10 or 20 articles a month, your odds of success increase roughly 10- or 20-fold.
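The "more articles, more chances" arithmetic can be sketched in a few lines of Python. This assumes every article independently has the same small chance of taking off, which is a simplification. The 1% figure is made up for illustration and is not a Medium statistic, and `chance_of_a_hit` is a name invented for this sketch.

```python
def chance_of_a_hit(articles: int, p_hit: float) -> float:
    """Probability that at least one of `articles` takes off,
    assuming each one independently succeeds with probability `p_hit`."""
    return 1 - (1 - p_hit) ** articles


if __name__ == "__main__":
    p = 0.01  # hypothetical per-article chance, for illustration only
    baseline = chance_of_a_hit(1, p)
    for n in (1, 10, 20):
        odds = chance_of_a_hit(n, p)
        print(f"{n:>2} articles/month: {odds:.1%} ({odds / baseline:.1f}x baseline)")
```

When the per-article chance is small, the result comes out close to, though slightly under, a 10- or 20-fold increase.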

Look at Tim Denning, Ayodeji Awosika, Megan Holstein, and Zulie Rane. Medium is their jam. How are these authors alike? They're productive and consistent. They're prolific.

80% is publishable

Many writers battle perfectionism.

To succeed as a writer, you must publish often. You'll never publish if you aim for perfection.

Adopt the 80 percent-is-good-enough mindset to publish more. It sounds terrible, but it'll boost your writing success.

First, your work will never be perfect. You can always improve. Waiting for perfection before publishing will take a long time.

Second, readers are your true critics, not you. What you consider "not perfect" may be life-changing for the reader. Don't let perfectionism hinder the reader.

You don't want to publish mediocre articles. But when the article is 80% done, publish it. Don't spend hours editing. Release it. Get feedback. Only this will work.

Make Your Headline Irresistible

We all judge books by their covers, despite the saying. And headlines. Readers, including yourself, judge articles by their titles. We use it to decide if an article is worth reading.

Make your headlines irresistible. Want more article views? Then, whether you like it or not, write an attractive article title.

Many high-quality articles are collecting dust because of dull, vague headlines. The headline didn't make the reader click.

As a writer, you must do more than produce quality content. You must also make people click on your article. This is a writer's job. How to create irresistible headlines:

Curiosity makes readers click. Here's a tempting example...

Example: What Women Actually Look For in a Guy, According to a Huge Study by Luba Sigaud

Use Numbers: Numbered lists draw clicks. Which article would you click first: 'Some ways to improve your productivity' or '17 ways to improve your productivity'? I know which one I'd click.

Example: 9 Uncomfortable Truths You Should Accept Early in Life by Sinem Günel

Most headlines are dull. If you want clicks, get 'sexy'. Use buzzwords. Invoke emotion. Pick trendy words.

Example: 20 Realistic Micro-Habits To Live Better Every Day by Amardeep Parmar

Concise paragraphs

Our culture lacks focus. If your headline gets a click, keep paragraphs short to keep readers' attention.

Some writers use 6–8 lines per paragraph, but I prefer 3–4. Longer paragraphs lose readers' interest.

A writer should help the reader finish an article, in my opinion. I consider it a job requirement. You can't force readers to finish an article, but you can make it 'snackable'.

Help readers finish an article with concise paragraphs, interesting subheadings, exciting images, clever formatting, or bold attention grabbers.

Work And Move On

I've learned over the years not to get too attached to my articles. Many writers report a strange phenomenon:

The articles you're most excited about usually bomb, while the ones you're not excited about tend to do well.

This isn't always true, but I've noticed it in my own writing. My hopes for an article usually make it worse. The more objective I am, the better an article does.

Let go of a finished article. 40 or 40,000 views, whatever. Now let the article do its job. Onward. Next story. Start another project.

Disregard Haters

Online content creators will encounter haters, whether on YouTube, Instagram, or Medium. More views equal more haters. Fun, right?

As a web content creator, I learned:

Don't debate haters. Never.

It's a mistake I've made several times. It's tempting to prove haters wrong, but they'll always find a way to be 'right'. Your response is their fuel.

I smile and ignore hateful comments. I'm indifferent. I won't enter a negative environment. I have goals, money, and a life to build. "I'm not paid to argue," Drake once said.

Use Grammarly

Grammarly saves me as a non-native English speaker. You know Grammarly. It shows writing errors and makes article suggestions.

As a writer, you need Grammarly. I have a paid plan, but their free version works. It improved my writing greatly.

Put The Reader First, Not Yourself

Many writers write for themselves. They focus on themselves rather than the reader.

Ask yourself:

What does this article teach the reader? How can it entertain or educate them?

Personal examples and experiences improve writing quality, but don't make the article about yourself.

It's not about you, the content creator. It's about the reader. Putting the reader first will change things.

Extreme ownership: Stop blaming others

I remember writing a lot on Medium but not getting many views. I blamed Medium first. Poor algorithm. Poor publishing. All sucked.

Instead of looking at what I could do better, I blamed others.

When you blame others, you lose power. Owning your results gives you power.

As a content creator, you must take full responsibility. Extreme ownership means 100% responsibility for work and results.

You don’t blame others. You don't blame the economy, president, platform, founders, or audience. Instead, you look for ways to improve. Few people can do this.

Blaming is useless. Zero. Taking ownership of your work and results will help you progress. It makes you smarter, better, and stronger.

Instead of blaming others, you'll learn writing, marketing, copywriting, content creation, productivity, and other skills. Game-changer.

Leon Ho

3 years ago

Digital Brainbuilding (Your Second Brain)

The human brain is amazing. As more scientists examine the brain, we learn how much it can store.

The human brain has 1 billion neurons, according to Scientific American. Each neuron forms about 1,000 connections, totaling over a trillion connections. If each neuron could store only one memory, we'd run out of room. [1]

What if you could store and access more info, freeing up brain space for problem-solving and creativity?

Build a second brain (what I refer to as a Digital Brain) to keep up with growing knowledge. Managing information effectively starts with realizing you can't recall everything.

Every action requires information. You need the correct information to learn a new skill, complete a project at work, or establish a business. You must manage information properly to advance your profession and improve your life.

Here's how to construct a second brain to organize information and achieve goals.

What Is a Second Brain?

How often do you forget an article or book's key point? Have you ever wasted hours looking for a saved file?

If so, you're not alone. Information overload affects millions of individuals worldwide. Information overload drains mental resources and causes anxiety.

This is where the second brain comes in.

Building a second brain doesn't involve duplicating the human brain. It means building a system that captures, organizes, retrieves, and archives ideas and thoughts. The second brain improves memory, organization, and recall.

Digital tools are preferable to analog for building a second brain.

Digital tools are portable and accessible. Due to these benefits, we'll focus on digital second-brain building.

Brainware

A Digital Brain is like an external hard drive. It stores, organizes, and retrieves information. This means improving your memory won't be difficult.

Memory has three components in computing:

Recording — storing the information

Organization — archiving it in a logical manner

Recall — retrieving it again when you need it

For example:

Due to rigorous security settings, many websites need you to create complicated passwords with special characters.

You must now memorize this new password (Record), file it away (Organize), and type it in the next time you log in (Recall).

Even in this simple example, there are many pieces to remember. The new password doesn't fit any pattern we already know. If we don't use the password every day, we'll forget it. You'll type the wrong password when you try to remember it.

It's common. Is it because the information is complicated? Nope. Passwords are basically letters, numbers, and symbols.

It happens because our brains aren't meant to memorize these. Digital Brains can do heavy lifting.
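The three components above (Record, Organize, Recall) can be sketched as a tiny note store in Python. This is only an illustration of the idea, not a recommended tool. The `DigitalBrain` class, its method names, and the tag-based filing scheme are assumptions made for this example.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class DigitalBrain:
    """Toy note store illustrating Record, Organize, and Recall."""

    # Maps a lowercase tag to the list of notes filed under it.
    notes_by_tag: Dict[str, List[str]] = field(default_factory=dict)

    def record(self, note: str, tags: List[str]) -> None:
        """Record a note and organize it under each of its tags."""
        for tag in tags:
            self.notes_by_tag.setdefault(tag.lower(), []).append(note)

    def recall(self, tag: str) -> List[str]:
        """Recall every note filed under a tag (empty list if none)."""
        return list(self.notes_by_tag.get(tag.lower(), []))


if __name__ == "__main__":
    brain = DigitalBrain()
    brain.record("Renew passport in May", ["travel", "todo"])
    brain.record("Book flights for the conference", ["travel"])
    print(brain.recall("Travel"))
```

Real passwords belong in a dedicated password manager rather than a plain note store like this one. The sketch only shows how recording and organizing up front makes recall a simple lookup.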

Why You Need a Digital Brain

Two brains are better than one. The brain you were born with has limits.

The cerebral cortex has 125 trillion synapses, according to a Stanford study. The human brain can hold the equivalent of 2.5 million gigabytes of digital data. [2]

Building a second brain improves learning and memory. It helps you:

Learn and store information effectively

Recall information faster

Organize information to see connections and patterns

Build a Digital Brain to learn more and reach your goals faster. Building a second brain requires time and work, but you'll have more time for vital undertakings.

Why you need a Digital Brain:

1. Use Brainpower Effectively

Your brain has limits, like any organ. This is especially true when you're working through a complex problem or task. If you can't focus on a work project, you won't finish it on time.

A second brain reduces distractions. A robust structure helps you handle complicated challenges quickly and stay on track. Without distractions, it's easy to focus on vital activities.

2. Staying Organized

Professional and personal duties must be balanced. With so much to do, it's easy to neglect crucial duties. This is especially true for skill-building. A Digital Brain will keep you organized and stress-free.

Life success requires action. Organized people get things done. Organizing your information will give you time for crucial tasks.

You'll finish projects faster with good materials and methods. As you succeed, you'll gain creative confidence. You can then tackle greater jobs.

3. Creativity Process

Creativity drives today's world. Creativity is mysterious and surprising for millions worldwide. Immersing yourself in others' associations, triggers, thoughts, and ideas can generate inspiration and creativity.

Building a second brain is crucial to establishing your creative process and building habits that will help you reach your goals. Creativity doesn't require perfection or overthinking.

4. Transforming Your Knowledge Into Opportunities

This is the age of entrepreneurship. Today, you can publish online, build an audience, and make money.

Whether it's a business or hobby, you'll have several job alternatives. Knowledge can boost your economy with ideas and insights.

5. Improving Thinking and Uncovering Connections

Modern career success depends on how you think. Instead of overthinking or perfecting, collect the best images, stories, metaphors, anecdotes, and observations.

This will increase your creativity and reveal connections. Increasing your imagination can help you achieve your goals, according to research. [3]

Your ability to recognize trends will help you stay ahead of the pack.

6. Credibility for a New Job or Business

Your main asset is experience-based expertise. Others won't be able to learn without your help. Technology makes knowledge tangible.

This lets you use your time as you choose while helping others. Changing professions or establishing a new business become learning opportunities when you have a Digital Brain.

7. Using Learning Resources

Millions of people use internet learning materials to improve their lives. Online resources abound. These include books, forums, podcasts, articles, and webinars.

These resources are mostly free or inexpensive. Organizing your knowledge can save you time and money. Building a Digital Brain helps you learn faster. You'll make rapid progress by enjoying learning.

How does a second brain feel?

My Digital Brain has helped me organize my work and family life for years.

I don't need to remember 1001 passwords. I never forget anything on my wife's grocery lists. I never miss a meeting. I can access essential information and papers anytime, anywhere.

Delegating memory to a second brain reduces tension and anxiety because you'll know what to do with every piece of information.

No information will be forgotten, boosting your confidence. Better manage your fears and concerns by writing them down and establishing a strategy. You'll understand the plethora of daily information and have a clear head.

How to Develop Your Digital Brain (Your Second Brain)

It's cheap but requires work.

Digital Brain development requires:

Recording — storing the information

Organization — archiving it in a logical manner

Recall — retrieving it again when you need it

1. Decide what information matters before recording.

To succeed in today's environment, you must manage massive amounts of data. Articles, books, webinars, podcasts, emails, and texts provide value. Remembering everything is impossible and overwhelming.

What information do you need to achieve your goals?

You must consolidate ideas and create a strategy to reach your aims. Your biological brain can imagine and create with a Digital Brain.

2. Use the Right Tool

We usually record information without any preparation - we brainstorm in a word processor, email ourselves a message, or take notes while reading.

This information isn't used. You must store information in a central location.

Different information needs different instruments.

Evernote is a top note-taking program. Audio clips, Slack chats, PDFs, text notes, photos, scanned handwritten pages, emails, and webpages can be added.

Pocket is a great app for saving and organizing content. Images, videos, and text can be sorted, and its design is optimized for the web.

Calendar apps help you manage your time and enhance your productivity by reminding you of your most important tasks. Calendar apps abound. The best ones are easy to use, have many features, and work across devices. Examples include Google Calendar, Apple Calendar, and Outlook.

To-do list/checklist apps are useful for managing tasks. Ease of use, versatility, price, and cross-platform compatibility are important when picking a to-do list app. Google Keep, Google Tasks, and Apple Notes are good to-do apps.

3. Organize data for easy retrieval

How should you organize collected data?

When you collect and organize data, you'll see connections. An article about networking can help you understand web marketing. Saved business cards can help you find new clients.

Choosing the correct tools helps organize data. Here are some tool selection criteria:

Can the tool sync across devices?

Is it for personal or team use?

Does it have a search function for easy information retrieval?

Does it provide easy data categorization?

Can users create lists or collections?

Does it make it easy to connect ideas and information?

Does it support mind mapping and visual organization of thoughts?

Conclusion

Building a Digital Brain (second brain) helps us save information, think creatively, and implement ideas. Your second brain is an extension of your biological brain. It keeps you from forgetting, allowing you to tackle bigger creative challenges.

People who love learning often consume information without using it. Every day, they postpone life-improving experiences until they're forgotten. Useful information becomes strength.

Reference

[1] ^ Scientific American: What Is the Memory Capacity of the Human Brain?

[2] ^ Clinical Neurology Specialists: What is the Memory Capacity of a Human Brain?

[3] ^ National Library of Medicine: Imagining Success: Multiple Achievement Goals and the Effectiveness of Imagery

You might also like

Boris Müller

3 years ago

Why Do Websites Have the Same Design?

My kids redesigned the internet because it lacks inventiveness.

The internet today is bland. Everything is generic: fonts, layouts, pages, and visual language. Microtypography is messy.

Web design today seems dictated by technical and ideological constraints rather than creativity and ideas. Text and graphics are in containers on every page. All design is assumed.

Ironically, web technologies can do a lot. We can execute most designs. We can make shocking, evocative websites. Experimental typography, generative graphics, and interactive experiences are all possible.

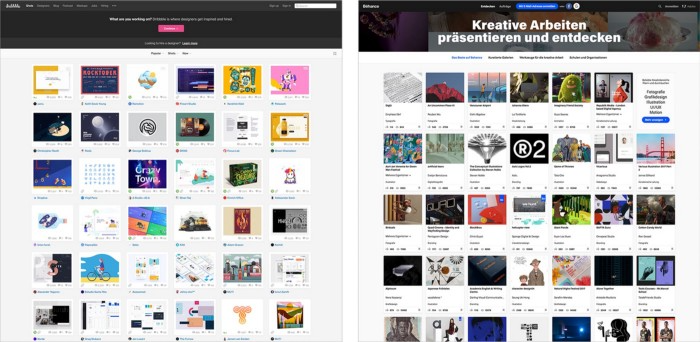

Even designer websites use containers in containers. Dribbble and Behance, the two most popular creative websites, are boring. Lead image.

How did this happen?

Several reasons. WordPress and other blogging platforms use templates. These frameworks build web pages by combining graphics, headlines, body content, and videos. They are templates, not designs: rules for combining related data types. These platforms don't let users customize pages beyond the template. You just fill in the template.

Templates are content-neutral. Thus, the issue.

Form should reflect and shape content, which is a design principle. Separating them produces content containers. Templates have no design value.

One of the fundamental principles of design is a deep and meaningful connection between form and content.

Web design lacks imagination for many reasons. Most are pragmatic and economic. Page design takes time. Large websites lack the resources to create a page from scratch due to the speed of internet news and the frequency of new items. HTML, JavaScript, and CSS continue to challenge web designers. Web design can't match desktop publishing's straightforward operations.

Designers may also be lazy. Mobile-first, generic, framework-driven development tends to ignore web page visual and contextual integrity.

How can we overcome this? How might expressive and avant-garde websites look today?

Rediscovering the past helps design the future.

'90s-era web design

At the University of the Arts Bremen's research and development group, I created my first website 23 years ago. Web design was trendy. The web was young, and its pages inspired me.

We struggled with HTML in the mid-1990s. Arial, Times, and Verdana were the only web-safe fonts. Anything exciting required table layouts, monospaced fonts, or GIFs. HTML was originally content-driven, so we had to work against it to create a page.

Experimental typography was booming. Designers challenged the status quo, from Jan Tschichold's Die Neue Typographie in the twenties to April Greiman's computer-driven layouts in the eighties. By the mid-1990s, an uncommon confluence of technological and cultural breakthroughs enabled radical graphic design. Irma Boom, David Carson, Paula Scher, Neville Brody, and others showed it.

Early web pages were dull compared to graphic design's aesthetic explosion. The Web Design Museum shows this.

Nobody knew how to do browser-based graphic design. Web page design was undefined. There were no standards, (almost) no CMS, and no CSS, JS, video, or animation.

Now is as good a time as any to challenge the internet’s visual conformity.

In 2018, everything is browser-based. Massive layouts to micro-typography, animation, and video. How do we use these great possibilities? Containerized containers. JavaScript-contaminated mobile-first pages. Visually uniform templates. Web design 23 years later would disappoint my younger self.

Our imagination, not technology, restricts web design. We're too conformist to aesthetics, economics, and expectations.

Crisis generates opportunity. Challenge online visual conformity now. I'm too old and bourgeois to develop a radical, experimental, and cutting-edge website. I can ask my students.

I taught web design at the Potsdam Interface Design Programme in 2017. Each team had to redesign a website. The brief: create expressive, inventive visual experiences in the browser. Build with contemporary web technologies. Don't worry about usability, readability, or flexibility. Act. Ignore Erwartungskonformität (conformity to expectations).

The class outcome pleased me. This overview page shows all results. Four diverse projects address the challenge.

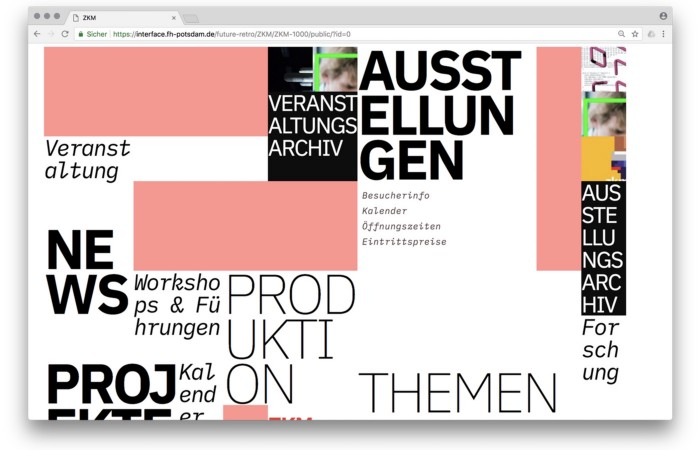

1. ZKM by Frederic Haase and Jonas Köpfer

Frederic and Jonas began their experiments on the ZKM website. The ZKM is Germany's leading media art exhibition location, but its website remains conventional. It's useful but not avant-garde like the shows' art.

Frederic and Jonas designed the ZKM site's concept, aesthetic language, and technical configuration to reflect the museum's progressive approach. A generative design engine generates new layouts for each page load.

ZKM redesign.
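The idea of a layout that changes with every page load can be sketched in a few lines. This is not Frederic and Jonas's engine, whose internals aren't described here. It's a minimal Python illustration in which the block names, the 12-column grid, and the random placement rule are all assumptions.

```python
import random


def generate_layout(blocks, columns=12, rows=8, seed=None):
    """Place each content block at a random position and span on a grid.

    A new seed (for example, one per page load) yields a new layout.
    Blocks may overlap; this sketch makes no attempt to prevent that.
    """
    rng = random.Random(seed)
    layout = {}
    for name in blocks:
        width = rng.randint(2, columns // 2)       # column span
        height = rng.randint(1, rows // 2)         # row span
        col = rng.randint(1, columns - width + 1)  # 1-based start column
        row = rng.randint(1, rows - height + 1)    # 1-based start row
        layout[name] = {"col": col, "row": row, "width": width, "height": height}
    return layout


if __name__ == "__main__":
    for page_load in range(2):
        print(generate_layout(["headline", "image", "body"], seed=page_load))
```

Each entry could be mapped onto CSS Grid placement, for instance `grid-column: col / span width`, so the same content is arranged differently on every visit.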

2. Streem by Daria Thies, Bela Kurek, and Lucas Vogel

Streem is a street art magazine. It promotes new artists and societal topics. Streem includes artwork, painting, photography, design, writing, and journalism. Daria, Bela, and Lucas used these influences to develop a conceptual metropolis. They designed four neighborhoods to reflect magazine sections for their prototype. For a legible city, they use powerful illustrative styles and spatial typography.

Streem makeover.

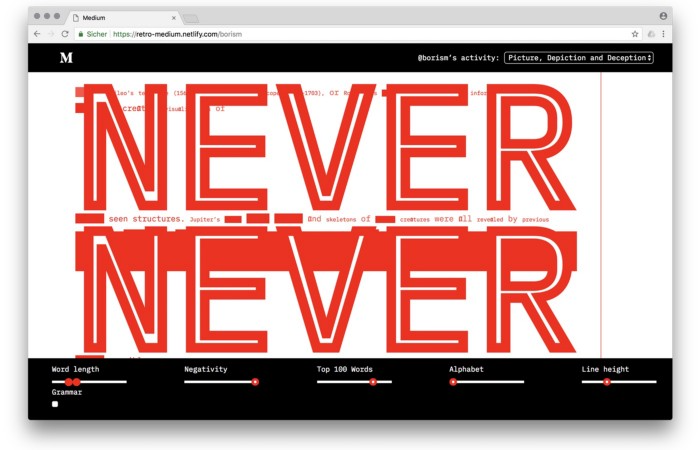

3. Medium by Amelie Kirchmeyer and Fabian Schultz

Amelie and Fabian took a structural approach. Instead of developing a form for a story, they dissolved a web page into semantic, syntactical, and statistical aspects. HTML's flexibility was their goal. They broke Medium posts into experimental typographic space.

Medium revamp.

4. Hacker News by Fabian Dinklage and Florian Zia

Florian and Fabian made Hacker News interactive. The social news site aggregates computer science and IT news. Its voting and discussion features are extensive despite its simple style. Fabian and Florian transformed the structure into a typographic timeline and network space. News items and comments sequence and connect the visuals. To pull in Hacker News content, they connected their design to the site's API.

Hacker News makeover.

Communication is not legibility, said Carson. Apply this to web design today. Modern websites must be legible, usable, responsive, and accessible. But those requirements shouldn't limit their visual palette. Visual and human-centered design are not stereotypes.

I want radical, generative, evocative, insightful, adequate, content-specific, and intelligent site design. I want to rediscover web design experimentation. More surprises please. I hope the web will appear different in 23 years.

Update: this essay has sparked a lively discussion! I wrote a brief response to the debate's most common points: Creativity vs. Usability

Dr. Linda Dahl

3 years ago

We eat corn in almost everything. Is It Important?

Corn Kid went viral on TikTok after being interviewed by Recess Therapy. Tariq, called the Corn Kid, ate a buttery ear of corn in the video. He's corn crazy. He thinks everyone just has to try it. It turns out, whether we know it or not, we already have.

Corn is a fruit, veggie, and grain. It's the second-most-grown crop. Corn makes up 36% of U.S. exports. In the U.S., it's easy to grow and provides high yields, as proven by the vast corn belt spanning the Midwest, Great Plains, and Texas panhandle. Since 1950, the corn crop has doubled to 10 billion bushels.

Fine, you say. But we shouldn't grow it just because we can. Why so much corn? What's all this corn for?

The why is both practical and political. Michael Pollan's The Omnivore's Dilemma has the full narrative. In the early 1970s, food costs increased. Nixon subsidized corn to feed the public. Monsanto genetically engineered corn seeds to make them hardier, and soon there was plenty of corn. Everyone ate. Woot! Then came too much corn. The powers-that-be had to decide what to do with all the leftover corn.

They are fortunate that corn has a wide range of uses.

First, the edible variants. I divide corn into obvious and stealth.

Obvious corn includes popcorn, canned corn, and corn on the cob. This form isn't always digested and often comes out whole, polka-dotting poop. Corn can also be ground into cornmeal to make cornbread, polenta, and corn tortillas. In moderation, corn provides antioxidants, minerals, and vitamins. Most synthetic vitamin C comes from GMO corn.

Corn oil, corn starch, dextrose (a sugar), and high-fructose corn syrup are often overlooked. They're stealth corn because they sneak into practically everything. Corn oil is used for frying, baking, and in potato chips, mayonnaise, margarine, and salad dressing. Baby food, bread, cakes, antibiotics, canned vegetables, beverages, and even dairy and animal products include corn starch. Dextrose appears in almost all prepared foods, excluding those with high-fructose corn syrup. HFCS isn't as easily digested as sucrose (from cane sugar). It can also cause other ailments, which we'll discuss later.

Most foods contain corn. It's fed to almost all food animals. 96% of U.S. animal feed is corn. 39% of U.S. corn is fed to livestock. But animals prefer other foods. Omnivorous chickens prefer insects, worms, grains, and grasses. Captive cows are fed a total mixed ration, which contains corn. Products from these animals, like eggs and milk, are corn-based too.

There are numerous non-edible by-products of corn that are employed in the production of items like:

fuel-grade ethanol

plastics

batteries

cosmetics

binders for medications and vitamins

carpets, fabrics

glutathione

crayons

paint and glue

How does corn affect you? Consider fast food for dinner. You order a cheeseburger, fries, and a big Coke at the counter (or drive-through in the suburbs). You tell yourself, "No corn here." All of it contains corn. Let's deconstruct:

The meat and cheese come from corn-fed cows and were bound with corn syrup and corn starch. The bun contains corn flour and dextrose, and the fries were fried in corn oil. High-fructose corn syrup sweetens the drink, and corn helps make the cup and straw.

Just about everything contains corn. Then what? A cornspiracy, perhaps? Is eating too much corn an issue, or should we strive to stay away from it whenever possible?

As I've said, eating some corn can be healthy. However, 92% of U.S. corn is genetically modified, according to the Center for Food Safety. The modifications are intended to boost corn yields. Some sweet corn is genetically modified to produce its own insecticide, a protein deadly to insects that is made by Bacillus thuringiensis. It's safe to eat in sweet corn. Concerns exist about feeding farm animals so much corn, modified or not.

High-fructose corn syrup should be consumed in moderation. Fructose, a sugar, isn't easily metabolized. Fructose causes diabetes, fatty liver, obesity, and heart disease. It causes inflammation, which might aggravate gout. Candy, packaged sweets, soda, fast food, juice drinks, ice cream, ice cream topping syrups, sauces and condiments, jams, bread, crackers, and pancake syrup contain the most high-fructose corn syrup. These are everyday foods with little nutritional value. Check labels and choose goods sweetened with cane sugar (sucrose). Or eat corn like the Corn Kid.

Alison Randel

3 years ago

Raising the Bar on Your 1:1s

Managers spend a lot of time in 1:1s. Most team members meet with their supervisors regularly. 1:1s can help build relationships and tackle tough topics. Yet few people appreciate the 1:1 format's potential. Most of the time, that potential is spent on small talk, surface-level updates, and ranting ("Ugh, the marketing team isn’t stepping up the way I want them to").

What if you used that time to have deeper conversations and surface important insights? What if change were easy?

This post introduces a new 1:1 format to help you dive deeper, faster, and develop genuine relationships without losing impact.

You might assume a 1:1 is just a chat. Why use structure to talk to a coworker? Come on! I know how to talk to people. Well, I can write. I've always written. Still, this article was edited by Zoe.

Before you dismiss the idea, ask yourself whether there's a good reason not to try something new. Is the 1:1 only a chat, or do you want more from it? Try the steps below to find out.

Part I: Reflection (5 minutes)

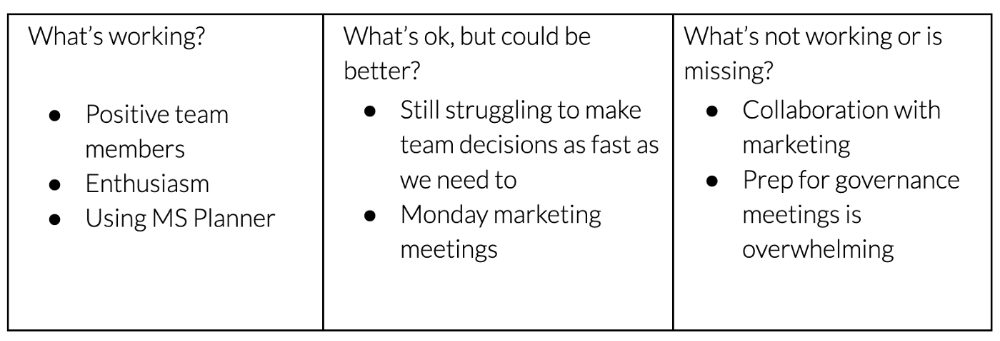

Context-free, broad comments waste time and are useless. Instead, give team members 5 minutes to write answers to these 3 prompts.

What's effective?

What is decent but could be improved?

What is broken or missing?

Why these? They encourage people to be honest about the full range of their experience. Answering them helps people notice when something isn't working. They also prompt people to consider what is working.

Why take notes? Because you get more in less time. Will you feel awkward sitting quietly while your coworker writes? Probably. Persevere. Multi-task. Take a break from your afternoon meeting marathon. Any awkwardness will pay off.

What happens? After a few minutes of light conversation, create a template like the one shown here and have your team member fill in their replies. You can share the template in advance (with the caveat that this isn’t meant to take much prep time). Do this alongside your coworker: answer the prompts yourself. Everyone can benefit from reflecting and getting guidance.

This step's output.

Part II: Talk (10-20 minutes)

Most people can describe what they see but not what's behind an answer. You don't like a meeting. Why not? The partnership with marketing is difficult. What makes working with them difficult? I'm not recommending that you slander coworkers. Instead, consider how your meetings, decisions, and priorities make work harder. The same goes for the good stuff. You want to know what's humming so you can reproduce the magic.

First, recognize some facts.

Real power dynamics exist. To encourage people to be honest, you must provide a safe environment and extend clear invitations. Even then, it may take a few 1:1s for someone to feel secure enough to go there in person. Part of your responsibility is to acknowledge that this is normal.

Curiosity and self-disclosure are crucial. Most leaders have received training to present themselves as the authorities. However, you will both benefit more from the dialogue if you can be open and honest about your personal experience, ask questions out of real curiosity, and acknowledge the pertinent sacrifices you're making as a leader.

Honesty without judgment is difficult and important. Out of concern for the feelings of others, people frequently hold back. Or, if they do point something out, they do so in a critical manner. The key is to be open and unapologetic about what you observe while not presuming that your viewpoint is correct and the other person's is incorrect.

Let's go into some prompts (based on genuine conversations):

“What do you notice across your answers?”

“What about the way you/we/they do X, Y, or Z is working well?”

“Will you say more about item X in ‘What’s not working?’”

“I’m surprised there isn’t anything about Z. Why is that?”

“All of us tend to play some role in maintaining certain patterns. How might you/we be playing a role in this pattern persisting?”

“How might the way we meet, make decisions, or collaborate play a role in what’s currently happening?”

Consider the earlier examples. You might ask, “What about the Monday meeting isn't working? Why?” or “What about the way we work with marketing makes collaboration harder?” Remember to share your honest observations!

Part III: Observe patterns (10-15 minutes)

Leaders want to empower their people but often don't know how. We also hold many preconceptions about what empowerment means and how it works. The next phase in this 1:1 format will help you and your team member understand power and empowerment on the team. This understanding can help you support and shift your team member's behavior, especially where you disagree.

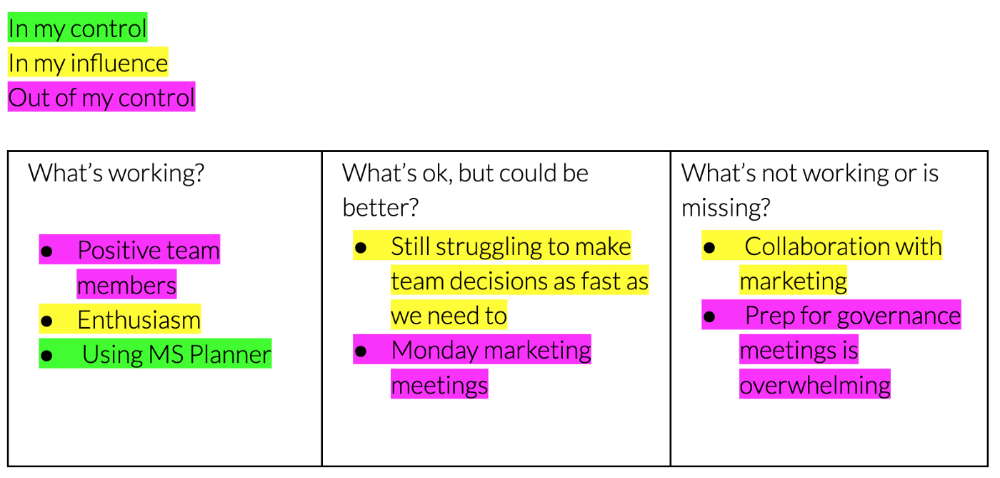

How? After discussing the written responses, ask your team member which items they can control, can influence, or cannot control. Color-code their replies accordingly. You can do the same, adding your own colors where you disagree.

This step's output.

Next, consider the color constellation. Discuss these questions:

Is one color much more prevalent than the others? If so, why?

Are the colors for the "what's working," "what's fine," and "what's not working" categories clearly distinct? If so, why?

Do you have any disagreements? If yes, specifically where does your viewpoint differ? What activities do you object to? (Remember, there is no right or wrong in this. Give explicit details and ask questions with curiosity.)

Example: Based on the colors, you can ask: Is the quality of the marketing meeting really beyond your control? Were our marketing partners consulted? Are there any parts of team decisions we can control? We can't control people, but have we explored another decision-making method? How can we collaborate and generate governance-related information to reduce work, even if the requirement for prep can't be eliminated?

Consider the top one or two topics for this conversation. No 1:1 can cover everything, and that's OK. Focus on the present.

Part IV: Determine the next step (5 minutes)

Last, examine what this conversation means for you and your team member. It's easy to think we know the next moves when we don't.

How? You and your team member each answer these questions.

What does this mean for me going forward? What can I do to change this? For instance, make requests and see how people respond before assuming they won't be responsive.

What requests do I have of other people or my partners? What should I do first? For example, suggest to marketing that we hold a monthly retrospective so we can address problems and exchange input more frequently. Put it on the agenda for next Monday's meeting.

Close the 1:1 by sharing what you noticed about the conversation. What did you observe? What did you learn?

Yourself, you, and the 1:1

As a leader, you either reinforce or disrupt habits. Try this template if you want greater ownership, empowerment, or creativity. Consider how you affect the dynamics around you. How can you expect others to try something new in high-stakes scenarios, like meetings with cross-functional partners or senior stakeholders, if you won't? How can you expect deep thinking and connection if you don't encourage them in 1:1s? What pattern could this new format disrupt or reinforce?

Fight reluctance. First attempts won't be ideal, and that's OK. You'll only learn by trying.