More on Entrepreneurship/Creators

Jenn Leach

3 years ago

How Much I Got Paid by YouTube for a 68 Million Views Video

My nameless, faceless channel case study

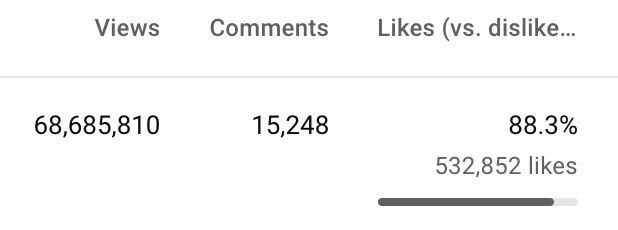

The Numbers

I anonymize this YouTube channel.

It's in a trendy, crowded niche. Sharing it publicly will likely enhance competition.

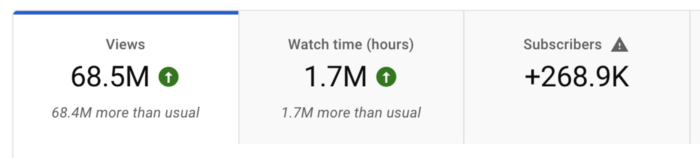

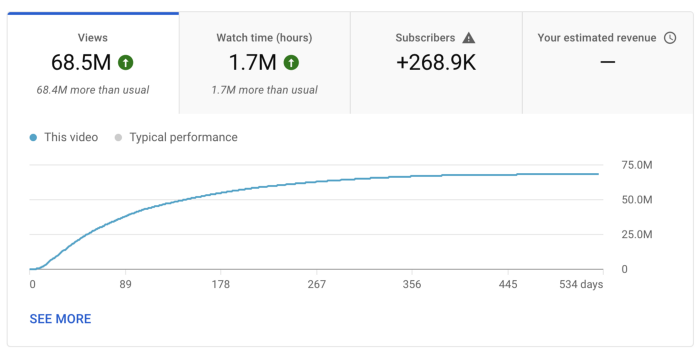

I'll still share my dashboard numbers:

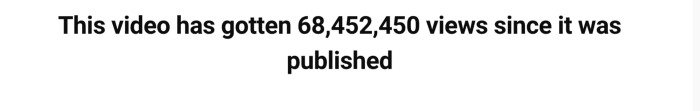

A year ago, the video was released.

What I earned

I'll stop stalling. Here's a screenshot of my YouTube statistics page displaying Adsense profits.

YouTube Adsense made me ZERO dollars.

OMG!

How is this possible?

YouTube Adsense can't monetize my niche. This is typical in faceless niches like TikTok's rain videos. If they were started a while ago, I'm sure certain rain accounts are monetized, but not today.

I actually started a soothing sounds faceless YouTube channel. This was another account of mine.

I looped Pexels films for hours. No background music, just wind, rain, etc.

People could watch these videos to relax or get ready for bed. They're ideal for background noise and relaxation.

They're long-lasting, too. It's easy to make a lot from YouTube Adsense if you insert ads.

Anyway, I tried to monetize it and couldn’t. This was about a year ago. That’s why I doubt new accounts in this genre would be able to get approved for ads.

Back to my faceless channel with 68 million views.

I received nothing from YouTube Adsense, but I made money elsewhere.

Getting paid by the gods of affiliate marketing

Place links in the video and other videos on the channel to get money. Visitors that buy through your affiliate link earn you a commission.

This video earned many clicks on my affiliate links.

I linked to a couple of Amazon products, a YouTube creator tool, my kofi link, and my subscribe link.

Sponsorships

Brands pay you to include ads in your videos.

This video led to many sponsorships.

I've done dozens of sponsorship campaigns that paid $40 to $50 for an end screen to $450 for a preroll ad.

Last word

Overall, I made less than $3,000.

If I had time, I'd be more proactive with sponsorships. You can pitch brand sponsorships. This actually works.

I'd do that if I could rewind time.

I still can, but I think the reaction rate would be higher closer to the viral video's premiere date.

Greg Lim

3 years ago

How I made $160,000 from non-fiction books

I've sold over 40,000 non-fiction books on Amazon and made over $160,000 in six years while writing on the side.

I have a full-time job and three young sons; I can't spend 40 hours a week writing. This article describes my journey.

I write mainly tech books:

Thanks to my readers, many wrote positive evaluations. Several are bestsellers.

A few have been adopted by universities as textbooks:

My books' passive income allows me more time with my family.

Knowing I could quit my job and write full time gave me more confidence. And I find purpose in my work (i am in christian ministry).

I'm always eager to write. When work is a dread or something bad happens, writing gives me energy. Writing isn't scary. In fact, I can’t stop myself from writing!

Writing has also established my tech authority. Universities use my books, as I've said. Traditional publishers have asked me to write books.

These mindsets helped me become a successful nonfiction author:

1. You don’t have to be an Authority

Yes, I have computer science experience. But I'm no expert on my topics. Before authoring "Beginning Node.js, Express & MongoDB," my most profitable book, I had no experience with those topics. Node was a new server-side technology for me. Would that stop me from writing a book? It can. I liked learning a new technology. So I read the top three Node books, took the top online courses, and put them into my own book (which makes me know more than 90 percent of people already).

I didn't have to worry about using too much jargon because I was learning as I wrote. An expert forgets a beginner's hardship.

"The fellow learner can aid more than the master since he knows less," says C.S. Lewis. The problem he must explain is recent. The expert has forgotten.”

2. Solve a micro-problem (Niching down)

I didn't set out to write a definitive handbook. I found a market with several challenges and wrote one book. Ex:

- Instead of web development, what about web development using Angular?

- Instead of Blockchain, what about Blockchain using Solidity and React?

- Instead of cooking recipes, how about a recipe for a specific kind of diet?

- Instead of Learning math, what about Learning Singapore Math?

3. Piggy Backing Trends

The above topics may still be a competitive market. E.g. Angular, React. To stand out, include the latest technologies or trends in your book. Learn iOS 15 instead of iOS programming. Instead of personal finance, what about personal finance with NFTs.

Even though you're a newbie author, your topic is well-known.

4. Publish short books

My books are known for being direct. Many people like this:

Your reader will appreciate you cutting out the fluff and getting to the good stuff. A reader can finish and review your book.

Second, short books are easier to write. Instead of creating a 500-page book for $50 (which few will buy), write a 100-page book that answers a subset of the problem and sell it for less. (You make less, but that's another subject). At least it got published instead of languishing. Less time spent creating a book means less time wasted if it fails. Write a small-bets book portfolio like Daniel Vassallo!

Third, it's $2.99-$9.99 on Amazon (gets 70 percent royalties for ebooks). Anything less receives 35% royalties. $9.99 books have 20,000–30,000 words. If you write more and charge more over $9.99, you get 35% royalties. Why not make it a $9.99 book?

(This is the ebook version.) Paperbacks cost more. Higher royalties allow for higher prices.

5. Validate book idea

Amazon will tell you if your book concept, title, and related phrases are popular. See? Check its best-sellers list.

150,000 is preferable. It sells 2–3 copies daily. Consider your rivals. Profitable niches have high demand and low competition.

Don't be afraid of competitive niches. First, it shows high demand. Secondly, what are the ways you can undercut the completion? Better book? Or cheaper option? There was lots of competition in my NodeJS book's area. None received 4.5 stars or more. I wrote a NodeJS book. Today, it's a best-selling Node book.

What’s Next

So long. Part II follows. Meanwhile, I will continue to write more books!

Follow my journey on Twitter.

This post is a summary. Read full article here

Caleb Naysmith

3 years ago

Ads Coming to Medium?

Could this happen?

Medium isn't like other social media giants. It wasn't a dot-com startup that became a multi-trillion-dollar social media firm. It launched in 2012 but didn't gain popularity until later. Now, it's one of the largest sites by web traffic, but it's still little compared to most. Most of Medium's traffic is external, but they don't run advertisements, so it's all about memberships.

Medium isn't profitable, but they don't disclose how terrible the problem is. Most of the $163 million they raised has been spent or used for acquisitions. If the money turns off, Medium can't stop paying its writers since the site dies. Writers must be paid, but they can't substantially slash payment without hurting the platform. The existing model needs scale to be viable and has a low ceiling. Facebook and other free social media platforms are struggling to retain users. Here, you must pay to appreciate it, and it's bad for writers AND readers. If I had the same Medium stats on YouTube, I'd make thousands of dollars a month.

Then what? Medium has tried to monetize by offering writers a cut of new members, but that's unsustainable. People-based growth is limited. Imagine recruiting non-Facebook users and getting them to pay to join. Some may, but I'd rather write.

Alternatives:

Donation buttons

Tiered subscriptions ($5, $10, $25, etc.)

Expanding content

and these may be short-term fixes, but they're not as profitable as allowing ads. Advertisements can pay several dollars per click and cents every view. If you get 40,000 views a month like me, that's several thousand instead of a few hundred. Also, Medium would have enough money to split ad revenue with writers, who would make more. I'm among the top 6% of Medium writers. Only 6% of Medium writers make more than $100, and I made $500 with 35,000 views last month. Compared to YouTube, the top 1% of Medium authors make a lot. Mr. Beast and PewDiePie make MILLIONS a month, yet top Medium writers make tens of thousands. Sure, paying 3 or 4 people a few grand, or perhaps tens of thousands, will keep them around. What if great authors leveraged their following to go huge on YouTube and abandoned Medium? If people use Medium to get successful on other platforms, Medium will be continuously cycling through authors and paying them to stay.

Ads might make writing on Medium more profitable than making videos on YouTube because they could preserve the present freemium model and pay users based on internal views. The $5 might be ad-free.

Consider: Would you accept Medium ads? A $5 ad-free version + pay-as-you-go, etc. What are your thoughts on this?

Original post available here

You might also like

Jonathan Vanian

4 years ago

What is Terra? Your guide to the hot cryptocurrency

With cryptocurrencies like Bitcoin, Ether, and Dogecoin gyrating in value over the past few months, many people are looking at so-called stablecoins like Terra to invest in because of their more predictable prices.

Terraform Labs, which oversees the Terra cryptocurrency project, has benefited from its rising popularity. The company said recently that investors like Arrington Capital, Lightspeed Venture Partners, and Pantera Capital have pledged $150 million to help it incubate various crypto projects that are connected to Terra.

Terraform Labs and its partners have built apps that operate on the company’s blockchain technology that helps keep a permanent and shared record of the firm’s crypto-related financial transactions.

Here’s what you need to know about Terra and the company behind it.

What is Terra?

Terra is a blockchain project developed by Terraform Labs that powers the startup’s cryptocurrencies and financial apps. These cryptocurrencies include the Terra U.S. Dollar, or UST, that is pegged to the U.S. dollar through an algorithm.

Terra is a stablecoin that is intended to reduce the volatility endemic to cryptocurrencies like Bitcoin. Some stablecoins, like Tether, are pegged to more conventional currencies, like the U.S. dollar, through cash and cash equivalents as opposed to an algorithm and associated reserve token.

To mint new UST tokens, a percentage of another digital token and reserve asset, Luna, is “burned.” If the demand for UST rises with more people using the currency, more Luna will be automatically burned and diverted to a community pool. That balancing act is supposed to help stabilize the price, to a degree.

“Luna directly benefits from the economic growth of the Terra economy, and it suffers from contractions of the Terra coin,” Terraform Labs CEO Do Kwon said.

Each time someone buys something—like an ice cream—using UST, that transaction generates a fee, similar to a credit card transaction. That fee is then distributed to people who own Luna tokens, similar to a stock dividend.

Who leads Terra?

The South Korean firm Terraform Labs was founded in 2018 by Daniel Shin and Kwon, who is now the company’s CEO. Kwon is a 29-year-old former Microsoft employee; Shin now heads the Chai online payment service, a Terra partner. Kwon said many Koreans have used the Chai service to buy goods like movie tickets using Terra cryptocurrency.

Terraform Labs does not make money from transactions using its crypto and instead relies on outside funding to operate, Kwon said. It has raised $57 million in funding from investors like HashKey Digital Asset Group, Divergence Digital Currency Fund, and Huobi Capital, according to deal-tracking service PitchBook. The amount raised is in addition to the latest $150 million funding commitment announced on July 16.

What are Terra’s plans?

Terraform Labs plans to use Terra’s blockchain and its associated cryptocurrencies—including one pegged to the Korean won—to create a digital financial system independent of major banks and fintech-app makers. So far, its main source of growth has been in Korea, where people have bought goods at stores, like coffee, using the Chai payment app that’s built on Terra’s blockchain. Kwon said the company’s associated Mirror trading app is experiencing growth in China and Thailand.

Meanwhile, Kwon said Terraform Labs would use its latest $150 million in funding to invest in groups that build financial apps on Terra’s blockchain. He likened the scouting and investing in other groups as akin to a “Y Combinator demo day type of situation,” a reference to the popular startup pitch event organized by early-stage investor Y Combinator.

The combination of all these Terra-specific financial apps shows that Terraform Labs is “almost creating a kind of bank,” said Ryan Watkins, a senior research analyst at cryptocurrency consultancy Messari.

In addition to cryptocurrencies, Terraform Labs has a number of other projects including the Anchor app, a high-yield savings account for holders of the group’s digital coins. Meanwhile, people can use the firm’s associated Mirror app to create synthetic financial assets that mimic more conventional ones, like “tokenized” representations of corporate stocks. These synthetic assets are supposed to be helpful to people like “a small retail trader in Thailand” who can more easily buy shares and “get some exposure to the upside” of stocks that they otherwise wouldn’t have been able to obtain, Kwon said. But some critics have said the U.S. Securities and Exchange Commission may eventually crack down on synthetic stocks, which are currently unregulated.

What do critics say?

Terra still has a long way to go to catch up to bigger cryptocurrency projects like Ethereum.

Most financial transactions involving Terra-related cryptocurrencies have originated in Korea, where its founders are based. Although Terra is becoming more popular in Korea thanks to rising interest in its partner Chai, it’s too early to say whether Terra-related currencies will gain traction in other countries.

Terra’s blockchain runs on a “limited number of nodes,” said Messari’s Watkins, referring to the computers that help keep the system running. That helps reduce latency that may otherwise slow processing of financial transactions, he said.

But the tradeoff is that Terra is less “decentralized” than other blockchain platforms like Ethereum, which is powered by thousands of interconnected computing nodes worldwide. That could make Terra less appealing to some blockchain purists.

Laura Sanders

3 years ago

Xenobots, tiny living machines, can duplicate themselves.

Strange and complex behavior of frog cell blobs

A xenobot “parent,” shaped like a hungry Pac-Man (shown in red false color), created an “offspring” xenobot (green sphere) by gathering loose frog cells in its opening.

Tiny “living machines” made of frog cells can make copies of themselves. This newly discovered renewal mechanism may help create self-renewing biological machines.

According to Kirstin Petersen, an electrical and computer engineer at Cornell University who studies groups of robots, “this is an extremely exciting breakthrough.” She says self-replicating robots are a big step toward human-free systems.

Researchers described the behavior of xenobots earlier this year (SN: 3/31/21). Small clumps of skin stem cells from frog embryos knitted themselves into small spheres and started moving. Cilia, or cellular extensions, powered the xenobots around their lab dishes.

The findings are published in the Proceedings of the National Academy of Sciences on Dec. 7. The xenobots can gather loose frog cells into spheres, which then form xenobots.

The researchers call this type of movement-induced reproduction kinematic self-replication. The study's coauthor, Douglas Blackiston of Tufts University in Medford, Massachusetts, and Harvard University, says this is typical. For example, sexual reproduction requires parental sperm and egg cells. Sometimes cells split or budded off from a parent.

“This is unique,” Blackiston says. These xenobots “find loose parts in the environment and cobble them together.” This second generation of xenobots can move like their parents, Blackiston says.

The researchers discovered that spheroid xenobots could only produce one more generation before dying out. The original xenobots' shape was predicted by an artificial intelligence program, allowing for four generations of replication.

A C shape, like an openmouthed Pac-Man, was predicted to be a more efficient progenitor. When improved xenobots were let loose in a dish, they began scooping up loose cells into their gaping “mouths,” forming more sphere-shaped bots (see image below). As many as 50 cells clumped together in the opening of a parent to form a mobile offspring. A xenobot is made up of 4,000–6,000 frog cells.

Petersen likes the Xenobots' small size. “The fact that they were able to do this at such a small scale just makes it even better,” she says. Miniature xenobots could sculpt tissues for implantation or deliver therapeutics inside the body.

Beyond the xenobots' potential jobs, the research advances an important science, says study coauthor and Tufts developmental biologist Michael Levin. The science of anticipating and controlling the outcomes of complex systems, he says.

“No one could have predicted this,” Levin says. “They regularly surprise us.” Researchers can use xenobots to test the unexpected. “This is about advancing the science of being less surprised,” Levin says.

1eth1da

3 years ago

6 Rules to build a successful NFT Community in 2022

Too much NFT, Discord, and shitposting.

How do you choose?

How do you recruit more members to join your NFT project?

In 2021, a successful NFT project required:

Monkey/ape artwork

Twitter and Discord bot-filled

Roadmap overpromise

Goal was quick cash.

2022 and the years after will change that.

These are 6 Rules for a Strong NFT Community in 2022:

THINK LONG TERM

This relates to roadmap planning. Hype and dumb luck may drive NFT projects (ahem, goblins) but rarely will your project soar.

Instead, consider sustainability.

Plan your roadmap based on your team's abilities.

Do what you're already doing, but with NFTs, make it bigger and better.

You shouldn't copy a project's roadmap just because it was profitable.

This will lead to over-promising, team burnout, and an RUG NFT project.

OFFER VALUE

Building a great community starts with giving.

Why are musicians popular?

Because they offer entertainment for everyone, a random person becomes a fan, and more fans become a cult.

That's how you should approach your community.

TEAM UP

A great team helps.

An NFT project could have 3 or 2 people.

Credibility trumps team size.

Make sure your team can answer community questions, resolve issues, and constantly attend to them.

Don't overwork and burn out.

Your community will be able to recognize that you are trying too hard and give up on the project.

BUILD A GREAT PRODUCT

Bored Ape Yacht Club altered the NFT space.

Cryptopunks transformed NFTs.

Many others did, including Okay Bears.

What made them that way?

Because they answered a key question.

What is my NFT supposed to be?

Before planning art, this question must be answered.

NFTs can't be just jpegs.

What does it represent?

Is it a Metaverse-ready project?

What blockchain are you going to be using and why?

Set some ground rules for yourself. This helps your project's direction.

These questions will help you and your team set a direction for blockchain, NFT, and Web3 technology.

EDUCATE ON WEB3

The more the team learns about Web3 technology, the more they can offer their community.

Think tokens, metaverse, cross-chain interoperability and more.

BUILD A GREAT COMMUNITY

Several projects mistreat their communities.

They treat their community like "customers" and try to sell them NFT.

Providing Whitelists and giveaways aren't your only community-building options.

Think bigger.

Consider them family and friends, not wallets.

Consider them fans.

These are some tips to start your NFT project.