Gran Turismo 7 Update Eases Up On The Grind After Fan Outrage

Polyphony Digital has changed the game after apologizing in March.

To make amends for some disastrous downtime, Gran Turismo 7 director Kazunori Yamauchi announced a credits handout and promised to “dramatically change GT7's car economy to help make amends” last month. The first of these has arrived.

The game's 1.11 update includes the following concessions to players frustrated by the economy and its subsequent grind:

- The last half of the World Circuits events have increased in-game credit rewards.

- Modified Arcade and Custom Race rewards

- Clearing all circuit layouts with Gold or Bronze now rewards In-game Credits. Exiting the Sector selection screen with the Exit button will award Credits if an event has already been cleared.

- Increased Credits Rewards in Lobby and Daily Races

- Increased the free in-game Credits cap from 20,000,000 to 100,000,000.

Additionally, “The Human Comedy” missions are one-hour endurance races that award “up to 1,200,000” credits per event.

This isn't everything Yamauchi promised last month; he said it would take several patches and updates to fully implement the changes. Here's a list of everything he said would happen, some of which has already happened (like the World Circuits rewards and credit cap):

- Increase rewards in the latter half of the World Circuits by roughly 100%.

- Added high rewards for all Gold/Bronze results clearing the Circuit Experience.

- Online Races rewards increase.

- Add 8 new 1-hour Endurance Race events to Missions. So expect higher rewards.

- Increase the non-paid credit limit in player wallets from 20M to 100M.

- Expand the number of Used and Legend cars available at any time.

- With time, we will increase the payout value of limited time rewards.

- New World Circuit events.

- Missions now include 24-hour endurance races.

- Online Time Trials added, with rewards based on the player's time difference from the leader.

- Make cars sellable.

The full list of updates and changes can be found here.


More on Gaming

Matthew Cluff

3 years ago

GTO Poker 101

"GTO" (Game Theory Optimal) has been used a lot in poker recently. To clarify its meaning and application, the aim of this article is to define what it is, when to use it when playing, what strategies to apply for how to play GTO poker, for beginner and more advanced players!

Poker GTO

In poker, you can choose between two main winning strategies:

Exploitative play maximizes expected value (EV) by countering opponents' sub-optimal plays and weaker tendencies. Yes, playing this way opens you up to being exploited, but the weaker opponents you're targeting won't change their game to counteract this, allowing you to reap maximum profits over the long run.

GTO (Game-Theory Optimal): You try to play perfect poker, which forces your opponents to make mistakes (which is where almost all of your profit will be derived from). It mixes bluffs or semi-bluffs with value bets, clarifies bet sizes, and more.

GTO vs. Exploitative: Which is Better in Poker?

Before diving into GTO poker strategy, it's important to know which of these two play styles is more profitable for beginners and advanced players. The simple answer is probably both, but usually more exploitative.

Most players don't play GTO poker and can be exploited in their gameplay and strategy, allowing for more profits to be made using an exploitative approach. In fact, it’s only in some of the largest games at the highest stakes that GTO concepts are fully utilized and seen in practice, and even then, exploitative plays are still sometimes used.

Knowing, understanding, and applying GTO poker basics will create a solid foundation for your poker game. It's also important to understand GTO so you can deviate from it to maximize profits.

GTO Poker Strategy

According to Ed Miller's book "Poker's 1%," the most fundamental concept that only elite poker players understand is frequency, which could be in relation to cbets, bluffs, folds, calls, raises, etc.

GTO poker solvers (downloadable online software) give solutions for how to play optimally in any given spot and often recommend using mixed strategies based on select frequencies.

In a river situation, a solver may tell you to call 70% of the time and fold 30%. It may also suggest calling 50% of the time, folding 35% of the time, and raising 15% of the time (with a certain range of hands).
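A mixed strategy like the one above has to be randomized somehow at the table. The sketch below is one minimal way to do that, assuming the 50/35/15 split from the example; the `pick_action` helper and the fixed seed are illustrative choices, not part of any solver's API.

```python
import random

def pick_action(frequencies: dict, rng: random.Random) -> str:
    """Draw one action at random, weighted by its solver frequency."""
    actions = list(frequencies)
    weights = list(frequencies.values())
    return rng.choices(actions, weights=weights, k=1)[0]

# Frequencies taken from the river example in the text.
river_mix = {"call": 0.50, "fold": 0.35, "raise": 0.15}

rng = random.Random(7)  # seeded only so the demo is repeatable
sample = [pick_action(river_mix, rng) for _ in range(10_000)]

# Over many hands the observed shares approach the target frequencies.
for action in river_mix:
    print(action, sample.count(action) / len(sample))
```

In practice players often use a watch's second hand or a card's suit as the random source; the point is only that each decision is drawn independently at the stated frequencies.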

Frequencies are a fundamental and often unrecognized part of poker, but they run through these 5 GTO concepts.

1. Preflop ranges

To compensate for positional disadvantage, out-of-position players must open tighter hand ranges.

Premium starting hands aren't enough, though. Considering GTO poker ranges and principles, you want a good, balanced starting hand range from each position with at least some hands that can make a strong poker hand regardless of the flop texture (low, mid, high, disconnected, etc).

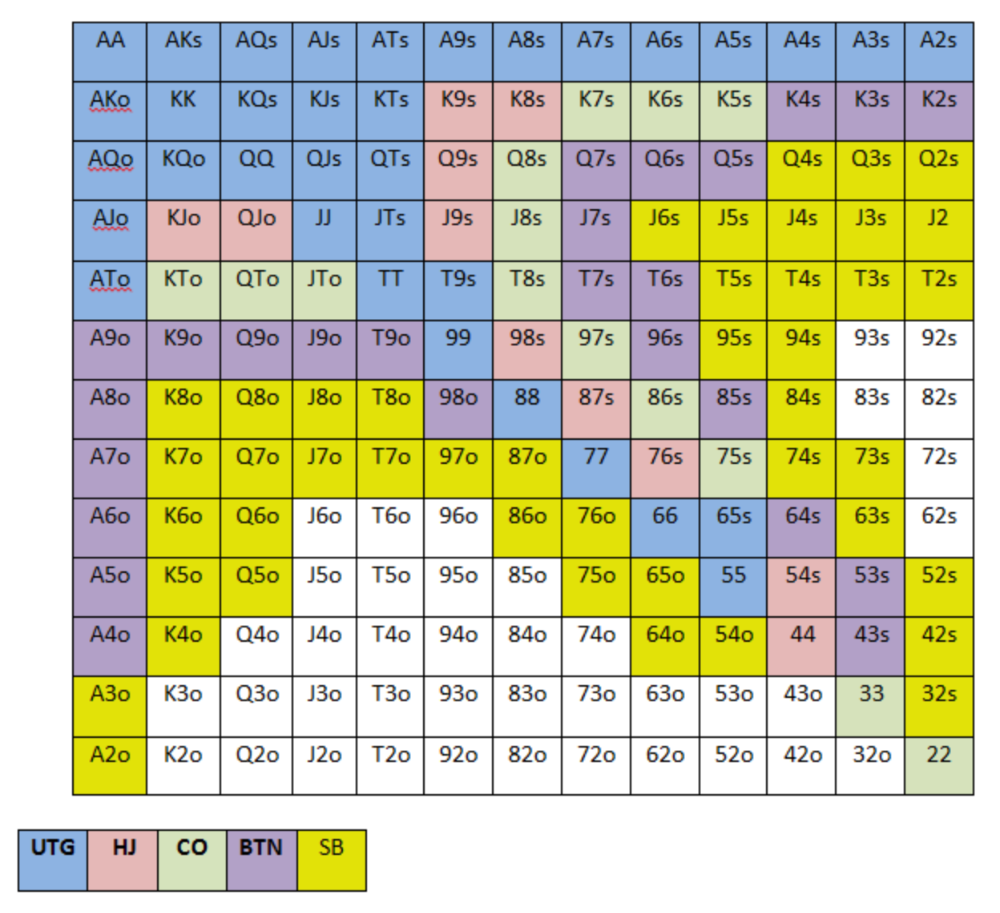

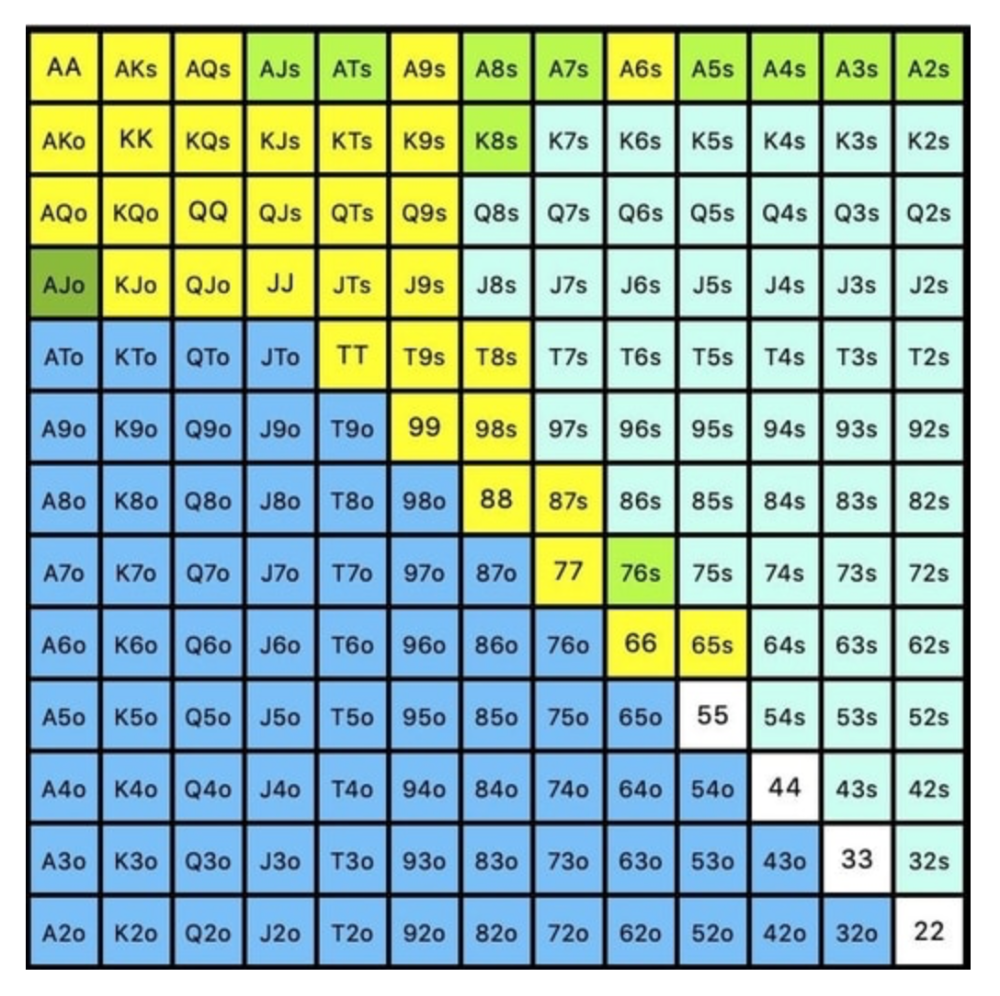

Below is a GTO preflop beginner poker chart for online 6-max play, showing which hand ranges one should open-raise with. Table positions are color-coded (see key below).

NOTE: For GTO play, it's advisable to use a mixed strategy for opening in the small blind, combining open-limps and open-raises for various hands. This cannot be illustrated with the color system used for the chart.

Choosing which hands to play is often a math problem, as discussed below.

Other preflop GTO poker charts include which hands to play after a raise, which to 3bet, etc. Solvers can help you decide which preflop hands to play (call, raise, re-raise, etc.).

2. Pot Odds

Always make +EV decisions that profit you as a poker player. Understanding pot odds (and equity) can help.

Postflop Pot Odds

Let’s say that we have JhTh on a board of 9h8h2s4c (open-ended straight-flush draw). We have $40 left in our stack and there is $50 in the pot. Our opponent, who has us covered, goes all-in. As calling or folding are our only options, playing GTO involves calculating whether a call is +EV or –EV. (The hand was empty.)

Any remaining heart, Queen, or 7 wins the hand. This means we improve on 15 of the 46 unseen cards, or 32.6% of the time.

What if our opponent has a set? The 4h or 2h could give us a flush, but it could also give the villain a boat. If we reduce outs from 15 to 14.5, our equity would be 31.5%.
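The outs arithmetic above can be checked in a few lines. This is a minimal sketch; the function name `equity_from_outs` is an illustrative choice, and the 14.5 figure is simply the discounted outs count used in the text.

```python
def equity_from_outs(outs: float, unseen_cards: int) -> float:
    """Chance of hitting on the next card: outs divided by unseen cards."""
    return outs / unseen_cards

# Two hole cards and four board cards are known, so 52 - 6 = 46 are unseen.
unseen = 52 - 6

# Nine remaining hearts, three non-heart Queens, three non-heart 7s.
clean_outs = 9 + 3 + 3

# The text discounts half an out in case the opponent holds a set.
discounted_outs = 14.5

print(f"{equity_from_outs(clean_outs, unseen):.1%}")       # 32.6%
print(f"{equity_from_outs(discounted_outs, unseen):.1%}")  # 31.5%
```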

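We must now calculate pot odds. The pot we are calling into is the original $50 plus our opponent's effective $40 bet, or $90.

pot odds = our call / (our call + pot)

= $40 / ($40 + $90)

= $40 / $130

= 30.7%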

To make a profitable call, we need at least 30.7% equity. This is a profitable call as we have 31.5% equity (even if villain has a set). Yes, we will lose most of the time, but we will make a small profit in the long run, making a call correct.

Pot odds aren't just for draws, either. If an opponent bets 50% pot, you get 3 to 1 odds on a call, so you must win 25% of the time to be profitable. If your current hand has more than 25% equity against your opponent's perceived range, call.
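To make the break-even logic concrete, here is a small sketch of the pot-odds calculation for the $40 call into a $90 pot, plus the expected value of calling with 31.5% equity. The function names are hypothetical, and the EV formula assumes the hand always goes to showdown with no further betting.

```python
def required_equity(call: float, pot_before_call: float) -> float:
    """Break-even equity: call / (call + pot, including the opponent's bet)."""
    return call / (call + pot_before_call)

def call_ev(equity: float, call: float, pot_before_call: float) -> float:
    """EV of calling: win the pot `equity` of the time, lose the call otherwise."""
    return equity * pot_before_call - (1 - equity) * call

# All-in example: $50 pot plus the opponent's effective $40 bet.
pot = 50 + 40
need = required_equity(40, pot)
ev = call_ev(0.315, 40, pot)

print(f"equity needed: {need:.2%}")  # 30.77%
print(f"EV of calling: ${ev:.2f}")   # $0.95

# Half-pot bet: call 0.5 into a pot of 1.5, i.e. 3 to 1.
half_pot = required_equity(0.5, 1.5)
print(f"half-pot bet needs: {half_pot:.0%}")  # 25%
```

The EV is only about a dollar per hand, which matches the text's point that the call loses most of the time but is slightly profitable in the long run.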

Preflop Pot Odds

Preflop, you raise to 3bb and the button 3bets to 9bb. You must decide how to act. In situations like these, we can actually use pot odds to assist our decision-making.

This pot is:

(our open+3bet size+small blind+big blind)

(3bb+9bb+0.5bb+1bb)

= 13.5

This means we must call 6bb to win a pot of 13.5bb, which requires 30.7% equity against the 3bettor's range.
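The same calculation works preflop. The sketch below assumes, as the example does, that both blinds fold and their chips stay in the pot as dead money; the function name and default blind sizes are illustrative.

```python
def preflop_required_equity(open_size: float, three_bet: float,
                            small_blind: float = 0.5,
                            big_blind: float = 1.0) -> float:
    """Equity needed to call a 3bet after open-raising, with dead blinds."""
    pot = open_size + three_bet + small_blind + big_blind
    to_call = three_bet - open_size  # we already put in our open
    return to_call / (to_call + pot)

# We open to 3bb and face a 3bet to 9bb: call 6bb to win 13.5bb.
need = preflop_required_equity(3, 9)
print(f"{need:.2%}")  # 30.77%
```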

Three additional factors must be considered:

Position: Being out of position against our opponent makes it harder to realize our hand's equity, as he can use his position to put us in tough spots. To continue profitably against villain's hand range, we should add 7% to the equity we require.

Implied Odds / Reverse Implied Odds: The ability to win or lose significantly more post-flop (than pre-flop) based on our remaining stack.

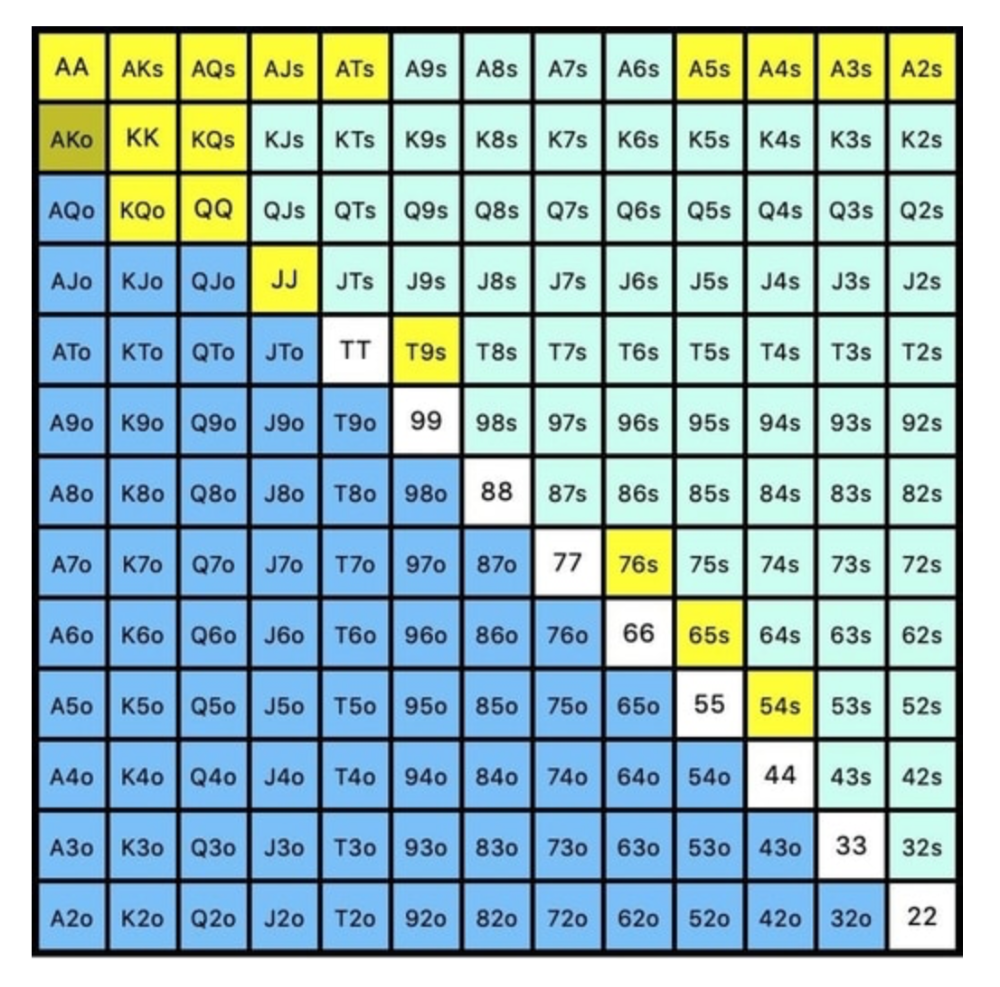

While 3bet statistics can be gathered with a large enough sample size (e.g. an 8% 3bet stat from the button), the numbers don't tell us which 8% of hands villain could be 3betting with. The depolarized and polarized charts below each show roughly 8% of possible hands.

Depolarized hand range (7.4%):

Polarized hand range (7.54%):

Each hand range has different contents. We don't know if he 3bets some hands and calls or folds others.

Using an exploitative strategy can help you play correctly against a hand range. The next GTO concept will make things easier.

3. Minimum Defense Frequency:

This concept refers to the % of our range we must continue with (by calling or raising) to avoid being exploited by our opponents. This concept is most often used off-table and is difficult to apply in-game.

These beginner GTO concepts will help your decision-making during a hand, especially against aggressive opponents.

MDF formula:

MDF = POT SIZE/(POT SIZE+BET SIZE)

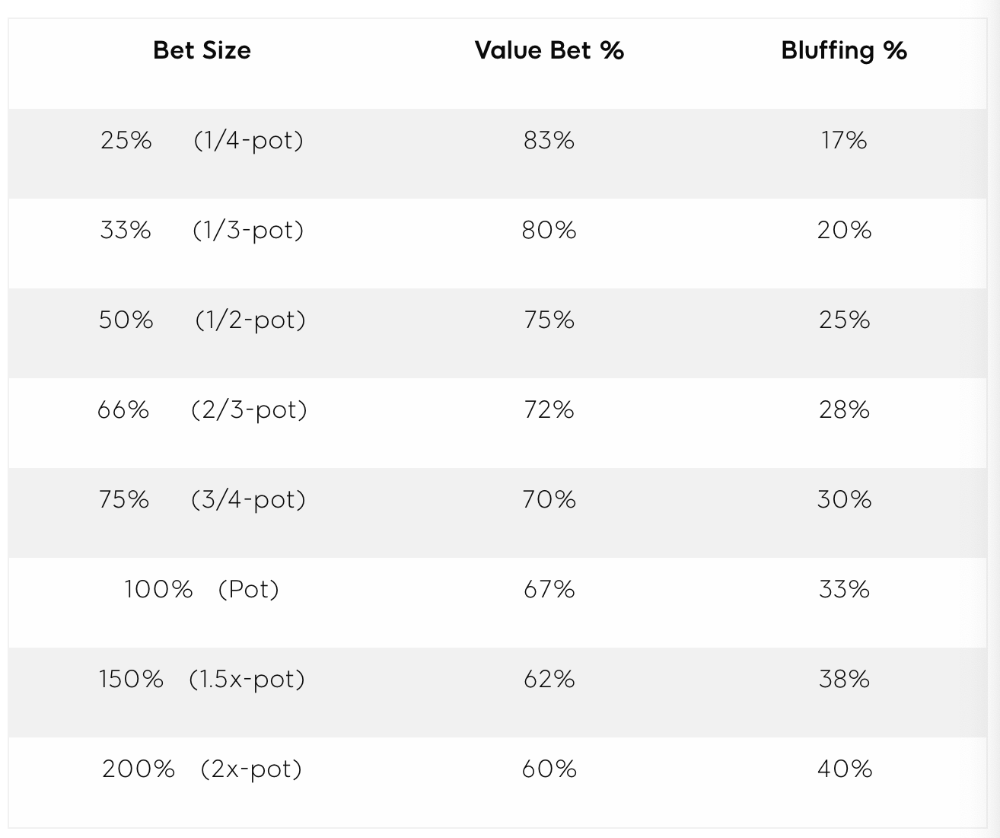

Here's a poker GTO chart of common bet sizes and minimum defense frequency.
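The MDF formula above can be tabulated directly. This is a minimal sketch; the list of bet sizes is an assumption chosen for illustration and may not match the sizes shown in the chart.

```python
def mdf(pot: float, bet: float) -> float:
    """Minimum defense frequency: pot / (pot + bet)."""
    return pot / (pot + bet)

# Bet sizes as fractions of the pot (illustrative selection).
bet_fractions = (0.25, 0.33, 0.5, 0.66, 0.75, 1.0, 1.5, 2.0)

for fraction in bet_fractions:
    # Using a pot of 1.0 makes the bet equal to its fraction of the pot.
    print(f"bet {fraction:>4.0%} of pot -> defend {mdf(1.0, fraction):.1%}")
```

Note that MDF is the complement of the bluffer's break-even point: against a pot-sized bet you defend 50%, because a pure bluff of that size needs to work more than half the time to profit.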

Take the number of hand combos in your starting hand range and use the MDF to determine which hands to continue with. Choose hands with the most playability and equity against your opponent's betting range.

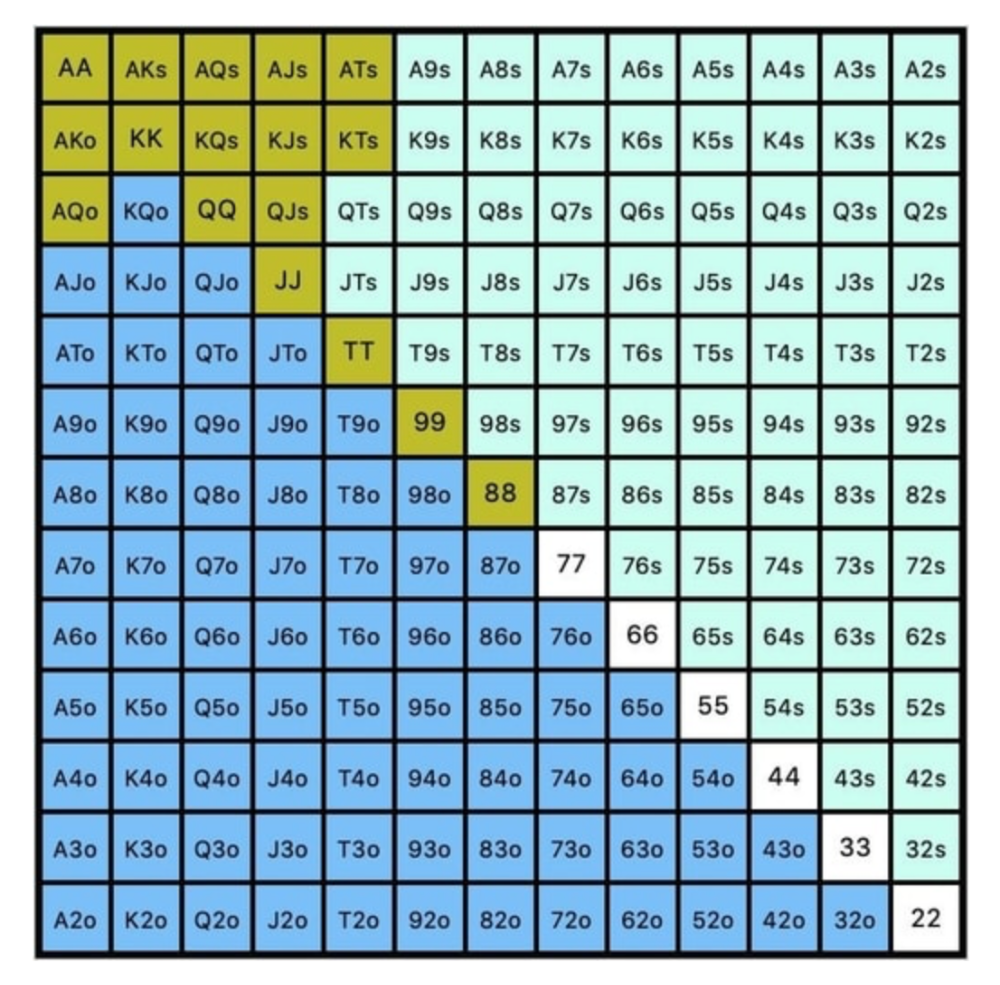

Say you open-raise from the HJ and the BB calls. The flop comes Qh9h6c. Your opponent leads into you with a half-pot bet. MDF suggests continuing with 67% of our range.

Using the above starting hand chart, we can determine that the HJ opens 254 combos:

We must defend 67% of these hands, or 170 combos, according to MDF. Hands we should keep include:

Flush draws

Open-Ended Straight Draws

Gut-Shot Straight Draws

Overcards

Any Pair or better

So, our flop continuing range could be:

Some highlights:

Fours and fives have little chance of improving on the turn or river.

We only continue with AX hearts (with a flush draw) without a pair or better.

We'll also include 4 AJo combos: the three that contain the Ace of hearts, plus AcJh, which can block a backdoor nut flush combo.

Let's assume all these hands are called and the turn is blank (2 of spades). Opponent bets full-pot. MDF says we must defend 50% of our flop continuing range, or 85 of 170 combos, to be unexploitable. This strategy includes our best flush draws, straight draws, and made hands.

Here, we continue with:

Nut flush draws

Pair + flush draws

GS + flush draws

Second Pair, Top Kicker+

One combo of JJ that doesn’t block the flush draw or backdoor flush draw.

On the river, we can fold our missed draws and keep our best made hands. When calling with weaker hands, consider blocker effects and card removal to avoid overcalling and decide which combos to continue.
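The combo counts in this example follow from applying MDF street by street. The sketch below reproduces them, assuming MDF is rounded to a whole percent before multiplying (which is how 254 combos become 170 rather than 169); `combos_to_defend` is a hypothetical helper name.

```python
def combos_to_defend(range_combos: int, pot: float, bet: float) -> int:
    """Number of combos to continue with, using MDF rounded to a whole percent."""
    mdf = round(pot / (pot + bet), 2)
    return round(range_combos * mdf)

hj_opening_combos = 254  # HJ opening range from the example

# Flop: opponent bets half pot, so MDF is 67%.
flop_continue = combos_to_defend(hj_opening_combos, pot=1.0, bet=0.5)

# Turn: opponent bets full pot, so MDF is 50% of the flop continuing range.
turn_continue = combos_to_defend(flop_continue, pot=1.0, bet=1.0)

print(flop_continue, turn_continue)  # 170 85
```

MDF only says how many combos to keep; which ones to keep is still a judgment about playability, equity, and blockers, as described above.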

4. Poker GTO Bet Sizing

To avoid being exploited, balance your bluffs and value bets. The right mix in your betting range depends on how much you bet (in relation to the pot). Strictly speaking, this concept only applies on the river, as draws (bluffs) on the flop and turn still have equity (and are therefore not total bluffs).

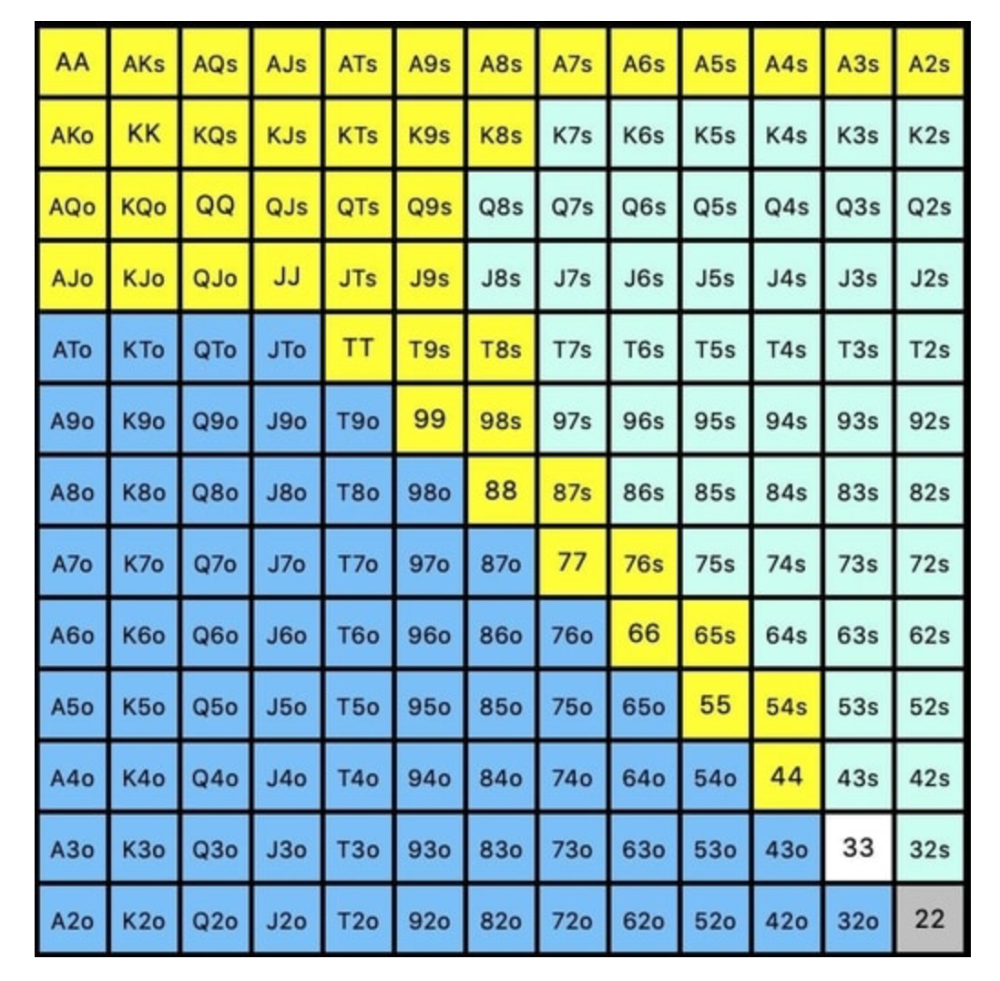

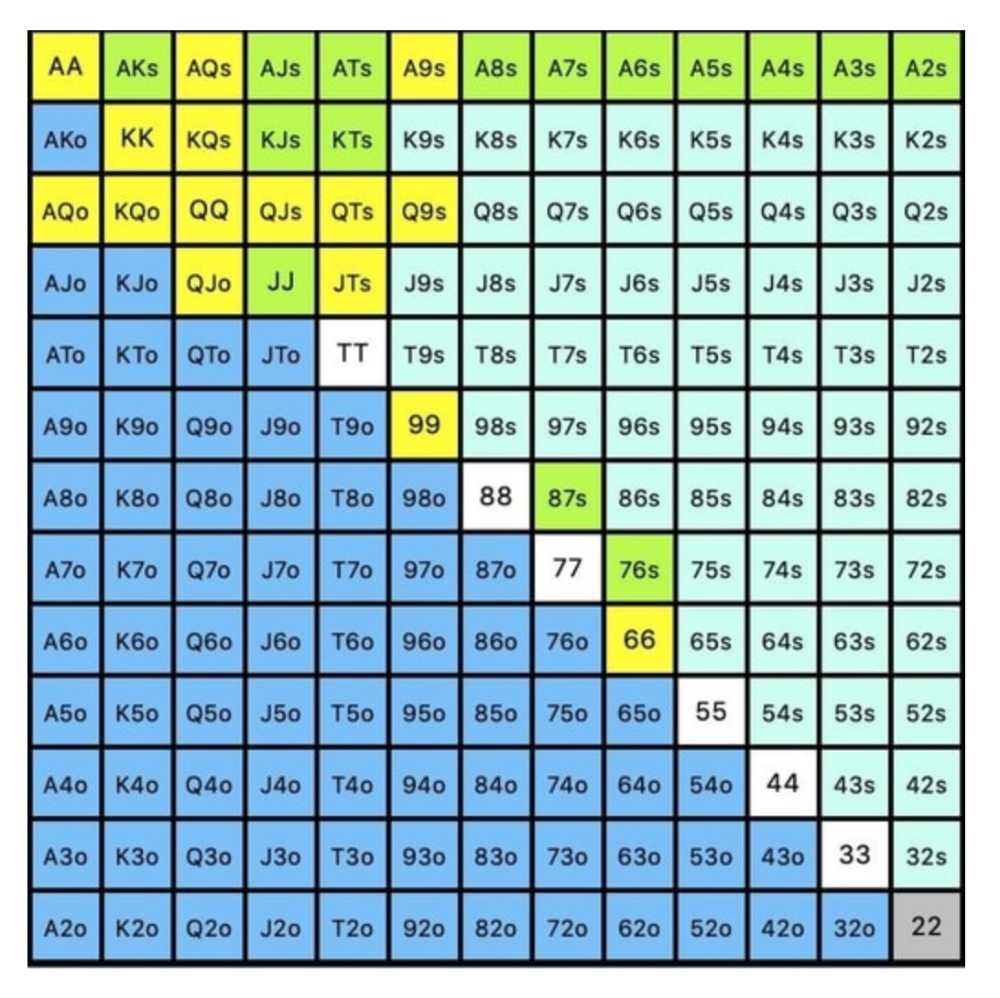

On the flop, you want a 2:1 bluff-to-value-bet ratio. On the flop, there won't be as many made hands as on the river, and your bluffs will usually contain equity. The turn should have a "bluffing" ratio of 1:1. Use the chart below to determine GTO river bluff frequencies (relative to your bet size):

This chart relates to your opponent's pot odds. If you bet 50% pot, your opponent gets 3:1 odds and must win 25% of the time to call profitably. Poker GTO theory suggests making 25% of your betting range bluff combinations, so that your opponent is indifferent between calling and folding.
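The river bluffing share can be derived from the caller's pot odds: it is the share of bluffs that makes a call break even. The sketch below shows this under the simplifying assumption that the caller beats every bluff and loses to every value bet; the function names and the 30 value combos are illustrative.

```python
def river_bluff_share(pot: float, bet: float) -> float:
    """Share of the betting range that should be bluffs: bet / (pot + 2 * bet)."""
    return bet / (pot + 2 * bet)

def caller_ev(bluff_share: float, pot: float, bet: float) -> float:
    """Caller's EV: wins pot + bet against bluffs, loses the call against value."""
    return bluff_share * (pot + bet) - (1 - bluff_share) * bet

def bluff_combos_for(value_combos: float, pot: float, bet: float) -> float:
    """Bluff combos that balance a given number of value combos."""
    share = river_bluff_share(pot, bet)
    return value_combos * share / (1 - share)

half_pot_share = river_bluff_share(pot=1.0, bet=0.5)
print(f"{half_pot_share:.0%}")                      # 25%
print(caller_ev(half_pot_share, pot=1.0, bet=0.5))  # 0.0, the caller is indifferent
print(bluff_combos_for(30, pot=1.0, bet=0.5))       # 10.0 bluffs for 30 value combos
```

Larger bets offer the caller worse odds, so they support more bluffs: a pot-sized bet allows one bluff for every two value combos.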

The best river bluffs don't block the hands you want your opponent to have. For example, bluffing with a missed Ace-high flush draw is often a mistake: you hold two cards of the flush suit, which makes it less likely that your opponent holds the missed flush draw you want him to have when you bluff the river. Ace-high also usually has some river showdown value.

If the river brings a 3-flush board and you want to bluff-raise, you can do so with AX combos that hold the Ace of the flush suit. Holding that Ace blocks the nut flush, so your opponent cannot have that combo.

5. Bet Sizes and Frequency

GTO beginner strategies aren't just bluffs and value bets. They show how often and how much to bet in certain spots. Top players have benefited greatly from poker solvers, which we'll discuss next.

GTO Poker Software

In recent years, various poker GTO solvers have been released to help beginner, intermediate, and advanced players play balanced/GTO poker in various situations.

PokerSnowie and PioSolver are popular GTO and poker study programs.

While you can't compute players' hand ranges and what hands to bet or check with in real time, studying GTO play strategies with these programs will pay off. It will improve your poker thinking and understanding.

Solvers can help you balance ranges, choose optimal bet sizes, and master cbet frequencies.

GTO Poker Tournament

Late-stage tournaments have shorter stacks than cash games. To help players follow GTO poker guidelines when shortstacked, Nash charts have been created, tweaked, and used for many years. They show which hands to push all-in with (and also when to call), depending on how many big blinds you have.

The charts are for heads-up push/fold. In a multi-player game, the "pusher" chart can only be used if play is folded to you in the small blind. The "caller" chart can only be used if you're in the big blind and assumes a small blind "pusher" (with a much wider range than if a player in another position was open-shoving).

Divide the pusher chart's numbers by 2 to see which hand to use from the Button. Divide the original chart numbers by 4 to find the CO's pushing range. Some of the figures will be impossible to calculate accurately for the CO or positions to the right of the blinds because the chart's highest figure is "20+" big blinds, which is also used for a wide range of hands in the push chart.

Both of the GTO charts below are ideal for heads-up play, but exploitative HU shortstack strategies can lead to more +EV decisions against certain opponents. Following the charts will make your play GTO and unexploitable.

Poker pro Max Silver created the GTO push/fold software SnapShove. (It's accessible online at www.snapshove.com or as iOS or Android apps.)

Players can access GTO shove range examples in the full version. (You can customize the number of big blinds you have, your position, the size of the ante, and many other options.)

In Conclusion

Due to the constantly changing poker landscape, players are always improving their skills. Exploitative strategies often yield higher profit margins than GTO-based approaches, but knowing GTO beginner and advanced concepts can give you an edge for a few reasons.

It creates a solid gameplay base.

Having a baseline makes it easier to exploit certain villains.

You can avoid leveling wars with your opponents by making sound poker decisions based on GTO strategy.

It doesn't require assuming opponents' play styles.

Not results-oriented.

This is just the beginning of GTO and poker theory. Consider investing in the GTO poker solver software listed above to improve your game.

Chris Moyse

4 years ago

Sony and LEGO raise $2 billion for Epic Games' metaverse

‘Kid-friendly’ project holds $32 billion valuation

Epic Games announced today that it has raised $2 billion USD from Sony Group Corporation and KIRKBI (holding company of The LEGO Group). Each company contributed $1 billion to Epic Games' upcoming ‘metaverse' project.

“We need partners who share our vision as we reimagine entertainment and play. Our partnership with Sony and KIRKBI has found this,” said Epic Games CEO Tim Sweeney. A new metaverse will be built where players can have fun with friends, brands can create creative and immersive experiences, and creators can thrive.

Last week, LEGO and Epic Games announced their plans to create a family-friendly metaverse where kids can play, interact, and create in digital environments. The service's users' safety and security will be prioritized.

With this new round of funding, Epic Games' project is now valued at $32 billion.

“Epic Games is known for empowering creators large and small,” said KIRKBI CEO Søren Thorup Sørensen. “We invest in trends that we believe will impact the world we and our children will live in. We are pleased to invest in Epic Games to support their continued growth journey, with a long-term focus on the future metaverse.”

Epic Games is expected to unveil its metaverse plans later this year, including its name, details, services, and release date.

Ash Parrish

3 years ago

Sonic Prime and indie games on Netflix

Netflix will stream Spiritfarer, Raji: An Ancient Epic, and Lucky Luna.

Netflix's Geeked Week brought a slew of announcements. The flurry of reveals for The Sandman, The Umbrella Academy season 3, One Piece, and more also included game and game-adjacent announcements.

Netflix released a teaser for Cuphead season 2 ahead of its August premiere, featuring more of Grey DeLisle's Ms. Chalice. DOTA: Dragon's Blood season 3 hits Netflix in August. Tekken, the fighting game that throws kids off cliffs, gets an anime, Tekken: Bloodline.

Netflix debuted a clip of Sonic Prime before Sonic Origins in June and Sonic Frontiers in 2022.

Castlevania: Nocturne will follow Richter Belmont.

Netflix is reviving licensed games with titles based on its shows. There's a Queen's Gambit chess game, a Shadow and Bone RPG, a La Casa de Papel heist adventure, and a Too Hot to Handle game where a pregnant woman must choose between stabbing her cheating ex or forgiving him.

Riot's rhythm platformer Hextech Mayhem debuted on Netflix last year, and now Netflix is adding games from Devolver Digital. Reigns: Three Kingdoms is a card game that lets players choose the fate of Three Kingdoms-era China by swiping left or right on cards. Spiritfarer, the "cozy game about death" from 2020, and Raji: An Ancient Epic are coming to Netflix. Poinpy, a vertical climber from the creator of Downwell, is now on Netflix.

Desta: The Memories Between is a turn-based strategy game set in dreams and memories.

Snowman's Lucky Luna will also be added soon.

With these games, Netflix is expanding beyond dinky mobile games — it plans to have 50 by the end of the year — and could be a serious platform for indies that want to expand into mobile. It takes gaming seriously.

You might also like

JEFF JOHN ROBERTS

3 years ago

What just happened in cryptocurrency? A plain-English Q&A about Binance's FTX takedown.

Crypto people have witnessed things. They've seen big hacks, mind-boggling swindles, and amazing successes. They've never seen a day like Tuesday, when the world's largest crypto exchange murdered its closest competitor.

Here's a primer on Binance and FTX's lunacy and why it matters if you're new to crypto.

What happened?

CZ, a shrewd Chinese-Canadian billionaire, runs Binance. FTX, a newcomer, has challenged Binance in recent years. SBF (Sam Bankman-Fried)—a young American with wild hair—founded FTX (initials are a thing in crypto).

Last weekend, CZ complained about SBF's lobbying and then exploited Binance's market power to attack his competitor.

How did CZ do that?

CZ invested in SBF's new cryptocurrency exchange when they were friends. CZ sold his investment in FTX for FTT when he no longer wanted it. FTX clients utilize those tokens to get trade discounts, although they are less liquid than Bitcoin.

SBF made a mistake by giving CZ too many FTT tokens, which gave CZ leverage over FTX. It's like Pepsi handing Coca-Cola a lot of stock it could sell at any time. CZ got upset with SBF and flooded the market with FTT tokens.

SBF owns a trading fund that holds many FTT tokens, so this was catastrophic. SBF sought to defend FTT's value by selling other assets to buy up the FTT tokens flooding the market, but it didn't work, and as FTT's value plummeted, his liabilities exceeded his assets. By Tuesday, his companies were insolvent, so he sold them to his competitor.

Crazy. How could CZ do that?

CZ likely did this to crush a rising competitor. It was also personal. In recent months, regulators have been tough on the crypto business, and Binance and FTX have been trying to stay on their good side. CZ believed SBF was turning U.S. authorities against him by saying CZ was linked to China, so CZ took revenge.

“We supported previously, but we won't pretend to make love after divorce. We're neutral. But we won't assist people that push against other industry players behind their backs,” CZ stated in a tragic tweet on Sunday. He crushed his rival's company two days later.

So does Binance now own FTX?

No. Not yet. CZ has only stated that Binance signed a "letter of intent" to acquire FTX. CZ and SBF say Binance will protect FTX consumers' funds.

Who’s to blame?

You could blame CZ for using his control over FTX to destroy it. SBF is also being criticized for not disclosing the full overlap between FTX and his trading company, which controlled plenty of FTT. If he had been upfront, someone might have warned FTX about this vulnerability earlier, preventing this mess.

Others have alleged that SBF used customer funds to patch holes in his companies' balance sheets. That happened at multiple crypto startups that collapsed this spring, which is unfortunate. These are allegations, not proof.

Why does this matter? Isn't this common in crypto?

Crypto is notorious for shady executives and pranks. But FTX is the second-largest crypto business, and SBF was widely regarded as the industry's golden boy who would help it get on authorities' good side. Until now.

Does this affect cryptocurrency prices?

Short-term, it's bad. Prices fell on suspicions that FTX was in peril, then rallied when Binance rescued it, only to fall again later on Tuesday.

These events have hurt FTT and Solana, a token closely associated with SBF. It appears that a huge token selloff is affecting the rest of the market. Bitcoin fell 10% and Ethereum 15%, which is bad but not catastrophic for the two largest coins by market cap.

Scott Stockdale

3 years ago

A Day in the Life of Lex Fridman Can Help You Hit 6-Month Goals

The Lex Fridman podcast host has interviewed Elon Musk.

Lex is a minimalist YouTuber. His videos are sloppy. Suits are his trademark.

In a video, he shares a typical day. I've smashed my 6-month goals using its ideas.

Here's his schedule.

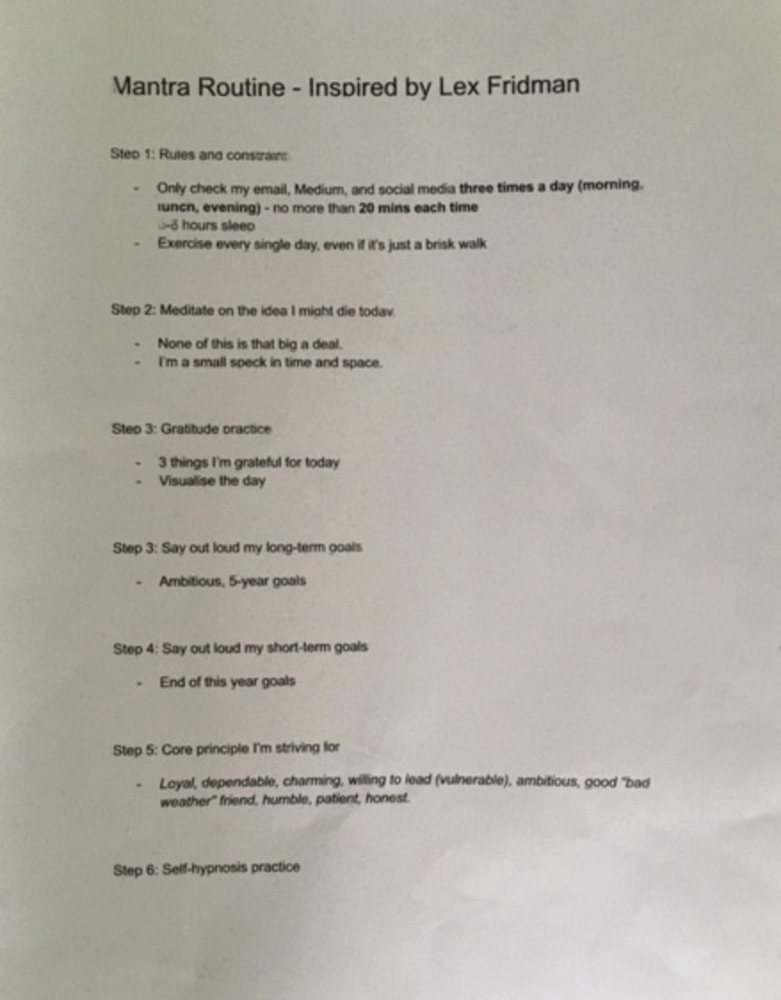

Morning Mantra

Not woo-woo. Lex's mantra reflects his practicality.

Four parts.

Rulebook

"I remember the game's rules," he says.

Among them:

Sleeping 6–8 hours nightly

1–3 times a day, he checks social media.

Every day, despite pain, he exercises. "I exercise uninjured body parts."

Visualize

He imagines his day. "Like Sims..."

He says three things he's grateful for and contemplates death.

"Today may be my last"

Objectives

Then he visualizes his goals. He starts big. Five-year goals.

Short-term goals follow. Lex says they're year-end goals.

Near but out of reach.

Principles

He lists his principles. Assertions. His goals.

He acknowledges his cliche beliefs. Compassion, empathy, and strength are key.

Here's my mantra routine:

Four-Hour Deep Work

Lex begins a four-hour deep work session after his mantra routine. Today's toughest.

AI is Lex's specialty. His video doesn't explain what he does.

Clearly, he works hard.

Before starting, he has water, coffee, and a bathroom break.

"During deep work sessions, I minimize breaks."

He's distraction-free. Phoneless. Silence. Nothing. Any loose ideas are typed into a Google doc for later. He wants to work.

"Just get the job done. Don’t think about it too much and feel good once it’s complete." — Lex Fridman

30-Minute Social Media & Music

After his first deep work session, Lex rewards himself.

10 minutes on social media, 20 on music. Upload content and respond to comments in 10 minutes. 20 minutes for guitar or piano.

"In the real world, I’m currently single, but in the music world, I’m in an open relationship with this beautiful guitar. Open relationship because sometimes I cheat on her with the acoustic." — Lex Fridman

Two-hour exercise

Then exercise for two hours.

He runs six miles daily. Then he chooses how far to go. The run takes about an hour.

He does bodyweight exercises. Every minute for 15 minutes, do five pull-ups and ten push-ups. It's David Goggins-inspired. He aims for an hour a day.

He's hungry. Before running, he takes a salt pill for electrolytes.

He'll then take a one-minute cold shower while listening to cheesy songs. Afterward, he might eat.

Four-Hour Deep Work

Lex's second work session.

He works 8 hours a day.

Again, zero distractions.

Eating

The video's meal doesn't look appetizing, but it's healthy.

It's ground beef with vegetables. Cauliflower is his "ground-floor" veggie. "Carrots are my go-to party food."

Lex's keto diet includes 1800–2000 calories.

He drinks a "nutrient-packed" Athletic Greens shake and takes tablets. They are:

One daily tablet of sodium.

Magnesium glycinate tablets stopped his keto headaches.

Potassium — "For electrolytes"

Fish oil: healthy joints

“So much of nutrition science is barely a science… I like to listen to my own body and do a one-person, one-subject scientific experiment to feel good.” — Lex Fridman

Four-hour shallow session

This work isn't as mentally taxing.

Lex planned to:

Finish last session's deep work (about an hour)

Adobe Premiere podcasting (about two hours).

Email-check (about an hour). Three times a day max. First, check for emergencies.

If he's sick, he may watch Netflix or YouTube documentaries or visit friends.

“The possibilities of chaos are wide open, so I can do whatever the hell I want.” — Lex Fridman

Two-hour evening reading

Nonstop work.

Lex ends the day reading academic papers for an hour. "Today I'm skimming two machine learning and neuroscience papers"

This helps him "think beyond the paper."

He then reads fiction for an hour.

“When I have a lot of energy, I just chill on the bed and read… When I’m feeling tired, I jump to the desk…” — Lex Fridman

Takeaways

Lex's day-in-the-life video is inspiring.

He has positive energy and works hard every day.

Schedule:

Mantra Routine includes rules, visualizing, goals, and principles.

Deep Work Session #1: Four hours of focus.

10 minutes social media, 20 minutes guitar or piano. "Music brings me joy"

Six-mile run, then bodyweight workout. Two hours total.

Deep Work #2: Four hours with no distractions. Google Docs stores random thoughts.

Lex supplements his keto diet.

This four-hour session is "open to chaos."

Evening reading: academic papers followed by fiction.

"I value some things in life. Work is one. The other is loving others. With those two things, life is great." — Lex Fridman

Enrique Dans

3 years ago

When we want to return anything, why on earth do stores still require a receipt?

A friend told me of an incident she found particularly irritating: a retailer where she is a frequent client, with an account and loyalty card, asked for the item's receipt.

We all know that stores collect every bit of data they can on us, including our socio-demographic profile, address, shopping habits, and everything we've ever bought, so why would they need a fading receipt? Who knows? That their consumers try to pass off other goods? It's easy to verify past transactions to see when the item was purchased.

That's it. Why require receipts? Companies send us incentives, discounts, and other marketing, yet when we need something, we have to prove we're not cheating.

Why require us to preserve data and documents when our governments and governmental institutions already have them? Why do I need to carry documents like my driver's license if the authorities can check if I have one and what state it's in once I prove my identity?

We shouldn't be required to give someone data or documents they already have. The days of lining up with our paperwork for a stern official to inform us something is missing are over.

If even our slow, bureaucratic, and all-powerful government has become sensible, how can retailers still ask whether you have a receipt? And if you don't, then what? The shop may refuse your return (which has a two-year window, longer than most purchase tickets last) or may only let you exchange the item.

Isn't this an anachronism in the age of CRMs, customer files that know what we ate for breakfast, and loyalty programs? If governments and bureaucracies have learnt to use their own files and make life easier for the citizen, why do retailers ask for a receipt?

They're adding friction to the system. They know we are entitled to a refund, a warranty claim, or our money back. But if they impose ludicrous requirements, like keeping the purchase receipt in our wallet (wallet? another anachronism, if I leave the house with only my smartphone!), it will dissuade some individuals and tip the scales in their favor when it comes to limiting returns. Some manager will take credit for lowering returns and collect her annual bonus. Having the wrong metrics is common in management.

To slow things down, asking for a receipt is like asking us to perform a handstand and leap 20 times on one foot. You have my information, use it to send me everything, and know everything I've bought, yet when I need a two-way service, you refuse to utilize it and require that I keep it and prove it.

Refuse as customers. If retailers want our business, they should treat us well, not just when we spend money. If I come to return a product, claim its use or warranty, or be taught how to use it, I am the same person you treated wonderfully when I bought it. Remember that, and act accordingly.

A store should use my information for everything, not just what it wants. Keep my info, but don't sell me anything.