More on Cooking

Joseph Mavericks

3 years ago

Apple's Top 100 Meeting: Lessons from Steve Jobs's Secret Agenda

Jobs' secret emails became public through litigation with Samsung.

Steve Jobs sent Phil Schiller an email at the end of 2010. Top 100 A was the codename for Apple's annual Top 100 executive meetings. The 2011 one was scheduled.

Everything about this gathering is secret, even attendance. The location is hidden, and attendees can't even drive themselves. Instead, buses transport them to a 2-3 day retreat.

Because of litigation with Samsung, this Top 100 meeting's agenda was made public in 2014. The meeting came at a critical milestone in Apple's history; it was not just another Top 100 meeting. Apple faced many obstacles in the 2010s to remaining a technological leader. It was already making more money from non-PC products than from its best-selling Macintosh line. This was the last Top 100 gathering Steve Jobs would attend before his death, and he wanted to make sure his messages carried on before handing his company over to Tim Cook.

In this post, we'll discuss lessons from Jobs' meeting agenda. Two sorts of entrepreneurs can use these tips:

Those who manage a team in a business and must ensure that everyone is working toward the same goals, upholding the same principles, and being inspired by the same future.

Those who are sole proprietors or independent contractors and who must maintain strict self-discipline in order to stay innovative in their industry and adhere to their own growth strategy.

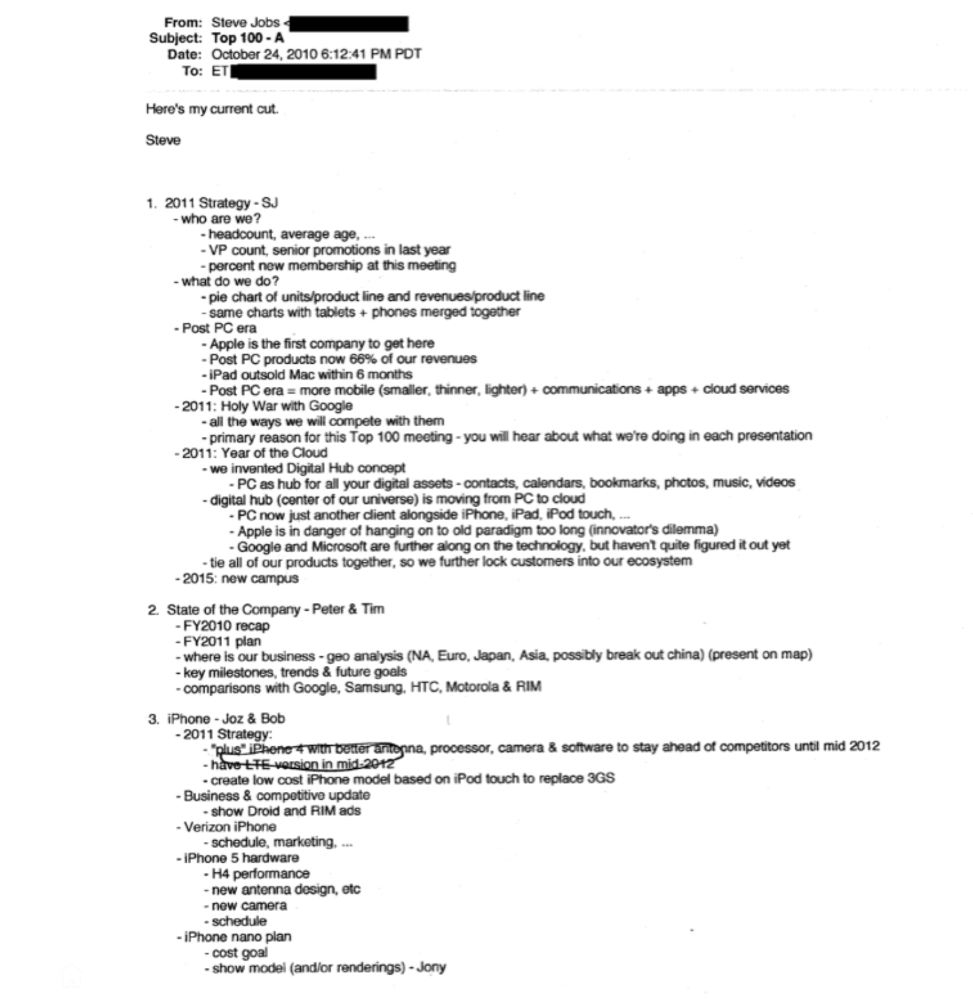

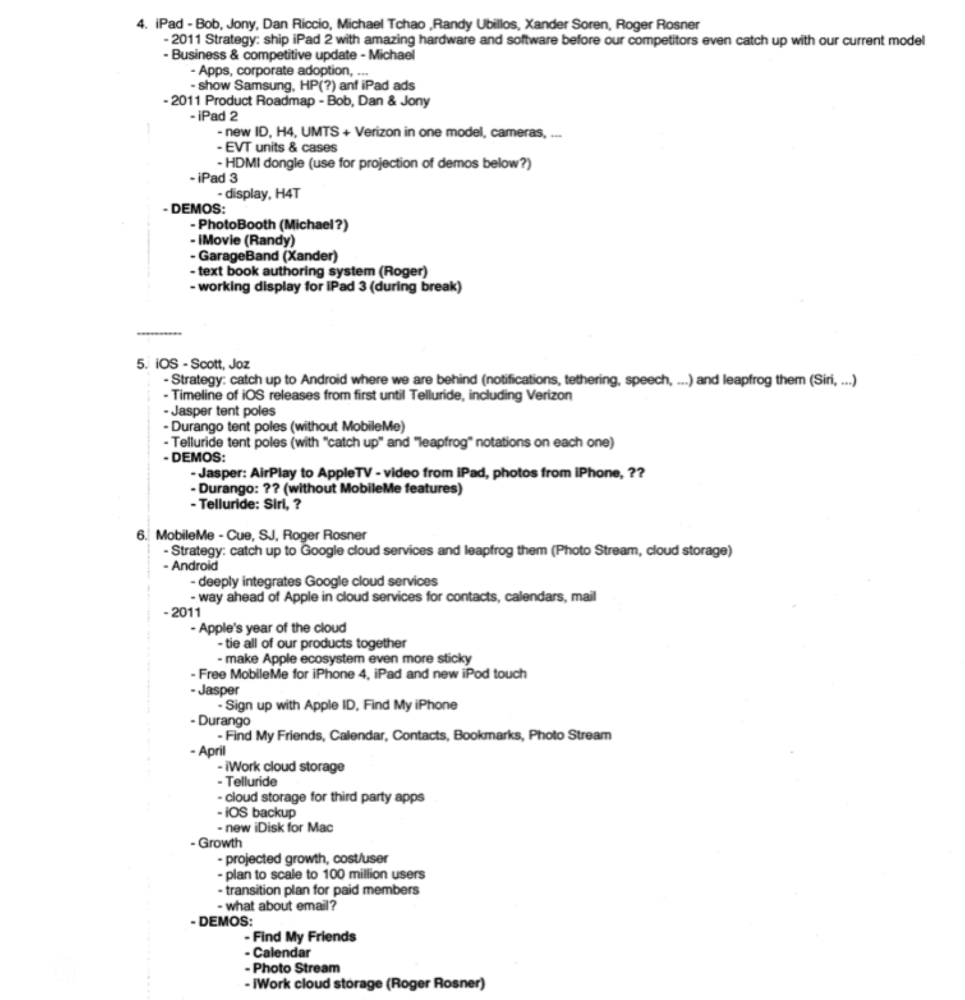

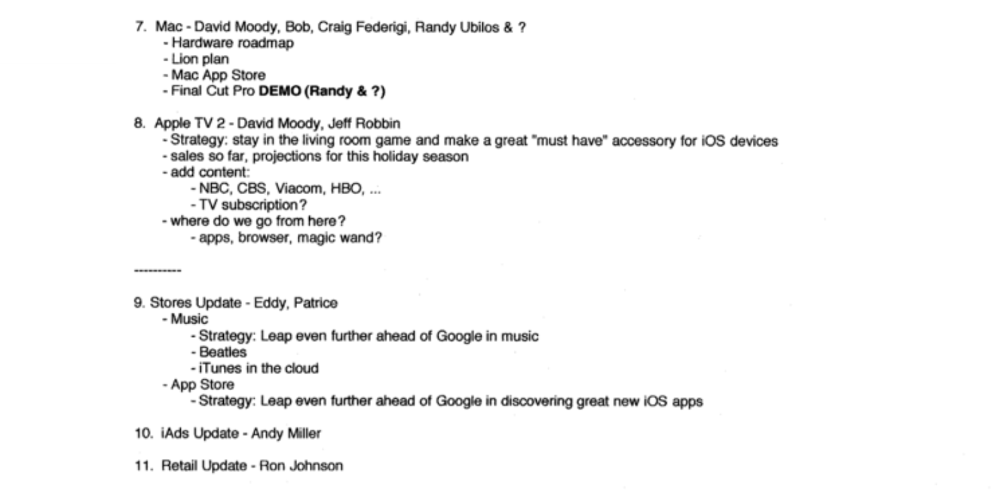

Here's Steve Jobs's email outlining the annual meeting agenda. It's an 11-part summary of the company's shape and strategy.

Steve Jobs outlines Apple's 2011 strategy, 10/24/10

1. Correct your data

Business leaders must comprehend their company's metrics. Jobs either mentions critical information he already knows or demands slides showing the numbers he wants. These numbers fall under 2 categories:

Metrics for growth and strategy

"Post PC products now account for 66% of our revenues." As we will see, this was a crucial statistic for Apple, since it signaled the beginning of the Post-PC era and required the company to make significant strategic changes in order to stay ahead of the curve.

Within six months, iPad outsold Mac, another sign of the Post-PC age. As we will see, Jobs thought the iPad would be the next big thing, and item number four on the agenda is one of the most thorough references to the iPad.

Geographical analysis: Here, Jobs emphasizes China, where the company had a slower start than anticipated. One year after this meeting, China was leading Apple's sales growth with 16% of revenue.

Metrics for people & culture

The individuals that make up a firm are more significant to its success than its headcount or average age. That holds true regardless of size, from a 5-person startup to a Fortune 500 firm. Jobs was aware of this, which is why his suggested agenda begins by emphasizing demographic data.

The agenda also requests a statistic on senior promotions in the previous year. It's crucial to demonstrate that if the business is growing, the employees who make it successful must also grow.

2. Recognize the vulnerabilities and strengths of your rivals

Steve Jobs was known for attacking his competition in interviews and in his strategies and roadmaps. This agenda mentions 18 competitors, including:

Google 7 times

Android 3 times

Samsung 2 times

Jobs' agenda email was issued 6 days after Apple's Q4 results call (2010). On the call, Jobs trashed Google and Android. His 5-minute intervention included:

Google has acknowledged that the present iteration of Android is not tablet-optimized.

Future Android tablets will not work (Dead On Arrival)

While Google's app marketplace (then called Android Market) has only 90,000 apps, the Apple App Store has 300,000.

Android is extremely fragmented and is becoming more so.

The App Store for iPad contains over 35,000 applications. The market share of the latest generation of tablets (which debuted in 2011) will be close to nil.

Jobs' aim in blasting the competition on that call was to reassure investors about the upcoming flood of new tablets. Jobs often criticized Google, Samsung, and Microsoft, but he also acknowledged when they did a better job. He was great at detecting his competitors' advantages and devising ways to catch up.

Jobs doesn't hold back in bullet 1 of his agenda. He says that "Google and Microsoft are further along on the technology, but haven't quite figured it out yet," and that Apple should "tie all of our goods together" so that "we further lock customers into our ecosystem."

The plan outlined in bullet point 5 is immediately clear: catch up to Android where Apple is falling behind (notifications, tethering, and speech), and surpass it (Siri). It's important to note that Siri frequently let users down and never quite lived up to expectations.

In bullet 6, on MobileMe, Jobs admits that when it comes to cloud services like contacts, calendars, and mail, Google is far ahead of Apple.

3. Adapt or perish

Steve Jobs was a visionary businessman. He knew personal computers were the future when he worked on the first Macintosh in the 1980s.

Jobs acknowledged the Post-PC age in his 2010 D8 interview.

Walt Mossberg asked Jobs whether the tablet would replace the laptop. Jobs' response:

“You know, when we were an agrarian nation, all cars were trucks, because that’s what you needed on the farm. As vehicles started to be used in the urban centers and America started to move into those urban and suburban centers, cars got more popular and innovations like automatic transmission and things that you didn’t care about in a truck as much started to become paramount in cars. And now, maybe 1 out of every 25 vehicles is a truck, where it used to be 100%. PCs are going to be like trucks. They’re still going to be around, still going to have a lot of value, but they’re going to be used by one out of X people.”

Imagine how forward-thinking that was in 2010, especially coming from the creator of the Macintosh. You have to be willing to recognize that things are changing and that it is time to start over and focus on the next big thing.

Post-PC is priority number 8 in the 2011 Strategy section of his 2010 agenda. Jobs says Apple is the first firm to get here and that Post PC items account for about 66% of its income. The iPad outsold the Mac within 6 months, and the Post-PC age means increased mobility (smaller, thinner, lighter). Samsung had just introduced its first tablet, while Apple was working on the iPad 3 (as mentioned in bullet 4).

4. Plan ahead (and different)

Jobs' agenda warns that Apple risks clinging to outmoded paradigms. Clayton Christensen explains in The Innovator's Dilemma that huge firms neglect disruptive technologies until they become profitable. Samsung's Galaxy Tab, released too late, never caught up to Apple.

Apple faces a similar dilemma with the iPhone, its cash cow for over a decade. It doesn't sell as well as it once did because consumers aren't as excited about new iPhone launches and because, as the technology matures, phones may not need to be upgraded as often.

Large companies' established customer base typically hinders innovation. Clayton Christensen emphasizes that loyal customers of established brands expect better versions of current products rather than altogether new technologies.

Apple's marketing is smart. Apple's ecosystem is trusted by customers, and its products integrate smoothly. So much so that Apple can afford to be a disruptor by doing something no one has ever done before, something the world's largest corporation shouldn't be the first to try. Apple can test the waters and produce a tremendous innovation tsunami, something few corporations can do.

In March 2011, Jobs appeared at an Apple event. During his address, Steve reminded us about Apple's brand:

“It’s in Apple’s DNA, that technology alone is not enough. That it’s technology married with liberal arts, married with the humanities that yields us the results that make our hearts sing. And nowhere is that more true than in these Post-PC devices.”

More than a decade later, Apple remains one of the most innovative and trailblazing companies in the Post-PC world (industry-disrupting products like AirPods and the Apple Watch came out after that 2011 strategy meeting), and it has reinvented how we use laptops with its M1-powered line offering unprecedented performance.

A decade after Jobs' death, Apple remains the world's largest firm, and its former CEO had a crucial part in its expansion. If you can do 1% of what Jobs did, you may be 1% as successful.

Not bad.

Dr. Linda Dahl

3 years ago

We eat corn in almost everything. Is It Important?

Corn Kid went viral on TikTok after being interviewed by Recess Therapy. Tariq, known as the Corn Kid, ate a buttery ear of corn in the video. He's corn crazy. He thinks everyone just has to try it. It turns out, whether we know it or not, we already have.

Corn is a fruit, veggie, and grain. It's the second-most-grown crop. Corn makes up 36% of U.S. exports. In the U.S., it's easy to grow and provides high yields, as proven by the vast corn belt spanning the Midwest, Great Plains, and Texas panhandle. Since 1950, the corn crop has doubled to 10 billion bushels.

You might say, "Fine, but we shouldn't grow it just because we can. Why so much corn? What's all this corn for?"

The reason is both practical and political. Michael Pollan's The Omnivore's Dilemma has the full narrative. In the early 1970s, food costs rose. Nixon subsidized corn to feed the public. Monsanto genetically engineered corn seeds to make them hardier, and soon there was plenty of corn. Everyone ate. Woot! Then there was too much corn. The powers-that-be had to decide what to do with the surplus.

Fortunately for them, corn has a wide range of uses.

First, the edible variants. I divide corn into obvious and stealth.

Obvious corn includes popcorn, canned corn, and corn on the cob. This form isn't always digested and often comes out whole, polka-dotting poop. Corn can also be ground into cornmeal to make cornbread, polenta, and corn tortillas. In moderation, corn provides antioxidants, minerals, and vitamins. Most synthetic Vitamin C comes from GMO corn.

Corn oil, corn starch, dextrose (a sugar), and high-fructose corn syrup are often overlooked. They're stealth corn because they sneak into practically everything. Corn oil is used for frying, baking, and in potato chips, mayonnaise, margarine, and salad dressing. Baby food, bread, cakes, antibiotics, canned vegetables, beverages, and even dairy and animal products include corn starch. Dextrose appears in almost all prepared foods, excluding those with high-fructose corn syrup. HFCS isn't as easily digested as sucrose (from cane sugar). It can also cause other ailments, which we'll discuss later.

Most foods contain corn. It's fed to almost all food animals. 96% of U.S. animal feed is corn. 39% of U.S. corn is fed to livestock. But animals prefer other foods. Omnivore chickens prefer insects, worms, grains, and grasses. Captive cows are fed a total mixed ration, which contains corn. These animals' products, like eggs and milk, are also corn-fed.

There are numerous non-edible by-products of corn that are employed in the production of items like:

fuel-grade ethanol

plastics

batteries

cosmetics

meds/vitamins binder

carpets, fabrics

glutathione

crayons

Paint/glue

How does corn affect you? Consider fast food for dinner. You order a cheeseburger, fries, and a large Coke at the counter (or at the drive-through in the suburbs). You tell yourself, "No corn here." But all of it contains corn. Let's deconstruct:

The meat and cheese come from cows fed corn, and both are bound with corn syrup and corn starch. The bun contains corn flour and dextrose, and the fries were fried in corn oil. High-fructose corn syrup sweetens the drink, and corn helps make the cup and straw.

Just about everything contains corn. Then what? A cornspiracy, perhaps? Is eating too much maize an issue, or should we strive to stay away from it whenever possible?

As I've said, eating some corn can be healthy. 92% of U.S. corn is genetically modified, according to the Center for Food Safety. The modifications are intended to boost corn yields. Some sweet corn is genetically modified to produce its own insecticide, a protein made by Bacillus thuringiensis that is deadly to insects. It's safe to eat in sweet corn. Concerns exist about feeding farm animals so much corn, modified or not.

High fructose corn syrup should be consumed in moderation. Fructose, a sugar, isn't easily metabolized. Excess fructose is linked to diabetes, fatty liver, obesity, and heart disease. It causes inflammation, which might aggravate gout. Candy, packaged sweets, soda, fast food, juice drinks, ice cream, ice cream topping syrups, sauces and condiments, jams, bread, crackers, and pancake syrup contain the most high fructose corn syrup. These are everyday foods with few nutrients. Check labels and choose goods sweetened with cane sugar (sucrose). Or, eat corn like the Corn Kid.

Karthik Rajan

3 years ago

11 Cooking Hacks I Wish I Knew Earlier

Quick, easy and tasty (and dollops of parenting around food).

My wife and mom are both great mothers. They're super-efficient planners. They soak and ferment food. My 104-year-old grandfather loved fermented foods.

When I'm hungry and need something fast, I waffle to the pantry. Like most people, I like to improvise. I wish I knew these 11 hacks sooner.

1. The world's best pasta sauce only has 3 ingredients.

You watch recipe videos with prepped ingredients. In reality, prepping and washing take time. The food's taste isn't guaranteed. The raw truth at a sublime level is not talked about often.

Sometimes a radical recipe comes along that's so easy and tasty, you're dumbfounded. The Classic Italian Cook Book has such a pasta sauce recipe.

One 28-ounce can of whole, peeled tomatoes, one medium onion (peeled and halved), and 5 tablespoons of butter. And salt to taste.

Combine everything in a single pot and simmer for 45 minutes, uncovered. Stir occasionally. After 45 minutes, discard the onion halves and pour the sauce over pasta. Finish!

This simple recipe fights our deepest fears.

Salt to taste! Customized to perfection, no frills.

2. Reheating rice with ice. Magical.

Most of the world eats rice. I was raised in south India. My grandfather farmed rice in the Cauvery river delta.

The problem with rice: with growing kids, you can't cook just enough. Leftovers are the norm. Microwaves help most people. Ice cubes are the icing on the cake.

Before reheating rice in the microwave, add an ice cube. The ice will steam the rice, making it fluffy and delicious again.

3. Pineapple leaf

If it comes off easily, the pineapple is ripe enough to cut. No second-guessing.

My daughter loves pineapples like her dad. One daddy task is cutting them. Sharing immediate results is therapeutic.

Timing the cut has been the most annoying part over the years. The pineapple leaf tip reveals the ripeness inside. Always loved it.

4. Magic knife words (rolling and curling)

Cutting hand: Roll the blade's back, not its tip, to cut.

Other hand: If you can’t see your finger tips, you can’t cut them. So curl your fingers.

I dislike that schools don't teach financial literacy or cutting skills.

My wife and I used scissors differently for 25 years. We both used the thumb. My index finger, her middle. We googled the difference when I noticed it and laughed. She's right.


5. Best advice about heat

If it's done in the pan, it's overdone on the plate.

This simple advice stands out when we worry about ingredients and proportions.

6. The truth about pasta water

Pasta water should be sea-salty.

Properly seasoning food separates good from great. How much salt? "It depends" is a good line.

Want delicious pasta? Well, then, kind of a lot, to be perfectly honest.

7. Clean as you go

Clean the blender as you go by blending water and dish soap.

I find cleaning as you go easier than cleaning afterwards. This easy tip is gold.

8. Clean as you go (bis)

Microwave a bowl of water, vinegar, and a toothpick for 5 minutes.

2 cups water, 2 tablespoons vinegar, and a toothpick to prevent overflow.

Microwave for 5 minutes. Let the steam work for another 2 minutes. Sponge off dirt and food. Simple.

9 and 10. Tools, tools, tools

Immersion blender and pressure cooker save time and money.

A story: I experienced fatherly pride. My middle-schooler loves science. We discussed boiling. I said that water doesn't need to reach 100°C to boil. She looked confused. The 100-degree figure assumes something: that the air around the water is at normal atmospheric pressure. Changing the pressure changes the boiling point. A pressure cooker raises the pressure, so the water gets hotter than 100°C before it boils and food cooks faster. This saves energy. Pressure cooker magic.

I captivated her. She's into science and sustainable living.

Whistling is a subliminal form of self-expression when done right. Pressure cookers remind me of simple pleasures.

Your handiness depends on your home tools. Immersion blenders are great for pre- and post-cooking. They eliminate chopping and washing. Second only to the dishwasher, in my opinion.

11. One pepper is plenty

A story I share with my daughters.

Once, everyone thought about spice (not spicy). It was more valuable than silk. One of the three mighty oceans was named after a source country. Columbus sailed the wrong way and found America. The explorer named the natives after the spice destination he thought he had reached.

It was pre-internet days. His Google wasn't working.

My younger daughter listens in awe. Strong roots. Image cast. She can contextualize one of the ocean names.

I struggle with spices in daily life. The combinations are mind-boggling. I have more spices than Columbus. A flavor explosion has repercussions: you must follow the recipe closely, without guarantees. My best aha: double down on one spice and move on. If you like it, it's great.

I naturally gravitate towards cumin soups, fennel dishes, mint rice, oregano pasta, basil Thai curry, and cardamom pudding.

Variety enhances life. Each of my dishes is unique.

To each their own comfort food and nostalgic memories.

Happy living!

You might also like

Jerry Keszka

3 years ago

10 Crazy Useful Free Websites No One Told You About But You Needed

The internet is a massive information resource. With so much stuff, it's easy to overlook useful websites. Here are ten essential websites you may not have known about.

1. Companies.tools

Companies.tools shows the tools that successful startups use. The website offers a curated selection of design, research, coding, support, and feedback resources. Whether you need the latest app development platform or a better way to collect client feedback, Companies.tools has it.

2. Excel Formula Bot

Excel Formula Bot can help if you forget a formula. Formula Bot uses AI to convert text instructions into Excel formulas, so you don't have to remember them.

Just tell the Bot what to do, and it will do it. Excel Formula Bot can calculate sales tax and vacation days. When you're stuck, let the Bot help.

3. TypeLit

TypeLit helps you improve your typing abilities while reading great literature.

TypeLit.io lets you type any book or dozens of preset classics. TypeLit provides real-time feedback on accuracy and speed.

Goals and progress can be tracked. Why not improve your typing and learn great literature with TypeLit?

4. Calm Schedule

Finding a meeting time that works for everyone is difficult. Personal and business calendars might be difficult to coordinate.

Synchronize your two calendars to save time and avoid problems. You may avoid searching through many calendars for conflicts and keep your personal information secret. Having one source of truth for personal and work occasions will help you never miss another appointment.

https://calmcalendar.com/

5. myNoise

myNoise makes the outside world quieter. myNoise is the right noise for a noisy office or busy street.

If you can't locate the right noise, make it. myNoise lets you shape your own soundscape. Shut out distractions. Your ears will thank you.

6. Synthesia

Professional videos require directors, filmmakers, editors, and animators. Now, thanks to AI, you can generate high-quality videos without video editing experience.

AI avatars are the key. You can design a personalized avatar using web-based software like synthesia.io. Its avatars can lip-sync in over 60 languages, so you can make videos for a worldwide audience. There's an AI avatar for every video goal.

Not free. Amazing service, though.

7. Cleanup.pictures

Have you shot a wonderful photo just to notice something in the background? You may have a beautiful headshot but wish to erase an imperfection.

Cleanup.pictures removes undesirable objects from photos. Select an object and its algorithms will erase it.

Cleanup.pictures can help you obtain the ideal shot every time. Next time you take images, let Cleanup.pictures fix any flaws.

8. PDF24 Tools

Editing a PDF can be a pain. Most of us don't know Adobe Acrobat's functionalities. Why buy something you'll rarely use? Better options exist.

PDF24 is an online PDF editor that's free and subscription-free. Rotate, merge, split, compress, and convert PDFs in your browser. PDF24 makes document signing easy.

Upload your document, sign it (or generate a digital signature), and download it. It's easy and free. PDF24 is a free alternative to pricey PDF editing software.

9. Class Central

Finding online classes is now much easier. Class Central lists classes from Harvard, Stanford, Coursera, Udemy, Google, Amazon, and others in one spot.

Whether you want to acquire a new skill or increase your knowledge, you'll find something. New courses bring variety.

10. Rome2rio

Foreign travel offers countless transport alternatives. How do you get from A to B? It’s easy!

Rome2rio will show you the best method to get there, including which mode of transport is ideal.

Plane

Car

Train

Bus

Ferry

Driving

Shared bikes

Walking

Do you know any free, useful websites?

Olga Kharif

3 years ago

A month after freezing customer withdrawals, Celsius files for bankruptcy.

Alex Mashinsky, CEO of Celsius, speaks at Web Summit 2021 in Lisbon.

Celsius Network filed for Chapter 11 bankruptcy a month after freezing customer withdrawals, joining other crypto casualties.

Celsius took the step to stabilize its business and restructure for all stakeholders. The filing was done in the Southern District of New York.

The company, which amassed more than $20 billion by offering 18% interest on cryptocurrency deposits, paused withdrawals and other functions in mid-June, citing "extreme market conditions."

As the Fed raises interest rates aggressively, it hurts risk sentiment and squeezes funding costs. Voyager Digital Ltd. filed for Chapter 11 bankruptcy this month, and Three Arrows Capital has called in liquidators.

Celsius called the pause "difficult but necessary." Without the halt, "the acceleration of withdrawals would have allowed certain customers to be paid in full while leaving others to wait for Celsius to harvest value from illiquid or longer-term asset deployment activities," it said.

Celsius declined to comment. CEO Alex Mashinsky said the move will strengthen the company's future.

The company wants to keep operating. It's not requesting permission to allow customer withdrawals right now; Chapter 11 will handle customer claims. The filing estimates assets and liabilities between $1 billion and $10 billion.

Celsius is advised by Kirkland & Ellis, Centerview Partners, and Alvarez & Marsal.

Yield promises

Celsius promised 18% returns on crypto loans. It lent those coins to institutional investors and participated in decentralized-finance apps.

When TerraUSD (UST) and Luna collapsed in May, Celsius pulled its funds from Terra's Anchor Protocol, which offered 20% returns on UST deposits. Recently, another large holding, staked ETH, or stETH, which is tied to Ether, became illiquid and discounted to Ether.

The lender is one of many crypto companies hurt by risky bets in the bear market. Also, Babel halted withdrawals. Voyager Digital filed for bankruptcy, and crypto hedge fund Three Arrows Capital filed for Chapter 15 bankruptcy.

According to blockchain data and tracker Zapper, Celsius repaid all of its debt in Aave, Compound, and MakerDAO last month.

Celsius charged Symbolic Capital Partners Ltd. 2,000 Ether as collateral for a cash loan on June 13. According to company filings, Symbolic was charged 2,545.25 Ether on June 11.

In July 6 filings, Celsius said it reshuffled its board, appointing two new members and firing others.

DC Palter

2 years ago

Why Are There So Few Startups in Japan?

Japan's startup challenge: 7 reasons

Every day, another Silicon Valley business is bought for a billion dollars, making its founders rich while growing the economy and improving consumers' lives.

Google, Amazon, Twitter, and Medium dominate our daily lives, as do Tesla automobiles and Moderna's Covid vaccines.

The startup movement started in Silicon Valley, California, but the rest of the world is catching up. Global startup buzz is rising. Except Japan.

644 of CB Insights' 1170 unicorns—successful firms valued at over $1 billion—are US-based. China follows with 302 and India third with 108.

Japan? 6!

That is about 1% of the US total. The world's third-largest economy is tied with small Switzerland for startup success.

Mexico (8), Indonesia (12), and Brazil (12) have more successful startups than Japan (6). South Korea has 16. Yikes! Problem?
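The unicorn counts quoted above are easy to sanity-check with a few lines of arithmetic. The sketch below is only an illustration that reuses the CB Insights figures cited in this article (1,170 unicorns worldwide: 644 US, 302 China, 108 India, 6 Japan); the variable and function names are made up for the example.

```python
# Unicorn counts as quoted in the article (CB Insights figures).
TOTAL_UNICORNS = 1170
unicorns = {"US": 644, "China": 302, "India": 108, "Japan": 6}


def share_of_total(count: int, total: int = TOTAL_UNICORNS) -> float:
    """Return count as a percentage of the worldwide total."""
    return 100 * count / total


def ratio_to_us(country: str) -> float:
    """Return a country's unicorn count as a percentage of the US count."""
    return 100 * unicorns[country] / unicorns["US"]


if __name__ == "__main__":
    for country, count in unicorns.items():
        print(f"{country}: {count} unicorns, "
              f"{share_of_total(count):.1f}% of the global total")
    print(f"Japan relative to the US: {ratio_to_us('Japan'):.1f}%")
```

On these numbers, Japan holds roughly half a percent of the world's unicorns and a little under 1% of the US count.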

Why Don't Startups Exist in Japan More?

It's not about money. Japanese firms invest in startups. To do so, however, big Japanese firms open offices in Silicon Valley rather than in Tokyo.

Support for startups isn't the issue either. Local governments are competing to be Japan's Shirikon Tani (Silicon Valley), providing entrepreneurs with financing, office space, and founder visas.

Startup accelerators are plentiful: Plug and Play in Tokyo, Osaka, and Kyoto, the Startup Hub in Kobe, and Google for Startups, among others.

Yet most of the companies I've encountered in Japan are either local offices of foreign firms aiming to expand into the Japanese market or small businesses offering local services, rather than startups disrupting a staid industry with new ideas.

There must be a reason Japan can develop world-beating giant corporations like Toyota, Nintendo, Shiseido, and Suntory but not inventive startups.

Culture, obviously. Japanese culture excels in teamwork, craftsmanship, and quality, but it hates moving fast, making mistakes, and breaking things.

If you have a brilliant idea in Silicon Valley, quit your job, get money from friends and family, and build a prototype. To fund the business, you approach angel investors and VCs.

Most non-startup folks aren't aware that venture capitalists don't want good, profitable enterprises. It's wonderful if you're building a solid small business to consult, open shops, or make a specialty product, but you must pay for it yourself or borrow money. Venture capitalists want moon rockets. Silicon Valley is big or bust. Almost 90% will explode and crash. The few successes are remarkable enough to make up for the failures.

Silicon Valley's high-risk, high-reward attitude contrasts with Japan's incrementalism. Japan makes the best automobiles and cleanrooms, but it fails to produce new items that grow the economy.

Changeable? Absolutely. But, what makes huge manufacturing enterprises successful and what makes Japan a safe and comfortable place to live are inextricably connected with the lack of startups.

Barriers to Startup Development in Japan

These are the 7 biggest obstacles to Japanese startup success.

1. Unresponsive Employment Market

While the lifelong employment system in Japan is evolving, the average employee stays at their firm for 12 years (15 years for men at large organizations) compared to 4.3 years in the US. Seniority, not experience or aptitude, determines career routes, making it tough to quit a job to join a startup and then return to corporate work if it fails.

2. Conservative Buyers

US customers will switch to a cheaper, superior product even if it is buggy and undocumented. Japanese corporations demand perfection from their trusted suppliers and stick with them forever. Startups need income fast, yet product evaluation takes forever.

3. Failure Intolerance

A business failure in Japan can ruin a life. Once you have failed, you are marked as a failure forever. That discourages risk-taking. Silicon Valley embraces failure. Build another startup if your first fails. Build a third if that fails. Every setback is viewed as a learning opportunity for success.

4. No Corporate Purchases

Silicon Valley industrial giants will buy fast-growing startups for a lot of money. Many huge firms have stopped developing new goods and instead buy startups after the product is validated.

Japanese companies prefer in-house product development over startup acquisitions. No acquisitions mean no startup investment and no investor reward.

Startup investments can also be monetized through stock market listings. Public stock listings in Japan are risky because the Nikkei was stagnant for 35 years while the S&P rose 14x.

5. Social Unity Above Wealth

In Silicon Valley, everyone wants to be rich. That creates a competitive environment where everyone wants to succeed, but it also promotes fraud and societal problems.

Japan values communal harmony above individual success. Wealthy folks and overachievers are avoided. In Japan, renegades are nearly impossible.

6. Rote Learning Education System

Japanese high school graduates outperform most Americans. Nonetheless, Japanese education is known for its rote memorization. The American system, which fails too many kids, emphasizes creativity to create new products.

7. Immigration

Immigrants start 55% of successful Silicon Valley firms. Some come for university, some to escape poverty and war, and some are recruited by Silicon Valley startups and stay to start their own.

It is difficult for immigrants to start a business in Japan because of language barriers, visa restrictions, and social isolation.

How Japan Can Promote Innovation

Patchwork solutions to deep-rooted cultural issues will not work. If customers don't buy things, immigration visas won't aid startups. Startups must have a chance of being acquired for a huge sum to attract investors. If risky startups fail, employees won't join.

Will Japan never have a startup culture?

Once a consensus is reached, Japan changes rapidly. A dwindling population and standard of living may lead to such consensus.

Toyota and Sony were firms with renowned founders who used technology to transform the world. Repeatable.

Silicon Valley is flawed too. Many people struggle due to wealth disparities, job churn and layoffs, and the tremendous ups and downs of the economy caused by stock market fluctuations.

The founders of the 10% successful startups are heroes. The 90% that fail and return to good-paying jobs with benefits are never mentioned.

Silicon Valley startup culture and Japanese corporate culture are opposites. Each has pros and cons. Big Japanese corporations make the most reliable, dependable, high-quality products, yet they move too slowly. That's good for building cars, not social networking apps.

Can innovation and success be encouraged without eroding social cohesion? Can software firms be motivated to move fast and break things while still recognizing the beauty and precision of expert craftsmen? That would be a hybrid culture in which Japan makes the world's best and most original products. Hopefully.