How Payment for Order Flow (PFOF) Works

What is PFOF?

PFOF is a brokerage firm's compensation for directing orders to different parties for trade execution. The brokerage firm receives fractions of a penny per share for directing the order to a market maker.

Because each optionable stock can have thousands of contracts, market makers dominate options trading. Order flow payments average less than $0.50 per option contract.

Order Flow Payments (PFOF) Explained

The proliferation of exchanges and electronic communication networks (ECNs) has complicated equity and options trading. Ironically, Bernard Madoff, the Ponzi schemer, pioneered payment for order flow.

In a December 2000 study on PFOF, the SEC said, "Payment for order flow is a method of transferring trading profits from market making to brokers who route customer orders to specialists for execution."

Given the complexity of trading thousands of stocks on multiple exchanges, market making has grown. Market makers are large firms that specialize in a set of stocks and options, maintaining an inventory of shares and contracts for buyers and sellers. Market makers profit from the bid-ask spread. Spreads have narrowed since 2001, when exchanges switched to decimal pricing. A market maker's ability to play both sides of trades is key to its profitability.
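As a rough illustration of why decimalization squeezed market makers, the sketch below assumes a market maker buys shares at the bid and later sells the same shares at the ask, ignoring inventory risk, fees, and any order flow payments. The quotes and the 1,000-share size are hypothetical; $0.125 (one-eighth of a dollar) is the fractional-era spread this article cites, and $0.01 is a one-cent decimal spread.

```python
from decimal import Decimal


def spread_capture(bid: Decimal, ask: Decimal, shares: int) -> Decimal:
    """Gross profit from buying `shares` at the bid and selling them at the ask.

    Simplified model: ignores inventory risk, exchange fees, and order flow payments.
    """
    if ask < bid:
        raise ValueError("ask must not be below bid")
    return (ask - bid) * shares


# Hypothetical quotes for the same stock before and after decimalization
fractional_era = spread_capture(Decimal("20.000"), Decimal("20.125"), 1_000)
decimal_era = spread_capture(Decimal("20.00"), Decimal("20.01"), 1_000)
print(f"1/8 spread: ${fractional_era}, one-cent spread: ${decimal_era}")
```

Under these assumptions, the same round trip earns $125 with a one-eighth spread but only $10 with a one-cent spread.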

Benefits and Requirements

A broker receives fees from a third party for order flow, sometimes without a client's knowledge. This invites conflicts of interest and criticism. Regulation NMS from 2005 requires brokers to disclose their policies and financial relationships with market makers.

Your broker must tell you if it's paid to send your orders to specific parties. This must be done at account opening and annually. The firm must disclose whether it participates in payment-for-order-flow and, upon request, every paid order. Brokerage clients can request payment data on specific transactions, but the response takes weeks.

Order flow payments can lower costs. Smaller brokerage firms can benefit from routing orders through market makers and getting paid for it. Routing lets a brokerage firm send its orders to another firm to be executed alongside other orders, reducing costs. The market maker or exchange benefits from the additional share volume, so it pays brokerage firms to direct traffic.

Retail investors, who lack bargaining power, may benefit from order-filling competition. Arrangements to steer the business in one direction invite wrongdoing, which can erode investor confidence in financial markets and their players.

Criticism of Payment for Order Flow

PFOF has always been controversial. In the late 1990s, several firms offering zero-commission trades routed orders to untrustworthy market makers. Near the end of fractional pricing, the smallest stock spread was $0.125, and options spreads were wider still. Traders found that some of their "free" trades cost them a lot because they weren't getting the best price.

The SEC then studied the issue, focusing on options trades, and nearly decided to ban PFOF. The proliferation of options exchanges narrowed spreads because there was more competition for executing orders. Options market makers said their services provided liquidity. In its conclusion, the report said, "While increased multiple-listing produced immediate economic benefits to investors in the form of narrower quotes and effective spreads, these improvements have been muted with the spread of payment for order flow and internalization."

The SEC allowed payment for order flow to continue to prevent exchanges from gaining monopoly power. It was also unclear what would happen to trades if the practice were outlawed. The SEC requires brokers to disclose their financial arrangements with market makers, and it has watched the practice closely since then.

Payment for Order Flow in 2020

Rule 605 and Rule 606 reports, posted on a broker's website, show execution quality and order flow payment statistics. Although the SEC requires them, these reports can be hard to find. The SEC mandated these reports in 2005, but the format and reporting requirements have changed over the years, most recently in 2018.

Brokers and market makers formed a working group with the Financial Information Forum (FIF) to standardize order execution quality reporting. Only one retail brokerage (Fidelity) and one market maker (Two Sigma Securities) remain in the group. FIF notes that the 605/606 reports "do not provide the level of information that allows a retail investor to gauge how well a broker-dealer fills a retail order compared to the NBBO (national best bid or offer) at the time the order was received by the executing broker-dealer."
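To make the FIF's point concrete, one simple way to gauge a fill against the NBBO at order receipt is price improvement: for a buy, how far below the best offer the order filled; for a sell, how far above the best bid. This is a simplified sketch, not the methodology of Rule 605 or any broker's report, and the quotes and sizes are hypothetical.

```python
from decimal import Decimal


def price_improvement(side: str, nbbo_bid: Decimal, nbbo_ask: Decimal,
                      fill_price: Decimal, shares: int) -> Decimal:
    """Dollar price improvement versus the NBBO captured when the order arrived.

    Positive values mean the customer beat the quote; negative values mean the
    fill was worse than the quote.
    """
    if side == "buy":
        per_share = nbbo_ask - fill_price   # buyers gain by paying less than the best offer
    elif side == "sell":
        per_share = fill_price - nbbo_bid   # sellers gain by receiving more than the best bid
    else:
        raise ValueError("side must be 'buy' or 'sell'")
    return per_share * shares


# Hypothetical: buy 500 shares with the NBBO at $50.00 x $50.02, filled at $50.015
print(price_improvement("buy", Decimal("50.00"), Decimal("50.02"), Decimal("50.015"), 500))
```

Here the buyer saves half a cent per share, or $2.50 on 500 shares.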

In the first quarter of 2020, Rule 606 reporting changed to require brokers to report net payments from market makers for S&P 500 and non-S&P 500 equity trades and options trades. Brokers must disclose payment rates per 100 shares by order type (market orders, marketable limit orders, non-marketable limit orders, and other orders).
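A minimal sketch of the per-100-shares convention, assuming you have a broker's net payment and routed share volume for each order type over a quarter. The figures in the dictionary are hypothetical, not taken from any broker's report.

```python
from decimal import Decimal, ROUND_HALF_UP


def rate_per_100_shares(net_payment: Decimal, shares: int) -> Decimal:
    """Net order flow payment expressed in dollars per 100 shares."""
    if shares <= 0:
        raise ValueError("shares must be positive")
    rate = net_payment / shares * 100
    return rate.quantize(Decimal("0.0001"), rounding=ROUND_HALF_UP)  # nearest hundredth of a cent


# Hypothetical quarterly totals for one broker (net payment in dollars, shares routed); not real data
by_order_type = {
    "market": (Decimal("120000"), 80_000_000),
    "marketable limit": (Decimal("36000"), 30_000_000),
    "non-marketable limit": (Decimal("5000"), 25_000_000),
    "other": (Decimal("1000"), 2_000_000),
}

for order_type, (paid, shares) in by_order_type.items():
    print(f"{order_type}: ${rate_per_100_shares(paid, shares)} per 100 shares")
```

A rate of $0.15 per 100 shares works out to $0.0015, or 0.15 cents, per share: the "fractions of a penny per share" this article describes.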

Richard Repetto, Managing Director of New York-based Piper Sandler & Co., publishes a report on Rule 606 broker reports. Repetto focused on Charles Schwab, TD Ameritrade, E-TRADE, and Robinhood in Q2 2020. Repetto reported that payment for order flow was higher in the second quarter than the first due to increased trading activity, and that options paid more than equities.

Repetto says PFOF contributions rose overall. Schwab had the lowest options rates, while TD Ameritrade and Robinhood had the highest. Robinhood had the highest equity rate. Repetto assumes Robinhood's ability to charge higher PFOF reflects the profitability of its order flow, and that it receives a fixed rate per spread (vs. a fixed rate per share at the other brokers).
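The per-share versus per-spread distinction can be sketched in a few lines. This assumes "a fixed rate per spread" means the market maker pays a fixed fraction of the quoted spread on each share, which is one reading of Repetto's description rather than a documented contract term; the rates and spreads are hypothetical.

```python
from decimal import Decimal


def pfof_fixed_per_share(shares: int, rate_per_share: Decimal) -> Decimal:
    """Payment under a flat per-share rate: independent of the quoted spread."""
    return shares * rate_per_share


def pfof_fraction_of_spread(shares: int, quoted_spread: Decimal, fraction: Decimal) -> Decimal:
    """Payment when the broker receives a fixed fraction of the spread on each share."""
    return shares * quoted_spread * fraction


# Hypothetical: 100 shares, $0.002/share flat vs 20% of the spread
for spread in (Decimal("0.01"), Decimal("0.05")):
    flat = pfof_fixed_per_share(100, Decimal("0.002"))
    share_of_spread = pfof_fraction_of_spread(100, spread, Decimal("0.20"))
    print(f"spread ${spread}: flat ${flat}, spread-based ${share_of_spread}")
```

With a one-cent spread both models pay $0.20 on 100 shares, but at a five-cent spread the spread-based model pays $1.00 while the flat rate stays at $0.20. Under this reading, order flow that trades at wider spreads earns the broker more.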

Robinhood's PFOF in equities and options grew the most quarter-over-quarter of the four brokers Piper Sandler analyzed, as did their implied volumes. All four brokers saw higher PFOF rates.

TD Ameritrade took the biggest income hit when cutting trading commissions in fall 2019, and this report shows they're trying to make up the shortfall by routing orders for additional PFOF. Robinhood refuses to disclose trading statistics using the same metrics as the rest of the industry, offering only a vague explanation on their website.

Summary

Payment for order flow has become a major source of revenue as brokers offer no-commission equity (stock and ETF) orders. For retail investors, payment for order flow poses a problem because the brokerage may route orders to a market maker for its own benefit, not the investor's.

Infrequent or small-volume traders may not notice their broker's PFOF practices. Frequent traders and those who trade larger quantities should learn about their broker's order routing system to ensure they're not losing out on price improvement due to a broker prioritizing payment for order flow.

This post is a summary.


Cody Collins

3 years ago

Where the Economy Is Headed

What quarterly bank earnings reveal

Big banks know the economy best. Unless we’re talking about a housing crisis in 2007…

Banks are crucial to the U.S. economy. Money flows between them and the Fed, communities, and investments.

An economy depends on money flow. Banks' views on the economy can affect their decision-making.

Most large banks released quarterly earnings and forward guidance last week. Some were optimistic; others were pessimistic about the future.

What Makes Banks Confident

Bank of America's profit fell 30% year over year, but the bank is optimistic about the economy. Compared with other banks, it's bullish.

What banks see depends on whom they serve. Bank of America primarily serves consumers.

It believes the consumer's future is bright, for several reasons.

The average customer has decent credit, unless the system is flawed. Bank of America's new credit card and mortgage borrowers had an average credit score of 771, and new-car loan and home equity borrowers averaged 791 and 797.

2008's housing crisis affected people with scores below 620.

Bank of America and the economy benefit from a robust consumer. Major problems can be avoided if individuals maintain spending.

Reasons Other Banks Are Less Confident

Spending requires income. Many companies, mostly in the computer industry, have announced they will slow or freeze hiring. Layoffs are frequently an indication of poor times ahead.

BOA is positive, but investment banks are bearish.

Jamie Dimon, CEO of JPMorgan, outlined various difficulties our economy could confront.

But geopolitical tension, high inflation, waning consumer confidence, the uncertainty about how high rates have to go and the never-before-seen quantitative tightening and their effects on global liquidity, combined with the war in Ukraine and its harmful effect on global energy and food prices are very likely to have negative consequences on the global economy sometime down the road.

That's more headwinds than tailwinds.

JPMorgan, which helps with mergers and IPOs, is less enthusiastic due to these concerns. Incoming headwinds signal drying liquidity, they say. Less business will be done.

Final Reflections

I don't think we're done. Yes, stocks are up 10% from a month ago, but they're still a long way from their old highs.

I don't think the stock market is a strong economic indicator.

Many executives foresee a 2023 recession. According to the traditional definition, we may be in a recession when Q2 GDP statistics are released next week.
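The "traditional definition" here is the common rule of thumb of two consecutive quarters of negative real GDP growth (the NBER, which officially dates U.S. recessions, weighs a broader set of indicators). A minimal sketch of that rule of thumb, using hypothetical growth figures:

```python
def is_technical_recession(quarterly_growth: list[float]) -> bool:
    """True if any two consecutive quarters show negative real GDP growth."""
    return any(
        earlier < 0 and later < 0
        for earlier, later in zip(quarterly_growth, quarterly_growth[1:])
    )


# Hypothetical quarterly real GDP growth rates (percent), oldest first
print(is_technical_recession([2.1, 0.5, -1.2, -0.4]))  # two negatives in a row
print(is_technical_recession([-1.0, 1.5, -0.3, 0.8]))  # negatives are not consecutive
```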

Regardless of criteria, I predict the economy will have a terrible year.

Layoffs are announced weekly. Inflation persists. Will prices return to 2020 levels if inflation cools? Perhaps. Energy is still expensive, and the war in Ukraine has global repercussions.

I predict BOA's next quarter earnings won't be as bullish about the consumer's strength.

Sam Hickmann

3 years ago

Donor-Advised Fund (DAF) Tax Benefits

Giving through a donor-advised fund can be tax-efficient. Using a donor-advised fund can reduce your tax liability while increasing your charitable impact.

Grow Your Donations Tax-Free.

Your DAF's charitable dollars can be invested before being distributed. Your DAF balance can grow with the market. This increases grantmaking funds. The assets of the DAF belong to the charitable sponsor, so you will not be taxed on any growth.

Avoid a Windfall Tax Year.

DAFs can help reduce tax burdens after a windfall like an inheritance, business sale, or strong market returns. Contributions to your DAF are immediately tax deductible, lowering your taxable income. With DAFs, you can effectively pre-fund years of giving with assets from a single high-income event.

Make a contribution to reduce or eliminate capital gains tax.

One of the most common ways to fund a DAF is by gifting publicly traded securities. Securities held for more than a year can be donated at fair market value and are not subject to capital gains tax. If a donor liquidates assets and then donates the proceeds to their DAF, capital gains tax reduces the amount available for philanthropy. Gifts of appreciated securities, mutual funds, real estate, and other assets are immediately tax deductible up to 30% of adjusted gross income (AGI), with a five-year carry-forward for any amount that exceeds the AGI limit.
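The 30%-of-AGI cap and five-year carry-forward can be sketched as a simple schedule. This assumes a single gift of appreciated assets, no other charitable deductions competing for the same limit, and whole-dollar amounts; the actual rules cover more cases, and the AGI figures are hypothetical.

```python
def deduction_schedule(gift: int, agi_by_year: list[int],
                       limit_pct: int = 30, carry_years: int = 5) -> tuple[list[int], int]:
    """Spread one gift's deduction over the gift year plus up to `carry_years` later years.

    Each year's deduction is capped at `limit_pct` percent of that year's AGI.
    Returns the yearly deductions and any amount still undeducted at the end.
    """
    remaining = gift
    deductions = []
    for agi in agi_by_year[: carry_years + 1]:   # gift year + carry-forward years
        allowed = min(remaining, agi * limit_pct // 100)
        deductions.append(allowed)
        remaining -= allowed
    return deductions, remaining


# Hypothetical: $100,000 of appreciated stock donated by someone with $200,000 AGI each year
print(deduction_schedule(100_000, [200_000] * 6))
```

With $200,000 of AGI, $60,000 is deductible in the gift year and the remaining $40,000 carries forward to the next year.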

Using Appreciated Stock as a Gift

Donating appreciated stock directly to a DAF, rather than liquidating it and donating the proceeds, reduces a philanthropist's tax liability by eliminating capital gains tax and increasing the income tax deduction.

Consider a donor who has $100,000 in long-term appreciated stock with a cost basis of $10,000.

Using a DAF would allow this donor to give more to charity while paying less in taxes. This strategy often lets donors give over 20% more to their favorite causes.

For illustration purposes, this hypothetical example assumes a 35% income tax rate. All realized gains are subject to the federal long-term capital gains tax of 20% and the 3.8% Medicare surtax. State taxes are not considered.
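The arithmetic behind this comparison can be sketched under the assumptions above: a 35% income tax rate, 20% long-term capital gains tax plus the 3.8% surtax on the gain, and no state taxes. The sketch adds one simplification of its own: the donor's AGI is high enough that the full deduction is usable in the year of the gift.

```python
from decimal import Decimal


def compare_gift_methods(fair_value: Decimal, cost_basis: Decimal, income_rate: Decimal,
                         ltcg_rate: Decimal, surtax_rate: Decimal) -> dict[str, dict[str, Decimal]]:
    """Compare selling stock and donating the proceeds with donating the stock directly."""
    gain = fair_value - cost_basis
    gains_tax = gain * (ltcg_rate + surtax_rate)     # owed only if the stock is sold first
    proceeds = fair_value - gains_tax                # what is left to donate after selling
    return {
        "sell, then donate": {
            "capital gains tax": gains_tax,
            "donation": proceeds,
            "income tax savings": proceeds * income_rate,
        },
        "donate stock directly": {
            "capital gains tax": Decimal("0"),
            "donation": fair_value,                  # deductible at fair market value
            "income tax savings": fair_value * income_rate,
        },
    }


results = compare_gift_methods(Decimal("100000"), Decimal("10000"),
                               Decimal("0.35"), Decimal("0.20"), Decimal("0.038"))
for method, figures in results.items():
    print(method, {name: f"${value:,.0f}" for name, value in figures.items()})
```

Under these assumptions, selling first costs $21,420 in capital gains tax and leaves $78,580 to donate, worth $27,503 in income tax savings. Donating the stock directly sends the full $100,000 to the DAF, about 27% more, and saves $35,000 in income tax.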

The information provided here is general and educational in nature. It is not intended to be, nor should it be construed as, legal or tax advice. NPT does not provide legal or tax advice. Furthermore, the content provided here is related to taxation at the federal level only. NPT strongly encourages you to consult with your tax advisor or attorney before making charitable contributions.

Tanya Aggarwal

3 years ago

What I learned from my experience as a recent graduate working in venture capital

Every week I meet many people interested in VC. Many of them ask me what it's like to be a junior analyst in VC or what I've learned so far.

Looking back, I've learned many things as a junior VC, having gone through an almost-euphoric peak bull market, the failed tech IPOs of 2019, including WeWork's catastrophic fall, and the beginnings of a bear market.

1. Network, network, network!

VCs spend 80% of their time networking. Junior VCs source deals or manage portfolios. You spend your time bringing startups to your fund or helping existing portfolio companies grow. Knowing stakeholders (corporations, star talent, investors) in your particular areas of investment helps you develop your portfolio.

Networking was one of my strengths. When I first started in the industry, I'd go to startup events and meet 50 people a month. Over time, I realized these relationships were shallow and I was only getting business cards. So I stopped seeing networking as a transaction. VC is a long-term game, so you should work with people you like. Now I know who I click with and can build deeper relationships with them. My network is smaller but more valuable than before.

2. The Most Important Metric Is the Founder

People often ask how we pick investments. Why some companies can raise money and others can't is a mystery. The founder is the most important metric for VCs. When a company is young, the product, environment, and team all change, but the founder remains constant. VCs bet on the founder, not the company.

How do we decide which founders are best after 2-3 calls? When looking at a founder's profile, ask why this person can solve this problem. The founders' track record will tell. If the founder is a serial entrepreneur, you know he/she possesses the entrepreneur DNA and will likely succeed again. If it's his/her first startup, focus on industry knowledge to deliver the best solution.

3. A company's fate can be determined by macrotrends.

Macro trends are crucial. A company can have the perfect product, founder, and team, but if it's solving the wrong problem, it won't succeed. I've also seen average companies ride the wave to success. When you're on the right side of a trend, there's so much demand that more companies can get a piece of the pie.

During COVID-19, macro trends made or broke companies. Ed-tech and health-tech companies gained unicorn status and raised funding at inflated valuations due to sudden demand. With the easing of pandemic restrictions and the start of a bear market, many of these companies' valuations are in question.

4. Look for methods to ACTUALLY add value.

You only need to go on VC twitter (read: @vcstartterkit and @vcbrags) for 5 minutes or look at fin-meme accounts on Instagram to see how much VCs claim to add value but how little they actually do. VC is a long-term game, though. Long-term, founders won't work with you if you don't add value.

How can we add value when we're young and have no network? Leaning on my strengths helped me. Instead of viewing my age and limited experience as a disadvantage, I realized that I brought a unique perspective to the table.

As a VC, you invest in companies that will be big in 5-7 years, when millennials and Gen Z will have the most purchasing power. Because you can relate to that market, you can offer insights that most 40-year-old partners can't. I added value by helping with hiring, because I had direct access to university talent pools, and by finding university students for product beta testing.

5. Develop your personal brand.

Most funds are either generalists or specialists: they either invest across industries or have a specific mandate. Most funds are becoming specialists, I've noticed. Top-tier founders don't lack capital, so funds must find other ways to attract them. Why would a founder work with a generalist fund when a specialist can offer better industry connections and partnership opportunities?

The same goes for fund members. Founders want quality investors. Become a thought leader in your industry to meet founders. Create content and share your thoughts on industry-related social media. When I first started building my brand, I found it helpful to interview industry veterans to create better content than I could on my own. Over time, my content attracted quality founders, so I didn't have to look for them.

These are my biggest VC lessons. This list isn't exhaustive, but it's my industry survival guide.


Andy Raskin

3 years ago

I've Never Seen a Sales Deck This Good

It’s Zuora’s, and it’s brilliant. Here’s why.

My friend Tim got a sales position at a Series-C software company that garnered $60 million from A-list investors. He's one of the best salespeople I know, yet he emailed me after starting to struggle.

Tim has a few modest clients. “Big companies ignore my pitch,” Tim said.

I love helping teams write the strategic story that drives sales, marketing, and fundraising. Tim and I had lunch at Amber India on Market Street to evaluate his deck.

After a feast, I asked Tim when prospects tune out.

He said, “several slides in”.

Intent on maximizing dining ROI, Tim went back to the buffet for seconds. When he returned, I pulled out my laptop and launched into a Powerpoint presentation.

“What’s this?” Tim asked.

“This,” I said, “is the greatest sales deck I have ever seen.”

Five Essentials of a Great Sales Narrative

I showed Tim a sales slide from IPO-bound Zuora, which sells a SaaS platform for subscription billing. Zuora supports recurring payments (e.g. enterprise software).

An ex-Zuora salesperson gave me the deck, saying it helped him close his largest deal. (I don't know anyone who works at Zuora, though after this post was published, a few Zuora employees contacted me.)

Tim abandoned his naan in a pool of goat curry and took notes while we discussed the Zuora deck.

We remarked how well the deck led prospects through five elements:

(The ex-Zuora salesperson begged me not to release the Zuora deck publicly. All of the images below come from Zuora's website and SlideShare channel.)

#1. Name a Significant Change in the World

Don't start a sales presentation by mentioning your product, headquarters, investors, clients, or yourself.

Name the world shift that raises enormous stakes and urgency for your prospect.

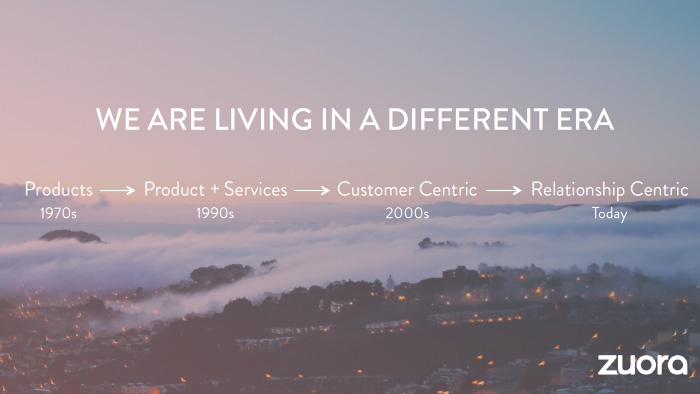

Every Zuora sales deck begins with this slide:

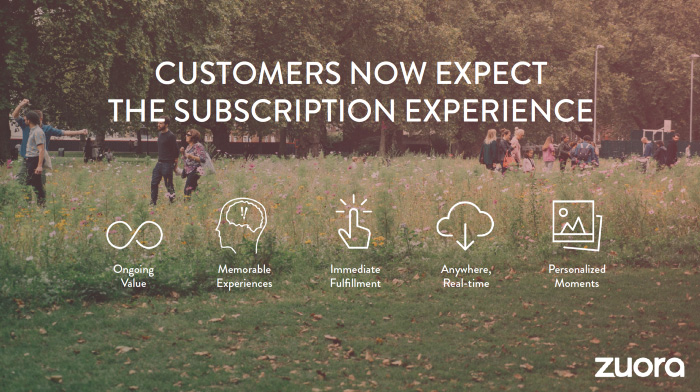

Zuora coined the term subscription economy to describe a new market where purchasers prefer regular service payments over outright purchases. Zuora then shows a slide with the change's history.

Most pitch advice recommends starting with the problem. But when you claim a problem, you put prospects on the defensive. They may be unaware of the situation or uncomfortable admitting it.

When you highlight a global trend, prospects open up about how it affects them, worries them, and where they see opportunity. You capture their interest. Robert McKee says:

…what attracts human attention is change. …if the temperature around you changes, if the phone rings — that gets your attention. The way in which a story begins is a starting event that creates a moment of change.

#2. Show There’ll Be Winners and Losers

Loss aversion affects all prospects. They avoid a loss by sticking with the status quo rather than risking a gain by changing.

To fight loss aversion, show how the change will create winners and losers. You must show both:

that if the prospect adapts to the change you named, the outcome will probably be very favorable; and

that failing to do so is likely to have an unacceptable negative impact on the prospect's future.

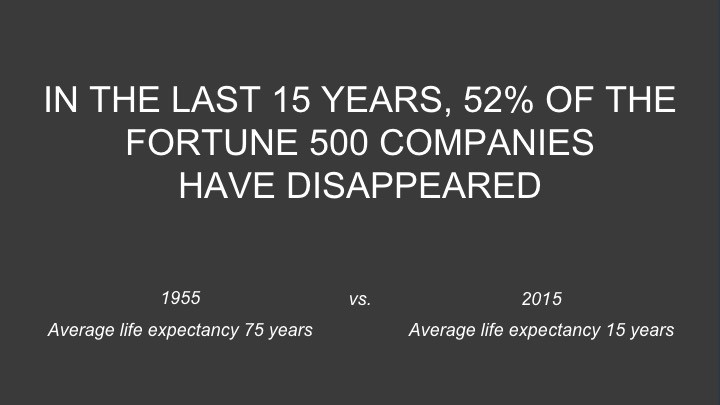

Zuora shows a mass extinction among Fortune 500 firms…

…and then shows how the “winners” have shifted from product ownership to subscription services. Those include upstarts…

…as well as rejuvenated incumbents:

To illustrate, Zuora asks:

Winners utilize Zuora's subscription service models.

#3. Tease the Promised Land

It's tempting to get into product or service details now. Resist that urge.

Prospects won't understand why product/service details matter if you introduce them too soon, so they'll tune out.

Instead, provide a teaser image of the happily-ever-after your product/service will help the prospect reach.

Your Promised Land should be appealing and hard to achieve without support. Otherwise, why does your company exist?

Zuora shows this Promised Land slide after explaining that the subscription economy will have winners and losers.

Not your product or service, but a new future state.

(I asked my friend Tim to describe his Promised Land, and he answered, "You’ll have the most innovative platform for ____." Nope: the Promised Land isn't possessing your technology, but living with it.)

Your Promised Land helps prospects sell your solution to their coworkers after your sales meeting. Those coworkers will ask what you do when you're not there to answer. With a captivating Promised Land, your prospects are more likely to give a persuasive answer.

#4. Present Features as “Mystic Gifts” for Overcoming Difficulties on the Road to the Promised Land

Successful sales decks follow the same format as epic films and fairy tales. Obi Wan gives Luke a lightsaber to help him destroy the Empire. You're Gandalf, helping Frodo destroy the ring. Your prospect is Cinderella, and you're her fairy godmother.

Position your product or service's capabilities as mystic gifts that help your main character (the prospect) reach the Promised Land.

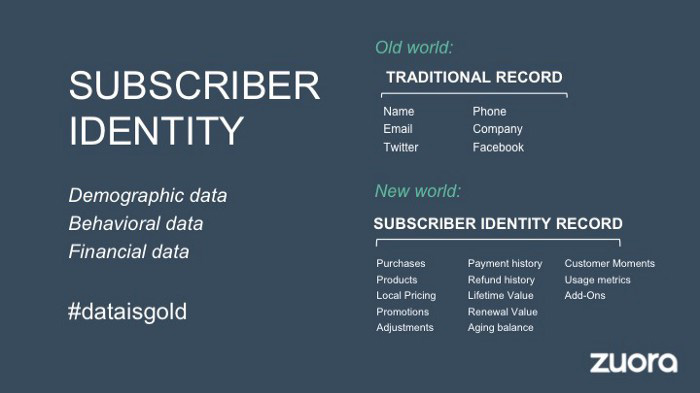

Zuora's client record slide is shown above. Without context, even the most technical prospect would be bored.

Positioned in the context of shifting from an “old” to a “new world”, it's the foundation for a compelling conversation with prospects—technical and otherwise—about why traditional solutions can't reach the Promised Land.

#5. Show Proof That You Can Make the Story True.

At this point, you've made a promise: if prospects follow you, they'll reach the Promised Land.

The journey to the Promised Land is by definition rocky, so prospects are right to be cautious. The final part of the pitch is proof that you can make the story come true.

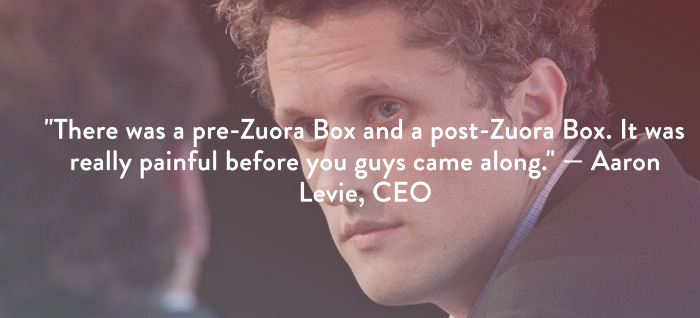

The most convincing proof is a success story about how you assisted someone comparable to the prospect. Zuora's sales people use a deck of customer success stories, but this one gets the essence.

I particularly appreciate this one from an NCR exec (a Zuora customer), which relates more strongly to Zuora's Promised Land:

Not enough successful customers? Product demos are the next best evidence, but features should always be presented in the context of helping a prospect achieve the Promised Land.

The best sales narrative is one that is told by everyone.

Success rarely comes from a fantastic deck alone. To be effective, salespeople need an organization-wide story about change, Promised Land, and Magic Gifts.

Zuora exemplifies this. If you hear a Zuora executive, including CEO Tien Tzuo, talk, you'll likely hear about the subscription economy and its winners and losers. This is the theme of the company's marketing communications, campaigns, and vision statement.

According to the ex-Zuora salesperson, company-wide story alignment made him successful.

The Zuora marketing folks ran campaigns and branding around this shift to the subscription economy, and [CEO] Tien [Tzuo] talked it up all the time. All of that was like air cover for my in-person sales ground attack. By the time I arrived, prospects were already convinced they had to act. It was the closest thing I’ve ever experienced to sales nirvana.

The largest deal ever

Tim contacted me three weeks after our lunch to tell me that prospects at large organizations were responding well to his new deck, which we modeled on Zuora's framework. First, prospects revealed their obstacles more quickly. The new pitch engages CFOs and other top gatekeepers better, he said.

A week later, Tim emailed that he'd signed his company's biggest agreement.

Next week, we’re headed back to Amber India to celebrate.

Jano le Roux

3 years ago

Quit worrying about Twitter: Elon moves quickly before refining

Elon's rides start rough, but then...

Elon Musk has never been so hated.

They don’t get Elon.

He started PayPal this way.

He started SpaceX the same way.

He started Tesla this way.

Disruptive.

Elon had rocky starts. His creativity requires it. Just like writing a first draft.

His fastest way to find the right path is to go the wrong way first.

PayPal's pricey launch

PayPal was a 1999 business flop.

They were considered insane.

Elon and his co-founders had big plans for PayPal. They adopted the popular philosophy of the time, exchanging short-term profit for growth, and pulled off a miracle just before the bubble burst.

PayPal was created as a dollar alternative. Original PayPal software allowed PalmPilot money transfers. Unfortunately, there weren't enough PalmPilot users.

Since everyone had email, the company switched to emailing payments. Costs rose faster than revenue.

The startup wanted to reach a million users by paying $10 for each sign-up and $10 for each referral. Elon thought the price was fair because PayPal made money by charging transaction fees. They needed to make money quickly.

A Wall Street Journal article valuing PayPal at $500 million attracted investors. The dot-com bubble burst soon after they rushed to get financing.

Musk and his partners sold PayPal to eBay for $1.5 billion in 2002. Musk's most successful company was PayPal.

SpaceX's start-up error

Elon and his friends tried to buy a reconditioned ICBM in Russia in 2002.

He planned to invest much of his wealth in a stunt to promote NASA and space travel.

Many called Elon crazy.

The goal was to buy a cheap Russian rocket to launch mice or plants to Mars and return them. He thought SpaceX would revive global space interest. After a bad meeting in Moscow, Elon decided to build his own rockets to undercut launch contracts.

Then SpaceX was founded.

Elon’s plan was harder than expected.

Explosions followed explosions.

Millions lost on cargo.

Millions lost on the rockets.

Investors thought Elon was crazy, but he wasn't.

NASA's biggest competitor became SpaceX. NASA hired SpaceX to handle many of its missions.

Tesla's shaky beginning

Tesla began shakily.

Clients detested their roadster.

They continued to miss deadlines.

The plan was for Lotus to handle the car while Tesla focused on the EV components, easing Tesla's entry. Instead, the company experienced feature creep: it kept modifying specific parts to keep the car from getting worse.

Cost overruns, delays, and other factors changed the Elise-like car's appearance. Only 7% of the Tesla Roadster's parts matched its Lotus twin.

Tesla was about to die.

Elon took over as CEO and cleaned up the mess.

He fired 25% of the workforce to reduce costs.

Elon Musk transformed Tesla into the world's most valuable automaker by running it like a startup.

Tesla hasn't spent a dime on advertising. They let the media do the talking by investing in innovation.

Elon sheds. Elon tries. Elon learns. Elon refines.

Twitter doesn't worry me.

The media is shocked. I’m not.

This is just Elon being Elon.

Elon makes things lean.

Elon tries new things.

Elon listens to feedback.

Elon refines.

Besides, Twitter will always be Twitter.

Mia Gradelski

3 years ago

Six Things People Who Are Best With Money Follow

I shouldn't generalize, yet this is true.

Spending is simpler than earning.

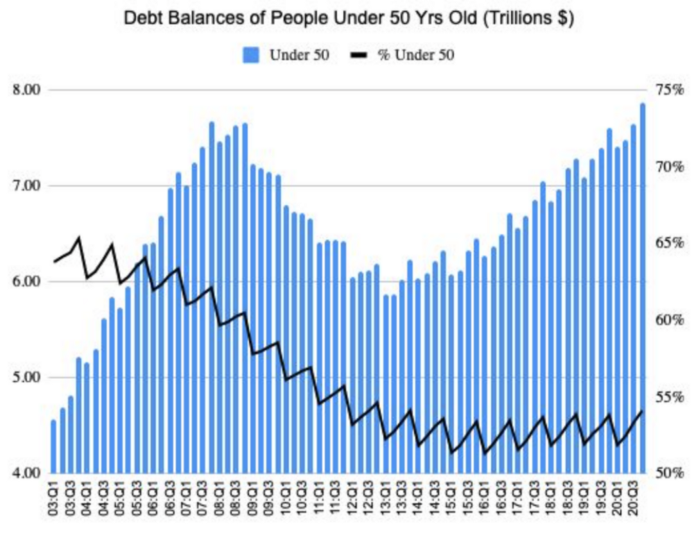

Prove me wrong, but with home debt at $145k in 2020 and individual debt at $67k, people don't have their priorities straight.

Where does this debt come from?

Americans under 50 owed $7.86 trillion in Q4 2020. That's more than the US's $3 trillion deficit.

Here’s a breakdown:

🏡 Mortgages/Home Equity Loans = $5.28 trillion (67%)

🎓 Student Loans = $1.20 trillion (15%)

🚗 Auto Loans = $0.80 trillion (10%)

💳 Credit Cards = $0.37 trillion (5%)

🏥 Other/Medical = $0.20 trillion (3%)
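As a quick arithmetic check on the breakdown, the five categories sum to $7.85 trillion (a rounding difference from the $7.86 trillion total), and each category's share of that sum matches the listed percentages. A minimal sketch using only the figures above:

```python
def shares_of_total(amounts: dict[str, float]) -> dict[str, int]:
    """Each category's share of the total, rounded to the nearest whole percent."""
    total = sum(amounts.values())
    return {name: round(100 * amount / total) for name, amount in amounts.items()}


debt_trillions = {
    "Mortgages/Home Equity Loans": 5.28,
    "Student Loans": 1.20,
    "Auto Loans": 0.80,
    "Credit Cards": 0.37,
    "Other/Medical": 0.20,
}

print(f"Sum of categories: ${sum(debt_trillions.values()):.2f} trillion")
for name, pct in shares_of_total(debt_trillions).items():
    print(f"{name}: {pct}%")
```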


At least the Fed and the government can justify their debt, which went toward:

-Providing two rounds of Covid relief stimulus

-Stabilizing the economy

-Reducing inflation and unemployment

-Providing for the military, education and farmers

No American should have this much debt.

Don’t get me wrong. Debt isn’t all the same. Yes, it’s a negative number but it carries different purposes which may not be all bad.

Good debt: funds used in the hope that they will appreciate as an investment in the future

-Student loans

-Business loan

-Mortgage, home equity loan

-Experiences

Paying cash for a home is wasteful. You should do so only if the home is exceptionally rare, one in a million on the market, and an incredible bargain with numerous bidders pushing the price higher.

Paying cash appeals to the seller because it lets them sell quickly. But most people can't afford most properties outright. Only 15% of U.S. homebuyers can afford their home outright, and Zillow reports that only 37% of homes are mortgage-free.

People have clearly overreached.

Ignore appearances.

5% down can buy a 10-bedroom mansion.

Not paying in cash isn't necessarily a bad thing, given that property prices have risen 30% since 2008. Throughout the pandemic, we've also seen remote workers relocate to the Midwest, avoiding pricey coastal cities like NYC and San Francisco.

By no means do I think NYC is dead; nothing will replace this beautiful city that never sleeps. For people who always wanted to come but never could, now is the perfect time to rent or buy, while everything is below its usual value. Once social distancing ends, cities will recover. 24/7 sardine-packed subways prove New York isn't designed for isolation.

When buying a home, pay 20% in cash and finance the balance with a mortgage. The mortgage must be budgeted alongside other costs such as maintenance, brokerage fees, and property taxes, and it must be paid until the end of its term. Whether it's a 10-year or 30-year fixed mortgage, it's better to refinance in a low-interest-rate environment and pay off your debt, especially now, as the 10-year yield inches toward 1.25%. The Fed will eventually raise interest rates, following the 10-year yield, to stabilize the economy, but I believe that won't happen until after Covid, when businesses like luxury, air travel, and tourism will take a beating.
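The 10-year versus 30-year trade-off can be sketched with the standard fixed-rate amortization formula, payment = P·r / (1 − (1 + r)^−n), where P is the loan amount, r the monthly rate, and n the number of monthly payments. The $500,000 price and 3% rate are hypothetical; the 20% down payment follows the paragraph above.

```python
def monthly_payment(principal: float, annual_rate: float, years: int) -> float:
    """Fixed monthly payment that fully amortizes `principal` over `years`."""
    n = years * 12                 # number of monthly payments
    r = annual_rate / 12           # monthly interest rate
    if r == 0:
        return principal / n       # no interest: split the principal evenly
    return principal * r / (1 - (1 + r) ** -n)


price = 500_000                    # hypothetical purchase price
loan = price - price * 20 // 100   # finance the 80% left after a 20% cash down payment
for years in (10, 30):
    payment = monthly_payment(loan, 0.03, years)   # 3% is an assumed rate, not a quote
    total_interest = payment * years * 12 - loan
    print(f"{years}-year: ${payment:,.2f}/month, ${total_interest:,.0f} total interest")
```

Under these assumptions, the shorter term more than doubles the monthly payment but cuts total interest to under a third of the 30-year figure.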

Bad debt: the opposite. There is no way to profit from the loan in the future, so it is just money down the drain.

-Luxury goods

-Credit card debt

-Fancy junk

-Vacations, weddings, parties, etc.

Credit cards and student loans are the two largest risks to the financial security of those under 50. Banks love to compound interest, which can hurt your credit score and make it tougher to take out more loans (not that you should with that much debt anyhow). With a low credit score and heavy debt, banks take advantage of you: because you need help, you pay more for their services. Paying back debt is the challenge for most.

Choose Not Chosen

As a financial literacy advocate and blogger, I prefer not to brag, but I will now. I know what to buy and what to avoid. My parents educated me to live a frugal, minimalist stealth wealth lifestyle by choice, not because we had to.

That's the lesson.

The poorest person who shows off with bling is trying to seem rich.

Rich people know garbage is a bad investment. Investing in education is one of the best long-term investments. With information, you can do anything.

People who are good with money shun some items out of respect and appreciation for what they have.

Less is more.

Instead of copying the Joneses, use what you have. They may look cheerful and stylish in their 20,000-square-foot home, yet they may be as broke as OJ Simpson in his 20-bedroom mansion.

Let's look at a few principles worth following to build and maintain your wealth.

#1: Quality comes before quantity

Being frugal doesn't mean being cheap and cruel. Rich individuals care about relationships and treating others well, not impressing them. You don't have to be rich to be good with money, although most people who are good with money are rich, since they don't live the fantasy lifestyle.

Underspending is appreciating what you have.

Many people believe washed conventional produce is as good as organic food. Hopefully they're right. Organic, vegan, fresh vegetables from upstate may be more expensive in the short term, but they will help you live longer and save you money in the long run.

Consider this: you'll save thousands a month eating McDonald's three times a day instead of fresh seafood, vegetables, and organic fruit, but your life will be shortened. If you want to save money and die early, go ahead, but I assume we all want to break the world record for longest life and would rather spend less over the long run. Plus, elderly people get tax breaks, Medicare, pensions, 401(k)s, etc. In retirement you're practically living for free, so eating fast food forever is a terrible decision.

With a few extra years, you may make hundreds of thousands or even millions more in the stock market, spend more time with family, and just live.

Folks, health is wealth.

Consider the future benefit, not simply the dollar sign. Cheapness is useless.

Same with stuff. Don't stock your closet with fast fashion that won't last for years. Buying inexpensive goods that will fail tomorrow is stupid.

Investing isn't only about stocks; you're also investing in how you live. Consume less.

#2: If you cannot afford it twice, you cannot afford it once

I learned this from my dad in 6th grade. I've been lucky to travel, experience things, go to a great university, and conduct many experiments that others without a stable, decent lifestyle can't afford.

I didn't live this way because of my parents' paycheck or financial knowledge.

Saving and making deliberate choices made it possible.

I always bring cash when I shop. I skip Apple Pay and credit cards because they let me keep spending even after my account runs dry.

Banks are nasty. When you lose money, they profit.

Cash hinders banks' profits. Carrying a big, hefty wallet full of cash is lame and annoying, but it's the best method to spend only what you need. I don't mean for vacations, but for small daily expenses.

Physical currency lets you know how much you have for lunch or a taxi.

Because it's physical, once it's gone, it's gone, and you can't slide into debt.

If you can't afford it, it will harm more than help.

#3: You really can purchase happiness with money.

If used correctly, yes.

Happiness and satisfaction differ.

Money won't bring you fulfillment, because that comes from working hard on your own to help others, but it lets you travel and meet people you wouldn't otherwise meet.

You can meet your future co-worker or strike a deal while waiting an hour in first class for takeoff, or you can meet renowned people at a networking brunch.

Seen a pattern here?

Your time and money are best spent on connections. Not automobiles or firearms. That’s just stuff. It doesn’t make you a better person.

If you've earned less, do things differently. Instead of trying to win the lotto or become an NFL star for your first big salary, network online for free.

Be resourceful. Sign up for LinkedIn, post regularly, and leave posts up even when they get little engagement, because that shows strength.

Consistency is beneficial.

I did that for a few months and met amazing people who helped me get jobs. Money doesn't create jobs; it creates opportunities.

Resist social media and scammers that peddle false hopes.

Choose wisely.

#4: Avoid gushing over titles and purchasing trash.

As Insider’s Hillary Hoffower reports, “Showing off wealth is no longer the way to signify having wealth. In the US particularly, the top 1% have been spending less on material goods since 2007.”

I checked my closet. No brand comes to mind. I've never worn a brand's logo, and I rotate through six white shirts. I have my priorities and don't waste money or effort on clothing that won't fit me in a year.

Unless fashion is your full-time work, clothing shouldn't be part of your mornings.

That's the stealth-wealth lifestyle. You're so fulfilled that looking homeless won't hurt your self-esteem.

That's self-assurance.

Extroverts aren't required.

That's irrelevant.

Showing off won't win you friends.

They'll like your personality.

#5: Time is the most valuable commodity.

Being rich doesn't mean working around the clock, Monday through Friday.

Rich people work when they are ready to work.

Waking up at 5 a.m. won't make you a millionaire, but it will inculcate diligence and tenacity in you.

You have a busy day yet want to exercise. You can skip the workout or wake up at 4am instead of 6am to do it.

Emotion-driven lazy bums stay in bed.

Those who are accountable keep their promises because they know breaking one will derail their week.

Since 7th grade, I've worked out at 5am for myself, not to impress others. It gives me greater energy to contribute to others, especially on weekends and holidays.

It's a habit that I have in my life.

Find something that you take seriously and makes you a better person.

As someone who is close to becoming a millionaire and has met many of them throughout my life, I can share a few habits that set them apart, based on how they spend their weekends:

-Read

-Sleep

-Best time to work with no distractions

-Eat together

-Take walks and be in nature

-Gratitude

-Major family time

-Plan out weeks

-Go grocery shopping because health = wealth

#6: Perspective is important

Trying to time the markets will slow down your career. Professors preach scarcity, not abundance. Why would school teach success? They give us bad advice.

If you trust in abundance and make your own luck by trying and experimenting, growth will come naturally. Passion doesn't just appear; mistakes and new people help you find it. You can make money, but if you don't believe it's worth it, you won't.

You don’t have to be wealthy to be good with money, but most wealthy people are, for these reasons. Rich is a mindset; wealth is power. Prioritize your resources. Invest in yourself, knowing the toughest part is starting.

Thanks for reading!