More on Marketing

Yucel F. Sahan

3 years ago

How I Created the Day's Top Product on Product Hunt

In this article, I'll describe a weekend project I started to make something. It was Product Hunt's #1 of the Day, #2 Weekly, and #4 Monthly product.

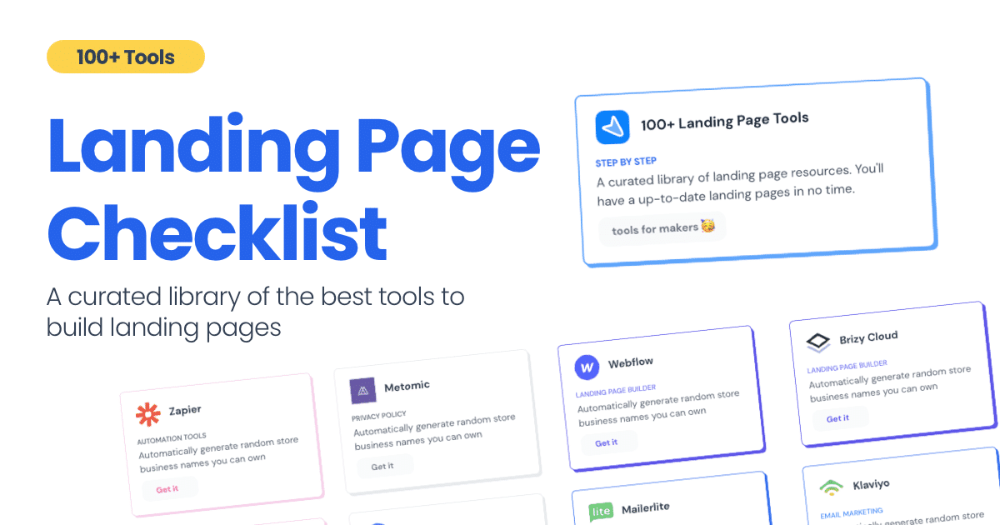

How did I make Landing Page Checklist so simple? Building and launching took 3 weeks. I worked 3 hours a day max. Weekends were busy.

It's sort of a long story, so scroll to the bottom of the page to see what tools I utilized to create Landing Page Checklist :x

As a matter of fact, it all started with the startups-investments blog; Startup Bulletin, that I started writing in 2018. No, don’t worry, I won’t be going that far behind. The twitter account where I shared the blog posts of this newsletter was inactive for a looong time. I was holding this Twitter account since 2009, I couldn’t bear to destroy it. At the same time, I was thinking how to evaluate this account.

So I looked for a weekend assignment.

Weekend undertaking: Generate business names

Barash and I established a weekend effort to stay current. Building things helped us learn faster.

Simple. Startup Name Generator The utility generated random startup names. After market research for SEO purposes, we dubbed it Business Name Generator.

Backend developer Barash dislikes frontend work. He told me to write frontend code. Chakra UI and Tailwind CSS were recommended.

It was the first time I have heard about Tailwind CSS.

Before this project, I made mobile-web app designs in Sketch and shared them via Zeplin. I can read HTML-CSS or React code, but not write it. I didn't believe myself but followed Barash's advice.

My home page wasn't responsive when I started. Here it was:)

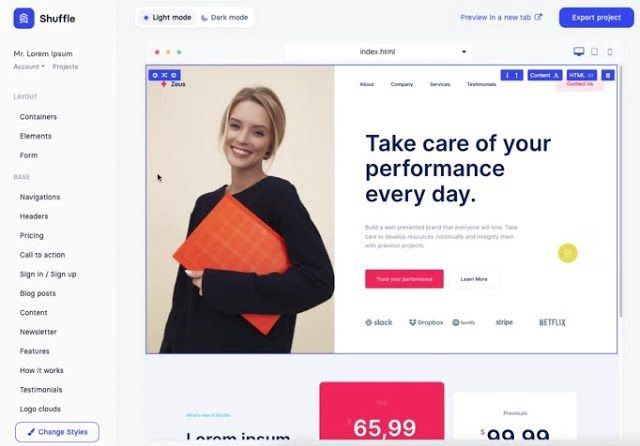

And then... Product Hunt had something I needed. Me-only! A website builder that gives you clean Tailwind CSS code and pre-made web components (like Elementor). Incredible.

I bought it right away because it was so easy to use. Best part: It's not just index.html. It includes all needed files. Like

postcss.config.js

README.md

package.json

among other things, tailwind.config.js

This is for non-techies.

Tailwind.build; which is Shuffle now, allows you to create and export projects for free (with limited features). You can try it by visiting their website.

After downloading the project, you can edit the text and graphics in Visual Studio (or another text editor). This HTML file can be hosted whenever.

Github is an easy way to host a landing page.

your project via Shuffle for export

your website's content, edit

Create a Gitlab, Github, or Bitbucket account.

to Github, upload your project folder.

Integrate Vercel with your Github account (or another platform below)

Allow them to guide you in steps.

Finally. If you push your code to Github using Github Desktop, you'll do it quickly and easily.

Speaking of; here are some hosting and serverless backend services for web applications and static websites for you host your landing pages for FREE!

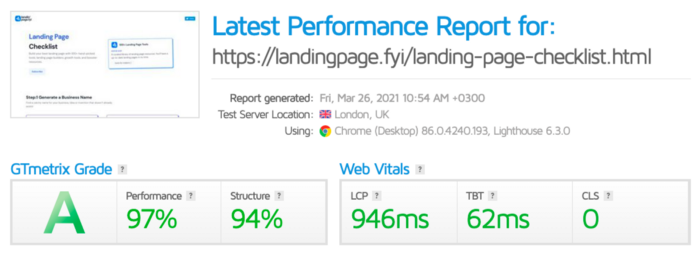

I host landingpage.fyi on Vercel but all is fine. You can choose any platform below with peace in mind.

Vercel

Render

Netlify

After connecting your project/repo to Vercel, you don’t have to do anything on Vercel. Vercel updates your live website when you update Github Desktop. Wow!

Tails came out while I was using tailwind.build. Although it's prettier, tailwind.build is more mobile-friendly. I couldn't resist their lovely parts. Tails :)

Tails have several well-designed parts. Some components looked awful on mobile, but this bug helped me understand Tailwind CSS.

Unlike Shuffle, Tails does not include files when you export such as config.js, main.js, README.md. It just gives you the HTML code. Suffle.dev is a bit ahead in this regard and with mobile-friendly blocks if you ask me. Of course, I took advantage of both.

creativebusinessnames.co is inactive, but I'll leave a deployment link :)

Adam Wathan's YouTube videos and Tailwind's official literature helped me, but I couldn't have done it without Tails and Shuffle. These tools helped me make landing pages. I shouldn't have started over.

So began my Tailwind CSS adventure. I didn't build landingpage. I didn't plan it to be this long; sorry.

I learnt a lot while I was playing around with Shuffle and Tails Builders.

Long story short I built landingpage.fyi with the help of these tools;

Learning, building, and distribution

Shuffle (Started with a Shuffle Template)

Tails (Used components from here)

Sketch (to handle icons, logos, and .svg’s)

metatags.io (Auto Generator Meta Tags)

Vercel (Hosting)

Github Desktop (Pushing code to Github -super easy-)

Visual Studio Code (Edit my code)

Mailerlite (Capture Emails)

Jarvis / Conversion.ai (%90 of the text on website written by AI 😇 )

CookieHub (Consent Management)

That's all. A few things:

The Outcome

.fyi Domain: Why?

I'm often asked this.

I don't know, but I wanted to include the landing page term. Popular TLDs are gone. I saw my alternatives. brief and catchy.

CSS Tailwind Resources

I'll share project resources like Tails and Shuffle.

Beginner Tailwind (I lately enrolled in this course but haven’t completed it yet.)

Thanks for reading my blog's first post. Please share if you like it.

Victoria Kurichenko

3 years ago

My Blog Is in Google's Top 10—Here's How to Compete

"Competition" is beautiful and hateful.

Some people bury their dreams because they are afraid of competition. Others challenge themselves, shaping our world.

Competition is normal.

It spurs innovation and progress.

I wish more people agreed.

As a marketer, content writer, and solopreneur, my readers often ask:

"I want to create a niche website, but I have no ideas. Everything's done"

"Is a website worthwhile?"

I can't count how many times I said, "Yes, it makes sense, and you can succeed in a competitive market."

I encourage and share examples, but it's not enough to overcome competition anxiety.

I launched an SEO writing website for content creators a year ago, knowing it wouldn't beat Ahrefs, Semrush, Backlinko, etc.

Not needed.

Many of my website's pages rank highly on Google.

Everyone can eat the pie.

In a competitive niche, I took a different approach.

Look farther

When chatting with bloggers that want a website, I discovered something fascinating.

They want to launch a website but have no ideas. As a next step, they start listing the interests they believe they should work on, like wellness, lifestyle, investments, etc. I could keep going.

Too many generalists who claim to know everything confuse many.

Generalists aren't trusted.

We want someone to fix our problems immediately.

I don't think broad-spectrum experts are undervalued. People have many demands that go beyond generalists' work. Narrow-niche experts can help.

I've done SEO for three years. I learned from experts and courses. I couldn't find a comprehensive SEO writing resource.

I read tons of articles before realizing that wasn't it. I took courses that covered SEO basics eventually.

I had a demand for learning SEO writing, but there was no solution on the market. My website fills this micro-niche.

Have you ever had trouble online?

Professional courses too general, boring, etc.?

You've bought off-topic books, right?

You're not alone.

Niche ideas!

Big players often disregard new opportunities. Too small. Individual content creators can succeed here.

In a competitive market:

Never choose wide subjects

Think about issues you can relate to and have direct experience with.

Be a consumer to discover both the positive and negative aspects of a good or service.

Merchandise your annoyances.

Consider ways to transform your frustrations into opportunities.

The right niche is half-success. Here is what else I did to hit the Google front page with my website.

An innovative method for choosing subjects

Why publish on social media and websites?

Want likes, shares, followers, or fame?

Some people do it for fun. No judgment.

I bet you want more.

You want to make decent money from blogging.

Writing about random topics, even if they are related to your niche, won’t help you attract an audience from organic search. I'm a marketer and writer.

I worked at companies with dead blogs because they posted for themselves, not readers. They did not follow SEO writing rules; that’s why most of their content flopped.

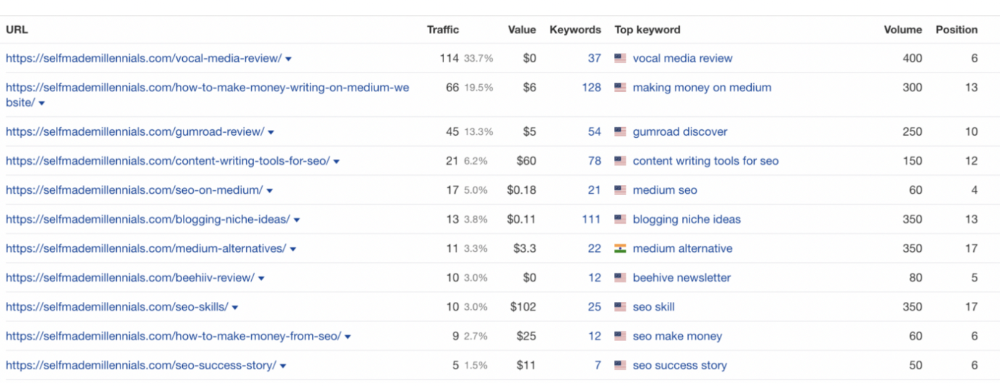

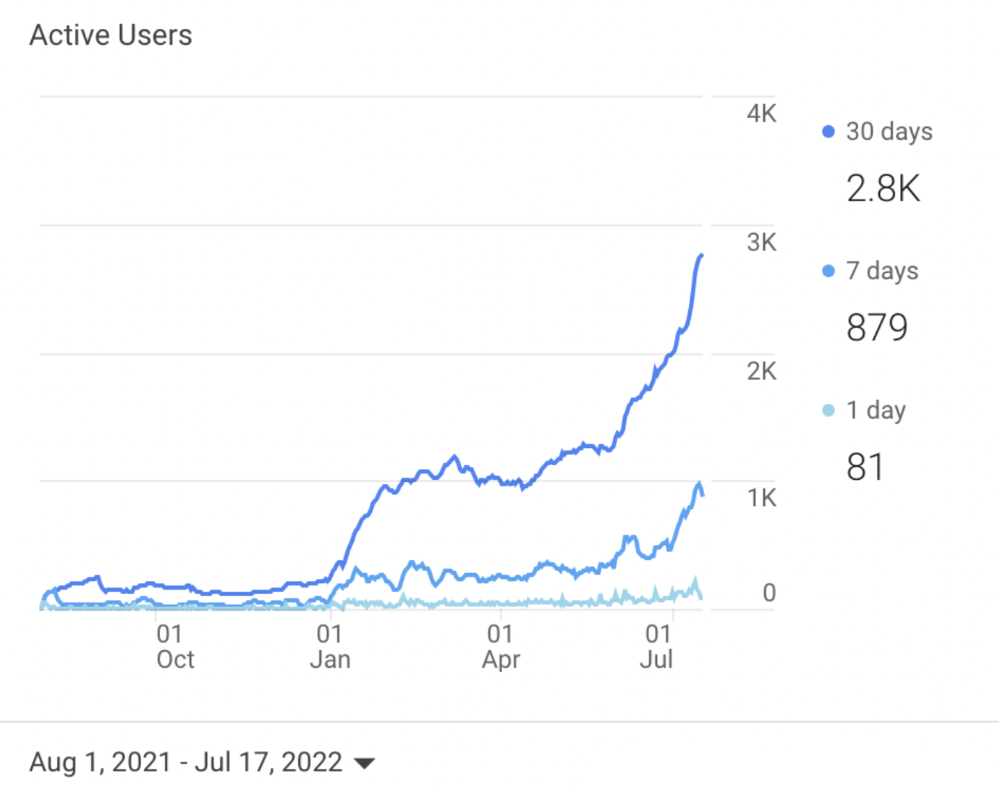

I learned these hard lessons and grew my website from 0 to 3,000+ visitors per month while working on it a few hours a week only. Evidence:

I choose website topics using these criteria:

- Business potential. The information should benefit my audience and generate revenue. There would be no use in having it otherwise.

My topics should help me:

Attract organic search traffic with my "fluff-free" content -> Subscribers > SEO ebook sales.

Simple and effective.

- traffic on search engines. The number of monthly searches reveals how popular my topic is all across the world. If I find that no one is interested in my suggested topic, I don't write a blog article.

- Competition. Every search term is up against rivals. Some are more popular (thus competitive) since more websites target them in organic search. A new website won't score highly for keywords that are too competitive. On the other side, keywords with moderate to light competition can help you rank higher on Google more quickly.

- Search purpose. The "why" underlying users' search requests is revealed. I analyze search intent to understand what users need when they plug various queries in the search bar and what content can perfectly meet their needs.

My specialty website produces money, ranks well, and attracts the target audience because I handpick high-traffic themes.

Following these guidelines, even a new website can stand out.

I wrote a 50-page SEO writing guide where I detailed topic selection and share my front-page Google strategy.

My guide can help you run a successful niche website.

In summary

You're not late to the niche-website party.

The Internet offers many untapped opportunities.

We need new solutions and are willing to listen.

There are unexplored niches in any topic.

Don't fight giants. They have their piece of the pie. They might overlook new opportunities while trying to keep that piece of the pie. You should act now.

Tim Denning

3 years ago

I Posted Six Times a Day for 210 Days on Twitter. Here's What Happened.

I'd spend hours composing articles only to find out they were useless. Twitter solved the problem.

Twitter is wrinkled, say critics.

Nope. Writing is different. It won't make sense until you write there.

Twitter is resurgent. People are reading again. 15-second TikToks overloaded our senses.

After nuking my 20,000-follower Twitter account and starting again, I wrote every day for 210 days.

I'll explain.

I came across the strange world of microblogging.

Traditional web writing is filler-heavy.

On Twitter, you must be brief. I played Wordle.

Twitter Threads are the most popular writing format. Like a blog post. It reminds me of the famous broetry posts on LinkedIn a few years ago.

Threads combine tweets into an article.

Sharp, concise sentences

No regard for grammar

As important as the information is how the text looks.

Twitter Threads are like Michael Angelo's David monument. He chipped away at an enormous piece of marble until a man with a big willy appeared.

That's Twitter Threads.

I tried to remove unnecessary layers from several of my Wordpress blog posts. Then I realized something.

Tweeting from scratch is easier and more entertaining. It's quicker and makes you think more concisely.

Superpower: saying much with little words. My long-form writing has improved. My article sentences resemble tweets.

You never know what will happen.

Twitter's subcultures are odd. Best-performing tweets are strange.

Unusual trend: working alone and without telling anyone. It's a rebellion against Instagram influencers who share their every moment.

Early on, random thoughts worked:

My friend’s wife is Ukrainian. Her family are trapped in the warzone. He is devastated. And here I was complaining about my broken garage door. War puts everything in perspective. Today is a day to be grateful for peace.

Documenting what's happening triggers writing. It's not about viral tweets. Helping others matters.

There are numerous anonymous users.

Twitter uses pseudonyms.

You don't matter. On sites like LinkedIn, you must use your real name. Welcome to the Cyberpunk metaverse of Twitter :)

One daily piece of writing is a powerful habit.

Habits build creator careers. Read that again.

Twitter is an easy habit to pick up. If you can't tweet in one sentence, something's wrong. Easy-peasy-japanese.

Not what I tweeted, but my constancy, made the difference.

Daily writing is challenging, especially if your supervisor is on your back. Twitter encourages writing.

Tweets evolved as the foundation of all other material.

During my experiment, I enjoyed Twitter's speed.

Tweets get immediate responses, comments, and feedback. My popular tweets become newspaper headlines. I've also written essays from tweet discussions.

Sometimes the tweet and article were clear. Twitter sometimes helped me overcome writer's block.

I used to spend hours composing big things that had little real-world use.

Twitter helped me. No guessing. Data guides my coverage and validates concepts.

Test ideas on Twitter.

It took some time for my email list to grow.

Subscribers are a writer's lifeblood.

Without them, you're broke and homeless when Mark Zuckerberg tweaks the algorithms for ad dollars. Twitter has three ways to obtain email subscribers:

1. Add a link to your bio.

Twitter allows bio links (LinkedIn now does too). My eBook's landing page is linked. I collect emails there.

2. Start an online newsletter.

Twitter bought newsletter app Revue. They promote what they own.

I just established up a Revue email newsletter. I imported them weekly into my ConvertKit email list.

3. Create Twitter threads and include a link to your email list in the final tweet.

Write Twitter Threads and link the last tweet to your email list (example below).

Initial email subscribers were modest.

Numbers are growing. Twitter provides 25% of my new email subscribers. Some days, 50 people join.

Without them, my writing career is over. I'd be back at a 9-5 job begging for time off to spend with my newborn daughter. Nope.

Collect email addresses or die trying.

As insurance against unsubscribes and Zucks, use a second email list or Discord community.

What I still need to do

Twitter's fun. I'm wiser. I need to enable auto-replies and auto-DMs (direct messages).

This adds another way to attract subscribers. I schedule tweets with Tweet Hunter.

It’s best to go slow. People assume you're an internet marketer if you spam them with click requests.

A human internet marketer is preferable to a robot. My opinion.

210 days on Twitter taught me that. I plan to use the platform until I'm a grandfather unless Elon ruins it.

You might also like

Ryan Weeks

3 years ago

Terra fiasco raises TRON's stablecoin backstop

After Terra's algorithmic stablecoin collapsed in May, TRON announced a plan to increase the capital backing its own stablecoin.

USDD, a near-carbon copy of Terra's UST, arrived on the TRON blockchain on May 5. TRON founder Justin Sun says USDD will be overcollateralized after initially being pegged algorithmically to the US dollar.

A reserve of cryptocurrencies and stablecoins will be kept at 130 percent of total USDD issuance, he said. TRON described the collateral ratio as "guaranteed" and said it would begin publishing real-time updates on June 5.

Currently, the reserve contains 14,040 bitcoin (around $418 million), 140 million USDT, 1.9 billion TRX, and 8.29 billion TRX in a burning contract.

Sun: "We want to hybridize USDD." We have an algorithmic stablecoin and TRON DAO Reserve.

algorithmic failure

USDD was designed to incentivize arbitrageurs to keep its price pegged to the US dollar by trading TRX, TRON's token, and USDD. Like Terra, TRON signaled its intent to establish a bitcoin and cryptocurrency reserve to support USDD in extreme market conditions.

Still, Terra's UST failed despite these safeguards. The stablecoin veered sharply away from its dollar peg in mid-May, bringing down Terra's LUNA and wiping out $40 billion in value in days. In a frantic attempt to restore the peg, billions of dollars in bitcoin were sold and unprecedented volumes of LUNA were issued.

Sun believes USDD, which has a total circulating supply of $667 million, can be backed up.

"Our reserve backing is diversified." Bitcoin and stablecoins are included. USDC will be a small part of Circle's reserve, he said.

TRON's news release lists the reserve's assets as bitcoin, TRX, USDC, USDT, TUSD, and USDJ.

All Bitcoin addresses will be signed so everyone knows they belong to us, Sun said.

Not giving in

Sun told that the crypto industry needs "decentralized" stablecoins that regulators can't touch.

Sun said the Luna Foundation Guard, a Singapore-based non-profit that raised billions in cryptocurrency to buttress UST, mismanaged the situation by trying to sell to panicked investors.

He said, "We must be ahead of the market." We want to stabilize the market and reduce volatility.

Currently, TRON finances most of its reserve directly, but Sun says the company hopes to add external capital soon.

Before its demise, UST holders could park the stablecoin in Terra's lending platform Anchor Protocol to earn 20% interest, which many deemed unsustainable. TRON's JustLend is similar. Sun hopes to raise annual interest rates from 17.67% to "around 30%."

This post is a summary. Read full article here

Thomas Smith

3 years ago

ChatGPT Is Experiencing a Lightbulb Moment

Why breakthrough technologies must be accessible

ChatGPT has exploded. Over 1 million people have used the app, and coding sites like Stack Overflow have banned its answers. It's huge.

I wouldn't have called that as an AI researcher. ChatGPT uses the same GPT-3 technology that's been around for over two years.

More than impressive technology, ChatGPT 3 shows how access makes breakthroughs usable. OpenAI has finally made people realize the power of AI by packaging GPT-3 for normal users.

We think of Thomas Edison as the inventor of the lightbulb, not because he invented it, but because he popularized it.

Going forward, AI companies that make using AI easy will thrive.

Use-case importance

Most modern AI systems use massive language models. These language models are trained on 6,000+ years of human text.

GPT-3 ate 8 billion pages, almost every book, and Wikipedia. It created an AI that can write sea shanties and solve coding problems.

Nothing new. I began beta testing GPT-3 in 2020, but the system's basics date back further.

Tools like GPT-3 are hidden in many apps. Many of the AI writing assistants on this platform are just wrappers around GPT-3.

Lots of online utilitarian text, like restaurant menu summaries or city guides, is written by AI systems like GPT-3. You've probably read GPT-3 without knowing it.

Accessibility

Why is ChatGPT so popular if the technology is old?

ChatGPT makes the technology accessible. Free to use, people can sign up and text with the chatbot daily. ChatGPT isn't revolutionary. It does it in a way normal people can access and be amazed by.

Accessibility isn't easy. OpenAI's Sam Altman tweeted that opening ChatGPT to the public increased computing costs.

Each chat costs "low-digit cents" to process. OpenAI probably spends several hundred thousand dollars a day to keep ChatGPT running, with no immediate business case.

Academic researchers and others who developed GPT-3 couldn't afford it. Without resources to make technology accessible, it can't be used.

Retrospective

This dynamic is old. In the history of science, a researcher with a breakthrough idea was often overshadowed by an entrepreneur or visionary who made it accessible to the public.

We think of Thomas Edison as the inventor of the lightbulb. But really, Vasilij Petrov, Thomas Wright, and Joseph Swan invented the lightbulb. Edison made technology visible and accessible by electrifying public buildings, building power plants, and wiring.

Edison probably lost a ton of money on stunts like building a power plant to light JP Morgan's home, the NYSE, and several newspaper headquarters.

People wanted electric lights once they saw their benefits. By making the technology accessible and visible, Edison unlocked a hugely profitable market.

Similar things are happening in AI. ChatGPT shows that developing breakthrough technology in the lab or on B2B servers won't change the culture.

AI must engage people's imaginations to become mainstream. Before the tech impacts the world, people must play with it and see its revolutionary power.

As the field evolves, companies that make the technology widely available, even at great cost, will succeed.

OpenAI's compute fees are eye-watering. Revolutions are costly.

Pat Vieljeux

3 years ago

In 5 minutes, you can tell if a startup will succeed.

Or the “lie to me” method.

I can predict a startup's success in minutes.

Just interview its founder.

Ask "why?"

I question "why" till I sense him.

I need to feel the person I have in front of me. I need to know if he or she can deliver. Startups aren't easy. Without abilities, a brilliant idea will fail.

Good entrepreneurs have these qualities: He's a leader, determined, and resilient.

For me, they can be split in two categories.

The first entrepreneur aspires to live meaningfully. The second wants to get rich. The second is communicative. He wants to wow the crowd. He's motivated by the thought of one day sailing a boat past palm trees and sunny beaches.

What drives the first entrepreneur is evident in his speech, face, and voice. He will not speak about his product. He's (nearly) uninterested. He's not selling anything. He's not a salesman. He wants to succeed. The product is his fuel.

He'll explain his decision. He'll share his motivations. His desire. And he'll use meaningful words.

Paul Ekman has shown that face expressions aren't cultural. His study influenced the American TV series "lie to me" about body language and speech.

Passionate entrepreneurs are obvious. It's palpable. Faking passion is tough. Someone who wants your favor and money will expose his actual motives through his expressions and language.

The good liar will be able to fool you for a while, but not for long if you pay attention to his body language and how he expresses himself.

And also, if you look at his business plan.

His business plan reveals his goals. Read between the lines.

Entrepreneur 1 will focus on his "why", whereas Entrepreneur 2 will focus on the "how".

Entrepreneur 1 will develop a vision-driven culture.

The second, on the other hand, will focus on his EBITDA.

Why is the culture so critical? Because it will allow entrepreneur 1 to develop a solid team that can tackle his problems and trials. His team's "why" will keep them together in tough times.

"Give me a terrific start-up team with a mediocre idea over a weak one any day." Because a great team knows when to pivot and trusts each other. Weak teams fail.” — Bernhard Schroeder

Closings thoughts

Every VC must ask Why. Entrepreneur's motivations. This "why" will create the team's culture. This culture will help the team adjust to any setback.