More on Gaming

Ash Parrish

3 years ago

Sonic Prime and indie games on Netflix

Netflix will stream Spiritfarer, Raji: An Ancient Epic, and Lucky Luna.

Netflix's Geeked Week brought a slew of announcements. The flurry of reveals for The Sandman, The Umbrella Academy season 3, One Piece, and more also included game and game-adjacent announcements.

Netflix released a teaser for Cuphead season 2 ahead of its August premiere, featuring more of Grey DeLisle's Ms. Chalice. DOTA: Dragon's Blood season 3 hits Netflix in August. Tekken, the fighting game that throws kids off cliffs, gets an anime, Tekken: Bloodline.

Netflix debuted a clip of Sonic Prime before Sonic Origins in June and Sonic Frontiers in 2022.

Castlevania: Nocturne will follow Richter Belmont.

Netflix is reviving licensed games with titles based on its shows. There's a Queen's Gambit chess game, a Shadow and Bone RPG, a La Casa de Papel heist adventure, and a Too Hot to Handle game where a pregnant woman must choose between stabbing her cheating ex or forgiving him.

Riot's rhythm platformer Hextech Mayhem debuted on Netflix last year, and now Netflix is adding games from Devolver Digital. Reigns: Three Kingdoms is a card game that lets players choose the fate of Three Kingdoms-era China by swiping left or right on cards. Spiritfarer, the "cozy game about death" from 2020, and Raji: An Ancient Epic are coming to Netflix. Poinpy, a vertical climber from the creator of Downwell, is now on Netflix.

Desta: The Memories Between is a turn-based strategy game set in dreams and memories.

Snowman's Lucky Luna will also be added soon.

With these games, Netflix is expanding beyond dinky mobile games — it plans to have 50 by the end of the year — and could be a serious platform for indies that want to expand into mobile. It takes gaming seriously.

Chris Moyse

3 years ago

Sony and LEGO raise $2 billion for Epic Games' metaverse

‘Kid-friendly’ project holds $32 billion valuation

Epic Games announced today that it has raised $2 billion USD from Sony Group Corporation and KIRKBI (holding company of The LEGO Group). Both companies contributed $1 billion to Epic Games' upcoming ‘metaverse' project.

“We need partners who share our vision as we reimagine entertainment and play. Our partnership with Sony and KIRKBI has found this,” said Epic Games CEO Tim Sweeney. A new metaverse will be built where players can have fun with friends and brands create creative and immersive experiences, as well as creators thrive.

Last week, LEGO and Epic Games announced their plans to create a family-friendly metaverse where kids can play, interact, and create in digital environments. The service's users' safety and security will be prioritized.

With this new round of funding, Epic Games' project is now valued at $32 billion.

“Epic Games is known for empowering creators large and small,” said KIRKBI CEO Sren Thorup Srensen. “We invest in trends that we believe will impact the world we and our children will live in. We are pleased to invest in Epic Games to support their continued growth journey, with a long-term focus on the future metaverse.”

Epic Games is expected to unveil its metaverse plans later this year, including its name, details, services, and release date.

Luke Plunkett

3 years ago

Gran Turismo 7 Update Eases Up On The Grind After Fan Outrage

Polyphony Digital has changed the game after apologizing in March.

To make amends for some disastrous downtime, Gran Turismo 7 director Kazunori Yamauchi announced a credits handout and promised to “dramatically change GT7's car economy to help make amends” last month. The first of these has arrived.

The game's 1.11 update includes the following concessions to players frustrated by the economy and its subsequent grind:

-

The last half of the World Circuits events have increased in-game credit rewards.

-

Modified Arcade and Custom Race rewards

-

Clearing all circuit layouts with Gold or Bronze now rewards In-game Credits. Exiting the Sector selection screen with the Exit button will award Credits if an event has already been cleared.

-

Increased Credits Rewards in Lobby and Daily Races

-

Increased the free in-game Credits cap from 20,000,000 to 100,000,000.

Additionally, “The Human Comedy” missions are one-hour endurance races that award “up to 1,200,000” credits per event.

This isn't everything Yamauchi promised last month; he said it would take several patches and updates to fully implement the changes. Here's a list of everything he said would happen, some of which have already happened (like the World Cup rewards and credit cap):

- Increase rewards in the latter half of the World Circuits by roughly 100%.

- Added high rewards for all Gold/Bronze results clearing the Circuit Experience.

- Online Races rewards increase.

- Add 8 new 1-hour Endurance Race events to Missions. So expect higher rewards.

- Increase the non-paid credit limit in player wallets from 20M to 100M.

- Expand the number of Used and Legend cars available at any time.

- With time, we will increase the payout value of limited time rewards.

- New World Circuit events.

- Missions now include 24-hour endurance races.

- Online Time Trials added, with rewards based on the player's time difference from the leader.

- Make cars sellable.

The full list of updates and changes can be found here.

Read the original post.

You might also like

James Howell

3 years ago

Which Metaverse Is Better, Decentraland or Sandbox?

The metaverse is the most commonly used term in current technology discussions. While the entire tech ecosystem awaits the metaverse's full arrival, defining it is difficult. Imagine the internet in the '80s! The metaverse is a three-dimensional virtual world where users can interact with digital solutions and each other as digital avatars.

The metaverse is a three-dimensional virtual world where users can interact with digital solutions and each other as digital avatars.

Among the metaverse hype, the Decentraland vs Sandbox debate has gained traction. Both are decentralized metaverse platforms with no central authority. So, what's the difference and which is better? Let us examine the distinctions between Decentraland and Sandbox.

2 Popular Metaverse Platforms Explained

The first step in comparing sandbox and Decentraland is to outline the definitions. Anyone keeping up with the metaverse news has heard of the two current leaders. Both have many similarities, but also many differences. Let us start with defining both platforms to see if there is a winner.

Decentraland

Decentraland, a fully immersive and engaging 3D metaverse, launched in 2017. It allows players to buy land while exploring the vast virtual universe. Decentraland offers a wide range of activities for its visitors, including games, casinos, galleries, and concerts. It is currently the longest-running metaverse project.

Decentraland began with a $24 million ICO and went public in 2020. The platform's virtual real estate parcels allow users to create a variety of experiences. MANA and LAND are two distinct tokens associated with Decentraland. MANA is the platform's native ERC-20 token, and users can burn MANA to get LAND, which is ERC-721 compliant. The MANA coin can be used to buy avatars, wearables, products, and names on Decentraland.

Sandbox

Sandbox, the next major player, began as a blockchain-based virtual world in 2011 and migrated to a 3D gaming platform in 2017. The virtual world allows users to create, play, own, and monetize their virtual experiences. Sandbox aims to empower artists, creators, and players in the blockchain community to customize the platform. Sandbox gives the ideal means for unleashing creativity in the development of the modern gaming ecosystem.

The project combines NFTs and DAOs to empower a growing community of gamers. A new play-to-earn model helps users grow as gamers and creators. The platform offers a utility token, SAND, which is required for all transactions.

What are the key points from both metaverse definitions to compare Decentraland vs sandbox?

It is ideal for individuals, businesses, and creators seeking new artistic, entertainment, and business opportunities. It is one of the rapidly growing Decentralized Autonomous Organization projects. Holders of MANA tokens also control the Decentraland domain.

Sandbox, on the other hand, is a blockchain-based virtual world that runs on the native token SAND. On the platform, users can create, sell, and buy digital assets and experiences, enabling blockchain-based gaming. Sandbox focuses on user-generated content and building an ecosystem of developers.

Sandbox vs. Decentraland

If you try to find what is better Sandbox or Decentraland, then you might struggle with only the basic definitions. Both are metaverse platforms offering immersive 3D experiences. Users can freely create, buy, sell, and trade digital assets. However, both have significant differences, especially in MANA vs SAND.

For starters, MANA has a market cap of $5,736,097,349 versus $4,528,715,461, giving Decentraland an advantage.

The MANA vs SAND pricing comparison is also noteworthy. A SAND is currently worth $3664, while a MANA is worth $2452.

The value of the native tokens and the market capitalization of the two metaverse platforms are not enough to make a choice. Let us compare Sandbox vs Decentraland based on the following factors.

Workstyle

The way Decentraland and Sandbox work is one of the main comparisons. From a distance, they both appear to work the same way. But there's a lot more to learn about both platforms' workings. Decentraland has 90,601 digital parcels of land.

Individual parcels of virtual real estate or estates with multiple parcels of land are assembled. It also has districts with similar themes and plazas, which are non-tradeable parcels owned by the community. It has three token types: MANA, LAND, and WEAR.

Sandbox has 166,464 plots of virtual land that can be grouped into estates. Estates are owned by one person, while districts are owned by two or more people. The Sandbox metaverse has four token types: SAND, GAMES, LAND, and ASSETS.

Age

The maturity of metaverse projects is also a factor in the debate. Decentraland is clearly the winner in terms of maturity. It was the first solution to create a 3D blockchain metaverse. Decentraland made the first working proof of concept public. However, Sandbox has only made an Alpha version available to the public.

Backing

The MANA vs SAND comparison would also include support for both platforms. Digital Currency Group, FBG Capital, and CoinFund are all supporters of Decentraland. It has also partnered with Polygon, the South Korean government, Cyberpunk, and Samsung.

SoftBank, a Japanese multinational conglomerate focused on investment management, is another major backer. Sandbox has the backing of one of the world's largest investment firms, as well as Slack and Uber.

Compatibility

Wallet compatibility is an important factor in comparing the two metaverse platforms. Decentraland currently has a competitive advantage. How? Both projects' marketplaces accept ERC-20 wallets. However, Decentraland has recently improved by bridging with Walletconnect. So it can let Polygon users join Decentraland.

Scalability

Because Sandbox and Decentraland use the Ethereum blockchain, scalability is an issue. Both platforms' scalability is constrained by volatile tokens and high gas fees. So, scalability issues can hinder large-scale adoption of both metaverse platforms.

Buying Land

Decentraland vs Sandbox comparisons often include virtual real estate. However, the ability to buy virtual land on both platforms defines the user experience and differentiates them. In this case, Sandbox offers better options for users to buy virtual land by combining OpenSea and Sandbox. In fact, Decentraland users can only buy from the MANA marketplace.

Innovation

The rate of development distinguishes Sandbox and Decentraland. Both platforms have been developing rapidly new features. However, Sandbox wins by adopting Polygon NFT layer 2 solutions, which consume almost 100 times less energy than Ethereum.

Collaborations

The platforms' collaborations are the key to determining "which is better Sandbox or Decentraland." Adoption of metaverse platforms like the two in question can be boosted by association with reputable brands. Among the partners are Atari, Cyberpunk, and Polygon. Rather, Sandbox has partnered with well-known brands like OpenSea, CryptoKitties, The Walking Dead, Snoop Dogg, and others.

Platform Adaptivity

Another key feature that distinguishes Sandbox and Decentraland is the ease of use. Sandbox clearly wins in terms of platform access. It allows easy access via social media, email, or a Metamask wallet. However, Decentraland requires a wallet connection.

Prospects

The future development plans also play a big role in defining Sandbox vs Decentraland. Sandbox's future development plans include bringing the platform to mobile devices. This includes consoles like PlayStation and Xbox. By the end of 2023, the platform expects to have around 5000 games.

Decentraland, on the other hand, has no set plan. In fact, the team defines the decisions that appear to have value. They plan to add celebrities, creators, and brands soon, along with NFT ads and drops.

Final Words

The comparison of Decentraland vs Sandbox provides a balanced view of both platforms. You can see how difficult it is to determine which decentralized metaverse is better now. Sandbox is still in Alpha, whereas Decentraland has a working proof of concept.

Sandbox, on the other hand, has better graphics and is backed by some big names. But both have a long way to go in the larger decentralized metaverse.

Dung Claire Tran

3 years ago

Is the future of brand marketing with virtual influencers?

Digital influences that mimic humans are rising.

Lil Miquela has 3M Instagram followers, 3.6M TikTok followers, and 30K Twitter followers. She's been on the covers of Prada, Dior, and Calvin Klein magazines. Miquela released Not Mine in 2017 and launched Hard Feelings at Lollapazoolas this year. This isn't surprising, given the rise of influencer marketing.

This may be unexpected. Miquela's fake. Brud, a Los Angeles startup, produced her in 2016.

Lil Miquela is one of many rising virtual influencers in the new era of social media marketing. She acts like a real person and performs the same tasks as sports stars and models.

The emergence of online influencers

Before 2018, computer-generated characters were rare. Since the virtual human industry boomed, they've appeared in marketing efforts worldwide.

In 2020, the WHO partnered up with Atlanta-based virtual influencer Knox Frost (@knoxfrost) to gather contributions for the COVID-19 Solidarity Response Fund.

Lu do Magalu (@magazineluiza) has been the virtual spokeswoman for Magalu since 2009, using social media to promote reviews, product recommendations, unboxing videos, and brand updates. Magalu's 10-year profit was $552M.

In 2020, PUMA partnered with Southeast Asia's first virtual model, Maya (@mayaaa.gram). She joined Singaporean actor Tosh Zhang in the PUMA campaign. Local virtual influencer Ava Lee-Graham (@avagram.ai) partnered with retail firm BHG to promote their in-house labels.

In Japan, Imma (@imma.gram) is the face of Nike, PUMA, Dior, Salvatore Ferragamo SpA, and Valentino. Imma's bubblegum pink bob and ultra-fine fashion landed her on the cover of Grazia magazine.

Lotte Home Shopping created Lucy (@here.me.lucy) in September 2020. She made her TV debut as a Christmas show host in 2021. Since then, she has 100K Instagram followers and 13K TikTok followers.

Liu Yiexi gained 3 million fans in five days on Douyin, China's TikTok, in 2021. Her two-minute video went viral overnight. She's posted 6 videos and has 830 million Douyin followers.

China's virtual human industry was worth $487 million in 2020, up 70% year over year, and is expected to reach $875.9 million in 2021.

Investors worldwide are interested. Immas creator Aww Inc. raised $1 million from Coral Capital in September 2020, according to Bloomberg. Superplastic Inc., the Vermont-based startup behind influencers Janky and Guggimon, raised $16 million by 2020. Craft Ventures, SV Angels, and Scooter Braun invested. Crunchbase shows the company has raised $47 million.

The industries they represent, including Augmented and Virtual reality, were worth $14.84 billion in 2020 and are projected to reach $454.73 billion by 2030, a CAGR of 40.7%, according to PR Newswire.

Advantages for brands

Forbes suggests brands embrace computer-generated influencers. Examples:

Unlimited creative opportunities: Because brands can personalize everything—from a person's look and activities to the style of their content—virtual influencers may be suited to a brand's needs and personalities.

100% brand control: Brand managers now have more influence over virtual influencers, so they no longer have to give up and rely on content creators to include brands into their storytelling and style. Virtual influencers can constantly produce social media content to promote a brand's identity and ideals because they are completely scandal-free.

Long-term cost savings: Because virtual influencers are made of pixels, they may be reused endlessly and never lose their beauty. Additionally, they can move anywhere around the world and even into space to fit a brand notion. They are also always available. Additionally, the expense of creating their content will not rise in step with their expanding fan base.

Introduction to the metaverse: Statista reports that 75% of American consumers between the ages of 18 and 25 follow at least one virtual influencer. As a result, marketers that support virtual celebrities may now interact with younger audiences that are more tech-savvy and accustomed to the digital world. Virtual influencers can be included into any digital space, including the metaverse, as they are entirely computer-generated 3D personas. Virtual influencers can provide brands with a smooth transition into this new digital universe to increase brand trust and develop emotional ties, in addition to the young generations' rapid adoption of the metaverse.

Better engagement than in-person influencers: A Hype Auditor study found that online influencers have roughly three times the engagement of their conventional counterparts. Virtual influencers should be used to boost brand engagement even though the data might not accurately reflect the entire sector.

Concerns about influencers created by computers

Virtual influencers could encourage excessive beauty standards in South Korea, which has a $10.7 billion plastic surgery industry.

A classic Korean beauty has a small face, huge eyes, and pale, immaculate skin. Virtual influencers like Lucy have these traits. According to Lee Eun-hee, a professor at Inha University's Department of Consumer Science, this could make national beauty standards more unrealistic, increasing demand for plastic surgery or cosmetic items.

Other parts of the world raise issues regarding selling items to consumers who don't recognize the models aren't human and the potential of cultural appropriation when generating influencers of other ethnicities, called digital blackface by some.

Meta, Facebook and Instagram's parent corporation, acknowledges this risk.

“Like any disruptive technology, synthetic media has the potential for both good and harm. Issues of representation, cultural appropriation and expressive liberty are already a growing concern,” the company stated in a blog post. “To help brands navigate the ethical quandaries of this emerging medium and avoid potential hazards, (Meta) is working with partners to develop an ethical framework to guide the use of (virtual influencers).”

Despite theoretical controversies, the industry will likely survive. Companies think virtual influencers are the next frontier in the digital world, which includes the metaverse, virtual reality, and digital currency.

In conclusion

Virtual influencers may garner millions of followers online and help marketers reach youthful audiences. According to a YouGov survey, the real impact of computer-generated influencers is yet unknown because people prefer genuine connections. Virtual characters can supplement brand marketing methods. When brands are metaverse-ready, the author predicts virtual influencer endorsement will continue to expand.

Florian Wahl

3 years ago

An Approach to Product Strategy

I've been pondering product strategy and how to articulate it. Frameworks helped guide our thinking.

If your teams aren't working together or there's no clear path to victory, your product strategy may not be well-articulated or communicated (if you have one).

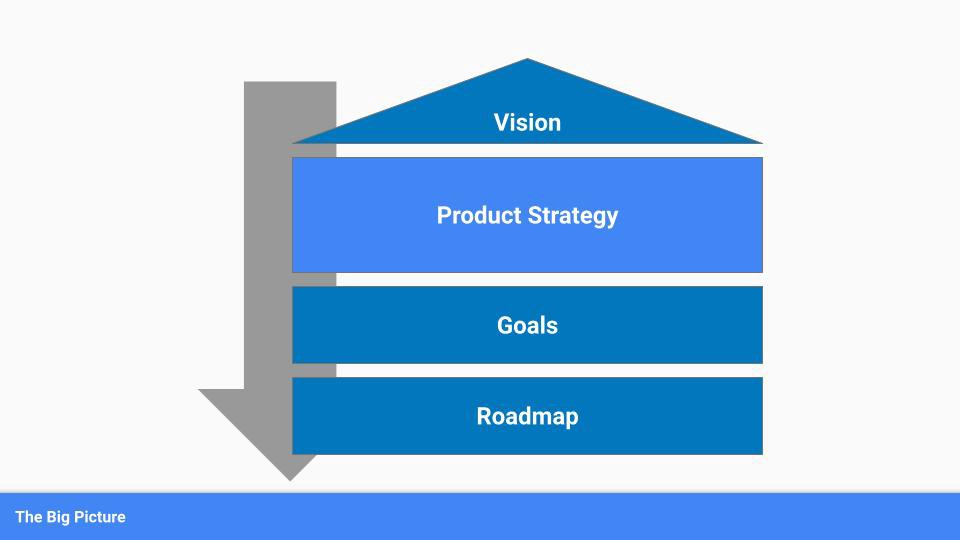

Before diving into a product strategy's details, it's important to understand its role in the bigger picture — the pieces that move your organization forward.

the overall picture

A product strategy is crucial, in my opinion. It's part of a successful product or business. It's the showpiece.

To simplify, we'll discuss four main components:

Vision

Product Management

Goals

Roadmap

Vision

Your company's mission? Your company/product in 35 years? Which headlines?

The vision defines everything your organization will do in the long term. It shows how your company impacted the world. It's your organization's rallying cry.

An ambitious but realistic vision is needed.

Without a clear vision, your product strategy may be inconsistent.

Product Management

Our main subject. Product strategy connects everything. It fulfills the vision.

In Part 2, we'll discuss product strategy.

Goals

This component can be goals, objectives, key results, targets, milestones, or whatever goal-tracking framework works best for your organization.

These product strategy metrics will help your team prioritize strategies and roadmaps.

Your company's goals should be unified. This fuels success.

Roadmap

The roadmap is your product strategy's timeline. It provides a prioritized view of your team's upcoming deliverables.

A roadmap is time-bound and includes measurable goals for your company. Your team's steps and capabilities for executing product strategy.

If your team has trouble prioritizing or defining a roadmap, your product strategy or vision is likely unclear.

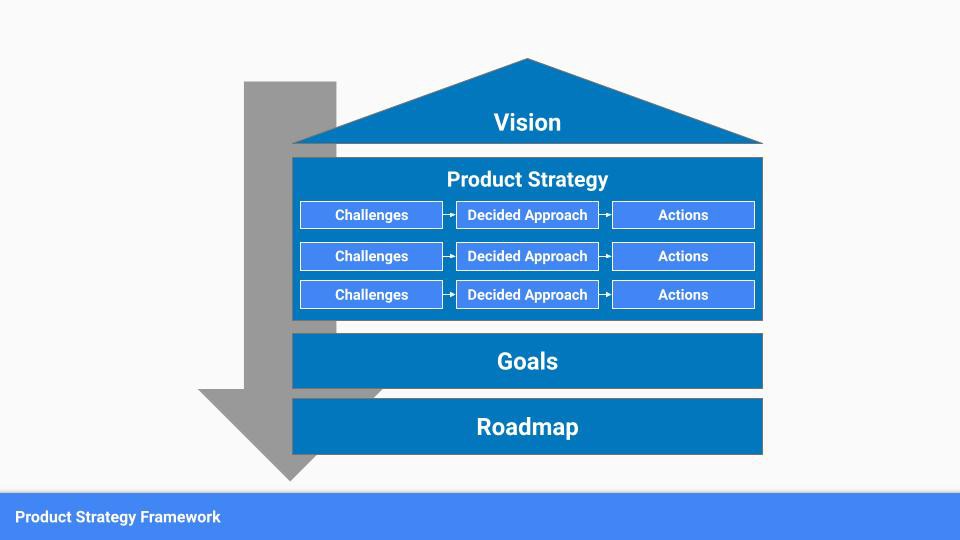

Formulation of a Product Strategy

Now that we've discussed where your product strategy fits in the big picture, let's look at a framework.

A product strategy should include challenges, an approach, and actions.

Challenges

First, analyze the problems/situations you're solving. It can be customer- or company-focused.

The analysis should explain the problems and why they're important. Try to simplify the situation and identify critical aspects.

Some questions:

What issues are we attempting to resolve?

What obstacles—internal or otherwise—are we attempting to overcome?

What is the opportunity, and why should we pursue it, in your opinion?

Decided Method

Second, describe your approach. This can be a set of company policies for handling the challenge. It's the overall approach to the first part's analysis.

The approach can be your company's bets, the solutions you've found, or how you'll solve the problems you've identified.

Again, these questions can help:

What is the value that we hope to offer to our clients?

Which market are we focusing on first?

What makes us stand out? Our benefit over rivals?

Actions

Third, identify actions that result from your approach. Second-part actions should be these.

Coordinate these actions. You may need to add products or features to your roadmap, acquire new capabilities through partnerships, or launch new marketing campaigns. Whatever fits your challenges and strategy.

Final questions:

What skills do we need to develop or obtain?

What is the chosen remedy? What are the main outputs?

What else ought to be added to our road map?

Put everything together

… and iterate!

Strategy isn't one-and-done. Changes occur. Economies change. Competitors emerge. Customer expectations change.

One unexpected event can make strategies obsolete quickly. Muscle it. Review, evaluate, and course-correct your strategies with your teams. Quarterly works. In a new or unstable industry, more often.