
Edward Williams

3 years ago

I currently manage 4 profitable online companies. I find all the generic advice and garbage courses very frustrating. The only advice you need is this.

This is for young entrepreneurs, especially in tech.

People give useless success advice on TikTok and Reddit: wake up early, read books, take entrepreneurship courses, work hard and hustle.

False. These aren't the traits that make people successful.

I mean, organization is good. As someone who founded several businesses and now works at a VC firm, I find these tips to be clichés.

Based on founding four successful businesses and working with other successful firms, here's my best actionable advice:

1. Choose a sector or a niche and become an expert in it.

This is more generic than my next tip, but it's a must-do that's often overlooked. Become an expert in the industry or niche you want to enter. Discover everything.

Buy (future) competitors' products. Understand consumers' pain points. Market-test. Target keyword combos. Learn technical details.

The most successful businesses I've worked with were all formed by 9-5 employees. They knew the industry's pain points. They started a business targeting these pain points.

2. Choose a niche or industry crossroads to target.

How do you choose an industry or niche? What if your industry is too competitive?

List your skills and hobbies. Randomness is fine. Find an intersection between two interests or skills.

Say you build websites well. You like cars.

Web design is a *very* competitive industry. Cars and web design?

Instead of web design, target car dealers and mechanics. Build a few fake demo auto mechanic websites, then cold call shops with poor websites. Verticalize.

I've noticed a pattern:

Person works in a particular industry for a corporation.

Person gains expertise in the relevant industry.

Person quits their job and launches a small business to address a problem that their former employer was unwilling to address.

I originally posted this on Reddit and it seemed to have taken off so I decided to share it with you all.

Focus on the product. When someone buys from you, you've convinced them the product's value exceeds the price. The exchange isn't even; it favors the buyer.

Creating a superior product or service will win. Narrowing your niche helps you outcompete others.

You may be their only (lucky) option.

Nick Nolan

3 years ago

In five years, starting a business won't be hip.

People are slowly recognizing entrepreneurship's downside.

Growing up, entrepreneurship wasn't common. My high school class of 2012 had no entrepreneurs.

Businesses were different.

They had staff and a lengthy history of achievement.

I never wanted a business. It felt unattainable. My friends didn't care.

Weird.

People desired degrees to attain good jobs at big companies.

When I graduated high school, people did one of three things:

Attend college (9 out of 10 people)

Earn minimum wage working in a restaurant or retail establishment (7%)

Or join the military (3%)

Later, entrepreneurship became a thing.

2014-ish

I was in the military and most of my high school friends were in college, so I didn't hear anything.

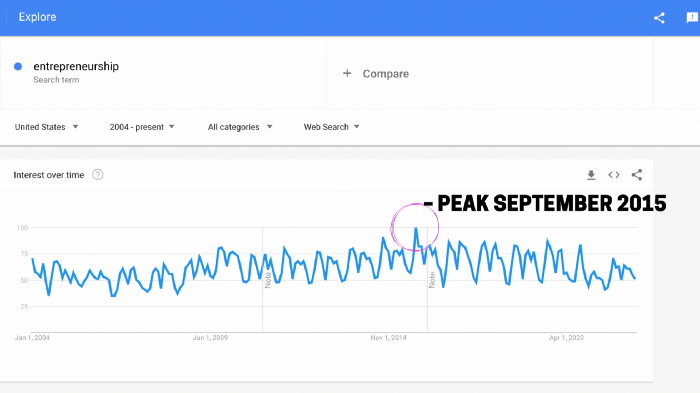

Entrepreneurship soared in 2015, according to Google Trends.

Then more individuals were interested. Entrepreneurship went from unusual to cool.

In 2015, it was easier than ever to build a website, run Facebook advertisements, and achieve organic social media reach.

There were several online business tools.

You didn't need to spend years or money figuring it out. Most entry barriers were gone.

Everyone wanted a side gig to escape the 9-5.

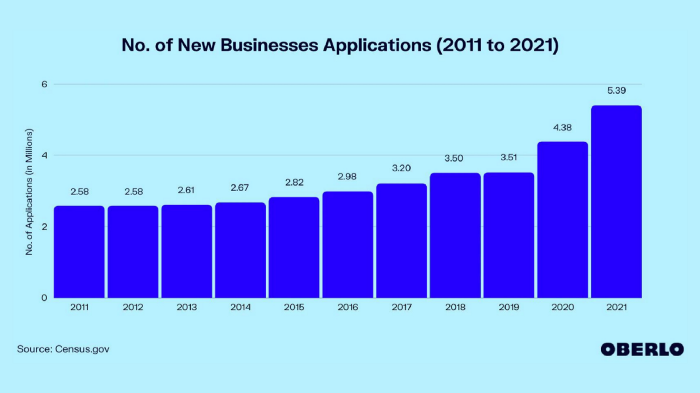

Small business applications have increased over the past 10 years.

2011-2014 trend continues.

2015 adds 150,000 applications. 2016 adds 200,000. Plus 300,000 in 2017.

The graph makes it look small, but that's a considerable annual spike with no signs of stopping.

By 2021, new business applications had doubled.

Entrepreneurship will return to its early 2010s level.

I think we'll go backward in 5 years.

Entrepreneurship is half as popular as it was in 2015.

In the late 2020s and 30s, entrepreneurship will again be obscure.

Entrepreneurship's decade-long splendor is fading. People will cease escaping 9-5 and launch fewer companies.

That’s not a bad thing.

I think people have a rose-colored vision of entrepreneurship. It's fashionable. People feel that they're missing out if they're not entrepreneurial.

Reality is showing up.

People say on social media, "I knew starting a business would be hard, but not this hard."

More negative posts on entrepreneurship:

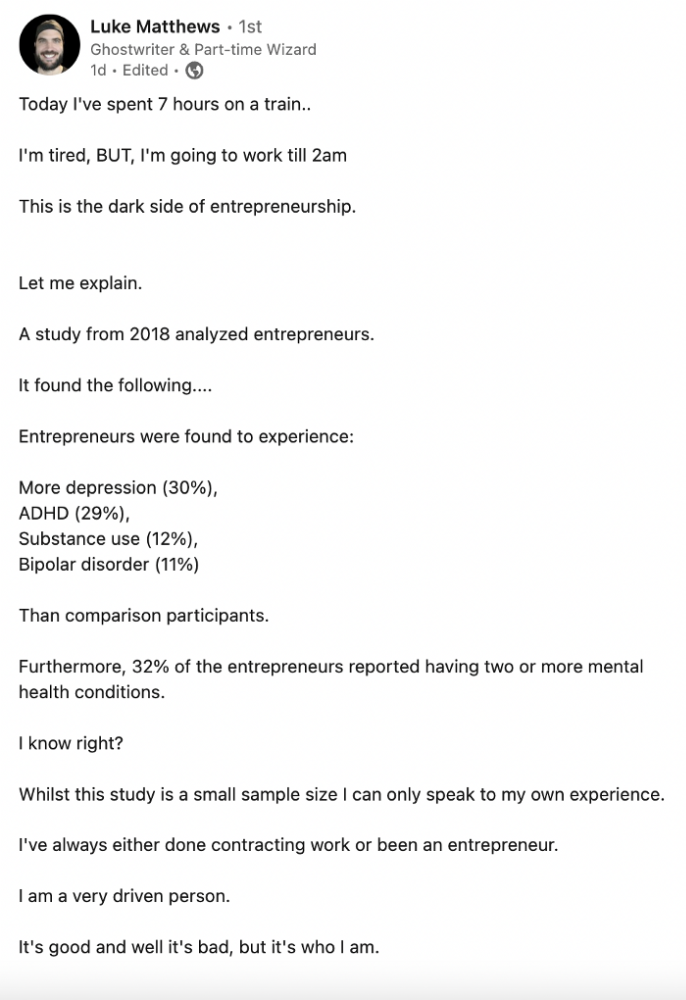

Luke adds:

Is being an entrepreneur ‘healthy’? I don’t really think so. Many like Gary V, are not role models for a well-balanced life. Despite what feel-good LinkedIn tells you the odds are against you as an entrepreneur. You have to work your face off. It’s a tough but rewarding lifestyle. So maybe let’s stop glorifying it because it takes a lot of (bleepin) work to survive a pandemic, mental health battles, and a competitive market.

Entrepreneurship is no longer a pipe dream.

It’s hard.

I went full-time in March 2020. I was done by April 2021. I had lined up a good-paying job with perks.

When that fell through (on my start date), I had to continue my entrepreneurial path. I needed money by May 1 to pay rent.

Entrepreneurship isn't as great as many think.

Entrepreneurship is a serious business.

If you have a 9-5, the grass isn't greener here. Most people aren't telling the whole story when they post on social media or quote successful entrepreneurs.

People prefer to share their victories rather than their defeats.

Is this a bad thing?

I don’t think so.

Over the previous decade, entrepreneurship went from impossible to the greatest thing ever.

It peaked in 2020-21 and is returning to reality.

Startups aren't for everyone.

If you like your job, don't quit.

Quitting your job to become an entrepreneur won't amaze anyone.

It's irrelevant.

You'll probably fail.

And you'll probably make less money.

If you hate your job, quit. Change jobs and bosses. Changing jobs could net you higher pay or better perks.

When you go solo, your paycheck and perks vanish. Did I mention you'll fail, sleep less, and stress more?

Nobody will stop you from pursuing entrepreneurship. You'll face several challenges.

Possibly.

Entrepreneurship may be romanticized for years.

Based on what I see from entrepreneurs on social media and trends, entrepreneurship is challenging and few will succeed.

Aaron Dinin, PhD

3 years ago

There Are Two Types of Entrepreneurs in the World

Make sure you are aware of your type!

Know why it's important.

The entrepreneur I was meeting with said, "I should be doing crypto, or maybe AI? Aren't those the hot spots? I should look there for a startup idea."

I shook my head. Yes, they're exciting, but that doesn't mean they're best for you and your business.

“There are different types of entrepreneurs?” he asked.

I said "obviously." Two types, actually. Knowing what type of entrepreneur you are helps you build the right startup.

The two types of businesspeople

The best way for me to describe the two types of entrepreneurs is to start by telling you exactly the kinds of entrepreneurial opportunities I never get excited about: future opportunities.

In the early 1990s, my older brother showed me the World Wide Web and urged me to use it. Unimpressed, I returned to my Super Nintendo.

My roommate tried to get me to join Facebook as a senior in college. I remember thinking, This is dumb. Who'll use it?

In 2011, my best friend tried to convince me to buy bitcoin and I laughed.

Heck, a couple of years ago I had to buy a new car, and I never even considered buying something that didn’t require fossilized dinosaur bones.

I'm no visionary. I don't anticipate the future. I focus on the present.

This tendency makes me a problem-solving entrepreneur. I identify entrepreneurial opportunities by spotting flaws and/or inefficiencies in the world and devising solutions.

There are other ways to find business opportunities. Visionary entrepreneurs also exist. I don't mean visionary in the hyperbolic sense that implies world-changing impact. I mean visionary as an entrepreneur who identifies future technological shifts that will change how people work and live and create new markets.

Problem-solving and visionary entrepreneurs are equally good. But the two approaches to building companies are very different. Knowing the type of entrepreneur you are will help you build a startup that fits your worldview.

What is the distinction?

Let's use some simple hypotheticals to compare problem-solving and visionary entrepreneurship.

Imagine a city office building without nearby restaurants. Those office workers love to eat. Sometimes they'd rather eat out than pack a lunch. As an entrepreneur, you can solve the lack of nearby restaurants. You'd open a restaurant near that office, say a pizza parlor, and get customers because you solved the lack of nearby restaurants. Problem-solving entrepreneurship.

Imagine a new office building in a developing area with no residents or workers. In this scenario, a large office building is coming. The workers will need to eat then. As a visionary entrepreneur, you're excited about the new market and decide to open a pizzeria near the construction to meet demand.

Both possibilities involve the same product. You opened a pizzeria. How you launched that pizza restaurant and what will affect its success are different.

Why is the distinction important?

Let's say you opened a pizzeria near an office. You'll probably get customers. Because people are nearby and demand isn't being met, someone from a nearby building will stop in within the first few days of your pizzeria's grand opening. This makes solving the problem relatively risk-free. You'll get customers unless you're a fool.

But because the market you're targeting existed before you entered it, you're not guaranteed success. People in that market have already worked around the lack of nearby restaurants. Those office workers are used to bringing their own lunches. Why should your restaurant change their habits? Even when they eat out, they're used to traveling far. They've likely developed pizza preferences.

To be successful with your problem-solving startup, you must convince consumers to change their behavior, which is difficult.

Compare that with opening a pizza restaurant near a construction site. Once the building opens, workers won't have many preferences or standardized food-getting practices. Your pizza restaurant can become the incumbent quickly. You'll be the first restaurant in the area, so you'll gain a devoted following that makes your food a routine.

Great, right? It's easier than changing people's behavior. But the benefit comes with a risk. Opening a pizza restaurant near a construction site exposes you to future risk. What if the builders run out of money? What if no one moves in? What if the building's occupants are the National Association of Pizza Haters? Then you've opened a pizza restaurant next to pizza haters.

Which kind of businessperson are you?

This isn't to say one type of entrepreneur is better than another. Each type of entrepreneurship requires different skills.

As my simple examples show, a problem-solving entrepreneur must operate in markets with established behaviors and habits. To be successful, you must be able to teach a market a new way of doing things.

Conversely, the challenge of being a visionary entrepreneur is that you have to be good at predicting the future and getting in front of that future before other people.

Both are difficult in different ways. So, smart entrepreneurs don't just chase opportunities. Smart entrepreneurs pursue opportunities that match their skill sets.


Clive Thompson

3 years ago

Small Pieces of Code That Revolutionized the World

A few lines of code can have global significance.

Ethan Zuckerman invented the pop-up ad in 1997.

He was working for Tripod.com, an online service that let people make little web pages for free. Tripod offered advertising to make money. Advertisers didn't enjoy seeing their advertising next to filthy content, like a user's anal sex website.

Zuckerman's boss wanted a solution. Wasn't there a way to move the ads away from user-generated content?

Zuckerman's fix: when you visited a Tripod page, it spawned a separate pop-up window containing the ad. That way, the ad wasn't officially tied to any user page. It just floated onscreen.

Here’s the thing, though: Zuckerman’s bit of JavaScript, that created the pop-up ad? It was incredibly short, a single line of code:

    window.open('http://tripod.com/navbar.html', "width=200, height=400, toolbar=no, scrollbars=no, resizable=no, target=_top");

The JavaScript tells the browser to open a 200-by-400-pixel window on top of any other open web pages, without a scrollbar or toolbar.

Simple yet harmful! Soon, commercial websites mimicked Zuckerman's concept, infesting the Internet with pop-up advertising. In the early 2000s, a coder for a download site told me that most of their revenue came from porn pop-up ads.

Pop-up ads are everywhere. You despise them. Hopefully, your browser blocks them.

Zuckerman wrote a single line of code that made the world worse.

I read Zuckerman's story in You Are Not Expected to Understand This: How 26 Lines of Code Changed the World. Torie Bosch compiled this humorous anthology of short essays about code that tipped the world.

Most of these samples are quite short. Pop-cultural preconceptions about coding say that important code is vast and expansive. Hollywood depicts programmers as blurs spouting out Niagaras of code. Google's success was formerly attributed to its 2 billion lines of code.

It's usually not true. Google's original breakthrough, the piece of code that propelled Google above its search-engine counterparts, was its PageRank algorithm, which determined a web page's value based on how many other pages linked to it and the quality of those linking pages. People have written their own Python versions; it's only a few dozen lines.
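To give a feel for how short such a version can be, here is a minimal Python sketch of the idea described above. It is not Google's code: the function name `pagerank`, the damping factor of 0.85, the fixed iteration count, and the four-page `toy_web` are all assumptions made up for this illustration.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Rank pages by who links to them.

    links maps each page to the list of pages it links to.
    damping and iterations are assumed, commonly used defaults.
    """
    pages = set(links)
    for targets in links.values():
        pages.update(targets)
    n = len(pages)

    # Start with every page equally important.
    ranks = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        # Every page gets a small baseline share.
        new_ranks = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page passes its rank on to the pages it links to,
                # so a link from a high-ranked page is worth more.
                share = damping * ranks[page] / len(targets)
                for target in targets:
                    new_ranks[target] += share
            else:
                # A page with no outgoing links spreads its rank evenly.
                share = damping * ranks[page] / n
                for other in pages:
                    new_ranks[other] += share
        ranks = new_ranks
    return ranks


# Hypothetical four-page web: three pages link to "c", nothing links to "d".
toy_web = {"a": ["b", "c"], "b": ["c"], "c": ["a"], "d": ["c"]}
ranks = pagerank(toy_web)
```

On this toy graph, page `c` comes out on top because three pages link to it, and `d` comes out last because nothing links to it.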

Google's operations, like any large tech company's, comprise thousands of procedures. So their code base grows. The most impactful code can be brief.

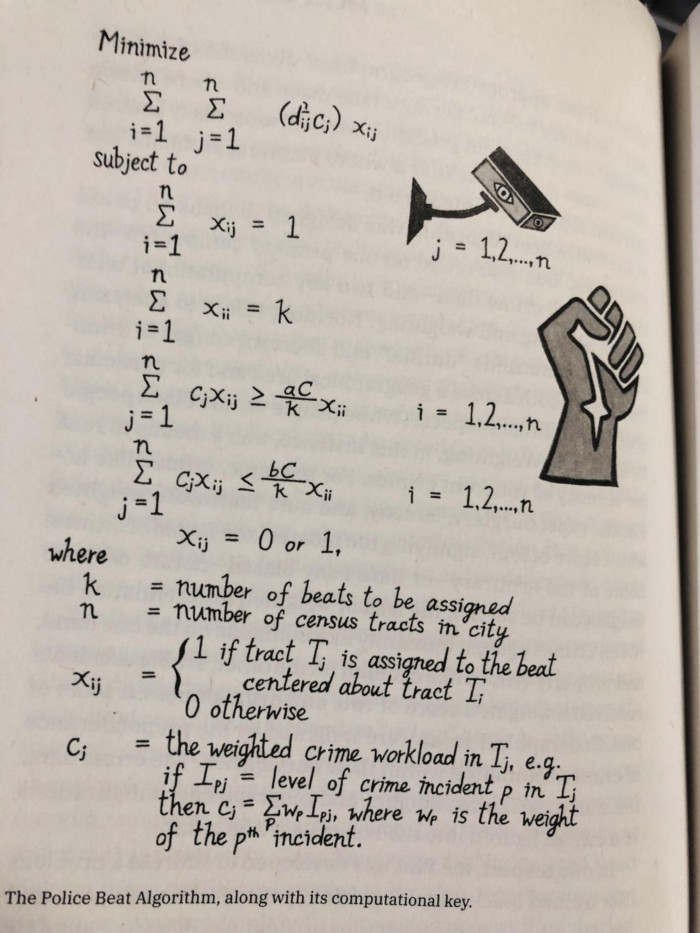

The examples are fascinating and wide-ranging, so read the whole book (or give it to nerds as a present). Charlton McIlwain wrote a chapter on the police beat algorithm developed in the late 1960s to anticipate crime hotspots so law enforcement could dispatch more officers there. It created a racial feedback loop. Since poor Black neighborhoods were already overpoliced compared to white ones, the algorithm directed more policing there, resulting in more arrests, which convinced it to send more police; rinse and repeat.

Kelly Chudler's illustration of the police-beat algorithm, from You Are Not Expected To Understand This.

Even shorter code changed the world: the tracking pixel.

Lily Hay Newman's chapter on tracking pixels says you probably interact with this code every day. It's a snippet of HTML that embeds a single tiny pixel in an email. When I get an email containing a tracking pixel, it spies on me. Here's how: as soon as I open the email, my mail client requests the single-pixel image from the sender's server. The sender checks whether Clive's browser has requested that pixel, and so can tell when I opened the message.
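The sender's side of that exchange can be sketched in a few lines of Python. This is a toy model under stated assumptions, not any real mailing service's code: the `OpenTracker` class, its method names, the `https://tracker.example` address, and the recipient address are all hypothetical.

```python
import uuid


class OpenTracker:
    """Toy model of the sender's side of a tracking pixel (hypothetical)."""

    def __init__(self, base_url):
        self.base_url = base_url
        self.recipient_by_token = {}  # unique token -> recipient address
        self.opened = []              # recipients whose mail client fetched the pixel

    def pixel_tag(self, recipient):
        """Return an <img> tag whose URL is unique to this recipient."""
        token = uuid.uuid4().hex
        self.recipient_by_token[token] = recipient
        return f'<img src="{self.base_url}/{token}.gif" width="0" height="0">'

    def handle_request(self, path):
        """Called when a pixel URL is requested; record who opened the email."""
        filename = path.rsplit("/", 1)[-1]
        token = filename[:-4] if filename.endswith(".gif") else filename
        recipient = self.recipient_by_token.get(token)
        if recipient is not None:
            self.opened.append(recipient)
        return recipient


tracker = OpenTracker("https://tracker.example")
tag = tracker.pixel_tag("reader@example.com")

# Simulate the mail client fetching the image when the message is opened.
pixel_url = tag.split('src="')[1].split('"')[0]
who = tracker.handle_request(pixel_url)
```

Because each recipient gets a different image URL, a single request for that URL is enough to tell the sender which message was opened.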

Adding a tracking pixel to an email is easy:

    <img src="URL LINKING TO THE PIXEL ONLINE" width="0" height="0">

An older example: Ellen R. Stofan and Nick Partridge wrote a chapter on Apollo 11's lunar module bailout code. This bailout code operated on the lunar module's tiny on-board computer and was designed to prioritize: If the computer grew overloaded, it would discard all but the most vital work.

When the lunar module approached the moon, the computer became overloaded. The bailout code shut down anything non-essential to landing the module. It shut down certain lunar module display systems, scaring the astronauts. The module landed safely.
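In modern terms, the prioritizing behavior described above looks roughly like the Python sketch below. It is only an analogy for the idea of shedding non-vital work under overload, not a translation of the Apollo code: the `Task` fields, the `bailout` function, the task names, and the capacity numbers are all invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    cost: int     # share of the computer's capacity the task needs (made-up units)
    vital: bool   # True if the task is essential to landing


def bailout(tasks, capacity):
    """If the tasks overload the computer, keep only the vital ones."""
    total = sum(task.cost for task in tasks)
    if total <= capacity:
        return list(tasks), []  # no overload: nothing is dropped
    kept = [task for task in tasks if task.vital]
    dropped = [task for task in tasks if not task.vital]
    return kept, dropped


# Hypothetical workload that adds up to more than the computer can handle.
tasks = [
    Task("landing guidance", cost=70, vital=True),
    Task("display update", cost=25, vital=False),
    Task("extra polling", cost=20, vital=False),
]
kept, dropped = bailout(tasks, capacity=100)
```

Here the three tasks need 115 units against a capacity of 100, so only the landing guidance survives and the display work is dropped, which mirrors the displays going dark during the descent.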

The code runs to 22 lines:

    POODOO    INHINT
              CA      Q
              TS      ALMCADR
              TC      BANKCALL
              CADR    VAC5STOR    # STORE ERASABLES FOR DEBUGGING PURPOSES.
              INDEX   ALMCADR
              CAF     0
    ABORT2    TC      BORTENT
    OCT77770  OCT     77770       # DONT MOVE
              CA      V37FLBIT    # IS AVERAGE G ON
              MASK    FLAGWRD7
              CCS     A
              TC      WHIMPER -1  # YES. DONT DO POODOO. DO BAILOUT.
              TC      DOWNFLAG
              ADRES   STATEFLG
              TC      DOWNFLAG
              ADRES   REINTFLG
              TC      DOWNFLAG
              ADRES   NODOFLAG
              TC      BANKCALL
              CADR    MR.KLEAN
              TC      WHIMPER

This fun book is worth reading.

I'm a contributor to the New York Times Magazine, Wired, and Mother Jones. I've also written Coders: The Making of a New Tribe and the Remaking of the World and Smarter Than You Think: How Technology is Changing Our Minds. Twitter and Instagram: @pomeranian99; Mastodon: @clive@saturation.social.

Kyle Planck

3 years ago

The chronicles of monkeypox.

or, how I spread monkeypox and got it myself.

This story contains nsfw (not safe for wife) stuff and shouldn't be read if you're under 18 or think I'm a newborn angel. After the opening, it's broken into three sections: a chronological explanation of my disease course, my ideas, and what I plan to do next.

Your journey awaits.

As early as mid-may, I was waltzing around the lab talking about monkeypox, a rare tropical disease with an inaccurate name. Monkeys are not its primary animal reservoir. It caused an outbreak among men who have sex with men across Europe, with unprecedented levels of person-to-person transmission. European health authorities speculated that the virus spread at raves and parties and was easily transferred through intimate, mainly sexual, contact. I had already read the nejm article about the first confirmed monkeypox patient in the u.s. and shared the photos on social media so people knew what to look for. The cdc information page only included 4 photographs of monkeypox lesions that looked like they were captured on a motorola razr.

I warned my ex-boyfriend about monkeypox. "Monkeypox?" he responded.

"Mom, I'm worried about monkeypox." "What's monkeypox?"

"I'm scared about monkeypox," I told my therapist. "What's monkeypox?"

Was I alone? A few science gays on Twitter made me feel like I wasn't overreacting.

This information got my gay head turning. The incubation period for the sickness is weeks. Many of my social media contacts are traveling to Europe this summer. What is pride? Travel, parties, and sex. Many people may become infected before attending these activities. Monkeypox will affect the lgbtq+ community.

Being right always stinks. My young scientist brain was right, though. Someone who saw this coming is one of the early victims. I'll talk about my feelings publicly, and trust me, I have many concerning what's occurring.

Part 1: the specifics.

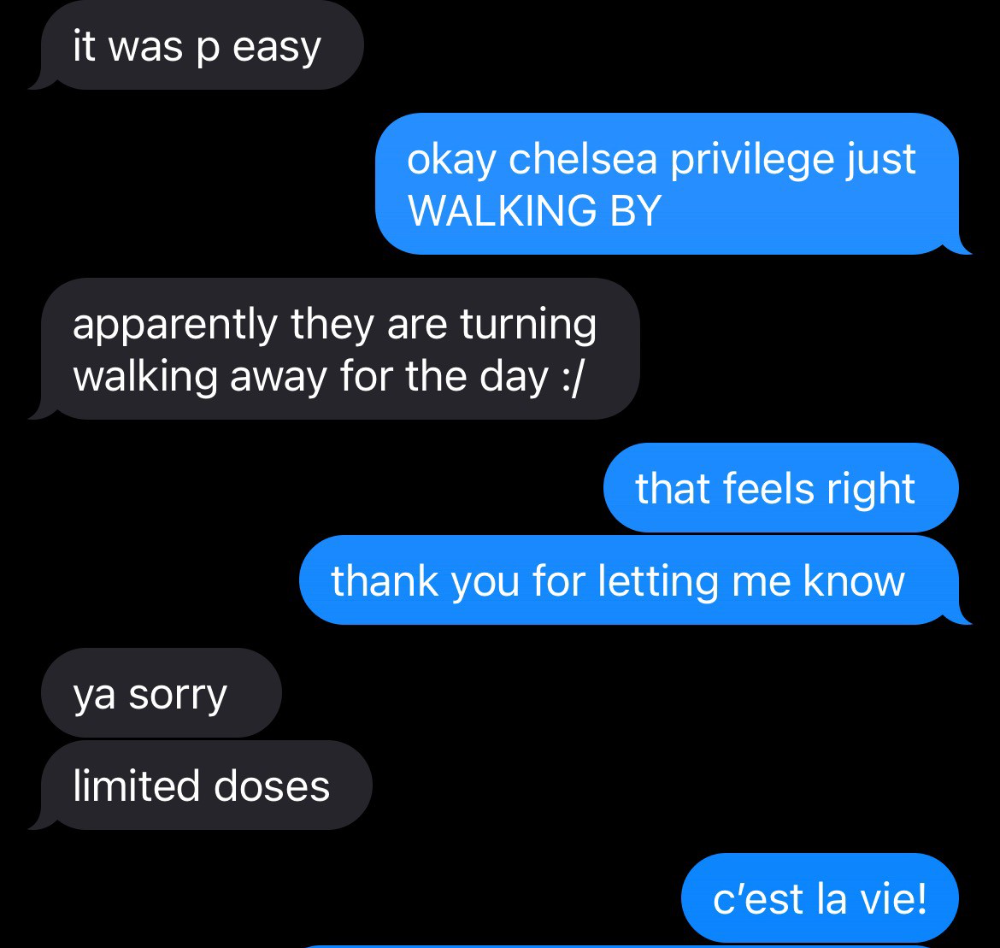

Wednesday nights are never smart but always entertaining. I didn't wake up until noon on june 23 and saw gay twitter blazing. Without warning, the nyc department of health announced a pop-up monkeypox immunization station in chelsea. It would be open on select days from 11am to 7pm. Walk-ins were welcome, but appointments were preferred. I tried to arrange an appointment after rubbing my eyes, but they were all taken. I got out of bed, washed my face, brushed my teeth, and put on short shorts because I wanted to get a walk-in dose and show off my legs. I got a 20-oz. cold brew on the way to the train and texted a chelsea-based acquaintance for help.

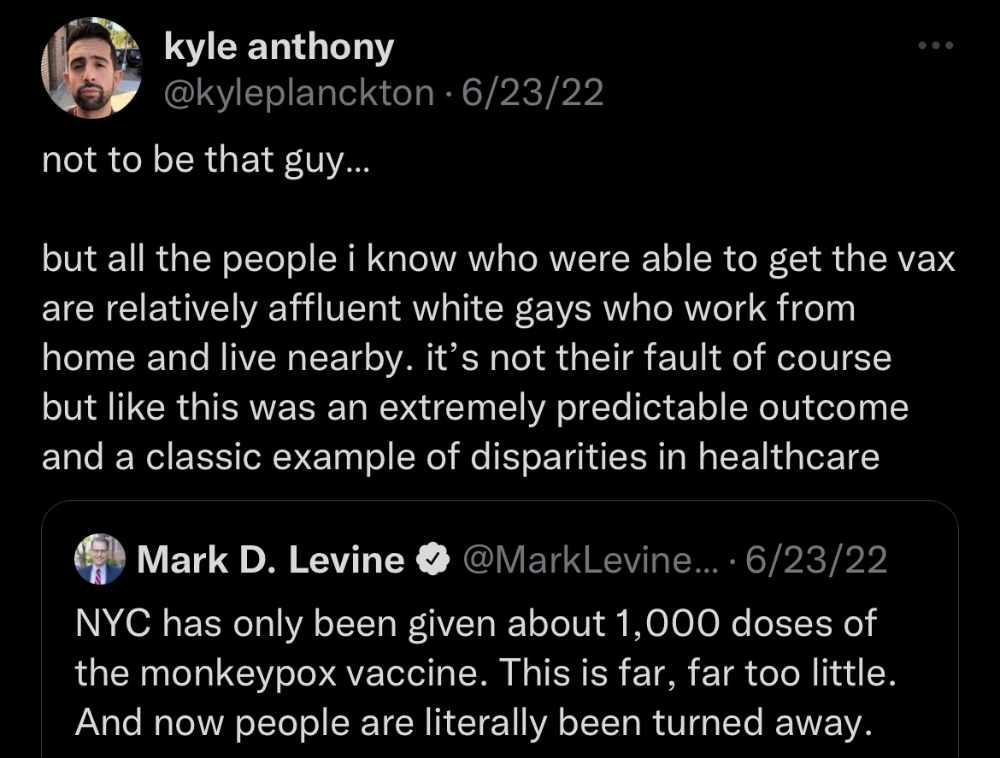

The clinic closed at 2pm. No more doses. Hundreds had queued up. The government initially gave them only 1,000 doses. For a city with 500,000 LGBT people, c'mon. What more could I do? I was upset by how things were handled. The evidence speaks for itself.

I decided to seek an appointment when additional doses were available and continued my weekend. I was celebrating nyc pride with pals. Fun! sex! *

The tuesday after that, I felt a little burn. This wasn't surprising because I'd been sexually active throughout the weekend, so I got an sti panel the next day. I expected to get results in a few days, take antibiotics, and move on.

Emerging germs had other intentions. Wednesday night, I felt sore, and thursday morning, I had a blazing temperature and had sweat through my bedding. I had fever, chills, and body-wide aches and pains for three days. I reached 102 degrees. I assumed I'd caught covid over pride weekend, but I tested negative for three days straight.

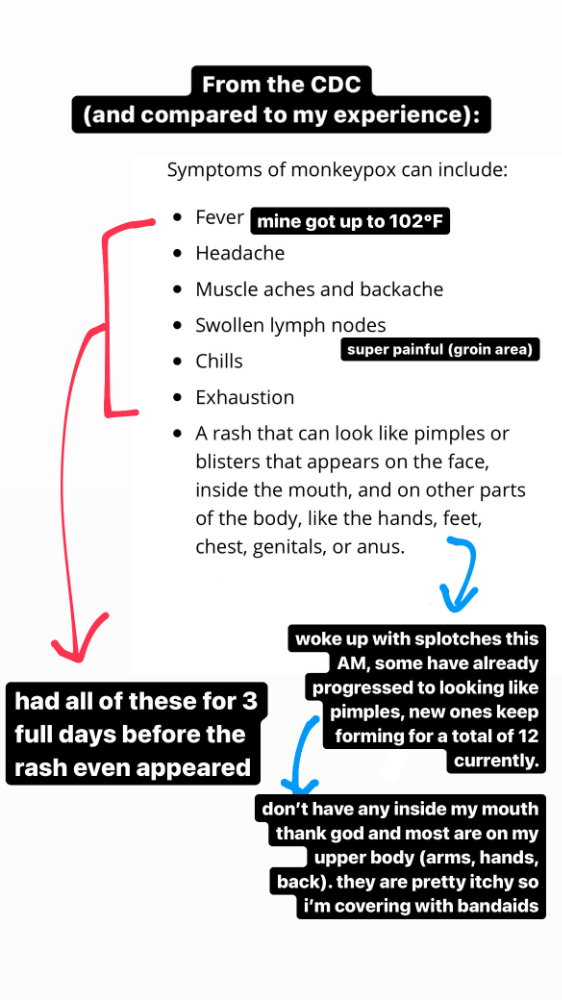

STDs don't usually induce fevers or other systemic symptoms, though if lymphogranuloma venereum advances, it can cause flu-like symptoms and swollen lymph nodes. I was suspicious and desperate for answers, so I researched monkeypox on the cdc website (for healthcare professionals). Much of what I saw on screen about monkeypox prodrome matched my symptoms. Multiple-day fever, headache, muscle aches, chills, tiredness, enlarged lymph nodes. Pox were lacking.

I told my doctor my concerns pre-medically. I'm occasionally annoying.

On saturday night, my fever broke and I felt better. Still burning, I was optimistic till sunday, when I woke up with five red splotches on my arms and fingertips.

As spots formed, burning became pain. I observed as spots developed on my body throughout the day. I had more than a dozen by the end of the day, and the early spots were pustular. I had monkeypox, as feared.

Fourth of July weekend limited my options. I'm well-connected in my school's infectious disease academic community, so I texted a coworker for advice. He agreed it was likely monkeypox and scheduled me for testing on tuesday.

nyc health could only perform 10 monkeypox tests every day. Before doctors could take swabs and send them in, each test had to be approved by the department. Some commercial labs can now perform monkeypox testing, but the backlog is huge. I still don't have a positive orthopoxvirus test five days after my test.* My 12-day-old case may not be included in the official monkeypox tally. This outbreak is far wider than we first thought, so I'm trying to spread the information and help contain it.

*Update, 7/11: I have orthopoxvirus.

I spent all day in the bathtub because of the agony. Warm lavender epsom salts helped me feel better. I can't stand lavender anymore. I brought my laptop into the bathroom and watched everything everywhere all at once (2022). If my ex and I hadn't recently broken up, I wouldn't have monkeypox. All of these things made me cry, and I sat in the bathtub on the 4th of July sobbing. I thought, Is this it? I felt like Kristen Wiig in Bridesmaids (2011). I'm a flop. From here, things can only improve.

Later that night, I wore a mask and went to my roof to see the fireworks. Even though I don't like fireworks, there was something wonderful about them this year: the colors, how they illuminated the black surfaces around me, and their transient beauty. Joyful moments rarely linger long in our life. We must enjoy them now.

Several roofs away, my neighbors gathered. "Happy 4th!" I heard a woman yell. Why is this godforsaken country so happy? I thought. Instead of being rude, I replied in kind. I didn't tell them I had monkeypox. I thought that would kill the mood.

By the time I went to the hospital the next day to get my lesions swabbed, wearing long sleeves, pants, and a mask, they looked like this:

I had 30 lesions on my arms, hands, stomach, back, legs, buttcheeks, face, scalp, and right eyebrow. I had some in my mouth, gums, and throat. Current medical thought is that lesions on mucous membranes cause discomfort in sensitive places. Internal lesions are a new feature of this outbreak of monkeypox. Despite being unattractive, the other sores weren't unpleasant or bothersome.

I had a bacterial sti along with the pox. Who knows if that would've created symptoms (often it doesn't), but different infections can happen at once. My care team remembered that having an sti doesn't rule out monkeypox. doxycycline rocks!

The coworker who introduced me to testing also offered me his home. We share a restroom, and monkeypox can be spread through surfaces. (Being a dna virus gives it environmental hardiness that rna viruses like sars-cov-2 lack.) I disinfected our bathroom after every usage, but I was apprehensive. My friend's place has a guest room and second bathroom, so no cross-contamination. It was the ideal monkeypox isolation environment, so I accepted his offer and am writing this piece there. I don't know what I would have done without his hospitality and attention.

The next day, I started a 14-day course of tecovirimat, or tpoxx, an antiviral developed to treat smallpox. Smallpox has been eradicated worldwide since the 1980s but remains a bioterrorism concern. Tecovirimat has a unique, orthopoxvirus-specific mechanism of action, which limits side effects to headache and nausea. It hasn't been used in many people, so the cdc is encouraging patients who take it for monkeypox to track their disease and symptoms.

Tpoxx's oral absorption requires a fatty meal. The hospital ordered me to take the medication after a 600-calorie, 25-gram-fat meal every 12 hours. The coordinator joked, "Don't diet for the next two weeks." I wanted to get peanut butter delivered, but jif is recalling their supply due to salmonella. Please give pathogens a break. I got almond butter.

Tpoxx study enrollment was documented. After signing consent documents, my lesions were photographed and measured during a complete physical exam. I got bloodwork to assess my health. My medication delivery was precise; every step must be accounted for. I got a two-week supply and started taking it that night. I rewarded myself with McDonald's. I'd been hungry for a week. I was also prescribed ketorolac (aka toradol), a stronger ibuprofen, for my discomfort.

I thought tpoxx was a wonder medicine by day two of treatment. Early lesions looked like this.

However, they vanished. The three largest lesions on my back flattened and practically disappeared into my skin. Some pustular lesions were diminishing. Tpoxx+toradol has helped me sleep, focus, and feel human again. I'm down to twice-daily baths and feeling hungrier than ever in this illness. On day five of tpoxx, some of the lesions look like this:

I have a ways to go. We have to assume I'll be contagious until the last of my patches scabs over, falls off, and sprouts new skin. There's no way to tell. After a week and a half of tremendous pain and psychological stress, any news is good news. I'm grateful for my slow but steady progress.

Part 2: the rant.

Being close to yet not in the medical world is interesting. It lets me know a lot about it without being persuaded by my involvement. Doctors identify and treat patients using a tool called differential diagnosis.

A doctor interviews a patient to learn about them and their symptoms. More is better. Doctors may ask, "Have you traveled recently? How's your sex life? Do you have pets? What's your preferred streaming service?" (No, really. Hbomax is the right answer.) After the inquisition, the doctor will complete a physical exam ranging from looking in your eyes, ears, and throat to a thorough full-body workup.

After collecting data, the doctor makes a mental (or physical) list of all the conceivable illnesses that could cause or explain the patient's symptoms. This list is the differential diagnosis. After establishing the differential, the clinician can start eliminating options. The doctor will usually order tests, such as nucleic acid tests on swab samples or bloodwork, to learn more. This helps eliminate conditions from the differential or boosts a condition's likelihood. In an ideal circumstance, the doctor can eliminate all but one cause of your symptoms, leaving your formal diagnosis. Once diagnosed, treatment can begin. yay! Love medicine.

My symptoms two weeks ago did not suggest monkeypox. Fever, aches, fatigue, and swollen lymph nodes can be caused by many things. My scandalous symptoms weren't linked to common ones. My case shows the importance of diversity and representation in healthcare. My doctor isn't gay, but he provides culturally sensitive care. I'd heard about monkeypox as a gay man in New York. I was hyper-aware of it and had heard of friends of friends who had contracted it the week before, even though the official case count in the US was 40. My physicians weren't concerned, but I was. How would it appear on his mental differential if it wasn't on his radar? Mental differential rhymes! I'll trademark it to prevent theft. differential!

I was in a rare position to recognize my condition and advocate for myself. I study infections. I'd spent months researching monkeypox. I work at a university where I rub shoulders with some of the country's greatest doctors. I'm a gay dude who follows nyc queer social networks online. All of these variables positioned me to think, "Maybe this is monkeypox," and to explain why.

This outbreak is another example of privilege at work. The brokenness of our healthcare system is once again exposed by the inequities produced by the vaccination rollout and the existence of people like myself who can pull strings owing to their line of work. I can't cure this situation on my own, but I can be a strong voice demanding the government do a better job addressing the outbreak and giving resources and advice to everyone I can.

lgbtqia+ community members' support has always impressed me in new york. The queer community has watched out for me and supported me in ways I never dreamed were possible.

Queer individuals are there for each other when societal structures fail. People went to the internet on the first day of the vaccine rollout to share appointment information and the vaccine clinic's message. Twitter timelines were more effective than marketing campaigns. Contrary to widespread anti-vaccine sentiment, the LGBT community was eager to protect themselves. Smallpox vaccination? sure. gimme. whether I'm safe. I credit the community's sex positivity. Many people are used to talking about STDs, so there's a reduced barrier to saying, "I think I have something, you should be on the watch too," and taking steps to protect our health.

Once I got monkeypox, I posted on Twitter and Instagram. Besides fueling my main character syndrome, it made me feel like I wasn't alone. Within hours, a dc-based friend told me he had monkeypox too. He told me about his experience and gave me ideas for managing the discomfort. I can't imagine life without him.

My buddy and colleague organized my medical care and let me remain in his home. His and his husband's friendliness and attention made a world of difference in my recovery. All of my friends and family who helped me, whether by venmo, doordash, or moral support, made me feel cared about. I don't deserve the amazing people in my life.

Finally, I think of everyone who commented on my social media posts regarding my journey. Friends from all sectors of my life and all sexualities have written me well wishes and complimented me for my vulnerability, but I feel the most gravitas from fellow lgbtq+ persons. They're learning what to look for. They're learning where to go if they get sick. They're learning self-advocacy. I'm another link in our network of caretaking. I've been cared for, so I want to do the same for others. Community and knowledge are powerful.

You're probably wondering where the diatribe is. You may think I'm just gushing about my loved ones, and you'd be right. I say all that because just because the queer community can take care of itself doesn't mean it should have to.

Even when caused by the same pathogen, comparing health crises is risky. Aids is unlike covid-19 or monkeypox, yet all were caused by poorly understood viruses. The lgbtq+ community has a history of self-medicating. Queer people (and their supporters) have led the charge to protect themselves throughout history when the government refused. Surreal to experience this in real time.

First, vaccination access is a government failure. The strategic national stockpile contains tens of thousands of doses of jynneos, the newest fda-approved smallpox vaccine, and millions of doses of acam2000, an older vaccine for immunocompetent populations. Despite being a monkeypox hotspot and international crossroads, new york has only received 7,000 doses of the jynneos vaccine. Vaccine appointments are booked within minutes. It's turning into the Hunger Games, which bothers me.

Second, I think the government failed to recognize the severity of the European monkeypox outbreak. We saw reports from abroad in May, but the first vaccines weren't available until June. Why was I, a 26-year-old pharmacology grad student, able to see a monkeypox problem in Europe when the U.S. public health agency was not? Or was there too much bureaucracy and politicking, delaying action?

The lack of testing infrastructure for a known virus with existing vaccines and therapies is appalling. More testing would have helped reveal the problem's breadth. Many gay men, including myself, didn't behave as if monkeypox was a significant threat because there were only a dozen reported cases across the country. Our underestimation of the issue, spurred by a narrative of few infections, was a huge mistake.

Public health officials' response to infectious diseases frustrates me. A wait-and-see approach to infectious diseases is unsatisfactory. By the time a sick person is identified, they've exposed and maybe infected numerous others. Vaccinating susceptible populations before a disease becomes entrenched prevents disease. The CDC could operate this way. When it was easier, they didn't control or prevent monkeypox. When will we learn? Sometimes I fear never. Emerging viral infections are a menace in the era of climate change and globalization, and I fear our government will repeat the same mistakes. I don't work at the CDC, so I don't know what goes on inside it. As a scientist, a gay man, and a citizen of this country, I feel confident declaring that the CDC has not done enough about monkeypox. Will they do enough about monkeypox? The Strategic National Stockpile is supposed to be able to respond to a bioterrorism disaster in 12 hours. After this outbreak, I'm skeptical.

It's easy to criticize the CDC, but they're not solely to blame. Underfunding public health services, especially the CDC, is another way our government fails to safeguard its citizens. I may gripe about the vaccination rollout all I want, but local health departments are doing their best with limited resources. They may not have enough workers to keep up with demand and run a contact-tracing program. Since my orthopoxvirus test hasn't come back positive yet, the DOH hasn't asked about my close contacts. By the time they do, my illness will be two weeks old, too late to do anything productive. That's not their fault. They're functioning in a broken system that's underfunded for the work it does.

*Update, 7/11: I tested positive for orthopoxvirus.

Monkeypox is slow, so I've had time to contemplate. Now that I'm better, I'm angry, furious, and sad. I want to help. I wish to spare others my pain. This was preventable and, I hope, solvable. HOW?

Third, the duty.

Family, especially chosen family, helps each other. So many people have helped me throughout this difficult time. How can I give back? I have ideas.

1. Education. I've already started doing this by writing incredibly detailed posts on Instagram about my physical illness and my thoughts on the entire situation, by tweeting, and by writing this essay. I'll keep doing it even if people start to resent me! It's crucial! On my Instagram profile (@kyleplanckton), you can find a story highlight with links to all of my bizarre yet educational posts.

2. Resources. I've forwarded the contact information for my institution's infectious diseases clinic to several folks who will hopefully be able to get TPOXX under the expanded use policy. Through my social networks, I've learned of similar institutions. I've also shared crowdsourced resources about symptom relief and vaccine appointment availability on social media. DM me or see my Instagram highlight for more.

3. Community action. During my illness, my friends' willingness to help me has meant the most. It was nice to know I had folks on my side. One of my pals (thanks, Kenny) snagged me a McGriddle this morning when Seamless canceled my order. This situation has me thinking about ways to help people isolating with monkeypox. A two-week isolation period is financially damaging for many hourly workers. Some governments required paid sick leave for COVID-19 to allow employees to recover and prevent spread. No comparable program exists for monkeypox, and none seems to be planned anytime soon.

I want to help monkeypox patients in difficult financial situations. I'm willing to pick up and deliver groceries or fund meals and expenses for sick neighbors. I've seen several GoFundMe campaigns, but I wish there were a centralized mechanism to link those in need with those who can help. Please contact me if you have experience with mutual aid organizations. I hope we can start this soon.

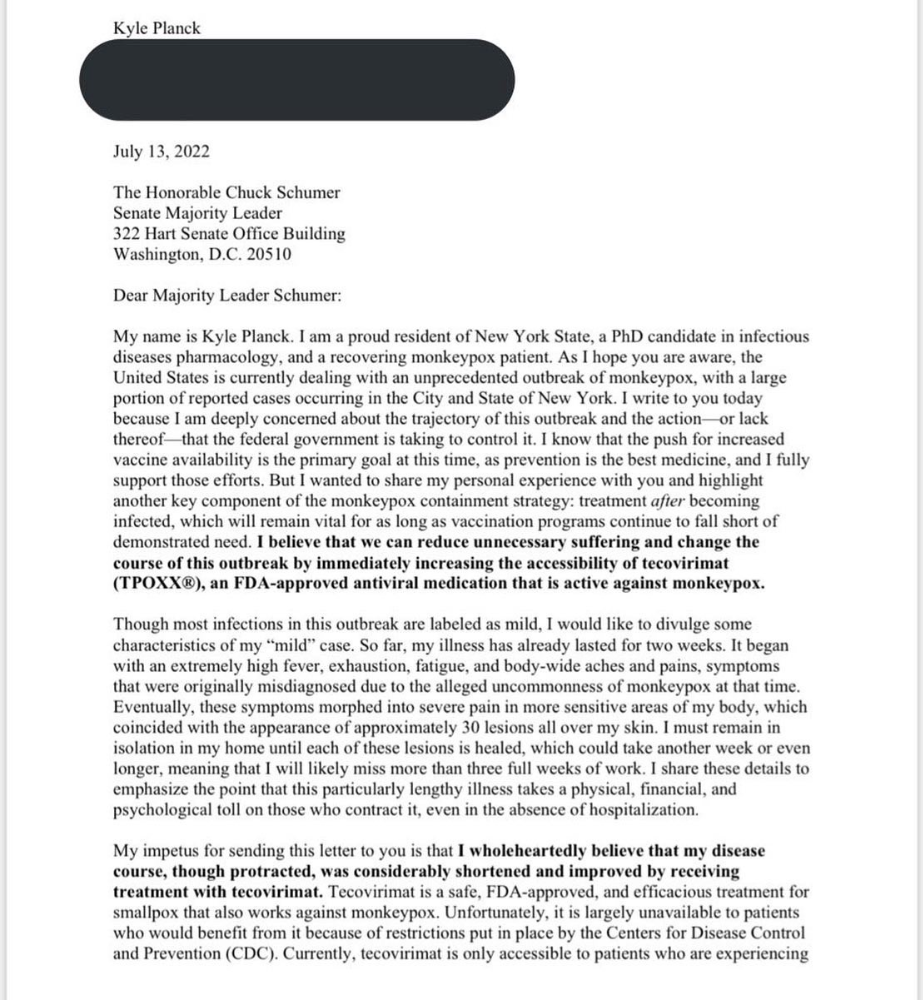

4. Lobbying. Personal narratives are powerful. My narrative is only one, but I think it's compelling. Over the next day or so, I'll write to local, state, and federal officials about monkeypox. I wanted a vaccine but couldn't get one, and I feel TPOXX helped my illness. As a pharmacologist-in-training, I believe collecting data on a novel medicine is important, and there are ethical problems with making a drug with limited patient data broadly available. Even so, many folks I know can't get TPOXX because of red tape and a lack of contacts. People shouldn't have to go to an Ivy League hospital to obtain the best care. Based on my experience and other people's stories, I believe TPOXX can drastically lessen monkeypox patients' pain and potentially curb transmission chains if administered early enough. This outbreak is manageable. It's not too late if we use all the tools we have (diagnostics, vaccines, treatment).

*UPDATE 7/15: I submitted the following letter to Chuck Schumer and Kirsten Gillibrand. I've addressed identical letters to local, state, and federal officials, including the CDC and HHS.

I hope to join RESPND-MI, an LGBTQ+ community-led assessment of monkeypox symptoms and networks in NYC. Visit their website to learn more and give to this community-based charity.

How I got monkeypox is a mystery. I got it through physical contact during Pride, but I'm not sure which interaction it was. This outbreak will expand unless leaders act quickly. Until then, I'll keep educating and connecting people to care in my community.

Despite my misgivings, I see some reasons for optimism. Health department social media efforts are underway. During the outbreak, the CDC has provided nonjudgmental suggestions for safer social and sexual activity. There's more information online about the disease course, including how to request TPOXX for patients. These materials can help people advocate for themselves if they're sick. Importantly, gay men are paying attention when monkeypox comes up online and IRL. They're learning. They're taking it seriously.

The government has a terrible track record with LGBTQ+ health issues, and they're not off to a good start this time. I hope this time will be better. If I can help even one person, I will.

Thanks for reading, supporting me, and spreading awareness about the 2022 monkeypox outbreak. My DMs are open if you want info or resources, have questions, or just want to chat.

be well, y'all

kyle

Al Anany

3 years ago

Notion AI Might Destroy Grammarly and Jasper

The trick Notion could use is simply Facebook-ing the hell out of them.

*Time travel to fifteen years ago.* Future-Me: “Hey! What are you up to?” Old-Me: “I am proofreading an article. It’s taking a few hours, but I will be done soon.” Future-Me: “You know, in the future, you will be using a Google Chrome plugin called Grammarly that will help you easily proofread articles in half that time.” Old-Me: “What is… Google Chrome?” Future-Me: “Gosh…”

I love Grammarly. It’s one of those products whose effects I personally feel. I mean, SpaceX is a great company. But I am not a rocket writing this article in space (or am I?)…

No, I’m not. So I don’t personally feel a connection to SpaceX. If the company collapsed tomorrow morning, I might write about it. But I would have zero emotions regarding it.

Yet, if Grammarly fails tomorrow, I will feel 1% emotionally distressed. So looking at the title of this article, you’d realize that I am betting against them. This is how much I believe in the business model that’s taking over the world: Notion’s.

Notion: How frequently do you go through your notes?

Grammarly is everywhere, which helps its success. Grammarly is available when you update LinkedIn on Chrome. Grammarly prevents errors in Google Docs.

The real focus of the previous paragraph may not be obvious. It isn't Grammarly. I had to be using Chrome, writing a Google Doc or a LinkedIn update, in the first place. Without that base, Grammarly would be useless.

So, welcome to this business essay.

Grammarly solves one problem.

Jasper solves another.

Notion is supposed to contain your entire life.

It's the new Google Chrome, only offline. It's an all-purpose notepad (in the near future).

How should I start my blog? Write it in Notion.

A LinkedIn update? Write it in Notion, and it might be automatically posted there (with a little help from another app).

An advanced thesis? You can brainstorm it with your coworkers.

This sounds like a great ad! Still, I won't cry if Notion dies tomorrow.

Let me restate these points to illustrate why I think Notion could kill Grammarly and Jasper.

Notion is a fantastic app that incubates your work.

Smartly, they began with note-taking.

Ideally, all your work will live in Notion. For now, Grammarly and Jasper are still must-haves.

Grammarly will proofread what you type, while Jasper helps with copywriting and AI image generation.

They're the best, so you'll need them. Correct? Nah.

Notion could Facebook the hell out of them.

Notion: “Hi Grammarly, do you want to sell your product to us?” Grammarly: “Dude, we are more valuable than you are. We’ve even raised $400m, while you raised $342m. Our last valuation round put us at $13 billion, while yours put you at $10 billion. Go to hell.” Notion: “Okay, we’ll speak again in five years.”

Notion: “Jasper, wanna sell?” Jasper: “Nah, we’re deep into AI and the field. You can’t compete with our people.” Notion: “How about you either sell or you turn into a Snapchat case?” Jasper: “…”

Notion is your home. Grammarly is your neighbor. Jasper is your running track.

What if you grew enough vegetables in your backyard to avoid the supermarket? No more visits.

What if your home had a beautiful treadmill? You wouldn't go out to run as much (I disagree with my own metaphor). (You get it.)

It's Facebooking. Instagram Stories reduced your Snapchat usage. Notion will reduce your need to use Grammarly.

The Final Piece of the AI Puzzle

Let's talk about Notion first, since you've probably read about it everywhere.

They raised about $343 million, as I mentioned earlier, and bought four businesses.

According to Forbes, Notion had more than 20 million users by 2022, up from 4 million in 2020.

If raising $1.8 billion were impressive in itself, FTX wouldn't have fallen.

This article is about how the core product compares with the other two. Notion is an app you use all day long.

Notion has released Notion AI to support writers. It's early, so it's not as good as Jasper. But then-Jasper wasn't now-Jasper either. In five years, Notion AI will be different.

With hard work, they may build a Jasper-like writing assistant. They have the resources and the users.

At this point, it's all speculation. Jasper's copywriting is top-notch. Grammarly's proofreading is top-notch. But both businesses are constrained by where their users actually do their work.

If Notion's future business moves are strategic, they might become a blue ocean shark (or get acquired for an unbelievable amount).

I love business brain teasers, so tell me:

What do you think? Are you a frequent Notion user?

Do you disagree with my position? I enjoy hearing opposing viewpoints.

Ironically, I proofread this with Grammarly.