17 Google Secrets 99 Percent of People Don't Know

What can't Google do?

Seriously, nothing! Google rocks.

Google is a major player in online tools and services. We use it for everything, from research to entertainment.

Did I say entertain yourself?

Yes, with so many features and options, it can be difficult to fully utilize Google.

#1. Drive Google Mad

You can make Google's homepage dance if you want to be silly.

Just type “Google Gravity” into Google.com. Then select “I'm Feeling Lucky.”

See the page unstick before your eyes!

#2. Play With Google Image

Google isn't just for work.

So have fun with it!

You can play games right in your search results. When you need a break, google “Solitaire” or “Tic Tac Toe”.

#3. Do a Barrel Roll

Need a little more excitement in your life? Want to see Google dance?

Type “Do a barrel roll” into the Google search bar.

Then relax and watch your screen do a 360.

#4. No Internet? No issue!

This is a fun trick to use when you have no internet.

If your browser shows a “No Internet” page, simply press Space.

Boom!

We have dinosaurs! Now use arrow keys to save your pixelated T-Rex from extinction.

#5. Google Can Help

Play this Google coin flip game to see if you're lucky.

Enter “Flip a coin” into the search engine.

You'll see a coin-flipping animation that lands on heads or tails. Click to flip again.

#6. Think with Google

My favorite Google find so far is the “Think with Google” website.

Think with Google is a website that offers marketing insights, research, and case studies.

I highly recommend it to entrepreneurs, small business owners, and anyone interested in online marketing.

#7. Google Can Read Images!

This is a cool Google trick that few know about.

You can search for images by keyword or upload your own by clicking the camera icon on Google Images.

Google will then show you all of its similar images.

Caution: Only upload images you are comfortable sharing publicly.

#8. Modify the Google Logo!

Clicking on the “I'm Feeling Lucky” button on Google.com takes you to a random Google Doodle.

Throughout the year, Google creates Doodles to commemorate holidays, anniversaries, and other occasions.

#9. What is my IP?

Simply type “What is my IP” into Google to find out.

Your IP address will appear on the results page.

#10. Send a Self-Destructing Email With Gmail

Create a new message in Gmail. Find an icon that resembles a lock and a clock near the SEND button. That's where the Confidential Mode is.

By clicking it, you can set an expiration date for your email. Once that date passes, the recipient can no longer open the message.

#11. Blink, Google Blink!

This is a unique Google trick.

Type “blink HTML” into Google. The words “blink HTML” will appear and then disappear.

The text is displayed for a split second before being deleted.

To make this work, Google reads the HTML code and executes the “blink” command.

#12. The Answer To Everything

This is for all Douglas Adams fans.

The answer to life, the universe, and everything is 42, according to Google.

It's an allusion to Douglas Adams' The Hitchhiker's Guide to the Galaxy, in which the supercomputer Deep Thought calculates the answer to life, the universe, and everything.

#13. Google in 1998

It's a blast!

Type “Google in 1998” into Google, then click “I'm Feeling Lucky.”

You'll be taken to an old-school Google homepage.

It's a nostalgic trip for long-time Google users.

#14. Scholarships and Internships

Google can help you find college funding!

Type “scholarships” or “internships” into Google.

The number of results will surprise you.

#15. OK, Google. Dice!

To roll a die, simply type “Roll a die” into Google.

The results page shows a virtual die that you can click to roll.

#16. Google has secret codes!

Hit the nine squares on the right side of your Google homepage to go to My Account, then select Personal Info.

You can add your favorite language to the “General preferences for the web” tab.

#17. Google Terminal

You can feel like a true hacker.

Just type “Google Terminal” into Google.com, then click “I'm Feeling Lucky.”

Voila~!

You'll be taken to an old-school computer terminal-style page.

You can then type commands to see what happens.

Have you tried any of these activities? Tell me in the comments.


More on Productivity

Niharikaa Kaur Sodhi

3 years ago

The Only Paid Resources I Turn to as a Solopreneur

4 Pricey Tools That Are Valuable

I pay based on ROI (return on investment).

If a $20/month tool or $500 online course doubles my return, I'm in.

Investing helps me build wealth.
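The ROI rule above can be sketched as a quick calculation. This is only an illustration: the function names and the revenue figures are hypothetical, and "doubles my return" is read here as earning back at least twice what the tool costs.

```python
def roi(gain: float, cost: float) -> float:
    """Return on investment as a ratio: net gain divided by cost."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return (gain - cost) / cost


def worth_paying(gain: float, cost: float, min_multiple: float = 2.0) -> bool:
    """True if the purchase earns back at least `min_multiple` times its cost.

    The 2x default is an assumption based on the "doubles my return" rule.
    """
    return gain >= min_multiple * cost


if __name__ == "__main__":
    # Hypothetical: a $20/month tool that brings in $50/month.
    print(roi(50, 20))             # 1.5
    print(worth_paying(50, 20))    # True
    # Hypothetical: a $500 course that brings in $700.
    print(worth_paying(700, 500))  # False
```

Raising or lowering `min_multiple` is the knob to adjust if your own bar for "worth it" is different.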

Canva Pro

I initially refused to pay.

My course content needed updating a few months ago. My Google Docs text looked cleaner and more professional in Canva.

I've used it to make:

Product cover pages

eBook covers

Product page infographics

See my Google Sheets vs. Canva product page graph.

Google Sheets vs Canva

Yesterday, I used it to make a LinkedIn video thumbnail. It took less than 5 minutes and improved my video.

In 30 hours, the video had 39,000 views.

Here's more.

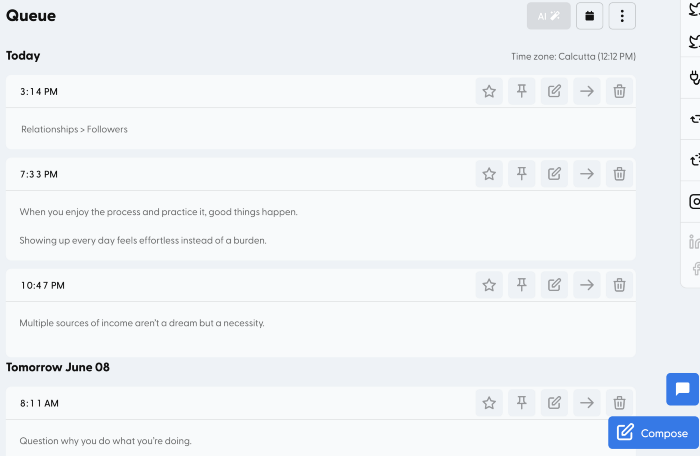

HypeFury

Hypefury rocks!

It builds my brand as I sleep. What else?

Because I'm traveling this weekend, I planned tweets for 10 days. It took me 80 minutes.

So while I travel or am absent, my content mill keeps producing.

Also I like:

Auto-DMs: I can reach hundreds of people. I use them to promote freebies; for instance, people leave an emoji comment to receive my checklist, and it lands in their DMs automatically.

Scheduled Retweets: By reappearing for a different time zone, they give my tweet a second chance.

It helps me save time and expand my following, so that's my favorite part.

It’s also super neat:

Zoom Pro

My course involves weekly and monthly calls for alumni.

Google Meet isn't great for group calls. The interface isn't great.

Zoom Pro is expensive, and the monthly payments suck, but it's necessary.

It gives my students a smooth experience.

Previously, we'd do 40-minute meetings and then reconvene.

Zoom's free edition limits group calls to 40 minutes.

That isn't a good experience in an online course that students pay hundreds of dollars for.

So I felt obligated to upgrade.

YouTube Premium

My laptop has an ad blocker.

I bought an iPad recently.

When you're self-employed and work from home, the line between the two blurs. My bed is only 5 steps away!

When I read or watched videos on my laptop, I'd slide into work mode. My only other option was to watch on my phone, which is awkward.

YouTube Premium handles it. No more advertisements, and I can listen on the move.

3 Expensive Tools That Aren't Valuable

Marketing strategies are sometimes designed to make you feel you need 38474 cool features when you don’t.

Certain tools are useless to me.

That depends on your needs. As a writer and creator, I get no return from them.

They could pay off in other jobs.

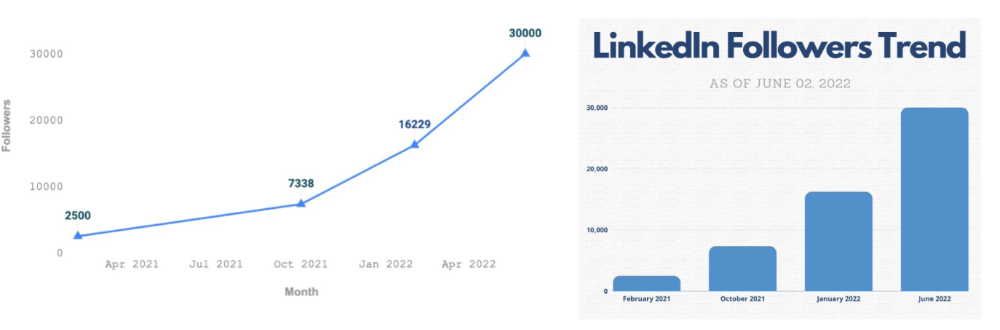

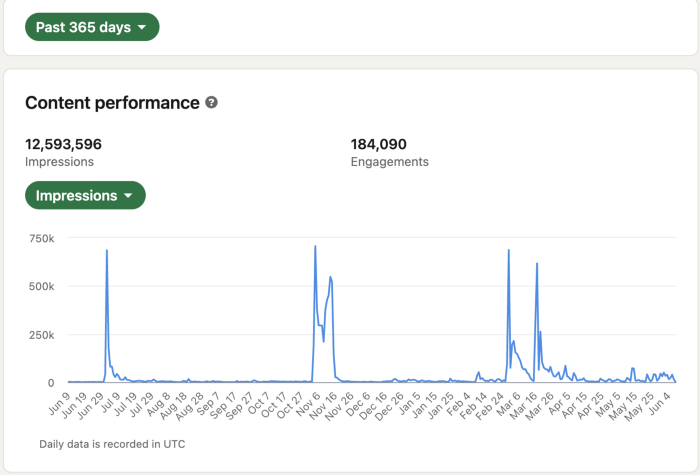

Shield Analytics

It tracks LinkedIn stats, like:

follower growth

trend chart for impressions

Engagement, views, and comment stats for posts

and much more.

The middle-tier Creator plan costs $12/month.

I got a 25% off coupon but canceled my free trial before writing this. It's not worth it, even with the discount.

Why?

LinkedIn provides free analytics. See:

They're not as thorough and won't show top posts.

I don't need to see my top posts because I love experimenting with writing.

Slack Premium

Slack was my classroom. Slack provided me a premium trial during the prior cohort.

I skipped it.

Sure, voice notes are better than a big paragraph. I didn't require pro features.

Marketing methods sometimes make you think you need 38474 amazing features. Don’t fall for it.

Calendly Pro

This may be worth it if you get many calls.

I avoid calls. During my 9-5, I had too many pointless calls.

I don't need:

The ability to schedule calls for 15, 30, or 60 minutes: I just share each link separately.

Payment options: I have a Gumroad consultation page with a payment option.

Follow-up emails: I hardly ever make calls, so I don't need them.

Links to multiple calendars: I just use one calendar.

I'll admit, the integrations are cool. Not for me.

If you're a coach or consultant, or you book a lot of meetings, the features may be helpful.

Conclusion

Investing is spending to make money.

Use my technique: put money in tools that help you make money. That is what separates an investment from an expense.

Try free versions of these tools before buying them just because everyone else is.

Deon Ashleigh

3 years ago

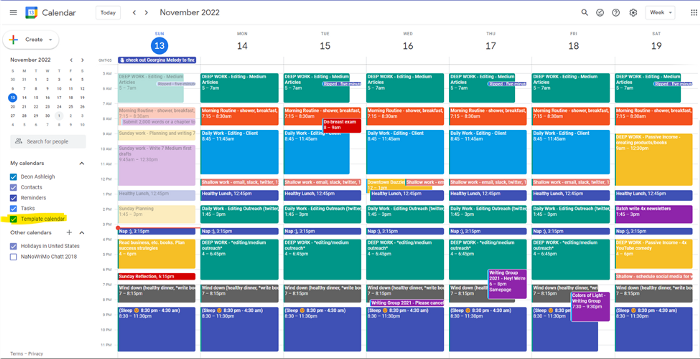

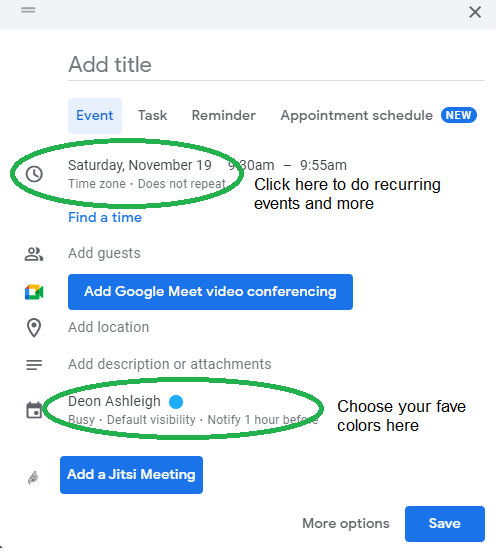

You can dominate your daily productivity with these 9 little-known Google Calendar tips.

Calendars are great unpaid employees.

After using Notion to organize my next three months' goals, my days were a mess.

I grew very chaotic afterward. I was overwhelmed, unsure of what to do, and wasting time attempting to plan the day after it had started.

Imagine if our skeletons were on the outside. Doesn’t work.

The goals were too big; I needed to break them into smaller chunks. But how?

Enter Google Calendar

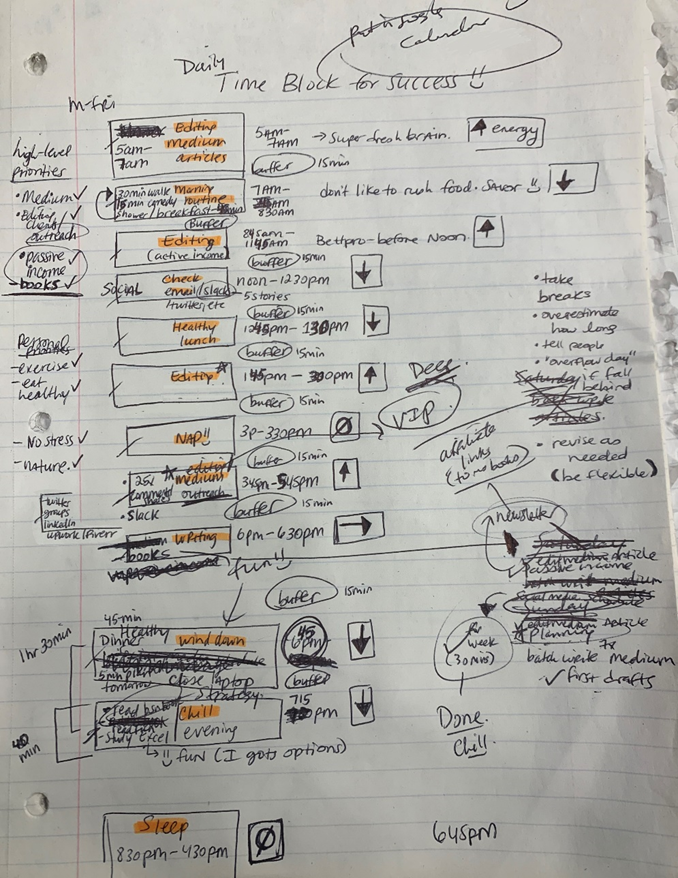

Following RescueTime’s recommendations, it took me seven hours to make a daily planner. This epic narrative begins with a sheet of paper and concludes with a daily calendar that helps me focus and achieve more goals. Ain’t nobody got time for “what’s next?” all day.

Onward!

Return to the Paleolithic Era

Plan in writing.

Not on the list, but it helped me plan my day. Physical writing boosts creativity and recall.

Find My Heart

i.e. prioritize

RescueTime suggested I prioritize before planning. Personal and business goals were proposed.

My top personal priorities are to exercise, eat healthily, spend time in nature, and avoid stress.

My business priorities include writing and publishing Medium articles, doing more freelance editing and Medium outreach, and writing/editing sci-fi books.

These eight things will help me feel accomplished every day.

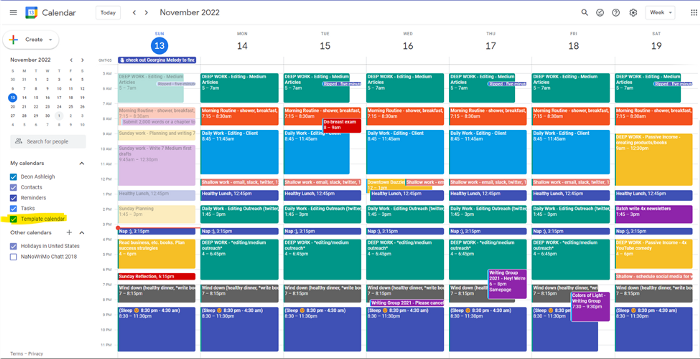

Make a baby calendar.

Create daily calendar templates.

Make family, pleasure, etc. calendars.

Google Calendar instructions:

Other calendars

Press the “+” button

Create a new calendar

Create recurring events for each day

My calendar, without the template:

Empty, so I can fill it with vital tasks.

With the template:

My daily skeleton corresponds with my priorities. I've been overwhelmed for years because I lack daily, weekly, monthly, and yearly structure.

Google Calendar helps me reach my goals and focus my energy.

Get your colored pencils ready

Time-block color-coding.

Color labeling lets me quickly see what's happening. Maybe it will help you too.

Google Calendar instructions:

Determine which colors correspond to each time block.

When establishing new events, select a color.

Save

My calendar is color-coded as follows:

Yellow — passive income or other future-related activities

Red — important activities, like my monthly breast exam

Flamingo — shallow work, like emails, Twitter, etc.

Blue — all my favorite activities, like walking, watching comedy, napping, and sleeping. Oh, and eating.

Green — money-related events required for this adulting thing

Purple — writing-related stuff

Associating a time block with a color helps me stay focused. Fewer distractions mean faster work.

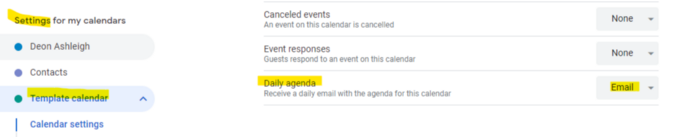

Open My Email

aka receive a daily email from Google Calendar.

Google Calendar can send a daily agenda email for your calendars. I have my template calendar emailed to me this way.

Google Calendar instructions:

Access settings

Select the calendar that you want to send (left side)

Scroll down the page to Other notifications

Under the daily agenda area, click Email.

Get in Touch With Your Red Bull Wings — Naturally

aka audit your energy levels.

My daily planner has arrows. These indicate how much energy each activity requires or how much I have.

A rightward arrow denotes medium energy.

I do my Medium and professional editing in the morning because it's energy-intensive.

Niharikaa Sodhi recommends morning Medium editing.

I’m a morning person. As long as I go to bed at a reasonable time, 5 a.m. is super wild GO-TIME. It’s like the world was just born, and I marvel at its wonderfulness.

Freelance editing lets me do what I want. An afternoon snooze will help me finish on time.

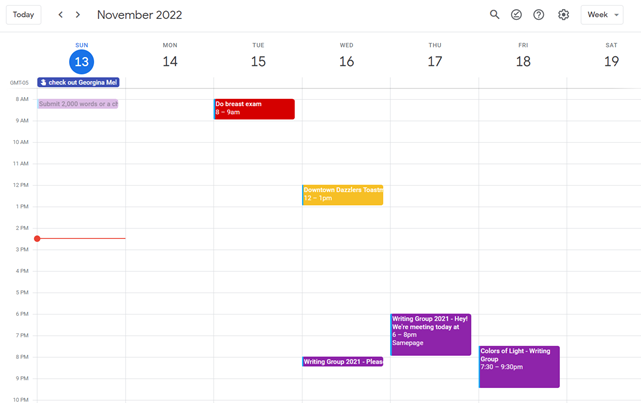

Ditch Schedule View

aka focus on the weekly view.

RescueTime advocated utilizing the weekly view of Google Calendar, so I switched.

When you launch the phone app or desktop calendar, a red line shows where you are in the day.

I'll follow the red line's instructions. My digital supervisor is easy to follow.

In the image above, it's almost 3 p.m., therefore the red line implies it's time to snooze.

I won't forget this block ;).

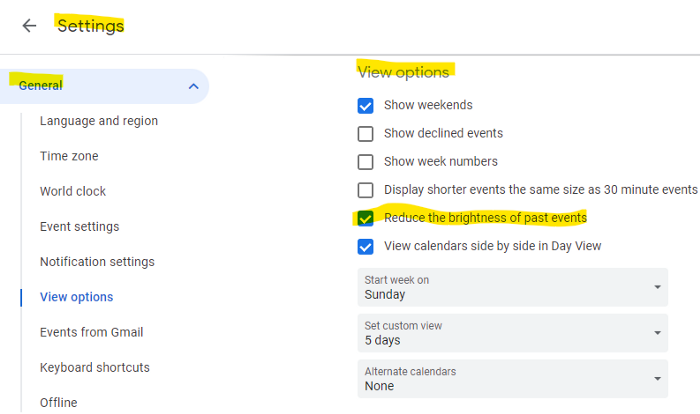

Reduce the Lighting

aka dim previous days.

This is another Google Calendar feature I didn't know about. Once the allotted time passes, the time block dims. This keeps me present.

Google Calendar instructions:

Access settings

Stay in General

Click View options

Check "Reduce the brightness of past events"

Bonus

Two additional RescueTime hacks:

Maintain a space between tasks

I left 15 minutes between each time block to transition smoothly. This relates to my goal of less stress. If I set strict start and end times, I'll be stressed.

With a buffer, I can breathe, stroll around, and start the following time block fresh.
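The buffer idea can be sketched as a small scheduling helper. This is a rough illustration, not how Google Calendar works internally: the function name, the block names, durations, and the start time are all hypothetical, and only the 15-minute gap comes from the text above.

```python
from datetime import datetime, timedelta


def schedule_with_buffer(start, blocks, buffer_minutes=15):
    """Lay out time blocks in order, leaving a gap after each one.

    `blocks` is a list of (name, duration_in_minutes) pairs.
    Returns a list of (name, begin, end) tuples.
    """
    plan = []
    cursor = start
    for name, minutes in blocks:
        end = cursor + timedelta(minutes=minutes)
        plan.append((name, cursor, end))
        # The next block starts only after the buffer has passed.
        cursor = end + timedelta(minutes=buffer_minutes)
    return plan


if __name__ == "__main__":
    day_start = datetime(2022, 1, 3, 5, 0)  # hypothetical 5 a.m. start
    blocks = [("Writing", 90), ("Editing", 60), ("Walk", 30)]
    for name, begin, end in schedule_with_buffer(day_start, blocks):
        print(f"{begin:%H:%M}-{end:%H:%M}  {name}")
    # 05:00-06:30  Writing
    # 06:45-07:45  Editing
    # 08:00-08:30  Walk
```

The resulting start and end times can then be entered as recurring events in the template calendar.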

Google Calendar's Speedy meetings setting is related to the buffer.

This option lets you end short meetings five minutes early and longer ones ten minutes early. Before the next meeting, relax or go wild.

Decide on a backup day.

This productivity technique is amazing.

Spend this spare day catching up on work. It helps reduce tension and clutter.

That's all I can say about Google Calendar's functionality.

Ellane W

3 years ago

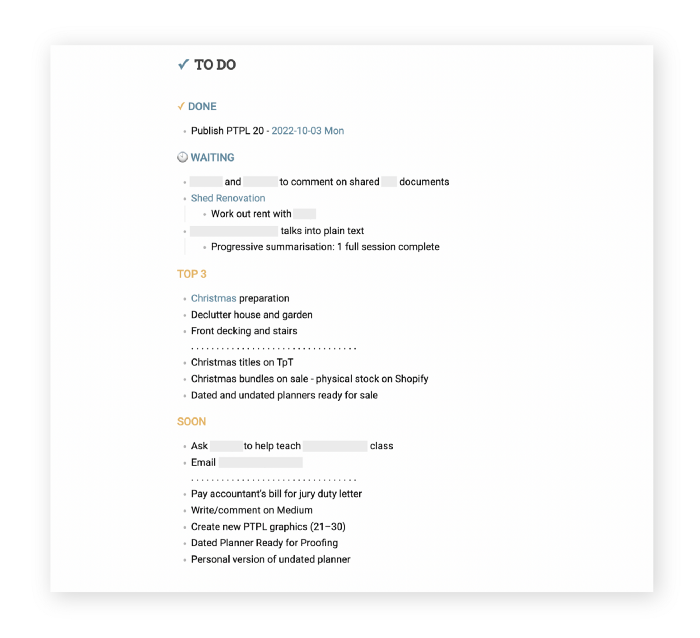

The Last To-Do List Template I'll Ever Need, Years in the Making

The holy grail of plain text task management is finally within reach

Plain text task management? Are you serious?? Dedicated task managers exist for a reason, you know. Sheesh.

—Oh, I know. Believe me, I know! But hear me out.

I've managed projects and tasks in plain text for more than four years. Since reorganizing my to-do list, plain text task management is within reach.

Data completely yours? One billion percent. Beef it up with coding? Be my guest.

Enter: The List

The answer? A list. That’s it!

Write down tasks. Obsidian, Notenik, Drafts, or iA Writer are good plain text note-taking apps.

List too long? Of course, it is! A large list tells you what to do. Feel the itch and friction. Then fix it.

But I want to be able to distinguish between work and personal life! Make two lists.

However, I need to know what should be completed first. Put those items at the top.

However, some things keep coming up, and I need to be reminded of them! Put those in your calendar and make an alarm for them.

But since individual X hasn't completed task Y, I can't proceed with this. Create a Waiting section on your list by dividing it.

But I must know what I'm supposed to be doing right now! Read your list(s). Check your calendar. Think critically.

Before I begin a new one, I remind myself that "Listory Never Repeats."

There’s no such thing as too many lists if all are needed. There is such a thing as too many lists if you make them before they’re needed. Before they complain that their previous room was small or too crowded or needed a new light.

A list that feels too long has a voice; it’s telling you what to do next.

I use one Master List. It's a control panel that tells me what to focus on short-term. If something doesn't need semi-immediate attention, it goes on my Backlog list.

Todd Lewandowski's DWTS (Done, Waiting, Top 3, Soon) performance deserves praise. His DWTS to-do list structure has transformed my plain-text task management. I didn't realize it was upside down.

This is my take on it:

D = Done

Move finished items here. If they pile up, clear them out every week or month. I have a Done Archive folder.

W = Waiting

Things seething in the background, awaiting action. Stir them occasionally so they don't burn.

T = Top 3

Three priorities. Personal comes first, then work. There will always be a top 3 (no more than 5) in every category. Projects, not chores, usually.

S = Soon

This part is action-oriented. It's for anything you can accomplish to finish one of the Top 3. This collection includes thoughts and project lists. The sole requirement is that they should be short-term goals.

Some of you have probably concluded this isn't for you. Please read Todd's piece before throwing out the baby. Often. You shouldn't miss a newborn.

As much as Dancing With The Stars helps me recall this method, I may try switching their order. TSWD; Drilling Tunnel Seismic? Serenity After Task?
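Because the list is plain text, a few lines of code can read it. Here is a minimal sketch, assuming a hypothetical layout where each section starts with a heading line such as `## Done` and each task is a line starting with `- `. That layout, the sample tasks, and the function names are assumptions for illustration, not the format of the Master List described here.

```python
SECTIONS = ("Done", "Waiting", "Top 3", "Soon")

# Hypothetical sample list in the assumed layout.
SAMPLE = """\
## Done
- Pay invoice
## Waiting
- Reply from X about task Y
## Top 3
- Finish course update
- Plan holiday
## Soon
- Draft outline
"""


def parse_dwts(text: str) -> dict:
    """Group task lines under the DWTS heading they appear beneath."""
    tasks = {name: [] for name in SECTIONS}
    current = None
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("## "):
            heading = line[3:].strip()
            # Ignore headings that are not one of the four sections.
            current = heading if heading in tasks else None
        elif line.startswith("- ") and current is not None:
            tasks[current].append(line[2:].strip())
    return tasks


def top3_within_limit(tasks: dict, limit: int = 5) -> bool:
    """Check the 'no more than 5' ceiling on the Top 3 section."""
    return len(tasks["Top 3"]) <= limit


if __name__ == "__main__":
    parsed = parse_dwts(SAMPLE)
    for section in SECTIONS:
        print(section, "->", parsed[section])
    print("Top 3 within limit:", top3_within_limit(parsed))
```

Keeping the parser this small fits the spirit of the method: the list stays readable in any text editor, and the code is optional.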

Master List Showcase

My Master List lives alone in its own file, but sometimes appears in other places. It's included in my Weekly List template. Here's a (soon-to-be-updated) demo vault of my Obsidian planning setup to download for free.

Here's the code behind my weekly screenshot:

## [[Master List - 2022|✓]] TO DO

![[Master List - 2022]]

FYI, I use the Minimal Theme in Obsidian, with a few tweaks.

You may note I'm utilizing a checkmark as a link. For me, that's easier than locating the proper spot to click on the embed.

Blue headings for Done and Waiting are links. Done links to the Done Archive page and Waiting to a general waiting page.

Read my full article here.

You might also like

Yuga Labs

3 years ago

Yuga Labs (BAYC and MAYC) buys CryptoPunks and Meebits and gives them commercial rights

Yuga has acquired the CryptoPunks and Meebits NFT IP from Larva Labs. These include 423 CryptoPunks and 1711 Meebits.

We set out to create in the NFT space because we admired CryptoPunks and the founders' visionary work. A lot of their work influenced how we built BAYC and NFTs. We're proud to lead CryptoPunks and Meebits into the future as part of our broader ecosystem.

"Yuga Labs invented the modern profile picture project and are the best in the world at operating these projects. They are ideal CryptoPunk and Meebit stewards. We are confident that in their hands, these projects will thrive in the emerging decentralized web."

–The founders of Larva Labs, CryptoPunks, and Meebits

This deal grew out of discussions between our partner Guy Oseary and the Larva Labs founders. One call led to another, and now we're here. This does not mean Matt and John will join Yuga. They'll keep running Larva Labs and creating awesome projects that help shape the future of web3.

Next steps

Here's what we plan to do with CryptoPunks and Meebits now that we own the IP. Owners of CryptoPunks and Meebits will soon receive commercial rights equal to those of BAYC and MAYC holders. Our legal teams are working on new terms and conditions for both collections, which we hope to share with the community soon. We expect a wide range of third-party developers and community creators to incorporate CryptoPunks and Meebits into their web3 projects. We'll build the brand alongside them.

We don't intend to cram these NFT collections into the BAYC club model. We see BAYC as the hub of the Yuga universe, and CryptoPunks as a historical collection. We will work to improve the CryptoPunks and Meebits collections as good stewards. We're not in a hurry. We'll consult the community before deciding what to do next.

For us, NFTs are about culture. We're deeply invested in the BAYC community, and it's inspiring to see them grow, collaborate, and innovate. We're excited to see what CryptoPunks and Meebits do with IP rights. Our goal has always been to create a community-owned brand that goes beyond NFTs, and now we can include CryptoPunks and Meebits.

forkast

3 years ago

Three Arrows Capital collapse sends crypto tremors

Three Arrows Capital's Google search volume rose over 5,000%.

Three Arrows Capital, a Singapore-based cryptocurrency hedge fund, filed for Chapter 15 bankruptcy last Friday to protect its U.S. assets from creditors.

Three Arrows filed for bankruptcy on July 1 in New York.

Three Arrows was ordered liquidated by a British Virgin Islands court last week after defaulting on a $670 million loan from Voyager Digital. Three days later, the Singaporean government reprimanded Three Arrows for spreading misleading information and exceeding asset limits.

Three Arrows' troubles began with Terra's collapse in May, after it bought US$200 million worth of Terra's LUNA tokens in February, co-founder Kyle Davies told the Wall Street Journal. Three Arrows has failed to meet multiple margin calls since then, including from BlockFi and Genesis.

Three Arrows Capital, founded by Kyle Davies and Su Zhu in 2012, manages $10 billion in crypto assets.

Bitcoin's price fell from US$20,600 to below US$19,200 after Three Arrows' bankruptcy petition. According to CoinMarketCap, BTC is now above US$20,000.

What does it mean?

Every action causes an equal and opposite reaction, per Newton's third law. Newtonian physics won't comfort Three Arrows investors, but future investors will thank them for their overconfidence.

Regulators are taking notice of crypto's meteoric rise and subsequent fall. Historically, authorities labeled the industry "high risk" to warn traditional investors against entering it. That attitude is changing. Regulators are moving quickly to regulate crypto to protect investors and prevent broader asset market busts.

The EU has reached a landmark deal that will regulate crypto asset sales and crypto markets across the 27-member bloc. The U.S. is close behind with a similar ruling, and smaller markets are also looking to improve safeguards.

For many, regulation is the only way to ensure the crypto industry survives the current winter.

Stephen Moore

3 years ago

Web 2 + Web 3 = Web 5.

Monkey jpegs and shitcoins have tarnished Web3's reputation. Let’s move on.

Web3 was called "the internet's future."

Well, 'crypto bros' shouted about it loudly.

As quickly as it arrived to be the next internet, it appears to be dead. It's had scandals, turbulence, and crashes galore:

Web 3.0's cryptocurrencies have crashed. Bitcoin's all-time high was $66,935. This month, Ethereum fell from $2130 to $1117. Six months ago, the cryptocurrency market peaked at $3 trillion. Worst is likely ahead.

Gas fees make even the simplest Web3 blockchain transactions unsustainable.

Terra, Luna, and other dollar pegs collapsed, hurting crypto markets. Celsius, a crypto lender backed by VCs and Canada's second-largest pension fund, and Binance, a crypto marketplace, have withheld money and coins. They're near collapse.

NFT sales are falling rapidly and losing public interest.

Web3 has few real-world uses, like most crypto/blockchain technologies. Web3's image has been tarnished by monkey profile pictures and shitcoins while failing to become decentralized (the whole concept is controlled by VCs).

The damage seems irreparable, leaving Web3 in the gutter.

Step forward our new saviour — Web5

Fear not though, as a hero awaits to drag us out of the Web3 hellscape. Jack Dorsey revealed his plan to save the internet quickly.

Dorsey has long criticized Web3, believing that VC capital and Silicon Valley insiders have created a centralized platform. In a tweet that upset believers and VCs (he was promptly blocked by Marc Andreessen), Dorsey argued, "You don't own 'Web3.' VCs and LPs do. Their incentives prevent it. It's a centralized organization with a new name."

Dorsey announced Web5 on June 10 in a very Elon-like manner. The TBD unit at Block (formerly Square) will work on the project.

Web5's pitch is that users will control their own data and identity. Bitcoin-based. Sound familiar? The presentation pack's official definition emphasizes decentralization. Web5 is a decentralized web platform that enables developers to write decentralized web apps using decentralized identifiers, verifiable credentials, and decentralized web nodes, returning ownership and control over identity and data to individuals.

Web5 would be permission-less, open, and token-less. What that means for Earth is anyone's guess. Identity. Ownership. Blockchains. Bitcoin. Different.

Web4 appears to have been skipped, forever destined to wish it could have shown the world what it could have been. (It was probably crap.) As this iteration combines Web2 and Web3, simple math and common sense add up to 5. Or something.

Dorsey and his team have had this idea simmering for a while. Daniel Buchner, a member of Block's Decentralized Identity team, said, "We're finishing up Web5's technical components."

Web5 could be the project that decentralizes the internet. It must be useful to users and convince everyone to drop the countless Web3 projects, products, services, coins, blockchains, and websites being developed as I write this.

Web5 may be too late for Dorsey and the incoming flood of creators.

Web6 is planned!

The next months and years will be hectic and less stable than the transition from Web 1.0 to Web 2.0.

Web1 was around 1991-2004.

Web2 ran from 2004 to 2021 (though the Web3 term was first used in 2014, it only really gained traction years later).

Web3 lasted a year.

Web4 is dead.

Silicon Valley billionaires are turning it into a startup-style race, each disrupting the next iteration until they crack it. Or destroy it completely.

Web5 won't last either.