More on Personal Growth

Akshad Singi

3 years ago

Four obnoxious one-minute habits that help me save more than 30 hours each week

These four, when combined, destroy procrastination.

You're not rushed. You waste it on busywork.

You'll accept this eventually.

In 2022, the daily average usage of a user on social media is 2.5 hours.

By 2020, 6 billion hours of video were watched each month by Netflix's customers, who used the service an average of 3.2 hours per day.

When we see these numbers, we think "Wow!" People squander so much time as though they don't contribute. True. These are yours. Likewise.

We don't lack time; we just waste it. Once you realize this, you can change your habits to save time. This article explains. If you adopt ALL 4 of these simple behaviors, you'll see amazing benefits.

Time-blocking

Cal Newport's time-blocking trick takes a minute but improves your day's clarity.

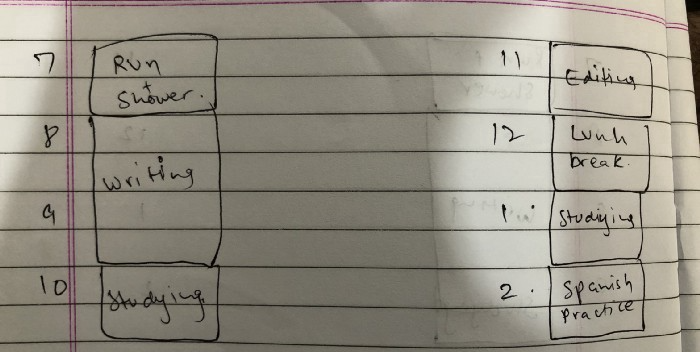

Divide the next day into 30-minute (or 5-minute, if you're Elon Musk) segments and assign responsibilities. As seen.

Here's why:

The procrastination that results from attempting to determine when to begin working is eliminated. Procrastination is a given if you choose when to begin working in real-time. Even if you may assume you'll start working in five minutes, it won't take you long to realize that five minutes have turned into an hour. But if you've already determined to start working at 2:00 the next day, your odds of procrastinating are greatly decreased, if not eliminated altogether.

You'll also see that you have a lot of time in a day when you plan your day out on paper and assign chores to each hour. Doing this daily will permanently eliminate the lack of time mindset.

5-4-3-2-1: Have breakfast with the frog!

“If it’s your job to eat a frog, it’s best to do it first thing in the morning. And If it’s your job to eat two frogs, it’s best to eat the biggest one first.”

Eating the frog means accomplishing the day's most difficult chore. It's better to schedule it first thing in the morning when time-blocking the night before. Why?

The day's most difficult task is also the one that causes the most postponement. Because of the stress it causes, the later you schedule it, the more time you risk wasting by procrastinating.

However, if you do it right away in the morning, you'll feel good all day. This is the reason it was set for the morning.

Mel Robbins' 5-second rule can help. Start counting backward 54321 and force yourself to start at 1. If you acquire the urge to work on a goal, you must act within 5 seconds or your brain will destroy it. If you're scheduled to eat your frog at 9, eat it at 8:59. Start working.

Micro-visualisation

You've heard of visualizing to enhance the future. Visualizing a bright future won't do much if you're not prepared to focus on the now and develop the necessary habits. Alexander said:

People don’t decide their futures. They decide their habits and their habits decide their future.

I visualize the next day's schedule every morning. My day looks like this

“I’ll start writing an article at 7:30 AM. Then, I’ll get dressed up and reach the medicine outpatient department by 9:30 AM. After my duty is over, I’ll have lunch at 2 PM, followed by a nap at 3 PM. Then, I’ll go to the gym at 4…”

etc.

This reinforces the day you planned the night before. This makes following your plan easy.

Set the timer.

It's the best iPhone productivity app. A timer is incredible for increasing productivity.

Set a timer for an hour or 40 minutes before starting work. Your call. I don't believe in techniques like the Pomodoro because I can focus for varied amounts of time depending on the time of day, how fatigued I am, and how cognitively demanding the activity is.

I work with a timer. A timer keeps you focused and prevents distractions. Your mind stays concentrated because of the timer. Timers generate accountability.

To pee, I'll pause my timer. When I sit down, I'll continue. Same goes for bottle refills. To use Twitter, I must pause the timer. This creates accountability and focuses work.

Connecting everything

If you do all 4, you won't be disappointed. Here's how:

Plan out your day's schedule the night before.

Next, envision in your mind's eye the same timetable in the morning.

Speak aloud 54321 when it's time to work: Eat the frog! In the morning, devour the largest frog.

Then set a timer to ensure that you remain focused on the task at hand.

The woman

3 years ago

The best lesson from Sundar Pichai is that success and stress don't mix.

His regular regimen teaches stress management.

In 1995, an Indian graduate visited the US. He obtained a scholarship to Stanford after graduating from IIT with a silver medal. First flight. His ticket cost a year's income. His head was full.

Pichai Sundararajan is his full name. He became Google's CEO and a world leader. Mr. Pichai transformed technology and inspired millions to dream big.

This article reveals his daily schedule.

Mornings

While many of us dread Mondays, Mr. Pichai uses the day to contemplate.

A typical Indian morning. He awakens between 6:30 and 7 a.m. He avoids working out in the mornings.

Mr. Pichai oversees the internet, but he reads a real newspaper every morning.

Pichai mentioned that he usually enjoys a quiet breakfast during which he reads the news to get a good sense of what’s happening in the world. Pichai often has an omelet for breakfast and reads while doing so. The native of Chennai, India, continues to enjoy his daily cup of tea, which he describes as being “very English.”

Pichai starts his day. BuzzFeed's Mat Honan called the CEO Banana Republic dad.

Overthinking in the morning is a bad idea. It's crucial to clear our brains and give ourselves time in the morning before we hit traffic.

Mr. Pichai's morning ritual shows how to stay calm. Wharton Business School found that those who start the day calmly tend to stay that way. It's worth doing regularly.

And he didn't forget his roots.

Afternoons

He has a busy work schedule, as you can imagine. Running one of the world's largest firm takes time, energy, and effort. He prioritizes his work. Monitoring corporate performance and guaranteeing worker efficiency.

Sundar Pichai spends 7-8 hours a day to improve Google. He's noted for changing the company's culture. He wants to boost employee job satisfaction and performance.

His work won him recognition within the company.

Pichai received a 96% approval rating from Glassdoor users in 2017.

Mr. Pichai stresses work satisfaction. Each day is a new canvas for him to find ways to enrich people's job and personal lives.

His work offers countless lessons. According to several profiles and press sources, the Google CEO is a savvy negotiator. Mr. Pichai's success came from his strong personality, work ethic, discipline, simplicity, and hard labor.

Evenings

His evenings are spent with family after a busy day. Sundar Pichai's professional and personal lives are balanced. Sundar Pichai is a night owl who re-energizes about 9 p.m.

However, he claims to be most productive after 10 p.m., and he thinks doing a lot of work at that time is really useful. But he ensures he sleeps for around 7–8 hours every day. He enjoys long walks with his dog and enjoys watching NSDR on YouTube. It helps him in relaxing and sleep better.

His regular routine teaches us what? Work wisely, not hard, discipline, vision, etc. His stress management is key. Leading one of the world's largest firm with 85,000 employees is scary.

The pressure to achieve may ruin a day. Overworked employees are more likely to make mistakes or be angry with coworkers, according to the Family Work Institute. They can't handle daily problems, making the house more stressful than the office.

Walking your dog, having fun with friends, and having hobbies are as vital as your office.

Samer Buna

2 years ago

The Errors I Committed As a Novice Programmer

Learn to identify them, make habits to avoid them

First, a clarification. This article is aimed to make new programmers aware of their mistakes, train them to detect them, and remind them to prevent them.

I learned from all these blunders. I'm glad I have coding habits to avoid them. Do too.

These mistakes are not ordered.

1) Writing code haphazardly

Writing good content is hard. It takes planning and investigation. Quality programs don't differ.

Think. Research. Plan. Write. Validate. Modify. Unfortunately, no good acronym exists. Create a habit of doing the proper quantity of these activities.

As a newbie programmer, my biggest error was writing code without thinking or researching. This works for small stand-alone apps but hurts larger ones.

Like saying anything you might regret, you should think before coding something you could regret. Coding expresses your thoughts.

When angry, count to 10 before you speak. If very angry, a hundred. — Thomas Jefferson.

My quote:

When reviewing code, count to 10 before you refactor a line. If the code does not have tests, a hundred. — Samer Buna

Programming is primarily about reviewing prior code, investigating what is needed and how it fits into the current system, and developing small, testable features. Only 10% of the process involves writing code.

Programming is not writing code. Programming need nurturing.

2) Making excessive plans prior to writing code

Yes. Planning before writing code is good, but too much of it is bad. Water poisons.

Avoid perfect plans. Programming does not have that. Find a good starting plan. Your plan will change, but it helped you structure your code for clarity. Overplanning wastes time.

Only planning small features. All-feature planning should be illegal! The Waterfall Approach is a step-by-step system. That strategy requires extensive planning. This is not planning. Most software projects fail with waterfall. Implementing anything sophisticated requires agile changes to reality.

Programming requires responsiveness. You'll add waterfall plan-unthinkable features. You will eliminate functionality for reasons you never considered in a waterfall plan. Fix bugs and adjust. Be agile.

Plan your future features, though. Do it cautiously since too little or too much planning can affect code quality, which you must risk.

3) Underestimating the Value of Good Code

Readability should be your code's exclusive goal. Unintelligible code stinks. Non-recyclable.

Never undervalue code quality. Coding communicates implementations. Coders must explicitly communicate solution implementations.

Programming quote I like:

Always code as if the guy who ends up maintaining your code will be a violent psychopath who knows where you live. — John Woods

John, great advice!

Small things matter. If your indentation and capitalization are inconsistent, you should lose your coding license.

Long queues are also simple. Readability decreases after 80 characters. To highlight an if-statement block, you might put a long condition on the same line. No. Just never exceed 80 characters.

Linting and formatting tools fix many basic issues like this. ESLint and Prettier work great together in JavaScript. Use them.

Code quality errors:

Multiple lines in a function or file. Break long code into manageable bits. My rule of thumb is that any function with more than 10 lines is excessively long.

Double-negatives. Don't.

Using double negatives is just very not not wrong

Short, generic, or type-based variable names. Name variables clearly.

There are only two hard things in Computer Science: cache invalidation and naming things. — Phil Karlton

Hard-coding primitive strings and numbers without descriptions. If your logic relies on a constant primitive string or numeric value, identify it.

Avoiding simple difficulties with sloppy shortcuts and workarounds. Avoid evasion. Take stock.

Considering lengthier code better. Shorter code is usually preferable. Only write lengthier versions if they improve code readability. For instance, don't utilize clever one-liners and nested ternary statements just to make the code shorter. In any application, removing unneeded code is better.

Measuring programming progress by lines of code is like measuring aircraft building progress by weight. — Bill Gates

Excessive conditional logic. Conditional logic is unnecessary for most tasks. Choose based on readability. Measure performance before optimizing. Avoid Yoda conditions and conditional assignments.

4) Selecting the First Approach

When I started programming, I would solve an issue and move on. I would apply my initial solution without considering its intricacies and probable shortcomings.

After questioning all the solutions, the best ones usually emerge. If you can't think of several answers, you don't grasp the problem.

Programmers do not solve problems. Find the easiest solution. The solution must work well and be easy to read, comprehend, and maintain.

There are two ways of constructing a software design. One way is to make it so simple that there are obviously no deficiencies, and the other way is to make it so complicated that there are no obvious deficiencies. — C.A.R. Hoare

5) Not Giving Up

I generally stick with the original solution even though it may not be the best. The not-quitting mentality may explain this. This mindset is helpful for most things, but not programming. Program writers should fail early and often.

If you doubt a solution, toss it and rethink the situation. No matter how much you put in that solution. GIT lets you branch off and try various solutions. Use it.

Do not be attached to code because of how much effort you put into it. Bad code needs to be discarded.

6) Avoiding Google

I've wasted time solving problems when I should have researched them first.

Unless you're employing cutting-edge technology, someone else has probably solved your problem. Google It First.

Googling may discover that what you think is an issue isn't and that you should embrace it. Do not presume you know everything needed to choose a solution. Google surprises.

But Google carefully. Newbies also copy code without knowing it. Use only code you understand, even if it solves your problem.

Never assume you know how to code creatively.

The most dangerous thought that you can have as a creative person is to think that you know what you’re doing. — Bret Victor

7) Failing to Use Encapsulation

Not about object-oriented paradigm. Encapsulation is always useful. Unencapsulated systems are difficult to maintain.

An application should only handle a feature once. One object handles that. The application's other objects should only see what's essential. Reducing application dependencies is not about secrecy. Following these guidelines lets you safely update class, object, and function internals without breaking things.

Classify logic and state concepts. Class means blueprint template. Class or Function objects are possible. It could be a Module or Package.

Self-contained tasks need methods in a logic class. Methods should accomplish one thing well. Similar classes should share method names.

As a rookie programmer, I didn't always establish a new class for a conceptual unit or recognize self-contained units. Newbie code has a Util class full of unrelated code. Another symptom of novice code is when a small change cascades and requires numerous other adjustments.

Think before adding a method or new responsibilities to a method. Time's needed. Avoid skipping or refactoring. Start right.

High Cohesion and Low Coupling involves grouping relevant code in a class and reducing class dependencies.

8) Arranging for Uncertainty

Thinking beyond your solution is appealing. Every line of code will bring up what-ifs. This is excellent for edge cases but not for foreseeable needs.

Your what-ifs must fall into one of these two categories. Write only code you need today. Avoid future planning.

Writing a feature for future use is improper. No.

Write only the code you need today for your solution. Handle edge-cases, but don't introduce edge-features.

Growth for the sake of growth is the ideology of the cancer cell. — Edward Abbey

9) Making the incorrect data structure choices

Beginner programmers often overemphasize algorithms when preparing for interviews. Good algorithms should be identified and used when needed, but memorizing them won't make you a programming genius.

However, learning your language's data structures' strengths and shortcomings will make you a better developer.

The improper data structure shouts "newbie coding" here.

Let me give you a few instances of data structures without teaching you:

Managing records with arrays instead of maps (objects).

Most data structure mistakes include using lists instead of maps to manage records. Use a map to organize a list of records.

This list of records has an identifier to look up each entry. Lists for scalar values are OK and frequently superior, especially if the focus is pushing values to the list.

Arrays and objects are the most common JavaScript list and map structures, respectively (there is also a map structure in modern JavaScript).

Lists over maps for record management often fail. I recommend always using this point, even though it only applies to huge collections. This is crucial because maps are faster than lists in looking up records by identifier.

Stackless

Simple recursive functions are often tempting when writing recursive programming. In single-threaded settings, optimizing recursive code is difficult.

Recursive function returns determine code optimization. Optimizing a recursive function that returns two or more calls to itself is harder than optimizing a single call.

Beginners overlook the alternative to recursive functions. Use Stack. Push function calls to a stack and start popping them out to traverse them back.

10) Worsening the current code

Imagine this:

Add an item to that room. You might want to store that object anywhere as it's a mess. You can finish in seconds.

Not with messy code. Do not worsen! Keep the code cleaner than when you started.

Clean the room above to place the new object. If the item is clothing, clear a route to the closet. That's proper execution.

The following bad habits frequently make code worse:

code duplication You are merely duplicating code and creating more chaos if you copy/paste a code block and then alter just the line after that. This would be equivalent to adding another chair with a lower base rather than purchasing a new chair with a height-adjustable seat in the context of the aforementioned dirty room example. Always keep abstraction in mind, and use it when appropriate.

utilizing configuration files not at all. A configuration file should contain the value you need to utilize if it may differ in certain circumstances or at different times. A configuration file should contain a value if you need to use it across numerous lines of code. Every time you add a new value to the code, simply ask yourself: "Does this value belong in a configuration file?" The most likely response is "yes."

using temporary variables and pointless conditional statements. Every if-statement represents a logic branch that should at the very least be tested twice. When avoiding conditionals doesn't compromise readability, it should be done. The main issue with this is that branch logic is being used to extend an existing function rather than creating a new function. Are you altering the code at the appropriate level, or should you go think about the issue at a higher level every time you feel you need an if-statement or a new function variable?

This code illustrates superfluous if-statements:

function isOdd(number) {

if (number % 2 === 1) {

return true;

} else {

return false;

}

}Can you spot the biggest issue with the isOdd function above?

Unnecessary if-statement. Similar code:

function isOdd(number) {

return (number % 2 === 1);

};11) Making remarks on things that are obvious

I've learnt to avoid comments. Most code comments can be renamed.

instead of:

// This function sums only odd numbers in an array

const sum = (val) => {

return val.reduce((a, b) => {

if (b % 2 === 1) { // If the current number is odd

a+=b; // Add current number to accumulator

}

return a; // The accumulator

}, 0);

};Commentless code looks like this:

const sumOddValues = (array) => {

return array.reduce((accumulator, currentNumber) => {

if (isOdd(currentNumber)) {

return accumulator + currentNumber;

}

return accumulator;

}, 0);

};Better function and argument names eliminate most comments. Remember that before commenting.

Sometimes you have to use comments to clarify the code. This is when your comments should answer WHY this code rather than WHAT it does.

Do not write a WHAT remark to clarify the code. Here are some unnecessary comments that clutter code:

// create a variable and initialize it to 0

let sum = 0;

// Loop over array

array.forEach(

// For each number in the array

(number) => {

// Add the current number to the sum variable

sum += number;

}

);Avoid that programmer. Reject that code. Remove such comments if necessary. Most importantly, teach programmers how awful these remarks are. Tell programmers who publish remarks like this that they may lose their jobs. That terrible.

12) Skipping tests

I'll simplify. If you develop code without tests because you think you're an excellent programmer, you're a rookie.

If you're not writing tests in code, you're probably testing manually. Every few lines of code in a web application will be refreshed and interacted with. Also. Manual code testing is fine. To learn how to automatically test your code, manually test it. After testing your application, return to your code editor and write code to automatically perform the same interaction the next time you add code.

Human. After each code update, you will forget to test all successful validations. Automate it!

Before writing code to fulfill validations, guess or design them. TDD is real. It improves your feature design thinking.

If you can use TDD, even partially, do so.

13) Making the assumption that if something is working, it must be right.

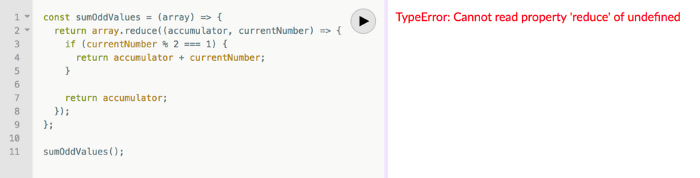

See this sumOddValues function. Is it flawed?

const sumOddValues = (array) => {

return array.reduce((accumulator, currentNumber) => {

if (currentNumber % 2 === 1) {

return accumulator + currentNumber;

}

return accumulator;

});

};

console.assert(

sumOddValues([1, 2, 3, 4, 5]) === 9

);Verified. Good life. Correct?

Code above is incomplete. It handles some scenarios correctly, including the assumption used, but it has many other issues. I'll list some:

#1: No empty input handling. What happens when the function is called without arguments? That results in an error revealing the function's implementation:

TypeError: Cannot read property 'reduce' of undefined.

Two main factors indicate faulty code.

Your function's users shouldn't come across implementation-related information.

The user cannot benefit from the error. Simply said, they were unable to use your function. They would be aware that they misused the function if the error was more obvious about the usage issue. You might decide to make the function throw a custom exception, for instance:

TypeError: Cannot execute function for empty list.Instead of returning an error, your method should disregard empty input and return a sum of 0. This case requires action.

Problem #2: No input validation. What happens if the function is invoked with a text, integer, or object instead of an array?

The function now throws:

sumOddValues(42);

TypeError: array.reduce is not a functionUnfortunately, array. cut's a function!

The function labels anything you call it with (42 in the example above) as array because we named the argument array. The error says 42.reduce is not a function.

See how that error confuses? An mistake like:

TypeError: 42 is not an array, dude.Edge-cases are #1 and #2. These edge-cases are typical, but you should also consider less obvious ones. Negative numbers—what happens?

sumOddValues([1, 2, 3, 4, 5, -13]) // => still 9-13's unusual. Is this the desired function behavior? Error? Should it sum negative numbers? Should it keep ignoring negative numbers? You may notice the function should have been titled sumPositiveOddNumbers.

This decision is simple. The more essential point is that if you don't write a test case to document your decision, future function maintainers won't know if you ignored negative values intentionally or accidentally.

It’s not a bug. It’s a feature. — Someone who forgot a test case

#3: Valid cases are not tested. Forget edge-cases, this function mishandles a straightforward case:

sumOddValues([2, 1, 3, 4, 5]) // => 11The 2 above was wrongly included in sum.

The solution is simple: reduce accepts a second input to initialize the accumulator. Reduce will use the first value in the collection as the accumulator if that argument is not provided, like in the code above. The sum included the test case's first even value.

This test case should have been included in the tests along with many others, such as all-even numbers, a list with 0 in it, and an empty list.

Newbie code also has rudimentary tests that disregard edge-cases.

14) Adhering to Current Law

Unless you're a lone supercoder, you'll encounter stupid code. Beginners don't identify it and assume it's decent code because it works and has been in the codebase for a while.

Worse, if the terrible code uses bad practices, the newbie may be enticed to use them elsewhere in the codebase since they learnt them from good code.

A unique condition may have pushed the developer to write faulty code. This is a nice spot for a thorough note that informs newbies about that condition and why the code is written that way.

Beginners should presume that undocumented code they don't understand is bad. Ask. Enquire. Blame it!

If the code's author is dead or can't remember it, research and understand it. Only after understanding the code can you judge its quality. Before that, presume nothing.

15) Being fixated on best practices

Best practices damage. It suggests no further research. Best practice ever. No doubts!

No best practices. Today's programming language may have good practices.

Programming best practices are now considered bad practices.

Time will reveal better methods. Focus on your strengths, not best practices.

Do not do anything because you read a quote, saw someone else do it, or heard it is a recommended practice. This contains all my article advice! Ask questions, challenge theories, know your options, and make informed decisions.

16) Being preoccupied with performance

Premature optimization is the root of all evil (or at least most of it) in programming — Donald Knuth (1974)

I think Donald Knuth's advice is still relevant today, even though programming has changed.

Do not optimize code if you cannot measure the suspected performance problem.

Optimizing before code execution is likely premature. You may possibly be wasting time optimizing.

There are obvious optimizations to consider when writing new code. You must not flood the event loop or block the call stack in Node.js. Remember this early optimization. Will this code block the call stack?

Avoid non-obvious code optimization without measurements. If done, your performance boost may cause new issues.

Stop optimizing unmeasured performance issues.

17) Missing the End-User Experience as a Goal

How can an app add a feature easily? Look at it from your perspective or in the existing User Interface. Right? Add it to the form if the feature captures user input. Add it to your nested menu of links if it adds a link to a page.

Avoid that developer. Be a professional who empathizes with customers. They imagine this feature's consumers' needs and behavior. They focus on making the feature easy to find and use, not just adding it to the software.

18) Choosing the incorrect tool for the task

Every programmer has their preferred tools. Most tools are good for one thing and bad for others.

The worst tool for screwing in a screw is a hammer. Do not use your favorite hammer on a screw. Don't use Amazon's most popular hammer on a screw.

A true beginner relies on tool popularity rather than problem fit.

You may not know the best tools for a project. You may know the best tool. However, it wouldn't rank high. You must learn your tools and be open to new ones.

Some coders shun new tools. They like their tools and don't want to learn new ones. I can relate, but it's wrong.

You can build a house slowly with basic tools or rapidly with superior tools. You must learn and use new tools.

19) Failing to recognize that data issues are caused by code issues

Programs commonly manage data. The software will add, delete, and change records.

Even the simplest programming errors can make data unpredictable. Especially if the same defective application validates all data.

Code-data relationships may be confusing for beginners. They may employ broken code in production since feature X is not critical. Buggy coding may cause hidden data integrity issues.

Worse, deploying code that corrected flaws without fixing minor data problems caused by these defects will only collect more data problems that take the situation into the unrecoverable-level category.

How do you avoid these issues? Simply employ numerous data integrity validation levels. Use several interfaces. Front-end, back-end, network, and database validations. If not, apply database constraints.

Use all database constraints when adding columns and tables:

If a column has a NOT NULL constraint, null values will be rejected for that column. If your application expects that field has a value, your database should designate its source as not null.

If a column has a UNIQUE constraint, the entire table cannot include duplicate values for that column. This is ideal for a username or email field on a Users table, for instance.

For the data to be accepted, a CHECK constraint, or custom expression, must evaluate to true. For instance, you can apply a check constraint to ensure that the values of a normal % column must fall within the range of 0 and 100.

With a PRIMARY KEY constraint, the values of the columns must be both distinct and not null. This one is presumably what you're utilizing. To distinguish the records in each table, the database needs have a primary key.

A FOREIGN KEY constraint requires that the values in one database column, typically a primary key, match those in another table column.

Transaction apathy is another data integrity issue for newbies. If numerous actions affect the same data source and depend on each other, they must be wrapped in a transaction that can be rolled back if one fails.

20) Reinventing the Wheel

Tricky. Some programming wheels need reinvention. Programming is undefined. New requirements and changes happen faster than any team can handle.

Instead of modifying the wheel we all adore, maybe we should rethink it if you need a wheel that spins at varied speeds depending on the time of day. If you don't require a non-standard wheel, don't reinvent it. Use the darn wheel.

Wheel brands can be hard to choose from. Research and test before buying! Most software wheels are free and transparent. Internal design quality lets you evaluate coding wheels. Try open-source wheels. Debug and fix open-source software simply. They're easily replaceable. In-house support is also easy.

If you need a wheel, don't buy a new automobile and put your maintained car on top. Do not include a library to use a few functions. Lodash in JavaScript is the finest example. Import shuffle to shuffle an array. Don't import lodash.

21) Adopting the incorrect perspective on code reviews

Beginners often see code reviews as criticism. Dislike them. Not appreciated. Even fear them.

Incorrect. If so, modify your mindset immediately. Learn from every code review. Salute them. Observe. Most crucial, thank reviewers who teach you.

Always learning code. Accept it. Most code reviews teach something new. Use these for learning.

You may need to correct the reviewer. If your code didn't make that evident, it may need to be changed. If you must teach your reviewer, remember that teaching is one of the most enjoyable things a programmer can do.

22) Not Using Source Control

Newbies often underestimate Git's capabilities.

Source control is more than sharing your modifications. It's much bigger. Clear history is source control. The history of coding will assist address complex problems. Commit messages matter. They are another way to communicate your implementations, and utilizing them with modest commits helps future maintainers understand how the code got where it is.

Commit early and often with present-tense verbs. Summarize your messages but be detailed. If you need more than a few lines, your commit is too long. Rebase!

Avoid needless commit messages. Commit summaries should not list new, changed, or deleted files. Git commands can display that list from the commit object. The summary message would be noise. I think a big commit has many summaries per file altered.

Source control involves discoverability. You can discover the commit that introduced a function and see its context if you doubt its need or design. Commits can even pinpoint which code caused a bug. Git has a binary search within commits (bisect) to find the bug-causing commit.

Source control can be used before commits to great effect. Staging changes, patching selectively, resetting, stashing, editing, applying, diffing, reversing, and others enrich your coding flow. Know, use, and enjoy them.

I consider a Git rookie someone who knows less functionalities.

23) Excessive Use of Shared State

Again, this is not about functional programming vs. other paradigms. That's another article.

Shared state is problematic and should be avoided if feasible. If not, use shared state as little as possible.

As a new programmer, I didn't know that all variables represent shared states. All variables in the same scope can change its data. Global scope reduces shared state span. Keep new states in limited scopes and avoid upward leakage.

When numerous resources modify common state in the same event loop tick, the situation becomes severe (in event-loop-based environments). Races happen.

This shared state race condition problem may encourage a rookie to utilize a timer, especially if they have a data lock issue. Red flag. No. Never accept it.

24) Adopting the Wrong Mentality Toward Errors

Errors are good. Progress. They indicate a simple way to improve.

Expert programmers enjoy errors. Newbies detest them.

If these lovely red error warnings irritate you, modify your mindset. Consider them helpers. Handle them. Use them to advance.

Some errors need exceptions. Plan for user-defined exceptions. Ignore some mistakes. Crash and exit the app.

25) Ignoring rest periods

Humans require mental breaks. Take breaks. In the zone, you'll forget breaks. Another symptom of beginners. No compromises. Make breaks mandatory in your process. Take frequent pauses. Take a little walk to plan your next move. Reread the code.

This has been a long post. You deserve a break.

You might also like

Olga Kharif

3 years ago

A month after freezing customer withdrawals, Celsius files for bankruptcy.

Alex Mashinsky, CEO of Celsius, speaks at Web Summit 2021 in Lisbon.

Celsius Network filed for Chapter 11 bankruptcy a month after freezing customer withdrawals, joining other crypto casualties.

Celsius took the step to stabilize its business and restructure for all stakeholders. The filing was done in the Southern District of New York.

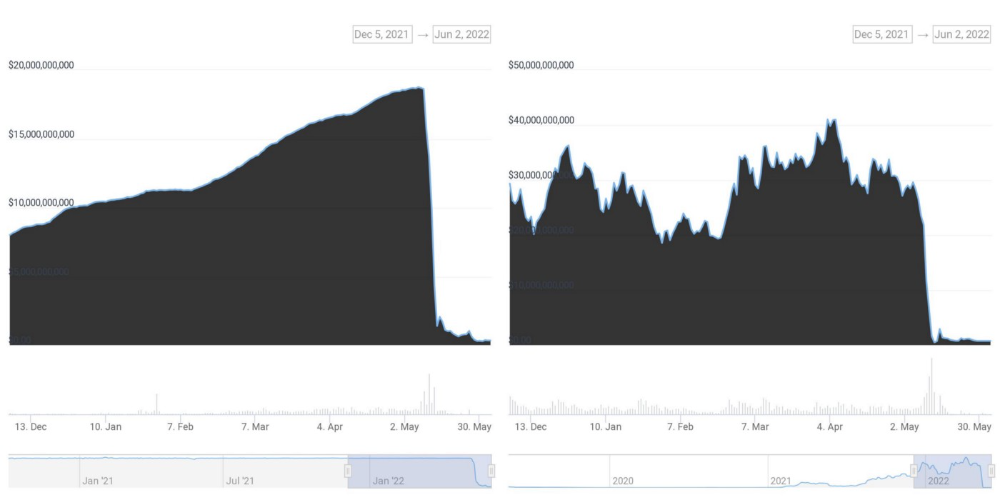

The company, which amassed more than $20 billion by offering 18% interest on cryptocurrency deposits, paused withdrawals and other functions in mid-June, citing "extreme market conditions."

As the Fed raises interest rates aggressively, it hurts risk sentiment and squeezes funding costs. Voyager Digital Ltd. filed for Chapter 11 bankruptcy this month, and Three Arrows Capital has called in liquidators.

Celsius called the pause "difficult but necessary." Without the halt, "the acceleration of withdrawals would have allowed certain customers to be paid in full while leaving others to wait for Celsius to harvest value from illiquid or longer-term asset deployment activities," it said.

Celsius declined to comment. CEO Alex Mashinsky said the move will strengthen the company's future.

The company wants to keep operating. It's not requesting permission to allow customer withdrawals right now; Chapter 11 will handle customer claims. The filing estimates assets and liabilities between $1 billion and $10 billion.

Celsius is advised by Kirkland & Ellis, Centerview Partners, and Alvarez & Marsal.

Yield-promises

Celsius promised 18% returns on crypto loans. It lent those coins to institutional investors and participated in decentralized-finance apps.

When TerraUSD (UST) and Luna collapsed in May, Celsius pulled its funds from Terra's Anchor Protocol, which offered 20% returns on UST deposits. Recently, another large holding, staked ETH, or stETH, which is tied to Ether, became illiquid and discounted to Ether.

The lender is one of many crypto companies hurt by risky bets in the bear market. Also, Babel halted withdrawals. Voyager Digital filed for bankruptcy, and crypto hedge fund Three Arrows Capital filed for Chapter 15 bankruptcy.

According to blockchain data and tracker Zapper, Celsius repaid all of its debt in Aave, Compound, and MakerDAO last month.

Celsius charged Symbolic Capital Partners Ltd. 2,000 Ether as collateral for a cash loan on June 13. According to company filings, Symbolic was charged 2,545.25 Ether on June 11.

In July 6 filings, it said it reshuffled its board, appointing two new members and firing others.

CyberPunkMetalHead

3 years ago

It's all about the ego with Terra 2.0.

UST depegs and LUNA crashes 99.999% in a fraction of the time it takes the Moon to orbit the Earth.

Fat Man, a Terra whistle-blower, promises to expose Do Kwon's dirty secrets and shady deals.

The Terra community has voted to relaunch Terra LUNA on a new blockchain. The Terra 2.0 Pheonix-1 blockchain went live on May 28, 2022, and people were airdropped the new LUNA, now called LUNA, while the old LUNA became LUNA Classic.

Does LUNA deserve another chance? To answer this, or at least start a conversation about the Terra 2.0 chain's advantages and limitations, we must assess its fundamentals, ideology, and long-term vision.

Whatever the result, our analysis must be thorough and ruthless. A failure of this magnitude cannot happen again, so we must magnify every potential breaking point by 10.

Will UST and LUNA holders be compensated in full?

The obvious. First, and arguably most important, is to restore previous UST and LUNA holders' bags.

Terra 2.0 has 1,000,000,000,000 tokens to distribute.

25% of a community pool

Holders of pre-attack LUNA: 35%

10% of aUST holders prior to attack

Holders of LUNA after an attack: 10%

UST holders as of the attack: 20%

Every LUNA and UST holder has been compensated according to the above proposal.

According to self-reported data, the new chain has 210.000.000 tokens and a $1.3bn marketcap. LUNC and UST alone lost $40bn. The new token must fill this gap. Since launch:

LUNA holders collectively own $1b worth of LUNA if we subtract the 25% community pool airdrop from the current market cap and assume airdropped LUNA was never sold.

At the current supply, the chain must grow 40 times to compensate holders. At the current supply, LUNA must reach $240.

LUNA needs a full-on Bull Market to make LUNC and UST holders whole.

Who knows if you'll be whole? From the time you bought to the amount and price, there are too many variables to determine if Terra can cover individual losses.

The above distribution doesn't consider individual cases. Terra didn't solve individual cases. It would have been huge.

What does LUNA offer in terms of value?

UST's marketcap peaked at $18bn, while LUNC's was $41bn. LUNC and UST drove the Terra chain's value.

After it was confirmed (again) that algorithmic stablecoins are bad, Terra 2.0 will no longer support them.

Algorithmic stablecoins contributed greatly to Terra's growth and value proposition. Terra 2.0 has no product without algorithmic stablecoins.

Terra 2.0 has an identity crisis because it has no actual product. It's like Volkswagen faking carbon emission results and then stopping car production.

A project that has already lost the trust of its users and nearly all of its value cannot survive without a clear and in-demand use case.

Do Kwon, how about him?

Oh, the Twitter-caller-poor? Who challenges crypto billionaires to break his LUNA chain? Who dissolved Terra Labs South Korea before depeg? Arrogant guy?

That's not a good image for LUNA, especially when making amends. I think he should step down and let a nicer person be Terra 2.0's frontman.

The verdict

Terra has a terrific community with an arrogant, unlikeable leader. The new LUNA chain must grow 40 times before it can start making up its losses, and even then, not everyone's losses will be covered.

I won't invest in Terra 2.0 or other algorithmic stablecoins in the near future. I won't be near any Do Kwon-related project within 100 miles. My opinion.

Can Terra 2.0 be saved? Comment below.

Daniel Clery

3 years ago

Twisted device investigates fusion alternatives

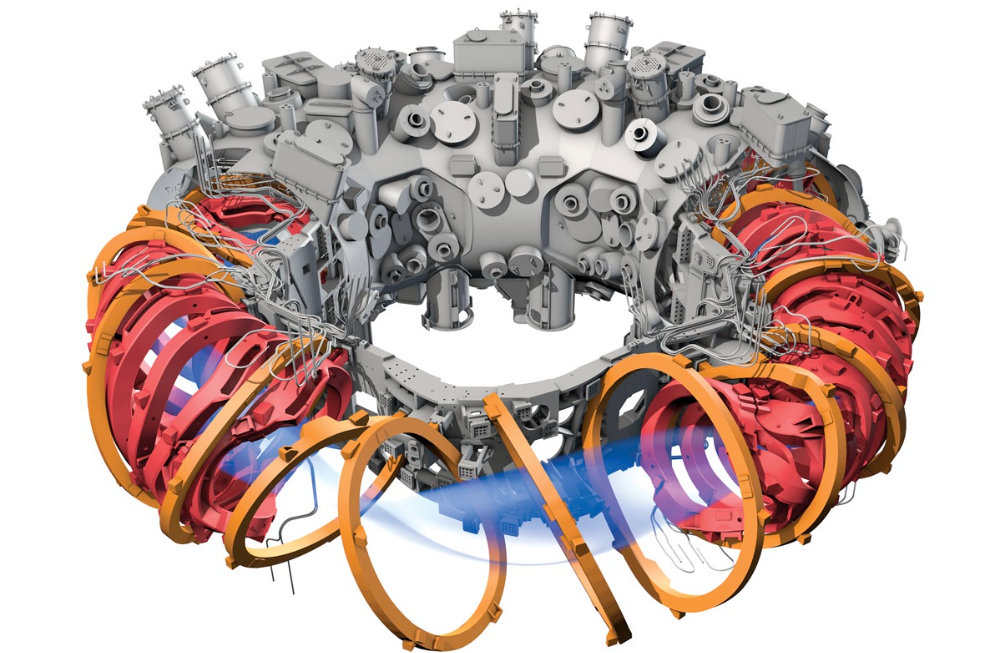

German stellarator revamped to run longer, hotter, compete with tokamaks

Tokamaks have dominated the search for fusion energy for decades. Just as ITER, the world's largest and most expensive tokamak, nears completion in southern France, a smaller, twistier testbed will start up in Germany.

If the 16-meter-wide stellarator can match or outperform similar-size tokamaks, fusion experts may rethink their future. Stellarators can keep their superhot gases stable enough to fuse nuclei and produce energy. They can theoretically run forever, but tokamaks must pause to reset their magnet coils.

The €1 billion German machine, Wendelstein 7-X (W7-X), is already getting "tokamak-like performance" in short runs, claims plasma physicist David Gates, preventing particles and heat from escaping the superhot gas. If W7-X can go long, "it will be ahead," he says. "Stellarators excel" Eindhoven University of Technology theorist Josefine Proll says, "Stellarators are back in the game." A few of startup companies, including one that Gates is leaving Princeton Plasma Physics Laboratory, are developing their own stellarators.

W7-X has been running at the Max Planck Institute for Plasma Physics (IPP) in Greifswald, Germany, since 2015, albeit only at low power and for brief runs. W7-X's developers took it down and replaced all inner walls and fittings with water-cooled equivalents, allowing for longer, hotter runs. The team reported at a W7-X board meeting last week that the revised plasma vessel has no leaks. It's expected to restart later this month to show if it can get plasma to fusion-igniting conditions.

Wendelstein 7-X's water-cooled inner surface allows for longer runs.

HOSAN/IPP

Both stellarators and tokamaks create magnetic gas cages hot enough to melt metal. Microwaves or particle beams heat. Extreme temperatures create a plasma, a seething mix of separated nuclei and electrons, and cause the nuclei to fuse, releasing energy. A fusion power plant would use deuterium and tritium, which react quickly. Non-energy-generating research machines like W7-X avoid tritium and use hydrogen or deuterium instead.

Tokamaks and stellarators use electromagnetic coils to create plasma-confining magnetic fields. A greater field near the hole causes plasma to drift to the reactor's wall.

Tokamaks control drift by circulating plasma around a ring. Streaming creates a magnetic field that twists and stabilizes ionized plasma. Stellarators employ magnetic coils to twist, not plasma. Once plasma physicists got powerful enough supercomputers, they could optimize stellarator magnets to improve plasma confinement.

W7-X is the first large, optimized stellarator with 50 6- ton superconducting coils. Its construction began in the mid-1990s and cost roughly twice the €550 million originally budgeted.

The wait hasn't disappointed researchers. W7-X director Thomas Klinger: "The machine operated immediately." "It's a friendly machine." It did everything we asked." Tokamaks are prone to "instabilities" (plasma bulging or wobbling) or strong "disruptions," sometimes associated to halted plasma flow. IPP theorist Sophia Henneberg believes stellarators don't employ plasma current, which "removes an entire branch" of instabilities.

In early stellarators, the magnetic field geometry drove slower particles to follow banana-shaped orbits until they collided with other particles and leaked energy. Gates believes W7-X's ability to suppress this effect implies its optimization works.

W7-X loses heat through different forms of turbulence, which push particles toward the wall. Theorists have only lately mastered simulating turbulence. W7-X's forthcoming campaign will test simulations and turbulence-fighting techniques.

A stellarator can run constantly, unlike a tokamak, which pulses. W7-X has run 100 seconds—long by tokamak standards—at low power. The device's uncooled microwave and particle heating systems only produced 11.5 megawatts. The update doubles heating power. High temperature, high plasma density, and extensive runs will test stellarators' fusion power potential. Klinger wants to heat ions to 50 million degrees Celsius for 100 seconds. That would make W7-X "a world-class machine," he argues. The team will push for 30 minutes. "We'll move step-by-step," he says.

W7-X's success has inspired VCs to finance entrepreneurs creating commercial stellarators. Startups must simplify magnet production.

Princeton Stellarators, created by Gates and colleagues this year, has $3 million to build a prototype reactor without W7-X's twisted magnet coils. Instead, it will use a mosaic of 1000 HTS square coils on the plasma vessel's outside. By adjusting each coil's magnetic field, operators can change the applied field's form. Gates: "It moves coil complexity to the control system." The company intends to construct a reactor that can fuse cheap, abundant deuterium to produce neutrons for radioisotopes. If successful, the company will build a reactor.

Renaissance Fusion, situated in Grenoble, France, raised €16 million and wants to coat plasma vessel segments in HTS. Using a laser, engineers will burn off superconductor tracks to carve magnet coils. They want to build a meter-long test segment in 2 years and a full prototype by 2027.

Type One Energy in Madison, Wisconsin, won DOE money to bend HTS cables for stellarator magnets. The business carved twisting grooves in metal with computer-controlled etching equipment to coil cables. David Anderson of the University of Wisconsin, Madison, claims advanced manufacturing technology enables the stellarator.

Anderson said W7-X's next phase will boost stellarator work. “Half-hour discharges are steady-state,” he says. “This is a big deal.”