
Leah

3 years ago

The Burnout Recovery Secrets Nobody Is Talking About

What works and what’s just more toxic positivity

Just keep at it; you’ll get it.

I closed the Zoom call and immediately dropped my head. Open tabs included material on inspiration, burnout, and recovery.

I searched everywhere for ways to avoid burnout.

It wasn't that I needed to keep going, change my routine, employ 8D audio playlists, or come up with fresh ideas. I had several ideas and a schedule. I knew what to do.

I wasn't interested. I kept reading, changing my self-care and mental health routines, and writing even though it was tiring.

Since the World Health Organization classified burnout as an occupational phenomenon in 2019, thousands have shared their experiences. It's spreading rapidly among writers.

What is the actual key to recovering from burnout?

Most top burnout stories emphasize prevention. Others offer repackaged self-care tips, and still more discuss mental health.

It's like the mid-2000s, when pink quotes about bubble baths saturated social media.

The self-care craze cost us all. Self-care is crucial, but using it to fix everything didn't work then, and it doesn't work now.

How can you recover from burnout?

Time

Are extended breaks actually good for you? Most people need a break every 62 days or so to avoid burnout.

Real-life burnout victims all took breaks. Perhaps not a long hiatus, but breaks nonetheless.

Burnout is slow and gradual. It takes little bits of your motivation and passion at a time. Sometimes it’s so slow that you barely notice or blame it on other things like stress and poor sleep.

Burnout doesn't come overnight; neither will recovery.

I don’t care what anyone else says the cure for burnout is. It has to be time because time is what gave us all burnout in the first place.

Ian Writes

3 years ago

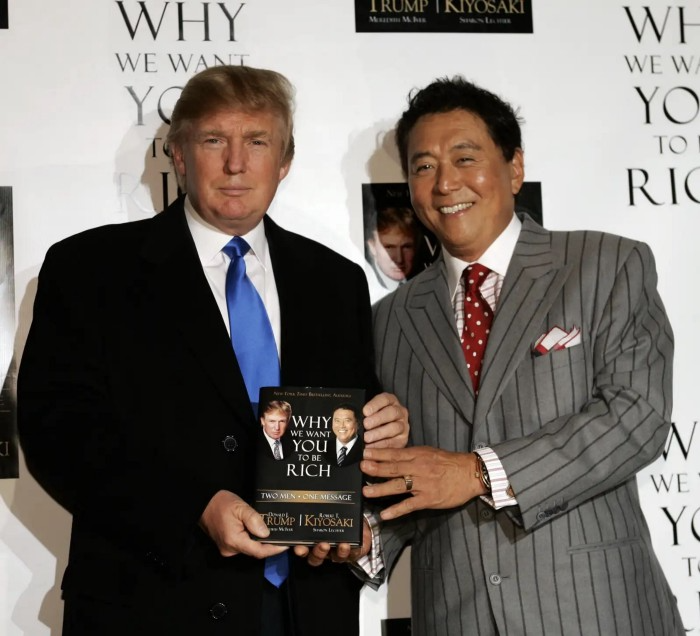

Rich Dad, Poor Dad is a Giant Steaming Pile of Sh*t by Robert Kiyosaki.

Don't promote it.

Every so often, I read a post about how Rich Dad, Poor Dad motivated someone to get rich or change their attitude toward investing and finance. Rich Dad, Poor Dad is a sham, though. This book isn't worth anyone's attention.

Robert Kiyosaki, the author of this garbage, doesn't deserve recognition or attention. He was one of the first finance gurus, and he built his own wealth at your expense. Charlatans like him only care about themselves.

The reason why Rich Dad, Poor Dad is a huge steaming piece of trash

The book's ideas are superficial, obvious, and unsurprising to entrepreneurs and investors, though they may seem profound to first-time readers.

Apparently, starting a business will make you rich.

The book advocates founding or buying a business, making it self-sufficient, and getting rich through it. But starting a business is time-consuming, tough, and expensive. Entrepreneurship isn't for everyone, and most businesses don't succeed.

Robert says we should think like his mentor, his "rich dad." Robert never said who this man was, or whether he even existed. He was apparently his best friend's father. Robert proposes investing other people's money in several businesses and properties. The book recommends investments that:

“have returns of 100 percent to infinity. Investments that for $5,000 are soon turned into $1 million or more.”

In rare cases, a business may provide 200x returns, but 65% of US businesses fail within 10 years. Australia's first-year business failure rate is 60%. A business that lasts 10 years doesn't mean its owner is rich. These statistics only include businesses that survive and pay their owners.

Employees are depressed and broke.

The book portrays employees as broke and sad. The author looks down on workers.

I've owned a business and worked for one. As a business owner, I was broke and miserable, working 80 hours a week for very little pay. Now, as an employee, I work 50 hours a week and make over $200,000 a year. My work is challenging and interesting, and I'm surrounded by educated people. So which is better: self-employed or employee?

Don't listen to a charlatan's tax advice.

As bad advice goes, Robert's tax strategies are almost funny. He suggests forming a corporation so you can write off holidays as board meetings, or health club costs as business expenses. Moves like these can land you in serious tax trouble.

Robert also dismisses college and traditional schooling. Rich people learn by doing and living, Robert says, while educated people end up stressed and broke.

Rich dad says:

“All too often business schools train employees to become sophisticated bean-counters. Heaven forbid a bean counter takes over a business. All they do is look at the numbers, fire people, and kill the business.”

And then says:

“Accounting is possibly the most confusing, boring subject in the world, but if you want to be rich long-term, it could be the most important subject.”

Get rich by avoiding paying your debts to others.

This book has plenty of bad advice, but I'll end with this one: Robert advocates paying yourself first. It's no surprise this man has worked with Trump.

Rich Dad's book says:

“So you see, after paying myself, the pressure to pay my taxes and the other creditors is so great that it forces me to seek other forms of income. The pressure to pay becomes my motivation. I’ve worked extra jobs, started other companies, traded in the stock market, anything just to make sure those guys don’t start yelling at me […] If I had paid myself last, I would have felt no pressure, but I’d be broke.”

Paying yourself first shouldn't mean ignoring debt, damaging your credit score and reputation, or paying unnecessary fees and interest. Good business owners pay employees, creditors, and other expenses first, and pay themselves after everyone else.

If you follow Robert Kiyosaki's financial and business advice, you might as well follow Donald Trump's, the most notoriously ineffective businessman and swindle artist.

This book's popularity is unfortunate. Robert used the book's fame to promote paid seminars, where he sold even more expensive seminars to the gullible. Plenty of con men, and Trump University, used the same strategy.

It's understandable that many believed him. His pitch was appealing: get rich by thinking like a rich person. At a time when most people writing about building wealth advised early sacrifices (such as forgoing luxuries or expensive property), Robert told people to act affluent now and use other people's money to build their dream lifestyle. It's exciting and fast.

I often voice my skepticism and scorn for internet gurus now that social media and platforms like Medium make it easier to promote them. Robert Kiyosaki was a guru. Many people still preach his stuff because he was so good at pushing it.

Daniel Vassallo

3 years ago

Why I quit a $500K job at Amazon to work for myself

I quit my 8-year Amazon job last week. I wasn't motivated to do another year despite promotions, pay, recognition, and praise.

At AWS, I built developer tools. I could have kept working in that field forever.

I joined Amazon as an entry-level developer. Within 3.5 years, I was promoted twice, to senior engineer, and I would have been promoted to principal engineer had I stayed. The company said I had great potential.

Over time, I became a reputed expert and leader within the company. I was respected.

In my first year I made $75K; last year I made $511K. Had I stayed another two years, I could have made $1M.

Despite Amazon's reputation, my work–life balance was good. I no longer needed to prove myself and could do everything in 40 hours a week. My team worked from home once a week, and I rarely opened my laptop nights or weekends.

My coworkers were great. I had three generous, empathetic managers. I’m very grateful to everyone I worked with.

Everything was going well and getting better. Yet despite my career and income growth, my motivation to go to work each morning was declining.

Another promotion, pay raise, or big project wouldn't have restored my motivation. What I was losing was my freedom.

Demotivation

My motivation was high in the beginning. Another engineer and I worked on an internal tool with little scrutiny, and I had more freedom to choose what to work on, and how, than in recent years. We improved it, talked to users, released updates, and tested it. Whatever we wanted, we did. We did our best and were mostly self-directed.

In recent years, things have changed. My department's most important project had many stakeholders and complex goals. What I could do depended on my ability to convince others it was the best way to achieve our goals.

At Amazon, I always worked on someone else's terms. The terms started out simple (keep fixing it), but became more complex over time (maximize all goals; satisfy all stakeholders). Working in a large organization imposed restrictions on how to do the work, what to do, what goals to set, and what business to pursue. This situation forced me to do things I didn't want to do.

Finding New Motivation

What would I do forever? Not something I did until I reached a milestone (an exit), but something I'd do until I'm 80. What could I do for the next 45 years that would make me excited to wake up and pay my bills? Is that too unambitious? Nope, because two things motivate me.

The first is an external carrot or stick. I don't file my taxes every April because I enjoy it; I do it because I don't want to go to jail. Or I may dislike something but do it anyway because I need to pay the bills or want a nice car. That's extrinsic motivation.

The second is internal. It's what motivates me when there's no carrot or stick, and it's what fuels hobbies. That's intrinsic motivation, and I wanted work driven by it.

Is this too low-key? Extrinsic motivation isn't sustainable. Getting promoted felt good for a week, then it was over. When I hit $100K, I admired my W2 for a few days, but then it wore off. Same thing happened at $200K, $300K, $400K, and $500K. Earning $1M or $10M wouldn't change anything. I feel the same about every material reward or possession. Getting them feels good at first, but quickly fades.

Things I've done since I was a kid, when no one forced me to, don't wear off. Coding, selling my creations, charting my own path, and being honest. Why not always use my strengths and motivation? I'm lucky to live in a time when I can work independently in my field without large investments. So that’s what I’m doing.

What’s Next?

I'm going all-in on independence and will make a living from scratch. I won't only do what I like, but I'll do it on my terms. My goal is to cover my family's expenses before my savings run out while doing something I enjoy. What more could I want from my work?

You can now follow me on Twitter as I continue to document my journey.

This post is a summary of the full article.


Cammi Pham

3 years ago

7 Scientifically Proven Things You Must Stop Doing To Be More Productive

Smarter work yields better results.

At 17, I worked and studied 20 hours a day. During school breaks, I did coursework and ran a nonprofit at night. Long hours earned me national campaigns, A-list opportunities, and a great career. As I got older, my thinking changed: working harder isn't necessarily the key to success.

In some cases, doing less work might lead to better outcomes.

Consider a hard-working small business owner. He can't beat his corporate rivals by working hard alone, because time is limited. An entrepreneur can work 24 hours a day, 7 days a week, but a rival can invest more money, hire more staff, and put in more hours. So how have small startups done what larger companies couldn't? Facebook paid $1 billion for 13-person Instagram. Snapchat, a 30-person startup, rejected bids from Facebook and Google. Both luck and efficiency contributed to their success.

The key to success is not working hard. It’s working smart.

Being busy and being productive are different things; busy doesn't always mean productive. Productivity is less about time management and more about energy management: using less energy to get more rewards. I cut my work week from 80 to 40 hours and got more done. I value simplicity.

Here are seven activities I gave up in order to be more productive.

1. Give up working extra hours and boost productivity instead.

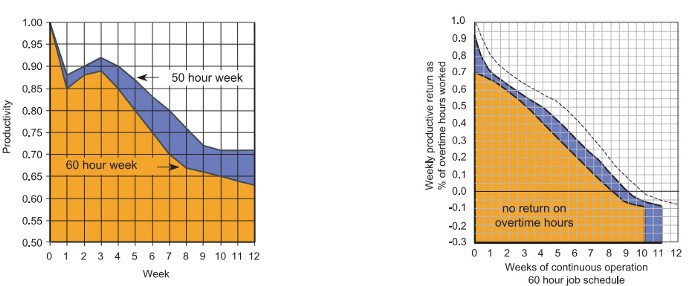

When did the five-day, 40-hour work week start? Henry Ford, Ford Motor Company founder, experimented with his workers in 1926.

He decreased their daily hours from 10 to 8, and shortened the work week from 6 days to 5. As a result, he saw his workers’ productivity increase.

According to a 1980 Business Roundtable report, Scheduled Overtime Effect on Construction Projects, the more you work, the less effective and productive you become.

“Where a work schedule of 60 or more hours per week is continued longer than about two months, the cumulative effect of decreased productivity will cause a delay in the completion date beyond that which could have been realized with the same crew size on a 40-hour week.” Source: Calculating Loss of Productivity Due to Overtime Using Published Charts — Fact or Fiction

AlterNet editor Sara Robinson cited US military research showing that losing one hour of sleep per night for a week causes cognitive impairment equivalent to a 0.10 blood alcohol level. You can get fired for showing up drunk, yet pulling an all-nighter is considered fine.

Irrespective of how well you were able to get on with your day after that most recent night without sleep, it is unlikely that you felt especially upbeat and joyous about the world. Your more-negative-than-usual perspective will have resulted from a generalized low mood, which is a normal consequence of being overtired. More important than just the mood, this mind-set is often accompanied by decreases in willingness to think and act proactively, control impulses, feel positive about yourself, empathize with others, and generally use emotional intelligence. Source: The Secret World of Sleep: The Surprising Science of the Mind at Rest

To be productive, don't overwork, and get enough sleep. If you're not productive, lack of sleep may be to blame. Sleep researcher James Maas has said that 7 out of 10 Americans don't get enough sleep.

Did you know?

Leonardo da Vinci slept little at night and frequently took naps.

Napoleon, the French emperor, had no qualms about napping. He indulged every day.

Even though Thomas Edison felt self-conscious about his napping behavior, he regularly engaged in this ritual.

President Franklin D. Roosevelt's wife Eleanor used to take naps before speeches to increase her energy.

The Singing Cowboy, Gene Autry, was known for taking regular naps in his dressing area in between shows.

Every day, President John F. Kennedy took a siesta after eating his lunch in bed.

Every afternoon, oil businessman and philanthropist John D. Rockefeller took a nap in his office.

Winston Churchill never skipped his afternoon snooze. He believed it let him accomplish twice as much each day.

Every afternoon around 3:30, President Lyndon B. Johnson took a nap to divide his day into two segments.

Ronald Reagan, the 40th president, was well known for taking naps as well.

Source: 5 Reasons Why You Should Take a Nap Every Day — Michael Hyatt

Since I started getting 7 to 8 hours of sleep a night, I've been more productive and completed more work than when I worked 16 hours a day. Who knew marketers could use sleep?

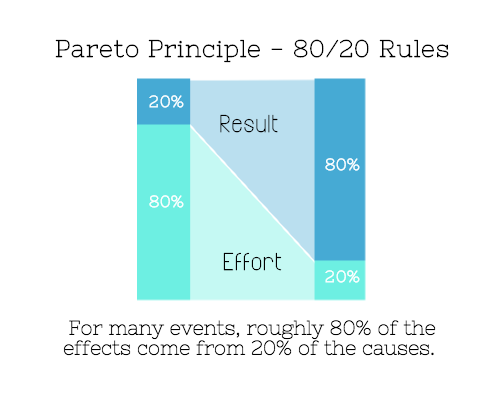

2. Stop saying yes too often

Pareto's principle states that 20% of effort produces 80% of results, while the remaining 20% of results takes the other 80% of effort. Instead of working harder, we should prioritize the initiatives that produce the most results, so we can focus on crucial tasks. Stop accepting unproductive tasks.

“The difference between successful people and very successful people is that very successful people say “no” to almost everything.” — Warren Buffett

What should you accept, and what should you turn down? Consider running a split test to determine whether something is worth your attention. Track what you do, how long it takes, and the results. Then evaluate your list to discover what worked (or didn't) and optimize future tasks.
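Here is a minimal Python sketch of the tracking exercise described above. It assumes you log each activity with its duration in minutes and a self-rated payoff score. The 0-10 scale is hypothetical, because the article doesn't prescribe one. The sketch ranks activities by payoff per hour and flags anything below a cut-off you choose as a candidate for saying no. The names `TaskLog`, `rank_by_payoff_per_hour` and `say_no_candidates` are illustrative, and so are the sample tasks and the 3.0 threshold.

```python
from dataclasses import dataclass


@dataclass
class TaskLog:
    """One tracked activity (hypothetical structure, not from the article)."""
    name: str
    minutes: int    # how long the task took
    payoff: float   # self-rated result, on an assumed 0-10 scale


def rank_by_payoff_per_hour(logs):
    """Return (name, payoff per hour) pairs, highest payoff rate first."""
    scored = [(log.name, log.payoff / (log.minutes / 60)) for log in logs]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


def say_no_candidates(logs, min_payoff_per_hour):
    """Names of tasks whose payoff rate falls below the chosen cut-off, best first."""
    return [name for name, rate in rank_by_payoff_per_hour(logs)
            if rate < min_payoff_per_hour]


# Illustrative week of logged tasks (made-up numbers).
logs = [
    TaskLog("client pitch", 60, 9),
    TaskLog("status meeting", 60, 2),
    TaskLog("inbox triage", 90, 4),
    TaskLog("blog draft", 120, 8),
    TaskLog("social scrolling", 45, 1),
]

for name, rate in rank_by_payoff_per_hour(logs):
    print(f"{name:>16}: {rate:.2f} payoff/hour")

print("Consider saying no to:", say_no_candidates(logs, min_payoff_per_hour=3.0))
```

A fixed threshold keeps the sketch simple. You could instead keep only the top slice of tasks, in the spirit of the Pareto principle, and rerun the log after a week to compare results.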

Most of us say yes more often than we should, out of guilt or overextension, and because it's easier than saying no. Nobody likes being the bad guy.

Researchers split 120 students into two groups for a 2012 Journal of Consumer Research study. One group was trained to say “I can't” when discussing choices, while the other used “I don't”.

The students who told themselves “I can’t eat X” chose to eat the chocolate candy bar 61% of the time. Meanwhile, the students who told themselves “I don’t eat X” chose to eat the chocolate candy bars only 36% of the time. This simple change in terminology significantly improved the odds that each person would make a more healthy food choice.

Next time you need to say no, use “I don't” to make it easier to turn down unimportant things.

The 20-second rule is another wonderful way to avoid pursuits with little value. Add a 20-second roadblock to things you shouldn't do or bad habits you want to break. Delete social media apps from your phone so it takes you 20 seconds to find your laptop to access them. You'll be less likely to engage in a draining hobby or habit if you add an inconvenience.

Lower the activation energy for habits you want to adopt and raise it for habits you want to avoid. The more we can lower or even eliminate the activation energy for our desired actions, the more we enhance our ability to jump-start positive change. Source: The Happiness Advantage: The Seven Principles of Positive Psychology That Fuel Success and Performance at Work

3. Stop doing everything yourself and start letting people help you

I once managed a large community and couldn't do it alone. The community took over once I burned out. Members did better than I could have alone. I learned about community and user-generated content.

Consumers know what they want better than marketers do. Octoly says user-generated videos on YouTube are viewed 10 times more than brand-generated videos. 51% of Americans trust user-generated content more than a brand's official website (16%) or media coverage (22%). Marketers should seek help from their brand community.

Being a successful content marketer isn't about generating the best content, but cultivating a wonderful community.

We should ask for help when we need it. We can't do everything. It's best to delegate work so you can focus on the most critical things. Instead of overworking or doing everything alone, let others help.

Having friends or coworkers around can boost your productivity even if they can't help.

Just having friends nearby can push you toward productivity. “There’s a concept in ADHD treatment called the ‘body double,’ ” says David Nowell, Ph.D., a clinical neuropsychologist from Worcester, Massachusetts. “Distractable people get more done when there is someone else there, even if he isn’t coaching or assisting them.” If you’re facing a task that is dull or difficult, such as cleaning out your closets or pulling together your receipts for tax time, get a friend to be your body double. Source: Friendfluence: The Surprising Ways Friends Make Us Who We Are

4. Give up striving for perfection

Perfectionism hinders professors' research output. Dr. Simon Sherry, a psychology professor at Dalhousie University, studied perfectionism and productivity in professors and found a negative link between the two.

Perfectionism has its drawbacks. Perfectionists tend to fall into three traps:

They work on a task longer than necessary.

They delay and wait for the ideal moment. In business, if you wait until the time is right, you're already too late.

They pay too much attention to the details and miss the big picture.

Marketers who wait for the right time miss out.

The perfect moment is NOW.

5. Automate monotonous chores instead of continuing to do them.

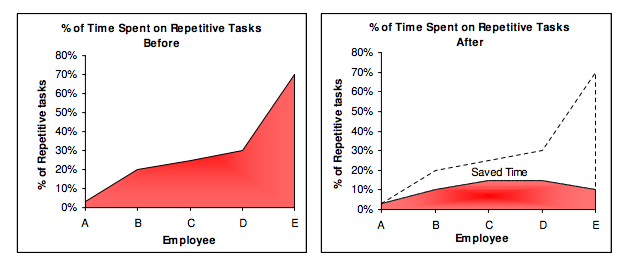

A team of five workers who spent 3%, 20%, 25%, 30%, and 70% of their time on repetitive tasks reduced their time spent to 3%, 10%, 15%, 15%, and 10% after two months of working to improve their productivity.
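The before-and-after figures above can be summarized with a little arithmetic. This Python sketch averages each worker's share of time spent on repetitive tasks. It then converts the shares into team hours per week. That conversion assumes every worker puts in a 40-hour week, which is an assumption because the article gives no hours.

```python
# Share of each worker's time spent on repetitive tasks, in percent (from the text).
before = [3, 20, 25, 30, 70]
after = [3, 10, 15, 15, 10]

HOURS_PER_WEEK = 40  # assumption: every worker puts in a 40-hour week


def average(values):
    """Arithmetic mean of a list of numbers."""
    return sum(values) / len(values)


def team_hours(shares, hours_per_week=HOURS_PER_WEEK):
    """Total weekly hours the team spends on repetitive work."""
    return sum(share / 100 * hours_per_week for share in shares)


avg_before, avg_after = average(before), average(after)
hours_saved = team_hours(before) - team_hours(after)

print(f"Average share: {avg_before:.1f}% -> {avg_after:.1f}%")
print(f"Relative drop: {(avg_before - avg_after) / avg_before:.0%}")
print(f"Team hours saved per week: {hours_saved:.1f}")
```

Under the 40-hour assumption, the average share falls from 29.6% to 10.6%. The team frees up about 38 hours a week, close to one full-time worker.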

Last week, I spent 15 minutes writing a Python program to generate content from Twitter API data and bulk-schedule it in Hootsuite. Automation has cut this task from a day to five minutes. Whenever I do something more than five times, I try to automate it.
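One way to sanity-check a rule like "automate it" is a break-even count: the number of runs until the time spent building the automation has been paid back. This Python sketch is an illustration, not the author's method. It assumes that "a day" means an 8-hour workday (480 minutes). The function name `break_even_runs` is hypothetical.

```python
import math


def break_even_runs(build_minutes, manual_minutes, automated_minutes):
    """Smallest number of runs after which automating has saved at least as
    much time as it took to build. Raises if automation saves nothing per run."""
    saved_per_run = manual_minutes - automated_minutes
    if saved_per_run <= 0:
        raise ValueError("automation must be faster than doing it by hand")
    return math.ceil(build_minutes / saved_per_run)


# Figures from the text: a 15-minute script turns a one-day task
# (assumed here to be an 8-hour, 480-minute workday) into a 5-minute one.
print(break_even_runs(build_minutes=15, manual_minutes=480, automated_minutes=5))

# A tool that takes longer to build, for a quicker chore, pays off more slowly.
print(break_even_runs(build_minutes=120, manual_minutes=10, automated_minutes=2))
```

The first case pays for itself on the very first run. The second only breaks even after 15 runs.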

You can automate monotonous chores without coding. Skills and resources are nice, but not required. If you can't build it, buy it.

People forget that time equals money. Manual work is easy and requires little research. You can moderate 30 Instagram photos for your UGC campaign by hand, but you need digital asset management software to manage 30,000 photos and videos from five platforms. Filemobile helps people produce more user-generated content. You can buy software to manage rich media and solve most online problems.

Hire an expert if you can't find a solution. You have to spend money to make money, and time is your most precious asset.

Marketers, browse GitHub or the Google Apps Script library. You can often find free, easy-to-use open source code.

6. Stop relying on intuition and start supporting your choices with data.

You can optimize your life the same way you optimize webpages for search engines.

Numerous studies might help you boost your productivity. Did you know individuals are most distracted from midday to 4 p.m.? This is what a Penn State psychology professor found. Even if you can't find data on a particular question, it's easy to run a split test and review your own results.

7. Stop working and spend some time doing absolutely nothing.

Most people don't know that being too focused can be destructive to our work or achievements. The Boston Globe's The Power of Lonely says solo time is excellent for the brain and spirit.

One ongoing Harvard study indicates that people form more lasting and accurate memories if they believe they’re experiencing something alone. Another indicates that a certain amount of solitude can make a person more capable of empathy towards others. And while no one would dispute that too much isolation early in life can be unhealthy, a certain amount of solitude has been shown to help teenagers improve their moods and earn good grades in school. Source: The Power of Lonely

Reflection is vital. We find solutions when we're not looking.

We don't become more productive overnight. It demands effort and practice. Waiting for change doesn't work. Instead, learn about your body and identify ways to optimize your energy and time for a happy existence.

Daniel Clery

3 years ago

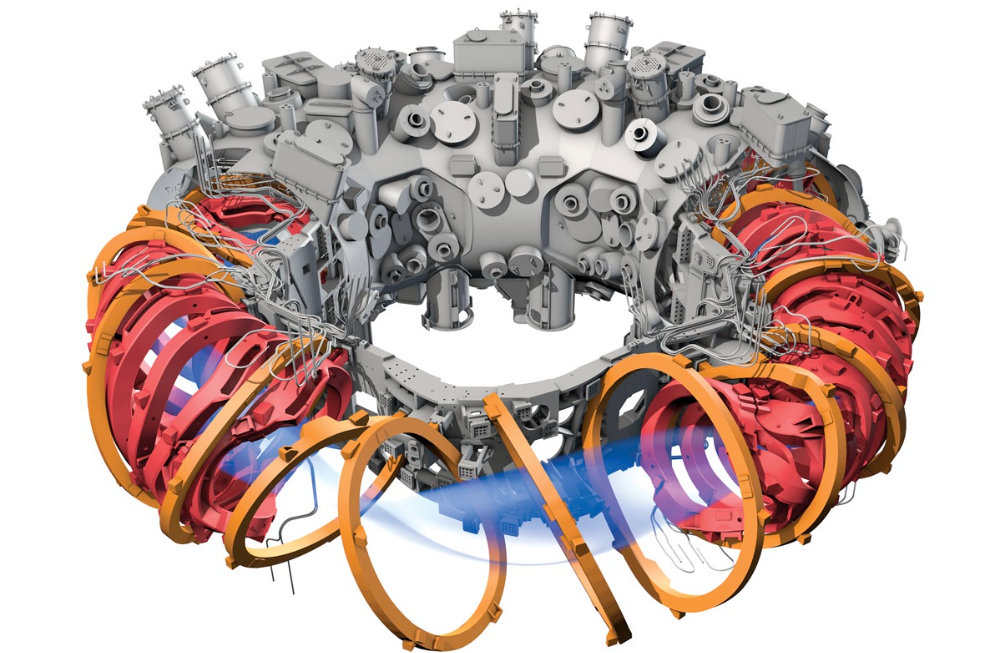

Twisted device investigates fusion alternatives

German stellarator revamped to run longer, hotter, compete with tokamaks

Tokamaks have dominated the search for fusion energy for decades. Just as ITER, the world's largest and most expensive tokamak, nears completion in southern France, a smaller, twistier testbed will start up in Germany.

If the 16-meter-wide stellarator can match or outperform similar-size tokamaks, fusion experts may rethink their future. Stellarators can keep their superhot gases stable enough to fuse nuclei and produce energy. They can theoretically run forever, but tokamaks must pause to reset their magnet coils.

The €1 billion German machine, Wendelstein 7-X (W7-X), is already getting "tokamak-like performance" in short runs, claims plasma physicist David Gates, keeping particles and heat from escaping the superhot gas. If W7-X can go long, "it will be ahead," he says. Eindhoven University of Technology theorist Josefine Proll says, "Stellarators are back in the game." A few startup companies, including one that Gates is leaving Princeton Plasma Physics Laboratory to join, are developing their own stellarators.

W7-X has been running at the Max Planck Institute for Plasma Physics (IPP) in Greifswald, Germany, since 2015, albeit only at low power and for brief runs. W7-X's developers took it down and replaced all inner walls and fittings with water-cooled equivalents, allowing for longer, hotter runs. The team reported at a W7-X board meeting last week that the revised plasma vessel has no leaks. It's expected to restart later this month to show if it can get plasma to fusion-igniting conditions.

Wendelstein 7-X's water-cooled inner surface allows for longer runs.

HOSAN/IPP

Both stellarators and tokamaks create magnetic cages for gas hot enough to melt metal. The gas is heated by microwaves or particle beams. Extreme temperatures create a plasma, a seething mix of separated nuclei and electrons, and cause the nuclei to fuse, releasing energy. A fusion power plant would use deuterium and tritium, which react most readily. Non-energy-generating research machines like W7-X avoid tritium and use hydrogen or deuterium instead.

Tokamaks and stellarators use electromagnetic coils to create plasma-confining magnetic fields. Because the field is stronger near the central hole of the doughnut-shaped vessel than at its outer edge, the plasma tends to drift toward the reactor's wall.

Tokamaks control this drift by driving an electric current through the plasma around the ring. The current creates a magnetic field that twists the field lines and stabilizes the ionized plasma. Stellarators instead twist the field with their magnetic coils, not with a plasma current. Once plasma physicists had powerful enough supercomputers, they could optimize stellarator magnets to improve plasma confinement.

W7-X is the first large, optimized stellarator, with 50 superconducting coils weighing 6 tons each. Its construction began in the mid-1990s and cost roughly twice the €550 million originally budgeted.

The wait hasn't disappointed researchers. "The machine operated immediately," says W7-X director Thomas Klinger. "It's a friendly machine. It did everything we asked." Tokamaks are prone to "instabilities" (plasma bulging or wobbling) or strong "disruptions," sometimes associated with halted plasma flow. IPP theorist Sophia Henneberg says stellarators don't rely on a plasma current, which "removes an entire branch" of instabilities.

In early stellarators, the magnetic field geometry drove slower particles to follow banana-shaped orbits until they collided with other particles and leaked energy. Gates believes W7-X's ability to suppress this effect implies its optimization works.

W7-X loses heat through different forms of turbulence, which push particles toward the wall. Theorists have only lately mastered simulating turbulence. W7-X's forthcoming campaign will test simulations and turbulence-fighting techniques.

A stellarator can run continuously, unlike a tokamak, which pulses. W7-X has run for 100 seconds, long by tokamak standards, but only at low power. The device's uncooled microwave and particle heating systems produced only 11.5 megawatts; the upgrade doubles the heating power. High temperature, high plasma density, and long runs will test stellarators' fusion power potential. Klinger wants to heat ions to 50 million degrees Celsius for 100 seconds. That would make W7-X "a world-class machine," he argues. Eventually, the team will push for 30-minute runs. "We'll move step-by-step," he says.

W7-X's success has inspired VCs to finance entrepreneurs creating commercial stellarators. Startups must simplify magnet production.

Princeton Stellarators, created by Gates and colleagues this year, has $3 million to build a prototype reactor without W7-X's twisted magnet coils. Instead, it will use a mosaic of 1000 square coils made of high-temperature superconductor (HTS) on the outside of the plasma vessel. By adjusting each coil's magnetic field, operators can change the shape of the applied field. "It moves coil complexity to the control system," Gates says. The company first intends to build a reactor that fuses cheap, abundant deuterium to produce neutrons for making radioisotopes. If that succeeds, it will go on to build a power reactor.

Renaissance Fusion, situated in Grenoble, France, raised €16 million and wants to coat plasma vessel segments in HTS. Using a laser, engineers will burn off superconductor tracks to carve magnet coils. They want to build a meter-long test segment in 2 years and a full prototype by 2027.

Type One Energy in Madison, Wisconsin, won DOE money to bend HTS cables for stellarator magnets. The company used computer-controlled etching equipment to carve twisting grooves in metal, into which the cables are wound. David Anderson of the University of Wisconsin, Madison, says advanced manufacturing technology is what makes these stellarators feasible.

Anderson said W7-X's next phase will boost stellarator work. “Half-hour discharges are steady-state,” he says. “This is a big deal.”

Rachel Greenberg

3 years ago

6 Causes Your Sales Pitch Is Unintentionally Repulsing Customers

Skip this if you don't want to discover why your lively, no-brainer pitch isn't making $10k a month.

You don't want to be repulsive as an entrepreneur or anyone else. Making friends, influencing people, and converting strangers into customers will be difficult if your words evoke disgust, distrust, or disrespect. You may be one of many entrepreneurs who do this obliviously and involuntarily.

I've had to master selling my skills to recruiters (to land 6-figure jobs on Wall Street), selling companies to buyers in M&A transactions, and selling my own companies' products to strangers-turned-customers. I probably committed every cardinal sin of sales repulsion before realizing it was me or my poor salesmanship strategy.

If you're launching a new business, frustrated by low conversion rates, or just curious if you're repelling customers, read on to identify (and avoid) the 6 fatal errors that can kill any sales pitch.

1. The first indication

So many people fumble before they even speak because they assume their role is to convince the buyer. In other words, they expect to pressure, arm-twist, and fight objections until the buyer converts. In actuality, the approach stinks, and emotionally aware buyers feel "gross" immediately.

Instead of trying to persuade a customer to buy, ask questions that will lead them to do so on their own. When a customer discovers your product or service on their own, they need less outside persuasion. Why not position your offer in a way that leads customers to sell themselves on it?

2. A flawless performance

Are you memorizing a sales script, tweaking video testimonials, and expunging historical blemishes before hitting "publish" on your new campaign? If so, you may be hurting your conversion rate.

Perfection may be a step too far and cause prospects to mistrust your sincerity. Become a great conversationalist to boost your sales. Seriously. Being charismatic is hard without being genuine and showing a little vulnerability.

People like vulnerability, even if it dents your perfect facade. Show the customer's stuttering testimonial. Open up about your or your company's past mistakes (and how you've since improved). Make your sales pitch a two-way conversation. Let the customer talk about themselves to build rapport. Real people sell, not canned scripts and movie-trailer testimonials.

If marketing or sales calls feel like a performance, you may be doing something wrong or leaving money on the table.

3. Your greatest phobia

Three minutes into prospect calls, I'd start sweating. I talked a hundred miles per hour, covering as many bases as possible to avoid the ones I feared. I knew my then-offering was inadequate and that my firm had weaknesses I hadn't addressed. So I word-vomited facts, features, and everything else to avoid the customer's concerns.

Did my prospects know I was insecure? Maybe not, but it added an unnecessary and unhelpful layer of paranoia that kept me stressed, rushed, and on edge instead of connecting with the prospect. Skirting around a company, product, or service's flaws or objections is a poor, temporary, lazy (and cowardly) decision.

How can you project confidence and trust if you're afraid? Before you make another sales call, face your shortcomings, weak points, and objections. Your company won't be everyone's cup of tea, but you should have answers to every question or objection. You should be your business's top spokesperson and defender.

4. The unintentional apologies

Have you ever begged for a sale? I'll assume not, but you may be unknowingly giving off apologetic, inferior, insecure energy.

Young founders, first-time entrepreneurs, and those with severe imposter syndrome may put their target customer on a pedestal. For obvious reasons, this is common when trying to land your first customers.

Since you're truly new at this, you naturally lack experience.

You don't have the self-confidence boost of hundreds or thousands of closed deals or satisfied client results to remind you that your product or service is worthwhile.

Getting those initial few clients seems like the most difficult task, as if doing so will decide the fate of your company as a whole (it probably won't, and you shouldn't actually place that much emphasis on any one transaction).

Customers can smell fear, insecurity, and anxiety just like they can smell B.S. If you believe your product or service improves clients' lives, selling it should feel like a benevolent act of service, not a sleazy money-grab. If you're a sincere entrepreneur, prospects will believe your proposition; if you're apprehensive, they'll notice.

Approach every sale as if you're fine with or without it. This has improved my salesmanship, marketing skills, and mental health. When you put pressure on yourself to close a sale or convince a difficult prospect "or else" (your company will fail, your rent will be late, your electricity will be cut), you emit desperation and lower the quality of your pitch. There's no point.

5. The endless promises

We've all read a million times how to answer or rebut prospects' objections and add extra incentives to speed up or secure the close. But some objections shouldn't be refuted. What if I told you not to offer certain incentives, bonuses, and promises? What if I told you to walk away from some prospects, even if it means missing your sales goal?

If you market to enough people, make enough sales calls, or grow enough companies, you'll encounter prospects who can't be satisfied. These prospects have endless questions, concerns, and requests for more, more, more that you'll never satisfy. These people are a distraction, a resource drain, and a test of your ability to cut losses before they erode your sanity and profit margin.

To appease or convert these insatiably needy, greedy Nellies into customers, you may agree to every request and demand, even if you can't follow through. Once you overpromise and try to plug every hole they poke, their trust in you may wane quickly.

Telling a prospect what you can't do takes courage and integrity. If you're honest, upfront, and willing to admit when a product or service isn't right for the customer, you'll gain respect and positive customer experiences. Sometimes honesty is the most refreshing pitch and the deal-closer.

6. No matter what

Have you ever said, "I'll do anything to close this sale"? If so, you've probably already been disqualified. If a prospective customer haggles over a price, requests a discount, or continues to wear you down after you've made three concessions too many, you're the one with the hook in your mouth, not them, and it may not end well. Why?

If you're so eager to make a deal that you cut prices, comp services, extend payment plans, waive fees, etc., you undermine your own claim that your product or service is worth the stated price. Prospects start to wonder whether anyone actually pays those prices, whether you've ever had a customer (who wasn't a blood relative), and whether you're legitimate or worth your rates.

Once a prospect senses that you'll do whatever it takes to get them to buy, their suspicions rise and they wonder why.

Are you cutting prices because something is wrong with you or your service?

Why are you so desperate for their sale?

Why aren't more customers lining up to pay your prices, and if they aren't, what on earth is this prospect doing here?

That's what a prospect thinks when you reveal your lack of conviction, desperation, and willingness to give up control. Some prospects will exploit it to drain you dry, while others will be too frightened to buy from you even if you paid them.

Walk down a two-way street. Be casual.

If we trace each of these repellent behaviors back to an underlying unease, fear, misperception, or impulse, it's evident that these sales and marketing disasters were forced communications: stiff, imbalanced, divisive, combative, full of bravado, and desperate. They were unnatural, setting up a power struggle between two sparring, suspicious, unequal warriors rather than a harmonious meeting of two natural but opposite parties shaking hands.

Sales should be natural and harmonious. Sales should feel good for both parties, not like one party is having their arm twisted.

You may be doing sales wrong if it feels repulsive, icky, or degrading. If you're thinking cringeworthy thoughts about yourself, your product or service, or your sales pitch, imagine what you're projecting to prospects. Don't make it unpleasant, repulsive, or cringeworthy.