How a $300K Bored Ape Yacht Club NFT was accidentally sold for $3K

The Bored Ape Yacht Club is one of the most prestigious NFT collections in the world. A collection of 10,000 NFTs, each depicting an ape with different traits and visual attributes, Jimmy Fallon, Steph Curry and Post Malone are among their star-studded owners. Right now the price of entry is 52 ether, or $210,000.

Which is why it's so painful to see that someone accidentally sold their Bored Ape NFT for $3,066.

Unusual trades are often a sign of funny business, as in the case of the person who spent $530 million to buy an NFT from themselves. In Saturday's case, the cause was a simple, devastating "fat-finger error." That's when people make a trade online for the wrong thing, or for the wrong amount. Here the owner, real name Max or username maxnaut, meant to list his Bored Ape for 75 ether, or around $300,000. Instead he accidentally listed it for 0.75. One hundredth the intended price.

It was bought instantaneously. The buyer paid an extra $34,000 to speed up the transaction, ensuring no one could snap it up before them. The Bored Ape was then promptly listed for $248,000. The transaction appears to have been done by a bot, which can be coded to immediately buy NFTs listed below a certain price on behalf of their owners in order to take advantage of these exact situations.

"How'd it happen? A lapse of concentration I guess," Max told me. "I list a lot of items every day and just wasn't paying attention properly. I instantly saw the error as my finger clicked the mouse but a bot sent a transaction with over 8 eth [$34,000] of gas fees so it was instantly sniped before I could click cancel, and just like that, $250k was gone."

"And here within the beauty of the Blockchain you can see that it is both honest and unforgiving," he added.

Fat finger trades happen sporadically in traditional finance -- like the Japanese trader who almost bought 57% of Toyota's stock in 2014 -- but most financial institutions will stop those transactions if alerted quickly enough. Since cryptocurrency and NFTs are designed to be decentralized, you essentially have to rely on the goodwill of the buyer to reverse the transaction.

Fat finger errors in cryptocurrency trades have made many a headline over the past few years. Back in 2019, the company behind Tether, a cryptocurrency pegged to the US dollar, nearly doubled its own coin supply when it accidentally created $5 billion-worth of new coins. In March, BlockFi meant to send 700 Gemini Dollars to a set of customers, worth roughly $1 each, but mistakenly sent out millions of dollars worth of bitcoin instead. Last month a company erroneously paid a $24 million fee on a $100,000 transaction.

Similar incidents are increasingly being seen in NFTs, now that many collections have accumulated in market value over the past year. Last month someone tried selling a CryptoPunk NFT for $19 million, but accidentally listed it for $19,000 instead. Back in August, someone fat finger listed their Bored Ape for $26,000, an error that someone else immediately capitalized on. The original owner offered $50,000 to the buyer to return the Bored Ape -- but instead the opportunistic buyer sold it for the then-market price of $150,000.

"The industry is so new, bad things are going to happen whether it's your fault or the tech," Max said. "Once you no longer have control of the outcome, forget and move on."

The Bored Ape Yacht Club launched back in April 2021, with 10,000 NFTs being sold for 0.08 ether each -- about $190 at the time. While NFTs are often associated with individual digital art pieces, collections like the Bored Ape Yacht Club, which allow owners to flaunt their NFTs by using them as profile pictures on social media, are becoming increasingly prevalent. The Bored Ape Yacht Club has since become the second biggest NFT collection in the world, second only to CryptoPunks, which launched in 2017 and is considered the "original" NFT collection.

More on Web3 & Crypto

TheRedKnight

2 years ago

Say goodbye to Ponzi yields - A new era of decentralized perpetual

Decentralized perpetual may be the next crypto market boom; with tons of perpetual popping up, let's look at two protocols that offer organic, non-inflationary yields.

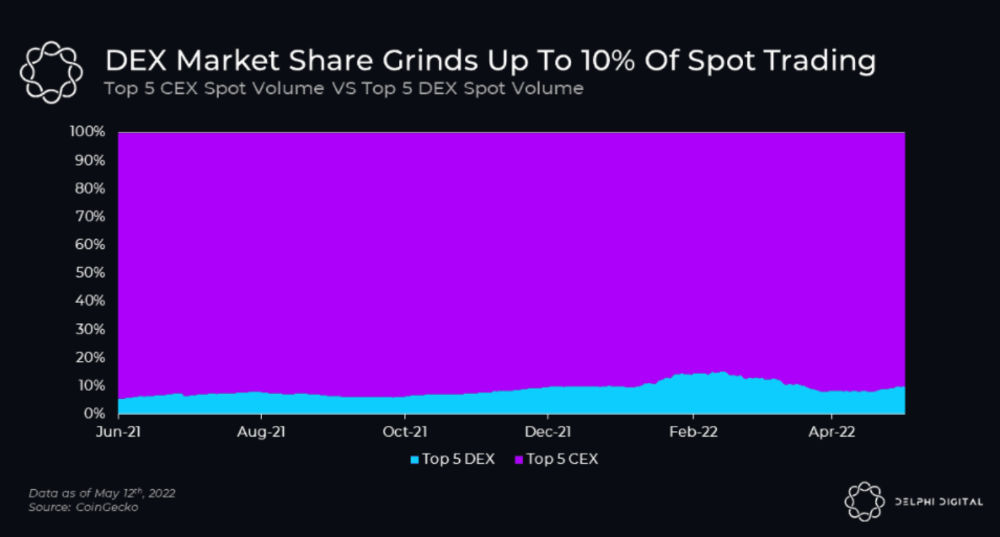

Decentralized derivatives exchanges' market share has increased tenfold in a year, but it's still 2% of CEXs'. DEXs have a long way to go before they can compete with centralized exchanges in speed, liquidity, user experience, and composability.

I'll cover gains.trade and GMX protocol in Polygon, Avalanche, and Arbitrum. Both protocols support leveraged perpetual crypto, stock, and Forex trading.

Why these protocols?

Decentralized GMX Gains protocol

Organic yield: path to sustainability

I've never trusted Defi's non-organic yields. Example: XYZ protocol. 20–75% of tokens may be set aside as farming rewards to provide liquidity, according to tokenomics.

Say you provide ETH-USDC liquidity. They advertise a 50% APR reward for this pair, 10% from trading fees and 40% from farming rewards. Only 10% is real, the rest is "Ponzi." The "real" reward is in protocol tokens.

Why keep this token? Governance voting or staking rewards are promoted services.

Most liquidity providers expect compensation for unused tokens. Basic psychological principles then? — Profit.

Nobody wants governance tokens. How many out of 100 care about the protocol's direction and will vote?

Staking increases your token's value. Currently, they're mostly non-liquid. If the protocol is compromised, you can't withdraw funds. Most people are sceptical of staking because of this.

"Free tokens," lack of use cases, and skepticism lead to tokens moving south. No farming reward protocols have lasted.

It may have shown strength in a bull market, but what about a bear market?

What is decentralized perpetual?

A perpetual contract is a type of futures contract that doesn't expire. So one can hold a position forever.

You can buy/sell any leveraged instruments (Long-Short) without expiration.

In centralized exchanges like Binance and coinbase, fees and revenue (liquidation) go to the exchanges, not users.

Users can provide liquidity that traders can use to leverage trade, and the revenue goes to liquidity providers.

Gains.trade and GMX protocol are perpetual trading platforms with a non-inflationary organic yield for liquidity providers.

GMX protocol

GMX is an Arbitrum and Avax protocol that rewards in ETH and Avax. GLP uses a fast oracle to borrow the "true price" from other trading venues, unlike a traditional AMM.

GLP and GMX are protocol tokens. GLP is used for leveraged trading, swapping, etc.

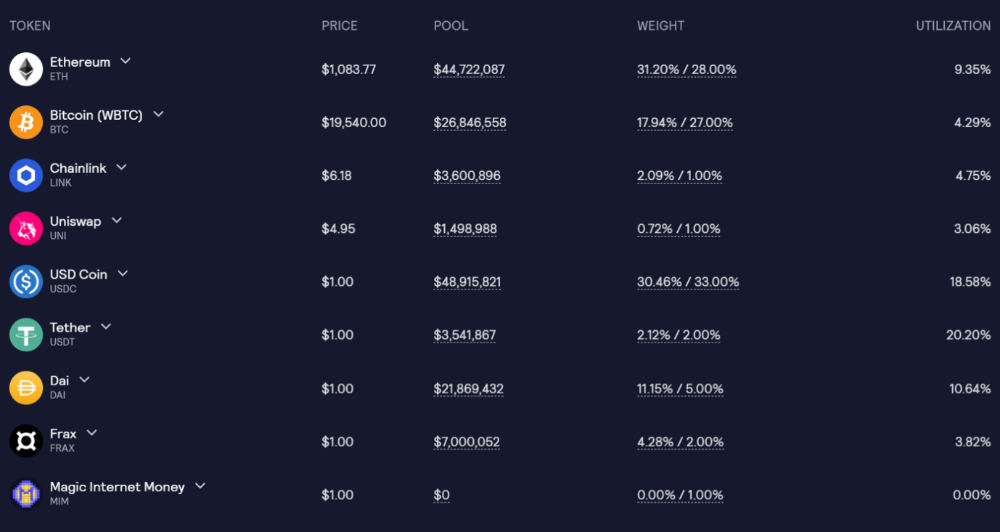

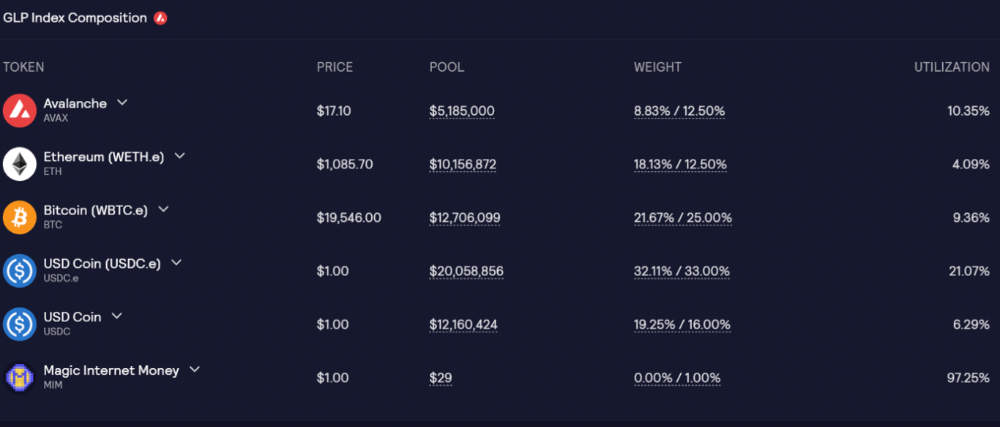

GLP is a basket of tokens, including ETH, BTC, AVAX, stablecoins, and UNI, LINK, and Stablecoins.

GLP composition on arbitrum

GLP composition on Avalanche

GLP token rebalances based on usage, providing liquidity without loss.

Protocol "runs" on Staking GLP. Depending on their chain, the protocol will reward users with ETH or AVAX. Current rewards are 22 percent (15.71 percent in ETH and the rest in escrowed GMX) and 21 percent (15.72 percent in AVAX and the rest in escrowed GMX). escGMX and ETH/AVAX percentages fluctuate.

Where is the yield coming from?

Swap fees, perpetual interest, and liquidations generate yield. 70% of fees go to GLP stakers, 30% to GMX. Organic yields aren't paid in inflationary farm tokens.

Escrowed GMX is vested GMX that unlocks in 365 days. To fully unlock GMX, you must farm the Escrowed GMX token for 365 days. That means less selling pressure for the GMX token.

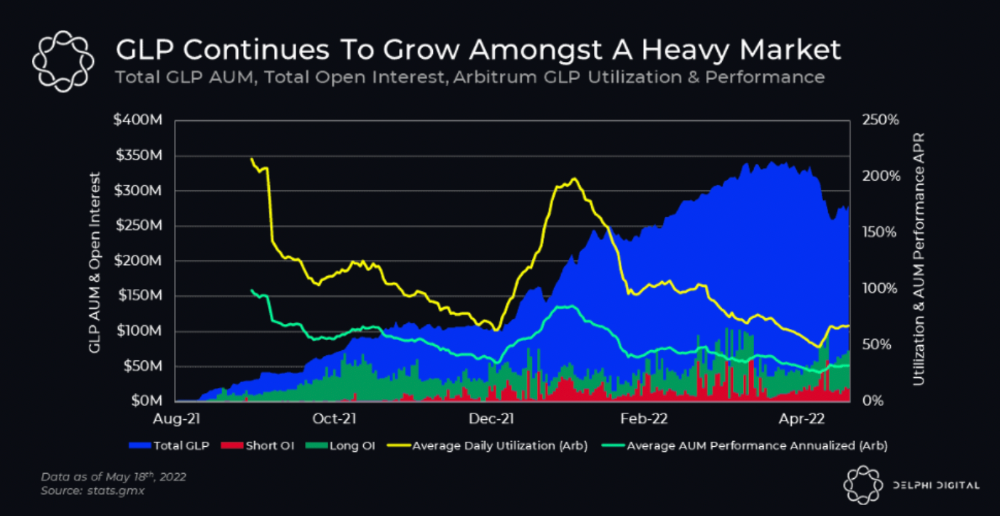

GMX's status

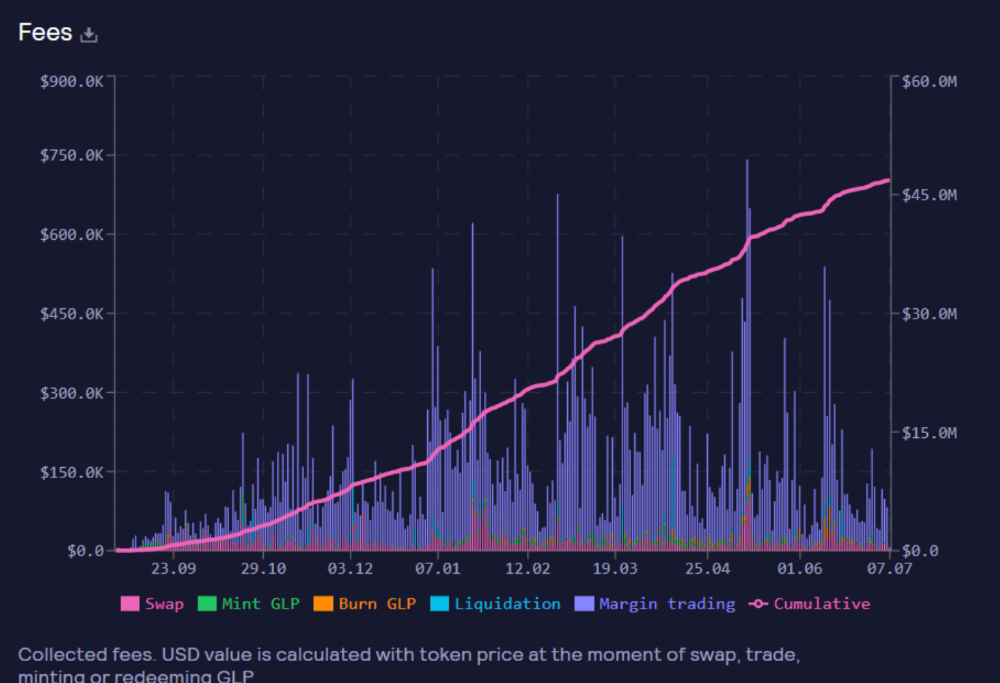

These are the fees in Arbitrum in the past 11 months by GMX.

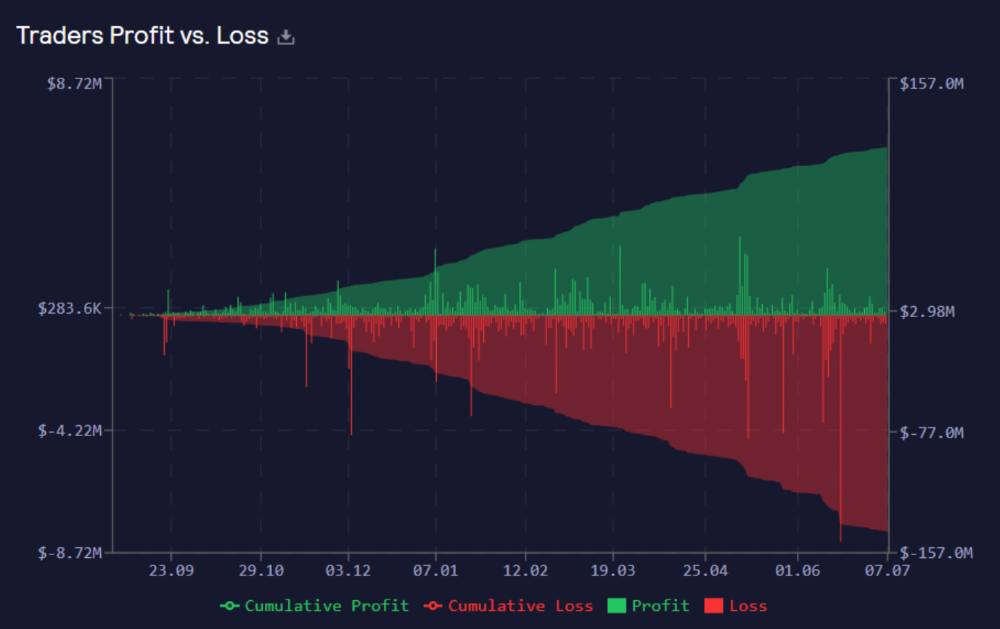

GMX works like a casino, which increases fees. Most fees come from Margin trading, which means most traders lose money; this money goes to the casino, or GLP stakers.

Strategies

My personal strategy is to DCA into GLP when markets hit bottom and stake it; GLP will be less volatile with extra staking rewards.

GLP YoY return vs. naked buying

Let's say I invested $10,000 in BTC, AVAX, and ETH in January.

BTC price: 47665$

ETH price: 3760$

AVAX price: $145

Current prices

BTC $21,000 (Down 56 percent )

ETH $1233 (Down 67.2 percent )

AVAX $20.36 (Down 85.95 percent )

Your $10,000 investment is now worth around $3,000.

How about GLP? My initial investment is 50% stables and 50% other assets ( Assuming the coverage ratio for stables is 50 percent at that time)

Without GLP staking yield, your value is $6500.

Let's assume the average APR for GLP staking is 23%, or $1500. So 8000$ total. It's 50% safer than holding naked assets in a bear market.

In a bull market, naked assets are preferable to GLP.

Short farming using GLP

Simple GLP short farming.

You use a stable asset as collateral to borrow AVAX. Sell it and buy GLP. Even if GLP rises, it won't rise as fast as AVAX, so we can get yields.

Let's do the maths

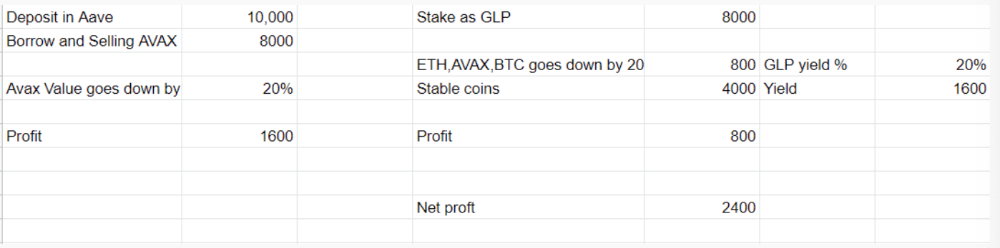

You deposit $10,000 USDT in Aave and borrow Avax. Say you borrow $8,000; you sell it, buy GLP, and risk 20%.

After a year, ETH, AVAX, and BTC rise 20%. GLP is $8800. $800 vanishes. 20% yields $1600. You're profitable. Shorting Avax costs $1600. (Assumptions-ETH, AVAX, BTC move the same, GLP yield is 20%. GLP has a 50:50 stablecoin/others ratio. Aave won't liquidate

In naked Avax shorting, Avax falls 20% in a year. You'll make $1600. If you buy GLP and stake it using the sold Avax and BTC, ETH and Avax go down by 20% - your profit is 20%, but with the yield, your total gain is $2400.

Issues with GMX

GMX's historical funding rates are always net positive, so long always pays short. This makes long-term shorts less appealing.

Oracle price discovery isn't enough. This limitation doesn't affect Bitcoin and ETH, but it affects less liquid assets. Traders can buy and sell less liquid assets at a lower price than their actual cost as long as GMX exists.

As users must provide GLP liquidity, adding more assets to GMX will be difficult. Next iteration will have synthetic assets.

Gains Protocol

Best leveraged trading platform. Smart contract-based decentralized protocol. 46 crypto pairs can be leveraged 5–150x and 10 Forex pairs 5–1000x. $10 DAI @ 150x (min collateral x leverage pos size is $1500 DAI). No funding fees, no KYC, trade DAI from your wallet, keep funds.

DAI single-sided staking and the GNS-DAI pool are important parts of Gains trading. GNS-DAI stakers get 90% of trading fees and 100% swap fees. 10 percent of trading fees go to DAI stakers, which is currently 14 percent!

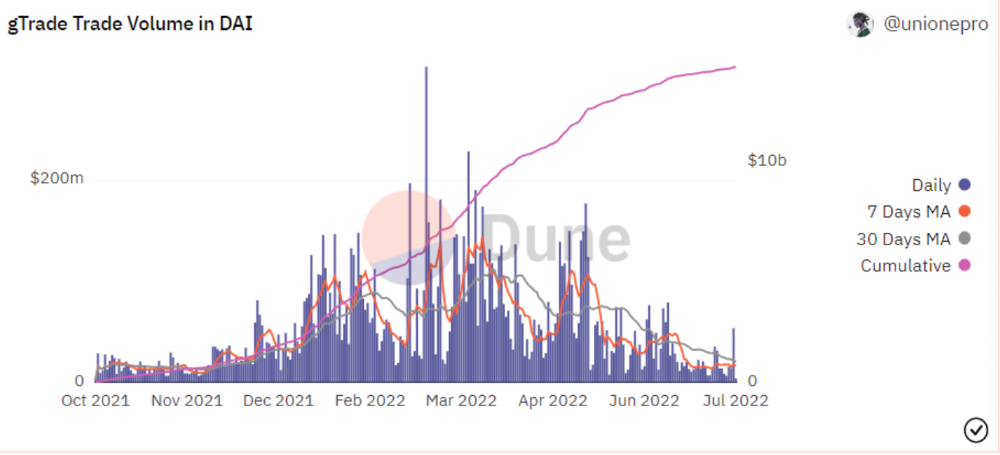

Trade volume

When a trader opens a trade, the leverage and profit are pulled from the DAI pool. If he loses, the protocol yield goes to the stakers.

If the trader's win rate is high and the DAI pool slowly depletes, the GNS token is minted and sold to refill DAI. Trader losses are used to burn GNS tokens. 25%+ of GNS is burned, making it deflationary.

Due to high leverage and volatility of crypto assets, most traders lose money and the protocol always wins, keeping GNS deflationary.

Gains uses a unique decentralized oracle for price feeds, which is better for leverage trading platforms. Let me explain.

Gains uses chainlink price oracles, not its own price feeds. Chainlink oracles only query centralized exchanges for price feeds every minute, which is unsuitable for high-precision trading.

Gains created a custom oracle that queries the eight chainlink nodes for the current price and, on average, for trade confirmation. This model eliminates every-second inquiries, which waste gas but are more efficient than chainlink's per-minute price.

This price oracle helps Gains open and close trades instantly, eliminate scam wicks, etc.

Other benefits include:

Stop-loss guarantee (open positions updated)

No scam wicks

Spot-pricing

Highest possible leverage

Fixed-spreads. During high volatility, a broker can increase the spread, which can hit your stop loss without the price moving.

Trade directly from your wallet and keep your funds.

>90% loss before liquidation (Some platforms liquidate as little as -50 percent)

KYC-free

Directly trade from wallet; keep funds safe

Further improvements

GNS-DAI liquidity providers fear the impermanent loss, so the protocol is migrating to its own liquidity and single staking GNS vaults. This allows users to stake GNS without permanent loss and obtain 90% DAI trading fees by staking. This starts in August.

Their upcoming improvements can be found here.

Gains constantly add new features and change pairs. It's an interesting protocol.

Conclusion

Next bull run, watch decentralized perpetual protocols. Effective tokenomics and non-inflationary yields may attract traders and liquidity providers. But still, there is a long way for them to develop, and I don't see them tackling the centralized exchanges any time soon until they fix their inherent problems and improve fast enough.

Read the full post here.

Faisal Khan

1 year ago

4 typical methods of crypto market manipulation

Market fraud

Due to its decentralized and fragmented character, the crypto market has integrity difficulties.

Cryptocurrencies are an immature sector, therefore market manipulation becomes a bigger issue. Many research have attempted to uncover these abuses. CryptoCompare's newest one highlights some of the industry's most typical scams.

Why are these concerns so common in the crypto market? First, even the largest centralized exchanges remain unregulated due to industry immaturity. A low-liquidity market segment makes an attack more harmful. Finally, market surveillance solutions not implemented reduce transparency.

In CryptoCompare's latest exchange benchmark, 62.4% of assessed exchanges had a market surveillance system, although only 18.1% utilised an external solution. To address market integrity, this measure must improve dramatically. Before discussing the report's malpractices, note that this is not a full list of attacks and hacks.

Clean Trading

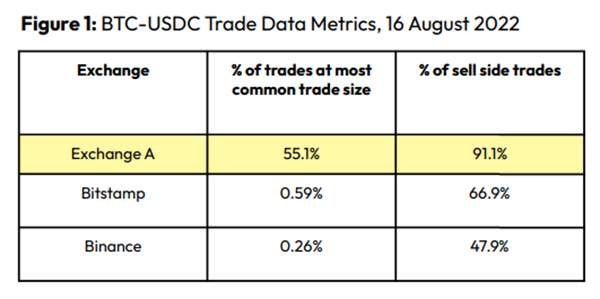

An investor buys and sells concurrently to increase the asset's price. Centralized and decentralized exchanges show this misconduct. 23 exchanges have a volume-volatility correlation < 0.1 during the previous 100 days, according to CryptoCompares. In August 2022, Exchange A reported $2.5 trillion in artificial and/or erroneous volume, up from $33.8 billion the month before.

Spoofing

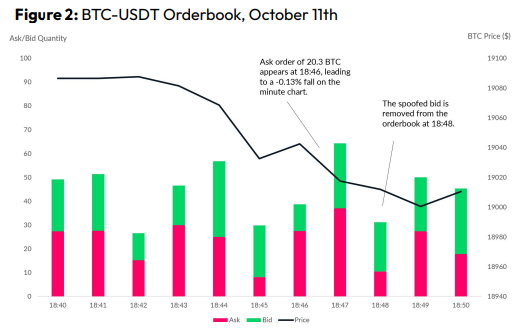

Criminals create and cancel fake orders before they can be filled. Since manipulators can hide in larger trading volumes, larger exchanges have more spoofing. A trader placed a 20.8 BTC ask order at $19,036 when BTC was trading at $19,043. BTC declined 0.13% to $19,018 in a minute. At 18:48, the trader canceled the ask order without filling it.

Front-Running

Most cryptocurrency front-running involves inside trading. Traditional stock markets forbid this. Since most digital asset information is public, this is harder. Retailers could utilize bots to front-run.

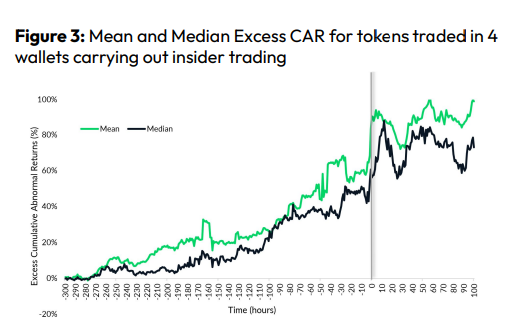

CryptoCompare found digital wallets of people who traded like insiders on exchange listings. The figure below shows excess cumulative anomalous returns (CAR) before a coin listing on an exchange.

Finally, LAYERING is a sequence of spoofs in which successive orders are put along a ladder of greater (layering offers) or lower (layering bids) values. The paper concludes with recommendations to mitigate market manipulation. Exchange data transparency, market surveillance, and regulatory oversight could reduce manipulative tactics.

rekt

3 years ago

LCX is the latest CEX to have suffered a private key exploit.

The attack began around 10:30 PM +UTC on January 8th.

Peckshield spotted it first, then an official announcement came shortly after.

We’ve said it before; if established companies holding millions of dollars of users’ funds can’t manage their own hot wallet security, what purpose do they serve?

The Unique Selling Proposition (USP) of centralised finance grows smaller by the day.

The official incident report states that 7.94M USD were stolen in total, and that deposits and withdrawals to the platform have been paused.

LCX hot wallet: 0x4631018f63d5e31680fb53c11c9e1b11f1503e6f

Hacker’s wallet: 0x165402279f2c081c54b00f0e08812f3fd4560a05

Stolen funds:

- 162.68 ETH (502,671 USD)

- 3,437,783.23 USDC (3,437,783 USD)

- 761,236.94 EURe (864,840 USD)

- 101,249.71 SAND Token (485,995 USD)

- 1,847.65 LINK (48,557 USD)

- 17,251,192.30 LCX Token (2,466,558 USD)

- 669.00 QNT (115,609 USD)

- 4,819.74 ENJ (10,890 USD)

- 4.76 MKR (9,885 USD)

**~$1M worth of $LCX remains in the address, along with 611k EURe which has been frozen by Monerium.

The rest, a total of 1891 ETH (~$6M) was sent to Tornado Cash.**

Why can’t they keep private keys private?

Is it really that difficult for a traditional corporate structure to maintain good practice?

CeFi hacks leave us with little to say - we can only go on what the team chooses to tell us.

Next time, they can write this article themselves.

See below for a template.

You might also like

Tim Soulo

2 years ago

Here is why 90.63% of Pages Get No Traffic From Google.

The web adds millions or billions of pages per day.

How much Google traffic does this content get?

In 2017, we studied 2 million randomly-published pages to answer this question. Only 5.7% of them ranked in Google's top 10 search results within a year of being published.

94.3 percent of roughly two million pages got no Google traffic.

Two million pages is a small sample compared to the entire web. We did another study.

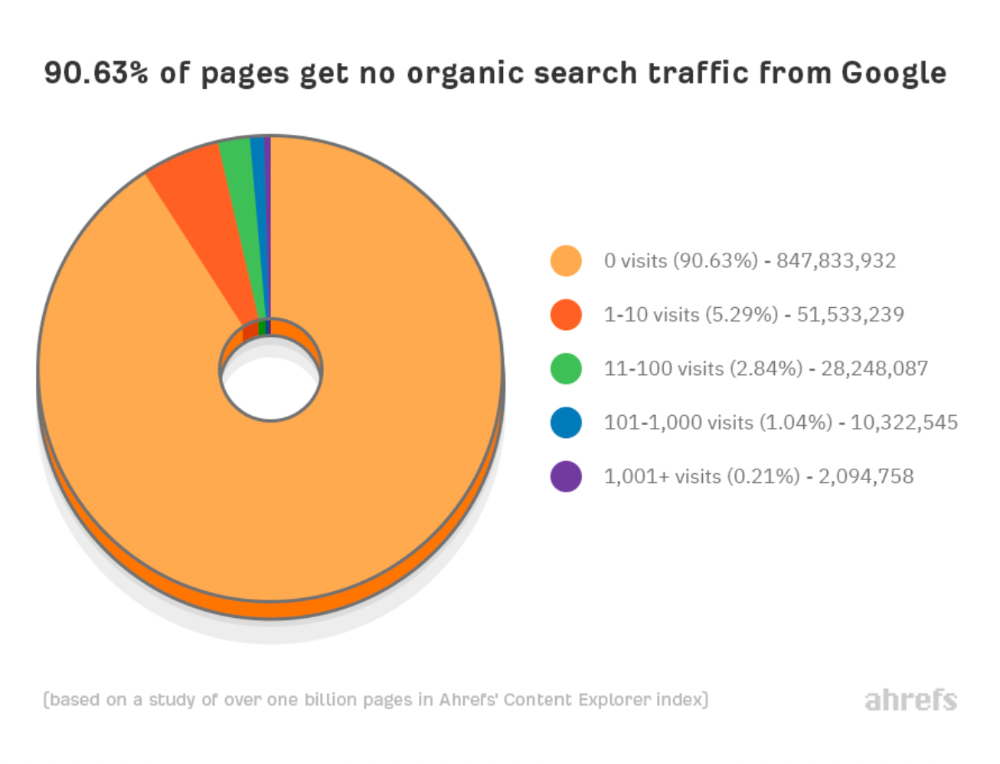

We analyzed over a billion pages to see how many get organic search traffic and why.

How many pages get search traffic?

90% of pages in our index get no Google traffic, and 5.2% get ten visits or less.

90% of google pages get no organic traffic

How can you join the minority that gets Google organic search traffic?

There are hundreds of SEO problems that can hurt your Google rankings. If we only consider common scenarios, there are only four.

Reason #1: No backlinks

I hate to repeat what most SEO articles say, but it's true:

Backlinks boost Google rankings.

Google's "top 3 ranking factors" include them.

Why don't we divide our studied pages by the number of referring domains?

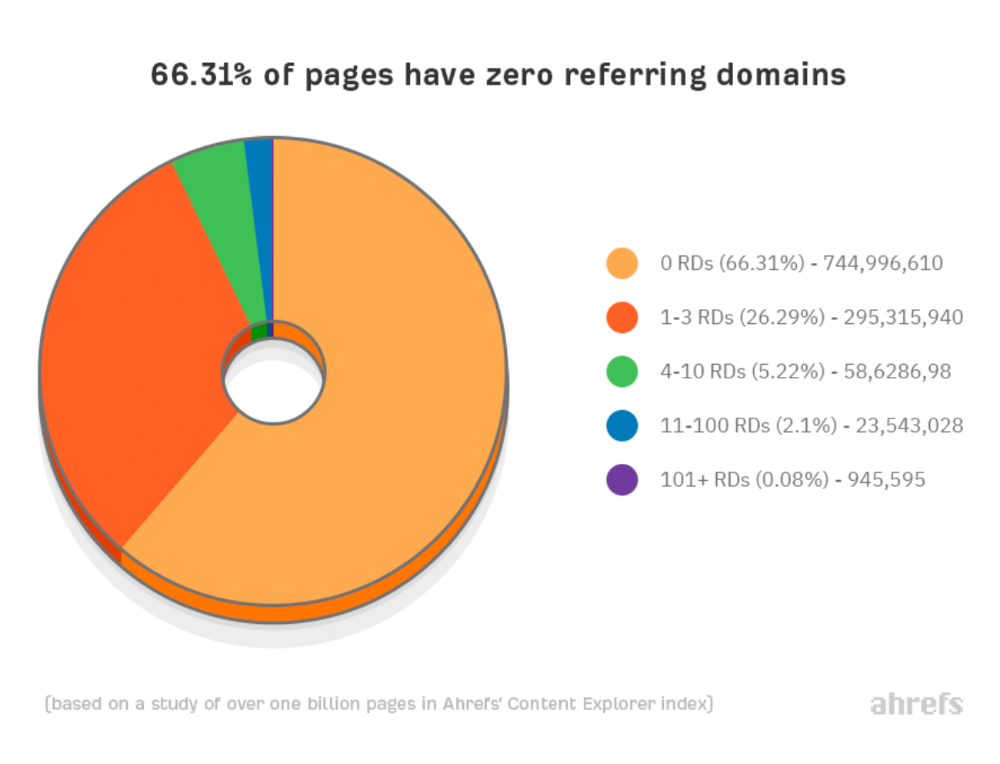

66.31 percent of pages have no backlinks, and 26.29 percent have three or fewer.

Did you notice the trend already?

Most pages lack search traffic and backlinks.

But are these the same pages?

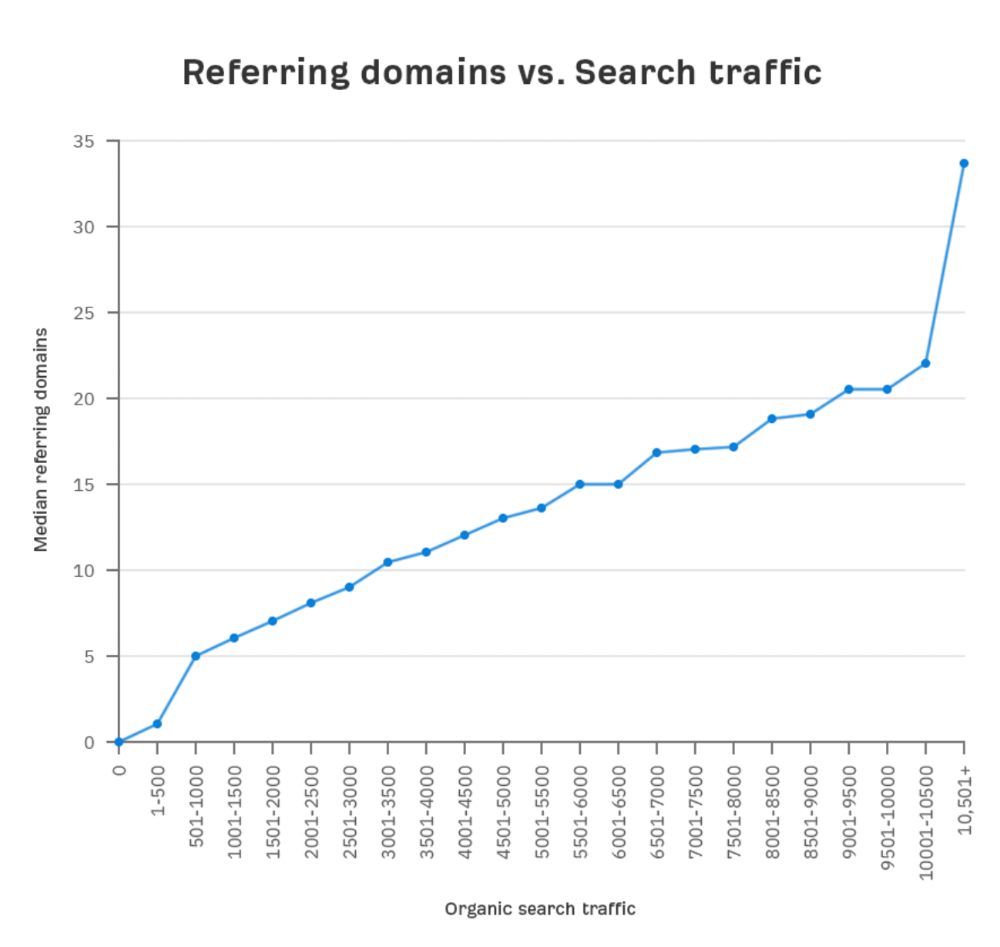

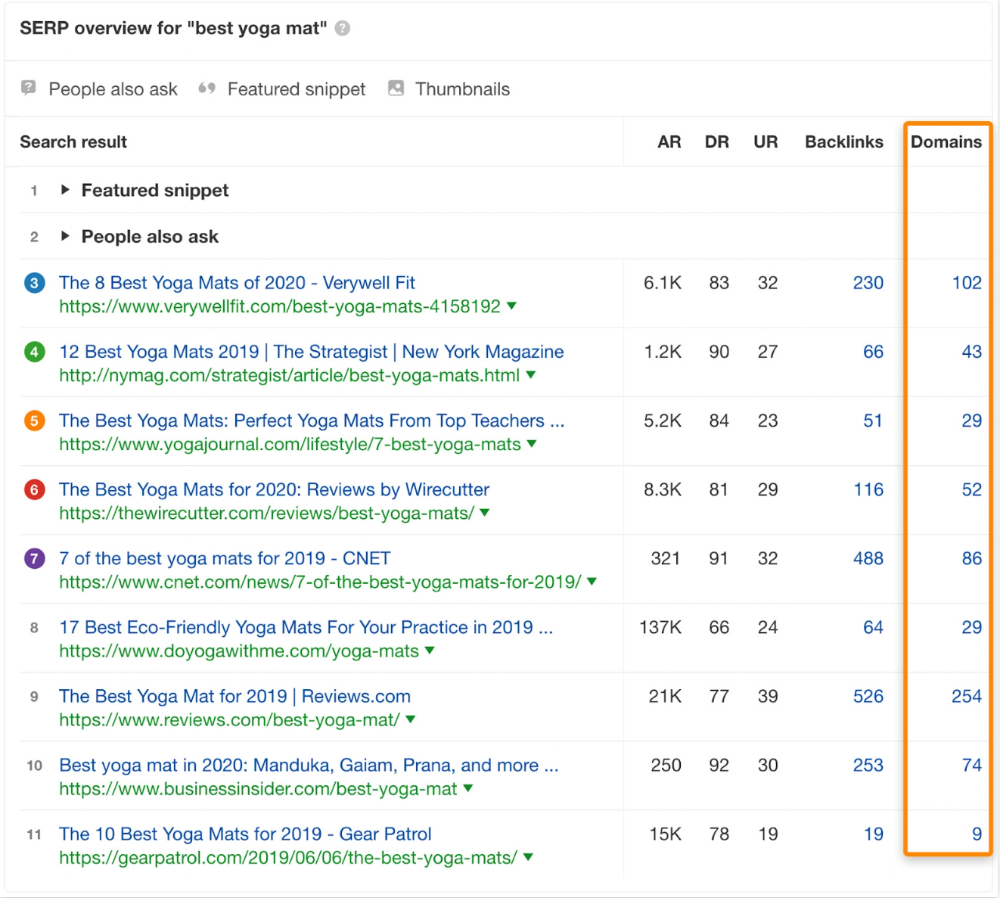

Let's compare monthly organic search traffic to backlinks from unique websites (referring domains):

More backlinks equals more Google organic traffic.

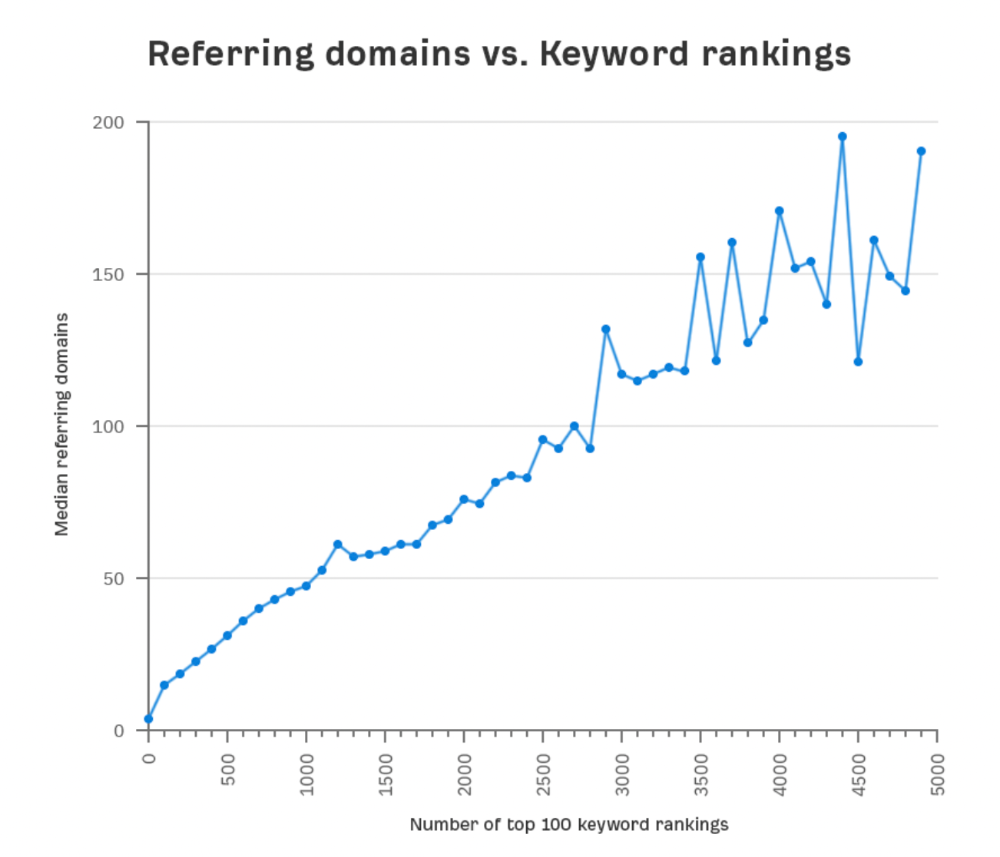

Referring domains and keyword rankings are correlated.

It's important to note that correlation does not imply causation, and none of these graphs prove backlinks boost Google rankings. Most SEO professionals agree that it's nearly impossible to rank on the first page without backlinks.

You'll need high-quality backlinks to rank in Google and get search traffic.

Is organic traffic possible without links?

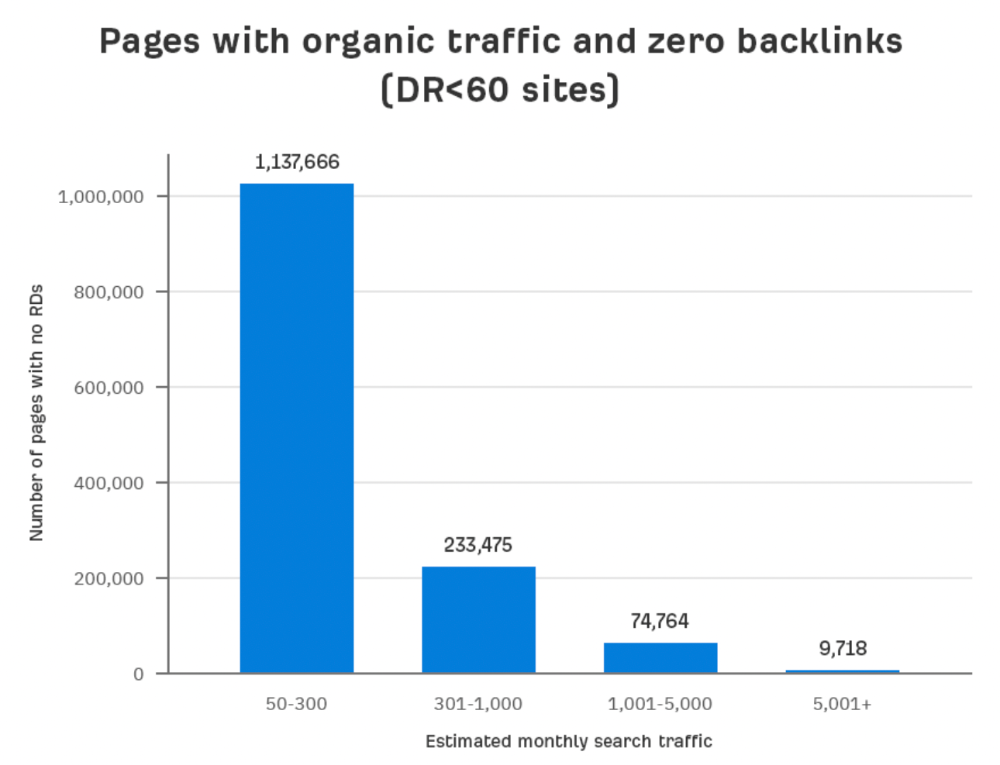

Here are the numbers:

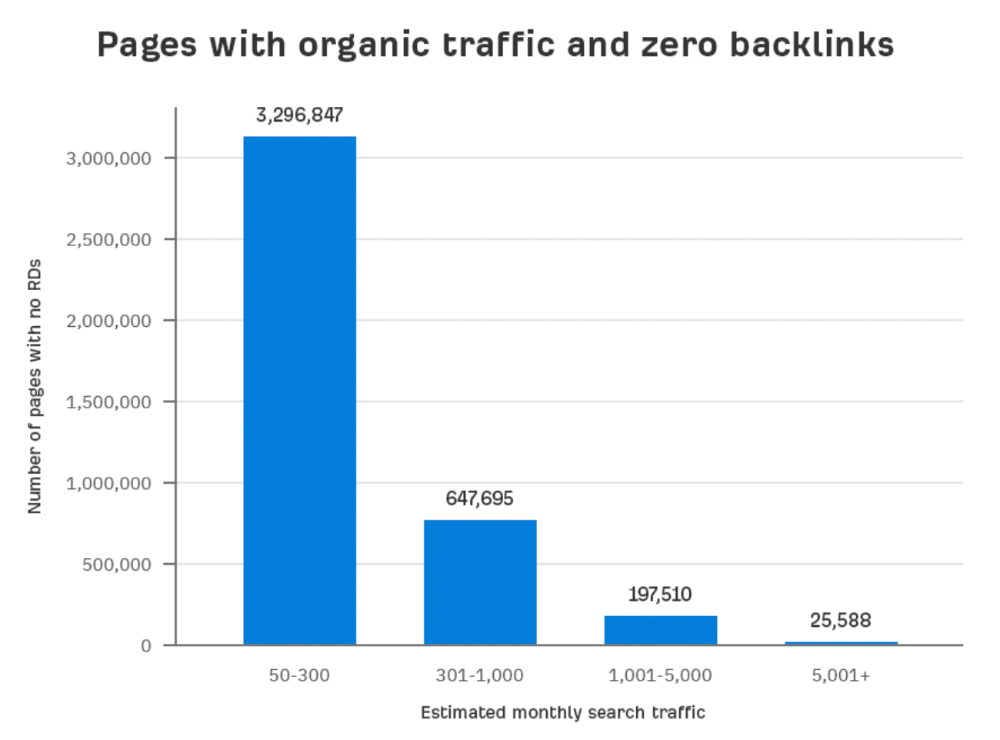

Four million pages get organic search traffic without backlinks. Only one in 20 pages without backlinks has traffic, which is 5% of our sample.

Most get 300 or fewer organic visits per month.

What happens if we exclude high-Domain-Rating pages?

The numbers worsen. Less than 4% of our sample (1.4 million pages) receive organic traffic. Only 320,000 get over 300 monthly organic visits, or 0.1% of our sample.

This suggests high-authority pages without backlinks are more likely to get organic traffic than low-authority pages.

Internal links likely pass PageRank to new pages.

Two other reasons:

Our crawler's blocked. Most shady SEOs block backlinks from us. This prevents competitors from seeing (and reporting) PBNs.

They choose low-competition subjects. Low-volume queries are less competitive, requiring fewer backlinks to rank.

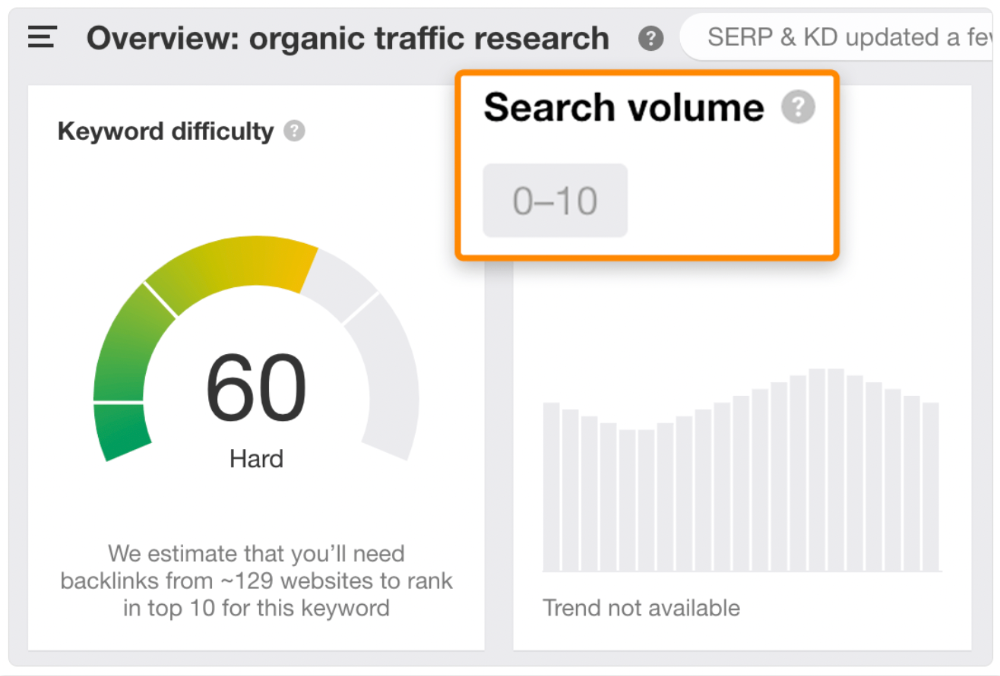

If the idea of getting search traffic without building backlinks excites you, learn about Keyword Difficulty and how to find keywords/topics with decent traffic potential and low competition.

Reason #2: The page has no long-term traffic potential.

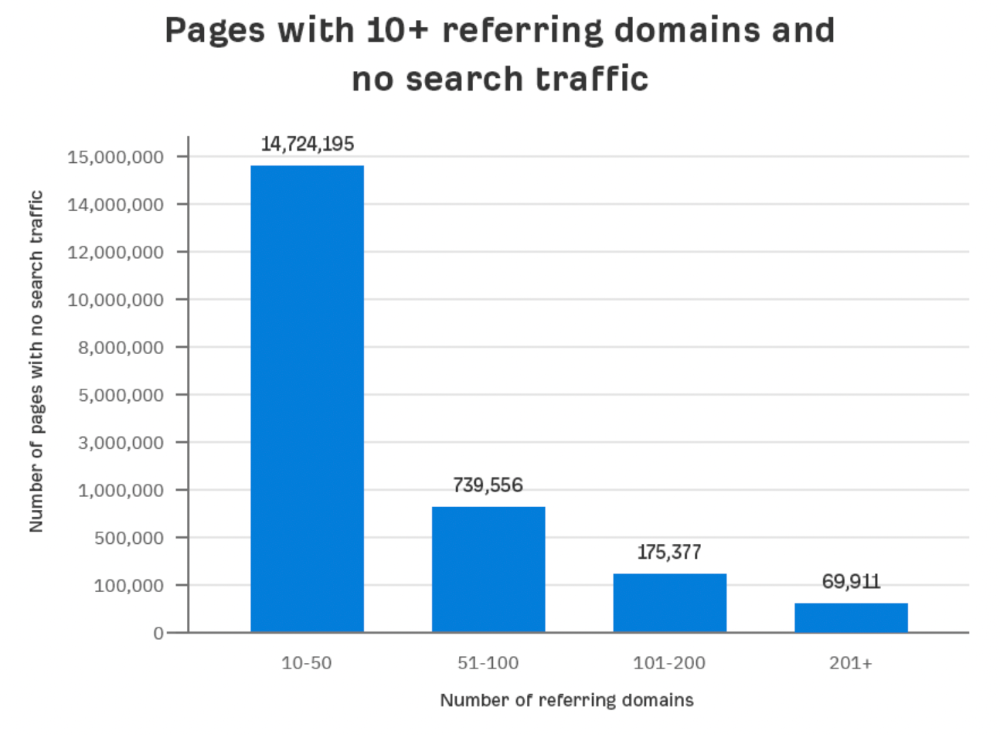

Some pages with many backlinks get no Google traffic.

Why? I filtered Content Explorer for pages with no organic search traffic and divided them into four buckets by linking domains.

Almost 70k pages have backlinks from over 200 domains, but no search traffic.

By manually reviewing these (and other) pages, I noticed two general trends that explain why they get no traffic:

They overdid "shady link building" and got penalized by Google;

They're not targeting a Google-searched topic.

I won't elaborate on point one because I hope you don't engage in "shady link building"

#2 is self-explanatory:

If nobody searches for what you write, you won't get search traffic.

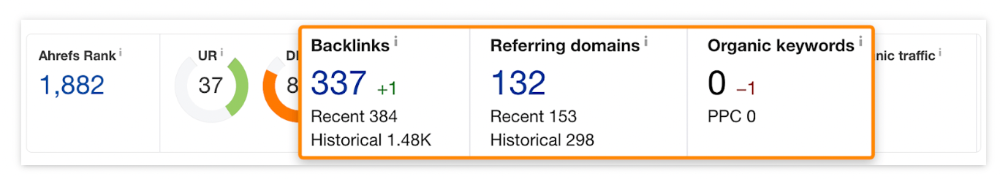

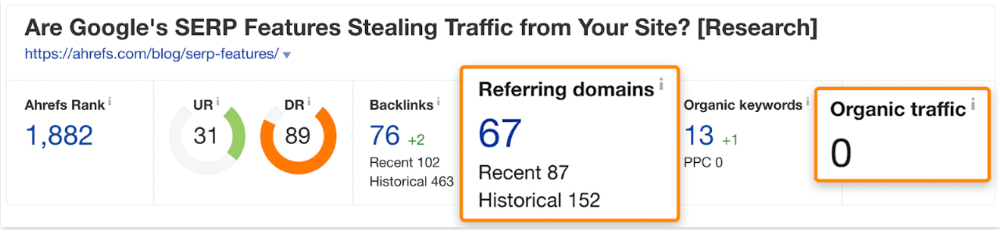

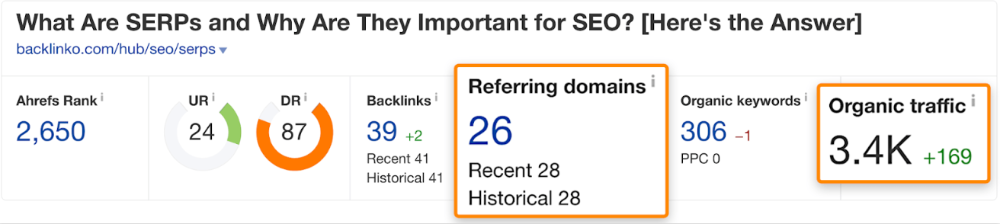

Consider one of our blog posts' metrics:

No organic traffic despite 337 backlinks from 132 sites.

The page is about "organic traffic research," which nobody searches for.

News articles often have this. They get many links from around the web but little Google traffic.

People can't search for things they don't know about, and most don't care about old events and don't search for them.

Note:

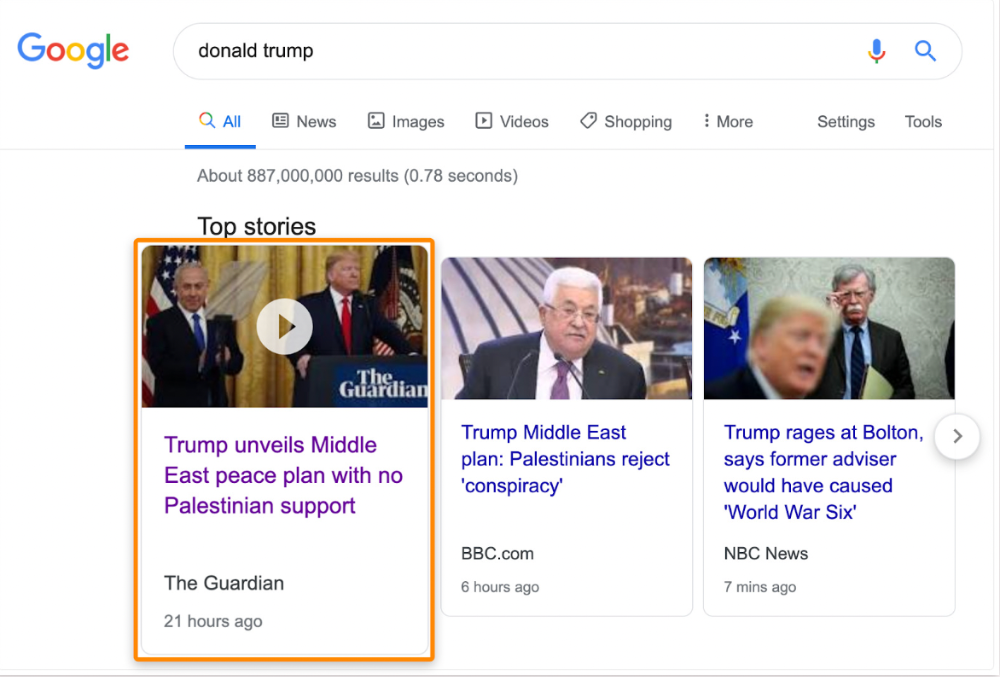

Some news articles rank in the "Top stories" block for relevant, high-volume search queries, generating short-term organic search traffic.

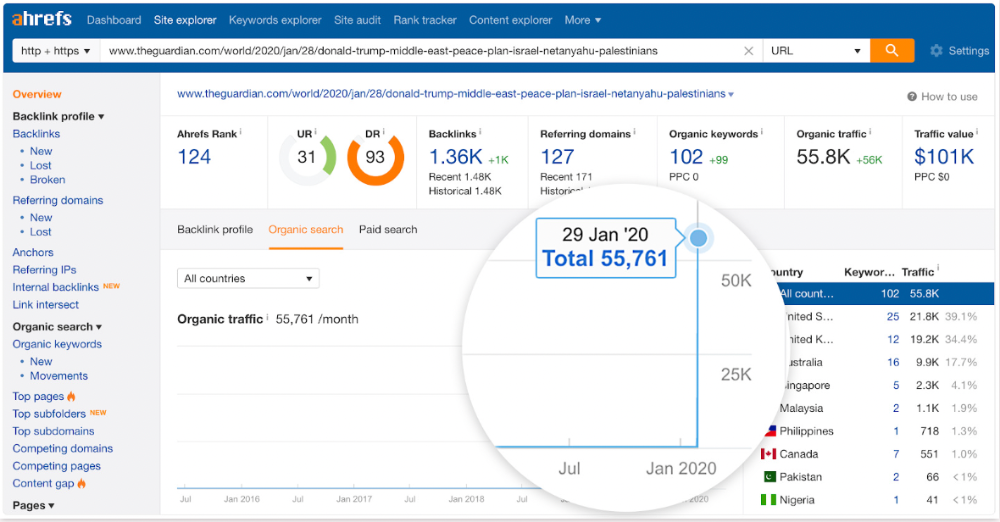

The Guardian's top "Donald Trump" story:

Ahrefs caught on quickly:

"Donald Trump" gets 5.6M monthly searches, so this page got a lot of "Top stories" traffic.

I bet traffic has dropped if you check now.

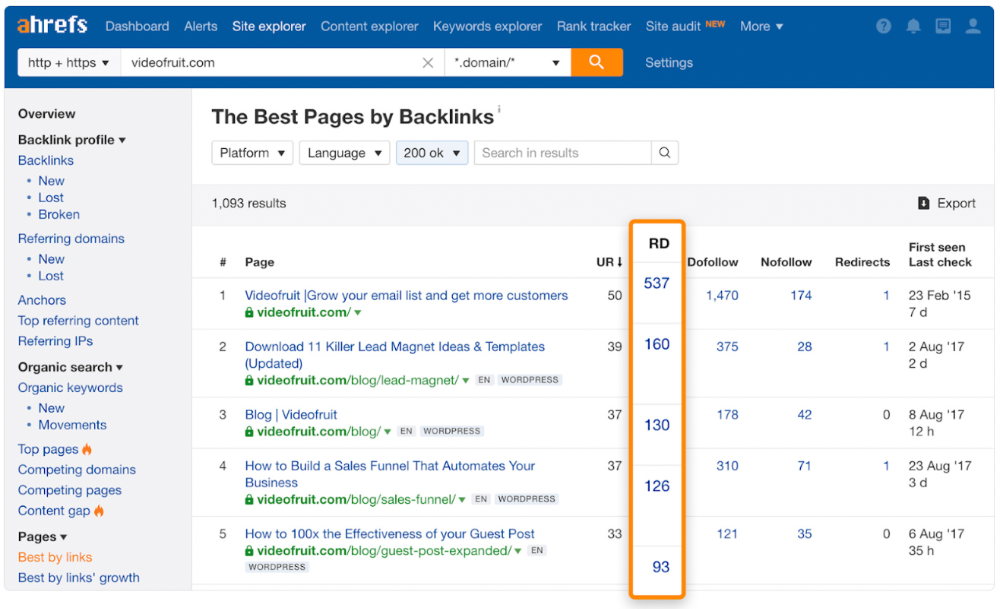

One of the quickest and most effective SEO wins is:

Find your website's pages with the most referring domains;

Do keyword research to re-optimize them for relevant topics with good search traffic potential.

Bryan Harris shared this "quick SEO win" during a course interview:

He suggested using Ahrefs' Site Explorer's "Best by links" report to find your site's most-linked pages and analyzing their search traffic. This finds pages with lots of links but little organic search traffic.

We see:

The guide has 67 backlinks but no organic traffic.

We could fix this by re-optimizing the page for "SERP"

A similar guide with 26 backlinks gets 3,400 monthly organic visits, so we should easily increase our traffic.

Don't do this with all low-traffic pages with backlinks. Choose your battles wisely; some pages shouldn't be ranked.

Reason #3: Search intent isn't met

Google returns the most relevant search results.

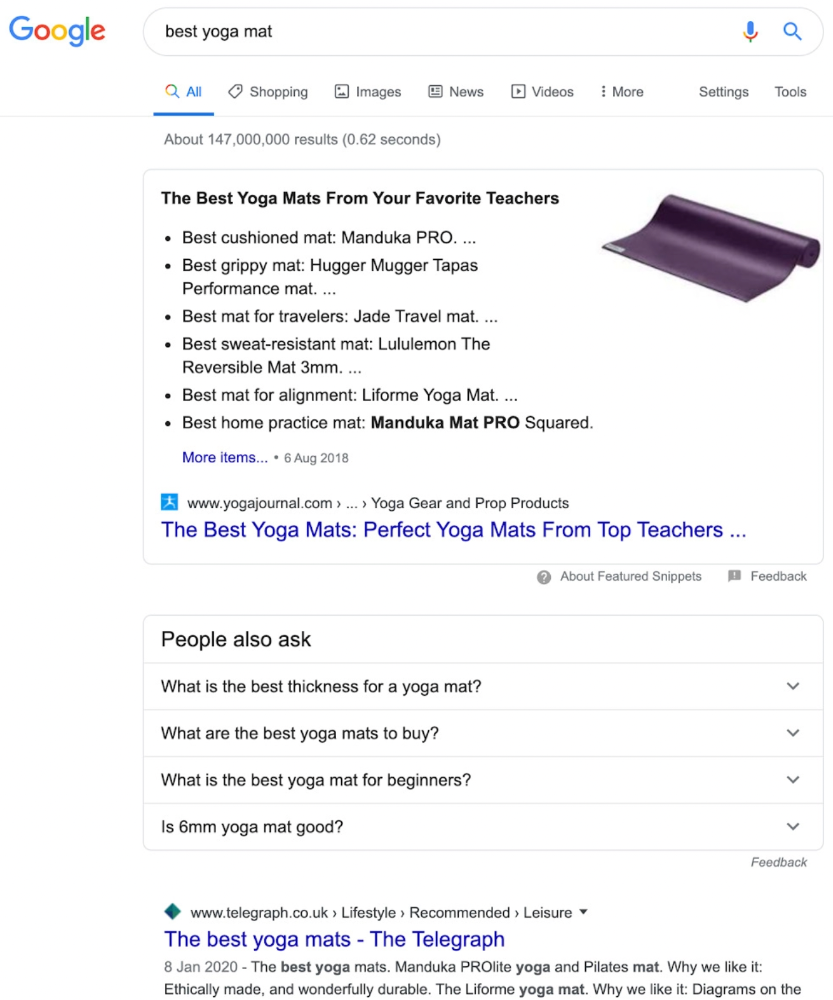

That's why blog posts with recommendations rank highest for "best yoga mat."

Google knows that most searchers aren't buying.

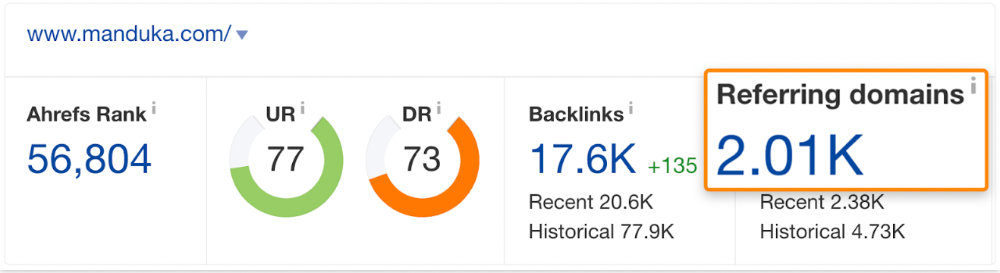

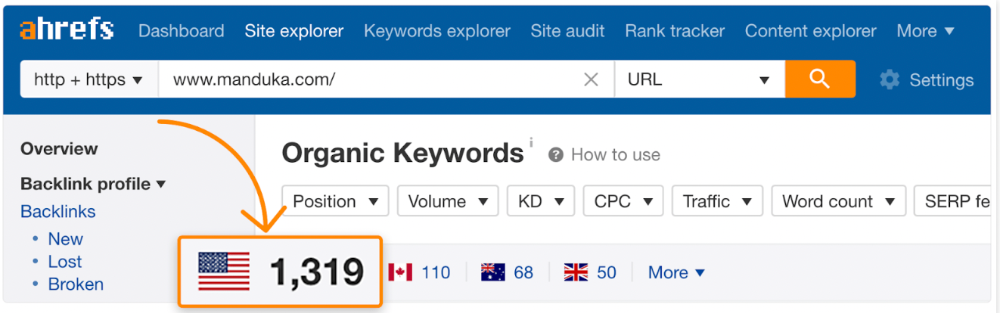

It's also why this yoga mats page doesn't rank, despite having seven times more backlinks than the top 10 pages:

The page ranks for thousands of other keywords and gets tens of thousands of monthly organic visits. Not being the "best yoga mat" isn't a big deal.

If you have pages with lots of backlinks but no organic traffic, re-optimizing them for search intent can be a quick SEO win.

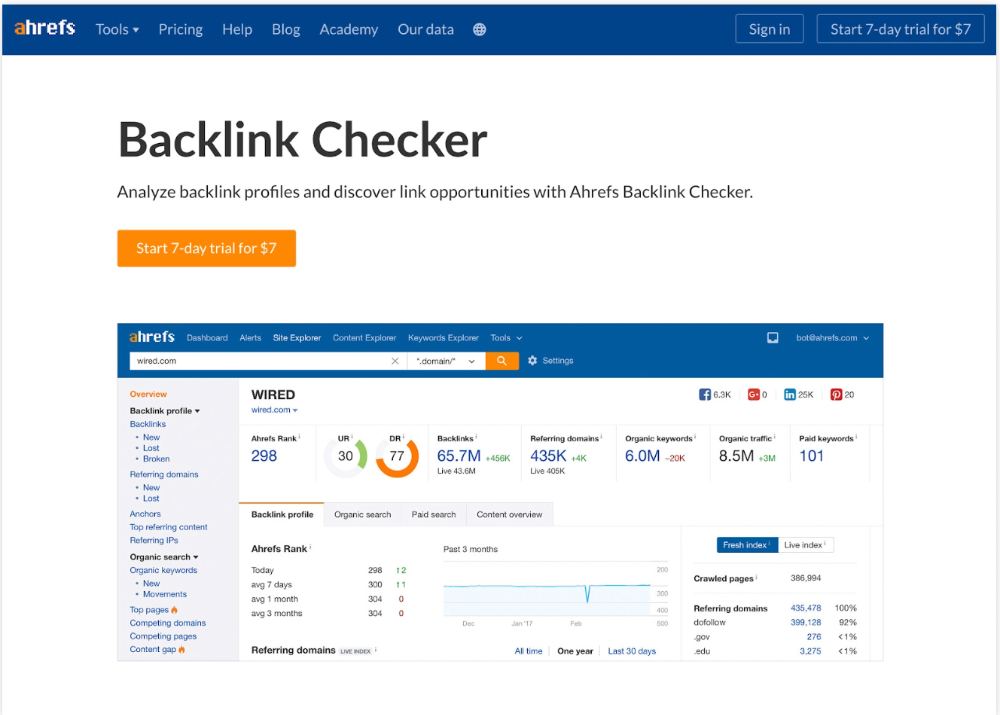

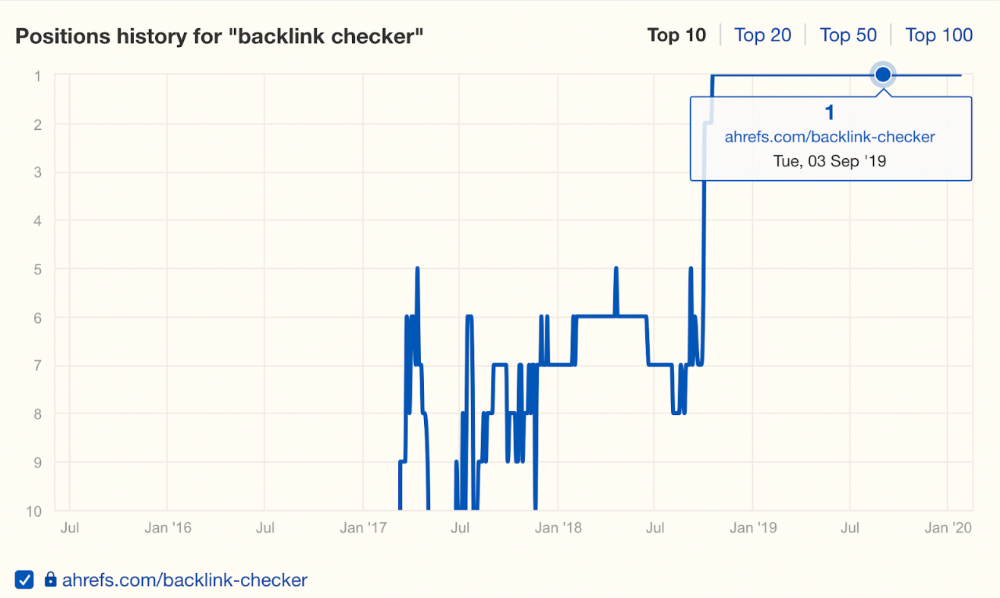

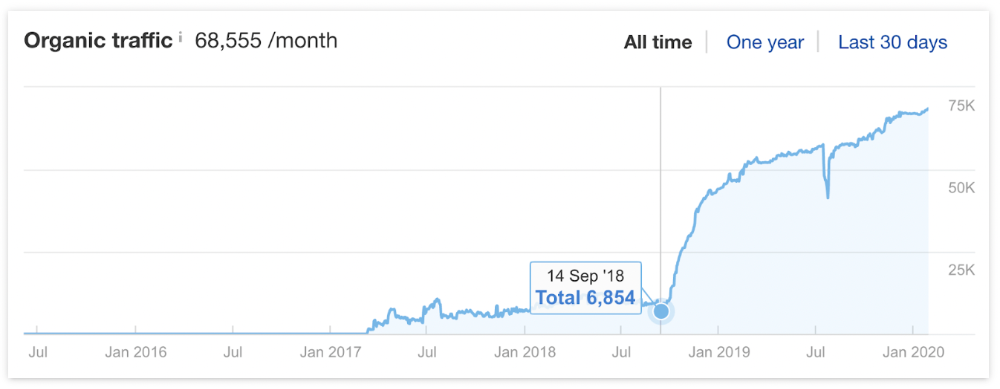

It was originally a boring landing page describing our product's benefits and offering a 7-day trial.

We realized the problem after analyzing search intent.

People wanted a free tool, not a landing page.

In September 2018, we published a free tool at the same URL. Organic traffic and rankings skyrocketed.

Reason #4: Unindexed page

Google can’t rank pages that aren’t indexed.

If you think this is the case, search Google for site:[url]. You should see at least one result; otherwise, it’s not indexed.

A rogue noindex meta tag is usually to blame. This tells search engines not to index a URL.

Rogue canonicals, redirects, and robots.txt blocks prevent indexing.

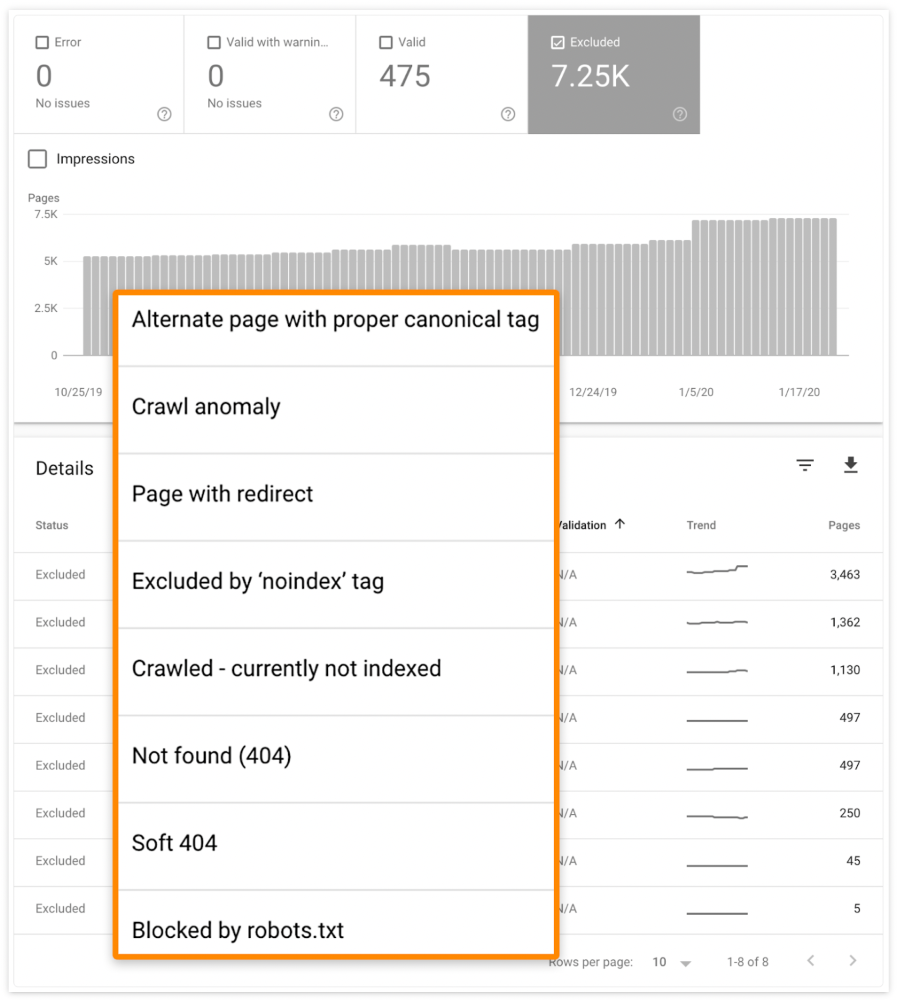

Check the "Excluded" tab in Google Search Console's "Coverage" report to see excluded pages.

Google doesn't index broken pages, even with backlinks.

Surprisingly common.

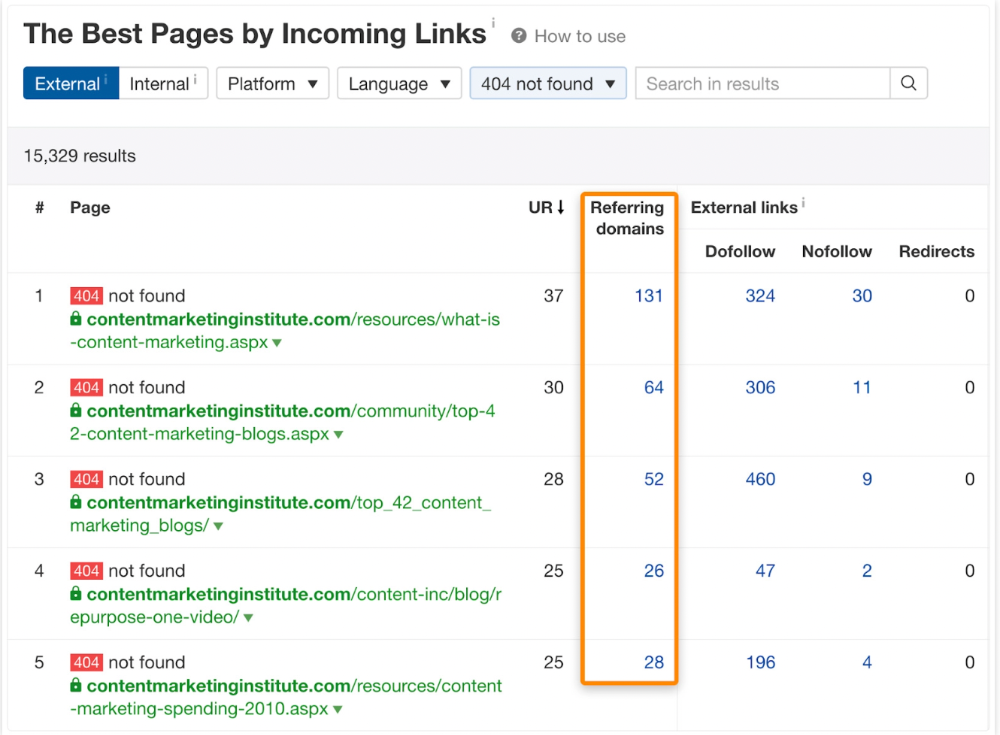

In Ahrefs' Site Explorer, the Best by Links report for a popular content marketing blog shows many broken pages.

One dead page has 131 backlinks:

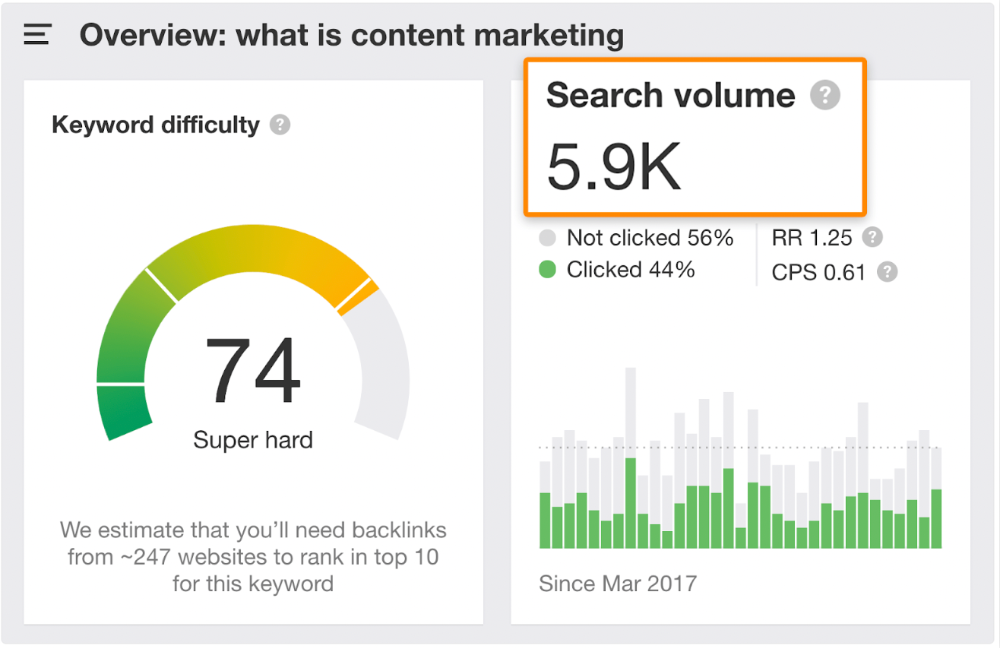

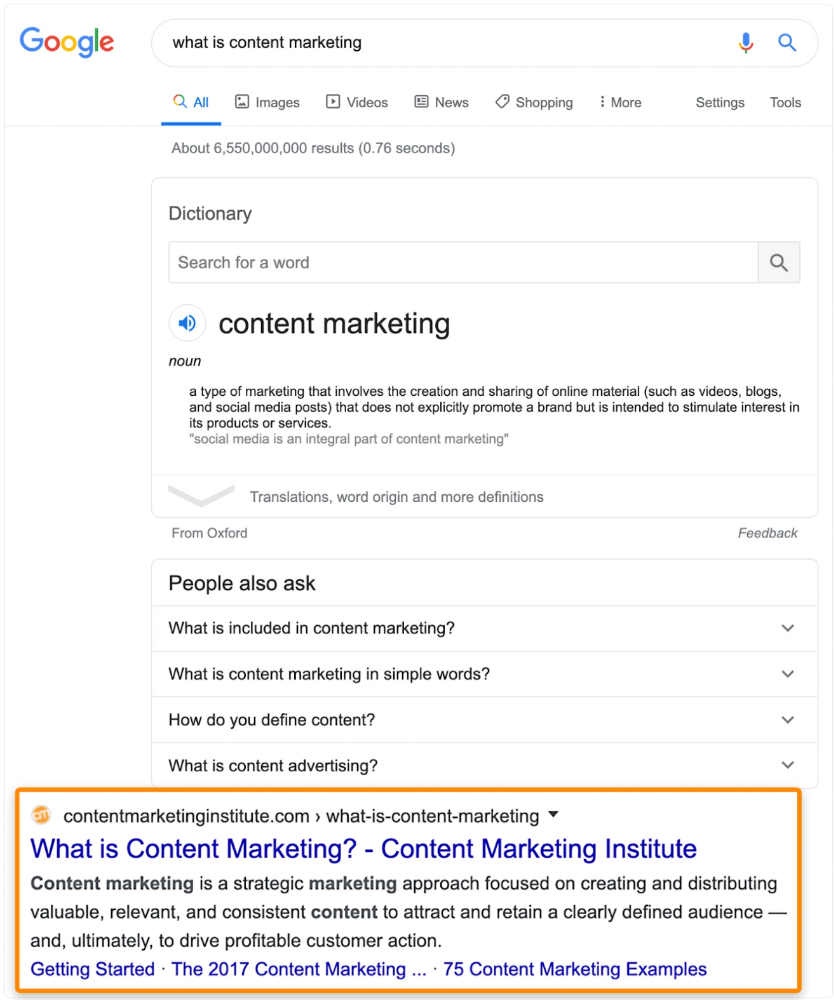

According to the URL, the page defined content marketing. —a keyword with a monthly search volume of 5,900 in the US.

Luckily, another page ranks for this keyword. Not a huge loss.

At least redirect the dead page's backlinks to a working page on the same topic. This may increase long-tail keyword traffic.

This post is a summary. See the original post here

Yogita Khatri

2 years ago

Moonbirds NFT sells for $1 million in first week

On Saturday, Moonbird #2642, one of the collection's rarest NFTs, sold for a record 350 ETH (over $1 million) on OpenSea.

The Sandbox, a blockchain-based gaming company based in Hong Kong, bought the piece. The seller, "oscuranft" on OpenSea, made around $600,000 after buying the NFT for 100 ETH a week ago.

Owl avatars

Moonbirds is a 10,000 owl NFT collection. It is one of the quickest collections to achieve bluechip status. Proof, a media startup founded by renowned VC Kevin Rose, launched Moonbirds on April 16.

Rose is currently a partner at True Ventures, a technology-focused VC firm. He was a Google Ventures general partner and has 1.5 million Twitter followers.

Rose has an NFT podcast on Proof. It follows Proof Collective, a group of 1,000 NFT collectors and artists, including Beeple, who hold a Proof Collective NFT and receive special benefits.

These include early access to the Proof podcast and in-person events.

According to the Moonbirds website, they are "the official Proof PFP" (picture for proof).

Moonbirds NFTs sold nearly $360 million in just over a week, according to The Block Research and Dune Analytics. Its top ten sales range from $397,000 to $1 million.

In the current market, Moonbirds are worth 33.3 ETH. Each NFT is 2.5 ETH. Holders have gained over 12 times in just over a week.

Why was it so popular?

The Block Research's NFT analyst, Thomas Bialek, attributes Moonbirds' rapid rise to Rose's backing, the success of his previous Proof Collective project, and collectors' preference for proven NFT projects.

Proof Collective NFT holders have made huge gains. These NFTs were sold in a Dutch auction last December for 5 ETH each. According to OpenSea, the current floor price is 109 ETH.

According to The Block Research, citing Dune Analytics, Proof Collective NFTs have sold over $39 million to date.

Rose has bigger plans for Moonbirds. Moonbirds is introducing "nesting," a non-custodial way for holders to stake NFTs and earn rewards.

Holders of NFTs can earn different levels of status based on how long they keep their NFTs locked up.

"As you achieve different nest status levels, we can offer you different benefits," he said. "We'll have in-person meetups and events, as well as some crazy airdrops planned."

Rose went on to say that Proof is just the start of "a multi-decade journey to build a new media company."

Amelia Winger-Bearskin

2 years ago

Hate NFTs? I must break some awful news to you...

If you think NFTs are awful, check out the art market.

The fervor around NFTs has subsided in recent months due to the crypto market crash and the media's short attention span. They were all anyone could talk about earlier this spring. Last semester, when passions were high and field luminaries were discussing "slurp juices," I asked my students and students from over 20 other universities what they thought of NFTs.

According to many, NFTs were either tasteless pyramid schemes or a new way for artists to make money. NFTs contributed to the climate crisis and harmed the environment, but so did air travel, fast fashion, and smartphones. Some students complained that NFTs were cheap, tasteless, algorithmically generated schlock, but others asked how this was different from other art.

I'm not sure what I expected, but the intensity of students' reactions surprised me. They had strong, emotional opinions about a technology I'd always considered administrative. NFTs address ownership and accounting, like most crypto/blockchain projects.

Art markets can be irrational, arbitrary, and subject to the same scams and schemes as any market. And maybe a few shenanigans that are unique to the art world.

The Fairness Question

Fairness, a deflating moral currency, was the general sentiment (the less of it in circulation, the more ardently we clamor for it.) These students, almost all of whom are artists, complained to the mismatch between the quality of the work in some notable NFT collections and the excessive amounts these items were fetching on the market. They can sketch a Bored Ape or Lazy Lion in their sleep. Why should they buy ramen with school loans while certain swindlers get rich?

I understand students. Art markets are unjust. They can be irrational, arbitrary, and governed by chance and circumstance, like any market. And art-world shenanigans.

Almost every mainstream critique leveled against NFTs applies just as easily to art markets

Over 50% of artworks in circulation are fake, say experts. Sincere art collectors and institutions are upset by the prevalence of fake goods on the market. Not everyone. Wealthy people and companies use art as investments. They can use cultural institutions like museums and galleries to increase the value of inherited art collections. People sometimes buy artworks and use family ties or connections to museums or other cultural taste-makers to hype the work in their collection, driving up the price and allowing them to sell for a profit. Money launderers can disguise capital flows by using market whims, hype, and fluctuating asset prices.

Almost every mainstream critique leveled against NFTs applies just as easily to art markets.

Art has always been this way. Edward Kienholz's 1989 print series satirized art markets. He stamped 395 identical pieces of paper from $1 to $395. Each piece was initially priced as indicated. Kienholz was joking about a strange feature of art markets: once the last print in a series sells for $395, all previous works are worth at least that much. The entire series is valued at its highest auction price. I don't know what a Kienholz print sells for today (inquire with the gallery), but it's more than $395.

I love Lee Lozano's 1969 "Real Money Piece." Lozano put cash in various denominations in a jar in her apartment and gave it to visitors. She wrote, "Offer guests coffee, diet pepsi, bourbon, half-and-half, ice water, grass, and money." "Offer real money as candy."

Lee Lozano kept track of who she gave money to, how much they took, if any, and how they reacted to the offer of free money without explanation. Diverse reactions. Some found it funny, others found it strange, and others didn't care. Lozano rarely says:

Apr 17 Keith Sonnier refused, later screws lid very tightly back on. Apr 27 Kaltenbach takes all the money out of the jar when I offer it, examines all the money & puts it all back in jar. Says he doesn’t need money now. Apr 28 David Parson refused, laughing. May 1 Warren C. Ingersoll refused. He got very upset about my “attitude towards money.” May 4 Keith Sonnier refused, but said he would take money if he needed it which he might in the near future. May 7 Dick Anderson barely glances at the money when I stick it under his nose and says “Oh no thanks, I intend to earn it on my own.” May 8 Billy Bryant Copley didn’t take any but then it was sort of spoiled because I had told him about this piece on the phone & he had time to think about it he said.

Smart Contracts (smart as in fair, not smart as in Blockchain)

Cornell University's Cheryl Finley has done a lot of research on secondary art markets. I first learned about her research when I met her at the University of Florida's Harn Museum, where she spoke about smart contracts (smart as in fair, not smart as in Blockchain) and new protocols that could help artists who are often left out of the economic benefits of their own work, including women and women of color.

Her talk included findings from her ArtNet op-ed with Lauren van Haaften-Schick, Christian Reeder, and Amy Whitaker.

NFTs allow us to think about and hack on formal contractual relationships outside a system of laws that is currently not set up to service our community.

The ArtNet article The Recent Sale of Amy Sherald's ‘Welfare Queen' Symbolizes the Urgent Need for Resale Royalties and Economic Equity for Artists discussed Sherald's 2012 portrait of a regal woman in a purple dress wearing a sparkling crown and elegant set of pearls against a vibrant red background.

Amy Sherald sold "Welfare Queen" to Princeton professor Imani Perry. Sherald agreed to a payment plan to accommodate Perry's budget.

Amy Sherald rose to fame for her 2016 portrait of Michelle Obama and her full-length portrait of Breonna Taylor, one of the most famous works of the past decade.

As is common, Sherald's rising star drove up the price of her earlier works. Perry's "Welfare Queen" sold for $3.9 million in 2021.

Imani Perry's early investment paid off big-time. Amy Sherald, whose work directly increased the painting's value and who was on an artist's shoestring budget when she agreed to sell "Welfare Queen" in 2012, did not see any of the 2021 auction money. Perry and the auction house got that money.

Sherald sold her Breonna Taylor portrait to the Smithsonian and Louisville's Speed Art Museum to fund a $1 million scholarship. This is a great example of what an artist can do for the community if they can amass wealth through their work.

NFTs haven't solved all of the art market's problems — fakes, money laundering, market manipulation — but they didn't create them. Blockchain and NFTs are credited with making these issues more transparent. More ideas emerge daily about what a smart contract should do for artists.

NFTs are a copyright solution. They allow us to hack formal contractual relationships outside a law system that doesn't serve our community.

Amy Sherald shows the good smart contracts can do (as in, well-considered, self-determined contracts, not necessarily blockchain contracts.) Giving back to our community, deciding where and how our work can be sold or displayed, and ensuring artists share in the equity of our work and the economy our labor creates.