Did volcanic 'glasses' play a role in igniting early life?

Quenched lava may have aided in the formation of long RNA strands required by primitive life.

Life did not take long to emerge. Fossils show that microbes were present 3.7 billion years ago, just a few hundred million years after the 4.5-billion-year-old Earth had cooled enough to sustain biochemistry, and many scientists believe RNA was the genetic material for these first organisms. RNA, while not as complicated as DNA, would still be difficult to forge into the long strands required to carry genetic information, raising the question of how it could have formed spontaneously.

Researchers may now have a solution. In lab experiments, they show how basaltic glasses help individual RNA letters, also known as nucleoside triphosphates, join into strands up to 200 letters long. The glasses form when lava is quenched in air or water, or when molten rock generated by asteroid strikes cools rapidly, and they would have been plentiful amid the fire and brimstone of the early Earth.

The result has divided top origin-of-life researchers. "This appears to be a great story that finally explains how nucleoside triphosphates react with each other to create RNA strands," says Thomas Carell, a chemist at Munich's Ludwig Maximilian University. However, Harvard University's Jack Szostak, an RNA expert, says he won't believe the results until the study team thoroughly characterizes the RNA strands.

Researchers interested in the origins of life like the idea of a primordial "RNA world" because the molecule can perform two different functions that are essential for life. Like DNA, it is made up of four chemical letters and can carry genetic information. And like proteins, RNA can catalyze chemical reactions that are necessary for life.

However, RNA can cause headaches. No one has yet discovered a set of plausible primordial conditions that would cause hundreds of RNA letters—each of which is a complicated molecule—to join together into strands long enough to support the intricate chemistry required to kick-start evolution.

Basaltic glasses may have played a role, according to Stephen Mojzsis, a geologist at the University of Colorado, Boulder. They're high in metals like magnesium and iron, which help to trigger a variety of chemical reactions. "Basaltic glass was omnipresent on Earth at the time," he adds.

He provided samples of five different basalt glasses to the Foundation for Applied Molecular Evolution. There, molecular biologist Elisa Biondi and her colleagues ground each sample into a fine powder, sterilized it, and combined it with a solution of nucleoside triphosphates. Without the glass powder, the RNA letters were unable to link up. However, when the molecules were mixed with the glass particles, they formed long strands of hundreds of letters, according to the researchers, who published their findings in Astrobiology this week. There was no need for heat or light. Biondi explains, "All we had to do was wait." Short RNA strands had formed after only a day, and the strands continued to grow for months. "The beauty of this approach is its simplicity," says Jan Špaček, a molecular biologist at Firebird Biomolecular Sciences. "Mix the components together, wait a few days, and look for RNA."

Nonetheless, the findings raise a slew of questions. One is how nucleoside triphosphates came to be in the first place. Recent research by Biondi's colleague Steven Benner suggests that the same basaltic glasses may have aided in the creation and stabilization of individual RNA letters.

The form of the lengthy RNA strands, according to Szostak, is a significant challenge. Enzymes in modern cells ensure that most RNAs form long linear chains. RNA letters, on the other hand, can bind in complicated branching sequences. Szostak wants the researchers to reveal what kind of RNA was produced by the basaltic glasses. "It irritates me that the authors made an intriguing initial finding but then chose to follow the hype rather than the research," Szostak says.

Biondi acknowledges that her team's experiment almost certainly results in some RNA branching. She notes, however, that some branched RNAs are seen in organisms today, and that analogous structures may have existed before the origin of life. Other tests carried out by the team also confirmed the presence of long strands with linkages suggesting that they are most likely linear. "It's a healthy argument," says Dieter Braun, a Ludwig Maximilian University origin-of-life chemist. "It will set off the next series of tests."

More on Science

Jamie Ducharme

3 years ago

How monkeypox spreads (and doesn't spread)

Monkeypox was rare until recently. In 2005, a study called a cluster of six monkeypox cases in the Republic of Congo "the longest reported chain to date."

That's changed. This year, over 25,000 monkeypox cases have been reported in 83 countries, indicating widespread human-to-human transmission.

So how does monkeypox spread? Research on transmission is ongoing, and findings may change. But here is what the science says so far.

Most cases were formerly animal-related.

According to the WHO, monkeypox was first diagnosed in an infant in the DRC in 1970. After that, cases were infrequent and often tied to animals. In 2003, 47 Americans contracted monkeypox from pet prairie dogs.

In 2017, Nigeria saw a significant outbreak. NPR reported that doctors diagnosed young men who had genital sores but no animal exposure. Nigerian researchers raised the possibility of sexual transmission in a 2019 study, but the theory didn't catch on. “People tend to cling on to tradition, and the idea is that monkeypox is transmitted from animals to humans,” explains study co-author Dr. Dimie Ogoina.

Most monkeypox cases are sex-related.

Human-to-human transmission of monkeypox occurs, and sexual activity plays a role.

Joseph Osmundson, a clinical assistant professor of biology at NYU, says most transmission occurs in queer and gay sexual networks through sexual or personal contact.

Monkeypox spreads by skin-to-skin contact, especially with its blister-like rash, explains Ogoina. Researchers are still exploring whether people can be contagious without symptoms, but those with symptoms are infectious until their rash heals and fresh skin forms, according to the CDC.

A July study in the New England Journal of Medicine reported that of more than 500 monkeypox cases in 16 countries as of June, 95% were linked to sexual activity and 98% were among men who have sex with men. In July, WHO Director-General Tedros Adhanom Ghebreyesus encouraged men to temporarily reduce their number of male partners.

Is monkeypox a sexually transmitted infection (STI)?

Any skin-to-skin contact can spread monkeypox, not just sexual activity. Dr. Roy Gulick, infectious disease chief at Weill Cornell Medicine and NewYork-Presbyterian, said monkeypox is not a "typical" STI. According to the CDC, monkeypox isn't an STI.

Most cases in the current outbreak are tied to male sexual behavior, but Osmundson thinks the virus might also spread on sports teams, in spas, or in college dorms.

Can you get monkeypox from surfaces?

Monkeypox can be spread by touching infected clothing or bedding. According to one study, a U.K. health care worker caught monkeypox in 2018 after handling an ill patient's bedding.

Angela Rasmussen, a virologist at the University of Saskatchewan in Canada, believes "incidental" contact seldom spreads the virus. “You need enough virus exposure to get infected,” she says. Infection is conceivable after sharing a bed or towel with an infectious person, but less likely after touching a doorknob, she says.

Dr. Müge Çevik, a clinical lecturer in infectious diseases at the University of St. Andrews in Scotland, says there is a "spectrum" of risk connected with monkeypox. "Every exposure isn't equal," she explains. "People must know where to be cautious. Reducing [sexual] partners may be more useful than cleaning coffee shop seats."

Is monkeypox airborne?

Exposure to an infectious person's respiratory fluids can cause monkeypox, but the WHO says it needs close, continuous face-to-face contact. CDC researchers are still examining how often this happens.

Under precise laboratory conditions, scientists have shown that monkeypox can spread via aerosols, or tiny airborne particles. But there's no clear evidence that this is happening in the real world, Rasmussen adds. “This is expanding predominantly in communities of males who have sex with men, which suggests skin-to-skin contact,” she explains. If airborne transmission were frequent, she argues, we'd find more cases in other demographics.

In the shadow of COVID-19, people are worried about aerosolized monkeypox. But Rasmussen says the epidemiology is different: these are different viruses.

Can kids get monkeypox?

More than 80 youngsters have contracted the virus thus far, mainly through household transmission. CDC says pregnant women can spread the illness to their fetus.

In the 1970s, monkeypox predominantly affected children, but by the 2010s, it was more common in adults, according to a February study. The study's authors say the reason is that routine smallpox immunization (which also protects against monkeypox) stopped once smallpox was eradicated. In the 1970s, only young children had been born after vaccination stopped; decades later, far more people are vulnerable.

Pediatric cases have raised concerns that schools and daycares could become monkeypox hotspots. Ogoina says this hasn't happened in Nigeria's outbreaks, which is encouraging. "I'm not sure if we should worry," he says, adding that we must be careful and seek evidence.

Nojus Tumenas

3 years ago

NASA: Strange Betelgeuse Explosion Just Took Place

Betelgeuse, the red supergiant in Orion, has erupted. It is among the most spectacular events astronomers have observed in a star.

Betelgeuse, a supergiant star in Orion, garnered attention in 2019 for its peculiar appearance. It continued to dim in 2020.

At the time, the star was thought to be on the verge of exploding as a supernova. Studying the event since then has revealed what actually happened to Betelgeuse.

Astronomers saw that the star released a large amount of material, causing it to lose a section of its surface.

They have never seen anything like this and are unsure what caused the star to release so much material.

According to Andrea Dupree, an astrophysicist at the Harvard-Smithsonian Center for Astrophysics, the data reveal a mystery that is still unexplained.

Researchers say the observations offer a new way to study stellar evolution. The Hubble Space Telescope revealed the star's surface features.

Coronal mass ejections are eruptions of stellar material. On the Sun, these eruptions disrupt the outer atmosphere, the corona.

If one hits Earth, it can affect power grids and satellite communications.

Betelgeuse's eruption ejected roughly 400 billion times as much mass as a typical coronal mass ejection from the Sun.

Astronomers have monitored the star's rhythm for 50 years. They've seen its dimming and brightening cycle start, stop, and repeat.

Monitoring Betelgeuse's pulse revealed the eruption's power.

Dupree believes the star's convection cells are still amplifying the blast's effects, comparing it to an unbalanced washing machine tub.

The star's outer layer has returned to normal, Hubble data shows. The photosphere slowly rebuilds its springy surface.

Dupree noted the star's unusual behavior. For instance, the eruption is causing its interior to bounce.

This suggests that the mass ejections that caused the star's surface to lose mass were two separate processes.

Researchers hope to better understand star mass ejection with the James Webb Space Telescope.

Will Lockett

3 years ago

Thanks to a recent development, solar energy may prove to be the best energy source.

Perovskite solar cells will revolutionize everything.

Humanity is in a climatic Armageddon. Our widespread ecological crimes of the previous century are catching up with us, and planet-scale karma threatens everyone. We must adjust to new technologies and lifestyles to avoid this fate. Even solar power, a renewable energy source, has climate problems. A recent discovery could boost solar power's eco-friendliness and affordability. Perovskite solar cells are amazing.

Perovskite is a silicon-like semiconductor. Semiconductors are used to make computer chips, LEDs, camera sensors, and solar cells. Silicon makes sturdy and long-lasting solar cells, thus it's used in most modern solar panels.

Perovskite solar cells are far better. First, they're easy to make at room temperature, unlike silicon cells, which require long, intricate baking processes. This makes perovskite cells cheaper to make and reduces their carbon footprint. Second, perovskite cells are more efficient. Most silicon-panel solar farms are about 18% efficient, meaning 18% of the incoming solar energy is converted into electricity. Perovskite cells are 25% efficient, which makes them roughly 39% more efficient than silicon in relative terms.
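The comparison above is a relative gain, not a difference in percentage points. A quick Python check makes the arithmetic explicit. It is only a sketch using the two efficiency figures quoted above, and the function name is purely illustrative:

```python
def relative_gain(new: float, old: float) -> float:
    """Fractional improvement of `new` over `old`."""
    return (new - old) / old


silicon_efficiency = 0.18     # typical silicon solar farm, as quoted above
perovskite_efficiency = 0.25  # perovskite cells, as quoted above

gain = relative_gain(perovskite_efficiency, silicon_efficiency)
points = (perovskite_efficiency - silicon_efficiency) * 100

print(f"{points:.0f} percentage points, a relative gain of {gain:.1%}")
```

In other words, the cells are 7 percentage points better in absolute terms, which works out to a relative gain of just under 39%.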

However, perovskite cells have been nowhere near as durable. A normal silicon panel only starts to lose efficiency after about 20 years. The first perovskite cells were impractical because they lasted barely minutes.

Recent research from Princeton shows that perovskite cells can endure 30 years. The cells kept their efficiency, therefore no sacrifices were made.

I'm no electrical or chemical engineer, so I can't explain how they did it. But strangely, the team said longevity isn't the big deal. In the coming years, perovskite panels will become even longer-lasting. So how do you test a panel's 30-year lifespan if you only have a month or two? This emerging technology needs a uniform method to estimate perovskite life expectancy quickly. The study's key milestone was establishing that standard procedure.

Lab-based accelerated aging tests are their solution. Perovskite cells decay faster at higher temperatures, so scientists can extrapolate from that. The test heated the panel to 110 degrees Celsius and waited for its output to drop by 20%. Their panel lasted 2,100 hours (87.5 days) before a 20% decline.

They did some math to extrapolate this data and figure out how long the panel would have lasted in different climates, and were shocked to find it would last 30 years in Princeton. This made perovskite panels as durable as silicon panels. This panel could theoretically be sold today.

This technology will soon allow these brilliant panels to be released into the wild. This technology could be commercially viable in ten, maybe five years.

Solar power will be the best once it does. Solar power is cheap and low-carbon. Perovskite is the cheapest renewable energy source if we switch to it. Solar panel manufacturing's carbon footprint will also drop.

Perovskites' impact goes beyond cost and carbon. Silicon panels require harmful mining and contain toxic elements (cadmium). Perovskite panels don't require intense mining or horrible materials, making their production and expiration more eco-friendly.

Solar power destroys habitat. Massive solar farms could reduce biodiversity and disrupt local ecology by destroying vital habitats. Perovskite cells are more efficient, so they can shrink a solar farm while maintaining energy output. This reduces land requirements, making perovskite solar power cheaper, and could reduce solar's environmental impact.
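How much land could the efficiency gain save? As a rough sketch, assume that the panel area a farm needs scales inversely with cell efficiency for a fixed energy output. That is a simplification introduced here for illustration, not a figure from the Princeton study, and it ignores panel spacing, access roads, and differences between sites:

```python
def area_ratio(old_efficiency: float, new_efficiency: float) -> float:
    """Panel area needed with the new cells, as a fraction of the old area.

    Assumes equal sunlight and equal energy output, so area ~ 1 / efficiency.
    """
    return old_efficiency / new_efficiency


ratio = area_ratio(0.18, 0.25)  # efficiency figures quoted earlier in the article
print(f"Panel area needed: {ratio:.0%} of the original ({1 - ratio:.0%} less)")
```

Under that assumption, a perovskite farm would need a bit under three quarters of the panel area of a silicon farm with the same output.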

Perovskite solar power is scalable and environmentally friendly. Princeton scientists will speed up the development and rollout of this energy.

Why bother with fusion, fast reactors, SMRs, or traditional nuclear power? We're close to developing a nearly perfect environmentally friendly power source, and we have the tools and systems to do so quickly. It's also affordable, so we can adopt it quickly and let the developing world use it to grow. Even I struggle to justify spending billions on fusion when a great, cheap technology outperforms it. Perovskite's eco-credentials and cost advantages could save the world and power humanity's future.

You might also like

Ezra Reguerra

3 years ago

Yuga Labs’ Otherdeeds NFT mint triggers backlash from community

Unhappy community members accuse Yuga Labs of fraud, manipulation, and favoritism over Otherdeeds NFT mint.

Following the Otherdeeds NFT mint, disgruntled community members took to Twitter to criticize Yuga Labs' handling of the event.

Otherdeeds NFTs were a huge hit with the community, selling out almost instantly. Due to high demand, the launch increased Ethereum gas fees from 2.6 ETH to 5 ETH.

But the event displeased many people. Several users speculated that the mint was “planned to fail” so the group could advertise launching its own blockchain, as the team mentioned a chain migration in one tweet.

Others, like Mark Beylin, tweeted that they had "sold out" of all Ape-related NFT investments after Yuga Labs "revealed their true colors." Beylin also advised others to assume Yuga Labs' owners are “bad actors.”

Some users who failed to complete transactions claim they lost ETH. However, Yuga Labs promised to refund lost gas fees.

CryptoFinally, a Twitter user, claimed Yuga Labs gave BAYC members better land than non-members: others who wanted to participate paid for worse land, while BAYC holders got the only worthwhile land.

The Otherdeed NFT drop also increased Ethereum's burn rate. Glassnode and Data Always reported nearly 70,000 ETH burned on mint day.

Clive Thompson

3 years ago

Small Pieces of Code That Revolutionized the World

A few lines of code can have global significance.

Ethan Zuckerman invented the pop-up ad in 1997.

He was working for Tripod.com, an online service that let people make little web pages for free. Tripod offered advertising to make money. Advertisers didn't enjoy seeing their advertising next to filthy content, like a user's anal sex website.

Zuckerman's boss wanted a solution. Wasn't there a way to move the ads away from user-generated content?

Zuckerman's answer was the pop-up: when you visited a Tripod page, a separate ad window appeared. That way, the ad wasn't officially tied to any user page. It would just float onscreen.

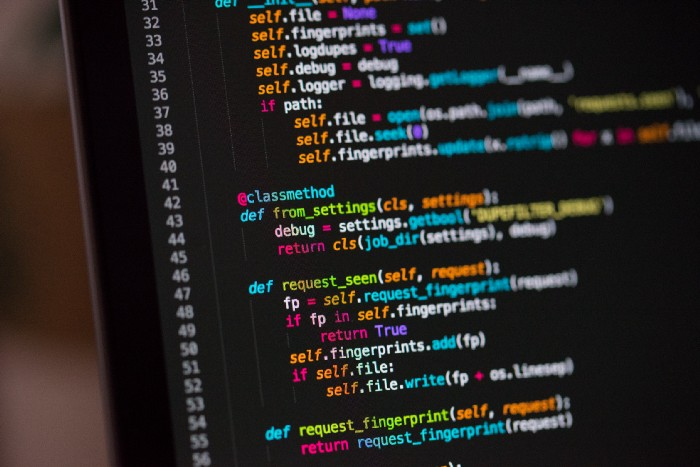

Here’s the thing, though: Zuckerman’s bit of Javascript, that created the popup ad? It was incredibly short — a single line of code:

window.open('http://tripod.com/navbar.html', "width=200, height=400, toolbar=no, scrollbars=no, resizable=no, target=_top");

This JavaScript tells the browser to open a 200-by-400-pixel window on top of any other open web pages, without a scrollbar or toolbar.

Simple yet harmful! Soon, commercial websites mimicked Zuckerman's concept, infesting the Internet with pop-up advertising. In the early 2000s, a coder for a download site told me that most of their revenue came from porn pop-up ads.

Pop-up ads are everywhere. You despise them. Hopefully, your browser blocks them.

Zuckerman wrote a single line of code that made the world worse.

I read Zuckerman's story in You Are Not Expected to Understand This: How 26 Lines of Code Changed the World. Torie Bosch compiled this humorous anthology of short essays about code that tipped the world.

Most of these samples are quite short. Pop-cultural preconceptions about coding say that important code is vast and expansive. Hollywood depicts programmers as blurs spouting out Niagaras of code. Google's success was formerly attributed to its 2 billion lines of code.

It's usually not true. Google's original breakthrough, the piece of code that propelled Google above its search-engine counterparts, was its PageRank algorithm, which determined a web page's value based on how many other pages connected to it and the quality of those connecting pages. People have written their own Python versions; it's only a few dozen lines.
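To give a sense of how little code that is, here is a minimal PageRank sketch in plain Python. It is not Google's code: it uses the standard textbook power-iteration form, the damping factor of 0.85 and the iteration count are conventional assumptions, and the four-page "web" is made up for illustration.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Rank pages by incoming links, weighted by the rank of the linking pages.

    `links` maps each page to the list of pages it links to. Every link
    target must also appear as a key.
    """
    pages = list(links)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        # Every page gets a small baseline share of rank.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page, outlinks in links.items():
            # A page with no outgoing links spreads its rank over all pages.
            targets = outlinks if outlinks else pages
            share = damping * rank[page] / len(targets)
            for target in targets:
                new_rank[target] += share
        rank = new_rank
    return rank


# Hypothetical toy web: three pages link to "c", nobody links to "d".
toy_web = {"a": ["b", "c"], "b": ["c"], "c": ["a"], "d": ["c"]}
ranks = pagerank(toy_web)

for page, score in sorted(ranks.items(), key=lambda item: -item[1]):
    print(f"{page}: {score:.3f}")
```

Page "c" comes out on top because it collects the most links, and "a" ranks second because its single incoming link comes from the highly ranked "c". That second effect is the "quality of those connecting pages" part of the idea.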

Google's operations, like any large tech company's, comprise thousands of procedures. So their code base grows. The most impactful code can be brief.

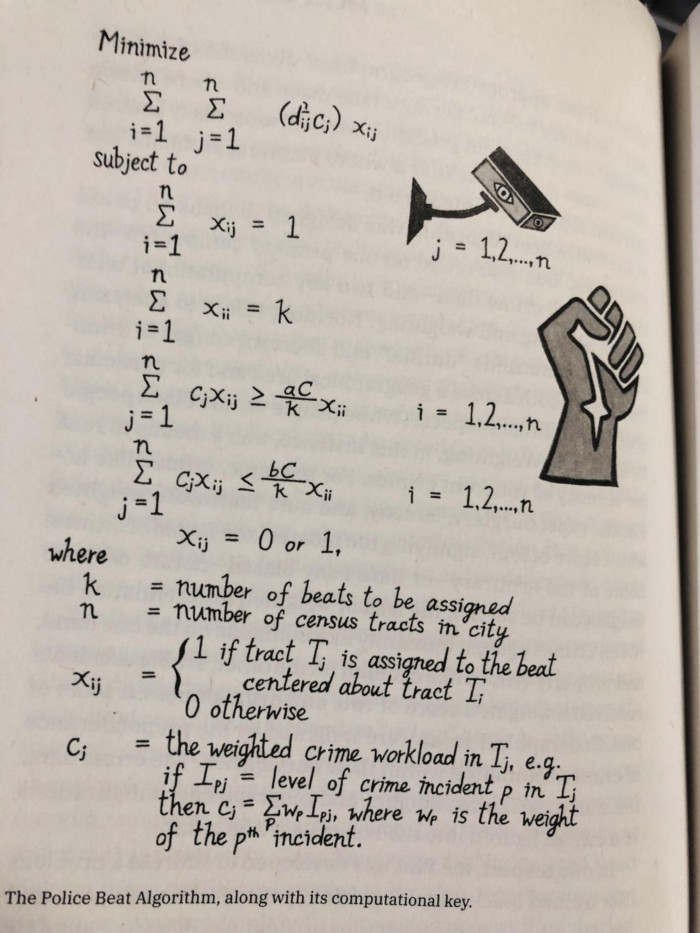

The examples are fascinating and wide-ranging, so read the whole book (or give it to nerds as a present). Charlton McIlwain wrote a chapter on the police beat algorithm developed in the late 1960s to anticipate crime hotspots so law enforcement could dispatch more officers there. It created a racial feedback loop. Since poor Black neighborhoods were already overpoliced compared to white ones, the algorithm directed more policing there, resulting in more arrests, which convinced it to send more police; rinse and repeat.

Illustration of the police-beat algorithm by Kelly Chudler, from You Are Not Expected To Understand This.
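The feedback loop in McIlwain's chapter can be made concrete with a toy simulation. This is not the 1960s algorithm. It is a deliberately simplified model with invented numbers: two neighborhoods have the same underlying offense rate, arrests are proportional to how many officers are present, and each round a fixed slice of the patrols is moved toward whichever neighborhood produced more arrests.

```python
def simulate_patrols(initial_share, rounds, shift=0.05, offense_rate=0.1):
    """Track neighborhood A's share of patrols over time.

    Both neighborhoods have the SAME offense rate, so any difference in
    arrests comes only from where the officers already are.
    """
    share_a = initial_share
    history = [share_a]
    for _ in range(rounds):
        arrests_a = share_a * offense_rate
        arrests_b = (1.0 - share_a) * offense_rate
        # The "algorithm": send more officers wherever arrests were higher.
        if arrests_a > arrests_b:
            share_a = min(1.0, share_a + shift)
        elif arrests_b > arrests_a:
            share_a = max(0.0, share_a - shift)
        history.append(share_a)
    return history


# Neighborhood A starts out slightly overpoliced (55% of patrols).
history = simulate_patrols(initial_share=0.55, rounds=5)
print([round(share, 2) for share in history])
```

Even though nothing distinguishes the two neighborhoods except the starting allocation, the small initial imbalance grows every round. With an even 50/50 start, the allocation never moves.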

Even shorter code changed the world: the tracking pixel.

Lily Hay Newman's chapter on tracking pixels says you probably interact with this code every day. It's a snippet of HTML that embeds a single tiny pixel in an email. When I get an email with a tracking pixel, it spies on me. Here's how: as soon as I open the email, my browser requests the single-pixel image. The sender checks whether Clive's browser has requested that pixel, and so can tell when I opened the message.
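The sender's side of that exchange can be sketched in a few lines of Python. Everything here is hypothetical: the endpoint URL, the `r` query parameter, and the function names are invented for illustration, and a real mailing service would run this inside a web server rather than calling the functions directly.

```python
from datetime import datetime, timezone
from urllib.parse import parse_qs, urlencode, urlparse

# Hypothetical address of the sender's image server.
PIXEL_ENDPOINT = "https://tracker.example/pixel.gif"


def pixel_tag(recipient_id: str) -> str:
    """Build an <img> tag whose URL is unique to one recipient."""
    query = urlencode({"r": recipient_id})
    return f'<img src="{PIXEL_ENDPOINT}?{query}" width="0" height="0">'


def record_open(requested_url: str, opens: dict) -> None:
    """Called when the image is requested: log who opened the email and when."""
    params = parse_qs(urlparse(requested_url).query)
    recipient = params.get("r", ["unknown"])[0]
    opens.setdefault(recipient, []).append(datetime.now(timezone.utc))


opens = {}
tag = pixel_tag("clive")                          # embedded in the outgoing email
record_open(f"{PIXEL_ENDPOINT}?r=clive", opens)   # the request made on opening
print(tag)
print({who: len(times) for who, times in opens.items()})
```

The trick is entirely in the unique URL: the image itself is invisible, but the request for it identifies the reader.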

Adding a tracking pixel to an email is easy:

<img src="URL LINKING TO THE PIXEL ONLINE" width="0" height="0">

An older example: Ellen R. Stofan and Nick Partridge wrote a chapter on Apollo 11's lunar module bailout code. This bailout code ran on the lunar module's tiny on-board computer and was designed to prioritize: if the computer became overloaded, it would discard all but the most vital work.

When the lunar module approached the moon, the computer became overloaded. The bailout code shut down anything non-essential to landing the module. It shut down certain lunar module display systems, scaring the astronauts. But the module landed safely.

The code is just 22 lines long:

POODOO INHINT

CA Q

TS ALMCADR

TC BANKCALL

CADR VAC5STOR # STORE ERASABLES FOR DEBUGGING PURPOSES.

INDEX ALMCADR

CAF 0

ABORT2 TC BORTENT

OCT77770 OCT 77770 # DONT MOVE

CA V37FLBIT # IS AVERAGE G ON

MASK FLAGWRD7

CCS A

TC WHIMPER -1 # YES. DONT DO POODOO. DO BAILOUT.

TC DOWNFLAG

ADRES STATEFLG

TC DOWNFLAG

ADRES REINTFLG

TC DOWNFLAG

ADRES NODOFLAG

TC BANKCALL

CADR MR.KLEAN

TC WHIMPER

This fun book is worth reading.

I'm a contributor to the New York Times Magazine, Wired, and Mother Jones. I've also written Coders: The Making of a New Tribe and the Remaking of the World and Smarter Than You Think: How Technology is Changing Our Minds. Twitter and Instagram: @pomeranian99; Mastodon: @clive@saturation.social.

Leonardo Castorina

3 years ago

How to Use Obsidian to Boost Research Productivity

Tools for managing your PhD projects, reading lists, notes, and inspiration.

As a researcher, you have to know everything. But knowledge is useless if it cannot be accessed quickly. An easy-to-use method of archiving information makes taking notes effortless and enjoyable.

As a PhD student in Artificial Intelligence, I use Obsidian (https://obsidian.md) to manage my knowledge.

The article has three parts:

- What is a note, how to organize notes, tags, folders, and links? This section is tool-agnostic, so you can use most of these ideas with any note-taking app.

- Instructions for using Obsidian, managing notes, reading lists, and useful plugins. This section demonstrates how I use Obsidian, my preferred knowledge management tool.

- Workflows: How to use Zotero to take notes from papers, manage multiple projects' notes, create MOCs with Dataview, and more. This section explains how to use Obsidian to solve common scientific problems and manage/maintain your knowledge effectively.

This list is not perfect or complete, but it is my current solution to problems I've encountered during my PhD. Please leave additional comments or contact me if you have any feedback. I'll try to update this article.

Throughout the article, I'll refer to your digital library as your "Obsidian Vault" or "Zettelkasten".

Other useful resources are listed at the end of the article.

1. Philosophy: Taking and organizing notes

Carl Sagan: “To make an apple pie from scratch, you must first create the universe.”

Before diving into Obsidian, let's establish what a Personal Knowledge Management System and a Zettelkasten are. You can skip to Section 2 if you already know these terms.

Niklas Luhmann, a prolific sociologist who wrote 400 papers and 70 books, inspired this section and much of the Zettelkasten method. Zettelkasten means “slip box” (or library in this article). His Zettelkasten had around 90,000 physical notes, which can be found online.

There are now many tools available to help with this process. Obsidian's website has a good introduction section: https://publish.obsidian.md/hub/

Notes

We'll start with "What is a note?" Although it may seem trivial, the answer depends on the topic or your note-taking style. The idea is that a note should be as “atomic” as possible, i.e. you should be able to read the note and get the idea right away.

The resolution of your notes depends on their detail. Deep Learning, for example, could be a general description of Neural Networks, with a few notes on the various architectures (e.g. Recurrent Neural Networks, Convolutional Neural Networks, etc.).

Limiting length and detail is a good rule of thumb. If you need more detail in a specific section of this note, break it up into smaller notes. Deep Learning now has three notes:

- Deep Learning

- Recurrent Neural Networks

- Convolutional Neural Networks

Repeat this step as needed until you achieve the desired granularity. You might want to put these notes in a “Neural Networks” folder because they are all about the same thing. But there's a better way:

#Tags and [[Links]] over /Folders/

The main issue with folders is that they are not flexible and assume that all notes in the folder belong to a single category. This makes it difficult to make connections between topics.

Deep Learning has been used to predict protein structure (AlphaFold) and classify images (ImageNet). Imagine a folder structure like this:

- /Proteins/

  - Protein Folding

- /Deep Learning/

  - /Proteins/

Your notes about Protein Folding and Convolutional Neural Networks will be separate, and you won't be able to find them in the same folder.

This can be solved in several ways. The most common one is to use tags rather than folders. A note can be grouped with multiple topics this way. Obsidian tags can also be nested (have subtags).

You can also link two notes together. This lets you build your “Knowledge Graph” in Obsidian and similar note-taking apps.
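Because notes are plain Markdown, the links and tags that make up this graph are easy to extract yourself. The sketch below is not how Obsidian builds its graph internally. It is a simplified approximation in Python, the regular expressions cover only the common `[[Note]]`, `[[Note|alias]]`, and `#tag/subtag` forms, and the three sample notes are made up:

```python
import re

# [[Target]], [[Target|alias]] and [[Target#Heading]] all point at "Target".
LINK_RE = re.compile(r"\[\[([^\]|#]+)")
# A tag is "#" followed directly by a letter; "# Heading" (with a space) is not.
TAG_RE = re.compile(r"(?<!\S)#([A-Za-z][\w/-]*)")


def outgoing_links(text: str) -> list[str]:
    """Titles of the notes this note links to."""
    return sorted({target.strip() for target in LINK_RE.findall(text)})


def tags(text: str) -> list[str]:
    """Tags used in the note, including nested ones such as ml/architectures."""
    return sorted(set(TAG_RE.findall(text)))


def backlinks(notes: dict[str, str]) -> dict[str, list[str]]:
    """For every note, list the notes that link to it."""
    incoming = {title: [] for title in notes}
    for title, text in notes.items():
        for target in outgoing_links(text):
            incoming.setdefault(target, []).append(title)
    return incoming


# Made-up sample vault.
notes = {
    "Deep Learning": "See [[Convolutional Neural Networks]] and [[Protein Folding|folding]]. #ml",
    "Convolutional Neural Networks": "# CNNs\nUsed for ImageNet. Part of [[Deep Learning]]. #ml/architectures",
    "Protein Folding": "AlphaFold applies [[Deep Learning]]. #bio/proteins",
}

print(backlinks(notes)["Deep Learning"])
print(tags(notes["Convolutional Neural Networks"]))
```

Here the Protein Folding note carries a biology tag yet still connects to Deep Learning through a link, which is exactly the kind of cross-topic connection a folder hierarchy cannot express.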

My Knowledge Graph. Green: Biology, Red: Machine Learning, Yellow: Autoencoders, Blue: Graphs, Brown: Tags.

My Knowledge Graph and the note “Backpropagation” and its links.

Why use Folders?

Folders help organize your vault as it grows. The main suggestion is to have few folders that "weakly" collect groups of notes or better yet, notes from different sources.

Among my Zettelkasten folders are:

My Zettelkasten's 5 folders

They usually gather data from various sources:

MOC: Map of Contents for the Zettelkasten.

Projects: Contains one note for each side-project of my PhD where I log my progress and ideas. Notes are linked to these.

Bio and ML: These two are the main content of my Zettelkasten and could theoretically be combined.

Papers: All my scientific paper notes go here. The notes are linked through a bibliography, a .bib file exported from Zotero.

Books: I make a note for each book I read, which I then split into multiple notes.

Keeping images separate from other files can help keep your main folders clean.

I will elaborate on these in the Workflow Section.

My general recommendation is to use tags and links instead of folders.

Maps of Content (MOC)

A good solution is to make Maps of Content (MOCs).

These are notes that act as "signposts" for your Zettelkasten library, directing you to the right type of notes. A MOC can link to other notes based on common tags. This is usually done with a title, followed by the notes related to that title. As an example:

An example of a Machine Learning MOC generated with Dataview.

As shown above, my Machine Learning MOC begins with the basics. Then it's on to Variational Auto-Encoders. Not only does this save time, but it also saves scrolling through the tag search section.

So I keep MOCs at the top of my library so I can quickly find information and see my library. These MOCs are generated automatically using an Obsidian Plugin called Dataview (https://github.com/blacksmithgu/obsidian-dataview).

Ideally, MOCs could be expanded to include more information about the notes, their status, and what's left to do. In the absence of this, Dataview does a fantastic job at creating a good structure for your notes.
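To make the idea of an automatically generated MOC concrete, here is a small Python sketch that groups notes by tag and emits a Markdown index. It is only a stand-in for what Dataview does inside Obsidian, not Dataview's own mechanism, and the note titles and tags are made-up examples:

```python
def build_moc(note_tags: dict[str, list[str]], title: str) -> str:
    """Return a Markdown Map of Content with one section per tag."""
    groups: dict[str, list[str]] = {}
    for note, tags in note_tags.items():
        for tag in tags:
            groups.setdefault(tag, []).append(note)

    lines = [f"# {title}", ""]
    for tag, notes in groups.items():  # sections appear in first-seen order
        lines.append(f"## {tag}")
        lines.extend(f"- [[{note}]]" for note in sorted(notes))
        lines.append("")
    return "\n".join(lines)


# Hypothetical notes and their tags.
note_tags = {
    "Neural Networks": ["basics"],
    "Backpropagation": ["basics"],
    "Variational Auto-Encoders": ["autoencoders"],
}

moc = build_moc(note_tags, title="Machine Learning MOC")
print(moc)
```

The output is an ordinary note full of `[[links]]`, so it behaves like any other note in the vault. The difference with Dataview is that its tables refresh themselves as the vault changes, whereas this script would have to be re-run.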

2. Tools: Knowing Obsidian

Obsidian is my preferred tool because it is free, all notes are stored in Markdown format, and each panel can be dragged and dropped. You can get it here: https://obsidian.md/

Obsidian interface.

Obsidian is highly customizable, so here is my preferred interface:

The theme is customized from https://github.com/colineckert/obsidian-things

Alternatively, each panel can be collapsed, moved, or removed as desired. To open a panel later, click on the vertical "..." (bottom left of the note panel).

My interface is organized as follows:

How my Obsidian Interface is organized.

Folders/Search:

This is where I keep all relevant folders. I usually use the MOC note to navigate, but sometimes I use the search button to find a note.

Tags:

I use nested tags and look into each one to find specific notes to link.

cMenu:

Easy-to-use menu plugin cMenu (https://github.com/chetachiezikeuzor/cMenu-Plugin)

Global Graph:

The global graph shows all your notes (linked and unlinked). Linked notes will appear closer together. Zoom in to read each note's title. It's a bit overwhelming at first, but as your library grows, you get used to the positions and start thinking of new connections between notes.

Local Graph:

Your current note will be shown in relation to other linked notes in your library. When needed, you can quickly jump to another link and back to the current note.

Links:

Finally, an outline panel and the plugin Obsidian Power Search (https://github.com/aviral-batra/obsidian-power-search) allow me to search my vault by highlighting text.

Start using the tool and worry about panel positioning later. I encourage you to find the best use-case for your library.

Plugins

An additional benefit of using Obsidian is the large plugin library. I use several (Calendar, Citations, Dataview, Templater, Admonition):

Obsidian Calendar Plugin: https://github.com/liamcain

It organizes your notes on a calendar. This is ideal for meeting notes or keeping a journal.

Calendar plugin, from liamcain.

Obsidian Citation Plugin: https://github.com/hans/

Allows you to cite papers from a .bib file. You can also customize your notes (e.g. Title, Authors, Abstract, etc.).

Citation plugin, from hans/obsidian-citation-plugin.

Obsidian Dataview: https://github.com/blacksmithgu/

A powerful plugin that allows you to query your library as a database and generate content automatically. See the MOC section for an example.

Templater:

Allows you to create notes with specific templates like dates, tags, and headings.

Obsidian Admonition: https://github.com/valentine195/obsidian-admonition

Admonition blocks allow you to organize your notes.

Admonition plugin, from valentine195/obsidian-admonition.

There are many more, but this list should get you started.

3. Workflows: Cool stuff

Here are a few of my workflows for using Obsidian for scientific research, along with resources I've found useful for my use-cases. I'll outline and describe them briefly so you can skim them quickly.

3.1 Using Templates to Structure Notes

3.2 Free Note Syncing (Laptop, Phone, Tablet)

3.3 Zotero/Mendeley/JabRef -> Obsidian — Managing Reading Lists

3.4 Projects and Lab Books

3.5 Private Encrypted Diary

3.1 Using Templates to Structure Notes

Plugins: Templater and Dataview (optional).

To take effective notes, you must first make adding new notes as easy as possible. Templates can save you time and give your notes a consistent structure. As an example:

An example of a note using a template.

### [[YOUR MOC]]

# Note Title of your note

**Tags**::

**Links**::

The top line links to your knowledge base's Map of Content (MOC) (see previous sections). After the title, I add tags (and a link between the note and the tag) and links to related notes.

To quickly identify all notes that need to be expanded, I add the tag “#todo”. In the “TODO:” section, I list the tasks within the note.

The rest are notes on the topic.
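If you ever want to list the “#todo” notes outside Obsidian, a minimal Python sketch could look like the one below. It assumes your vault is a plain folder of .md files; the function name `notes_with_todo` is hypothetical and not part of Obsidian or any plugin.

```python
import re
from pathlib import Path

# Matches the tag "#todo" (any letter case) but not longer tags such as "#todolist".
TODO_TAG = re.compile(r"(?<![\w/#])#todo(?![\w/-])", re.IGNORECASE)


def notes_with_todo(vault_dir):
    """Return the sorted relative paths of Markdown notes that contain #todo."""
    vault = Path(vault_dir)
    hits = []
    for note in vault.rglob("*.md"):
        relative = note.relative_to(vault)
        # Skip Obsidian's own settings folder.
        if ".obsidian" in relative.parts:
            continue
        if TODO_TAG.search(note.read_text(encoding="utf-8")):
            hits.append(relative.as_posix())
    return sorted(hits)
```

Calling `notes_with_todo("path/to/your/vault")` returns the notes that still need expanding, which is the same list Obsidian shows when you click the tag.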

Templater can help you create these templates. For new books, I use the following template:

### [[Books MOC]]

# Title

**Author**::

**Date**::

**Tags**::

**Links**::

A book template example.

Using a simple query, I can hook Dataview to it.

dataview

table author as Author, date as "Date Finished", tags as "Tags", grade as "Grade"

from "4. Books"

SORT grade DESCENDING

using Dataview to query templates.
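To make it clearer what such a query does, here is a rough Python approximation. It is only a sketch and not how Dataview is implemented: it assumes each book note stores its metadata as `Key:: value` lines (with or without bold markers) and that `grade` is a number. The names `read_fields` and `book_table` are hypothetical.

```python
import re
from pathlib import Path

# Matches "Key:: value" and "**Key**:: value" at the start of a line.
FIELD = re.compile(r"^[ \t]*\*{0,2}([^*:\n]+?)\*{0,2}::[ \t]*(.*)$", re.MULTILINE)


def read_fields(text):
    """Return the inline fields of one note as a dict with lowercase keys."""
    return {key.strip().lower(): value.strip() for key, value in FIELD.findall(text)}


def grade_key(row):
    """Sort key: numeric grade, with missing or non-numeric grades last."""
    try:
        return float(row[3])
    except ValueError:
        return float("-inf")


def book_table(folder):
    """Return (note, author, date, grade) rows sorted by grade, highest first."""
    rows = []
    for note in sorted(Path(folder).rglob("*.md")):
        fields = read_fields(note.read_text(encoding="utf-8"))
        rows.append((
            note.stem,
            fields.get("author", ""),
            fields.get("date", ""),
            fields.get("grade", ""),
        ))
    rows.sort(key=grade_key, reverse=True)
    return rows
```

The point of the sketch is that the template's `Key::` lines act like database columns, so a consistent template is what makes the table possible.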

3.2 Free Note Syncing (Laptop, Phone, Tablet)

No plugins used.

One of my favorite features of Obsidian is the library's self-contained and portable format. Your folder contains everything (plugins included).

The vault is made of ordinary folders and documents, plus a “.obsidian” folder. This folder contains all your plugins and settings, so the same setup carries over to your other devices.

This means you can sync for free with Google Drive, iCloud, or Dropbox, as long as your vault folder lives inside the folder that the cloud service syncs.

For my iOS and macOS work, I prefer iCloud. You can also use the paid service Obsidian Sync.
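A quick way to check this setup is sketched below in Python. It assumes a vault can be recognized by its “.obsidian” folder and that you know where your cloud service keeps its synced folder; the function names and any paths you pass in are hypothetical.

```python
from pathlib import Path


def is_vault(folder):
    """A folder opened as a vault in Obsidian contains a ".obsidian" directory."""
    return (Path(folder) / ".obsidian").is_dir()


def is_synced_vault(folder, cloud_root):
    """True if `folder` is a vault located inside (or equal to) `cloud_root`."""
    vault = Path(folder).resolve()
    cloud = Path(cloud_root).resolve()
    return is_vault(vault) and (vault == cloud or cloud in vault.parents)
```

For example, `is_synced_vault("~/Dropbox/Notes", "~/Dropbox")` would need both paths expanded first with `Path.expanduser()`.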

3.3 Zotero/Mendeley/JabRef -> Obsidian: Taking Notes and Managing Reading Lists of Scientific Papers

Plugins: Citations (required).

My preferred reference manager is Zotero, but this workflow should work with any reference manager that produces a .bib file. This file is exported to my cloud folder so I can access it from any platform.

My Zotero library is tagged as follows:

My reference manager's tags

For readings, I usually search for the tags “!!!” and “To-Read” and select a paper. I then annotate the paper (either on the PDF using GoodNotes or on physical paper).

Then I make a paper page using a template in the Citations plugin settings:

An example of my citations template.

Create a new note, open the command palette with CMD/CTRL + P, and run the Citations command “Insert literature note content in the current pane” to see this lovely view.

Citation generated from the article https://doi.org/10.1101/2022.01.24.22269144

You can then transcribe your annotations into this note. I found that transcribing helped me retain information better.

3.4 Projects and Lab Books

Plugins: Templater (required).

It is common for PhD students to offer advice (read: regrets) on thesis writing. I started asking them what they would have done differently or earlier.

“Deep stuff, Leo,” one person said. “My main issue was basic organization: losing track of my tasks and the reasons for them.

As a result, I'd go off on other experiments that didn't make sense, and have to reverse engineer my logic for thesis writing.” - PhD student, now wise Postdoc

Time management requires planning. Keeping track of multiple projects and lab books is difficult during a PhD. Here is how I deal with it:

- One folder for all my projects

- One file for each project

I use a template to create each project:

### [[Projects MOC]]

# <% tp.file.title %>

**Tags**::

**Links**::

**URL**::

**Project Description**::

## Notes:

### <% tp.file.last_modified_date("dddd Do MMMM YYYY") %>

#### Done:

#### TODO:

#### Notes:

You can insert a template into a new note by pressing CMD/CTRL + P and looking for the Templater option.

I then keep adding new days with another template:

### <% tp.file.last_modified_date("dddd Do MMMM YYYY") %>

#### Done:

#### TODO:

#### Notes:

This way you can keep adding days to your project, recording your reasoning, what you have done, and what you still have to do. An example is shown below:

Example of project note with timestamped notes.
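For readers who want to see what the daily template expands to, here is a small Python sketch that builds the same section and appends it to a project file. It is not Templater itself: it takes the date as an argument instead of using the file's last-modified date, and the function names are hypothetical.

```python
from datetime import date

DAYS = ["Monday", "Tuesday", "Wednesday", "Thursday", "Friday", "Saturday", "Sunday"]
MONTHS = ["January", "February", "March", "April", "May", "June", "July",
          "August", "September", "October", "November", "December"]


def ordinal(day):
    """Return the day of the month with its English suffix, e.g. 24 -> "24th"."""
    if 11 <= day % 100 <= 13:
        suffix = "th"
    else:
        suffix = {1: "st", 2: "nd", 3: "rd"}.get(day % 10, "th")
    return f"{day}{suffix}"


def daily_heading(day):
    """Mimic the "dddd Do MMMM YYYY" format used in the template."""
    return f"{DAYS[day.weekday()]} {ordinal(day.day)} {MONTHS[day.month - 1]} {day.year}"


def daily_section(day):
    """Build the heading plus the empty Done/TODO/Notes subsections."""
    return f"### {daily_heading(day)}\n\n#### Done:\n\n#### TODO:\n\n#### Notes:\n"


def append_day(note_path, day=None):
    """Append a new dated section to the end of a project note."""
    day = day or date.today()
    with open(note_path, "a", encoding="utf-8") as note:
        note.write("\n" + daily_section(day))
```

Because every day is appended at the end, the project file stays a chronological lab book that you can read top to bottom when writing up.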

3.5 Private Encrypted Diary

This is one of my favorite Obsidian uses.

Mini Diary's interface has long frustrated me. After the author archived the project, I looked for a replacement. I had two demands:

- It had to be private: nobody else should be able to read the entries.

- Cloud syncing was required for editing on multiple devices.

Then I learned that I could encrypt the Obsidian folder. You decrypt the folder, open it with Obsidian, and sync the encrypted folder as usual.

Use Cryptomator (https://cryptomator.org/). Create an encrypted folder in Cryptomator for your Obsidian vault, set a password, and let it do the rest.

Conclusion

So, I hope this was helpful!

In the first section of the article, we discussed notes and note-taking techniques. We discussed when to use tags and links over folders and when to break up larger notes.

Then we learned about Obsidian, its interface, and some useful plugins like Citations for citing papers and Templater for creating note templates.

Finally, we discussed workflows and how to use Zotero to take notes from scientific papers, as well as managing Lab Books and Private Encrypted Diaries.

Thanks for reading and commenting :)
