More on Entrepreneurship/Creators

Jenn Leach

3 years ago

What TikTok Paid Me in 2021 with 100,000 Followers

I thought it would be interesting to share how much TikTok paid me in 2021.

Onward!

Oh, you get paid by TikTok?

Yes.

They compensate thousands of creators. My Tik Tok account

I launched my account in March 2020 and generally post about money, finance, and side hustles.

TikTok creators are paid in several ways.

Fund for TikTok creators

Sponsorships (aka brand deals)

Affiliate promotion

My own creations

Only one, the TikTok Creator Fund, pays me.

The TikTok Creator Fund: What Is It?

TikTok's initiative pays creators.

YouTube's Shorts Fund, Snapchat Spotlight, and other platforms have similar programs.

Creator Fund doesn't pay everyone. Some prerequisites are:

age requirement of at least 18 years

In the past 30 days, there must have been 100,000 views.

a minimum of 10,000 followers

If you qualify, you can apply using your TikTok account, and once accepted, your videos can earn money.

My earnings from the TikTok Creator Fund

Since 2020, I've made $273.65. My 2021 payment is $77.36.

Yikes!

I made between $4.91 to around $13 payout each time I got paid.

TikTok reportedly pays 3 to 5 cents per thousand views.

To live off the Creator Fund, you'd need billions of monthly views.

Top personal finance creator Sara Finance has millions (if not billions) of views and over 700,000 followers yet only received $3,000 from the TikTok Creator Fund.

Goals for 2022

TikTok pays me in different ways, as listed above.

My largest TikTok account isn't my only one.

In 2022, I'll revamp my channel.

It's been a tumultuous year on TikTok for my account, from getting shadow-banned to being banned from the Creator Fund to being accepted back (not at my wish).

What I've experienced isn't rare. I've read about other creators' experiences.

So, some quick goals for this account…

200,000 fans by the year 2023

Consistent monthly income of $5,000

two brand deals each month

For now, that's all.

Pat Vieljeux

3 years ago

The three-year business plan is obsolete for startups.

If asked, run.

An entrepreneur asked me about her pitch deck. A Platform as a Service (PaaS).

She told me she hadn't done her 5-year forecasts but would soon.

I said, Don't bother. I added "time-wasting."

“I've been asked”, she said.

“Who asked?”

“a VC”

“5-year forecast?”

“Yes”

“Get another VC. If he asks, it's because he doesn't understand your solution or to waste your time.”

Some VCs are lagging. They're still using steam engines.

10-years ago, 5-year forecasts were requested.

Since then, we've adopted a 3-year plan.

But It's outdated.

Max one year.

What has happened?

Revolutionary technology. NO-CODE.

Revolution's consequences?

Product viability tests are shorter. Hugely. SaaS and PaaS.

Let me explain:

Building a minimum viable product (MVP) that works only takes a few months.

1 to 2 months for practical testing.

Your company plan can be validated or rejected in 4 months as a consequence.

After validation, you can ask for VC money. Even while a prototype can generate revenue, you may not require any.

Good VCs won't ask for a 3-year business plan in that instance.

One-year, though.

If you want, establish a three-year plan, but realize that the second year will be different.

You may have changed your business model by then.

A VC isn't interested in a three-year business plan because your solution may change.

Your ability to create revenue will be key.

But also, to pivot.

They will be interested in your value proposition.

They will want to know what differentiates you from other competitors and why people will buy your product over another.

What will interest them is your resilience, your ability to bounce back.

Not to mention your mindset. The fact that you won’t get discouraged at the slightest setback.

The grit you have when facing adversity, as challenges will surely mark your journey.

The authenticity of your approach. They’ll want to know that you’re not just in it for the money, let alone to show off.

The fact that you put your guts into it and that you are passionate about it. Because entrepreneurship is a leap of faith, a leap into the void.

They’ll want to make sure you are prepared for it because it’s not going to be a walk in the park.

They’ll want to know your background and why you got into it.

They’ll also want to know your family history.

And what you’re like in real life.

So a 5-year plan…. You can bet they won’t give a damn. Like their first pair of shoes.

Aaron Dinin, PhD

3 years ago

I put my faith in a billionaire, and he destroyed my business.

How did his money blind me?

Like most fledgling entrepreneurs, I wanted a mentor. I met as many nearby folks with "entrepreneur" in their LinkedIn biographies for coffee.

These meetings taught me a lot, and I'd suggest them to any new creator. Attention! Meeting with many experienced entrepreneurs means getting contradictory advice. One entrepreneur will tell you to do X, then the next one you talk to may tell you to do Y, which are sometimes opposites. You'll have to chose which suggestion to take after the chats.

I experienced this. Same afternoon, I had two coffee meetings with experienced entrepreneurs. The first meeting was with a billionaire entrepreneur who took his company public.

I met him in a swanky hotel lobby and ordered a drink I didn't pay for. As a fledgling entrepreneur, money was scarce.

During the meeting, I demoed the software I'd built, he liked it, and we spent the hour discussing what features would make it a success. By the end of the meeting, he requested I include a killer feature we both agreed would attract buyers. The feature was complex and would require some time. The billionaire I was sipping coffee with in a beautiful hotel lobby insisted people would love it, and that got me enthusiastic.

The second meeting was with a young entrepreneur who had recently raised a small amount of investment and looked as eager to pitch me as I was to pitch him. I forgot his name. I mostly recall meeting him in a filthy coffee shop in a bad section of town and buying his pricey cappuccino. Water for me.

After his pitch, I demoed my app. When I was done, he barely noticed. He questioned my customer acquisition plan. Who was my client? What did they offer? What was my plan? Etc. No decent answers.

After our meeting, he insisted I spend more time learning my market and selling. He ignored my questions about features. Don't worry about features, he said. Customers will request features. First, find them.

Putting your faith in results over relevance

Problems plagued my afternoon. I met with two entrepreneurs who gave me differing advice about how to proceed, and I had to decide which to pursue. I couldn't decide.

Ultimately, I followed the advice of the billionaire.

Obviously.

Who wouldn’t? That was the guy who clearly knew more.

A few months later, I constructed the feature the billionaire said people would line up for.

The new feature was unpopular. I couldn't even get the billionaire to answer an email showing him what I'd done. He disappeared.

Within a few months, I shut down the company, wasting all the time and effort I'd invested into constructing the killer feature the billionaire said I required.

Would follow the struggling entrepreneur's advice have saved my company? It would have saved me time in retrospect. Potential consumers would have told me they didn't want what I was producing, and I could have shut down the company sooner or built something they did want. Both outcomes would have been better.

Now I know, but not then. I favored achievement above relevance.

Success vs. relevance

The millionaire gave me advice on building a large, successful public firm. A successful public firm is different from a startup. Priorities change in the last phase of business building, which few entrepreneurs reach. He gave wonderful advice to founders trying to double their stock values in two years, but it wasn't beneficial for me.

The other failing entrepreneur had relevant, recent experience. He'd recently been in my shoes. We still had lots of problems. He may not have achieved huge success, but he had valuable advice on how to pass the closest hurdle.

The money blinded me at the moment. Not alone So much of company success is defined by money valuations, fundraising, exits, etc., so entrepreneurs easily fall into this trap. Money chatter obscures the value of knowledge.

Don't base startup advice on a person's income. Focus on what and when the person has learned. Relevance to you and your goals is more important than a person's accomplishments when considering advice.

You might also like

Sam Warain

2 years ago

The Brilliant Idea Behind Kim Kardashian's New Private Equity Fund

Kim Kardashian created Skky Partners. Consumer products, internet & e-commerce, consumer media, hospitality, and luxury are company targets.

Some call this another Kardashian publicity gimmick.

This maneuver is brilliance upon closer inspection. Why?

1) Kim has amassed a sizable social media fan base:

Over 320 million Instagram and 70 million Twitter users follow Kim Kardashian.

Kim Kardashian's Instagram account ranks 8th. Three Kardashians in top 10 is ridiculous.

This gives her access to consumer data. She knows what people are discussing. Investment firms need this data.

Quality, not quantity, of her followers matters. Studies suggest that her following are more engaged than Selena Gomez and Beyonce's.

Kim's followers are worth roughly $500 million to her brand, according to a research. They trust her and buy what she recommends.

2) She has a special aptitude for identifying trends.

Kim Kardashian can sense trends.

She's always ahead of fashion and beauty trends. She's always trying new things, too. She doesn't mind making mistakes when trying anything new. Her desire to experiment makes her a good business prospector.

Kim has also created a lifestyle brand that followers love. Kim is an entrepreneur, mom, and role model, not just a reality TV star or model. She's established a brand around her appearance, so people want to buy her things.

Her fragrance collection has sold over $100 million since its 2009 introduction, and her Sears apparel line did over $200 million in its first year.

SKIMS is her latest $3.2bn brand. She can establish multibillion-dollar firms with her enormous distribution platform.

Early founders would kill for Kim Kardashian's network.

Making great products is hard, but distribution is more difficult. — David Sacks, All-in-Podcast

3) She can delegate the financial choices to Jay Sammons, one of the greatest in the industry.

Jay Sammons is well-suited to develop Kim Kardashian's new private equity fund.

Sammons has 16 years of consumer investing experience at Carlyle. This will help Kardashian invest in consumer-facing enterprises.

Sammons has invested in Supreme and Beats Electronics, both of which have grown significantly. Sammons' track record and competence make him the obvious choice.

Kim Kardashian and Jay Sammons have joined forces to create a new business endeavor. The agreement will increase Kardashian's commercial empire. Sammons can leverage one of the world's most famous celebrities.

“Together we hope to leverage our complementary expertise to build the next generation consumer and media private equity firm” — Kim Kardashian

Kim Kardashian is a successful businesswoman. She developed an empire by leveraging social media to connect with fans. By developing a global lifestyle brand, she has sold things and experiences that have made her one of the world's richest celebrities.

She's a shrewd entrepreneur who knows how to maximize on herself and her image.

Imagine how much interest Kim K will bring to private equity and venture capital.

I'm curious about the company's growth.

Sammy Abdullah

3 years ago

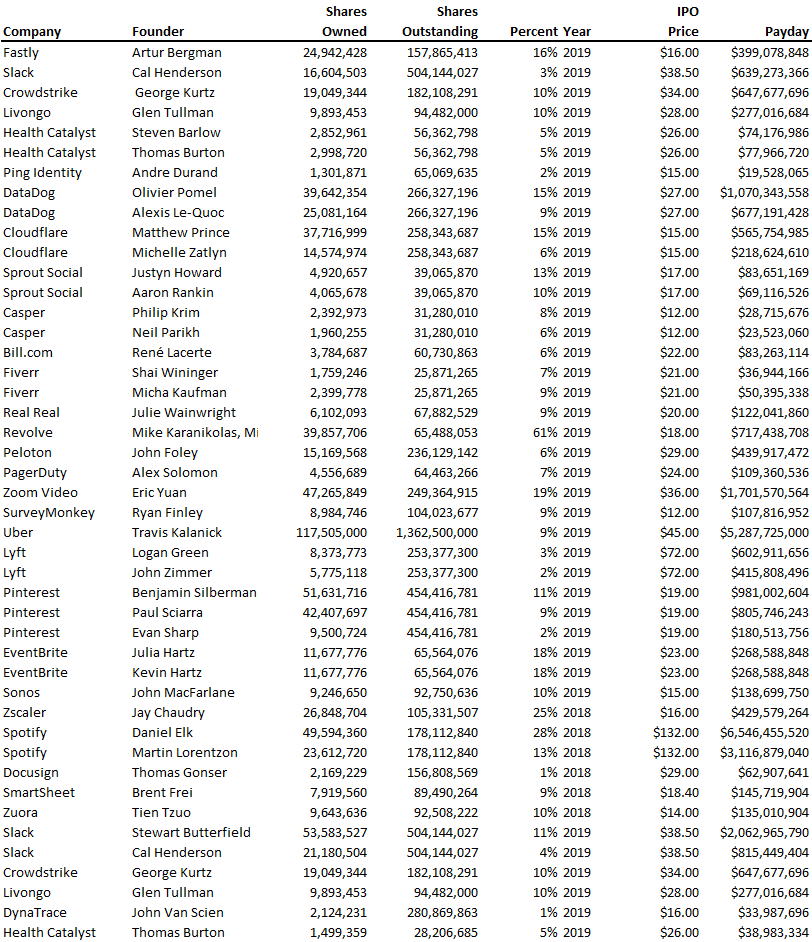

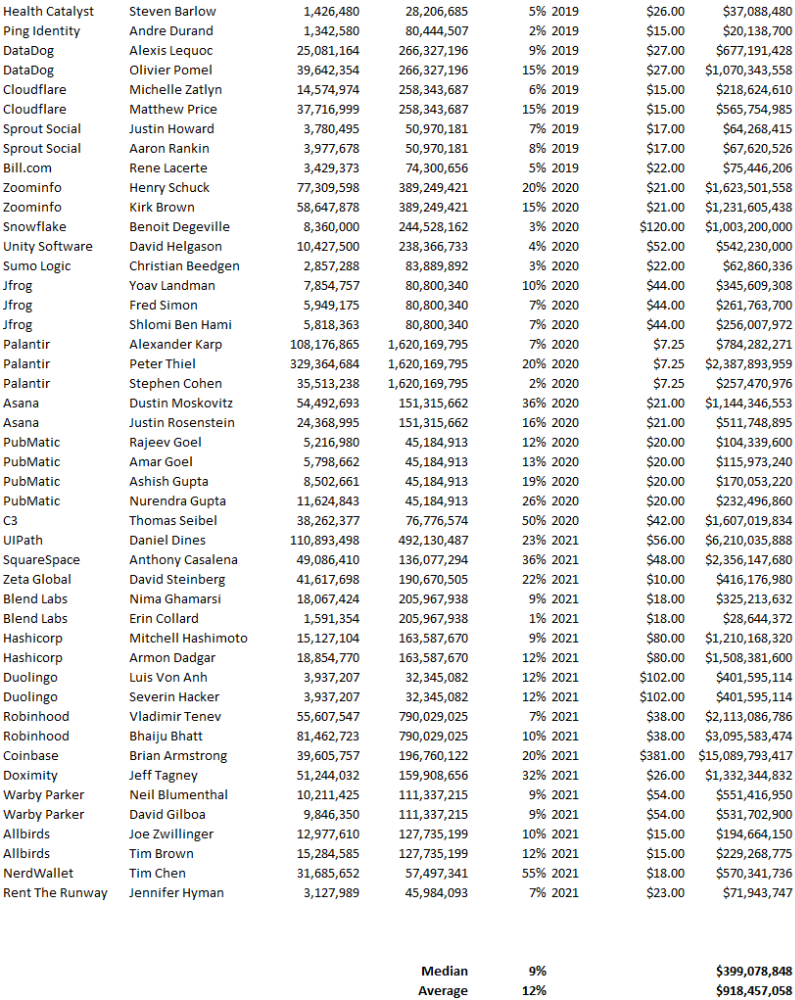

Payouts to founders at IPO

How much do startup founders make after an IPO? We looked at 2018's major tech IPOs. Paydays aren't what founders took home at the IPO (shares are normally locked up for 6 months), but what they were worth at the IPO price on the day the firm went public. It's not cash, but it's nice. Here's the data.

Several points are noteworthy.

Huge payoffs. Median and average pay were $399m and $918m. Average and median homeownership were 9% and 12%.

Coinbase, Uber, UI Path. Uber, Zoom, Spotify, UI Path, and Coinbase founders raised billions. Zoom's founder owned 19% and Spotify's 28% and 13%. Brian Armstrong controlled 20% of Coinbase at IPO and was worth $15bn. Preserving as much equity as possible by staying cash-efficient or raising at high valuations also helps.

The smallest was Ping. Ping's compensation was the smallest. Andre Duand owned 2% but was worth $20m at IPO. That's less than some billion-dollar paydays, but still good.

IPOs can be lucrative, as you can see. Preserving equity could be the difference between a $20mm and $15bln payday (Coinbase).

Sam Hickmann

3 years ago

Donor-Advised Fund Tax Benefits (DAF)

Giving through a donor-advised fund can be tax-efficient. Using a donor-advised fund can reduce your tax liability while increasing your charitable impact.

Grow Your Donations Tax-Free.

Your DAF's charitable dollars can be invested before being distributed. Your DAF balance can grow with the market. This increases grantmaking funds. The assets of the DAF belong to the charitable sponsor, so you will not be taxed on any growth.

Avoid a Windfall Tax Year.

DAFs can help reduce tax burdens after a windfall like an inheritance, business sale, or strong market returns. Contributions to your DAF are immediately tax deductible, lowering your taxable income. With DAFs, you can effectively pre-fund years of giving with assets from a single high-income event.

Make a contribution to reduce or eliminate capital gains.

One of the most common ways to fund a DAF is by gifting publicly traded securities. Securities held for more than a year can be donated at fair market value and are not subject to capital gains tax. If a donor liquidates assets and then donates the proceeds to their DAF, capital gains tax reduces the amount available for philanthropy. Gifts of appreciated securities, mutual funds, real estate, and other assets are immediately tax deductible up to 30% of Adjusted gross income (AGI), with a five-year carry-forward for gifts that exceed AGI limits.

Using Appreciated Stock as a Gift

Donating appreciated stock directly to a DAF rather than liquidating it and donating the proceeds reduces philanthropists' tax liability by eliminating capital gains tax and lowering marginal income tax.

In the example below, a donor has $100,000 in long-term appreciated stock with a cost basis of $10,000:

Using a DAF would allow this donor to give more to charity while paying less taxes. This strategy often allows donors to give more than 20% more to their favorite causes.

For illustration purposes, this hypothetical example assumes a 35% income tax rate. All realized gains are subject to the federal long-term capital gains tax of 20% and the 3.8% Medicare surtax. No other state taxes are considered.

The information provided here is general and educational in nature. It is not intended to be, nor should it be construed as, legal or tax advice. NPT does not provide legal or tax advice. Furthermore, the content provided here is related to taxation at the federal level only. NPT strongly encourages you to consult with your tax advisor or attorney before making charitable contributions.