More on Personal Growth

Daniel Vassallo

3 years ago

Why I quit a $500K job at Amazon to work for myself

I quit my 8-year Amazon job last week. I wasn't motivated to do another year despite promotions, pay, recognition, and praise.

In AWS, I built developer tools. I could have worked in that field forever.

I became an Amazon developer. Within 3.5 years, I was promoted twice to senior engineer and would have been promoted to principal engineer if I stayed. The company said I had great potential.

Over time, I became a reputed expert and leader within the company. I was respected.

First year I made $75K, last year $511K. If I stayed another two years, I could have made $1M.

Despite Amazon's reputation, my work–life balance was good. I no longer needed to prove myself and could do everything in 40 hours a week. My team worked from home once a week, and I rarely opened my laptop nights or weekends.

My coworkers were great. I had three generous, empathetic managers. I’m very grateful to everyone I worked with.

Everything was going well and getting better. My motivation to go to work each morning was declining despite my career and income growth.

Another promotion, pay raise, or big project wouldn't have boosted my motivation. Something else was waning along with it: my freedom.

Demotivation

My motivation was high in the beginning. I worked with one other person on an internal tool that drew little scrutiny, and I had more freedom to choose how and what to work on than in recent years. The two of us improved it, talked to users, released updates, and tested it. Whatever we wanted, we did. We did our best and were mostly self-directed.

In recent years, things have changed. My department's most important project had many stakeholders and complex goals. What I could do depended on my ability to convince others it was the best way to achieve our goals.

At Amazon, I was always working on someone else's terms. The terms started out simple (keep fixing it), but became more complex over time (maximize all goals; satisfy all stakeholders). Working in a large organization imposed restrictions on how to do the work, what to do, what goals to set, and what business to pursue. This situation forced me to do things I didn't want to do.

Finding New Motivation

What would I do forever? Not something I did until I reached a milestone (an exit), but something I'd do until I'm 80. What could I do for the next 45 years that would make me excited to wake up and pay my bills? Is that too unambitious? Nope. Because I'm motivated by two things.

One is an external carrot or stick. I'm not forced to file my taxes every April, but I do because I don't want to go to jail. Or I may not like something but do it anyway because I need to pay the bills or want a nice car. This is extrinsic motivation.

The other is internal. When there's no carrot or stick, this is what motivates me. It fuels hobbies. This is intrinsic motivation, and I wanted work that I was intrinsically motivated to do.

Is this too low-key? Extrinsic motivation isn't sustainable. Getting promoted felt good for a week, then it was over. When I hit $100K, I admired my W2 for a few days, but then it wore off. Same thing happened at $200K, $300K, $400K, and $500K. Earning $1M or $10M wouldn't change anything. I feel the same about every material reward or possession. Getting them feels good at first, but quickly fades.

Things I've done since I was a kid, when no one forced me to, don't wear off. Coding, selling my creations, charting my own path, and being honest. Why not always use my strengths and motivation? I'm lucky to live in a time when I can work independently in my field without large investments. So that’s what I’m doing.

What’s Next?

I'm going all-in on independence and will make a living from scratch. I won't get to do only what I like, but whatever I do will be on my own terms. My goal is to cover my family's expenses before my savings run out while doing something I enjoy. What more could I want from my work?

You can now follow me on Twitter as I continue to document my journey.

This post is a summary. Read full article here

Jari Roomer

3 years ago

Successful people have this one skill.

Without self-control, you'll waste time chasing dopamine fixes.

I found a powerful quote in Tony Robbins' Awaken The Giant Within:

“Most of the challenges that we have in our personal lives come from a short-term focus” — Tony Robbins

Most people are short-term oriented, but highly successful people are long-term oriented.

Successful people act in line with their long-term goals and values, while the rest are distracted by short-term pleasures and dopamine fixes.

Instant gratification wrecks lives

Instant pleasure is fleeting. Quickly fading effects leave you craving more stimulation.

Before you know it, you're in a cycle of quick fixes. This explains binging on food, social media, and Netflix.

These things cause a dopamine spike, which is entertaining. This dopamine spike crashes quickly, leaving you craving more stimulation.

It's fine to watch TV or play video games occasionally. Problems arise when brain impulses aren't controlled. You waste hours chasing dopamine fixes.

Instant gratification becomes problematic when it interferes with long-term goals, happiness, and life fulfillment.

Most rewarding things require delay

Life's greatest rewards require patience and delayed gratification. They must be earned through patience, consistency, and effort.

Examples:

A fit, healthy body

A deep connection with your spouse

A thriving career/business

A healthy financial situation

These are some of life's most rewarding things, but they take work and patience. They all require the ability to delay gratification.

To have a healthy bank account, you must save (and invest) a large portion of your monthly income. This means no new tech or clothes.

If you want a fit, healthy body, you must eat better and exercise three times a week. So no fast food and Netflix.

It's a battle between what you want now and what you want most.

Successful people choose what they want most over what they want now. It's a major difference.

Instant vs. delayed gratification

Most people subconsciously prefer instant rewards over future rewards, even if the future rewards are more significant.

We humans aren't logical. Emotions and instincts drive us. So we act against our goals and values.

Fortunately, instant gratification bias can be overridden. This is a modern superpower. Effective methods include:

#1: Train your brain to function without overstimulation

Training your brain to function without constant stimulation is a powerful change. Boredom can lead to long-term rewards.

Unlike impulsive shopping, saving money is boring. But having lots of cash is amazing.

Compared to video games, deep work is boring. But a successful online business is rewarding.

Reading books is boring compared to scrolling through funny videos on social media. But knowledge is invaluable.

You can't do these things if your brain is overstimulated. Your impulses will control you. To reduce overstimulation addiction, try:

Daily meditation (10 minutes is enough)

Daily study/work for 90 minutes (no distractions allowed)

First hour of the day without phone, social media, and Netflix

Nature walks, journaling, reading, sports, etc.

#2: Make Important Activities Less Intimidating

Instant gratification helps us cope with stress. Starting a book or business can be intimidating. Video games and social media offer a quick escape in such situations.

Make intimidating tasks less so by breaking them down into small tasks. Starting a new business or side hustle, for example, breaks down into:

Get domain name

Design website

Write out a business plan

Research competition/peers

Approach first potential client

Instead of one big mountain, divide it into smaller sub-tasks. This makes a task easier and less intimidating.

#3: Plan ahead for important activities

Distractions will invade unplanned time. Your time is then dictated by your impulses, which usually pull you toward Netflix, social media, fast food, and video games. Your brain wants quick rewards and dopamine fixes.

Plan your days and be proactive with your time. Studies show that scheduling activities makes you 3x more likely to do them.

To achieve big goals, you must plan. Don't gamble.

Want to get fit? Schedule next week's workouts. Want a side-job? Schedule your work time.

Scott Stockdale

3 years ago

A Day in the Life of Lex Fridman Can Help You Hit 6-Month Goals

The Lex Fridman podcast host has interviewed Elon Musk.

Lex is a minimalist YouTuber. His videos are sloppy. Suits are his trademark.

In a video, he shares a typical day. I've smashed my 6-month goals using its ideas.

Here's his schedule.

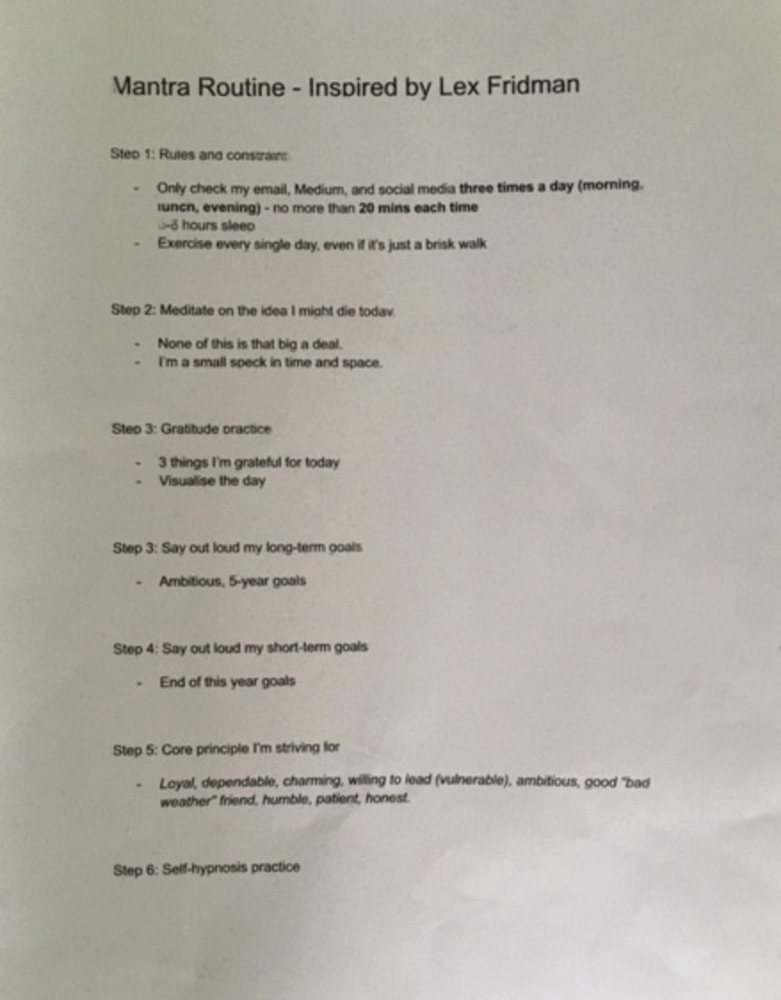

Morning Mantra

Not woo-woo. Lex's mantra reflects his practicality.

Four parts.

Rulebook

"I remember the game's rules," he says.

Among them:

Sleeping 6–8 hours nightly

1–3 times a day, he checks social media.

Every day, despite pain, he exercises. "I exercise uninjured body parts."

Visualize

He imagines his day. "Like Sims..."

He says three things he's grateful for and contemplates death.

"Today may be my last"

Objectives

Then he visualizes his goals. He starts big. Five-year goals.

Short-term goals follow. Lex says they're year-end goals.

Near but out of reach.

Principles

He lists his principles. Assertions. His goals.

He acknowledges his cliche beliefs. Compassion, empathy, and strength are key.

Four-Hour Deep Work

Lex begins a four-hour deep work session after his mantra routine. It's the toughest part of his day.

AI is Lex's specialty. His video doesn't explain what he does.

Clearly, he works hard.

Before starting, he has water, coffee, and a bathroom break.

"During deep work sessions, I minimize breaks."

He's distraction-free. Phoneless. Silence. Nothing. Any loose ideas are typed into a Google doc for later. He wants to work.

"Just get the job done. Don’t think about it too much and feel good once it’s complete." — Lex Fridman

30-Minute Social Media & Music

After his first deep work session, Lex rewards himself.

10 minutes on social media, 20 on music. Upload content and respond to comments in 10 minutes. 20 minutes for guitar or piano.

"In the real world, I’m currently single, but in the music world, I’m in an open relationship with this beautiful guitar. Open relationship because sometimes I cheat on her with the acoustic." — Lex Fridman

Two-hour exercise

Then exercise for two hours.

He runs six miles daily, then decides how much farther to go. The run takes about an hour.

He also does bodyweight exercises. Every minute for 15 minutes, he does five pull-ups and ten push-ups. It's David Goggins-inspired. He aims for an hour a day.

He's hungry. Before running, he takes a salt pill for electrolytes.

He'll then take a one-minute cold shower while listening to cheesy songs. Afterward, he might eat.

Four-Hour Deep Work

Lex's second work session.

He works 8 hours a day.

Again, zero distractions.

Eating

The video's meal doesn't look appetizing, but it's healthy.

It's ground beef with vegetables. Cauliflower is his "ground-floor" veggie. "Carrots are my go-to party food."

Lex's keto diet includes 1800–2000 calories.

He drinks a "nutrient-packed" Athletic Greens shake and takes several tablets:

One daily tablet of sodium.

Magnesium glycinate tablets stopped his keto headaches.

Potassium — "For electrolytes"

Fish oil: healthy joints

“So much of nutrition science is barely a science… I like to listen to my own body and do a one-person, one-subject scientific experiment to feel good.” — Lex Fridman

Four-hour shallow session

This work isn't as mentally taxing.

Lex planned to:

Finish last session's deep work (about an hour)

Podcast editing in Adobe Premiere (about two hours).

Email-check (about an hour). Three times a day max. First, check for emergencies.

If he's sick, he may watch Netflix or YouTube documentaries or visit friends.

“The possibilities of chaos are wide open, so I can do whatever the hell I want.” — Lex Fridman

Two-hour evening reading

Nonstop work.

Lex ends the day reading academic papers for an hour. "Today I'm skimming two machine learning and neuroscience papers"

This helps him "think beyond the paper."

He then reads fiction for an hour.

“When I have a lot of energy, I just chill on the bed and read… When I’m feeling tired, I jump to the desk…” — Lex Fridman

Takeaways

Lex's day-in-the-life video is inspiring.

He has positive energy and works hard every day.

Schedule:

Mantra Routine includes rules, visualizing, goals, and principles.

Deep Work Session #1: Four hours of focus.

10 minutes social media, 20 minutes guitar or piano. "Music brings me joy"

Six-mile run, then bodyweight workout. Two hours total.

Deep Work #2: Four hours with no distractions. Google Docs stores random thoughts.

Lex supplements his keto diet.

Shallow work session: four hours, "open to chaos."

Evening reading: academic papers followed by fiction.

"I value some things in life. Work is one. The other is loving others. With those two things, life is great." — Lex Fridman

You might also like

Jonathan Vanian

4 years ago

What is Terra? Your guide to the hot cryptocurrency

With cryptocurrencies like Bitcoin, Ether, and Dogecoin gyrating in value over the past few months, many people are looking at so-called stablecoins like Terra to invest in because of their more predictable prices.

Terraform Labs, which oversees the Terra cryptocurrency project, has benefited from its rising popularity. The company said recently that investors like Arrington Capital, Lightspeed Venture Partners, and Pantera Capital have pledged $150 million to help it incubate various crypto projects that are connected to Terra.

Terraform Labs and its partners have built apps that operate on the company’s blockchain technology that helps keep a permanent and shared record of the firm’s crypto-related financial transactions.

Here’s what you need to know about Terra and the company behind it.

What is Terra?

Terra is a blockchain project developed by Terraform Labs that powers the startup’s cryptocurrencies and financial apps. These cryptocurrencies include the Terra U.S. Dollar, or UST, that is pegged to the U.S. dollar through an algorithm.

Terra is a stablecoin that is intended to reduce the volatility endemic to cryptocurrencies like Bitcoin. Some stablecoins, like Tether, are pegged to more conventional currencies, like the U.S. dollar, through cash and cash equivalents as opposed to an algorithm and associated reserve token.

To mint new UST tokens, a percentage of another digital token and reserve asset, Luna, is “burned.” If the demand for UST rises with more people using the currency, more Luna will be automatically burned and diverted to a community pool. That balancing act is supposed to help stabilize the price, to a degree.
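The mint-and-burn relationship described above can be sketched as a toy model. This is only an illustration, not Terraform Labs' actual protocol: the `ToyTerra` class, its field names, the fixed Luna price, and the rule that one UST is minted per dollar of Luna burned are all assumptions made for the example, and the diversion of burned Luna to a community pool is left out.

```python
from dataclasses import dataclass


@dataclass
class ToyTerra:
    """Toy model of the UST/Luna mint-and-burn link (hypothetical, simplified)."""

    luna_supply: float     # Luna tokens in circulation
    ust_supply: float      # UST tokens in circulation
    luna_price_usd: float  # assumed external market price of one Luna

    def mint_ust(self, ust_amount: float) -> float:
        """Mint UST by burning an equal dollar value of Luna.

        Returns the number of Luna tokens burned.
        """
        # Assumption: 1 UST is minted for every $1 worth of Luna burned.
        luna_burned = ust_amount / self.luna_price_usd
        if luna_burned > self.luna_supply:
            raise ValueError("not enough Luna to burn")
        self.luna_supply -= luna_burned
        self.ust_supply += ust_amount
        return luna_burned


# Rising demand for UST means more minting, which shrinks the Luna supply.
pool = ToyTerra(luna_supply=1_000.0, ust_supply=0.0, luna_price_usd=50.0)
burned = pool.mint_ust(100.0)
print(burned, pool.luna_supply, pool.ust_supply)
```

In this sketch, minting 100 UST at an assumed Luna price of $50 burns 2 Luna. That is the sense in which more UST demand leads to more Luna being burned.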

“Luna directly benefits from the economic growth of the Terra economy, and it suffers from contractions of the Terra coin,” Terraform Labs CEO Do Kwon said.

Each time someone buys something—like an ice cream—using UST, that transaction generates a fee, similar to a credit card transaction. That fee is then distributed to people who own Luna tokens, similar to a stock dividend.

Who leads Terra?

The South Korean firm Terraform Labs was founded in 2018 by Daniel Shin and Kwon, who is now the company’s CEO. Kwon is a 29-year-old former Microsoft employee; Shin now heads the Chai online payment service, a Terra partner. Kwon said many Koreans have used the Chai service to buy goods like movie tickets using Terra cryptocurrency.

Terraform Labs does not make money from transactions using its crypto and instead relies on outside funding to operate, Kwon said. It has raised $57 million in funding from investors like HashKey Digital Asset Group, Divergence Digital Currency Fund, and Huobi Capital, according to deal-tracking service PitchBook. The amount raised is in addition to the latest $150 million funding commitment announced on July 16.

What are Terra’s plans?

Terraform Labs plans to use Terra’s blockchain and its associated cryptocurrencies—including one pegged to the Korean won—to create a digital financial system independent of major banks and fintech-app makers. So far, its main source of growth has been in Korea, where people have bought goods at stores, like coffee, using the Chai payment app that’s built on Terra’s blockchain. Kwon said the company’s associated Mirror trading app is experiencing growth in China and Thailand.

Meanwhile, Kwon said Terraform Labs would use its latest $150 million in funding to invest in groups that build financial apps on Terra’s blockchain. He likened the scouting and investing in other groups as akin to a “Y Combinator demo day type of situation,” a reference to the popular startup pitch event organized by early-stage investor Y Combinator.

The combination of all these Terra-specific financial apps shows that Terraform Labs is “almost creating a kind of bank,” said Ryan Watkins, a senior research analyst at cryptocurrency consultancy Messari.

In addition to cryptocurrencies, Terraform Labs has a number of other projects including the Anchor app, a high-yield savings account for holders of the group’s digital coins. Meanwhile, people can use the firm’s associated Mirror app to create synthetic financial assets that mimic more conventional ones, like “tokenized” representations of corporate stocks. These synthetic assets are supposed to be helpful to people like “a small retail trader in Thailand” who can more easily buy shares and “get some exposure to the upside” of stocks that they otherwise wouldn’t have been able to obtain, Kwon said. But some critics have said the U.S. Securities and Exchange Commission may eventually crack down on synthetic stocks, which are currently unregulated.

What do critics say?

Terra still has a long way to go to catch up to bigger cryptocurrency projects like Ethereum.

Most financial transactions involving Terra-related cryptocurrencies have originated in Korea, where its founders are based. Although Terra is becoming more popular in Korea thanks to rising interest in its partner Chai, it’s too early to say whether Terra-related currencies will gain traction in other countries.

Terra’s blockchain runs on a “limited number of nodes,” said Messari’s Watkins, referring to the computers that help keep the system running. That helps reduce latency that may otherwise slow processing of financial transactions, he said.

But the tradeoff is that Terra is less “decentralized” than other blockchain platforms like Ethereum, which is powered by thousands of interconnected computing nodes worldwide. That could make Terra less appealing to some blockchain purists.

The Secret Developer

3 years ago

What Elon Musk's Take on Bitcoin Teaches Us

Tesla Q2 earnings revealed unethical dealings.

As of end of Q2, we have converted approximately 75% of our Bitcoin purchases into fiat currency

That’s OK then, isn’t it?

Elon Musk, Tesla's CEO, is now untrustworthy.

It’s not about infidelity, it’s about doing the right thing

And what can we learn?

The Opening Remark

Musk tweets on his (and Tesla's) future goals.

Don’t worry, I’m not expecting you to read it.

What's crucial?

Tesla will not be selling any Bitcoin

The Situation as It Develops

In 2021, Tesla spent $1.5 billion on Bitcoin. In 2022, it sold 75% of its holdings for $946 million.

That’s a little bit of a waste of money, right?
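A rough back-of-the-envelope check of that claim, using only the figures quoted above. It assumes the 75% applies to the original $1.5 billion cost and ignores any earlier sales, fees, or accounting adjustments. The variable names are made up for the example.

```python
# Figures as quoted in this article (whole US dollars).
purchase_cost_usd = 1_500_000_000
percent_sold = 75
sale_proceeds_usd = 946_000_000

# Assumption: the 75% sold corresponds to 75% of the original purchase cost.
cost_basis_sold_usd = purchase_cost_usd * percent_sold // 100

# Negative means the sale brought in less than that share of the purchase cost.
implied_result_usd = sale_proceeds_usd - cost_basis_sold_usd

print(f"Cost of the Bitcoin sold: ${cost_basis_sold_usd:,}")
print(f"Implied result: ${implied_result_usd:,}")
```

Under those assumptions, the sale brought in roughly $179 million less than the Bitcoin sold had cost.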

Musk predicted the reverse would happen.

What gives? Why would someone say one thing, then do the polar opposite?

The Justification For Change

Tesla's public. They must follow regulations. When a corporation trades, they must record what happens.

At least this keeps Musk some way in line.

We now understand Musk and Tesla's actions.

Musk claimed that Tesla sold bitcoins to maximize cash given the unpredictability of COVID lockdowns in China.

Tesla may buy Bitcoin in the future, he said.

That’s fine then. He’s not knocking the NFT at least.

Tesla has moved investments into cash due to China lockdowns.

That doesn’t explain the 180° though

Musk's Tweet isn't company policy. Therefore, the CEO's change of heart reflects the organization. Look.

That's okay, since

Leaders alter their positions when circumstances change.

Leaders must adapt to their surroundings. This isn't embarrassing; it's a leadership prerequisite.

Yet

The Man

Someone stated if you're not in the office full-time, you need to explain yourself. He doesn't treat his employees like adults.

This is the individual mentioned in the quote.

If Elon was not happy, you knew it. Things could get nasty

Also, he fired his assistant for requesting a raise.

This public persona isn't good, and that's without mentioning his disastrous performances on Twitter (pedo dude) or Joe Rogan. This image sums up the odd podcast appearance:

Which describes the man.

I wouldn’t trust this guy to feed a cat

What we can discover

When Musk's company bet on Bitcoin, what happened?

Exactly what we would expect

The company's position altered without the CEO's awareness. He seems uncaring.

This article is about how something happened, not what happened. Change of thinking requires contrition.

This situation is about a lack of respect, although you might argue that followers on Twitter don’t deserve any

Tesla fans call the sale a great move.

It's absurd.

As you were, then.

Conclusion

Good luck if you gamble.

When they pay off, congrats!

When wrong, admit it.

You must take chances if you want to succeed.

Risks don't always pay off.

Mr. Musk lacks the insight and charisma to combine these two attributes.

I don’t like him, if you hadn’t figured.

It’s probably all of the cheating.

cdixon

3 years ago

2000s Toys, Secrets, and Cycles

During the dot-com bust, I started my internet career. People used the internet intermittently to check email, plan travel, and do research. The average internet user spent 30 minutes online a day, compared to 7 hours today. To use the internet, you had to "log on" (most people still used dial-up), unlike today's always-on, high-speed mobile internet. In 2001, Amazon's market cap was $2.2B, 1/500th of what it is today. A study asked Americans if they'd adopt broadband, and most said no. They didn't see a need to speed up email, the most popular internet use. The National Academy of Sciences ranked the internet 13th among the 100 greatest inventions, below radio and phones. The internet was a cool invention, but it had limited uses and wasn't a good place to build a business.

A small but growing movement of developers and founders believed the internet could be more than a read-only medium, allowing anyone to create and publish. This is web 2. The runner up name was read-write web. (These terms were used in prominent publications and conferences.)

Web 2 concepts included letting users publish whatever they want ("user generated content" was a buzzword), social graphs, APIs and mashups (what we call composability today), and tagging over hierarchical navigation. Technical innovations occurred. A seemingly simple but important one was dynamically updating web pages without reloading. This is now how people expect web apps to work. Mobile devices that could access the web were niche (I was an avid Sidekick user).

The contrast between what smart founders and engineers discussed over dinner and on weekends and what the mainstream tech world took seriously during the week was striking. Enterprise security appliances, essentially preloaded servers with security software, were a popular trend. Many of the same people would talk about "serious" products at work, then talk about consumer internet products and web 2. It was tech's biggest news. Web 2 products were seen as toys, not real businesses. They were hobbies, not work-related.

There's a strong correlation between rich product design spaces and what smart people find interesting, which took me some time to learn and led to blog posts like "The next big thing will start out looking like a toy." Web 2's novel product design possibilities sparked dinner and weekend conversations. Imagine combining these features. What if you used this pattern elsewhere? What new product ideas are next? This excited people. "Serious stuff" like security appliances seemed more limited.

The small and passionate web 2 community also stood out. I attended the first New York Tech meetup in 2004. Everyone fit in Meetup's small conference room. Late at night, people demoed their software and chatted. Sometimes I get asked how I first met old friends like Fred Wilson and Alexis Ohanian. These topics didn't interest many people, especially on the east coast, so those of us who cared became friends. It was a real community. Alex Rampell, who now works with me at a16z, is someone I met in 2003 when a friend said, "Hey, I met someone else interested in consumer internet." That was rare. People were focused and enthusiastic. Revolution seemed imminent. We knew a secret nobody else did.

My web 2 startup was called SiteAdvisor. When my co-founders and I started developing the idea in 2003, web security was out of control. Phishing and spyware were common on Internet Explorer PCs. SiteAdvisor was designed to warn users about security threats like phishing and spyware, and then, using web 2 concepts like user-generated reviews, add more subjective judgments (similar to what TrustPilot seems to do today). This staged approach was common at the time; I called it "Come for the tool, stay for the network." We built APIs, encouraged mashups, and did SEO marketing.

Yahoo's 2005 acquisitions of Flickr and Delicious boosted web 2. By today's standards, the amounts were small, around $30M each, but it was a signal. Until then, web 2 had been assumed to be a fun hobby, a way to build cool stuff, but not a business. Now Yahoo, a savvy company, was saying it would make web 2 a priority.

As I recall, that's when web 2 started becoming mainstream tech. Early web 2 founders transitioned successfully. Other entrepreneurs built on the early enthusiasts' work. Competition shifted from ideation to execution. You had to decide if you wanted to be an idealistic indie bar band or a pragmatic stadium band.

Web 2 was booming in 2007: Facebook passed 10M users, Twitter grew and got VC funding, and Google bought YouTube. The 2008 financial crisis tested entrepreneurs' resolve. Smart people predicted another great depression as tech funding dried up.

Many people struggled during the recession. Yet 2008-2011 was a golden age for startups. By 2009, talented founders were flooding Apple's iPhone app store. Mobile apps were booming. Uber, Venmo, Snap, and Instagram were all founded between 2009 and 2011. Social media (which had replaced web 2), cloud computing (which enabled apps to scale server side), and smartphones converged. Even if social, cloud, and mobile improve linearly, the combination could improve exponentially.

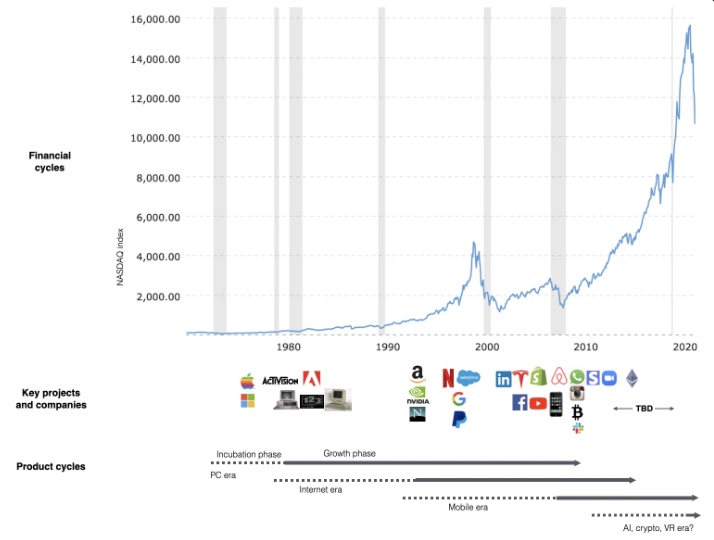

This chart shows how I view product and financial cycles. Product and financial cycles evolve separately. The Nasdaq index is a proxy for the financial sentiment. Financial sentiment wildly fluctuates.

Next row shows iconic startup or product years. Bottom-row product cycles dictate timing. Product cycles are more predictable than financial cycles because they follow internal logic. In the incubation phase, enthusiasts build products for other enthusiasts on nights and weekends. When the right mix of technology, talent, and community knowledge arrives, products go mainstream. (I show the biggest tech cycles in the chart, but smaller ones happen, like web 2 in the 2000s and fintech and SaaS in the 2010s.)

Tech has changed since the 2000s. A few tech giants now dominate the internet, exerting economic and cultural influence. In the 2000s, web 2 was ignored or dismissed as trivial. Today, entrenched interests respond aggressively to new movements that could threaten them. Still, creative patterns from the 2000s continue today, driven by enthusiasts who see possibilities where others don't. Know where to look. Crypto and web 3 are where I'd start.

Today's negative financial sentiment reminds me of 2008. If we face a prolonged downturn, we can learn from 2008 by preserving capital and focusing on the long term. Keep an eye on the product cycle. Smart people are interested in things with product potential. This becomes true. Toys become necessities. Hobbies become mainstream. Optimists build the future, not cynics.

Full article is available here