More on Personal Growth

Sad NoCoiner

3 years ago

Two Key Money Principles You Should Understand But Were Never Taught

Prudence is advised. Be debt-free. Be frugal. Spend less.

This advice sounds nice, but it rarely works.

Most people never learn these two money rules. Both approaches will impact how you see personal finance.

It may safeguard you from inflation or the inability to preserve money.

Let’s dive in.

#1: Making long-term debt your ally

High-interest debt hurts consumers. Many credit cards carry 25% yearly interest (or more), so always pay on time. Otherwise, you’re losing money.

Some low-interest debt is good. Especially when buying an appreciating asset with borrowed money.

Inflation helps you.

If you borrow $800,000 at 3% interest and invest it at 7%, you'll make $32,000 (4%).

As money loses value, fixed payments get cheaper. Your assets' value and cash flow rise.

The never-in-debt crowd doesn't know this. They lose money paying off mortgages and low-interest loans early when they could have bought assets instead.

#2: How To Buy Or Build Assets To Make Inflation Irrelevant

Dozens of studies demonstrate actual wage growth is static; $2.50 in 1964 was equivalent to $22.65 now.

These reports never give solutions unless they're selling gold.

But there is one.

Assets beat inflation.

$100 invested into the S&P 500 would have an inflation-adjusted return of 17,739.30%.

Likewise, you can build assets from nothing. Doing is easy and quick. The returns can boost your income by 10% or more.

The people who obsess over inflation inadvertently make the problem worse for themselves. They wait for The Big Crash to buy assets. Or they moan about debt clocks and spending bills instead of seeking a solution.

Conclusion

Being ultra-prudent is like playing golf with a putter to avoid hitting the ball into the water. Sure, you might not slice a drive into the pond. But, you aren’t going to play well either. Or have very much fun.

Money has rules.

Avoiding debt or investment risks will limit your rewards. Long-term, being too cautious hurts your finances.

Disclaimer: This article is for entertainment purposes only. It is not financial advice, always do your own research.

Alexander Nguyen

3 years ago

How can you bargain for $300,000 at Google?

Don’t give a number

Google pays its software engineers generously. While many of their employees are competent, they disregard a critical skill to maximize their pay.

Negotiation.

If Google employees have never negotiated, they're as helpless as anyone else.

In this piece, I'll reveal a compensation negotiation tip that will set you apart.

The Fallacy of Negotiating

How do you negotiate your salary? “Just give them a number twice the amount you really want”. - Someplace on the internet

Above is typical negotiation advice. If you ask for more than you want, the recruiter may meet you halfway.

It seems logical and great, but here's why you shouldn't follow that advice.

Haitian hostage rescue

In 1977, an official's aunt was kidnapped in Haiti. The kidnappers demanded $150,000 for the aunt's life. It seems reasonable until you realize why kidnappers want $150,000.

FBI detective and negotiator Chris Voss researched why they demanded so much.

“So they could party through the weekend”

When he realized their ransom was for partying, he offered $4,751 and a CD stereo. Criminals freed the aunt.

These thieves gave 31.57x their estimated amount and got a fraction. You shouldn't trust these thieves to negotiate your compensation.

What happened?

Negotiating your offer and Haiti

This narrative teaches you how to negotiate with a large number.

You can and will be talked down.

If a recruiter asks your wage expectation and you offer double, be ready to explain why.

If you can't justify your request, you may be offered less. The recruiter will notice and talk you down.

Reasonably,

a tiny bit more than the present amount you earn

a small premium over an alternative offer

a little less than the role's allotted amount

Real-World Illustration

Recruiter: What’s your expected salary? Candidate: (I know the role is usually $100,000) $200,000 Recruiter: How much are you compensated in your current role? Candidate: $90,000 Recruiter: We’d be excited to offer you $95,000 for your experiences for the role.

So Why Do They Even Ask?

Recruiters ask for a number to negotiate a lower one. Asking yourself limits you.

You'll rarely get more than you asked for, and your request can be lowered.

The takeaway from all of this is to never give an expected compensation.

Tell them you haven't thought about it when you applied.

Teronie Donalson

3 years ago

The best financial advice I've ever received and how you can use it.

Taking great financial advice is key to financial success.

A wealthy man told me to INVEST MY MONEY when I was young.

As I entered Starbucks, an older man was leaving. I noticed his watch and expensive-looking shirt, not like the guy in the photo, but one made of fine fabric like vicuna wool, which can only be shorn every two to three years. His Bentley confirmed my suspicions about his wealth.

This guy looked like James Bond, so I asked him how to get rich like him.

"Drug dealer?" he laughed.

Whether he was telling the truth, I'll never know, and I didn't want to be an accessory, but he quickly added, "Kid, invest your money; it will do wonders." He left.

When he told me to invest, he didn't say what. Later, I realized the investment game has so many levels that even if he drew me a blueprint, I wouldn't understand it.

The best advice I received was to invest my earnings. I must decide where to invest.

I'll preface by saying I'm not a financial advisor or Your financial advisor, but I'll share what I've learned from books, links, and sources. The rest is up to you.

Basically:

Invest your Money

Money is money, whether you call it cake, dough, moolah, benjamins, paper, bread, etc.

If you're lucky, you can buy one of the gold shirts in the photo.

Investing your money today means putting it towards anything that could be profitable.

According to the website Investopedia:

“Investing is allocating money to generate income or profit.”

You can invest in a business, real estate, or a skill that will pay off later.

Everyone has different goals and wants at different stages of life, so investing varies.

He was probably a sugar daddy with his Bentley, nice shirt, and Rolex.

In my twenties, I started making "good" money; now, in my forties, with a family and three kids, I'm building a legacy for my grandkids.

“It’s not how much money you make, but how much money you keep, how hard it works for you, and how many generations you keep it for.” — Robert Kiyosaki.

Money isn't evil, but lack of it is.

Financial stress is a major source of problems, according to studies.

Being broke hurts, especially if you want to provide for your family or do things.

“An investment in knowledge pays the best interest.” — Benjamin Franklin.

Investing in knowledge is invaluable. Before investing, do your homework.

You probably didn't learn about investing when you were young, like I didn't. My parents were in survival mode, making investing difficult.

In my 20s, I worked in banking to better understand money.

So, why invest?

Growth requires investment.

Investing puts money to work and can build wealth. Your money may outpace inflation with smart investing. Compounding and the risk-return tradeoff boost investment growth.

Investing your money means you won't have to work forever — unless you want to.

Two common ways to make money are;

-working hard,

and

-interest or capital gains from investments.

Capital gains can help you invest.

“How many millionaires do you know who have become wealthy by investing in savings accounts? I rest my case.” — Robert G. Allen

If you keep your money in a savings account, you'll earn less than 2% interest at best; the bank makes money by loaning it out.

Savings accounts are a safe bet, but the low-interest rates limit your gains.

Don't skip it. An emergency fund should be in a savings account, not the market.

Other reasons to invest:

Investing can generate regular income.

If you own rental properties, the tenant's rent will add to your cash flow.

Daily, weekly, or monthly rentals (think Airbnb) generate higher returns year-round.

Capital gains are taxed less than earned income if you own dividend-paying or appreciating stock.

Time is on your side

“Compound interest is the eighth wonder of the world. He who understands it, earns it; he who doesn’t — pays it.” — Albert Einstein

Historical data shows that young investors outperform older investors. So you can use compound interest over decades instead of investing at 45 and having less time to earn.

If I had taken that man's advice and invested in my twenties, I would have made a decent return by my thirties. (Depending on my investments)

So for those who live a YOLO (you only live once) life, investing can't hurt.

Investing increases your knowledge.

Lessons are clearer when you're invested. Each win boosts confidence and draws attention to losses. Losing money prompts you to investigate.

Before investing, I read many financial books, but I didn't understand them until I invested.

Now what?

What do you invest in? Equities, mutual funds, ETFs, retirement accounts, savings, business, real estate, cryptocurrencies, marijuana, insurance, etc.

The key is to start somewhere. Know you don't know everything. You must care.

“A journey of a thousand miles must begin with a single step.” — Lao Tzu.

Start simple because there's so much information. My first investment book was:

Robert Kiyosaki's "Rich Dad, Poor Dad"

This easy-to-read book made me hungry for more. This book is about the money lessons rich parents teach their children, which poor and middle-class parents neglect. The poor and middle-class work for money, while the rich let their assets work for them, says Kiyosaki.

There is so much to learn, but you gotta start somewhere.

More books:

***Wisdom

I hope I'm not suggesting that investing makes everything rosy. Remember three rules:

1. Losing money is possible.

2. Losing money is possible.

3. Losing money is possible.

You can lose money, so be careful.

Read, research, invest.

Golden rules for Investing your money

Never invest money you can't lose.

Financial freedom is possible regardless of income.

"Courage taught me that any sound investment will pay off, no matter how bad a crisis gets." Helu Carlos

"I'll tell you Wall Street's secret to wealth. When others are afraid, you're greedy. You're afraid when others are greedy. Buffett

Buy low, sell high, and have an exit strategy.

Ask experts or wealthy people for advice.

"With a good understanding of history, we can have a clear vision of the future." Helu Carlos

"It's not whether you're right or wrong, but how much money you make when you're right." Soros

"The individual investor should act as an investor, not a speculator." Graham

"It's different this time" is the most dangerous investment phrase. Templeton

Lastly,

Avoid quick-money schemes. Building wealth takes years, not months.

Start small and work your way up.

Thanks for reading!

This post is a summary. Read the full article here

You might also like

Niharikaa Kaur Sodhi

3 years ago

The Only Paid Resources I Turn to as a Solopreneur

4 Pricey Tools That Are Valuable

I pay based on ROI (return on investment).

If a $20/month tool or $500 online course doubles my return, I'm in.

Investing helps me build wealth.

Canva Pro

I initially refused to pay.

My course content needed updating a few months ago. My Google Docs text looked cleaner and more professional in Canva.

I've used it to:

product cover pages

eBook covers

Product page infographics

See my Google Sheets vs. Canva product page graph.

Google Sheets vs Canva

Yesterday, I used it to make a LinkedIn video thumbnail. It took less than 5 minutes and improved my video.

In 30 hours, the video had 39,000 views.

Here's more.

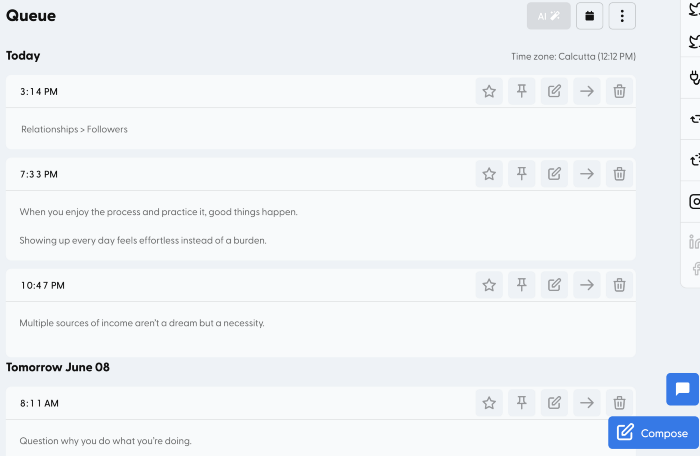

HypeFury

Hypefury rocks!

It builds my brand as I sleep. What else?

Because I'm traveling this weekend, I planned tweets for 10 days. It took me 80 minutes.

So while I travel or am absent, my content mill keeps producing.

Also I like:

I can reach hundreds of people thanks to auto-DMs. I utilize it to advertise freebies; for instance, leave an emoji remark to receive my checklist. And they automatically receive a message in their DM.

Scheduled Retweets: By appearing in a different time zone, they give my tweet a second chance.

It helps me save time and expand my following, so that's my favorite part.

It’s also super neat:

Zoom Pro

My course involves weekly and monthly calls for alumni.

Google Meet isn't great for group calls. The interface isn't great.

Zoom Pro is expensive, and the monthly payments suck, but it's necessary.

It gives my students a smooth experience.

Previously, we'd do 40-minute meetings and then reconvene.

Zoom's free edition limits group calls to 40 minutes.

This wouldn't be a good online course if I paid hundreds of dollars.

So I felt obligated to help.

YouTube Premium

My laptop has an ad blocker.

I bought an iPad recently.

When you're self-employed and work from home, the line between the two blurs. My bed is only 5 steps away!

When I read or watched videos on my laptop, I'd slide into work mode. Only option was to view on phone, which is awkward.

YouTube premium handles it. No more advertisements and I can listen on the move.

3 Expensive Tools That Aren't Valuable

Marketing strategies are sometimes aimed to make you feel you need 38474 cool features when you don’t.

Certain tools are useless.

I found it useless.

Depending on your needs. As a writer and creator, I get no return.

They could for other jobs.

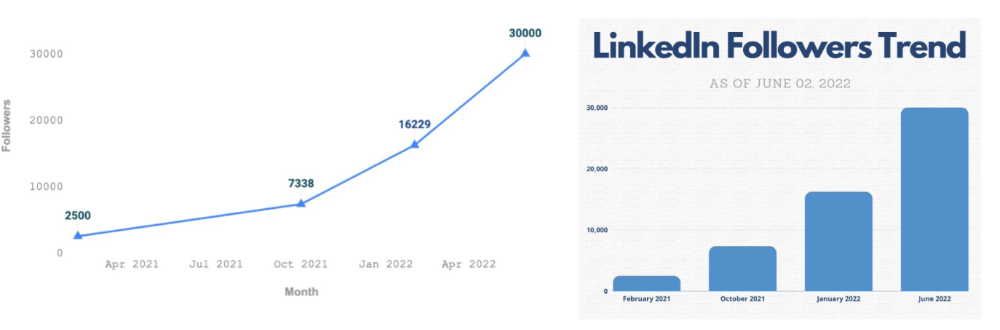

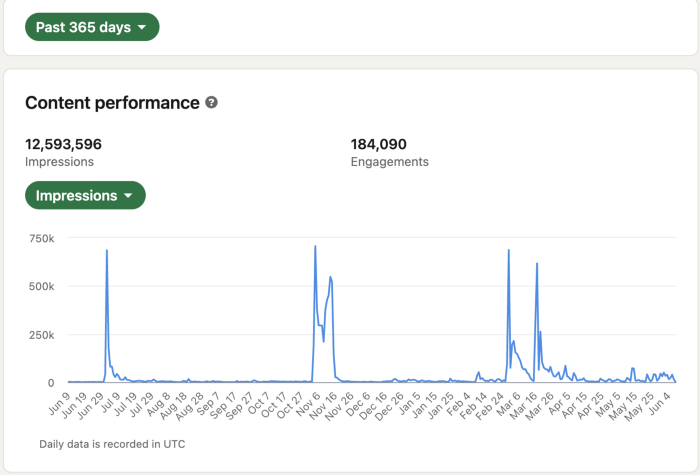

Shield Analytics

It tracks LinkedIn stats, like:

follower growth

trend chart for impressions

Engagement, views, and comment stats for posts

and much more.

Middle-tier creator costs $12/month.

I got a 25% off coupon but canceled my free trial before writing this. It's not worth the discount.

Why?

LinkedIn provides free analytics. See:

Not thorough and won't show top posts.

I don't need to see my top posts because I love experimenting with writing.

Slack Premium

Slack was my classroom. Slack provided me a premium trial during the prior cohort.

I skipped it.

Sure, voice notes are better than a big paragraph. I didn't require pro features.

Marketing methods sometimes make you think you need 38474 amazing features. Don’t fall for it.

Calendly Pro

This may be worth it if you get many calls.

I avoid calls. During my 9-5, I had too many pointless calls.

I don't need:

ability to schedule calls for 15, 30, or 60 minutes: I just distribute each link separately.

I have a Gumroad consultation page with a payment option.

follow-up emails: I hardly ever make calls, so

I just use one calendar, therefore I link to various calendars.

I'll admit, the integrations are cool. Not for me.

If you're a coach or consultant, the features may be helpful. Or book meetings.

Conclusion

Investing is spending to make money.

Use my technique — put money in tools that help you make money. This separates it from being an investment instead of an expense.

Try free versions of these tools before buying them since everyone else is.

Arthur Hayes

3 years ago

Contagion

(The author's opinions should not be used to make investment decisions or as a recommendation to invest.)

The pandemic and social media pseudoscience have made us all epidemiologists, for better or worse. Flattening the curve, social distancing, lockdowns—remember? Some of you may remember R0 (R naught), the number of healthy humans the average COVID-infected person infects. Thankfully, the world has moved on from Greater China's nightmare. Politicians have refocused their talent for misdirection on getting their constituents invested in the war for Russian Reunification or Russian Aggression, depending on your side of the iron curtain.

Humanity battles two fronts. A war against an invisible virus (I know your Commander in Chief might have told you COVID is over, but viruses don't follow election cycles and their economic impacts linger long after the last rapid-test clinic has closed); and an undeclared World War between US/NATO and Eurasia/Russia/China. The fiscal and monetary authorities' current policies aim to mitigate these two conflicts' economic effects.

Since all politicians are short-sighted, they usually print money to solve most problems. Printing money is the easiest and fastest way to solve most problems because it can be done immediately without much discussion. The alternative—long-term restructuring of our global economy—would hurt stakeholders and require an honest discussion about our civilization's state. Both of those requirements are non-starters for our short-sighted political friends, so whether your government practices capitalism, communism, socialism, or fascism, they all turn to printing money-ism to solve all problems.

Free money stimulates demand, so people buy crap. Overbuying shit raises prices. Inflation. Every nation has food, energy, or goods inflation. The once-docile plebes demand action when the latter two subsets of inflation rise rapidly. They will be heard at the polls or in the streets. What would you do to feed your crying hungry child?

Global central banks During the pandemic, the Fed, PBOC, BOJ, ECB, and BOE printed money to aid their governments. They worried about inflation and promised to remove fiat liquidity and tighten monetary conditions.

Imagine Nate Diaz's round-house kick to the face. The financial markets probably felt that way when the US and a few others withdrew fiat wampum. Sovereign debt markets suffered a near-record bond market rout.

The undeclared WW3 is intensifying, with recent gas pipeline attacks. The global economy is already struggling, and credit withdrawal will worsen the situation. The next pandemic, the Yield Curve Control (YCC) virus, is spreading as major central banks backtrack on inflation promises. All central banks eventually fail.

Here's a scorecard.

In order to save its financial system, BOE recently reverted to Quantitative Easing (QE).

BOJ Continuing YCC to save their banking system and enable affordable government borrowing.

ECB printing money to buy weak EU member bonds, but will soon start Quantitative Tightening (QT).

PBOC Restarting the money printer to give banks liquidity to support the falling residential property market.

Fed raising rates and QT-shrinking balance sheet.

80% of the world's biggest central banks are printing money again. Only the Fed has remained steadfast in the face of a financial market bloodbath, determined to end the inflation for which it is at least partially responsible—the culmination of decades of bad economic policies and a world war.

YCC printing is the worst for fiat currency and society. Because it necessitates central banks fixing a multi-trillion-dollar bond market. YCC central banks promise to infinitely expand their balance sheets to keep a certain interest rate metric below an unnatural ceiling. The market always wins, crushing humanity with inflation.

BOJ's YCC policy is longest-standing. The BOE joined them, and my essay this week argues that the ECB will follow. The ECB joining YCC would make 60% of major central banks follow this terrible policy. Since the PBOC is part of the Chinese financial system, the number could be 80%. The Chinese will lend any amount to meet their economic activity goals.

The BOE committed to a 13-week, GBP 65bn bond price-fixing operation. However, BOEs YCC may return. If you lose to the market, you're stuck. Since the BOE has announced that it will buy your Gilt at inflated prices, why would you not sell them all? Market participants taking advantage of this policy will only push the bank further into the hole it dug itself, so I expect the BOE to re-up this program and count them as YCC.

In a few trading days, the BOE went from a bank determined to slay inflation by raising interest rates and QT to buying an unlimited amount of UK Gilts. I expect the ECB to be dragged kicking and screaming into a similar policy. Spoiler alert: big daddy Fed will eventually die from the YCC virus.

Threadneedle St, London EC2R 8AH, UK

Before we discuss the BOE's recent missteps, a chatroom member called the British royal family the Kardashians with Crowns, which made me laugh. I'm sad about royal attention. If the public was as interested in energy and economic policies as they are in how the late Queen treated Meghan, Duchess of Sussex, UK politicians might not have been able to get away with energy and economic fairy tales.

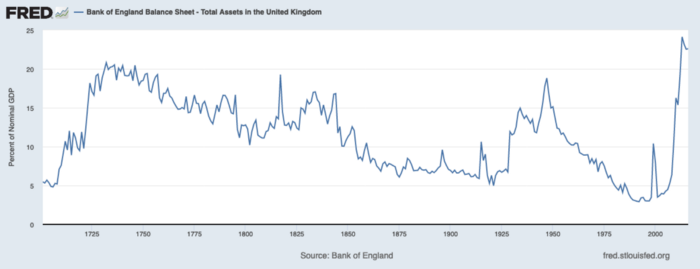

The BOE printed money to recover from COVID, as all good central banks do. For historical context, this chart shows the BOE's total assets as a percentage of GDP since its founding in the 18th century.

The UK has had a rough three centuries. Pandemics, empire wars, civil wars, world wars. Even so, the BOE's recent money printing was its most aggressive ever!

BOE Total Assets as % of GDP (white) vs. UK CPI

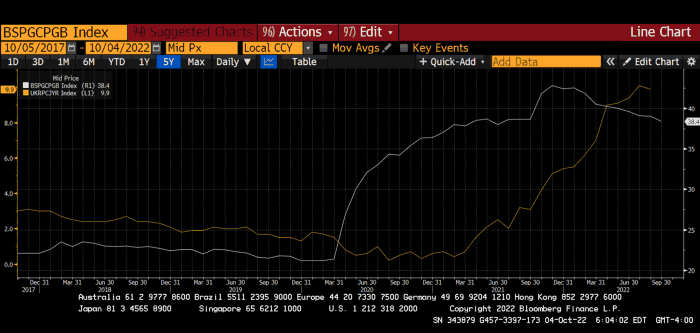

Now, inflation responded slowly to the bank's most aggressive monetary loosening. King Charles wishes the gold line above showed his popularity, but it shows his subjects' suffering.

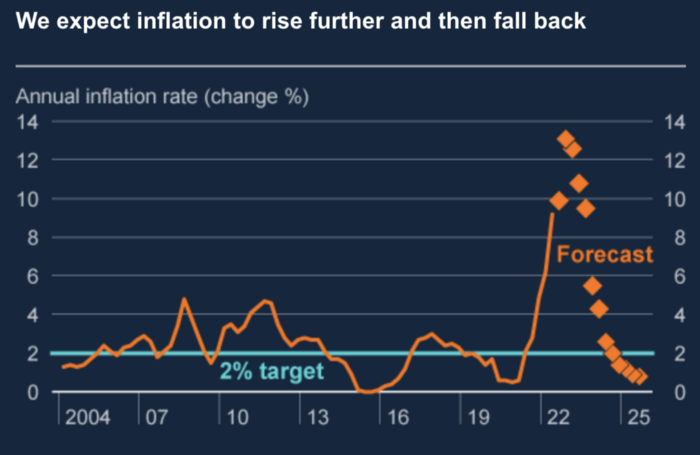

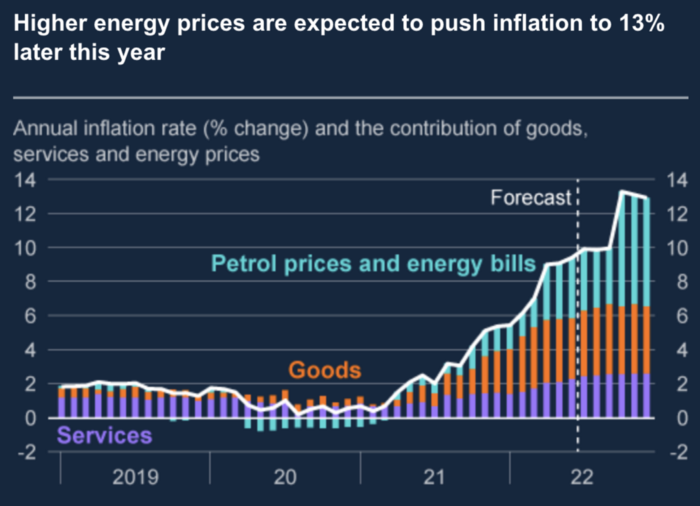

The BOE recognized early that its money printing caused runaway inflation. In its August 2022 report, the bank predicted that inflation would reach 13% by year end before aggressively tapering in 2023 and 2024.

Aug 2022 BOE Monetary Policy Report

The BOE was the first major central bank to reduce its balance sheet and raise its policy rate to help.

The BOE first raised rates in December 2021. Back then, JayPow wasn't even considering raising rates.

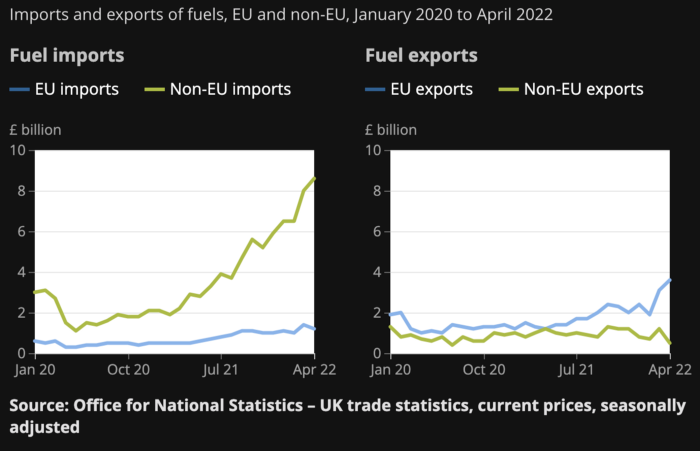

UK policymakers, like most developed nations, believe in energy fairy tales. Namely, that the developed world, which grew in lockstep with hydrocarbon use, could switch to wind and solar by 2050. The UK's energy import bill has grown while coal, North Sea oil, and possibly stranded shale oil have been ignored.

WW3 is an economic war that is balkanizing energy markets, which will continue to inflate. A nation that imports energy and has printed the most money in its history cannot avoid inflation.

The chart above shows that energy inflation is a major cause of plebe pain.

The UK is hit by a double whammy: the BOE must remove credit to reduce demand, and energy prices must rise due to WW3 inflation. That's not economic growth.

Boris Johnson was knocked out by his country's poor economic performance, not his lockdown at 10 Downing St. Prime Minister Truss and her merry band of fools arrived with the tried-and-true government remedy: goodies for everyone.

She released a budget full of economic stimulants. She cut corporate and individual taxes for the rich. She plans to give poor people vouchers for higher energy bills. Woohoo! Margret Thatcher's new pants suit.

My buddy Jim Bianco said Truss budget's problem is that it works. It will boost activity at a time when inflation is over 10%. Truss' budget didn't include austerity measures like tax increases or spending cuts, which the bond market wanted. The bond market protested.

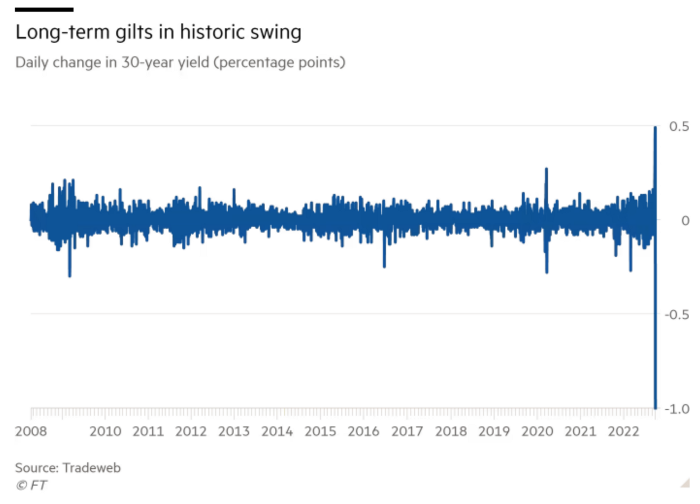

30-year Gilt yield chart. Yields spiked the most ever after Truss announced her budget, as shown. The Gilt market is the longest-running bond market in the world.

The Gilt market showed the pole who's boss with Cardi B.

Before this, the BOE was super-committed to fighting inflation. To their credit, they raised short-term rates and shrank their balance sheet. However, rapid yield rises threatened to destroy the entire highly leveraged UK financial system overnight, forcing them to change course.

Accounting gimmicks allowed by regulators for pension funds posed a systemic threat to the UK banking system. UK pension funds could use interest rate market levered derivatives to match liabilities. When rates rise, short rate derivatives require more margin. The pension funds spent all their money trying to pick stonks and whatever else their sell side banker could stuff them with, so the historic rate spike would have bankrupted them overnight. The FT describes BOE-supervised chicanery well.

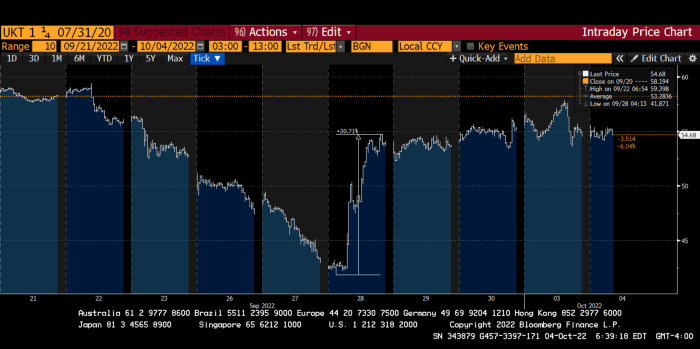

To avoid a financial apocalypse, the BOE in one morning abandoned all their hard work and started buying unlimited long-dated Gilts to drive prices down.

Another reminder to never fight a central bank. The 30-year Gilt is shown above. After the BOE restarted the money printer on September 28, this bond rose 30%. Thirty-fucking-percent! Developed market sovereign bonds rarely move daily. You're invested in His Majesty's government obligations, not a Chinese property developer's offshore USD bond.

The political need to give people goodies to help them fight the terrible economy ran into a financial reality. The central bank protected the UK financial system from asset-price deflation because, like all modern economies, it is debt-based and highly levered. As bad as it is, inflation is not their top priority. The BOE example demonstrated that. To save the financial system, they abandoned almost a year of prudent monetary policy in a few hours. They also started the endgame.

Let's play Central Bankers Say the Darndest Things before we go to the continent (and sorry if you live on a continent other than Europe, but you're not culturally relevant).

Pre-meltdown BOE output:

FT, October 17, 2021 On Sunday, the Bank of England governor warned that it must act to curb inflationary pressure, ignoring financial market moves that have priced in the first interest rate increase before the end of the year.

On July 19, 2022, Gov. Andrew Bailey spoke. Our 2% inflation target is unwavering. We'll do our job.

August 4th 2022 MPC monetary policy announcement According to its mandate, the MPC will sustainably return inflation to 2% in the medium term.

Catherine Mann, MPC member, September 5, 2022 speech. Fast and forceful monetary tightening, possibly followed by a hold or reversal, is better than gradualism because it promotes inflation expectations' role in bringing inflation back to 2% over the medium term.

When their financial system nearly collapsed in one trading session, they said:

The Bank of England's Financial Policy Committee warned on 28 September that gilt market dysfunction threatened UK financial stability. It advised action and supported the Bank's urgent gilt market purchases for financial stability.

It works when the price goes up but not down. Is my crypto portfolio dysfunctional enough to get a BOE bailout?

Next, the EU and ECB. The ECB is also fighting inflation, but it will also succumb to the YCC virus for the same reasons as the BOE.

Frankfurt am Main, ECB Tower, Sonnemannstraße 20, 60314

Only France and Germany matter economically in the EU. Modern European history has focused on keeping Germany and Russia apart. German manufacturing and cheap Russian goods could change geopolitics.

France created the EU to keep Germany down, and the Germans only cooperated because of WWII guilt. France's interests are shared by the US, which lurks in the shadows to prevent a Germany-Russia alliance. A weak EU benefits US politics. Avoid unification of Eurasia. (I paraphrased daddy Felix because I thought quoting a large part of his most recent missive would get me spanked.)

As with everything, understanding Germany's energy policy is the best way to understand why the German economy is fundamentally fucked and why that spells doom for the EU. Germany, the EU's main economic engine, is being crippled by high energy prices, threatening a depression. This economic downturn threatens the union. The ECB may have to abandon plans to shrink its balance sheet and switch to YCC to save the EU's unholy political union.

France did the smart thing and went all in on nuclear energy, which is rare in geopolitics. 70% of electricity is nuclear-powered. Their manufacturing base can survive Russian gas cuts. Germany cannot.

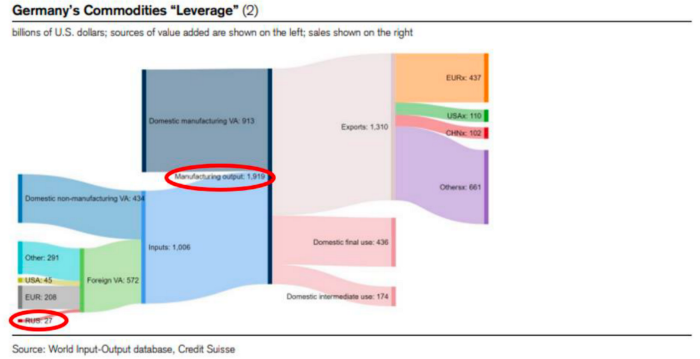

My boy Zoltan made this great graphic showing how screwed Germany is as cheap Russian gas leaves the industrial economy.

$27 billion of Russian gas powers almost $2 trillion of German economic output, a 75x energy leverage. The German public was duped into believing the same energy fairy tales as their politicians, and they overwhelmingly allowed the Green party to dismantle any efforts to build a nuclear energy ecosystem over the past several decades. Germany, unlike France, must import expensive American and Qatari LNG via supertankers due to Nordstream I and II pipeline sabotage.

American gas exports to Europe are touted by the media. Gas is cheap because America isn't the Western world's swing producer. If gas prices rise domestically in America, the plebes would demand the end of imports to avoid paying more to heat their homes.

German goods would cost much more in this scenario. German producer prices rose 46% YoY in August. The German current account is rapidly approaching zero and will soon be negative.

German PPI Change YoY

German Current Account

The reason this matters is a curious construction called TARGET2. Let’s hear from the horse’s mouth what exactly this beat is:

TARGET2 is the real-time gross settlement (RTGS) system owned and operated by the Eurosystem. Central banks and commercial banks can submit payment orders in euro to TARGET2, where they are processed and settled in central bank money, i.e. money held in an account with a central bank.

Source: ECB

Let me explain this in plain English for those unfamiliar with economic dogma.

This chart shows intra-EU credits and debits. TARGET2. Germany, Europe's powerhouse, is owed money. IOU-buying Greeks buy G-wagons. The G-wagon pickup truck is badass.

If all EU countries had fiat currencies, the Deutsche Mark would be stronger than the Italian Lira, according to the chart above. If Europe had to buy goods from non-EU countries, the Euro would be much weaker. Credits and debits between smaller political units smooth out imbalances in other federal-provincial-state political systems. Financial and fiscal unions allow this. The EU is financial, so the centre cannot force the periphery to settle their imbalances.

Greece has never had to buy Fords or Kias instead of BMWs, but what if Germany had to shut down its auto manufacturing plants due to energy shortages?

Italians have done well buying ammonia from Germany rather than China, but what if BASF had to close its Ludwigshafen facility due to a lack of affordable natural gas?

I think you're seeing the issue.

Instead of Germany, EU countries would owe foreign producers like America, China, South Korea, Japan, etc. Since these countries aren't tied into an uneconomic union for politics, they'll demand hard fiat currency like USD instead of Euros, which have become toilet paper (or toilet plastic).

Keynesian economists have a simple solution for politicians who can't afford market prices. Government debt can maintain production. The debt covers the difference between what a business can afford and the international energy market price.

Germans are monetary policy conservative because of the Weimar Republic's hyperinflation. The Bundesbank is the only thing preventing ECB profligacy. Germany must print its way out without cheap energy. Like other nations, they will issue more bonds for fiscal transfers.

More Bunds mean lower prices. Without German monetary discipline, the Euro would have become a trash currency like any other emerging market that imports energy and food and has uncompetitive labor.

Bunds price all EU country bonds. The ECB's money printing is designed to keep the spread of weak EU member bonds vs. Bunds low. Everyone falls with Bunds.

Like the UK, German politicians seeking re-election will likely cause a Bunds selloff. Bond investors will understandably reject their promises of goodies for industry and individuals to offset the lack of cheap Russian gas. Long-dated Bunds will be smoked like UK Gilts. The ECB will face a wave of ultra-levered financial players who will go bankrupt if they mark to market their fixed income derivatives books at higher Bund yields.

Some treats People: Germany will spend 200B to help consumers and businesses cope with energy prices, including promoting renewable energy.

That, ladies and germs, is why the ECB will immediately abandon QT, move to a stop-gap QE program to normalize the Bund and every other EU bond market, and eventually graduate to YCC as the market vomits bonds of all stripes into Christine Lagarde's loving hands. She probably has soft hands.

The 30-year Bund market has noticed Germany's economic collapse. 2021 yields skyrocketed.

30-year Bund Yield

ECB Says the Darndest Things:

Because inflation is too high and likely to stay above our target for a long time, we took today's decision and expect to raise interest rates further.- Christine Lagarde, ECB Press Conference, Sept 8.

The Governing Council will adjust all of its instruments to stabilize inflation at 2% over the medium term. July 21 ECB Monetary Decision

Everyone struggles with high inflation. The Governing Council will ensure medium-term inflation returns to two percent. June 9th ECB Press Conference

I'm excited to read the after. Like the BOE, the ECB may abandon their plans to shrink their balance sheet and resume QE due to debt market dysfunction.

Eighty Percent

I like YCC like dark chocolate over 80%. ;).

Can 80% of the world's major central banks' QE and/or YCC overcome Sir Powell's toughness on fungible risky asset prices?

Gold and crypto are fungible global risky assets. Satoshis and gold bars are the same in New York, London, Frankfurt, Tokyo, and Shanghai.

As more Euros, Yen, Renminbi, and Pounds are printed, people will move their savings into Dollars or other stores of value. As the Fed raises rates and reduces its balance sheet, the USD will strengthen. Gold/EUR and BTC/JPY may also attract buyers.

Gold and crypto markets are much smaller than the trillions in fiat money that will be printed, so they will appreciate in non-USD currencies. These flows only matter in one instance because we trade the global or USD price. Arbitrage occurs when BTC/EUR rises faster than EUR/USD. Here is how it works:

An investor based in the USD notices that BTC is expensive in EUR terms.

Instead of buying BTC, this investor borrows USD and then sells it.

After that, they sell BTC and buy EUR.

Then they choose to sell EUR and buy USD.

The investor receives their profit after repaying the USD loan.

This triangular FX arbitrage will align the global/USD BTC price with the elevated EUR, JPY, CNY, and GBP prices.

Even if the Fed continues QT, which I doubt they can do past early 2023, small stores of value like gold and Bitcoin may rise as non-Fed central banks get serious about printing money.

“Arthur, this is just more copium,” you might retort.

Patience. This takes time. Economic and political forcing functions take time. The BOE example shows that bond markets will reject politicians' policies to appease voters. Decades of bad energy policy have no immediate fix. Money printing is the only politically viable option. Bond yields will rise as bond markets see more stimulative budgets, and the over-leveraged fiat debt-based financial system will collapse quickly, followed by a monetary bailout.

America has enough food, fuel, and people. China, Europe, Japan, and the UK suffer. America can be autonomous. Thus, the Fed can prioritize domestic political inflation concerns over supplying the world (and most of its allies) with dollars. A steady flow of dollars allows other nations to print their currencies and buy energy in USD. If the strongest player wins, everyone else loses.

I'm making a GDP-weighted index of these five central banks' money printing. When ready, I'll share its rate of change. This will show when the 80%'s money printing exceeds the Fed's tightening.

CyberPunkMetalHead

3 years ago

It's all about the ego with Terra 2.0.

UST depegs and LUNA crashes 99.999% in a fraction of the time it takes the Moon to orbit the Earth.

Fat Man, a Terra whistle-blower, promises to expose Do Kwon's dirty secrets and shady deals.

The Terra community has voted to relaunch Terra LUNA on a new blockchain. The Terra 2.0 Pheonix-1 blockchain went live on May 28, 2022, and people were airdropped the new LUNA, now called LUNA, while the old LUNA became LUNA Classic.

Does LUNA deserve another chance? To answer this, or at least start a conversation about the Terra 2.0 chain's advantages and limitations, we must assess its fundamentals, ideology, and long-term vision.

Whatever the result, our analysis must be thorough and ruthless. A failure of this magnitude cannot happen again, so we must magnify every potential breaking point by 10.

Will UST and LUNA holders be compensated in full?

The obvious. First, and arguably most important, is to restore previous UST and LUNA holders' bags.

Terra 2.0 has 1,000,000,000,000 tokens to distribute.

25% of a community pool

Holders of pre-attack LUNA: 35%

10% of aUST holders prior to attack

Holders of LUNA after an attack: 10%

UST holders as of the attack: 20%

Every LUNA and UST holder has been compensated according to the above proposal.

According to self-reported data, the new chain has 210.000.000 tokens and a $1.3bn marketcap. LUNC and UST alone lost $40bn. The new token must fill this gap. Since launch:

LUNA holders collectively own $1b worth of LUNA if we subtract the 25% community pool airdrop from the current market cap and assume airdropped LUNA was never sold.

At the current supply, the chain must grow 40 times to compensate holders. At the current supply, LUNA must reach $240.

LUNA needs a full-on Bull Market to make LUNC and UST holders whole.

Who knows if you'll be whole? From the time you bought to the amount and price, there are too many variables to determine if Terra can cover individual losses.

The above distribution doesn't consider individual cases. Terra didn't solve individual cases. It would have been huge.

What does LUNA offer in terms of value?

UST's marketcap peaked at $18bn, while LUNC's was $41bn. LUNC and UST drove the Terra chain's value.

After it was confirmed (again) that algorithmic stablecoins are bad, Terra 2.0 will no longer support them.

Algorithmic stablecoins contributed greatly to Terra's growth and value proposition. Terra 2.0 has no product without algorithmic stablecoins.

Terra 2.0 has an identity crisis because it has no actual product. It's like Volkswagen faking carbon emission results and then stopping car production.

A project that has already lost the trust of its users and nearly all of its value cannot survive without a clear and in-demand use case.

Do Kwon, how about him?

Oh, the Twitter-caller-poor? Who challenges crypto billionaires to break his LUNA chain? Who dissolved Terra Labs South Korea before depeg? Arrogant guy?

That's not a good image for LUNA, especially when making amends. I think he should step down and let a nicer person be Terra 2.0's frontman.

The verdict

Terra has a terrific community with an arrogant, unlikeable leader. The new LUNA chain must grow 40 times before it can start making up its losses, and even then, not everyone's losses will be covered.

I won't invest in Terra 2.0 or other algorithmic stablecoins in the near future. I won't be near any Do Kwon-related project within 100 miles. My opinion.

Can Terra 2.0 be saved? Comment below.