More on Entrepreneurship/Creators

Tim Denning

3 years ago

One of the biggest publishers in the world offered me a book deal, but I don't feel deserving of it.

My ego is so huge it won't fit through the door.

I don't know how I feel about it. I should be excited. Many of you have this exact dream to publish a book with a well-known book publisher and get a juicy advance.

Let me dissect how I'm thinking about it to help you.

How it happened

An email comes in. A generic "can we put a backlink on your website and get a freebie" email.

Almost deleted it.

Then I noticed the logo. It seemed shady. I found the URL. Check. I searched the employee's LinkedIn. Legit. I avoided middlemen. Check.

Mixed feelings. LinkedIn hasn't valued my writing for years. I'm just a guy in an unironed t-shirt whose content they sell advertising against.

They get big dollars. I get $0 and a few likes, plus some email subscribers.

Still, I felt adrenaline for hours.

I texted a few friends to see how they felt. I wrapped them.

Messages like "No shocker. You're entertaining online." I didn't like praises, so I blushed.

The thrill faded after hours. Who knows?

Most authors desire this chance.

"You entitled piece of crap, Denning!"

You may think so. Okay. My job is to stand on the internet and get bananas thrown at me.

I approached writing backwards. More important than a book deal was a social media audience converted to an email list.

Romantic authors think backward. They hope a fantastic book will land them a deal and an audience.

Rarely occurs. So I never pursued it. It's like permission-seeking or the lottery.

Not being a professional writer, I've never written a good book. I post online for fun and to express my opinions.

Writing is therapeutic. I overcome mental illness and rebuilt my life this way. Without blogging, I'd be dead.

I've always dreamed of staying alive and doing something I love, not getting a book contract. Writing is my passion. I'm a winner without a book deal.

Why I was given a book deal

You may assume I received a book contract because of my views or follows. Nope.

They gave me a deal because they like my writing style. I've heard this for eight years.

Several authors agree. One asked me to improve their writer's voice.

Takeaway: highlight your writer's voice.

What if they discover I'm writing incompetently?

An edited book is published. It's edited.

I need to master writing mechanics, thus this concerns me. I need help with commas and sentence construction.

I must learn verb, noun, and adjective. Seriously.

Writing a book may reveal my imposter status to a famous publisher. Imagine the email

"It happened again. He doesn't even know how to spell. He thinks 'less' is the correct word, not 'fewer.' Are you sure we should publish his book?"

Fears stink.

I'm capable of blogging. Even listicles. So what?

Writing for a major publisher feels advanced.

I only blog. I'm good at listicles. Digital media executives have criticized me for this.

It is allegedly clickbait.

Or it is following trends.

Alternately, growth hacking.

Never. I learned copywriting to improve my writing.

Apple, Amazon, and Tesla utilize copywriting to woo customers. Whoever thinks otherwise is the wisest person in the room.

Old-schoolers loathe copywriters.

Their novels sell nothing.

They assume their elitist version of writing is better and that the TikTok generation will invest time in random writing with no subheadings and massive walls of text they can't read on their phones.

I'm terrified of book proposals.

My friend's book proposal suggestion was contradictory and made no sense.

They told him to compose another genre. This book got three Amazon reviews. Is that a good model?

The process disappointed him. I've heard other book proposal horror stories. Tim Ferriss' book "The 4-Hour Workweek" was criticized.

Because he has thick skin, his book came out. He wouldn't be known without that.

I hate book proposals.

An ongoing commitment

Writing a book is time-consuming.

I appreciate time most. I want to focus on my daughter for the next few years. I can't recreate her childhood because of a book.

No idea how parents balance kids' goals.

My silly face in a bookstore. Really?

Genuine thought.

I don't want my face in bookstores. I fear fame. I prefer anonymity.

I want to purchase a property in a bad Australian area, then piss off and play drums. Is bookselling worth it?

Are there even bookstores anymore?

(Except for Ryan Holiday's legendary Painted Porch Bookshop in Texas.)

What's most important about books

Many were duped.

Tweets and TikTok hopscotch vids are their future. Short-form content creates devoted audiences that buy newsletter subscriptions.

Books=depth.

Depth wins (if you can get people to buy your book). Creating a book will strengthen my reader relationships.

It's cheaper than my classes, so more people can benefit from my life lessons.

A deeper justification for writing a book

Mind wandered.

If I write this book, my daughter will follow it. "Look what you can do, love, when you ignore critics."

That's my favorite.

I'll be her best leader and teacher. If her dad can accomplish this, she can too.

My kid can read my book when I'm gone to remember her loving father.

Last paragraph made me cry.

The positive

This book thing might make me sound like Karen.

The upside is... Building in public, like I have with online writing, attracts the right people.

Proof-of-work over proposals, beautiful words, or huge aspirations. If you want a book deal, try writing online instead of the old manner.

Next steps

No idea.

I'm a rural Aussie. Writing a book in the big city is intimidating. Will I do it? Lots to think about. Right now, some level of reflection and gratitude feels most appropriate.

Sometimes when you don't feel worthy, it gives you the greatest lessons. That's how I feel about getting offered this book deal.

Perhaps you can relate.

Victoria Kurichenko

3 years ago

Here's what happened after I launched my second product on Gumroad.

One-hour ebook sales, affiliate relationships, and more.

If you follow me, you may know I started a new ebook in August 2022.

Despite publishing on this platform, my website, and Quora, I'm not a writer.

My writing speed is slow, 2,000 words a day, and I struggle to communicate cohesively.

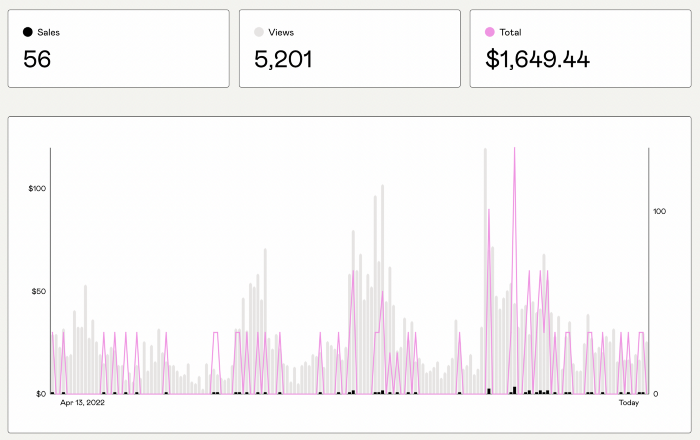

In April 2022, I wrote a successful guide on How to Write Google-Friendly Blog Posts.

I had no email list or social media presence. I've made $1,600+ selling ebooks.

Evidence:

My first digital offering isn't a book.

It's an actionable guide with my tried-and-true process for writing Google-friendly content.

I'm not bragging.

Established authors like Tim Denning make more from my ebook sales with one newsletter.

This experience taught me writing isn't a privilege.

Writing a book and making money online doesn't require expertise.

Many don't consult experts. They want someone approachable.

Two years passed before I realized my own limits.

I have a brain, two hands, and Internet to spread my message.

I wrote and published a second ebook after the first's success.

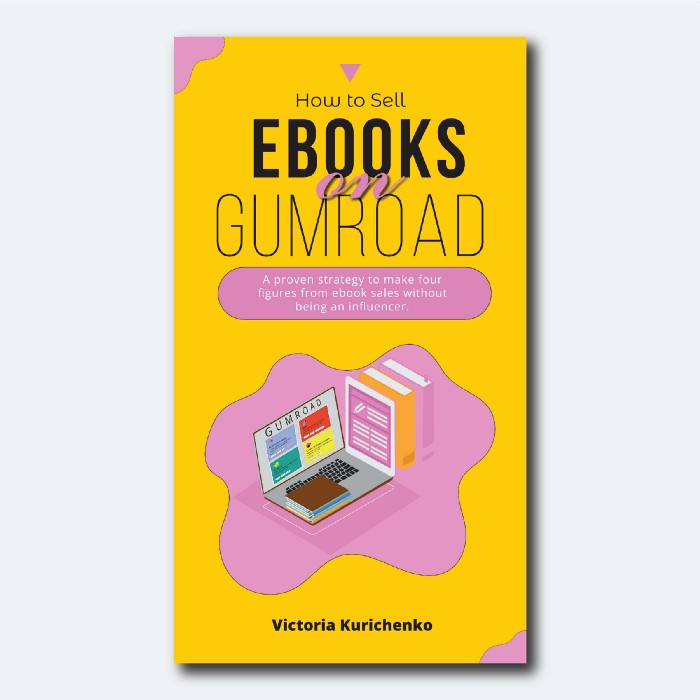

On Gumroad, I released my second digital product.

Here's my complete Gumroad evaluation.

Gumroad is a marketplace for content providers to develop and sell sales pages.

Gumroad handles payments and client requests. It's helpful when someone sends a bogus payment receipt requesting an ebook (actual story!).

You'll forget administrative concerns after your first ebook sale.

After my first ebook sale, I did this: I made additional cash!

After every sale, I tell myself, "I built a new semi-passive revenue source."

This thinking shift helps me become less busy while increasing my income and quality of life.

Besides helping others, folks sell evergreen digital things to earn passive money.

It's in my second ebook.

I explain how I built and sold 50+ copies of my SEO writing ebook without being an influencer.

I show how anyone can sell ebooks on Gumroad and automate their sales process.

This is my ebook.

After publicizing the ebook release, I sold three copies within an hour.

Wow, or meh?

I don’t know.

The answer is different for everyone.

These three sales came from a small email list of 40 motivated fans waiting for my ebook release.

I had bigger plans.

I'll market my ebook on Medium, my website, Quora, and email.

I'm testing affiliate partnerships this time.

One of my ebook buyers is now promoting it for 40% commission.

Become my affiliate if you think your readers would like my ebook.

My ebook is a few days old, but I'm interested to see where it goes.

My SEO writing book started without an email list, affiliates, or 4,000 website visitors. I've made four figures.

I'm slowly expanding my communication avenues to have more impact.

Even a small project can open doors you never knew existed.

So began my writing career.

In summary

If you dare, every concept can become a profitable trip.

Before, I couldn't conceive of creating an ebook.

How to Sell eBooks on Gumroad is my second digital product.

Marketing and writing taught me that anything can be sold online.

Jim Siwek

3 years ago

In 2022, can a lone developer be able to successfully establish a SaaS product?

In the early 2000s, I began developing SaaS. I helped launch an internet fax service that delivered faxes to email inboxes. Back then, it saved consumers money and made the procedure easier.

Google AdWords was young then. Anyone might establish a new website, spend a few hundred dollars on keywords, and see dozens of new paying clients every day. That's how we launched our new SaaS, and these clients stayed for years. Our early ROI was sky-high.

Changing times

The situation changed dramatically after 15 years. Our paid advertising cost $200-$300 for every new customer. Paid advertising takes three to four years to repay.

Fortunately, we still had tens of thousands of loyal clients. Good organic rankings gave us new business. We needed less sponsored traffic to run a profitable SaaS firm.

Is it still possible?

Since selling our internet fax firm, I've dreamed about starting a SaaS company. One I could construct as a lone developer and progressively grow a dedicated customer base, as I did before in a small team.

It seemed impossible to me. Solo startups couldn't afford paid advertising. SEO was tough. Even the worst SaaS startup ideas attracted VC funding. How could I compete with startups that could hire great talent and didn't need to make money for years (or ever)?

The One and Only Way to Learn

After years of talking myself out of SaaS startup ideas, I decided to develop and launch one. I needed to know if a solitary developer may create a SaaS app in 2022.

Thus, I did. I invented webwriter.ai, an AI-powered writing tool for website content, from hero section headlines to blog posts, this year. I soft-launched an MVP in July.

Considering the Issue

Now that I've developed my own fully capable SaaS app for site builders and developers, I wonder if it's still possible. Can webwriter.ai be successful?

I know webwriter.ai's proposal is viable because Jasper.ai and Grammarly are also AI-powered writing tools. With competition comes validation.

To Win, Differentiate

To compete with well-funded established brands, distinguish to stand out to a portion of the market. So I can speak directly to a target user, unlike larger competition.

I created webwriter.ai to help web builders and designers produce web content rapidly. This may be enough differentiation for now.

Budget-Friendly Promotion

When paid search isn't an option, we get inventive. There are more tools than ever to promote a new website.

Organic Results

on social media (Twitter, Instagram, TikTok, LinkedIn)

Marketing with content that is compelling

Link Creation

Listings in directories

references made in blog articles and on other websites

Forum entries

The Beginning of the Journey

As I've labored to construct my software, I've pondered a new mantra. Not sure where that originated from, but I like it. I'll live by it and teach my kids:

“Do the work.”

You might also like

Akshad Singi

3 years ago

Four obnoxious one-minute habits that help me save more than 30 hours each week

These four, when combined, destroy procrastination.

You're not rushed. You waste it on busywork.

You'll accept this eventually.

In 2022, the daily average usage of a user on social media is 2.5 hours.

By 2020, 6 billion hours of video were watched each month by Netflix's customers, who used the service an average of 3.2 hours per day.

When we see these numbers, we think "Wow!" People squander so much time as though they don't contribute. True. These are yours. Likewise.

We don't lack time; we just waste it. Once you realize this, you can change your habits to save time. This article explains. If you adopt ALL 4 of these simple behaviors, you'll see amazing benefits.

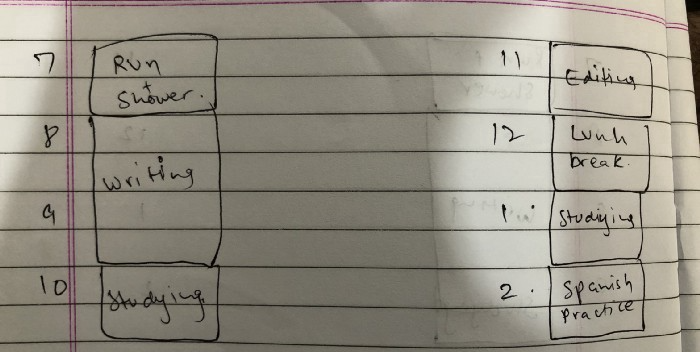

Time-blocking

Cal Newport's time-blocking trick takes a minute but improves your day's clarity.

Divide the next day into 30-minute (or 5-minute, if you're Elon Musk) segments and assign responsibilities. As seen.

Here's why:

The procrastination that results from attempting to determine when to begin working is eliminated. Procrastination is a given if you choose when to begin working in real-time. Even if you may assume you'll start working in five minutes, it won't take you long to realize that five minutes have turned into an hour. But if you've already determined to start working at 2:00 the next day, your odds of procrastinating are greatly decreased, if not eliminated altogether.

You'll also see that you have a lot of time in a day when you plan your day out on paper and assign chores to each hour. Doing this daily will permanently eliminate the lack of time mindset.

5-4-3-2-1: Have breakfast with the frog!

“If it’s your job to eat a frog, it’s best to do it first thing in the morning. And If it’s your job to eat two frogs, it’s best to eat the biggest one first.”

Eating the frog means accomplishing the day's most difficult chore. It's better to schedule it first thing in the morning when time-blocking the night before. Why?

The day's most difficult task is also the one that causes the most postponement. Because of the stress it causes, the later you schedule it, the more time you risk wasting by procrastinating.

However, if you do it right away in the morning, you'll feel good all day. This is the reason it was set for the morning.

Mel Robbins' 5-second rule can help. Start counting backward 54321 and force yourself to start at 1. If you acquire the urge to work on a goal, you must act within 5 seconds or your brain will destroy it. If you're scheduled to eat your frog at 9, eat it at 8:59. Start working.

Micro-visualisation

You've heard of visualizing to enhance the future. Visualizing a bright future won't do much if you're not prepared to focus on the now and develop the necessary habits. Alexander said:

People don’t decide their futures. They decide their habits and their habits decide their future.

I visualize the next day's schedule every morning. My day looks like this

“I’ll start writing an article at 7:30 AM. Then, I’ll get dressed up and reach the medicine outpatient department by 9:30 AM. After my duty is over, I’ll have lunch at 2 PM, followed by a nap at 3 PM. Then, I’ll go to the gym at 4…”

etc.

This reinforces the day you planned the night before. This makes following your plan easy.

Set the timer.

It's the best iPhone productivity app. A timer is incredible for increasing productivity.

Set a timer for an hour or 40 minutes before starting work. Your call. I don't believe in techniques like the Pomodoro because I can focus for varied amounts of time depending on the time of day, how fatigued I am, and how cognitively demanding the activity is.

I work with a timer. A timer keeps you focused and prevents distractions. Your mind stays concentrated because of the timer. Timers generate accountability.

To pee, I'll pause my timer. When I sit down, I'll continue. Same goes for bottle refills. To use Twitter, I must pause the timer. This creates accountability and focuses work.

Connecting everything

If you do all 4, you won't be disappointed. Here's how:

Plan out your day's schedule the night before.

Next, envision in your mind's eye the same timetable in the morning.

Speak aloud 54321 when it's time to work: Eat the frog! In the morning, devour the largest frog.

Then set a timer to ensure that you remain focused on the task at hand.

Matthew Royse

3 years ago

5 Tips for Concise Writing

Here's how to be clear.

“I have only made this letter longer because I have not had the time to make it shorter.” — French mathematician, physicist, inventor, philosopher, and writer Blaise Pascal

Concise.

People want this. We tend to repeat ourselves and use unnecessary words.

Being vague frustrates readers. It focuses their limited attention span on figuring out what you're saying rather than your message.

Edit carefully.

“Examine every word you put on paper. You’ll find a surprising number that don’t serve any purpose.” — American writer, editor, literary critic, and teacher William Zinsser

How do you write succinctly?

Here are three ways to polish your writing.

1. Delete

Your readers will appreciate it if you delete unnecessary words. If a word or phrase is essential, keep it. Don't force it.

Many readers dislike bloated sentences. Ask yourself if cutting a word or phrase will change the meaning or dilute your message.

For example, you could say, “It’s absolutely essential that I attend this meeting today, so I know the final outcome.” It’s better to say, “It’s critical I attend the meeting today, so I know the results.”

Key takeaway

Delete actually, completely, just, full, kind of, really, and totally. Keep the necessary words, cut the rest.

2. Just Do It

Don't tell readers your plans. Your readers don't need to know your plans. Who are you?

Don't say, "I want to highlight our marketing's problems." Our marketing issues are A, B, and C. This cuts 5–7 words per sentence.

Keep your reader's attention on the essentials, not the fluff. What are you doing? You won't lose readers because you get to the point quickly and don't build up.

Key takeaway

Delete words that don't add to your message. Do something, don't tell readers you will.

3. Cut Overlap

You probably repeat yourself unintentionally. You may add redundant sentences when brainstorming. Read aloud to detect overlap.

Remove repetition from your writing. It's important to edit our writing and thinking to avoid repetition.

Key Takeaway

If you're repeating yourself, combine sentences to avoid overlap.

4. Simplify

Write as you would to family or friends. Communicate clearly. Don't use jargon. These words confuse readers.

Readers want specifics, not jargon. Write simply. Done.

Most adults read at 8th-grade level. Jargon and buzzwords make speech fluffy. This confuses readers who want simple language.

Key takeaway

Ensure all audiences can understand you. USA Today's 5th-grade reading level is intentional. They want everyone to understand.

5. Active voice

Subjects perform actions in active voice. When you write in passive voice, the subject receives the action.

For example, “the board of directors decided to vote on the topic” is an active voice, while “a decision to vote on the topic was made by the board of directors” is a passive voice.

Key takeaway

Active voice clarifies sentences. Active voice is simple and concise.

Bringing It All Together

Five tips help you write clearly. Delete, just do it, cut overlap, use simple language, and write in an active voice.

Clear writing is effective. It's okay to occasionally use unnecessary words or phrases. Realizing it is key. Check your writing.

Adding words costs.

Write more concisely. People will appreciate it and read your future articles, emails, and messages. Spending extra time will increase trust and influence.

“Not that the story need be long, but it will take a long while to make it short.” — Naturalist, essayist, poet, and philosopher Henry David Thoreau

Techletters

2 years ago

Using Synthesia, DALL-E 2, and Chat GPT-3, create AI news videos

Combining AIs creates realistic AI News Videos.

Powerful AI tools like Chat GPT-3 are trending. Have you combined AIs?

The 1-minute fake news video below is startlingly realistic. Artificial Intelligence developed NASA's Mars exploration breakthrough video (AI). However, integrating the aforementioned AIs generated it.

AI-generated text for the Chat GPT-3 based on a succinct tagline

DALL-E-2 AI generates an image from a brief slogan.

Artificial intelligence-generated avatar and speech

This article shows how to use and mix the three AIs to make a realistic news video. First, watch the video (1 minute).

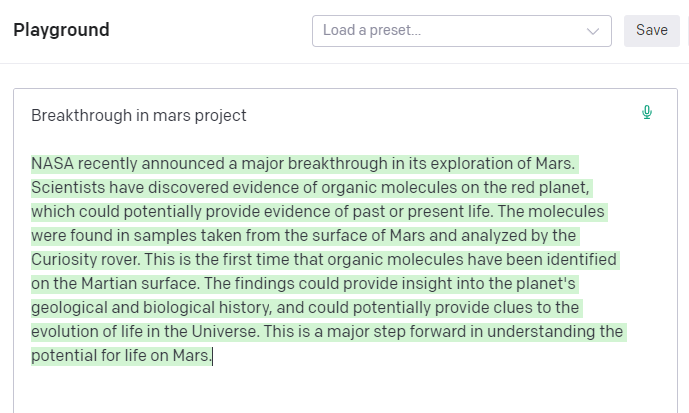

Talk GPT-3

Chat GPT-3 is an OpenAI NLP model. It can auto-complete text and produce conversational responses.

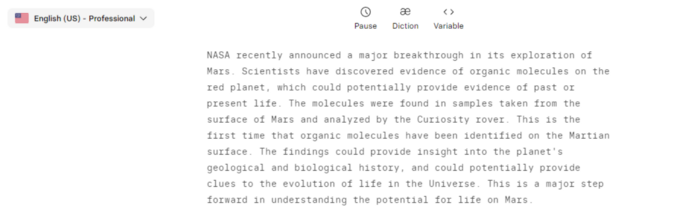

Try it at the playground. The AI will write a comprehensive text from a brief tagline. Let's see what the AI generates with "Breakthrough in Mars Project" as the headline.

Amazing. Our tagline matches our complete and realistic text. Fake news can start here.

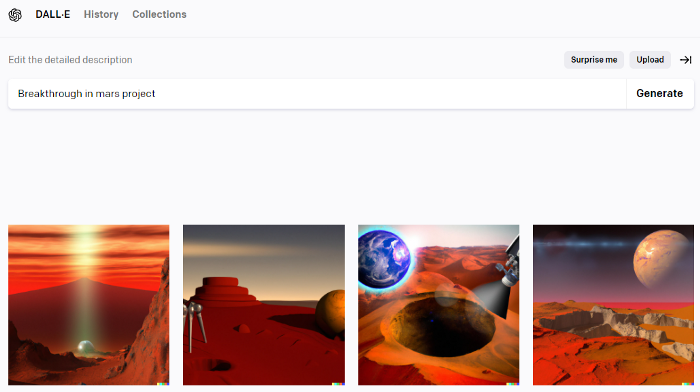

DALL-E-2

OpenAI's huge transformer-based language model DALL-E-2. Its GPT-3 basis is geared for image generation. It can generate high-quality photos from a brief phrase and create artwork and images of non-existent objects.

DALL-E-2 can create a news video background. We'll use "Breakthrough in Mars project" again. Our AI creates four striking visuals. Last.

Synthesia

Synthesia lets you quickly produce videos with AI avatars and synthetic vocals.

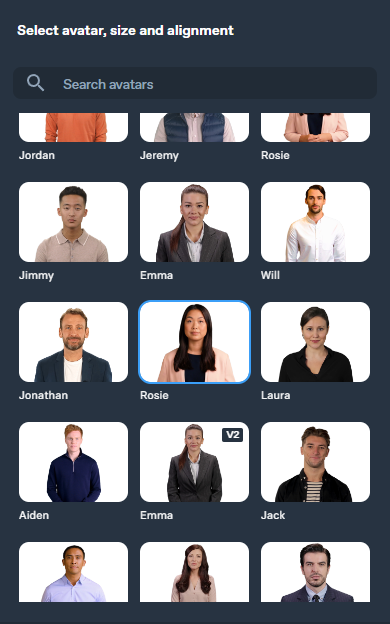

Avatars are first. Rosie it is.

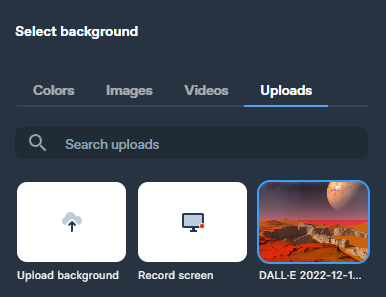

Upload and select DALL-backdrop. E-2's

Copy the Chat GPT-3 content and choose a synthetic voice.

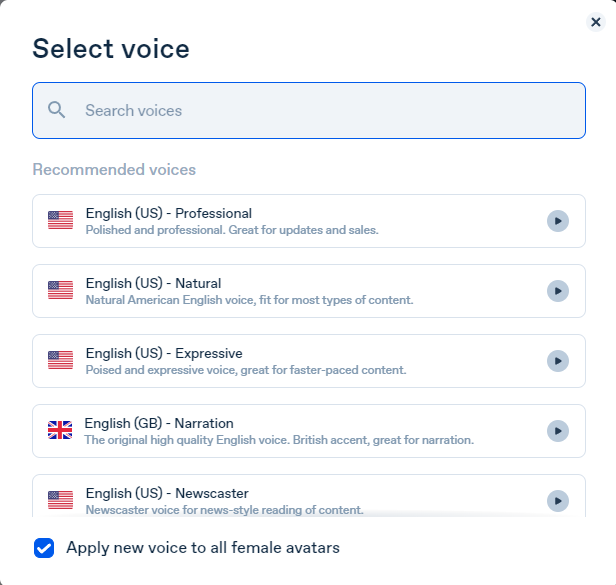

Voice: English (US) Professional.

Finally, we generate and watch or download our video.

Synthesia AI completes the AI video.

Overview & Resources

We used three AIs to make surprisingly realistic NASA Mars breakthrough fake news in this post. Synthesia generates an avatar and a synthetic voice, therefore it may be four AIs.

These AIs created our fake news.

AI-generated text for the Chat GPT-3 based on a succinct tagline

DALL-E-2 AI generates an image from a brief slogan.

Artificial intelligence-generated avatar and speech