Union LA x Air Jordan 2 “Future Is Now” PREVIEW

With the help of Virgil Abloh and Union LA‘s Chris Gibbs, it's now clear that Jordan Brand intended to bring the Air Jordan 2 back in 2022.

The “Future Is Now” collection includes two colorways of MJ's second signature as well as an extensive range of apparel and accessories.

“We wanted to juxtapose what some futuristic gear might look like after being worn and patina'd,”

Union stated on the collaboration's landing page.

“You often see people's future visions that are crisp and sterile. We thought it would be cool to wear it in and make it organic...”

The classic co-branding appears on short-sleeve tees, hoodies, and sweat shorts/sweat pants, all lightly distressed at the hems and seams.

Also, a filtered black-and-white photo of MJ graces the adjacent long sleeves, labels stitch into the socks, and the Jumpman logo adorns the four caps.

Liner jackets and flight pants will also be available, adding reimagined militaria to a civilian ensemble.

The Union LA x Air Jordan 2 (Grey Fog and Rattan) shares many of the same beats. Vintage suedes show age, while perforations and detailing reimagine Bruce Kilgore's design for the future.

The “UN/LA” tag across the modified eye stays, the leather patch across the tongue, and the label that wraps over the lateral side of the collar complete the look.

The footwear will also include a Crater Slide in the “Grey Fog” color scheme.

BUYING

On 4/9 and 4/10 from 9am-3pm, Union LA will be giving away a pair of Air Jordan 2s at their La Brea storefront (110 S. LA BREA AVE. LA, CA 90036). The raffle is only open to LA County residents with a valid CA ID. You must enter by 11:59pm on 4/10 to win. Winners will be notified via email.

More on Lifestyle

Will Lockett

2 years ago

There Is A New EV King in Town

McMurtry Spéirling outperforms Tesla in speed and efficiency.

EVs were ridiculously slow for decades. However, the 2008 Tesla Roadster revealed that EVs might go extraordinarily fast. The Tesla Model S Plaid and Rimac Nevera are the fastest-accelerating road vehicles, despite combustion-engined road cars dominating the course. A little-known firm beat Tesla and Rimac in the 0-60 race, beat F1 vehicles on a circuit, and boasts a 350-mile driving range. The McMurtry Spéirling is completely insane.

Mat Watson of CarWow, a YouTube megastar, was recently handed a Spéirling and access to Silverstone Circuit (view video above). Mat ran a quarter-mile on Silverstone straight with former F1 driver Max Chilton. The little pocket-rocket automobile touched 100 mph in 2.7 seconds, completed the quarter mile in 7.97 seconds, and hit 0-60 in 1.4 seconds. When looking at autos quickly, 0-60 times can seem near. The Tesla Model S Plaid does 0-60 in 1.99 seconds, which is comparable to the Spéirling. Despite the meager statistics, the Spéirling is nearly 30% faster than Plaid!

My vintage VW Golf 1.4s has an 8.8-second 0-60 time, whereas a BMW Z4 3.0i is 30% faster (with a 0-60 time of 6 seconds). I tried to beat a Z4 off the lights in my Golf, but the Beamer flew away. If they challenge the Spéirling in a Model S Plaid, they'll feel as I did. Fast!

Insane quarter-mile drag time. Its road car record is 7.97 seconds. A Dodge Demon, meant to run extremely fast quarter miles, finishes so in 9.65 seconds, approximately 20% slower. The Rimac Nevera's 8.582-second quarter-mile record was miles behind drag racing. This run hampered the Spéirling. Because it was employing gearing that limited its top speed to 150 mph, it reached there in a little over 5 seconds without accelerating for most of the quarter mile! McMurtry can easily change the gearing, making the Spéirling run quicker.

McMurtry did this how? First, the Spéirling is a tiny single-seater EV with a 60 kWh battery pack, making it one of the lightest EVs ever. The 1,000-hp Spéirling has more than one horsepower per kg. The Nevera has 0.84 horsepower per kg and the Plaid 0.44.

However, you cannot simply construct a car light and power it. Instead of accelerating, it would spin. This makes the Spéirling a fan car. Its huge fans create massive downforce. These fans provide the Spéirling 2 tonnes of downforce while stationary, so you could park it on the ceiling. Its fast 0-60 time comes from its downforce, which lets it deliver all that power without wheel spin.

It also possesses complete downforce at all speeds, allowing it to tackle turns faster than even race vehicles. Spéirlings overcame VW IDRs and F1 cars to set the Goodwood Hill Climb record (read more here). The Spéirling is a dragstrip winner and track dominator, unlike the Plaid and Nevera.

The Spéirling is astonishing for a single-seater. Fan-generated downforce is more efficient than wings and splitters. It also means the vehicle has very minimal drag without the fan. The Spéirling can go 350 miles per charge (WLTP) or 20-30 minutes at full speed on a track despite its 60 kWh battery pack. The G-forces would hurt your neck before the battery died if you drove around a track for longer. The Spéirling can charge at over 200 kW in about 30 minutes. Thus, driving to track days, having fun, and returning is possible. Unlike other high-performance EVs.

Tesla, Rimac, or Lucid will struggle to defeat the Spéirling. They would need to build a fan automobile because adding power to their current vehicle would make it uncontrollable. The EV and automobile industries now have a new, untouchable performance king.

Tech With Dom

2 years ago

6 Awesome Desk Accessories You Must Have!

I'm gadget-obsessed. So I shared my top 6 desk gadgets.

These gadgets improve my workflow and are handy for working from home.

Without further ado...

Computer light bar Xiaomi Mi

I've previously recommended the Xiaomi Mi Light Bar, and I still do. It's stylish and convenient.

The Mi bar is a monitor-mounted desk lamp. The lamp's hue and brightness can be changed with a stylish wireless remote.

Changeable hue and brightness make it ideal for late-night work.

Desk Mat 2.

I wasn't planning to include a desk surface in this article, but I find it improves computer use.

The mouse feels smoother and is a better palm rest than wood or glass.

I'm currently using the overkill Razer Goliathus Extended Chroma RGB Gaming Surface, but I like RGB.

Using a desk surface or mat makes computer use more comfortable, and it's not expensive.

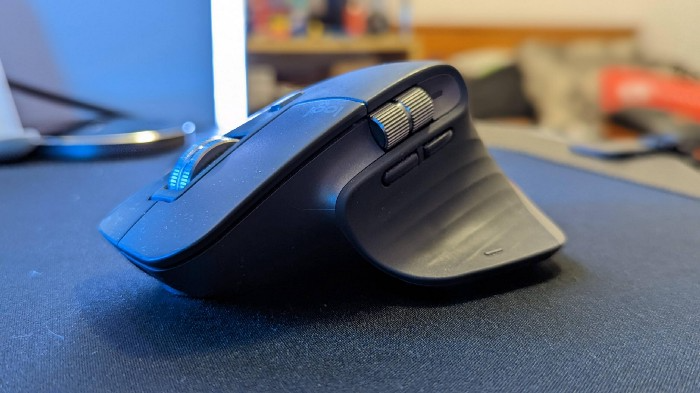

Third, the Logitech MX Master 3 Mouse

The Logitech MX Master 3 or any from the MX Master series is my favorite mouse.

The side scroll wheel on these mice is a feature I've never seen on another mouse.

Side scroll wheels are great for spreadsheets and video editing. It would be hard for me to switch from my Logitech MX Master 3 to another mouse. Only gaming is off-limits.

Google Nest 4.

Without a smart assistant, my desk is useless. I'm currently using the second-generation Google Nest Hub, but I've also used the Amazon Echo Dot, Echo Spot, and Apple HomePod Mini.

As a Pixel 6 Pro user, the Nest Hub works best with my phone.

My Nest Hub plays news, music, and calendar events. It also lets me control lights and switches with my smartphone. It plays YouTube videos.

Google Pixel Stand, No. 5

A wireless charger on my desk is convenient for charging my phone and other devices while I work. My desk has two wireless chargers. I have a Satechi aluminum fast charger and a second-generation Google Pixel Stand.

If I need to charge my phone and earbuds simultaneously, I use two wireless chargers. Satechi chargers are well-made and fast. Micro-USB is my only complaint.

The Pixel Stand converts compatible devices into a smart display for adjusting charging speeds and controlling other smart devices. My Pixel 6 Pro charges quickly. Here's my video review.

6. Anker Power Bank

Anker's 65W charger is my final recommendation. This online find was a must-have. This can charge my laptop and several non-wireless devices, perfect for any techie!

The charger has two USB-A ports and two USB-C ports, one with 45W and the other with 20W, so it can charge my iPad Pro and Pixel 6 Pro simultaneously.

Summary

These are some of my favorite office gadgets. My kit page has an updated list.

Links to the products mentioned in this article are in the appropriate sections. These are affiliate links.

You're up! Share the one desk gadget you can't live without and why.

Hannah Elliott

2 years ago

Pebble Beach Auto Auctions Set $469M Record

The world's most prestigious vintage vehicle show included amazing autos and record-breaking sums.

This 1932 Duesenberg J Figoni Sports Torpedo earned Best of Show in 2022.

David Paul Morris (DPM)/Bloomberg

2022 Pebble Beach Concours d'Elegance winner was a pre-war roadster.

Lee Anderson's 1932 Duesenberg J Figoni Sports Torpedo won Best of Show at Pebble Beach Golf Links near Carmel, Calif., on Sunday. First American win since 2013.

Sandra Button, chairperson of the annual concours, said the car, whose chassis and body had been separated for years, "marries American force with European style." "Its resurrection story is passionate."

Pebble Beach Concours d'Elegance Auction

Since 1950, the Pebble Beach Concours d'Elegance has welcomed the world's most costly collectable vehicles for a week of parties, auctions, rallies, and high-roller meetings. The cold, dreary weather highlighted the automobiles' stunning lines and hues.

DPM/Bloomberg

A visitor photographs a 1948 Ferrari 166 MM Touring Barchetta. This is one of 25 Ferraris manufactured in the years after World War II. First shown at the 1948 Turin Salon. Others finished Mille Miglia and Le Mans, which set the tone for Ferrari racing for years.

DPM/Bloomberg

This year's frontrunners were ultra-rare pre-war and post-war automobiles with long and difficult titles, such a 1937 Talbot-Lago T150C-SS Figoni & Falaschi Teardrop Coupe and a 1951 Talbot-Lago T26 Grand Sport Stabilimenti Farina Cabriolet.

The hefty, enormous coaches inspire visions of golden pasts when mysterious saloons swept over the road with otherworldly style, speed, and grace. Only the richest and most powerful people, like Indian maharaja and Hollywood stars, owned such vehicles.

Antonio Chopitea, a Peruvian sugar tycoon, ordered a new Duesenberg in Paris. Hemmings says the two-tone blue beauty was moved to the US and dismantled in the 1960s. Body and chassis were sold separately and rejoined decades later in a three-year, prize-winning restoration.

The concours is the highlight of Monterey Car Week, a five-day Super Bowl for car enthusiasts. Early events included Porsche and Ferrari displays, antique automobile races, and new-vehicle debuts. Many auto executives call Monterey Car Week the "new auto show."

Many visitors were drawn to the record-breaking auctions.

A 1969 Porsche 908/02 auctioned for $4.185 million. Flat-eight air-cooled engine, 90.6-inch wheelbase, 1,320-pound weight. Vic Elford, Richard Attwood, Rudi Lins, Gérard Larrousse, Kurt Ahrens Jr., Masten Gregory, and Pedro Rodriguez drove it, according to Gooding.

DPM/Bloomberg

The 1931 Bentley Eight Liter Sports Tourer doesn't meet its reserve. Gooding & Co., the official auction house of the concours, made more than $105 million and had an 82% sell-through rate. This powerful open-top tourer is one of W.O. Bentley's 100 automobiles. Only 80 remain.

DPM/Bloomberg

The final auction on Aug. 21 brought in $456.1 million, breaking the previous high of $394.48 million established in 2015 in Monterey. “The week put an exclamation point on what has been an exceptional year for the collector automobile market,” Hagerty analyst John Wiley said.

Many cars that go unsold at public auction are sold privately in the days after. After-sales pushed the week's haul to $469 million on Aug. 22, up 18.9% from 2015's record.

In today's currencies, 2015's record sales amount to $490 million, Wiley noted. The dollar is degrading faster than old autos.

Still, 113 million-dollar automobiles sold. The average car sale price was $583,211, up from $446,042 last year, while multimillion-dollar hammer prices made up around 75% of total sales.

Industry insiders and market gurus expected that stock market volatility, the crisis in Ukraine, and the dollar-euro exchange rate wouldn't influence the world's biggest spenders.

Classic.com's CEO said there's no hint of a recession in an e-mail. Big sales and crowds.

Ticket-holders wore huge hats, flowery skirts, and other Kentucky Derby-esque attire. Coffee, beverages, and food are extra.

DPM/Bloomberg

Mercedes-Benz 300 SL Gullwing, 1955. Mercedes produced the two-seat gullwing coupe from 1954–1957 and the roadster from 1957–1963. It was once West Germany's fastest and most powerful automobile. You'd be hard-pressed to locate one for less $1 million.

DPM/Bloomberg

1955 Ferrari 410 Sport sold for $22 million at RM Sotheby's. It sold a 1937 Mercedes-Benz 540K Sindelfingen Roadster for $9.9 million and a 1924 Hispano-Suiza H6C Transformable Torpedo for $9.245 million. The family-run mansion sold $221.7 million with a 90% sell-through rate, up from $147 million in 2021. This year, RM Sotheby's cars averaged $1.3 million.

Not everyone saw such great benefits.

Gooding & Co., the official auction house of the concours, made more than $105 million and had an 82% sell-through rate. 1937 Bugatti Type 57SC Atalante, 1990 Ferrari F40, and 1994 Bugatti EB110 Super Sport were top sellers.

The 1969 Autobianchi A112 Bertone. This idea two-seater became a Hot Wheels toy but was never produced. It has a four-speed manual drive and an inline-four mid-engine arrangement like the Lamborghini Miura.

DPM/Bloomberg

1956 Porsche 356 A Speedster at Gooding & Co. The Porsche 356 is a lightweight, rear-engine, rear-wheel drive vehicle that lacks driving power but is loved for its rounded, Beetle-like hardtop coupé and open-top versions.

DPM/Bloomberg

Mecum sold $50.8 million with a 64% sell-through rate, down from $53.8 million and 77% in 2021. Its top lot, a 1958 Ferrari 250 GT 'Tour de France' Alloy Coupe, sold for $2.86 million, but its average price was $174,016.

Bonhams had $27.8 million in sales with an 88% sell-through rate. The same sell-through generated $35.9 million in 2021.

Gooding & Co. and RM Sotheby's posted all 10 top sales, leaving Bonhams, Mecum, and Hagerty-owned Broad Arrow fighting for leftovers. Six of the top 10 sellers were Ferraris, which remain the gold standard for collectable automobiles. Their prices have grown over decades.

Classic.com's Calle claimed RM Sotheby's "stole the show," but "BroadArrow will be a force to reckon with."

Although pre-war cars were hot, '80s and '90s cars showed the most appreciation and attention. Generational transition and new buyer profile."

2022 Pebble Beach Concours d'Elegance judges inspect 1953 Siata 208. The rounded coupe was introduced at the 1952 Turin Auto Show in Italy and is one of 18 ever produced. It sports a 120hp Fiat engine, five-speed manual transmission, and alloy drum brakes. Owners liked their style, but not their reliability.

DPM/Bloomberg

The Czinger 21 CV Max at Pebble Beach. Monterey Car Week concentrates on historic and classic automobiles, but modern versions like this Czinger hypercar also showed.

DPM/Bloomberg

The 1932 Duesenberg J Figoni Sports Torpedo won Best in Show in 2022. Lee and Penny Anderson of Naples, Fla., own the once-separate-chassis-from-body automobile.

DPM/Bloomberg

You might also like

Dmitrii Eliuseev

2 years ago

Creating Images on Your Local PC Using Stable Diffusion AI

Deep learning-based generative art is being researched. As usual, self-learning is better. Some models, like OpenAI's DALL-E 2, require registration and can only be used online, but others can be used locally, which is usually more enjoyable for curious users. I'll demonstrate the Stable Diffusion model's operation on a standard PC.

Let’s get started.

What It Does

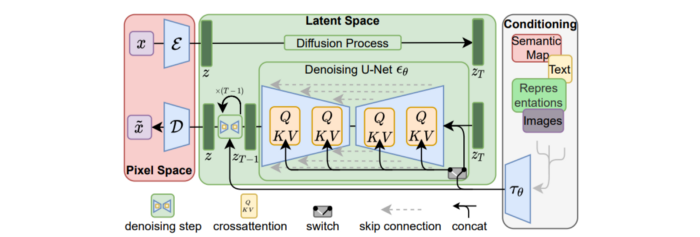

Stable Diffusion uses numerous components:

A generative model trained to produce images is called a diffusion model. The model is incrementally improving the starting data, which is only random noise. The model has an image, and while it is being trained, the reversed process is being used to add noise to the image. Being able to reverse this procedure and create images from noise is where the true magic is (more details and samples can be found in the paper).

An internal compressed representation of a latent diffusion model, which may be altered to produce the desired images, is used (more details can be found in the paper). The capacity to fine-tune the generation process is essential because producing pictures at random is not very attractive (as we can see, for instance, in Generative Adversarial Networks).

A neural network model called CLIP (Contrastive Language-Image Pre-training) is used to translate natural language prompts into vector representations. This model, which was trained on 400,000,000 image-text pairs, enables the transformation of a text prompt into a latent space for the diffusion model in the scenario of stable diffusion (more details in that paper).

This figure shows all data flow:

The weights file size for Stable Diffusion model v1 is 4 GB and v2 is 5 GB, making the model quite huge. The v1 model was trained on 256x256 and 512x512 LAION-5B pictures on a 4,000 GPU cluster using over 150.000 NVIDIA A100 GPU hours. The open-source pre-trained model is helpful for us. And we will.

Install

Before utilizing the Python sources for Stable Diffusion v1 on GitHub, we must install Miniconda (assuming Git and Python are already installed):

wget https://repo.anaconda.com/miniconda/Miniconda3-py39_4.12.0-Linux-x86_64.sh

chmod +x Miniconda3-py39_4.12.0-Linux-x86_64.sh

./Miniconda3-py39_4.12.0-Linux-x86_64.sh

conda update -n base -c defaults condaInstall the source and prepare the environment:

git clone https://github.com/CompVis/stable-diffusion

cd stable-diffusion

conda env create -f environment.yaml

conda activate ldm

pip3 install transformers --upgradeDownload the pre-trained model weights next. HiggingFace has the newest checkpoint sd-v14.ckpt (a download is free but registration is required). Put the file in the project folder and have fun:

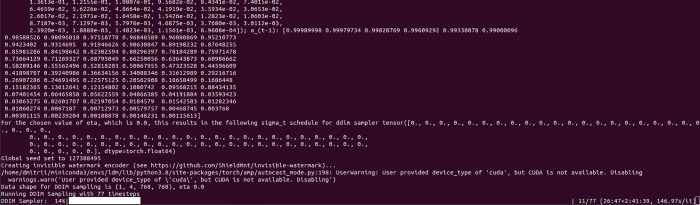

python3 scripts/txt2img.py --prompt "hello world" --plms --ckpt sd-v1-4.ckpt --skip_grid --n_samples 1Almost. The installation is complete for happy users of current GPUs with 12 GB or more VRAM. RuntimeError: CUDA out of memory will occur otherwise. Two solutions exist.

Running the optimized version

Try optimizing first. After cloning the repository and enabling the environment (as previously), we can run the command:

python3 optimizedSD/optimized_txt2img.py --prompt "hello world" --ckpt sd-v1-4.ckpt --skip_grid --n_samples 1Stable Diffusion worked on my visual card with 8 GB RAM (alas, I did not behave well enough to get NVIDIA A100 for Christmas, so 8 GB GPU is the maximum I have;).

Running Stable Diffusion without GPU

If the GPU does not have enough RAM or is not CUDA-compatible, running the code on a CPU will be 20x slower but better than nothing. This unauthorized CPU-only branch from GitHub is easiest to obtain. We may easily edit the source code to use the latest version. It's strange that a pull request for that was made six months ago and still hasn't been approved, as the changes are simple. Readers can finish in 5 minutes:

Replace if attr.device!= torch.device(cuda) with if attr.device!= torch.device(cuda) and torch.cuda.is available at line 20 of ldm/models/diffusion/ddim.py ().

Replace if attr.device!= torch.device(cuda) with if attr.device!= torch.device(cuda) and torch.cuda.is available in line 20 of ldm/models/diffusion/plms.py ().

Replace device=cuda in lines 38, 55, 83, and 142 of ldm/modules/encoders/modules.py with device=cuda if torch.cuda.is available(), otherwise cpu.

Replace model.cuda() in scripts/txt2img.py line 28 and scripts/img2img.py line 43 with if torch.cuda.is available(): model.cuda ().

Run the script again.

Testing

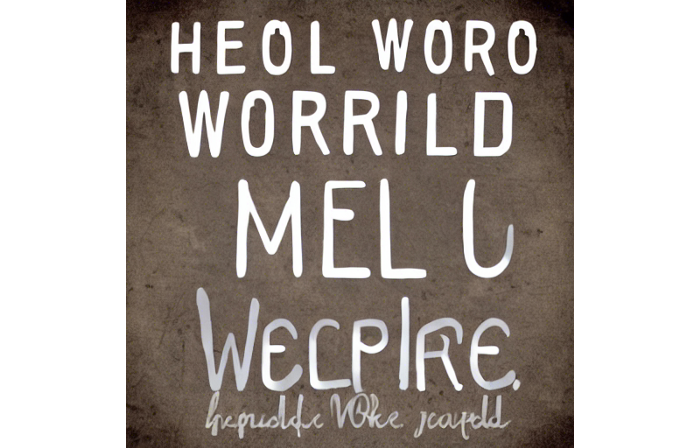

Test the model. Text-to-image is the first choice. Test the command line example again:

python3 scripts/txt2img.py --prompt "hello world" --plms --ckpt sd-v1-4.ckpt --skip_grid --n_samples 1The slow generation takes 10 seconds on a GPU and 10 minutes on a CPU. Final image:

Hello world is dull and abstract. Try a brush-wielding hamster. Why? Because we can, and it's not as insane as Napoleon's cat. Another image:

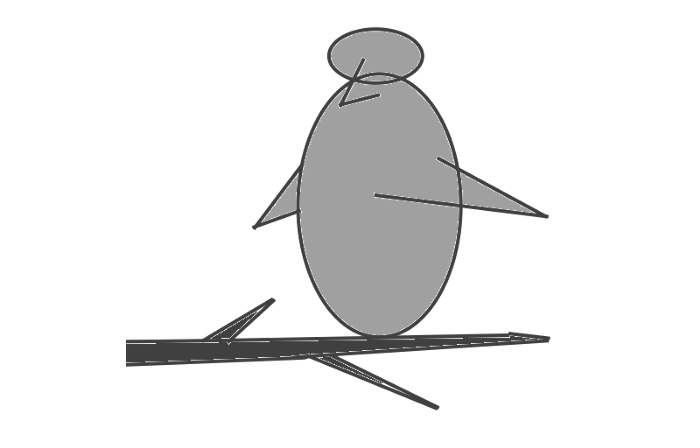

Generating an image from a text prompt and another image is interesting. I made this picture in two minutes using the image editor (sorry, drawing wasn't my strong suit):

I can create an image from this drawing:

python3 scripts/img2img.py --prompt "A bird is sitting on a tree branch" --ckpt sd-v1-4.ckpt --init-img bird.png --strength 0.8It was far better than my initial drawing:

I hope readers understand and experiment.

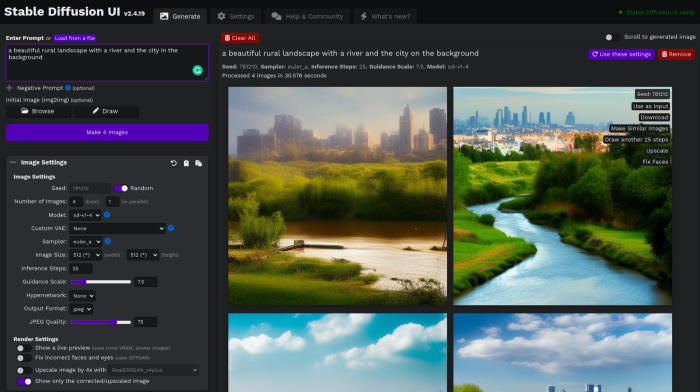

Stable Diffusion UI

Developers love the command line, but regular users may struggle. Stable Diffusion UI projects simplify image generation and installation. Simple usage:

Unpack the ZIP after downloading it from https://github.com/cmdr2/stable-diffusion-ui/releases. Linux and Windows are compatible with Stable Diffusion UI (sorry for Mac users, but those machines are not well-suitable for heavy machine learning tasks anyway;).

Start the script.

Done. The web browser UI makes configuring various Stable Diffusion features (upscaling, filtering, etc.) easy:

V2.1 of Stable Diffusion

I noticed the notification about releasing version 2.1 while writing this essay, and it was intriguing to test it. First, compare version 2 to version 1:

alternative text encoding. The Contrastive LanguageImage Pre-training (CLIP) deep learning model, which was trained on a significant number of text-image pairs, is used in Stable Diffusion 1. The open-source CLIP implementation used in Stable Diffusion 2 is called OpenCLIP. It is difficult to determine whether there have been any technical advancements or if legal concerns were the main focus. However, because the training datasets for the two text encoders were different, the output results from V1 and V2 will differ for the identical text prompts.

a new depth model that may be used to the output of image-to-image generation.

a revolutionary upscaling technique that can quadruple the resolution of an image.

Generally higher resolution Stable Diffusion 2 has the ability to produce both 512x512 and 768x768 pictures.

The Hugging Face website offers a free online demo of Stable Diffusion 2.1 for code testing. The process is the same as for version 1.4. Download a fresh version and activate the environment:

conda deactivate

conda env remove -n ldm # Use this if version 1 was previously installed

git clone https://github.com/Stability-AI/stablediffusion

cd stablediffusion

conda env create -f environment.yaml

conda activate ldmHugging Face offers a new weights ckpt file.

The Out of memory error prevented me from running this version on my 8 GB GPU. Version 2.1 fails on CPUs with the slow conv2d cpu not implemented for Half error (according to this GitHub issue, the CPU support for this algorithm and data type will not be added). The model can be modified from half to full precision (float16 instead of float32), however it doesn't make sense since v1 runs up to 10 minutes on the CPU and v2.1 should be much slower. The online demo results are visible. The same hamster painting with a brush prompt yielded this result:

It looks different from v1, but it functions and has a higher resolution.

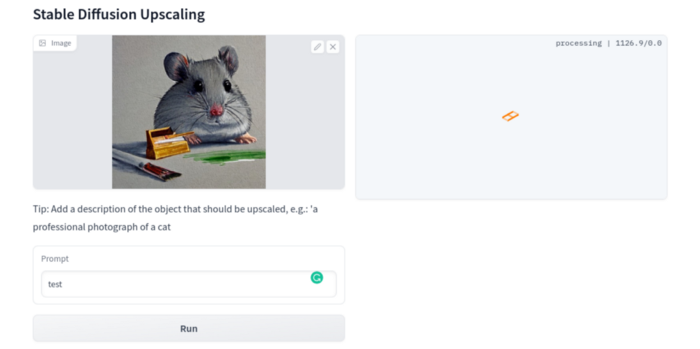

The superresolution.py script can run the 4x Stable Diffusion upscaler locally (the x4-upscaler-ema.ckpt weights file should be in the same folder):

python3 scripts/gradio/superresolution.py configs/stable-diffusion/x4-upscaling.yaml x4-upscaler-ema.ckptThis code allows the web browser UI to select the image to upscale:

The copy-paste strategy may explain why the upscaler needs a text prompt (and the Hugging Face code snippet does not have any text input as well). I got a GPU out of memory error again, although CUDA can be disabled like v1. However, processing an image for more than two hours is unlikely:

Stable Diffusion Limitations

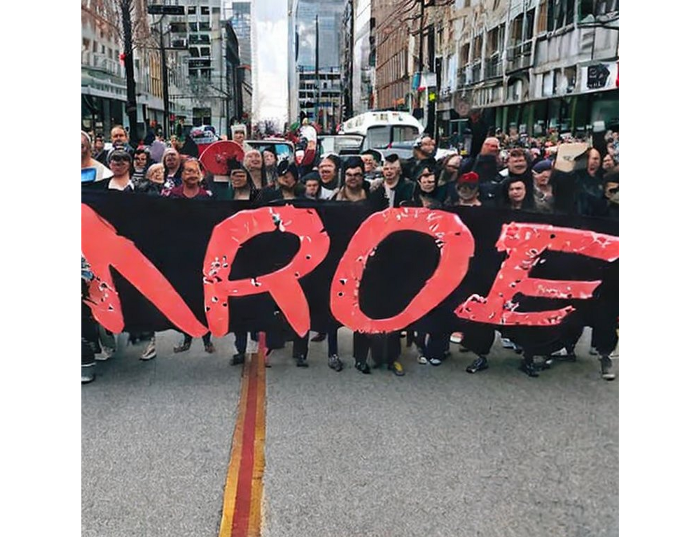

When we use the model, it's fun to see what it can and can't do. Generative models produce abstract visuals but not photorealistic ones. This fundamentally limits The generative neural network was trained on text and image pairs, but humans have a lot of background knowledge about the world. The neural network model knows nothing. If someone asks me to draw a Chinese text, I can draw something that looks like Chinese but is actually gibberish because I never learnt it. Generative AI does too! Humans can learn new languages, but the Stable Diffusion AI model includes only language and image decoder brain components. For instance, the Stable Diffusion model will pull NO WAR banner-bearers like this:

V1:

V2.1:

The shot shows text, although the model never learned to read or write. The model's string tokenizer automatically converts letters to lowercase before generating the image, so typing NO WAR banner or no war banner is the same.

I can also ask the model to draw a gorgeous woman:

V1:

V2.1:

The first image is gorgeous but physically incorrect. A second one is better, although it has an Uncanny valley feel. BTW, v2 has a lifehack to add a negative prompt and define what we don't want on the image. Readers might try adding horrible anatomy to the gorgeous woman request.

If we ask for a cartoon attractive woman, the results are nice, but accuracy doesn't matter:

V1:

V2.1:

Another example: I ordered a model to sketch a mouse, which looks beautiful but has too many legs, ears, and fingers:

V1:

V2.1: improved but not perfect.

V1 produces a fun cartoon flying mouse if I want something more abstract:

I tried multiple times with V2.1 but only received this:

The image is OK, but the first version is closer to the request.

Stable Diffusion struggles to draw letters, fingers, etc. However, abstract images yield interesting outcomes. A rural landscape with a modern metropolis in the background turned out well:

V1:

V2.1:

Generative models help make paintings too (at least, abstract ones). I searched Google Image Search for modern art painting to see works by real artists, and this was the first image:

I typed "abstract oil painting of people dancing" and got this:

V1:

V2.1:

It's a different style, but I don't think the AI-generated graphics are worse than the human-drawn ones.

The AI model cannot think like humans. It thinks nothing. A stable diffusion model is a billion-parameter matrix trained on millions of text-image pairs. I input "robot is creating a picture with a pen" to create an image for this post. Humans understand requests immediately. I tried Stable Diffusion multiple times and got this:

This great artwork has a pen, robot, and sketch, however it was not asked. Maybe it was because the tokenizer deleted is and a words from a statement, but I tried other requests such robot painting picture with pen without success. It's harder to prompt a model than a person.

I hope Stable Diffusion's general effects are evident. Despite its limitations, it can produce beautiful photographs in some settings. Readers who want to use Stable Diffusion results should be warned. Source code examination demonstrates that Stable Diffusion images feature a concealed watermark (text StableDiffusionV1 and SDV2) encoded using the invisible-watermark Python package. It's not a secret, because the official Stable Diffusion repository's test watermark.py file contains a decoding snippet. The put watermark line in the txt2img.py source code can be removed if desired. I didn't discover this watermark on photographs made by the online Hugging Face demo. Maybe I did something incorrectly (but maybe they are just not using the txt2img script on their backend at all).

Conclusion

The Stable Diffusion model was fascinating. As I mentioned before, trying something yourself is always better than taking someone else's word, so I encourage readers to do the same (including this article as well;).

Is Generative AI a game-changer? My humble experience tells me:

I think that place has a lot of potential. For designers and artists, generative AI can be a truly useful and innovative tool. Unfortunately, it can also pose a threat to some of them since if users can enter a text field to obtain a picture or a website logo in a matter of clicks, why would they pay more to a different party? Is it possible right now? unquestionably not yet. Images still have a very poor quality and are erroneous in minute details. And after viewing the image of the stunning woman above, models and fashion photographers may also unwind because it is highly unlikely that AI will replace them in the upcoming years.

Today, generative AI is still in its infancy. Even 768x768 images are considered to be of a high resolution when using neural networks, which are computationally highly expensive. There isn't an AI model that can generate high-resolution photographs natively without upscaling or other methods, at least not as of the time this article was written, but it will happen eventually.

It is still a challenge to accurately represent knowledge in neural networks (information like how many legs a cat has or the year Napoleon was born). Consequently, AI models struggle to create photorealistic photos, at least where little details are important (on the other side, when I searched Google for modern art paintings, the results are often even worse;).

When compared to the carefully chosen images from official web pages or YouTube reviews, the average output quality of a Stable Diffusion generation process is actually less attractive because to its high degree of randomness. When using the same technique on their own, consumers will theoretically only view those images as 1% of the results.

Anyway, it's exciting to witness this area's advancement, especially because the project is open source. Google's Imagen and DALL-E 2 can also produce remarkable findings. It will be interesting to see how they progress.

Rita McGrath

2 years ago

Flywheels and Funnels

Traditional sales organizations used the concept of a sales “funnel” to describe the process through which potential customers move, ending up with sales at the end. Winners today have abandoned that way of thinking in favor of building flywheels — business models in which every element reinforces every other.

Ah, the marketing funnel…

Prospective clients go through a predictable set of experiences, students learn in business school marketing classes. It looks like this:

Understanding the funnel helps evaluate sales success indicators. Gail Goodwin, former CEO of small business direct mail provider Constant Contact, said managing the pipeline was key to escaping the sluggish SaaS ramp of death.

Like the funnel concept. To predict how well your business will do, measure how many potential clients are aware of it (awareness) and how many take the next step. If 1,000 people heard about your offering and 10% showed interest, you'd have 100 at that point. If 50% of these people made buyer-like noises, you'd know how many were, etc. It helped model buying trends.

TV, magazine, and radio advertising are pricey for B2C enterprises. Traditional B2B marketing involved armies of sales reps, which was expensive and a barrier to entry.

Cracks in the funnel model

Digital has exposed the funnel's limitations. Hubspot was born at a time when buyers and sellers had huge knowledge asymmetries, according to co-founder Brian Halligan. Those selling a product could use the buyer's lack of information to become a trusted partner.

As the world went digital, getting information and comparing offerings became faster, easier, and cheaper. Buyers didn't need a seller to move through a funnel. Interactions replaced transactions, and the relationship didn't end with a sale.

Instead, buyers and sellers interacted in a constant flow. In many modern models, the sale is midway through the process (particularly true with subscription and software-as-a-service models). Example:

You're creating a winding journey with many touch points, not a funnel (and lots of opportunities for customers to get lost).

From winding journey to flywheel

Beyond this revised view of an interactive customer journey, a company can create what Jim Collins famously called a flywheel. Imagine rolling a heavy disc on its axis. The first few times you roll it, you put in a lot of effort for a small response. The same effort yields faster turns as it gains speed. Over time, the flywheel gains momentum and turns without your help.

Modern digital organizations have created flywheel business models, in which any additional force multiplies throughout the business. The flywheel becomes a force multiplier, according to Collins.

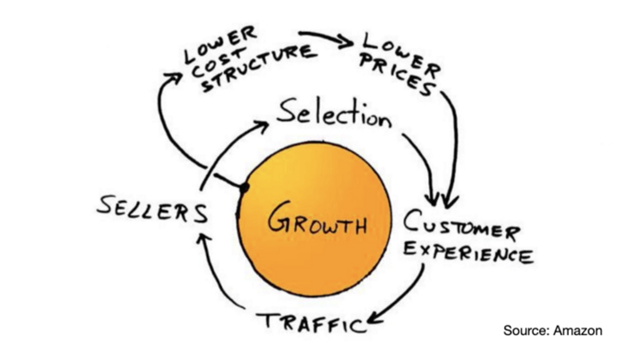

Amazon is a famous flywheel example. Collins explained the concept to Amazon CEO Jeff Bezos at a corporate retreat in 2001. In The Everything Store, Brad Stone describes in his book The Everything Store how he immediately understood Amazon's levers.

The result (drawn on a napkin):

Low prices and a large selection of products attracted customers, while a focus on customer service kept them coming back, increasing traffic. Third-party sellers then increased selection. Low-cost structure supports low-price commitment. It's brilliant! Every wheel turn creates acceleration.

Where from here?

Flywheel over sales funnel! Consider these business terms.

Eve Arnold

2 years ago

Your Ideal Position As a Part-Time Creator

Inspired by someone I never met

Inspiration is good and bad.

Paul Jarvis inspires me. He's a web person and writer who created his own category by being himself.

Paul said no thank you when everyone else was developing, building, and assuming greater responsibilities. This isn't success. He rewrote the rules. Working for himself, expanding at his own speed, and doing what he loves were his definitions of success.

Play with a problem that you have

The biggest problem can be not recognizing a problem.

Acceptance without question is deception. When you don't push limits, you forget how. You start thinking everything must be as it is.

For example: working. Paul worked a 9-5 agency work with little autonomy. He questioned whether the 9-5 was a way to live, not the way.

Another option existed. So he chipped away at how to live in this new environment.

Don't simply jump

Internet writers tell people considering quitting 9-5 to just quit. To throw in the towel. To do what you like.

The advice is harmful, despite the good intentions. People think quitting is hard. Like courage is the issue. Like handing your boss a resignation letter.

Nope. The tough part comes after. It’s easy to jump. Landing is difficult.

The landing

Paul didn't quit. Intelligent individuals don't. Smart folks focus on landing. They imagine life after 9-5.

Paul had been a web developer for a long time, had solid clients, and was respected. Hence if he pushed the limits and discovered another route, he had the potential to execute.

Working on the side

Society loves polarization. It’s left or right. Either way. Or chaos. It's 9-5 or entrepreneurship.

But like Paul, you can stretch polarization's limits. In-between exists.

You can work a 9-5 and side jobs (as I do). A mix of your favorites. The 9-5's stability and creativity. Fire and routine.

Remember you can't have everything but anything. You can create and work part-time.

My hybrid lifestyle

Not selling books doesn't destroy my world. My globe keeps spinning if my new business fails or if people don't like my Tweets. Unhappy algorithm? Cool. I'm not bothered (okay maybe a little).

The mix gives me the best of both worlds. To create, hone my skill, and grasp big-business basics. I like routine, but I also appreciate spending 4 hours on Saturdays writing.

Some days I adore leaving work at 5 pm and disconnecting. Other days, I adore having a place to write if inspiration strikes during a run or a discussion.

I’m a part-time creator

I’m a part-time creator. No, I'm not trying to quit. I don't work 5 pm - 2 am on the side. No, I'm not at $10,000 MRR.

I work part-time but enjoy my 9-5. My 9-5 has goodies. My side job as well.

It combines both to meet my lifestyle. I'm satisfied.

Join the Part-time Creators Club for free here. I’ll send you tips to enhance your creative game.