More on Web3 & Crypto

Onchain Wizard

3 years ago

Three Arrows Capital & Celsius Updates

I read the 1,000+ pages of 3AC liquidation documents so you don't have to. I'm also sharing the revised Celsius recovery plans.

3AC's liquidation documents:

Someone recently leaked 3AC's liquidation records from the BVI courts. I'll walk through the timeline and other highlights from the leak.

Three Arrows Capital began trading traditional currencies in emerging markets in 2012. They switched to equities and crypto, then purely crypto in 2018.

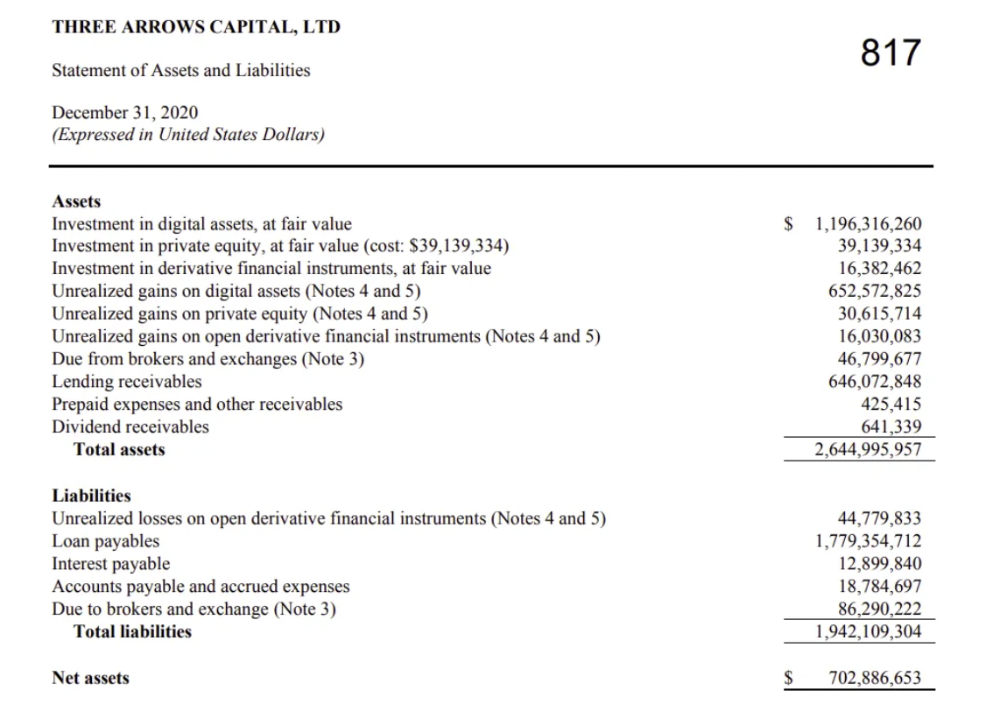

By 2020, the firm had $703mm in net assets and $1.8bn in loans (these guys really like debt).

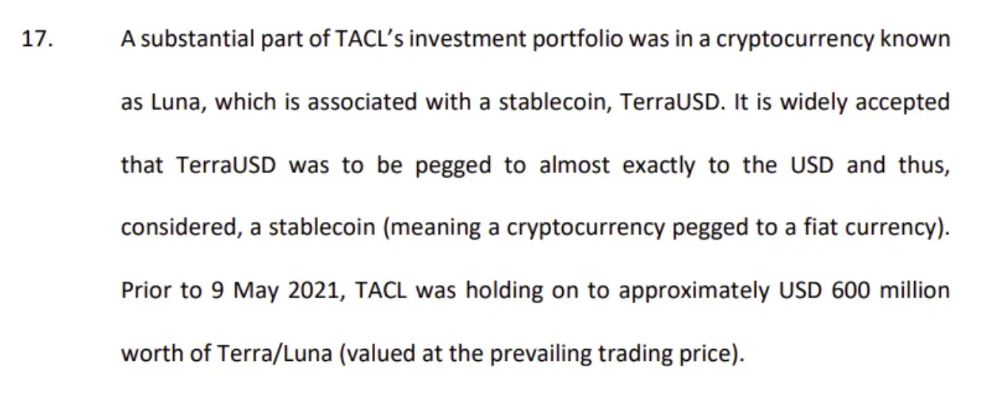

The firm's net assets under control reached $3bn in April 2022, according to the filings. 3AC had $600mm of LUNA/UST exposure before May 9th 2022, which pushed them over the edge.

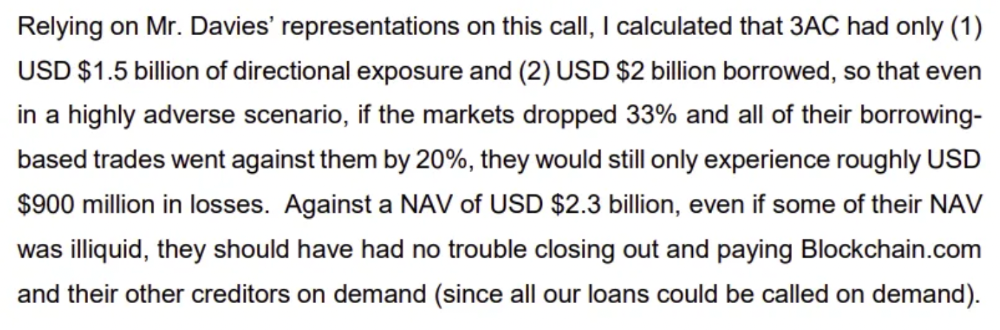

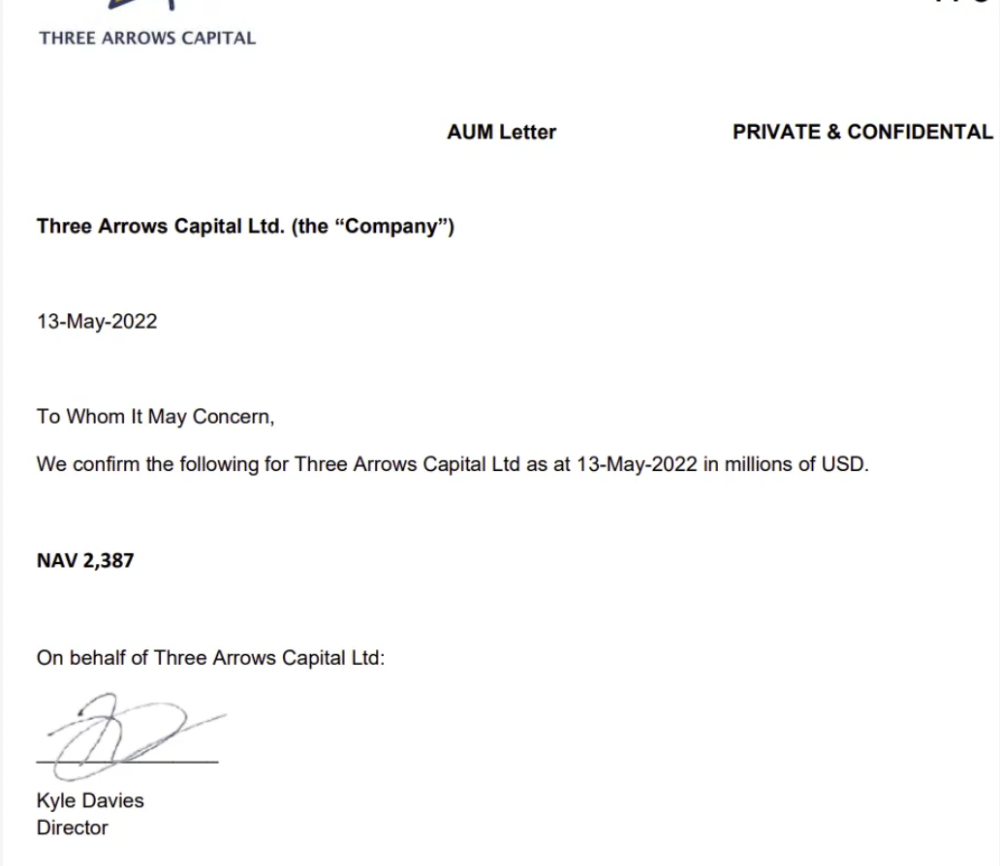

LUNA and UST then went to zero quickly (I wrote about the mechanics of the blowup here). Kyle Davies, 3AC co-founder, told Blockchain.com on May 13 that the firm had $2.4bn in assets and $2.3bn NAV vs. $2bn in borrowings. As BTC and ETH plunged 33% and 50%, the company became insolvent by mid-2022.

3AC sent $32mm to Tai Ping Shen, a Cayman Islands business owned by Su Zhu and Davies' partner, Kelly Kaili Chen (who knows what is going on here).

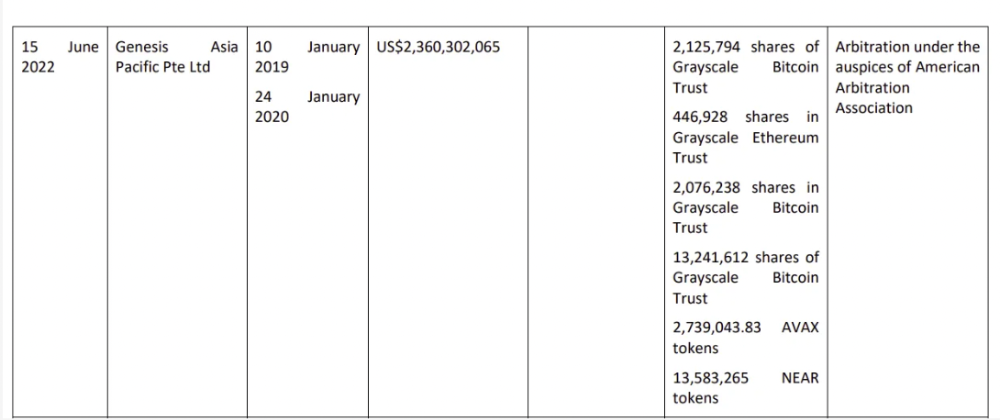

3AC had borrowed over $3.5bn in notional principal, with Genesis ($2.4bn) and Voyager ($650mm) having the most exposure.

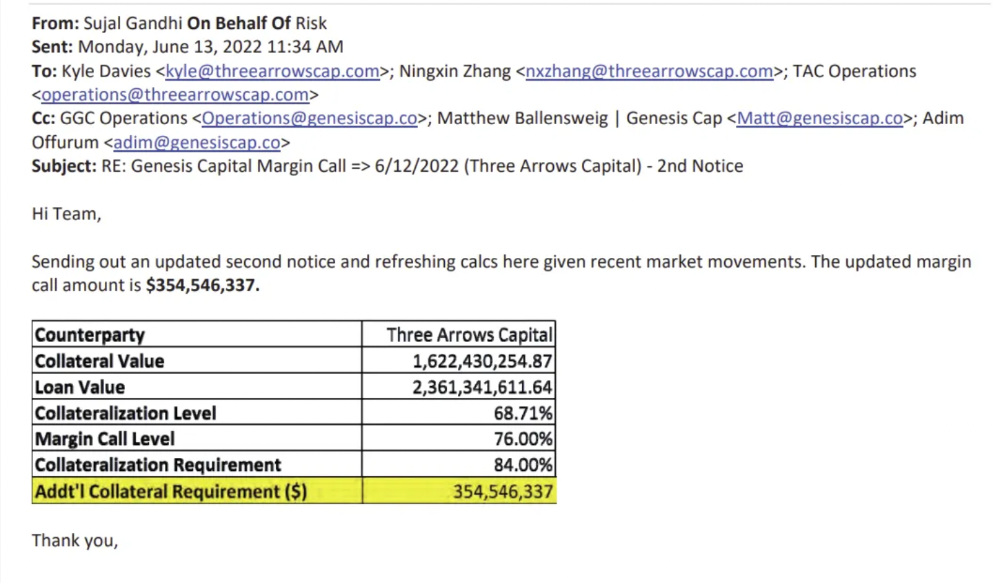

Genesis demanded $355mm in further collateral in June.

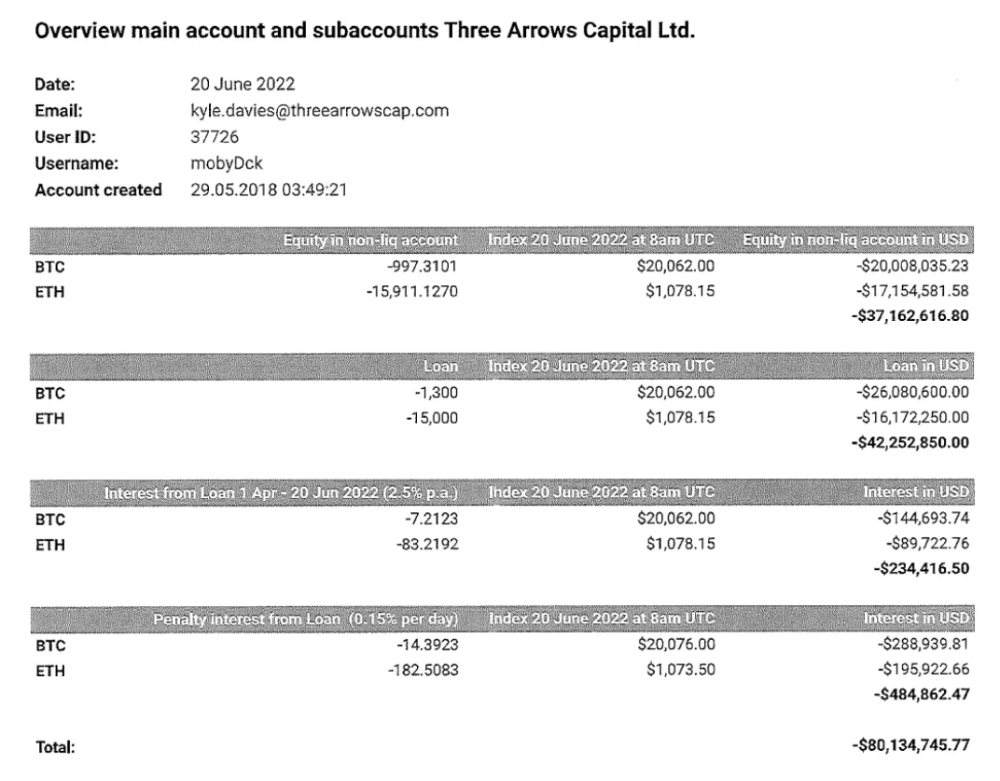

Deribit (another 3AC investment) called for $80 million in mid-June.

Even in mid-June, the firm was trying to borrow more money to stay afloat. They approached Genesis for another $125mm loan (to pay another lender) and HODLnauts for BTC & ETH loans.

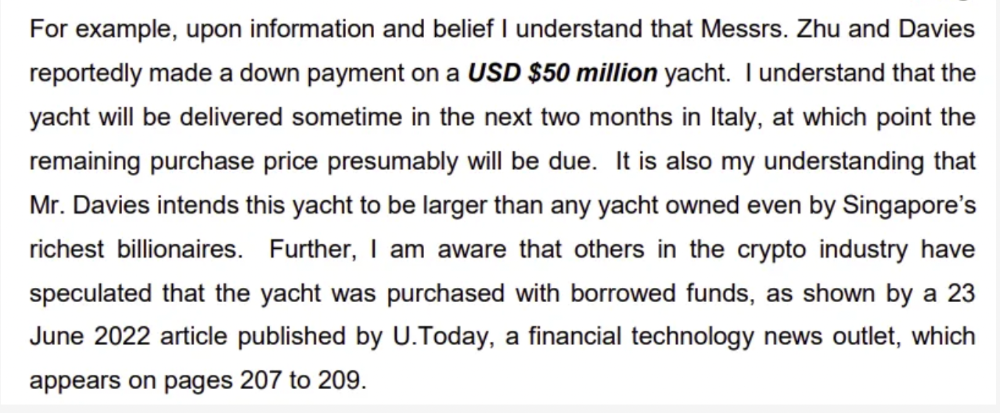

Pretty crazy. 3AC founders used borrowed money to buy a $50 million boat, according to the leak.

Su Zhu (claiming $5mm) and Chen Kaili Kelly (asserting a $65mm unsecured loan to 3AC) are both listed as creditors.

Celsius:

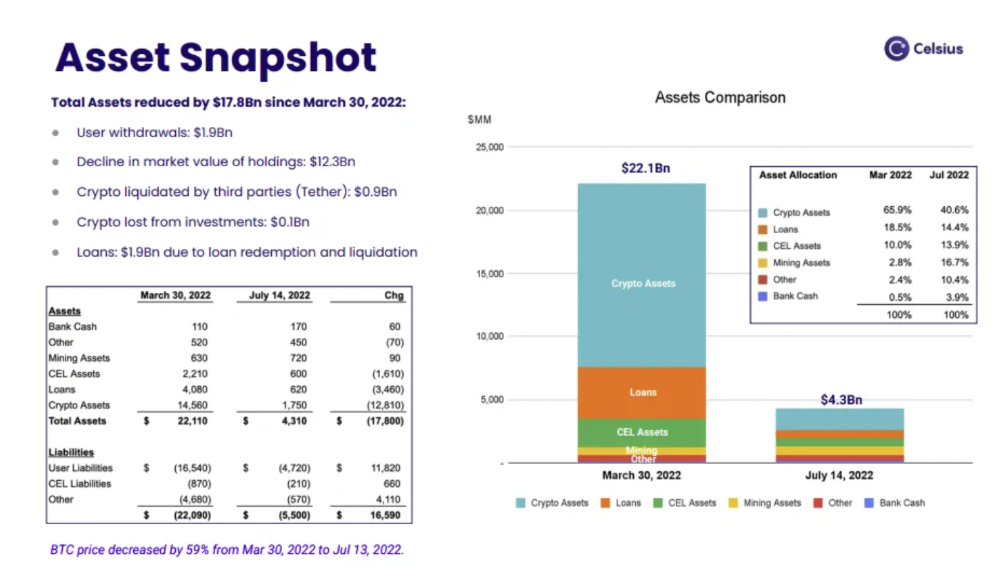

This bankruptcy presentation shows the Celsius breakdown from March to July 14, 2022. Total assets fell from $22bn to $4bn, with crypto assets plummeting from $14.6bn to $1.8bn (ouch). User liabilities dropped from $16.5bn to $4.72bn.
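To put those drops on one scale, here is a rough back-of-the-envelope sketch using only the figures quoted above. The helper name `pct_decline` is made up for illustration, and reading the $22bn to $4bn pair as total assets is an assumption on my part.

```python
def pct_decline(start: float, end: float) -> float:
    """Percentage drop from start to end."""
    return (start - end) / start * 100


# Figures in $bn as quoted in the text (March vs. July 14, 2022).
# The "total assets" label is an assumed reading of the $22bn -> $4bn pair.
figures = {
    "total assets (assumed label)": (22.0, 4.0),
    "crypto assets": (14.6, 1.8),
    "user liabilities": (16.5, 4.72),
}

declines = {name: round(pct_decline(start, end), 1)
            for name, (start, end) in figures.items()}

for name, drop in declines.items():
    print(f"{name}: down {drop}%")
```

On these numbers, crypto assets shrank faster (roughly 88%) than user liabilities (roughly 71%), which is the gap the recovery plan has to deal with.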

In my recent post, I examined whether "forced selling" is over, with Celsius' crypto assets being a major overhang. In this presentation, it looks like Chapter 11 will give clients the opportunity to accept cash at a discount or remain long crypto. Given that a fresh source of money is unlikely to enter the Celsius situation, cash at a discount or crypto given to customers will likely remain a near-term market risk: cash at a discount will likely come from selling crypto assets, while customers who receive crypto could sell at any time. I'll share any Celsius updates I find.

Conclusion

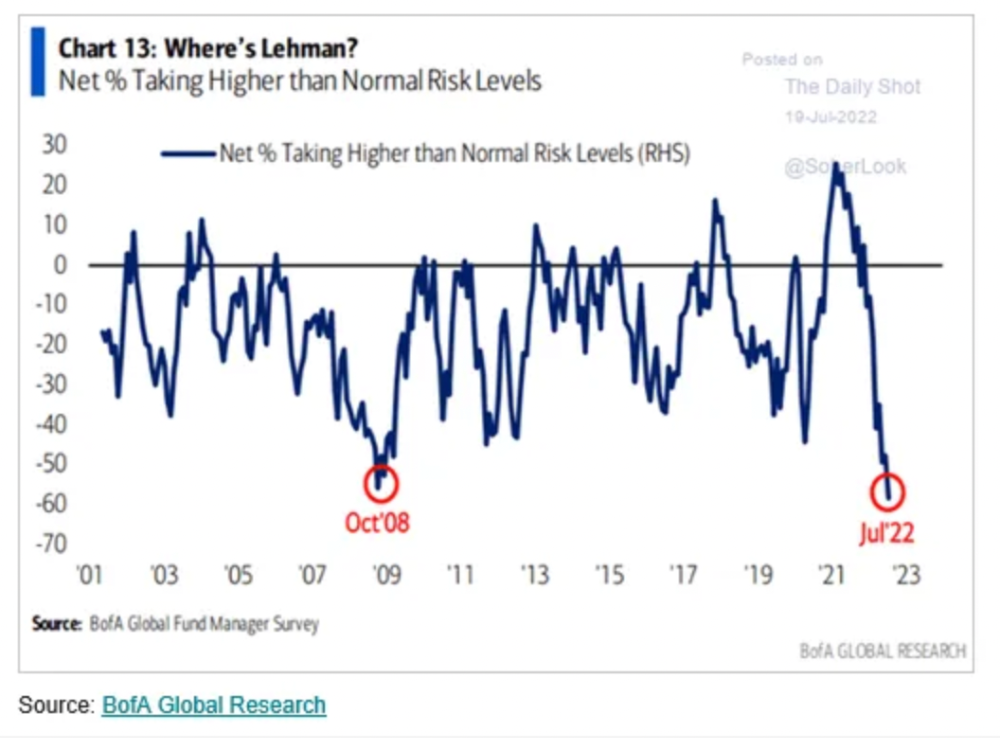

Only Celsius and the Mt Gox BTC unlock remain as forced selling catalysts. While everything went through a "relief" pump, with ETH up 75% from the bottom and numerous alts multiples higher, there are still macro dangers to equities + risk assets. There's a lot of wealth waiting to be deployed in crypto ($153bn in stables), but fund managers are risk averse (risk appetite is below 2008 levels).

We're hopefully over crypto's "bottom," with peak anxiety and forced selling behind us, but we may chop around.


Stephen Moore

3 years ago

Web 2 + Web 3 = Web 5.

Monkey jpegs and shitcoins have tarnished Web3's reputation. Let’s move on.

Web3 was called "the internet's future."

Well, 'crypto bros' shouted about it loudly.

As quickly as it arrived to be the next internet, it appears to be dead. It's had scandals, turbulence, and crashes galore:

Web 3.0's cryptocurrencies have crashed. Bitcoin's all-time high was $66,935. This month, Ethereum fell from $2130 to $1117. Six months ago, the cryptocurrency market peaked at $3 trillion. The worst is likely still ahead.

Gas fees make even the simplest Web3 blockchain transactions unsustainable.

Terra, Luna, and other dollar pegs collapsed, hurting crypto markets. Celsius, a crypto lender backed by VCs and Canada's second-largest pension fund, and Binance, a crypto marketplace, have withheld money and coins. They're near collapse.

NFT sales are falling rapidly and losing public interest.

Web3 has few real-world uses, like most crypto/blockchain technologies. Web3's image has been tarnished by monkey profile pictures and shitcoins while failing to become decentralized (the whole concept is controlled by VCs).

The damage seems irreparable, leaving Web3 in the gutter.

Step forward our new saviour — Web5

Fear not though, as a hero awaits to drag us out of the Web3 hellscape. Jack Dorsey revealed his plan to save the internet quickly.

Dorsey has long criticized Web3, believing that VC capital and Silicon Valley insiders have created a centralized platform. In a tweet that upset believers and VCs (he was promptly blocked by Marc Andreessen), Dorsey argued, "You don't own 'Web3.' VCs and LPs do. Their incentives prevent it. It's a centralized organization with a new name."

Dorsey announced Web5 on June 10 in a very Elon-like manner. TBD, a unit of Block (formerly Square), will work on the project.

Web5's pitch is that users will control their own data and identity. Bitcoin-based. Sound familiar? The presentation pack's official definition emphasizes decentralization. Web5 is a decentralized web platform that enables developers to write decentralized web apps using decentralized identifiers, verifiable credentials, and decentralized web nodes, returning ownership and control over identity and data to individuals.

Web5 would be permission-less, open, and token-less. What that means for Earth is anyone's guess. Identity. Ownership. Blockchains. Bitcoin. Different.

Web4 appears to have been skipped, forever destined to wish it could have shown the world what it could have been. (It was probably crap.) As this iteration combines Web2 and Web3, simple math and common sense add up to 5. Or something.

Dorsey and his team have had this idea simmering for a while. Daniel Buchner, a member of Block's Decentralized Identity team, said, "We're finishing up Web5's technical components."

Web5 could be the project that decentralizes the internet. It must be useful to users and convince everyone to drop the countless Web3 projects, products, services, coins, blockchains, and websites being developed as I write this.

Web5 may be too late for Dorsey and the incoming flood of creators.

Web6 is planned!

The next months and years will be hectic and less stable than the transition from Web 1.0 to Web 2.0.

Web1 was around 1991-2004.

Web2 ran from 2004 to 2021. (Though the Web3 term was first used in 2014, it only really gained traction years later.)

Web3 lasted a year.

Web4 is dead.

Silicon Valley billionaires are turning it into a startup-style race, each disrupting the next iteration until they crack it. Or destroy it completely.

Web5 won't last either.

Scott Hickmann

4 years ago

Welcome

Welcome to Integrity's Web3 community!

You might also like

Laura Sanders

3 years ago

Xenobots, tiny living machines, can duplicate themselves.

Strange and complex behavior of frog cell blobs

A xenobot “parent,” shaped like a hungry Pac-Man (shown in red false color), created an “offspring” xenobot (green sphere) by gathering loose frog cells in its opening.

Tiny “living machines” made of frog cells can make copies of themselves. This newly discovered renewal mechanism may help create self-renewing biological machines.

According to Kirstin Petersen, an electrical and computer engineer at Cornell University who studies groups of robots, “this is an extremely exciting breakthrough.” She says self-replicating robots are a big step toward human-free systems.

Researchers described the behavior of xenobots earlier this year (SN: 3/31/21). Small clumps of skin stem cells from frog embryos knitted themselves into small spheres and started moving. Cilia, or cellular extensions, powered the xenobots around their lab dishes.

The findings are published in the Proceedings of the National Academy of Sciences on Dec. 7. The xenobots can gather loose frog cells into spheres, which then form xenobots.

The researchers call this type of movement-induced reproduction kinematic self-replication. The study's coauthor, Douglas Blackiston of Tufts University in Medford, Massachusetts, and Harvard University, says other kinds of reproduction are more typical. Sexual reproduction, for example, requires parental sperm and egg cells. Other kinds involve cells splitting or budding off from a parent.

“This is unique,” Blackiston says. These xenobots “find loose parts in the environment and cobble them together.” This second generation of xenobots can move like their parents, Blackiston says.

The researchers discovered that spheroid xenobots could only produce one more generation before dying out. When an artificial intelligence program predicted a better shape for the original xenobots, replication could be extended to four generations.

A C shape, like an openmouthed Pac-Man, was predicted to be a more efficient progenitor. When improved xenobots were let loose in a dish, they began scooping up loose cells into their gaping “mouths,” forming more sphere-shaped bots (see image below). As many as 50 cells clumped together in the opening of a parent to form a mobile offspring. A xenobot is made up of 4,000–6,000 frog cells.

Petersen likes the xenobots' small size. “The fact that they were able to do this at such a small scale just makes it even better,” she says. Miniature xenobots could sculpt tissues for implantation or deliver therapeutics inside the body.

Beyond the xenobots' potential jobs, the research advances an important science, says study coauthor and Tufts developmental biologist Michael Levin: the science of anticipating and controlling the outcomes of complex systems.

“No one could have predicted this,” Levin says. “They regularly surprise us.” Researchers can use xenobots to test the unexpected. “This is about advancing the science of being less surprised,” Levin says.

Nitin Sharma

3 years ago

Quietly create a side business that will revolutionize everything in a year.

Quitting your job for a side gig isn't smart.

A few years ago, I would have laughed at the idea of starting a side business.

I never thought a side gig could earn more than my 9-to-5. My side gig pays more than my main job now.

You may then tell me to leave my job. But I don't want to gamble, and my side gig is important. Because of my job, I work in programming and web development, which helps me write better.

Yes, I share work-related knowledge. Web development, web3, programming, money, investment, and side hustles are key.

Let me now show you how to make one.

Create a side business based on your profession or your interests.

I'll be direct.

Most people don't know where to start or which side business to pursue.

You can make money by taking online surveys, starting a YouTube channel, or playing web3 games, according to several blogs.

You won't make enough money and will waste time.

Nitin directs our efforts. My friend, you've worked and have talent. Profit from your talent.

Example:

College taught me web development. I soon created websites, freelanced, and made money. The first year was the hardest for me, financially and personally.

As I worked, I became more skilled. Soon after, I got more work, wrote about web development on Medium, and started selling products.

I've built multiple income streams from web development. It wasn't easy. Web development skills got me a 9-to-5 job.

Focus on a specific skill and earn money in many ways. Most people start with something they hate or are bad at; the rest is predictable.

Result? They give up, frustrated.

Quietly focus for a year.

I started my side business in college and never told anyone. My parents didn't know what I did for fun.

The only motivation is time constraints. So I focused.

As I've said, I focused on my strengths (learned skills) and made money. Yes, I was among Medium's top 500 authors in a year and got a bonus.

How did I succeed? Since I know success takes time, I never imagined making enough money in a month. I spent a year concentrating.

I became wealthy. Now that I have multiple income sources, some businesses pay me based on my skill.

I recommend learning skills and working quietly for a year. You can do anything with this.

The hardest part will always be the beginning.

When someone says you can make more money working four hours a week, ignore it. It's bad advice.

If someone recommends a paid course to help you succeed, think twice.

The beginning is always the hardest.

I made many mistakes learning web development. When I started my technical content side gig, it was tough. I made mistakes and changed how I create content, which helped.

And it’s applicable everywhere.

Don't worry if you face problems at first. Time and effort heal all wounds.

Quitting your job to work a side job is not a good idea.

Some honest opinions.

Most online gurus encourage side businesses. It takes time to start and grow a side business.

Suppose you quit and started a side business.

After six months, what happens? Your side business won't provide enough money to survive.

Indeed. Later, you'll become demotivated and tense and look for work.

Instead, work 9-5, and start a side business. You decide. Stop watching Netflix and focus on your side business.

I know you're busy, but do it.

Next? It'll succeed or fail in six months. You can continue your side gig for another six months because you have a job and have tried it.

You'll probably make money, but you may need to change your side gig.

That’s it.

You've created a new revenue stream.

Remember.

Starting a side business, a company, or finding work is difficult. There's no free money in a competitive world. You'll only succeed with skill.

Read it again.

Focusing silently for a year can help you succeed.

I studied web development and wrote about it. The first year was tough. Then I went viral, hit the top 500, and other firms asked me to write for them. So, my life changed.

Yours can too. One year of silence is required.

Enjoy!

Rachel Greenberg

3 years ago

The Unsettling Fact VC-Backed Entrepreneurs Don't Want You to Know

What they'll do is scarier.

My acquaintance recently joined a VC-funded startup. Money, equity, and upside possibilities were nice, but he had a nagging dread.

They just secured a $40M round and are hiring like crazy to prepare for their IPO in two years. All signals pointed to this startup's (a B2B IT business in a stable industry) success, and its equity-holding workers wouldn't pass that up.

Five months after starting the job, my friend was wrestling with whether to leave. Most of us might overlook an awful culture and long hours at the right price. But that price, weighed against doubts about the company's fate and ability to survive, sent my friend into an unpleasant, unplanned resignation, only to jump onto yet another sinking ship.

This affects founders. This affects VC-backed companies (and all businesses). This affects anyone starting, buying, or running a business.

Here's the under-the-table approach that's draining VC capital, leaving staff terrified (or jobless), founders rattled, and investors upset, plus how to recognize, solve, and avoid it.

The unsettling reality behind door #1

You can't raise money off just your looks, right? If "looks" means your founding team's expertise, then maybe. In my friend's case, the founding team's strong qualifications and track records won over investors before talking figures.

They're hardly the only startup to raise money without a profitable customer acquisition strategy. Another firm raised money for an expensive sleep product because it's eco-friendly. They were off to the races with a few keywords and key players.

Both companies, along with numerous others, elected to invest in product development first. Company A employed all the tech, then courted half their market (they’re a tech marketplace that connects two parties). Company B spent millions on R&D to create a palatable product, then flooded the world with marketing.

My friend is on Company B's financial team, and he's seen where they've gone wrong. It's terrible.

Company A (tech market): Growing? Not quite. To achieve the ambitious expansion they (and their investors) demand, they've poured much of their limited capital into salespeople: cold-callers on salary plus commission. Is it working? Considering attrition and the company's dwindling capital, I don't think so.

Company B (green sleep) has been hiring, spending on digital marketing, and opening new stores like crazy. Growing expenses should result in growing revenues and a favorable return on investment; if you grow too rapidly, you may neglect to check that ROI.

Once Company A cut headcount and Company B issued a “going concern” warning, my friend realized both startups had the same ailment and didn't recognize it.

I shouldn't have to ask a friend to verify a company's cash reserves and profitability to spot a financial problem. It happened anyhow.

The frightening part isn't that investors were willing to invest millions without product-market fit, CAC, or LTV estimates. That's alarming, but not as scary as the fact that startups aren't understanding the problem until VC rounds have dried up.

When they're asking consultants whether their company will be around in six months, it's a red flag. How will they stretch $20M through a 2-year recession with a $3M/month burn rate and no profitability? Alarms go off.
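The runway math behind that alarm is simple enough to sketch. The snippet below is only an illustration built on the figures quoted above ($20M in cash, a $3M monthly burn, a 24-month downturn). The function name `runway_months` and the assumption of a flat burn with zero offsetting revenue are mine, not details from either company.

```python
def runway_months(cash: float, monthly_burn: float) -> float:
    """Months until cash runs out, assuming a flat burn and no revenue."""
    if monthly_burn <= 0:
        raise ValueError("monthly_burn must be positive")
    return cash / monthly_burn


cash = 20_000_000         # $20M in the bank
monthly_burn = 3_000_000  # $3M/month burn rate
target_months = 24        # a 2-year recession

months = runway_months(cash, monthly_burn)
max_burn = cash / target_months                 # burn that would last 24 months
required_cut = (1 - max_burn / monthly_burn) * 100

print(f"Runway at current burn: {months:.1f} months")
print(f"Burn needed to last {target_months} months: ${max_burn:,.0f}/month")
print(f"Required cut in burn: {required_cut:.0f}%")
```

Under those assumptions the cash lasts under seven months, and surviving two years without new money or profit would mean cutting the burn by roughly 72%.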

Who's in danger?

In a word, everyone who raised money without a profitable client acquisition strategy or enough resources to ride out dry spells.

Money mismanagement and poor priorities (like sinking all your capital into your product, team, or tech, at the expense of probing what customer acquisition really takes and looks like) affect every industry.

This isn't about tech, real estate, or recession-proof luxury products. Fast, cheap, easy money flows into flashy-looking teams with buzzwords, trending industries, and attractive credentials.

If these companies can't show progress or get a profitable CAC, they can't raise more money. They die if they can't raise more money (or slash headcount and find shoestring budget solutions until they solve the real problem).

The kiss of death (and how to avoid it)

If you're running a startup and think raising VC is the answer, pause and evaluate. Do you need the money now?

I'm not saying VC is terrible or has no role. But founders have used it as a Band-Aid for larger, pervasive problems. Venture cash isn't a crutch for acquiring customers profitably; it's rocket fuel to get you what and who you need.

Pay-to-play isn't a way to throw money at the wall and hope for a return. Pay-to-play works until you run out of money, and if you haven't mastered client acquisition, your cash will diminish quickly.

How can you avoid this bottomless pit? Tips:

Understand your burn rate

Keep an eye on your growth or profitability.

Analyze each and every marketing channel and initiative.

Make lucrative customer acquisition strategies and satisfied customers your top two priorities. Not brand-new products. Not stellar hires. Avoid the fundraising rollercoaster to save time. If you succeed in these two tasks, investors will approach you with their thirsty offers rather than the other way around, and your cash reserves won't diminish as a result.

Not your grandpa's money

My family friend always justified expensive, impractical expenditures by saying it was only monopoly money. In business, startups, and especially with money from investors expecting a return, that's not true.

More founders could understand that there isn't always another round if they viewed VC money as their own limited pool. When the well runs dry, you must refill it or save the day.

Venture financing isn't your grandpa's money. A discerning investor has entrusted you with dry powder in the hope that you'll use it wisely, strategically, and thoughtfully. Use it well.