LCX is the latest CEX to have suffered a private key exploit.

The attack began around 10:30 PM UTC on January 8th.

Peckshield spotted it first, then an official announcement came shortly after.

We’ve said it before; if established companies holding millions of dollars of users’ funds can’t manage their own hot wallet security, what purpose do they serve?

The Unique Selling Proposition (USP) of centralised finance grows smaller by the day.

The official incident report states that 7.94M USD were stolen in total, and that deposits and withdrawals to the platform have been paused.

LCX hot wallet: 0x4631018f63d5e31680fb53c11c9e1b11f1503e6f

Hacker’s wallet: 0x165402279f2c081c54b00f0e08812f3fd4560a05

Stolen funds:

- 162.68 ETH (502,671 USD)

- 3,437,783.23 USDC (3,437,783 USD)

- 761,236.94 EURe (864,840 USD)

- 101,249.71 SAND Token (485,995 USD)

- 1,847.65 LINK (48,557 USD)

- 17,251,192.30 LCX Token (2,466,558 USD)

- 669.00 QNT (115,609 USD)

- 4,819.74 ENJ (10,890 USD)

- 4.76 MKR (9,885 USD)
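As a quick sanity check, the itemized USD values above can be summed and compared with the 7.94M USD figure from the incident report. This is only an illustrative sketch: the dictionary name and layout are assumptions, and the values are copied from the list as published.

```python
# Reported USD value of each stolen asset, copied from the list above.
# The variable name `stolen_usd` is hypothetical, chosen for this sketch.
stolen_usd = {
    "ETH": 502_671,
    "USDC": 3_437_783,
    "EURe": 864_840,
    "SAND": 485_995,
    "LINK": 48_557,
    "LCX": 2_466_558,
    "QNT": 115_609,
    "ENJ": 10_890,
    "MKR": 9_885,
}

# Sum the per-asset values and express the result in millions of USD.
total = sum(stolen_usd.values())
print(f"Total: {total:,} USD (~{total / 1e6:.2f}M)")
```

The itemized values add up to roughly the total stated in the report.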

**~$1M worth of $LCX remains in the address, along with 611k EURe which has been frozen by Monerium.**

**The rest, a total of 1891 ETH (~$6M), was sent to Tornado Cash.**

Why can’t they keep private keys private?

Is it really that difficult for a traditional corporate structure to maintain good practice?

CeFi hacks leave us with little to say - we can only go on what the team chooses to tell us.

Next time, they can write this article themselves.

See below for a template.

More on Web3 & Crypto

Ben

3 years ago

The Real Value of Carbon Credit (Climate Coin Investment)

Disclaimer : This is not financial advice for any investment.

TL;DR

You might not have realized it, but as we move toward net zero carbon emissions, the globe is already at war.

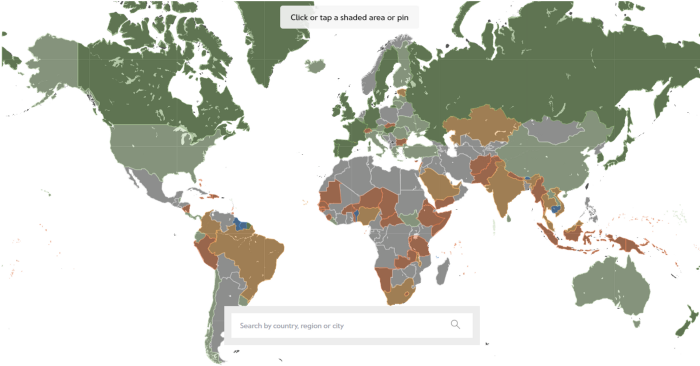

Following COP26, 64% of nations have already declared net zero targets, and carbon reduction has become so important for businesses that it affects their ability to survive. Furthermore, the time when carbon emission standards will be defined and controlled on an individual basis is drawing closer.

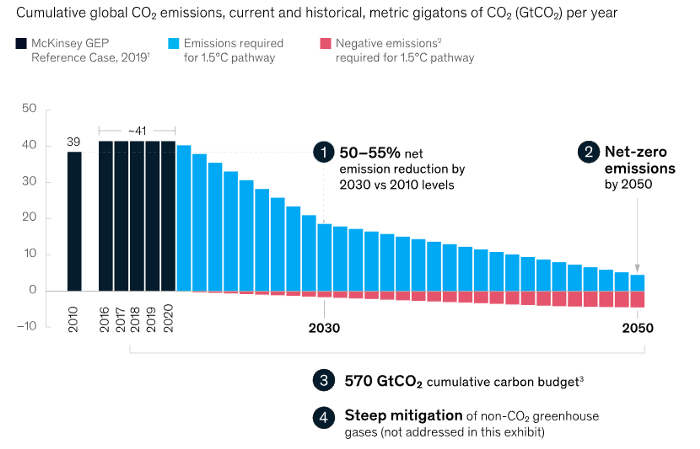

Since 2017, the market for carbon credits has experienced extraordinary expansion as a result of widespread talks about carbon credits. The carbon credit market is predicted to expand much more once net zero is implemented and carbon emission rules inevitably tighten.

Hello! Ben here from Nonce Classic. Nonce Classic has recently confirmed the tremendous growth potential of the carbon credit market amid the major trend toward the global goal of net zero (carbon emissions caused by humans minus carbon reduction by humans = 0). Moreover, we believe that the questions and issues the carbon credit market has suffered from over the last 30 to 40 years can be answered well through crypto technology, which is why we have added a carbon credit crypto project to the Nonce Classic portfolio.

Many teams have tried to solve environmental problems through crypto, but very few have measurable experience working in the carbon credit scene. We therefore looked for projects that were not created just for the sake of issuing tokens, but that pragmatically use crypto technology to combat climate change by solving problems of the current carbon credit market. In that process, we came across Climate Coin, a genuine carbon credit crypto project. As an accelerator, Nonce Classic has begun contributing to its growth and has invested in its tokens.

Starting with this article, we plan to publish a series explaining why the carbon credit market is bullish, why we invested in Climate Coin, and what kind of project Climate Coin is. In this first article, let us understand the carbon credit market and look into its growth potential. Let’s begin :)

The Unavoidable Entry of the Net Zero Era

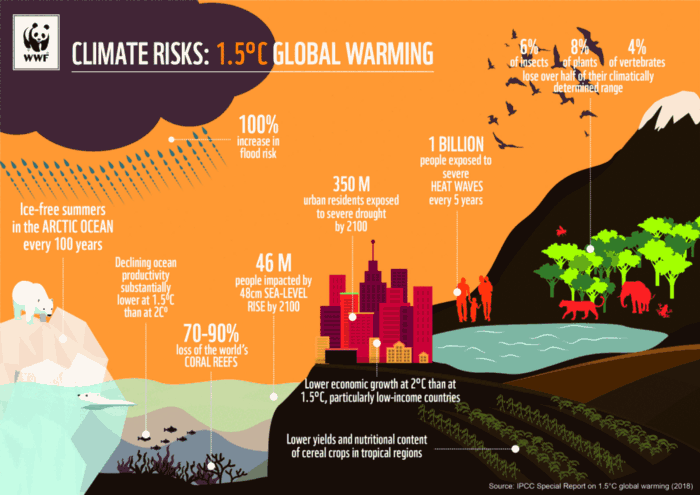

Net zero means that human carbon emissions are balanced by carbon reduction efforts. A non-environmentalist may find it hard to accept that net zero is attainable by 2050, but global cooperation to save the earth is happening faster than we imagine.

Building on the Paris Agreement, nations at COP26, which opened in Glasgow, UK on Oct. 31, 2021, pledged to reduce worldwide yearly greenhouse gas emissions by more than 50% by 2030 and attain net zero by 2050. Governments throughout the world have pledged net zero at the national level and are holding each other accountable by submitting Nationally Determined Contributions (NDC) every five years to assess implementation. 127 of 198 nations have declared net zero.

Each country's 1.5-degree reduction plans have led to carbon reduction obligations for companies. In places with the strictest environmental regulations, like the EU, companies often face bankruptcy because the cost of buying carbon credits to meet their carbon allowances exceeds their operating profits. In this day and age, minimizing carbon emissions and securing carbon credits are crucial.

Recent SEC actions on climate change may increase companies' concerns about reducing emissions. On March 21, 2022, the SEC proposed a rule requiring all companies listed on U.S. stock markets to disclose their annual greenhouse gas emissions and climate change impact. The SEC has been preparing the proposed regulation through in-depth analysis and stakeholder input since last year. Three out of four SEC members agreed that it should pass without major changes. If the regulation passes, it will affect not only US companies, but also countless companies around the world, directly or indirectly.

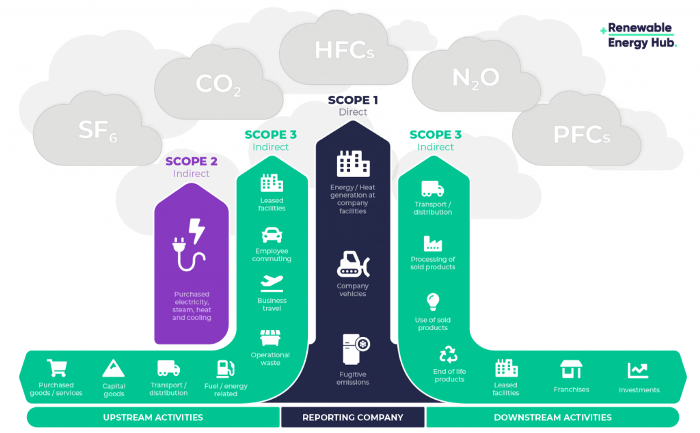

Even companies not listed on the U.S. stock market will be affected and, in most cases, required to disclose emissions. Companies listed on the U.S. stock market with significant greenhouse gas emissions or specific targets are subject to stricter emission standards (Scope 3) and disclosure obligations, which will magnify investigations into all related companies. Greenhouse gas emissions can be calculated three ways. Scope 1 measures carbon emissions from a company's facilities and transportation. Scope 2 measures carbon emissions from energy purchases. Scope 3 covers all indirect emissions from a company's value chains.

The SEC's proposed carbon emission disclosure mandate and regulations are one example of how carbon credit policies can cross borders and affect all parties. As such incidents will continue throughout the implementation of net zero, even companies that are not immediately obligated to disclose their carbon emissions must be prepared to respond to changes in carbon emission laws and policies.

Carbon reduction obligations will soon become individual. Individual consumption has increased dramatically with improved quality of life and convenience, despite national and corporate efforts to reduce carbon emissions. Since consumption is directly related to carbon emissions, increasing consumption increases carbon emissions. Countries around the world have agreed that to achieve net zero, carbon emissions must be reduced on an individual level. Solutions to individual carbon reduction are being actively discussed and studied under the term Personal Carbon Trading (PCT).

PCT is a system that allows individuals to trade carbon emission quotas in the form of carbon credits. Individuals who emit more carbon than their allotment can buy carbon credits from those who emit less. European cities with well-established carbon credit markets are preparing for net zero by conducting early carbon reduction prototype projects. The era of checking product labels for carbon footprints, choosing low-emissions transportation, and worrying about hot shower emissions is closer than we think.

The Market for Carbon Credits Is Expanding at a Fearsome Pace

Compliance and voluntary carbon markets make up the carbon credit market.

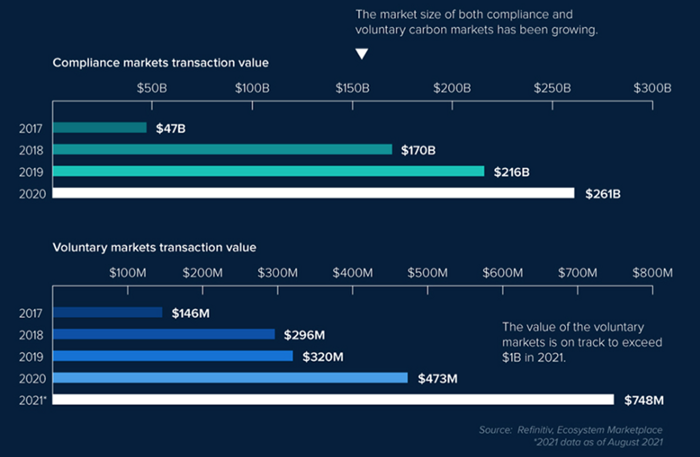

A Compliance Market enforces carbon emission allowances for its participants. Companies in industries that have historically emitted a lot of carbon are included in the mandatory carbon market, and each is allocated carbon credits by its government every year. If a company's emissions are below its assigned cap and it has spare carbon credits, it can sell them to other companies that emit more and need them (Cap and Trade). The annual number of free emission permits provided to companies is designed to decline, so companies' demand for carbon credits will increase. The compliance market's yearly trading volume exceeded $261B in 2020, five times its 2017 level.

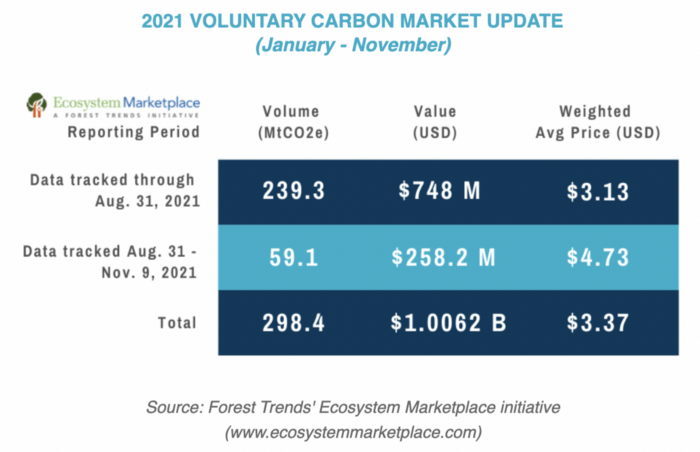

In the Voluntary Market, carbon reduction is voluntary, and carbon credits are bought for personal reasons or to build market participants' eco-friendly reputations. Even a corporation outside the compliance market is often effectively obliged to offset its carbon emissions by acquiring voluntary carbon credits. When a company seeks government or corporate investment, it may be turned down because it is not net zero. If a significant shareholder declares net zero, the companies below it must follow suit. As the world moves toward ESG management, becoming an eco-friendly company is no longer a strategic choice to gain a competitive edge, but a necessary precaution against falling behind. Driven by this trend, the annual market volume of voluntary emission credits approached $1B by November 2021. The voluntary credit market is anticipated to reach $5B to $50B by 2030. (TSVCM 2021 Report)

In conclusion

This article analyzed how net zero, a target promised by countries around the world to combat climate change, has brought changes for governments, companies, and individuals. We discussed how these shifts will become more obvious as we approach net zero, and how the carbon credit market will grow rapidly in response. In the next piece, we will analyze the hurdles impeding the carbon credit market's growth, how the project we invested in tries to tackle these issues, and why we chose Climate Coin. Wait! Jim Skea, co-chair of the IPCC working group, said,

“It’s now or never, if we want to limit global warming to 1.5°C” — Jim Skea

Join nonceClassic’s community:

Telegram: https://t.me/non_stock

Youtube: https://www.youtube.com/channel/UCqeaLwkZbEfsX35xhnLU2VA

Twitter: @nonceclassic

Mail us : general@nonceclassic.org

Isobel Asher Hamilton

3 years ago

$181 million in bitcoin buried in a dump. $11 million to get them back

James Howells lost 8,000 bitcoins. He has an $11 million plan to get them back.

His life changed when he threw out an iPhone-sized hard drive.

Howells, from the city of Newport in southern Wales, had two identical laptop hard drives squirreled away in a drawer in 2013. One was blank; the other had 8,000 bitcoins, currently worth around $181 million.

He meant to toss out the blank one, but the drive containing the bitcoins went to the dump instead.

He's determined to reclaim his 2009 stash.

Howells, 36, wants to arrange a high-tech treasure hunt for bitcoins. He can't enter the landfill.

Newport's city council has rebuffed Howells' requests to dig for his hard drive for almost a decade, stating it would be expensive and environmentally destructive.

I got an early look at his $11 million idea to search 110,000 tons of trash. He hopes that submitting it to the council will convince it to let him recover the hard drive.

110,000 tons of trash, 1 hard drive

Finding a hard disk among heaps of trash may seem Herculean.

Former IT worker Howells claims it's possible with human sorters, robot dogs, and an AI-powered computer taught to find hard drives on a conveyor belt.

His idea has two versions, depending on how much of the landfill he can search.

His most elaborate plan would take three years and cost $11 million to sort 100,000 metric tons of waste. A scaled-down version would cost $6 million and take 18 months.

He has assembled a team of eight specialists in AI-powered sorting, landfill excavation, waste management, and data extraction, including one who recovered data from the black box of the space shuttle Columbia.

The specialists and their companies would be paid a bonus if they successfully recovered the bitcoin stash.

Howells: "We're trying to commercialize this project."

Howells said rubbish would be dug up by machines and sorted near the landfill.

Human pickers and a Max-AI machine would sort it. The machine resembles a scanner on a conveyor belt.

Remi Le Grand of Max-AI told us the company would train the AI to recognize hard drives like Howells'. A robot arm would then pick out candidates.

Howells has added security costs to his plan because he fears people would try to steal the hard drive.

He's budgeted for 24-hour CCTV cameras and two robotic "Spot" dogs from Boston Dynamics that would patrol at night and look for his hard drive by day.

Howells said his crew met in May at the Celtic Manor Resort outside Newport for a pitch rehearsal.

Richard Hammond's narrative swings from banal to epic.

Richard Hammond filmed the meeting and created a YouTube documentary on Howells.

Hammond said of Howells' squad, "They're committed and believe in him and the idea."

Hammond: "It goes from banal to gigantic." "If I were in his position, I wouldn't have the strength to answer the door."

Howells said trash would be cleaned and repurposed after excavation. The rest would be reburied.

"We won't pollute," he declared. "We aim to make everything better."

After the project is finished, he hopes to develop a solar or wind farm on the dump site. The council is unlikely to accept his vision soon.

A council representative told us, "Mr. Howells can't convince us of anything." "His suggestions constitute a significant ecological danger, which we can't tolerate and are forbidden by our permit."

Will the recovered hard drive work?

The "platter" is a glass or metal disc that holds the hard drive's data. Howells estimates 80% to 90% of the data will be recoverable if the platter isn't damaged.

Phil Bridge, a data-recovery expert who consulted Howells, confirmed these numbers.

If the platter is broken, Bridge adds, data recovery is unlikely.

Bridge says he was intrigued by the proposal. "It's an intriguing case," he added. "Helping him get it back and proving everyone wrong would be a great success story."

Who'd pay?

Swiss and German venture investors Hanspeter Jaberg and Karl Wendeborn told us they would fund the project if Howells received council permission.

Jaberg: "It's a needle in a haystack and a high-risk investment."

Howells said he had no contract with potential backers but had discussed the proposal in Zoom meetings. "Until Newport City Council gives me something in writing, I can't commit," he added.

Suppose he finds the bitcoins.

Howells said he would keep 30% of the proceeds, worth about $54 million, if he could retrieve the data.

A third would go to the recovery team, 30% to investors, and the remainder to local purposes, including gifting £50 ($61) in bitcoin to each of Newport's 150,000 citizens.
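The proposed split can be checked with a little arithmetic. The sketch below is illustrative only: it assumes the $181 million valuation quoted earlier, treats "a third" as exactly one third, and uses hypothetical variable names.

```python
# Assumed stash value in USD, taken from the article's $181 million figure.
stash_usd = 181_000_000

# Shares described in the article.
howells = 0.30 * stash_usd    # Howells keeps 30%
team = stash_usd / 3          # "a third" for the recovery team (assumed exact)
investors = 0.30 * stash_usd  # 30% to investors

# Whatever is left is earmarked for local purposes.
remainder = stash_usd - howells - team - investors

# Cost of gifting $61 (about 50 GBP) to each of Newport's 150,000 citizens.
gift_total = 150_000 * 61

print(f"Howells:    ${howells:,.0f}")
print(f"Remainder:  ${remainder:,.0f}")
print(f"Gift total: ${gift_total:,.0f}")
print("Gift fits within remainder:", gift_total <= remainder)
```

Under these assumptions the citywide gift would fit within the remaining share, with some left over for other local purposes.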

Howells said he opted to spend extra money on "professional firms" to help convince the council.

What if the council doesn't approve?

If Howells can't win the council's support, he'll sue, claiming its actions constitute an "illegal embargo" on the hard drive. "I've avoided that path because I didn't want to cause complications," he stated. "I wanted to cooperate with Newport's council."

Howells has never met with the council face-to-face. He said he had only a 20-minute Zoom meeting with it in May 2021, but he thinks his new business plan will help.

He met with Jessica Morden on June 24. Morden's office confirmed the meeting.

After telling the council about his proposal, he can only wait. "I've never been happier," he said. "This is our most professional operation, with the best people."

The "crypto proponent" buys bitcoin every month and sells it for cash.

Howells tries not to think about what he'd do with his part of the money if the hard disk is found functional. "Otherwise, you'll go mad," he added.

This post is a summary. Read the full article here.

William Brucee

3 years ago

This person is probably Satoshi Nakamoto.

The biggest mystery in technology today is not how bitcoin works, but who created it.

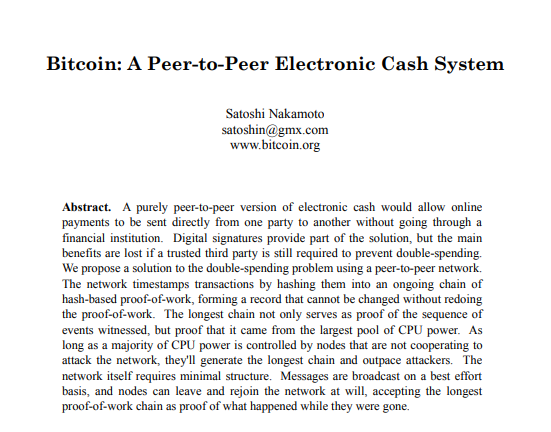

On October 31, 2008, Satoshi Nakamoto posted a whitepaper to a cryptography mailing list. We are still puzzled by the mastermind who changed monetary history.

Journalists and bloggers have tried in vain to uncover bitcoin's creator. Some candidates nominated themselves. We're still looking for the person behind the mystery because none of them have provided proof.

There is one person I'm confident invented bitcoin. Let's assess Satoshi Nakamoto before I reveal my pick. Or rather, what he wants us to know.

Satoshi's P2P Foundation biography says he was born in 1975. He doesn't sound or look Japanese. First, he wrote the whitepaper and subsequent articles in flawless English. His sleeping habits are unusual for a Japanese person.

Stefan Thomas, a Bitcoin Forum member, displayed Satoshi's posting timestamps. Satoshi Nakamoto didn't publish between 2 and 8 p.m., Japanese time. Satoshi's identity may not be real.

Why would he disguise himself?

There is a legitimate explanation for this

Phil Zimmermann created PGP (Pretty Good Privacy) to give dissidents a secure channel of communication. The US government moved against the technology after realizing its potential, and both PGP and Zimmermann came under investigation.

That technology only let two people communicate privately. Bitcoin technology makes it possible to send money for free without a bank or other intermediary, removing it from government control.

How much do we know about the person who invented bitcoin?

Here's what we know about Satoshi Nakamoto, now that I've covered my doubts about his persona.

Satoshi Nakamoto first appeared with a whitepaper on metzdowd.com. On Halloween 2008, he presented a nine-page paper on a new peer-to-peer electronic monetary system.

Using the nickname satoshi, he created the bitcointalk forum. He kept developing bitcoin and created bitcoin.org. Satoshi mined the genesis block on January 3, 2009.

Satoshi Nakamoto worked with programmers in 2010 to change bitcoin's protocol. He engaged with the bitcoin community. Then he gave Gavin Andresen the keys and codes and transferred community domains. By the end of 2010, he had stepped away from the project.

The bitcoin creator posted his goodbye on April 23, 2011. Mike Hearn asked Satoshi if he planned to rejoin the group.

“I’ve moved on to other things. It’s in good hands with Gavin and everyone.”

Nakamoto Satoshi

The man who broke the banking system vanished. Why?

Satoshi's wallets held 1,000,000 BTC. In December 2017, when the price peaked, he had over US$19 billion. Nakamoto had the 44th-highest net worth then. He's never cashed a bitcoin.

This data suggests something happened to bitcoin's creator. I think Hal Finney is Satoshi Nakamoto.

Hal Finney had ALS and died in 2014. I suppose he created the future of money, then he died, leaving us with only rumors about his identity.

Hal Finney, who was he?

Hal Finney graduated from Caltech in 1979. Student peers voted him the smartest. He took a doctoral-level gravitational field theory course as a freshman. Finney's intelligence meets the first requirement for becoming Satoshi Nakamoto.

Students remember Finney holding an Ayn Rand book. If he read it, it may have shaped his libertarian views.

His beliefs led him to a small group of freethinking programmers. In the 1990s, he joined the Cypherpunks. This movement promoted the use of strong cryptography and privacy-enhancing technologies for social and political change. Finney helped these self-proclaimed privacy defenders advance a crypto-anarchist perspective.

Zimmermann knew Finney well.

Hal replied to a Cypherpunk message about Phil Zimmermann and PGP. He contacted Phil and became PGP Corporation's first member, retiring in 2011. Satoshi Nakamoto quit bitcoin in 2011.

Finney improved the new PGP protocol, but he had to do so secretly. He knew about Phil's PGP issues. I understand why he wanted to hide his identity while creating bitcoin.

Why did he pretend to be from Japan?

His envisioned persona was spot-on. He resided near scientist Dorian Prentice Satoshi Nakamoto. Finney could've assumed Nakamoto's identity to hide his. Temple City has 36,000 people, so what are the chances they both lived there? A cryptographic genius with the same name as Bitcoin's creator: coincidence?

Things went differently, I think.

I think Hal Finney sent himself Satoshi's messages. I know it's odd. But if you want to conceal your involvement, this is how you do it. He faked messages and transferred the first bitcoins to himself to test the transaction mechanism, so he never returned their money.

Hal Finney created the first reusable proof-of-work system, a precursor to the bitcoin protocol. In the 1990s, Finney was intrigued by digital money. He invented CRypto cASH in 1993.

Legacy

Hal Finney's contributions should not be forgotten. Even if I'm wrong and he's not Satoshi Nakamoto, we shouldn't forget his bitcoin contribution. He helped us achieve a better future.

You might also like

Benjamin Lin

3 years ago

I sold my side project for $20,000: 6 lessons I learned

How I monetized and sold an abandoned side project for $20,000

The Origin Story

I've always wanted to be an entrepreneur but never succeeded. I often had business ideas, made a landing page, and told my buddies. Never got customers.

In April 2021, I decided to try again with a new strategy. I noticed that I had trouble acquiring an initial set of customers, so I wanted to start by acquiring a product that had a small user base that I could grow.

I found a SaaS marketplace called MicroAcquire.com where you could buy and sell SaaS products. I liked Shareit.video, an online Loom-like screen recorder.

Shareit.video didn't generate revenue, but 50 people visited daily to record screencasts.

Purchasing a Failed Side Project

I eventually bought Shareit.video for $12,000 from its owner.

$12,000 was probably too much for a website without revenue or registered users.

I thought time was most important. I could have recreated the website, but it would take months. $12,000 would give me an organized code base and a working product with a few users to monetize.

I considered buying a screen recording website and trying to grow it versus buying a new car or investing in crypto with the $12K.

Buying the website would make me a real entrepreneur, which I wanted more than anything.

Putting down so much money would force me to commit to the project and prevent me from quitting too soon.

A Year of Development

I rebranded the website to be called RecordJoy and worked on it with my cousin for about a year. Within a year, we made $5000 and had 3000 users.

We spent $3500 on ads, hosting, and software to run the business.

AppSumo promoted our $120 Life Time Deal in exchange for 30% of the revenue.

We put RecordJoy on maintenance mode after 6 months because we couldn't find a scalable user acquisition channel.

We improved SEO and redesigned our landing page, but nothing worked.

Despite not being able to grow RecordJoy any further, I had already learned so much from working on the project so I was fine with putting it on maintenance mode. RecordJoy still made $500 a month, which was great lunch money.

Getting Taken Over

One of our customers emailed me asking for some feature requests and I replied that we weren’t going to add any more features in the near future. They asked if we'd sell.

We got on a call with the customer and I asked if he would be interested in buying RecordJoy for $15k. The customer wanted to pay around $8k but said he would consider it.

Since we were negotiating with one buyer, we put RecordJoy on MicroAcquire to see if there were other offers.

We quickly received 10+ offers and got $18.5k. There was also about $1000 in AppSumo that we could not withdraw, so we agreed to transfer that balance to the buyer for $600, since about 40% of our sales on AppSumo usually end up being refunded.
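The $600 price for the locked AppSumo balance follows from a simple expected-value estimate: if roughly 40% of sales get refunded, only about 60% of the balance is likely to be paid out. A minimal sketch, where the function name and parameters are hypothetical:

```python
def expected_payout(balance: float, refund_rate: float) -> float:
    """Estimate how much of a pending balance survives refunds."""
    return balance * (1 - refund_rate)


# About $1000 pending in AppSumo, with roughly 40% of sales refunded.
fair_price = expected_payout(1000, 0.40)
print(f"Estimated value of the balance: ${fair_price:,.0f}")
```

This is only a rough estimate. It ignores timing and assumes the historical refund rate holds for the pending sales.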

Lessons Learned

First, create an acquisition channel

We couldn't discover a scalable acquisition route for RecordJoy. If I had to start another project, I'd develop a robust acquisition channel first. It might be LinkedIn, Medium, or YouTube.

Purchase Power of the Buyer Affects Acquisition Price

Some of the buyers we spoke to were individuals looking to buy side projects, while others were companies looking to launch a new product category. Individual buyers had smaller budgets than organizations.

Customers of AppSumo vary.

AppSumo customers value lifetime deals and low prices, so AppSumo may not be a good way to build a business with recurring revenue. If your product is designed for AppSumo users, it may not connect with other users.

Try to increase acquisition trust

Acquisitions often fail. The buyer can get cold feet, stop communicating, or run away with your stuff. Building trust with the buyer helps ensure a smooth asset exchange. Our first acquisition meeting was unpleasant and the price negotiation was tense. In later meetings, we spent the first few minutes trying to get to know the buyer’s motivations and background before jumping into the negotiation, which helped build trust.

Operating expenses can reduce your earnings.

Monitor operating costs. We were really happy when we withdrew the $5000 we made from AppSumo and Stripe until we realized that we had spent $3500 in operating fees. Spend money on software and consultants to help you understand what to build.

Don't overspend on advertising

We spent $1500 on Google Ads but made little money. For a side project, it’s better to focus on organic traffic from SEO rather than paid ads unless you know your ads are going to have a positive ROI.

Will Lockett

3 years ago

Tesla recently disclosed its greatest secret.

The VP has revealed a secret that should frighten the rest of the EV world.

Tesla led the EV revolution. Elon Musk's company offers a viable alternative to gas-guzzlers. But Tesla has lost ground in recent years. VW, BMW, Mercedes, and Ford offer EVs with similar ranges, charging speeds, performance, and cost. Tesla's next-generation 4680 battery pack, Roadster, Cybertruck, and Semi were all delayed. CATL offers batteries superior to the 4680. Martin Viecha, Tesla's Vice President, recently told Business Insider something that startled the EV world and will establish Tesla as the EV king.

Viecha mentioned that Tesla's production costs have dropped 57% since 2017. This isn't due to cheaper batteries or cheaper models like the Model 3. No, this is due to amazing factory efficiency gains.

Musk wasn't crazy to want a nearly 100% automated production line, and Tesla's strategy of sticking with one model and improving it has paid off. Others change models every several years. This implies they must spend on new R&D, set up factories, and modernize service and parts systems. All of this costs a ton of money and prevents them from refining production to cut expenses.

Meanwhile, Tesla updates its vehicles progressively. Everything from the backseats to the screen has been enhanced in a 2022 Model 3. Tesla can refine, standardize, and cheaply produce every part without changing the production line.

In 2017, Tesla's average production cost per car was $84,000. In 2022, it'll be $36,000.

Mr. Viecha also claimed that new factories in Shanghai and Berlin will be significantly cheaper to operate once fully operational.

Tesla's hand is now visible: it is selling $36,000 cars for $60,000. On price, that barely beats the competition. The Model Y Long Range costs just over $60,000. Tesla makes $24,000+ on every sale, giving it a 40% profit margin, one of the best in the auto business.
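The cost and margin figures quoted above are easy to verify. The following sketch uses only the numbers from the article, and the variable names are hypothetical:

```python
# Figures quoted in the article (USD per car).
cost_2017 = 84_000  # average production cost in 2017
cost_2022 = 36_000  # average production cost in 2022
price = 60_000      # approximate Model Y Long Range price

# Relative drop in production cost since 2017.
cost_drop = (cost_2017 - cost_2022) / cost_2017

# Gross profit per car and margin as a share of the sale price.
profit = price - cost_2022
margin = profit / price

print(f"Cost drop since 2017: {cost_drop:.0%}")
print(f"Profit per car: ${profit:,}")
print(f"Margin: {margin:.0%}")
```

Note that this treats the average production cost as the cost of a Model Y, which is a simplification.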

The VW ID.4 costs about the same but makes no profit. Tesla's rivals face similar challenges. Their EVs make little or no profit.

Tesla costs the same as other EVs, but they're in a different league.

But don't forget that the battery pack accounts for 40% of an EV's cost. Tesla may soon fully utilize its 4680 battery pack.

The 4680 battery pack has larger cells and a unique internal design. This means fewer cells are needed for a car, making it cheaper to assemble and produce (per kWh). Energy density and charge speeds increase slightly.

Tesla underestimated the difficulty of making this revolutionary new cell. Each time they try to scale up production, quality drops and rejected cells rise.

Tesla recently installed this battery pack in Model Ys and is scaling production. If they succeed, Tesla battery prices will plummet.

The Model Y's 2170 battery pack costs $11,000. The same size pack with 4680 cells costs $3,400 less. Once scaled, it could be $5,500 (50%) less. The 4680 battery pack could reduce Tesla production costs by 20%.

With these cost savings, Tesla could sell Model Ys for $40,000 while still making a profit. They could offer a $25,000 car.

Even with new battery technology, it seems like other manufacturers will struggle to make EVs profitable.

Teslas cost about the same as competitors, so don't be fooled. Behind the scenes, they're still years ahead, and the 4680 battery pack and new factories will only increase that lead. Musk faces a choice. He could sell Teslas at current prices and make billions while other manufacturers struggle. Or, he could massively undercut everyone and crush the competition once and for all. Either way, Tesla and Elon win.

James White

3 years ago

Ray Dalio suggests reading these three books in 2022.

An inspiring reading list

I'm no billionaire or hedge-fund manager. My bank account doesn't have millions. Ray Dalio's love of reading motivates me to think differently.

Here are some books recommended by Ray Dalio. Each influenced me. Hope they'll help you.

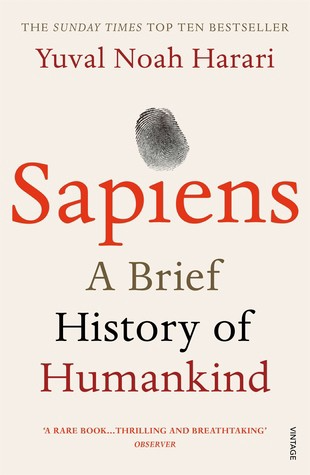

Sapiens by Yuval Noah Harari

Page Count: 512

Rating on Goodreads: 4.39

My favorite nonfiction book.

Sapiens explores human evolution. It explains how Homo Sapiens developed from hunter-gatherers to a dominant species. Amazing!

Sapiens will teach you about human history. Yuval Noah Harari has a follow-up book on human evolution.

My favorite book quotes are:

The tendency for luxuries to turn into necessities and give rise to new obligations is one of history's few unbreakable laws.

Happiness is not dependent on material wealth, physical health, or even community. Instead, it depends on how closely subjective expectations and objective circumstances align.

The romantic comparison between today's industry, which obliterates the environment, and our forefathers, who coexisted well with nature, is unfounded. Homo sapiens held the record among all organisms for eradicating the most plant and animal species even before the Industrial Revolution. The unfortunate distinction of being the most lethal species in the history of life belongs to us.

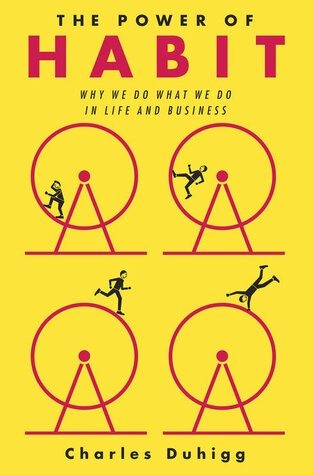

The Power Of Habit by Charles Duhigg

Page Count: 375

Rating on Goodreads: 4.13

Great book: The Power Of Habit. It illustrates why habits are everything. The book explains how healthier habits can improve your life, career, and society.

The Power of Habit rocks. It's a great book on productivity. Its suggestions helped me build healthier behaviors (and drop bad ones).

Read ASAP!

My favorite book quotes are:

Change may not occur quickly or without difficulty. However, almost any behavior may be changed with enough time and effort.

People who exercise begin to eat better and produce more at work. They smoke less and are more patient with friends and family. They claim to feel less anxious and use their credit cards less frequently. A fundamental habit that sparks broad change is exercise.

Habits are strong but also delicate. They may develop independently of our awareness or may be purposefully created. They frequently happen without our consent, but they can be altered by changing their constituent pieces. They have a much greater influence on how we live than we realize; in fact, they are so powerful that they cause our brains to adhere to them above all else, including common sense.

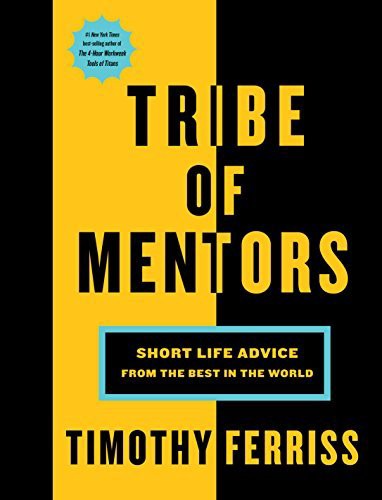

Tribe Of Mentors by Tim Ferriss

Page Count: 561

Rating on Goodreads: 4.06

Unusual book structure. It's worth reading if you want to learn from successful people.

The book is Q&A-style. Tim questions everyone. Each chapter features a different person's life-changing advice. In the book, Pressfield, Willink, Grylls, and Ravikant are interviewed.

Amazing!

My favorite book quotes are:

According to one's courage, life can either get smaller or bigger.

Don't engage in actions that you are aware are immoral. The reputation you have with yourself is all that constitutes self-esteem. Always be aware.

People mistakenly believe that focusing means accepting the task at hand. However, that is in no way what it represents. It entails rejecting the numerous other worthwhile suggestions that exist. You must choose wisely. Actually, I'm just as proud of the things we haven't accomplished as I am of what I have. Saying no to 1,000 things is what innovation is.