More on Personal Growth

James White

3 years ago

Ray Dalio suggests reading these three books in 2022.

An inspiring reading list

I'm no billionaire or hedge-fund manager. My bank account doesn't have millions. Ray Dalio's love of reading motivates me to think differently.

Here are some books recommended by Ray Dalio. Each influenced me. Hope they'll help you.

Sapiens by Yuval Noah Harari

Page Count: 512

Rating on Goodreads: 4.39

My favorite nonfiction book.

Sapiens explores human evolution. It explains how Homo sapiens developed from hunter-gatherers to a dominant species. Amazing!

Sapiens will teach you about human history. Yuval Noah Harari has a follow-up book on human evolution.

My favorite book quotes are:

The tendency for luxuries to turn into necessities and give rise to new obligations is one of history's few unbreakable laws.

Happiness is not dependent on material wealth, physical health, or even community. Instead, it depends on how closely subjective expectations and objective circumstances align.

The romantic comparison between today's industry, which obliterates the environment, and our forefathers, who coexisted well with nature, is unfounded. Homo sapiens held the record among all organisms for eradicating the most plant and animal species even before the Industrial Revolution. The unfortunate distinction of being the most lethal species in the history of life belongs to us.

The Power Of Habit by Charles Duhigg

Page Count: 375

Rating on Goodreads: 4.13

Great book: The Power Of Habit. It illustrates why habits are everything. The book explains how healthier habits can improve your life, career, and society.

The Power of Habit rocks. It's a great book on productivity. Its suggestions helped me build healthier behaviors (and drop bad ones).

Read ASAP!

My favorite book quotes are:

Change may not occur quickly or without difficulty. However, almost any behavior may be changed with enough time and effort.

People who exercise begin to eat better and produce more at work. They smoke less and are more patient with friends and family. They claim to feel less anxious and use their credit cards less frequently. A fundamental habit that sparks broad change is exercise.

Habits are strong but also delicate. They may develop independently of our awareness or may be purposefully created. They frequently happen without our consent, but they can be altered by changing their constituent pieces. They have a much greater influence on how we live than we realize; in fact, they are so powerful that they cause our brains to adhere to them above all else, including common sense.

Tribe Of Mentors by Tim Ferriss

Page Count: 561

Rating on Goodreads: 4.06

Unusual book structure. It's worth reading if you want to learn from successful people.

The book is Q&A-style. Tim questions everyone. Each chapter features a different person's life-changing advice. In the book, Pressfield, Willink, Grylls, and Ravikant are interviewed.

Amazing!

My favorite book quotes are:

According to one's courage, life can either get smaller or bigger.

Don't engage in actions that you are aware are immoral. The reputation you have with yourself is all that constitutes self-esteem. Always be aware.

People mistakenly believe that focusing means accepting the task at hand. However, that is in no way what it represents. It entails rejecting the numerous other worthwhile suggestions that exist. You must choose wisely. Actually, I'm just as proud of the things we haven't accomplished as I am of what I have. Saying no to 1,000 things is what innovation is.

Tim Denning

3 years ago

In this recession, according to Mark Cuban, you need to outwork everyone

Here’s why that’s baloney

Mark Cuban popularized entrepreneurship.

Shark Tank (which made Mark famous) made starting a business glamorous to attract more entrepreneurs.

First off, this isn't an anti-billionaire rant.

Mark Cuban has done well. He's a smart, principled businessman. I enjoy his Web3 work. But Mark's work and productivity theories are absurd.

You don't need to outwork everyone in this recession to live well.

You won't be able to outwork me.

Yuck! Mark's words made me gag.

Why do boys think working is a football game where the winner wins a Super Bowl trophy? To outwork you.

Hard work doesn't equal intelligence.

Highly clever professionals spend 4 hours a day in a flow state, then go home to relax with family.

If you don't put forth the effort, someone else will.

- Mark.

He'll burn out. He's delusional and doesn't understand productivity. Boredom or disconnection spark our best thoughts.

TikTok outlaws boredom.

In a spare minute, we check our phones because we can't stand stillness.

All this work p*rn makes things worse. When is it okay to feel again? Because I can’t feel anything when I’m drowning in work and haven’t had a holiday in 2 years.

Your rivals are actively attempting to undermine you.

Ohhh please Mark…seriously.

This isn't a Tom Hanks war film. Relax. Not everyone is a rival. Your only competitor is yourself. To survive the recession, be better than you were a year ago.

If you get rich, great. If not, there's more to life than Lambos and angel investments.

Some want to relax and enjoy life. No competition. We witness people with lives trying to endure the recession and record-high prices.

This fictitious rival worsens life and work.

If you are truly talented, you will motivate others to work more diligently and effectively.

No Mark. Soz.

If you're a good leader, you won't brag about working hard and treating others like cogs. Treat them like humans. You'll have EQ.

Silly statements like this are caused by an out-of-control ego. I no longer watch Shark Tank.

Ego over humanity.

Good leaders will urge people to keep together during the recession. Good leaders support those who are laid off and need a reference.

Not harder, quicker, better. That created my mental health problems 10 years ago.

Truth: we want to work less.

The promotion of entrepreneurship is ludicrous.

Marvel superheroes. Seriously, relax Max.

I used to write about entrepreneurship, then I quit. Many WeWork Adam Neumanns. Carelessness.

I now use the term "side hustle" when writing about online business or entrepreneurship. It humanizes the topic.

Stop glorifying. Thinking we'll all be Elon Musks who send rockets to Mars is delusional. Most of us won't create companies employing hundreds.

OK.

The true epidemic is glorification. Fewer selfies. Little birdy needs fewer bank account screenshots. Less Uber talk.

We're exhausted.

Fun, ego-free business can transform the world. Take a relax pill.

Work as if someone were attempting to take everything from you.

I've seen people lose everything.

Myself included. My 20s startup failed. I was almost bankrupt. I thought I'd never recover. Nope.

Best thing ever.

Losing everything reveals your true self. Unintelligent entrepreneur egos perish instantly. Regaining humility revitalizes relationships.

Money's significance shifts. Stop chasing it like a puppy with a bone.

Fearing loss is unfounded.

Here is a more effective approach than outworking everybody.

(You'll thrive in the recession and become wealthy.)

Smarter work

Overworking is donkey work.

You don't want to be a career-long overworker. Instead of wasting time, write down what you do. List tasks and processes.

Go through the list and decide what to keep doing and what to outsource. Document each task step by step. Continuously systematize.

Then recruit a digital employee like Zapier or a virtual assistant in the same country.

Work smart, not hard.

If your big break could burn in hell, diversify like it will.

People err by focusing on one chance.

Chances can vanish. Going all-in is risky. Instead of working like a Mark Cuban groupie, diversify your income.

If you're employed, your customer is your employer.

Sell the same abilities twice and add 2-3 contract clients. Reduce your hours at your main job and take on more clients.

Leave brand loyalty behind

Mark desires his employees' worship.

That's stupid. When times are bad, layoffs multiply. The problem is the false belief that companies care. No. A business maximizes profit and pays you the least.

To care or overpay goes against capitalism (which runs the world). Be honest.

I was a banker. Then the bat virus hit and jobs disappeared faster than I urinate after a night of drinking.

Start being disloyal now since your company will cheerfully replace you with a better applicant. Meet recruiters and hiring managers on LinkedIn. Whenever something goes wrong at work, act.

Be loyal to yourself and your family. Nobody else.

Outwork this instead

Mark doesn't suggest outworking inflation instead of people.

Inflation erodes your time on earth. If you ignore inflation, you'll work harder for less pay every minute.

Financial literacy beats inflation.

Get a side job and earn money online

So you can stop outworking everyone.

The internet leverages time. The same effort today yields exponential results later. There are still whole places not online.

Instead of working forever, generate money online.

Final Words

Overworking is stupid. Don't listen to wealthy football jocks.

Work isn't everything. Prioritize diversification, internet income streams, boredom, and financial knowledge throughout the recession.

That’s how to get wealthy rather than burnout-rich.

Glorin Santhosh

3 years ago

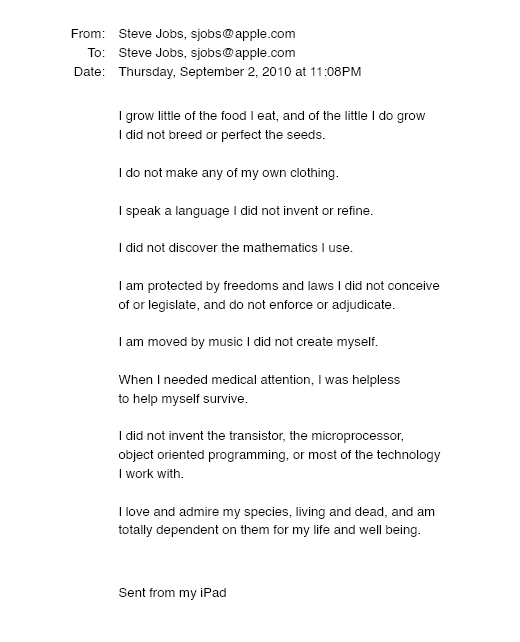

In his final days, Steve Jobs sent an email to himself. What It Said Was This

An email capturing Steve Jobs's philosophy.

Steve Jobs may have been the most inspired and driven entrepreneur.

He worked on projects because he wanted to leave a legacy.

Steve Jobs' final email to himself encapsulated his philosophy.

After his death from pancreatic cancer in October 2011, Laurene Powell Jobs released the email. He was 56.

Read: Steve Jobs by Walter Isaacson (#BestSeller)

The Email:

September 2010 Steve Jobs email:

“I grow little of the food I eat, and of the little I do grow, I do not breed or perfect the seeds.” “I do not make my own clothing. I speak a language I did not invent or refine,” he continued. “I did not discover the mathematics I use… I am moved by music I did not create myself.”

Jobs ended his email by reflecting on how others created everything he uses.

He wrote:

“When I needed medical attention, I was helpless to help myself survive.”

The Apple co-founder concluded by praising humanity.

“I did not invent the transistor, the microprocessor, object-oriented programming, or most of the technology I work with. I love and admire my species, living and dead, and am totally dependent on them for my life and well-being,” he concluded.

The email was made public as a part of the Steve Jobs Archive, a website that was launched in tribute to his legacy.

Steve Jobs' widow founded the online archive. Apple CEO Tim Cook and former design leader Jony Ive were prominent guests.

Steve Jobs has always inspired because he shows how even the best can be improved.

High expectations were always there, and they were consistently met.

We miss him because he was one of the few with lifelong enthusiasm and persona.

You might also like

Sanjay Priyadarshi

3 years ago

A 19-year-old dropped out of college to build a $2,300,000,000 company in 2 years.

His success was unforeseeable.

2014 saw Facebook's $2.3 billion purchase of Oculus VR.

19-year-old Palmer Luckey founded Oculus. He quit journalism school. His parents worried about him dropping out of college.

Facebook bought Oculus VR in less than 2 years.

Palmer Luckey started Anduril Industries. Palmer has raised $385 million with Anduril.

The Oculus journey began in a trailer

Palmer Luckey, 19, owned the trailer.

Luckey had his trailer customized. The trailer had all six of Luckey's screens. In the trailer's remaining area, Luckey conducted hardware tests.

At 16, he became obsessed with virtual reality. Virtual reality was rare at the time.

Luckey didn't know about VR when he started.

Previously, he liked "portabilizing" mods: hacking old game consoles into handhelds.

In his city, few portabilizers actively traded.

Luckey started "ModRetro" for other portabilizers. Luckey was exposed to VR headsets online.

Luckey:

“Man, ModRetro days were the best.”

Palmer Luckey used VR headsets for three years. His design had 50 prototypes.

Luckey used to work at the Long Beach Sailing Center for minimum wage, servicing diesel engines and cleaning boats.

In July 2011, Luckey worked in a mixed reality lab at the USC Institute for Creative Technologies (ICT).

Luckey cleaned the lab, did reports, and helped other students with VR projects.

Luckey's lab job was dull.

Luckey chose to work in the lab because he wanted to engage with like-minded folks.

By 2012, Luckey had a prototype he hoped to share globally. He made cheaper headsets than others.

Luckey wanted to sell an easy-to-assemble virtual reality kit on Kickstarter.

He realized he needed a corporation to do these sales legally. He started looking for names. "Virtuality," "virtual," and "VR" were all taken.

Hence, Oculus.

If Luckey sold a hundred prototypes, he would be thrilled since it would boost his future possibilities.

John Carmack, legendary game designer

Carmack has liked sci-fi and fantasy since childhood.

Carmack loved imagining intricate gaming worlds.

His interest in programming and computer science grew with age.

He liked graphics. He liked how combining 0s and 1s in different ways could create new colors and visuals.

Carmack played computer games as a teen. He created Shadowforge in high school.

He founded id Software in 1991. When Carmack created id Software, console games were the best-sellers.

Old computer games had weak graphics. John Carmack and id Software developed "adaptive tile refresh."

This technique smoothed PC game scrolling. id Software went on to launch Wolfenstein 3D, Doom, and Quake.

These games made John Carmack a gaming star. Later, he sold id Software to ZeniMax Media.

How Palmer Luckey met Carmack

In 2011, Carmack was thinking a lot about 3-D space and virtual reality.

He was underwhelmed by the best HMDs on the market because of their flimsiness and latency.

His disappointment was partly due to the field of view (FOV). The best HMD had a 40-degree field of view.

That's poor. Even the best VR headset is useless with a 40-degree FOV.

Carmack intended to show the press Doom 3 in VR. He explored VR headsets and internet groups for this reason.

Carmack identified a VR enthusiast in the comments section of "LEEP on the Cheap." "PalmerTech" was the name.

Carmack approached PalmerTech about his prototype. He told Luckey about his own VR demos and asked to see the prototype.

Carmack got a Rift prototype and, on May 17, tweeted an evaluation of it.

Dan Newell, a Valve engineer, and Mick Hocking, a Sony senior director, pre-ordered Oculus Rift prototypes with Carmack's help.

Everyone praised Luckey after Carmack demoed Rift.

Palmer Luckey received a job offer from Sony.

It was a full-time position at Sony Computer Europe.

He would run Sony’s R&D lab.

The salary would be $70k.

Who is Brendan Iribe?

Brendan Iribe started early with startups. In 2004, he and Mike Antonov founded Scaleform.

Scaleform created high-performance middleware. This package allows 3D Flash games.

In 2011, Iribe sold Scaleform to Autodesk for $36 million.

How Brendan Iribe discovered Palmer Luckey.

Laurent Scallie was a friend of Brendan Iribe.

Laurent told Iribe about a potential opportunity.

Laurent promised Iribe that VR would work this time. Laurent introduced Iribe to Luckey.

Iribe was doubtful after hearing Laurent's statements. He doubted Laurent's VR claims.

But since Laurent mentioned the name John Carmack, Iribe thought he should look at Luckey Innovation. Iribe was hooked on virtual reality after reading Palmer Luckey stories.

He asked Scallie about Palmer Luckey.

Iribe convinced Luckey to start Oculus with him

First meeting between Palmer Luckey and Iribe.

The Iribe team wanted Luckey to feel comfortable.

Iribe sought to convince Luckey that launching a company was easy. Iribe told Luckey anyone could start a business.

Luckey told Iribe's staff he was homeschooled from childhood. Luckey took self-study courses.

Luckey had planned to launch a Kickstarter campaign and sell kits for his prototype. However, many companies had offered him jobs.

He was considering Sony's offer.

Iribe advised Luckey to stay independent and not join a firm. Iribe asked Luckey who could raise his child better than he could. No one sees your baby like you do.

Iribe's team pushed Luckey to stay independent and establish a software ecosystem around his device.

After conversing with Iribe, Luckey rejected every job offer and merger option.

Iribe convinced Luckey to provide an SDK for Oculus developers.

After a few months, Brendan Iribe co-founded Oculus with Palmer Luckey. Luckey trusted Iribe and his crew, so he started a corporation with him.

Crowdfunding

Brendan Iribe and Palmer Luckey launched a Kickstarter.

Gabe Newell endorsed Palmer's Kickstarter video.

Gabe Newell wanted folks to trust Palmer Luckey since he was doing something fascinating and answering tough questions.

Mark Bolas and David Helgason backed Palmer Luckey's VR Kickstarter video.

Luckey introduced Oculus Rift during the Kickstarter campaign. He introduced virtual reality during press conferences.

Oculus' Kickstarter effort was a success. Palmer Luckey felt he could raise $250,000.

Oculus raised $2.4 million through Kickstarter. Palmer Luckey's virtual reality vision was well-received.

Mark Zuckerberg's Oculus discovery

Brendan Iribe and Palmer Luckey hired the right personnel after a successful Kickstarter campaign.

Oculus needed a lot of money for engineers and hardware. They needed investors' money.

Series A raised $16M.

Next, Andreessen Horowitz partner Brian Cho approached Iribe.

Cho told Iribe that Andreessen Horowitz could invest in Oculus Series B if the company solved motion sickness.

Marc Andreessen was Iribe's dream client.

Marc Andreessen and his partners gave Oculus $75 million.

Andreessen introduced Iribe to Zuckerberg. Iribe and Zuckerberg discussed the future of games and virtual reality by phone.

Facebook's Oculus demo

Iribe showed Zuckerberg Oculus.

Mark was hooked after using Oculus. The headset impressed him.

The whole Facebook crew who saw the demo said only one thing.

“Holy Crap!”

This surprised them all.

Mark Zuckerberg was impressed by the team's response. Mark Zuckerberg met the Oculus team five days after the demo.

First meeting Palmer Luckey.

Palmer Luckey is one of Mark's biggest supporters and loves Facebook.

Oculus Acquisition

Zuckerberg wanted Oculus.

Brendan Iribe had asked for $4 billion, but Mark wasn't interested.

Facebook bought Oculus for $2.3 billion after months of drama.

After selling his company, how does Palmer view money?

Palmer loves the freedom money gives him. Money frees him from small worries.

Money has allowed him to pursue things he wouldn't have otherwise.

“If I didn’t have money I wouldn’t have a collection of vintage military vehicles…You can have nice hobbies that keep you relaxed when you have money.”

He didn't start Oculus to generate money. His virtual reality passion spanned years.

He didn't have to lie about how virtual reality will transform everything until he needed funding.

The company's success was an unexpected bonus. He was merely passionate about a good cause.

After Oculus' $2.3 billion exit, what changed?

Palmer didn't mind being rich. He did similar things.

After Facebook bought Oculus, he moved to Silicon Valley and lived in a 12-person shared house due to high rents.

Palmer could have afforded a big mansion, but he prefers stability and doing things because he wants to, not because he has to.

“Taco Bell never tasted so good as when you know you could afford to never eat Taco Bell again.”

Palmer's leadership shifted.

Palmer changed his leadership after selling Oculus.

When he launched his second company, he couldn't work on his passions.

“When you start a tech company you do it because you want to work on a technology, that is why you are interested in that space in the first place.” As the company has grown, he has realized that if he is still doing optical design in the company, it’s because he is being negligent about the hiring process.

Once his startup grows, the founder's responsibilities shift. He must recruit better firm managers.

Recruiting talented people becomes the top priority. The founder must convince others of their influence.

A book that helped me write this:

The History of the Future: Oculus, Facebook, and the Revolution That Swept Virtual Reality — Blake Harris

*This post is a summary. Read the full article here.

Steve QJ

3 years ago

Putin's War On Reality

The dictator's playbook.

Stalin's successor, Nikita Khrushchev, delivered a speech titled "On The Cult Of Personality And Its Consequences" in 1956, three years after Stalin’s death.

It was Stalin's grave abuse of power that caused untold harm to our party.

Stalin acted not by persuasion, explanation, or patient cooperation, but by imposing his ideas and demanding absolute obedience. […]

See where Stalin's mania for greatness led? He had lost all sense of reality.

The speech, which was never made public, shook the Soviet Union and the Soviet Bloc. After Stalin's "cult of personality" was exposed as a lie, only reality remained.

As I've watched the nightmare unfold in Ukraine, I'm reminded of that question. Primarily by Putin's repeated denials.

His odd claim that Ukraine is run by drug addicts and Nazis (especially strange given that Volodymyr Zelenskyy, the Ukrainian president, is Jewish). Others attempt to portray Russia as liberators rather than occupiers. For example, he portrays Luhansk and Donetsk as plucky, newly independent states when they have been totalitarian statelets for 8 years.

Putin seemed to have lost all sense of reality.

Maybe that's why his remarks to an oligarchs' gathering stood out:

Everything is a desperate measure. They gave us no choice. We couldn't do anything about their security risks. […] They could have put the country in jeopardy.

This is almost certainly true from Putin's perspective. Even for Putin, a military invasion seems unlikely. So, what exactly is putting Russia's security in jeopardy? How could Ukraine's independence endanger Russia's existence?

The truth is the only thing that truly terrifies leaders like these.

Trump, the president of “alternative facts” and “fake news,” praised Putin's fabricated justifications for the Ukraine invasion. Russia tightened news censorship as news of their losses came in. It's no accident that modern dictatorships like Russia (and China and North Korea) restrict citizens' access to information.

Controlling what people see, hear, and think is the simplest method. And Ukraine's recent efforts to join the European Union showed a country whose thoughts Putin couldn't control. With the Russian and Ukrainian peoples so close, he could not control their reality.

He appears to think this is a threat worth fighting NATO over.

It's easy to disown history's great dictators. By the magnitude of their harm. But the strategy they used is still in use today, albeit not to the same devastating effect.

The Kim dynasty in North Korea has ruled for 74 years, and Putin has ruled Russia for 19 years (using loopholes and even rewriting the constitution).

“Politicians and diapers must be changed frequently,” said Mark Twain. “And for the same reason.”

When their egos are threatened, they sabre-rattle, as in Kim Jong-un and Donald Trump's famous spat about the size of their...ahem, “nuclear buttons.” Or Putin's threats of mutual destruction this weekend.

Most importantly, they have cult-like control over their followers.

When a leader whose power is built on lies feels he is losing control of the narrative, things like Trump's Jan. 6 meltdown and Putin's current actions in Ukraine are unavoidable.

Leaders who try to control their people's reality will have to die to keep the illusion alive.

Long version of this post available here

Maddie Wang

3 years ago

Easiest and fastest way to test your startup idea!

Here's the fastest way to validate company concepts.

I squandered a year after dropping out of Stanford designing a product nobody wanted.

But today, I’m at 100k!

Differences:

I was designing a consumer product when I dropped out.

I coded an MVP, got 1k users, and got a YC interview.

Nice, huh?

WRONG!

Still coding and getting users 12 months later

WOULD PEOPLE PAY FOR IT? was the riskiest assumption I hadn't tested.

When asked why I didn't verify payment, I said,

The product isn't ready. Right now, nobody cares. The website needs work. Include this. Increase usage…

I feared people would say no.

After 1 year of pushing it off, my team told me they were really worried about the Business Model. Then I asked my audience if they'd buy my product.

So?

No, overwhelmingly.

I felt like I wasted a year building a product no one would buy.

Founders Cafe was the opposite.

Before building anything, I requested payment.

40 founders were interviewed.

Then we emailed Stanford, YC, and other top founders, asking them to join our community.

BOOM! 10/12 paid!

Without building anything, in 1 day I validated my startup's riskiest assumption. NOT 1 year.

Asking people to pay is one of the scariest things.

I understand.

I asked Stanford queer women to pay before joining my gay sorority.

I was afraid I'd turn them off or no one would pay.

Gay women, like those founders, were in such excruciating pain that they were willing to pay me upfront to help.

You can ask for payment (before you build) to see if people have the burning pain. Then they'll pay!

Examples from Founders Cafe members:

😮 Using a fake landing page, a college dropout tested a product. Paying! He built it and made $3m!

😮 YC solo founder faked a Powerpoint demo. 5 Enterprise paid LOIs. $1.5m raised, built, and in YC!

😮 A Harvard founder offered to convert Figma to React. 1 day, 10 customers. Built a tool to automate Figma -> React after manually fulfilling requests. 1m+

Bad example:

😭 Stanford Dropout Spends 1 Year Building Product Without Payment Validation

Some people build for a year and then get paying customers.

What I'm sharing is my experience and what Founders Cafe members have told me about validating startup ideas.

Don't waste a year like I did.

After my first startup failed, I planned to re-enroll at Stanford/work at Facebook.

After people paid, I quit for good.

I've hit $100k!

Hope this inspires you to request upfront payment! It'll change your life