More on Marketing

obimy.app

3 years ago

How TikTok helped us grow to 6 million users

This resulted to obimy's new audience.

Hi! obimy's official account. Here, we'll teach app developers and marketers. In 2022, our downloads increased dramatically, so we'll share what we learned.

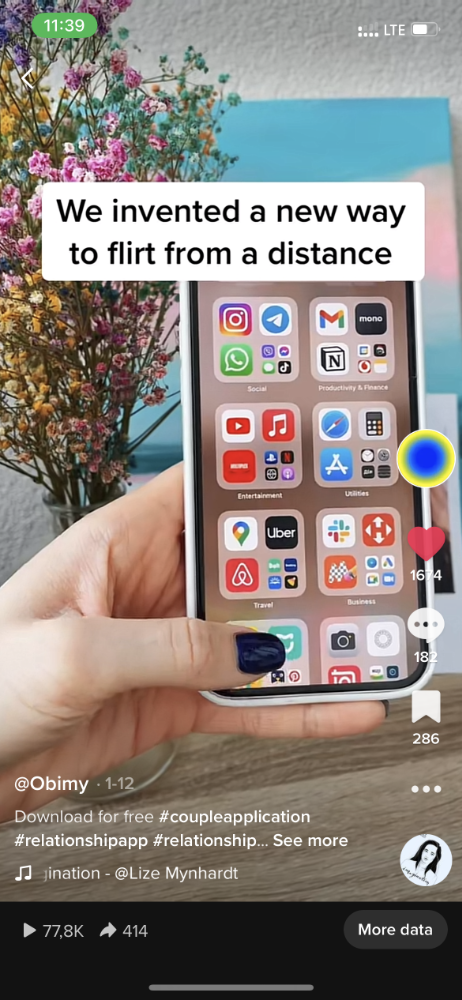

obimy is what we call a ‘senseger’. It's a new method to communicate digitally. Instead of text, obimy users connect through senses and moods. Feeling playful? Flirt with your partner, pat a pal, or dump water on a classmate. Each feeling is an interactive animation with vibration. It's a wordless app. App Store and Google Play have obimy.

We had 20,000 users in 2022. Two to five thousand of them opened the app monthly. Our DAU metric was 500.

We have 6 million users after 6 months. 500,000 individuals use obimy daily. obimy was the top lifestyle app this week in the U.S.

And TikTok helped.

TikTok fuels obimys' growth. It's why our app exploded. How and what did we learn? Our Head of Marketing, Anastasia Avramenko, knows.

our actions prior to TikTok

We wanted to achieve product-market fit through organic expansion. Quora, Reddit, Facebook Groups, Facebook Ads, Google Ads, Apple Search Ads, and social media activity were tested. Nothing worked. Our CPI was sometimes $4, so unit economics didn't work.

We studied our markets and made audience hypotheses. We promoted our goods and studied our audience through social media quizzes. Our target demographic was Americans in long-distance relationships. I designed quizzes like Test the Strength of Your Relationship to better understand the user base. After each quiz, we encouraged users to download the app to enhance their connection and bridge the distance.

We got 1,000 responses for $50. This helped us comprehend the audience's grief and coping strategies (aka our rivals). I based action items on answers given. If you can't embrace a loved one, use obimy.

We also tried Facebook and Google ads. From the start, we knew it wouldn't work.

We were desperate to discover a free way to get more users.

Our journey to TikTok

TikTok is a great venue for emerging creators. It also helped reach people. Before obimy, my TikTok videos garnered 12 million views without sponsored promotion.

We had to act. TikTok was required.

I wasn't a TikTok user before obimy. Initially, I uploaded promotional content. Call-to-actions appear strange next to dancing challenges and my money don't jiggle jiggle. I learned TikTok. Watch TikTok for an hour was on my to-do list. What a dream job!

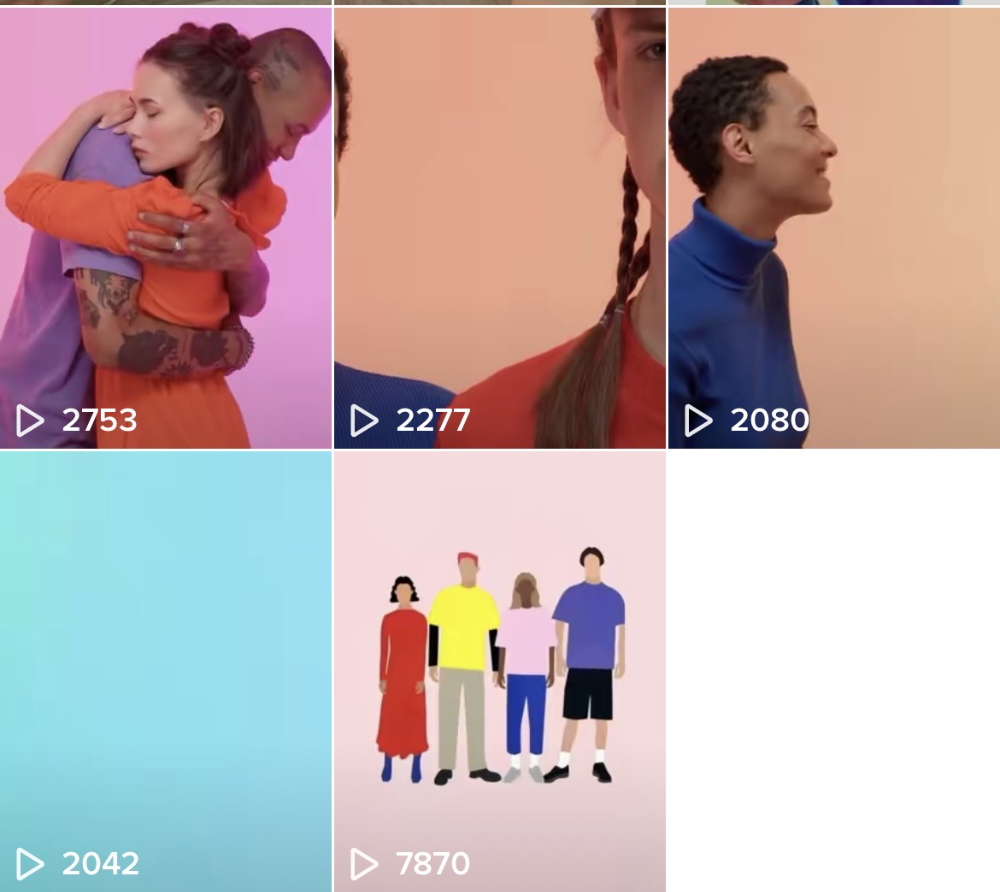

Our most popular movies presented the app alongside text outlining what it does. We started promoting them in Europe and the U.S. and got a 16% CTR and $1 CPI, an improvement over our previous efforts.

Somehow, we were expanding. So we came up with new hypotheses, calls to action, and content.

Four months passed, yet we saw no organic growth.

Russia attacked Ukraine.

Our app aimed to be helpful. For now, we're focusing on our Ukrainian audience. I posted sloppy TikToks illustrating how obimy can help during shelling or air raids.

In two hours, Kostia sent me our visitor count. Our servers crashed.

Initially, we had several thousand daily users. Over 200,000 users joined obimy in a week. They posted obimy videos on TikTok, drawing additional users. We've also resumed U.S. video promotion.

We gained 2,000,000 new members with less than $100 in ads, primarily in the U.S. and U.K.

TikTok helped.

The figures

We were confident we'd chosen the ideal tool for organic growth.

Over 45 million people have viewed our own videos plus a ton of user-generated content with the hashtag #obimy.

About 375 thousand people have liked all of our individual videos.

The number of downloads and the virality of videos are directly correlated.

Where are we now?

TikTok fuels our organic growth. We post 56 videos every week and pay to promote viral content.

We use UGC and influencers. We worked with Universal Music Italy on Eurovision. They offered to promote us through their million-follower TikTok influencers. We thought their followers would improve our audience, but it didn't matter. Integration didn't help us. Users that share obimy videos with their followers can reach several million views, which affects our download rate.

After the dust settled, we determined our key audience was 13-18-year-olds. They want to express themselves, but it's sometimes difficult. We're searching for methods to better engage with our users. We opened a Discord server to discuss anime and video games and gather app and content feedback.

TikTok helps us test product updates and hypotheses. Example: I once thought we might raise MAU by prompting users to add strangers as friends. Instead of asking our team to construct it, I made a TikTok urging users to share invite URLs. Users share links under every video we upload, embracing people worldwide.

Key lessons

Don't direct-sell. TikTok isn't for Instagram, Facebook, or YouTube promo videos. Conventional advertisements don't fit. Most users will swipe up and watch humorous doggos.

More product videos are better. Finally. So what?

Encourage interaction. Tagging friends in comments or making videos with the app promotes it more than any marketing spend.

Be odd and risqué. A user mistakenly sent a French kiss to their mom in one of our most popular videos.

TikTok helps test hypotheses and build your user base. It also helps develop apps. In our upcoming blog, we'll guide you through obimy's design revisions based on TikTok. Follow us on Twitter, Instagram, and TikTok.

Camilla Dudley

3 years ago

How to gain Twitter followers: A 101 Guide

No wonder brands use Twitter to reach their audience. 53% of Twitter users buy new products first.

Twitter growth does more than make your brand look popular. It helps clients trust your business. It boosts your industry standing. It shows clients, prospects, and even competitors you mean business.

How can you naturally gain Twitter followers?

Share useful information

Post visual content

Tweet consistently

Socialize

Spread your @name everywhere.

Use existing customers

Promote followers

Share useful information

Twitter users join conversations and consume material. To build your followers, make sure your material appeals to them and gives value, whether it's sales, product lessons, or current events.

Use Twitter Analytics to learn what your audience likes.

Explore popular topics by utilizing relevant keywords and hashtags. Check out this post on how to use Twitter trends.

Post visual content

97% of Twitter users focus on images, so incorporating media can help your Tweets stand out. Visuals and videos make content more engaging and memorable.

Tweet often

Your audience should expect regular content updates. Plan your ideas and tweet during crucial seasons and events with a content calendar.

Socialize

Twitter connects people. Do more than tweet. Follow industry leaders. Retweet influencers, engage with thought leaders, and reply to mentions and customers to boost engagement.

Micro-influencers can promote your brand or items. They can help you gain new audiences' trust.

Spread your @name everywhere.

Maximize brand exposure. Add a follow button on your website, link to it in your email signature and newsletters, and promote it on business cards or menus.

Use existing customers

Emails can be used to find existing Twitter clients. Upload your email contacts and follow your customers on Twitter to start a dialogue.

Promote followers

Run a followers campaign to boost your organic growth. Followers campaigns promote your account to a particular demographic, and you only pay when someone follows you.

Consider short campaigns to enhance momentum or an always-on campaign to gain new followers.

Increasing your brand's Twitter followers takes effort and experimentation, but the payback is huge.

👋 Follow me on twitter

Jenn Leach

3 years ago

This clever Instagram marketing technique increased my sales to $30,000 per month.

No Paid Ads Required

I had an online store. After a year of running the company alongside my 9-to-5, I made enough to resign.

That day was amazing.

This Instagram marketing plan helped the store succeed.

How did I increase my sales to five figures a month without using any paid advertising?

I used customer event marketing.

I'm not sure this term exists. I invented it to describe what I was doing.

Instagram word-of-mouth, fan engagement, and interaction drove sales.

If a customer liked or disliked a product, the buzz would drive attention to the store.

I used customer-based events to increase engagement and store sales.

Success!

Here are the weekly Instagram customer events I coordinated while running my business:

Be the Buyer Days

Flash sales

Mystery boxes

Be the Buyer Days: How do they work?

Be the Buyer Days are exactly that.

You choose a day to share stock selections with social media followers.

This is an easy approach to engaging customers and getting fans enthusiastic about new releases.

First, pick a handful of items you’re considering ordering. I’d usually pick around 3 for Be the Buyer Day.

Then I'd poll the crowd on Instagram to vote on their favorites.

This was before Instagram stories, polls, and all the other cool features Instagram offers today. I think using these tools now would make this event even better.

I'd ask customers their favorite back then.

The growing comments excited customers.

Then I'd declare the winner, acquire the products, and start selling it.

How do flash sales work?

I mostly ran flash sales.

You choose a limited number of itemsdd for a few-hour sale.

We wanted most sales to result in sold-out items.

When an item sells out, it contributes to the sensation of scarcity and can inspire customers to visit your store to buy a comparable product, join your email list, become a fan, etc.

We hoped they'd act quickly.

I'd hold flash deals twice a week, which generated scarcity and boosted sales.

The store had a few thousand Instagram followers when I started flash deals.

Each flash sale item would make $400 to $600.

$400 x 3= $1,200

That's $1,200 on social media!

Twice a week, you'll make roughly $10K a month from Instagram.

$1,200/day x 8 events/month=$9,600

Flash sales did great.

We held weekly flash deals and sent social media and email reminders. That’s about it!

How are mystery boxes put together?

All you do is package a box of store products and sell it as a mystery box on TikTok or retail websites.

A $100 mystery box would cost $30.

You're discounting high-value boxes.

This is a clever approach to get rid of excess inventory and makes customers happy.

It worked!

Be the Buyer Days, flash deals, and mystery boxes helped build my company without paid advertisements.

All companies can use customer event marketing. Involving customers and providing an engaging environment can boost sales.

Try it!

You might also like

Ben Chino

3 years ago

100-day SaaS buildout.

We're opening up Maki through a series of Medium posts. We'll describe what Maki is building and how. We'll explain how we built a SaaS in 100 days. This isn't a step-by-step guide to starting a business, but a product philosophy to help you build quickly.

Focus on end-users.

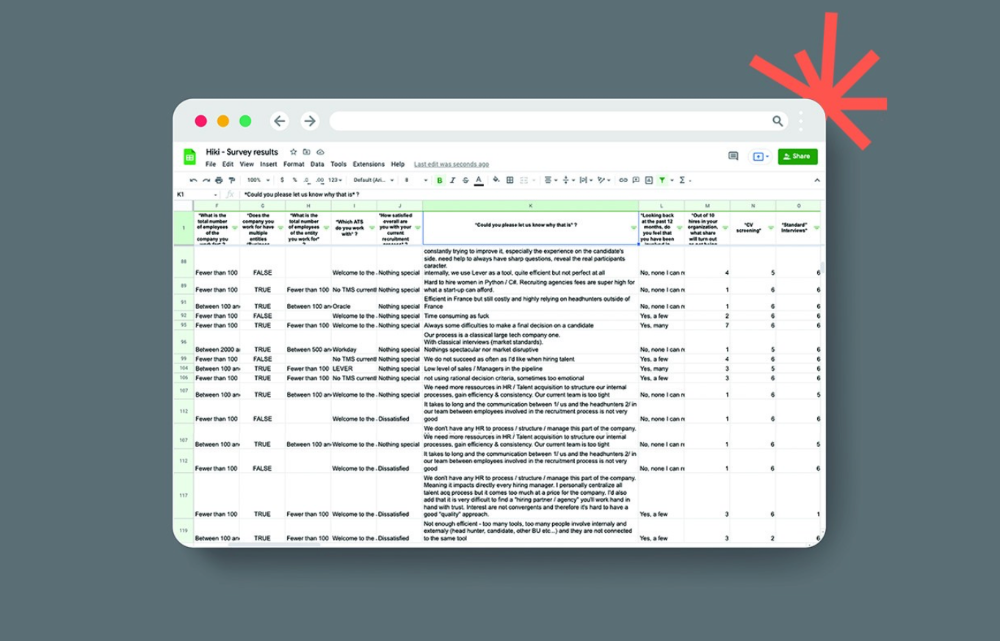

This may seem obvious, but it's important to talk to users first. When we started thinking about Maki, we interviewed 100 HR directors from SMBs, Next40 scale-ups, and major Enterprises to understand their concerns. We initially thought about the future of employment, but most of their worries centered on Recruitment. We don't have a clear recruiting process, it's time-consuming, we recruit clones, we don't support diversity, etc. And as hiring managers, we couldn't help but agree.

Co-create your product with your end-users.

We went to the drawing board, read as many books as possible (here, here, and here), and when we started getting a sense for a solution, we questioned 100 more operational HR specialists to corroborate the idea and get a feel for our potential answer. This confirmed our direction to help hire more objectively and efficiently.

Back to the drawing board, we designed our first flows and screens. We organized sessions with certain survey respondents to show them our early work and get comments. We got great input that helped us build Maki, and we met some consumers. Obsess about users and execute alongside them.

Don’t shoot for the moon, yet. Make pragmatic choices first.

Once we were convinced, we began building. To launch a SaaS in 100 days, we needed an operating principle that allowed us to accelerate while still providing a reliable, secure, scalable experience. We focused on adding value and outsourced everything else. Example:

Concentrate on adding value. Reuse existing bricks.

When determining which technology to use, we looked at our strengths and the future to see what would last. Node.js for backend, React for frontend, both with typescript. We thought this technique would scale well since it would attract more talent and the surrounding mature ecosystem would help us go quicker.

We explored for ways to bootstrap services while setting down strong foundations that might support millions of users. We built our backend services on NestJS so we could extend into microservices later. Hasura, a GraphQL APIs engine, automates Postgres data exposing through a graphQL layer. MUI's ready-to-use components powered our design-system. We used well-maintained open-source projects to speed up certain tasks.

We outsourced important components of our platform (Auth0 for authentication, Stripe for billing, SendGrid for notifications) because, let's face it, we couldn't do better. We choose to host our complete infrastructure (SQL, Cloud run, Logs, Monitoring) on GCP to simplify our work between numerous providers.

Focus on your business, use existing bricks for the rest. For the curious, we'll shortly publish articles detailing each stage.

Most importantly, empower people and step back.

We couldn't have done this without the incredible people who have supported us from the start. Since Powership is one of our key values, we provided our staff the power to make autonomous decisions from day one. Because we believe our firm is its people, we hired smart builders and let them build.

Nicolas left Spendesk to create scalable interfaces using react-router, react-queries, and MUI. JD joined Swile and chose Hasura as our GraphQL engine. Jérôme chose NestJS to build our backend services. Since then, Justin, Ben, Anas, Yann, Benoit, and others have followed suit.

If you consider your team a collective brain, you should let them make decisions instead of directing them what to do. You'll make mistakes, but you'll go faster and learn faster overall.

Invest in great talent and develop a strong culture from the start. Here's how to establish a SaaS in 100 days.

Cory Doctorow

3 years ago

The downfall of the Big Four accounting companies is just one (more) controversy away.

Economic mutual destruction.

Multibillion-dollar corporations never bothered with an independent audit, and they all lied about their balance sheets.

It's easy to forget that the Big Four accounting firms are lousy fraud enablers. Just because they sign off on your books doesn't mean you're not a hoax waiting to erupt.

This is *crazy* Capitalism depends on independent auditors. Rich folks need to know their financial advisers aren't lying. Rich folks usually succeed.

No accounting. EY, KPMG, PWC, and Deloitte make more money consulting firms than signing off on their accounts.

The Big Four sign off on phony books because failing to make friends with unscrupulous corporations may cost them consulting contracts.

The Big Four are the only firms big enough to oversee bankruptcy when they sign off on fraudulent books, as they did for Carillion in 2018. All four profited from Carillion's bankruptcy.

The Big Four are corrupt without any consequences for misconduct. Who can forget when KPMG's top management was fined millions for helping auditors cheat on ethics exams?

Consulting and auditing conflict. Consultants help a firm cover its evil activities, such as tax fraud or wage theft, whereas auditors add clarity to a company's finances. The Big Four make more money from cooking books than from uncooking them, thus they are constantly embroiled in scandals.

If a major scandal breaks, it may bring down the entire sector and substantial parts of the economy. Jim Peterson explains system risk for The Dig.

The Big Four are voluntary private partnerships where accountants invest their time, reputations, and money. If a controversy threatens the business, partners who depart may avoid scandal and financial disaster.

When disaster looms, each partner should bolt for the door, even if a disciplined stay-and-hold posture could weather the storm. This happened to Arthur Andersen during Enron's collapse, and a 2006 EU report recognized the risk to other corporations.

Each partner at a huge firm knows how much dirty laundry they've buried in the company's garden, and they have well-founded suspicions about what other partners have buried, too. When someone digs, everyone runs.

If a firm confronts substantial litigation damages or enforcement penalties, it could trigger the collapse of one of the Big Four. That would be bad news for the firm's clients, who would have trouble finding another big auditor.

Most of the world's auditing capacity is concentrated in four enormous, brittle, opaque, compromised organizations. If one of them goes bankrupt, the other three won't be able to take on its clients.

Peterson: Another collapse would strand many of the world's large public businesses, leaving them unable to obtain audit views for their securities listings and regulatory compliance.

Count Down: The Past, Present, and Uncertain Future of the Big Four Accounting Firms is in its second edition.

https://www.emerald.com/insight/publication/doi/10.1108/9781787147003

Stephen Moore

3 years ago

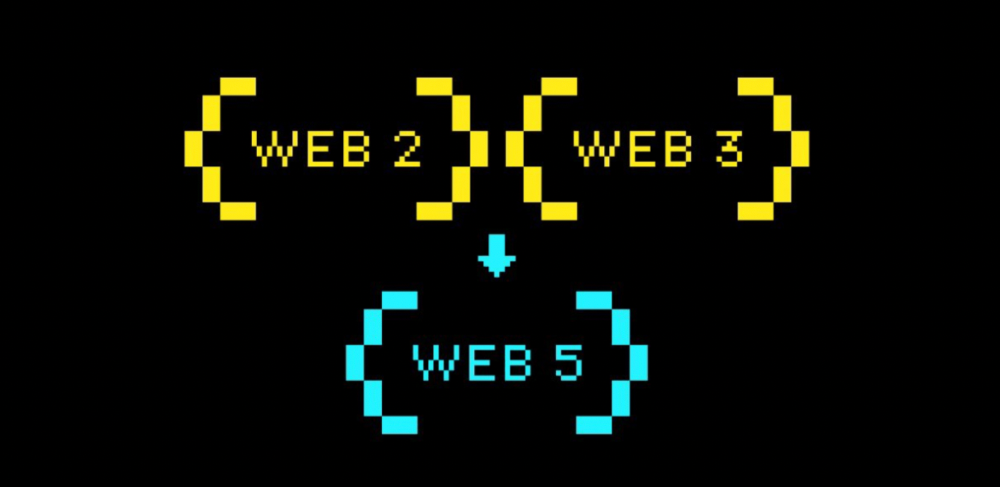

Web 2 + Web 3 = Web 5.

Monkey jpegs and shitcoins have tarnished Web3's reputation. Let’s move on.

Web3 was called "the internet's future."

Well, 'crypto bros' shouted about it loudly.

As quickly as it arrived to be the next internet, it appears to be dead. It's had scandals, turbulence, and crashes galore:

Web 3.0's cryptocurrencies have crashed. Bitcoin's all-time high was $66,935. This month, Ethereum fell from $2130 to $1117. Six months ago, the cryptocurrency market peaked at $3 trillion. Worst is likely ahead.

Gas fees make even the simplest Web3 blockchain transactions unsustainable.

Terra, Luna, and other dollar pegs collapsed, hurting crypto markets. Celsius, a crypto lender backed by VCs and Canada's second-largest pension fund, and Binance, a crypto marketplace, have withheld money and coins. They're near collapse.

NFT sales are falling rapidly and losing public interest.

Web3 has few real-world uses, like most crypto/blockchain technologies. Web3's image has been tarnished by monkey profile pictures and shitcoins while failing to become decentralized (the whole concept is controlled by VCs).

The damage seems irreparable, leaving Web3 in the gutter.

Step forward our new saviour — Web5

Fear not though, as hero awaits to drag us out of the Web3 hellscape. Jack Dorsey revealed his plan to save the internet quickly.

Dorsey has long criticized Web3, believing that VC capital and silicon valley insiders have created a centralized platform. In a tweet that upset believers and VCs (he was promptly blocked by Marc Andreessen), Dorsey argued, "You don't own "Web3." VCs and LPs do. Their incentives prevent it. It's a centralized organization with a new name.

Dorsey announced Web5 on June 10 in a very Elon-like manner. Block's TBD unit will work on the project (formerly Square).

Web5's pitch is that users will control their own data and identity. Bitcoin-based. Sound familiar? The presentation pack's official definition emphasizes decentralization. Web5 is a decentralized web platform that enables developers to write decentralized web apps using decentralized identifiers, verifiable credentials, and decentralized web nodes, returning ownership and control over identity and data to individuals.

Web5 would be permission-less, open, and token-less. What that means for Earth is anyone's guess. Identity. Ownership. Blockchains. Bitcoin. Different.

Web4 appears to have been skipped, forever destined to wish it could have shown the world what it could have been. (It was probably crap.) As this iteration combines Web2 and Web3, simple math and common sense add up to 5. Or something.

Dorsey and his team have had this idea simmering for a while. Daniel Buchner, a member of Block's Decentralized Identity team, said, "We're finishing up Web5's technical components."

Web5 could be the project that decentralizes the internet. It must be useful to users and convince everyone to drop the countless Web3 projects, products, services, coins, blockchains, and websites being developed as I write this.

Web5 may be too late for Dorsey and the incoming flood of creators.

Web6 is planned!

The next months and years will be hectic and less stable than the transition from Web 1.0 to Web 2.0.

Web1 was around 1991-2004.

Web2 ran from 2004 to 2021. (though the Web3 term was first used in 2014, it only really gained traction years later.)

Web3 lasted a year.

Web4 is dead.

Silicon Valley billionaires are turning it into a startup-style race, each disrupting the next iteration until they crack it. Or destroy it completely.

Web5 won't last either.