More on Personal Growth

Neeramitra Reddy

3 years ago

The best life advice I've ever heard could very well come from 50 Cent.

He built a $40M hip-hop empire from street drug dealing.

50 Cent was nearly killed by 9mm bullets.

Before 50 Cent, Curtis Jackson sold drugs.

He sold coke to worried addicts after being orphaned at 8.

Pursuing police. Murderous hustlers and gangs. Unwitting informers.

Despite his hard life, his hip-hop career was a success.

An assassination attempt nearly ended his career at the start.

What sane producer would want to deal with a man entrenched in crime?

Most would have drowned in self-pity and drunk themselves to death.

But 50 Cent isn't most people. Life on the streets had given him fearlessness.

“Having a brush with death, or being reminded in a dramatic way of the shortness of our lives, can have a positive, therapeutic effect. So it is best to make every moment count, to have a sense of urgency about life.” ― 50 Cent, The 50th Law

50 released a series of mixtapes that caught Eminem's attention and earned him a $50 million deal!

50 Cent turned death into life.

Things happen; that is life.

We want problems solved.

Every human has problems, whether it's Jeff Bezos swimming in his billions, Obama in his comfortable retirement home, or Dan Bilzerian with his hired bikini models.

All problems.

Problems churn through life. Solve one, and another appears.

It's harsh. Life's unfair. We can face reality or run from it.

The latter will worsen your issues.

“The firmer your grasp on reality, the more power you will have to alter it for your purposes.” — 50 Cent, The 50th Law

In a fantasy-obsessed world, 50 Cent loves reality.

Wish for better problem-solving skills rather than problem-free living.

Don't wish, work.

We All Have the True Power of Alchemy

Humans are arrogant enough to think the universe cares about them.

That things happen as if the universe notices our nanosecond existences.

Things simply happen. Period.

By changing our perspective, we can turn bad things good.

The alchemists' search for the philosopher's stone may have symbolized the ability to turn our lead-like perceptions into gold.

Negativity bias tints our perceptions.

Normal sparring broke your elbow? Rest and rethink your training. Fired? You can improve your skills and get a better job.

Consider Curtis if he had fallen into despair.

The legend we call 50 Cent wouldn’t have existed.

The Best Lesson in Life Ever?

Neither avoid nor fear your reality.

That simple sentence contains every self-help tip and life lesson on Earth.

When reality is all there is, why fear it? Why avoid it?

Or worse, fleeing?

To accept reality, we must eliminate the words should be, could be, wish it were, and hope it will be.

It is. Period.

Only by accepting reality's chaos can you shape your life.

“Behind me is infinite power. Before me is endless possibility, around me is boundless opportunity. My strength is mental, physical and spiritual.” — 50 Cent

Hudson Rennie

3 years ago

My Work at a $1.2 Billion Startup That Failed

Sometimes doing everything correctly isn't enough.

In 2020, I could fix my life.

After failing to start a business, I owed $40,000 and had no work.

A $1.2 billion startup on the cusp of going public pulled me up.

Ironically, it was getting ready for an epic fall — with the world watching.

Life sometimes helps. Without a base, even the strongest fall. A corporation that did everything right failed 3 months after going public.

First-row view.

Adore was founded by former Apple executives.

Out of respect, I've altered the company and employees' names in this account, despite their failure.

Although, since it was a publicly traded company, its identity may become obvious.

We’ll call it “Adore” — a revolutionary concept in retail shopping.

Two Apple execs established Adore in 2014 with a focus on people-first purchasing.

Jon and Tim:

The concept for the stylish Apple retail locations you see today was developed by retail expert Jon Swanson, who collaborated closely with Steve Jobs.

Tim Cruiter is a graphic designer who produced the recognizable bouncing lamp video that appears at the start of every Pixar film.

The dynamic duo realized their vision.

“What if you could combine the convenience of online shopping with the confidence of the conventional brick-and-mortar store experience?”

Adore's mobile store concept combined traditional retail with online shopping.

Adore brought joy to 70+ cities and 4 countries over 7 years, including the US, Canada, and the UK.

Being employed on the ground floor, with world dominance and IPO on the horizon, was exciting.

I started as an Adore Expert.

I delivered cell phones, helped consumers set them up, and sold add-ons.

As the company grew, I became a Virtual Learning Facilitator and trained new employees across North America using Zoom.

In this capacity, I gained corporate insider knowledge. I worked with the creative team and Jon and Tim.

It's where I saw fissures in the company's foundation. Despite appearances, investors were concerned.

The business strategy was ground-breaking.

Even after seeing my employee stocks fall from a home down payment to $0 (when Adore filed for bankruptcy), it's hard to pinpoint what went wrong.

Solid business model, well-executed.

Jon and Tim's chase for public funding ended in glory.

Here’s the business model in a nutshell:

Buying cell phones is cumbersome. You have two choices:

Buy online, not knowing what plan you require or how to operate your device.

Visit a store, which can be troublesome and stressful.

Apple, AT&T, and Rogers offered Adore as a free delivery add-on. Customers could:

Have their phone delivered by UPS or Canada Post in 1-2 weeks.

Alternatively, arrange for a person to visit them the same day (or sometimes even the same hour) to help them set up their phone and demonstrate how to use it (transferring contacts, switching the SIM card, etc.).

Each Adore Expert brought a van with extra devices and accessories to customers.

Happy customers.

Here’s how Adore and its partners made money:

Adore's partners appreciated sending Experts to consumers' homes since the visits improved customer satisfaction, average sale size, and gadget return rates.

Telecom enterprises have low customer satisfaction. The average NPS is 30/100. Adore's global NPS was 80.

Adore made money by:

a set cost for each delivery

commission on sold warranties and extras

Consumer product applications seemed infinite.

A proprietary scheduling system (“The Adore App”) allowed for same-day, even same-hour deliveries.

It differentiated Adore.

They treated staff generously by:

Stock options

Health benefits

Sales incentives

High hourly rates

Experts set their own four-day workweeks.

Being hired early felt like joining Uber, Netflix, or Tesla. We hoped the company's stocks would rise.

Exciting times.

I smiled as I greeted more than 1,000 new staff.

I spent a decade in retail before joining Adore. I needed a change.

After a leap of faith, I needed a lifeline. So, I applied for retail sales jobs in the spring of 2019.

The universe typically offers you what you want after you accept what you need. I needed a job to settle my debt and reach $0 again.

And the universe listened.

After being hired as an Adore Expert, I became a Virtual Learning Facilitator. Enough said.

After weeks of economic damage from the pandemic.

This employment let me work from home during the pandemic. It taught me excellent business skills.

I was active in brainstorming, onboarding new personnel, and expanding communication as we grew.

This job gave me vital skills and a regular paycheck during the pandemic.

It wasn’t until January of 2022 that I left of my own accord to try to work for myself again. This time, it’s going much better.

Adore was perfect. We valued:

Connection

Discovery

Empathy

Everything we did centered on compassion, and we held frequent Justice Calls to discuss diversity and work culture.

The last day of onboarding typically ended in tears as employees felt like they'd found a home, as I had.

Like all nice things, the wonderful vibes ended.

First indication of distress

My first day at the workplace was great.

It was fun and intuitive, and they wanted creative individuals, not salespeople.

While sales were important, the company's vision was more important.

“To deliver joy through life-changing mobile retail experiences.”

Thorough, forward-thinking training. We had a module on intuition. It gave us role ownership.

We were flown cross-country for training, gave feedback, and felt like we made a difference. Multiple contacts responded immediately and enthusiastically.

The atmosphere was genuine.

Making money was secondary, though. Incredible service was a priority.

Jon and Tim answered new hires' questions during Zoom calls during onboarding. CEOs seldom meet new hires this way, but they seemed to enjoy it.

All appeared well.

But in late 2021, things started changing.

Adore's leadership changed after its IPO. From basic values to sales maximization. We lost communication and were forced to fend for ourselves.

Removed the training wheels.

It got tougher to get direction from those above me, and new employees told me their roles weren't as advertised.

External money-focused managers were hired.

Instead of creative types, we hired salespeople.

With a new focus on numbers, Adore's uniqueness began to crumble.

Via Zoom, hundreds of workers were let go.

So.

Early in 2022, mass Zoom firings were trending. A CEO firing 900 workers over Zoom went viral.

Adore was special to me, but it became a headline.

On 30 June 2022, Vice Motherboard published “Watch as Adore's CEO Fires Hundreds.”

It described a leaked video of Jon Swanson laying off all staff in Canada and the UK.

They called it a “notice of redundancy”.

The corporation couldn't pay its employees.

I loved Adore's underlying ideals, among other things. We called clients Adorers and sold solutions, not add-ons.

But, like anything, a company is only as strong as its weakest link. And obviously, the people-first focus wasn’t making enough money.

There were signs. The expansion was presumably a race against time and money.

Adore finally declared bankruptcy.

Adore declared bankruptcy 3 months after going public. It happened in waves, like any large-scale fall.

First, key players left.

Then, communication deteriorated.

Lastly, the corporate culture disintegrated.

6 months after leaving Adore, I received a letter in the mail from a law firm. It was about my stocks.

Adore filed Chapter 11. I had to sue to collect my worthless investments.

I hoped those stocks would be valuable someday. Nope.

Sad, I sighed.

$1.2 billion firm gone.

I left the workplace 3 months before starting a writing business. Despite being mediocre, I'm doing fine.

I got up as Adore fell.

Finally, can we scale kindness?

I trust my gut. Changes at Adore made me leave before it sank.

Adore's unceremonious slide from a top startup to bankruptcy is astonishing to me.

The company did everything perfectly, in my opinion.

Was first to market

Provided excellent service

Paid its staff handsomely

Was responsible and attentive to criticism

The company wasn't led by an egotistical eccentric. The team had centuries of cumulative experience in the space.

I'm optimistic about the future of work culture, but is compassion scalable?

Khyati Jain

3 years ago

By Engaging in these 5 Duplicitous Daily Activities, You Rapidly Kill Your Brain Cells

No, it’s not smartphones, overeating, or sugar.

Everyday practices affect brain health. Good brain practices increase memory and cognition.

Bad behaviors increase stress, which destroys brain cells.

Bad behaviors can reverse evolution and diminish the brain. So, avoid these practices for brain health.

1. The silent assassin

Introverts appreciated quarantine.

Before the pandemic, they needed excuses to remain home; once it began, they had plenty.

I am an introvert, and I didn’t hate quarantine. There are billions of people like me who avoid people.

Social relationships are important for brain health. Social anxiety harms your brain.

Antisocial behavior changes brains. It lowers IQ and increases drug abuse risk.

What you can do is as follows:

Make a daily commitment to engage in conversation with a stranger. Who knows, they might turn out to be your soulmate.

Get outside for at least 30 minutes each day.

Shop for food locally rather than online.

Make a call to a friend you haven't spoken to in a while.

2. Try not to rush things.

People love hustle culture. This economy requires a side gig to save money.

Long hours reduce brain health. A side gig is great until you burn out.

Work ages your wallet and intellect. Overworked brains age faster and lose cognitive function.

Working longer hours can help you make extra money, but it can harm your brain.

Side hustle but don't overwork.

What you can do is as follows:

Set an hour after which you are not permitted to work.

Turn off your laptop three hours before bedtime.

Put down your phone and work.

Assign due dates to each task.

3. Location is everything!

The environment may cause brain fog. High pollution can cause brain damage.

Air pollution raises Alzheimer's risk. Air pollution causes cognitive and behavioral abnormalities.

Polluted air can trigger early development of incurable brain illnesses, not simply lung harm.

You can't control your city's air quality, but you can take steps to improve the air you breathe.

In Delhi, schools and colleges are closed to protect pupils from polluted air. So I've adapted.

What you can do is as follows:

To keep your mind healthy and young, make an investment in a high-quality air purifier.

Keep your windows closed during the day.

Use an N95 mask every day.

4. Don't skip this meal.

Intermittent fasting is trendy. Delaying breakfast to complete a fast is common.

Some skip breakfast and have a hefty lunch instead.

Skipping breakfast might affect memory and focus. Skipping breakfast causes low cognition, delayed responsiveness, and irritation.

Breakfast affects mood and productivity.

Intermittent fasting doesn't prevent healthy breakfasts.

What you can do is as follows:

Try to fast for 14 hours, then break it with a nutritious breakfast.

So that you can have breakfast in the morning, eat dinner early.

Make sure your breakfast is high in fiber and protein.

5. The quickest way to damage the health of your brain

Brain health requires water. 1% dehydration can reduce cognitive ability by 5%.

Brain fog and reduced mental clarity might result from 2% dehydration. Dehydration shrinks brain cells.

Dehydration causes midday slumps and unproductivity. Water improves work performance.

Dehydration can harm your brain, so drink water throughout the day.

What you can do is as follows:

Always keep a water bottle at your desk.

Enjoy some tasty herbal teas.

With a big glass of water, begin your day.

Bring your own water bottle when you travel.

Conclusion

Bad habits can harm brain health. Low cognition reduces focus and productivity.

Unproductive work leads to procrastination, failure, and low self-esteem.

Avoid these harmful habits to optimize brain health and function.

You might also like

Hudson Rennie

3 years ago

Meet the $5 million monthly controversy-selling King of Toxic Masculinity.

Trigger warning — Andrew Tate is running a genius marketing campaign

Andrew Tate is a 2022 internet celebrity.

The kickboxing world champion became a rich playboy with controversial views on gender roles.

Andrew's get-rich-quick scheme isn't new. His social media popularity is impressive.

He’s currently running one of the most genius marketing campaigns in history.

He pulls society's pendulum away from diversity and inclusion and toward diversion and exclusion. He's unstoppable.

Here’s everything you need to know about Andrew Tate. And how he’s playing chess while the world plays checkers.

Cobra Tate is the name he goes by.

American-born, English-raised entrepreneur Andrew Tate lives in Romania.

Why Romania? Andrew says,

“I prefer a country in which corruption is available to everyone.”

Andrew was a professional kickboxer with the ring moniker Cobra before starting Hustlers University.

Before that, he liked chess and worshipped his father.

Emory Andrew Tate III is named after his grandmaster chess player father.

Emory was the first black-American chess champion. He was military, martial arts-trained, and multilingual. A superhuman.

He lived in his car to make ends meet.

Andrew and Tristan relocated to England with their mother when their parents split.

It was there that Andrew began his climb toward becoming one of the internet’s greatest villains.

Andrew fell in love with kickboxing.

Andrew spent his 20s as a professional kickboxer and reality TV star, featuring on Big Brother UK and The Ultimate Traveller.

These 3 pursuits, along with a chip on his shoulder, foreshadowed Andrew's social media breakthrough.

Chess

Combat sports

Reality television

A dangerous trio.

Andrew started making money online after quitting kickboxing in 2017 due to an eye issue.

Andrew didn't suddenly become popular.

Andrew's web work started going viral in 2022.

Due to his contentious views on patriarchy and gender norms, he's labeled the King of Toxic Masculinity. His most contentious views (trigger warning):

“Women are intrinsically lazy.”

“Female promiscuity is disgusting.”

“Women shouldn’t drive cars or fly planes.”

“A lot of the world’s problems would be solved if women had their body count tattooed on their foreheads.”

Andrew's two main beliefs are:

1. “These are my personal opinions based on my experiences.”

2. “I believe men are better at some things and women are better at some things. We are not equal.”

Andrew intentionally offends.

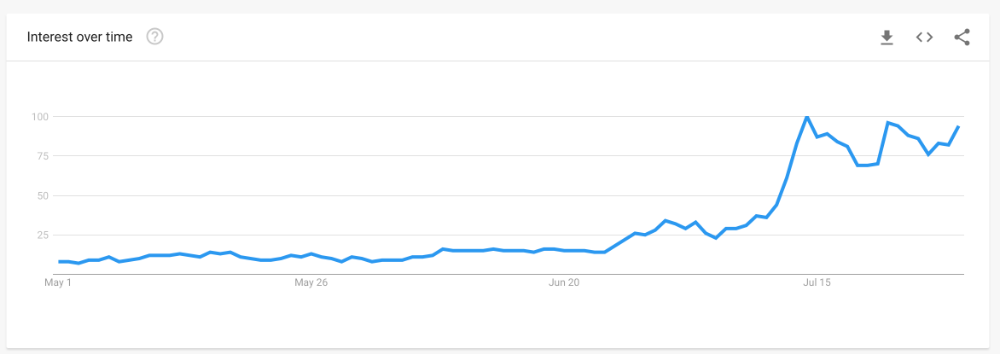

Andrew's thoughts began circulating online in 2022.

In July 2022, he was one of the most Googled humans, surpassing:

Joe Biden

Donald Trump

Kim Kardashian

Andrew's rise is a mystery since no one can censor or suppress him. This is largely because neither Andrew nor his team posts his clips.

But more on that later.

Andrew's path to wealth.

Andrew Tate is a self-made millionaire. His morality is uncertain.

Andrew and Tristan needed money soon after retiring from kickboxing.

“I owed some money to some dangerous people. I had $70K and needed $100K to stay alive.”

Andrew lost $20K on roulette at a local casino.

Andrew had one week to make $50,000, so he started planning. Andrew locked himself in a room like Thomas Edison to solve an energy dilemma.

He listed his assets.

Physical strength (but couldn’t fight)

a BMW (worth around $20K)

Intelligence (but no outlet)

A lightbulb.

He had an epiphany after viewing a webcam ad. He sought aid from women, ironically. His 5 international girlfriends were assets.

Then, a lightbulb.

Andrew and Tristan messaged and flew 7 women to a posh restaurant. Selling desperation masked as opportunity, Andrew pitched his master plan:

A webcam business — with a 50/50 revenue split.

5 women left.

2 stayed.

Andrew Tate, a broke kickboxer, became Top G, Cobra Tate.

The business model was simple — yet sad.

Andrew's girlfriends moved in with him and spoke online for 15+ hours a day. Andrew handled ads and equipment as the women posed.

Andrew eventually took over their keyboards, believing he knew what men wanted more than women.

Andrew detailed on the Full Send Podcast how he emotionally manipulated men for millions. They sold houses, automobiles, and life savings to fuel their companionship addiction.

When asked if he felt bad, Andrew said,

“F*ck no.”

Andrew and Tristan wiped off debts, hired workers, and diversified.

Tristan supervised OnlyFans models.

Andrew bought Romanian casinos and MMA league RXF (Real Xtreme Fighting).

Pandemic struck suddenly.

Andrew couldn't run his 2 businesses without a plan. Another easy moneymaker.

He banked on Hustlers University.

The actual cause of Andrew's ubiquity.

On a Your Mom’s House episode, Andrew listed his 4 main revenue sources:

1. Hustler’s University

2. Owning casinos in Romania

3. Owning 10% of the Romanian MMA league “RXF”

4. “The War Room” — a society of rich and powerful men

When the pandemic hit, 3/4 became inoperable.

So he expanded Hustlers University.

But what is Hustler’s University?

Andrew says Hustlers University teaches 18 wealth-building tactics online. Examples:

Real estate

Copywriting

Amazon FBA

Dropshipping

Flipping Cryptos

How to swiftly become wealthy.

Lessons are imprecise, rudimentary, and macro-focused, say reviews. Invest wisely, etc. Everything is free online.

You pay for community. One unique income stream.

The only money-making mechanism that keeps the course from being a scam.

The truth is, many of Andrew’s students are actually making money. Maybe not from the free YouTube knowledge Andrew and his professors teach in the course, but through Hustler’s University’s affiliate program.

Affiliates earn 10% commission for each new student = $5.

Students can earn $10 for each new referral in the first two months.

Andrew earns $50 per membership per month.

This affiliate program isn’t anything special — in fact, it’s on the lower end of affiliate payouts. Normally, it wouldn’t be very lucrative.

But it has one secret weapon— Andrew and his viral opinions.

Andrew is viral. Andrew went on a media tour in January 2022 after appearing on Your Mom's House.

And many, many more…

He chatted with Twitch streamers. Hustlers University wanted more controversy (and clips).

Here’s the strategy behind Hustler’s University that has (allegedly) earned students upwards of $10K per month:

Make a social media profile with Andrew Tate's name and photo.

Post any of Andrew's online videos that have gone viral.

Include a referral link in your bio.

Effectively simple.

Andrew's controversy attracts additional students. More student clips circulate as more join. Andrew's students earn more and promote the product as he goes viral.

A brilliant plan that's functioning.

At the beginning of his media tour, Hustler’s University had 5,000 students. 6 months in, and he now has over 100,000.

One income stream generates $5 million every month.
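The numbers quoted above can be sanity-checked with a few lines of Python. This is only a rough sketch: the $50 monthly fee, the 10% commission, and the 100,000-student count come from the figures in this article, while the function names are made up for illustration and the calculation assumes every student pays the full fee every month.

```python
# Figures quoted in the article; the names below are illustrative only.
MONTHLY_FEE_USD = 50       # membership price per student per month
COMMISSION_PERCENT = 10    # affiliate cut of a new student's fee
STUDENTS = 100_000         # student count six months into the media tour


def affiliate_commission(fee_usd, commission_percent):
    """Dollars an affiliate earns for one referred student."""
    return fee_usd * commission_percent / 100


def monthly_revenue(students, fee_usd):
    """Gross monthly revenue, assuming every student pays the full fee."""
    return students * fee_usd


if __name__ == "__main__":
    commission = affiliate_commission(MONTHLY_FEE_USD, COMMISSION_PERCENT)
    revenue = monthly_revenue(STUDENTS, MONTHLY_FEE_USD)
    print(f"Commission per referral: ${commission:.2f}")
    print(f"Gross monthly revenue: ${revenue:,}")
```

Under those assumptions the $5 commission and the $5 million monthly figure both follow directly from the $50 fee. Real revenue would be lower once commissions, refunds, and cancellations are taken out.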

Andrew's approach is not new.

But it is different.

In the early 2010s, Tai Lopez dominated the internet.

His viral video showed his house.

“Here in my garage. Just bought this new Lamborghini.”

Tai's marketing focused on intellect, not strength, power, and wealth to attract women.

How reading quicker leads to financial freedom in 67 steps.

Years later, it was revealed that Tai Lopez rented the mansion and Lamborghini as a marketing ploy to build social proof. Meanwhile, he was living in his friend’s trailer.

Faked success is an old tactic.

Andrew is doing something similar. But with one major distinction.

Andrew outsources his virality — making him nearly impossible to cancel.

In 2022, authorities searched Andrew's estate over human trafficking suspicions. Investigation continues despite withdrawn charges.

Andrew's divisive nature would normally get him canceled. Andrew's enterprises and celebrity don't rely on social media.

He doesn't promote or pay for ads. Instead, he encourages his students and anyone wishing to get rich quick to advertise his work.

Because everything goes through his affiliate program. Old saying:

“All publicity is good publicity.”

Final thoughts: it’s ok to feel triggered.

Tate is divisive.

His emotionally charged words are human nature. Andrew created the controversy.

It's non-personal.

His opinions are those of one person. They are neither the world's nor a generation's opinion.

Briefly:

It's easy to understand why Andrew's face is ubiquitous. Money.

The world wide web is a chessboard. Misdirection is part of it.

It’s not personal, it’s business.

Controversy sells

Sometimes understanding the ‘why’ can help you deal with the ‘what.’

Jess Rifkin

3 years ago

As the world watches the Russia-Ukraine border situation, this bill would bar aid to Ukraine until the Mexican border is secured.

Although Mexico and Ukraine are thousands of miles apart, this legislation would link their responses.

Context

Ukraine was a Soviet republic until 1991. A significant proportion of the population, particularly in the east, is ethnically Russian. In February, the Russian military invaded Ukraine, intent on overthrowing its democratically elected government.

This could be the biggest European land invasion since WWII. In response, President Joe Biden sent 3,000 troops to NATO countries bordering Ukraine to help with Ukrainian refugees, with more troops possible if the situation worsened.

In July 2021, the US Border Patrol reported its highest monthly encounter total since March 2000. Some Republicans compare Biden's response to the Mexican border situation to his response to the Ukrainian border situation, though the correlation is unclear.

What the bills do

Two new Republican bills seek to link the US response to Ukraine to the situation in Mexico.

The Secure America's Borders First Act would prohibit federal funding for Ukraine until the US-Mexico border is “operationally controlled,” including a wall as promised by former President Donald Trump. (The bill even mandates a 30-foot-high wall.)

The USB (Ukraine and Southern Border) Act, introduced on February 8 by Rep. Matt Rosendale (R-MT0), would allow the US to support Ukraine, but only if the number of Armed Forces deployed there is less than the number deployed to the Mexican border. Rep. Madison Cawthorn (R-NC11) introduced H.R. 6665 on February 9.

What backers say

Supporters argue that even if the US should militarily assist Ukraine, our own domestic border situation should take precedence.

“After failing to secure our own border and protect our own territorial integrity, ‘America Last' politicians on both sides of the aisle now tell us that we must do so for Ukraine. Before rushing America into another foreign conflict over an Eastern European nation's border thousands of miles from our shores, they should first secure our southern border.”

“If Joe Biden truly cared about Americans, he would prioritize national security over international affairs,” Rep. Cawthorn said in a separate press release. “The least we can do to secure our own country is send the same number of troops to the US-Mexico border to assist our border patrol agents working diligently to secure America.”

What opponents say

The president has defended his Ukraine and Mexico policies, stating that both seek peace and diplomacy.

“Our nations [the US and Mexico] have a long and complicated history, and we haven't always been perfect neighbors, but we have seen the power and purpose of cooperation,” Biden said in 2021. “We're safer when we work together, whether it's to manage our shared border or stop the pandemic. [In both the Obama and Biden administration], we made a commitment that we look at Mexico as an equal, not as somebody who is south of our border.”

“No mistake: If Russia goes ahead with its plans, it will be responsible for a catastrophic and unnecessary war of choice. To protect our collective security, the United States and our allies are ready to defend every inch of NATO territory. We won't send troops into Ukraine, but we will continue to support the Ukrainian people... But, I repeat, Russia can choose diplomacy. It is not too late to de-escalate and return to the negotiating table.”

Odds of passage

The Secure America's Borders First Act has nine Republican sponsors. Either the House Armed Services or Foreign Affairs Committees may vote on it.

Rep. Paul Gosar (R-AZ4) co-sponsored the USB Act. The House Armed Services Committee may vote on it.

With Democrats in control of the House, passage is unlikely.

Sarah Bird

3 years ago

Memes Help This YouTube Channel Earn Over $12k Per Month

Take a look at a YouTube channel making up to $12k a month or more from very simple videos.

And the best part? It's replicable by anyone. Basic videos can be generated for free without design abilities.

Join me as I deconstruct the channel to estimate how much they make, how they do it, and how you can too.

What Do They Do Exactly?

Happy Land posts memes with a simple caption they wrote. So, it's new. The videos are a slideshow of meme photos with stock music.

The channel posts 12 times a day.

Each 8-10-minute video shows every image for 10 seconds. Thus, each video needs 48-60 memes.

Video titles name the meme theme (e.g. times a boyfriend was hilarious, back to school fails, funny restaurant signs).
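If you want to plan your own videos, the meme count is just the running time divided by the time each image stays on screen. Here's a minimal sketch of that arithmetic; the function name and its default are my own, and it assumes every image is shown for the same length of time with no intro or outro.

```python
SECONDS_PER_IMAGE = 10  # display time per meme, as described for this channel


def memes_needed(video_minutes, seconds_per_image=SECONDS_PER_IMAGE):
    """Number of images required to fill a video of the given length."""
    return (video_minutes * 60) // seconds_per_image


if __name__ == "__main__":
    for minutes in (8, 10):
        print(f"{minutes}-minute video: {memes_needed(minutes)} memes")
```

Changing `seconds_per_image` shows how quickly the workload grows: at 5 seconds per image, a 10-minute video would need twice as many memes.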

Some stats about the channel:

Founded on October 30, 2020

873 videos uploaded

81.8k subscribers

67,244,196 video views
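One figure these stats don't show directly is how many views a typical upload gets. A quick back-of-the-envelope sketch, using only the two totals listed above (the function name is hypothetical, and a plain average hides the fact that a few videos probably account for most of the views):

```python
TOTAL_VIEWS = 67_244_196   # lifetime channel views from the stats above
TOTAL_VIDEOS = 873         # videos uploaded


def average_views_per_video(total_views, total_videos):
    """Mean views per upload; says nothing about how views are distributed."""
    return total_views / total_videos


if __name__ == "__main__":
    average = average_views_per_video(TOTAL_VIEWS, TOTAL_VIDEOS)
    print(f"Average views per video: {average:,.0f}")
```

That works out to roughly 77,000 views per video, which helps explain why a quantity-first approach can pay off.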

What Value Are They Adding?

Everyone can find free memes online. This channel collects similar memes into a single video so you don't have to scroll or click for more. It’s right there, you just keep watching and more will come.

By theming it, the audience is prepared for the video's content.

If you want hilarious animal memes or restaurant signs, choose the video and you'll get up to 60 memes without having to look for them. Genius!

How much money do they make?

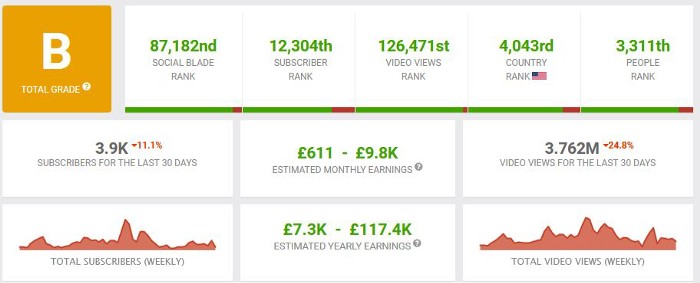

According to www.socialblade.com, the channel earns $800-$12.8k per month (image shown in my home currency of GBP).

That's a crazy estimate, but it highlights the unbelievable potential of a channel that presents memes.

This channel thrives on quantity, thus putting out videos is necessary to keep the flow continuing and capture its audience's attention.

How Are the Videos Made?

Straightforward. Memes are added to a presentation without editing (so you could make this in PowerPoint or Keynote).

Each slide should include a unique image and caption. Set 10 seconds per slide.

Add music and post the video.

Finding enough memes for the material and theming is difficult, but if you enjoy memes, this is a fun job.

This case study should have shown you that you don't need expensive software or design expertise to make entertaining videos. Why not try fresh, easy-to-do ideas and see where they lead?