More on Marketing

Tim Denning

3 years ago

I Posted Six Times a Day for 210 Days on Twitter. Here's What Happened.

I'd spend hours composing articles only to find out they were useless. Twitter solved the problem.

Twitter is wrinkled, say critics.

Nope. Writing is different. It won't make sense until you write there.

Twitter is resurgent. People are reading again. 15-second TikToks overloaded our senses.

After nuking my 20,000-follower Twitter account and starting again, I wrote every day for 210 days.

I'll explain.

I came across the strange world of microblogging.

Traditional web writing is filler-heavy.

On Twitter, you must be brief. I played Wordle.

Twitter Threads are the most popular writing format. Like a blog post. It reminds me of the famous broetry posts on LinkedIn a few years ago.

Threads combine tweets into an article.

Sharp, concise sentences

No regard for grammar

As important as the information is how the text looks.

Twitter Threads are like Michelangelo's David. He chipped away at an enormous piece of marble until a man with a big willy appeared.

That's Twitter Threads.

I tried to remove unnecessary layers from several of my Wordpress blog posts. Then I realized something.

Tweeting from scratch is easier and more entertaining. It's quicker and makes you think more concisely.

Superpower: saying a lot with few words. My long-form writing has improved. My article sentences resemble tweets.

You never know what will happen.

Twitter's subcultures are odd. Best-performing tweets are strange.

Unusual trend: working alone and without telling anyone. It's a rebellion against Instagram influencers who share their every moment.

Early on, random thoughts worked:

My friend’s wife is Ukrainian. Her family are trapped in the warzone. He is devastated. And here I was complaining about my broken garage door. War puts everything in perspective. Today is a day to be grateful for peace.

Documenting what's happening triggers writing. It's not about viral tweets. Helping others matters.

There are numerous anonymous users.

Twitter uses pseudonyms.

You don't matter. On sites like LinkedIn, you must use your real name. Welcome to the Cyberpunk metaverse of Twitter :)

One daily piece of writing is a powerful habit.

Habits build creator careers. Read that again.

Twitter is an easy habit to pick up. If you can't tweet in one sentence, something's wrong. Easy-peasy-japanese.

It wasn't what I tweeted but my consistency that made the difference.

Daily writing is challenging, especially if your supervisor is on your back. Twitter encourages writing.

Tweets became the foundation of all my other content.

During my experiment, I enjoyed Twitter's speed.

Tweets get immediate responses, comments, and feedback. My popular tweets become newspaper headlines. I've also written essays from tweet discussions.

Sometimes the tweet and article were clear. Twitter sometimes helped me overcome writer's block.

I used to spend hours composing big things that had little real-world use.

Twitter helped me. No guessing. Data guides my coverage and validates concepts.

Test ideas on Twitter.

It took some time for my email list to grow.

Subscribers are a writer's lifeblood.

Without them, you're broke and homeless when Mark Zuckerberg tweaks the algorithms for ad dollars. Twitter has three ways to obtain email subscribers:

1. Add a link to your bio.

Twitter allows bio links (LinkedIn now does too). My eBook's landing page is linked. I collect emails there.

2. Start an online newsletter.

Twitter bought newsletter app Revue. They promote what they own.

I just set up a Revue email newsletter. I import its subscribers weekly into my ConvertKit email list.

3. Create Twitter threads and include a link to your email list in the final tweet.

Write Twitter Threads and link the last tweet to your email list (example below).

Initial email subscribers were modest.

Numbers are growing. Twitter provides 25% of my new email subscribers. Some days, 50 people join.

Without them, my writing career is over. I'd be back at a 9-5 job begging for time off to spend with my newborn daughter. Nope.

Collect email addresses or die trying.

As insurance against unsubscribes and Zucks, use a second email list or Discord community.

What I still need to do

Twitter's fun. I'm wiser. I need to enable auto-replies and auto-DMs (direct messages).

This adds another way to attract subscribers. I schedule tweets with Tweet Hunter.

It’s best to go slow. People assume you're an internet marketer if you spam them with click requests.

A human internet marketer is preferable to a robot. My opinion.

210 days on Twitter taught me that. I plan to use the platform until I'm a grandfather unless Elon ruins it.

Michael Salim

3 years ago

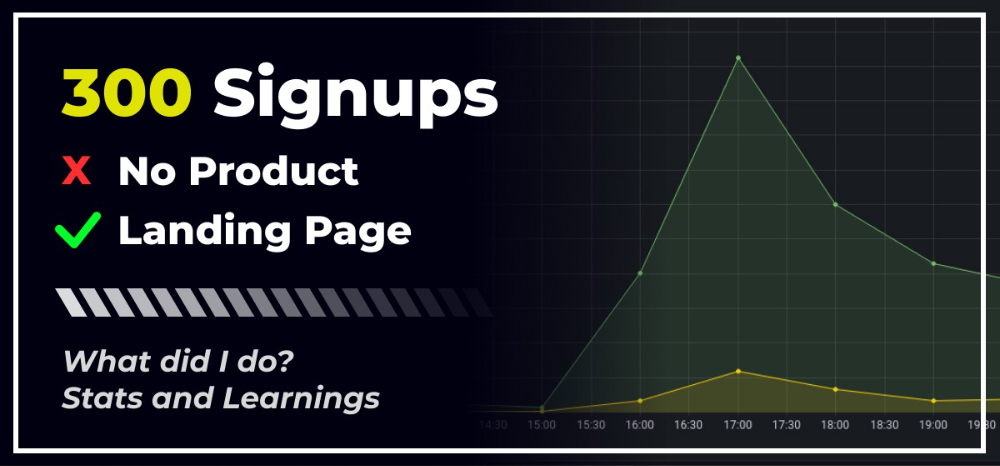

300 Signups, 1 Landing Page, 0 Products

I placed a link on HackerNews and got 300 signups in a week. This post explains what happened.

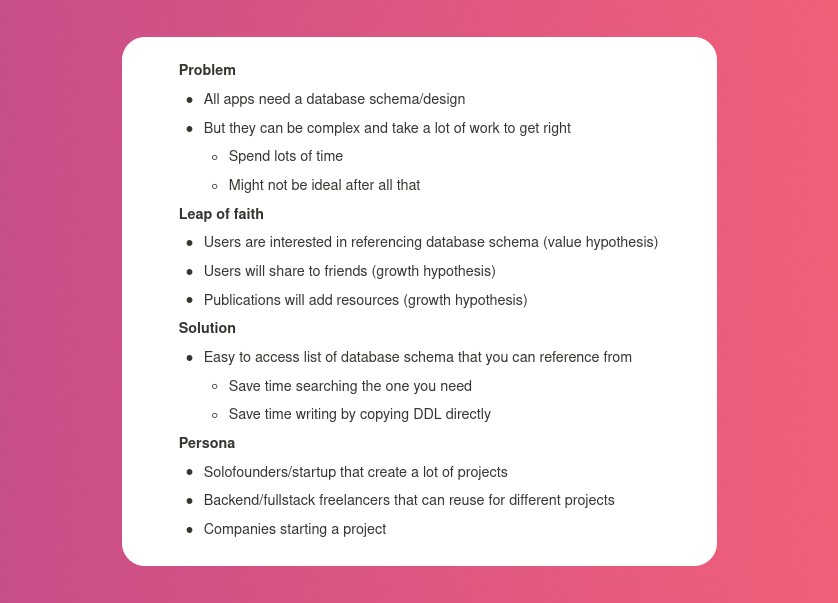

Product Concept

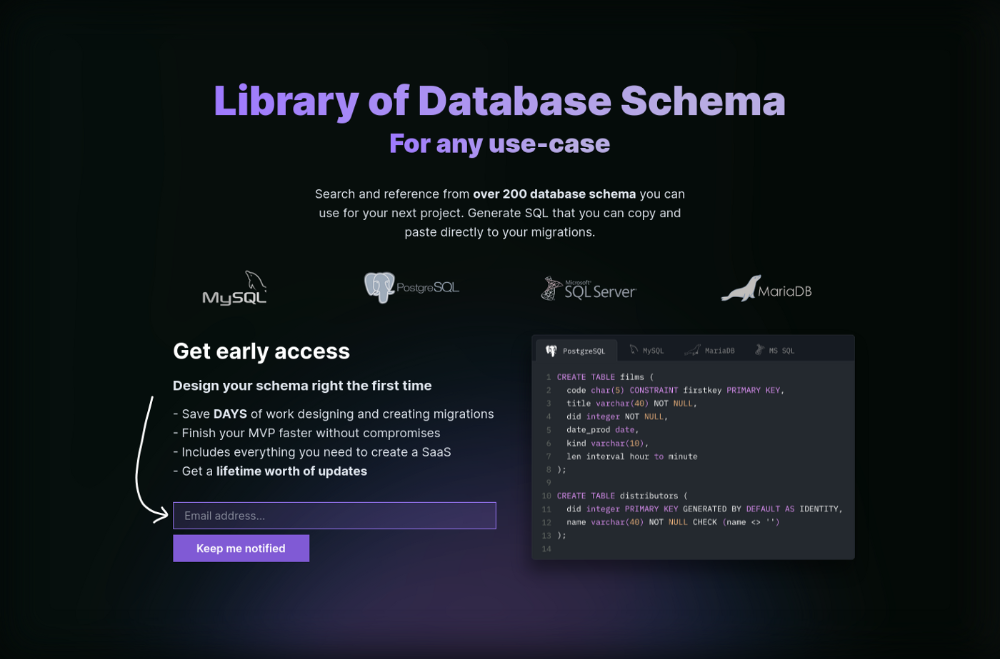

The product is DbSchemaLibrary: a library of database schemas.

I'm not sure where this idea came from. It came together very quickly. The thinking was: build fast, fail fast, test many ideas, and one will be a hit. It would probably fail, but I wanted to try it anyway. I had just finished The Lean Startup and wanted to apply it.

Database work bores me. It's important and someone must do it, but I get drowsy working on it. I remember this happening once. I needed examples at the time: something similar to Recall (my other project) that I could copy, or at least use as a reference.

I googled a lot and had many tabs open, but the results were useless. I threw up my hands and designed the database myself.

Then the problem resurfaced, so I decided to do something about it.

Due Diligence

Lean Startup emphasizes validated learning. Everything the startup does should result in learning. I may build something nobody wants otherwise. That's what happened to Recall.

So, I wrote a business plan document. This happens before I code. What am I solving? What is my proposed solution? What is the leap of faith between the problem and solution? Who would be my target audience?

My note:

In my previous project, I did the opposite!

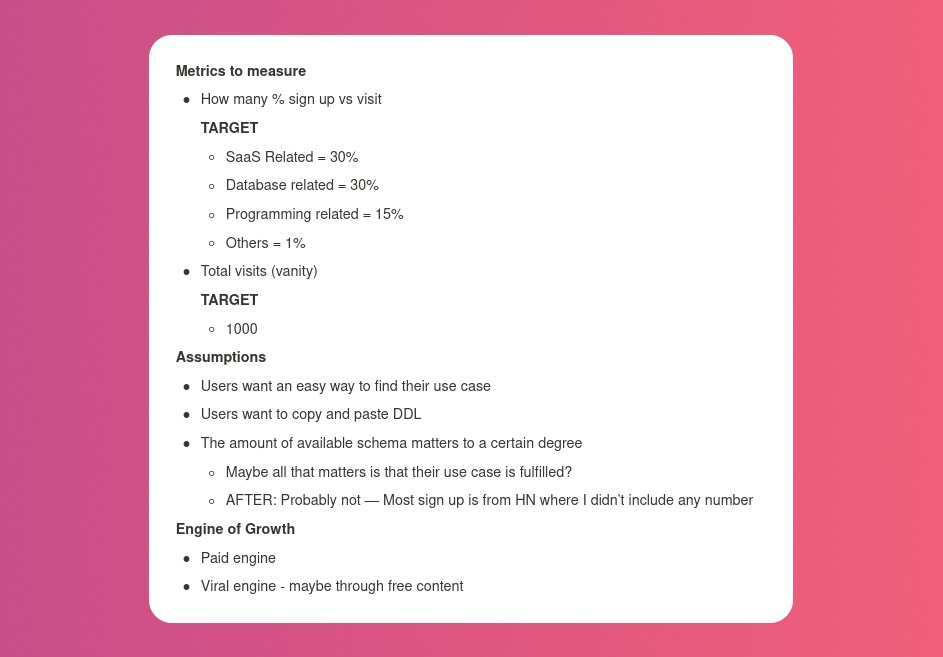

I wrote my expectations after reading the book's advice.

“Failure is a prerequisite to learning. The problem with the notion of shipping a product and then seeing what happens is that you are guaranteed to succeed — at seeing what happens.” — The Lean Startup book

These are my success metrics. If I don't reach them, I'll drop the idea and try another. I had no feel for the numbers then, so the figures below are guesses. But it’s a start!

I then wrote the project's What and Why. I'll use this everywhere. Before, I wrote a different pitch each time. I thought certain words would be better. I felt the audience might want something unusual.

Occasionally, this works. I'm unsure if it's a good idea. I have no stats, just my opinion at the time of writing. Rewriting the pitch every time is time-consuming and sometimes error-prone. Having a saved copy spared me the duplication.

I can measure and learn from performance.

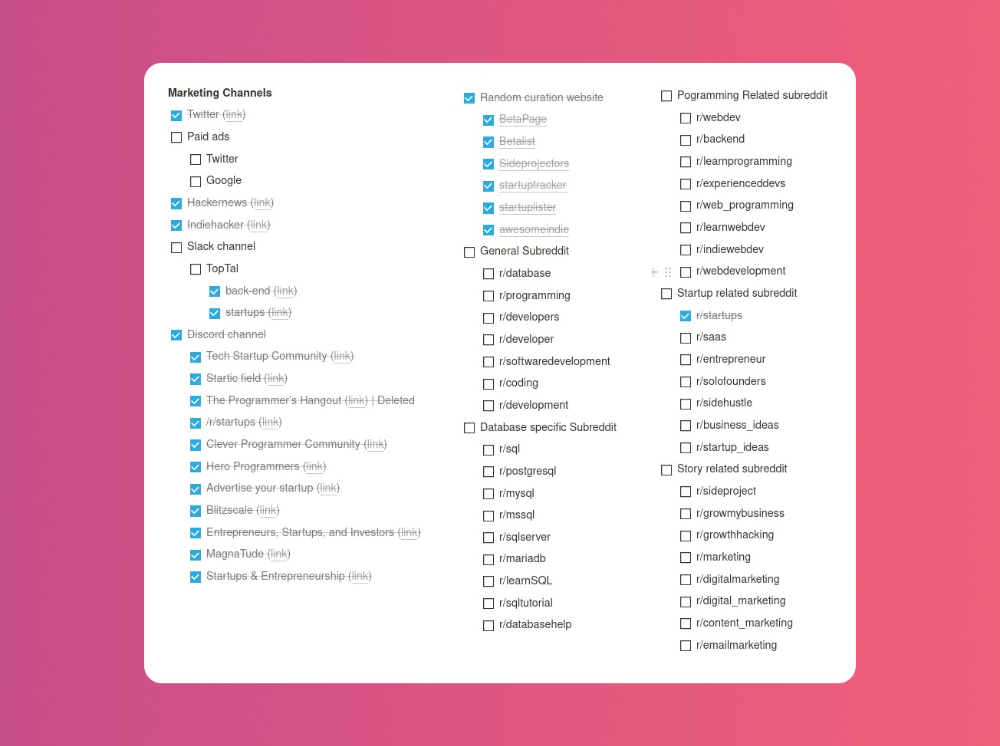

Last, I identified communities that might demand the product. This became an exercise in creativity.

The MVP

So now it’s time to build.

An MVP exists to test my assumptions so the business can learn from it. It doesn't mean low quality. It means the smallest thing we can learn from.

I like the example of how Dropbox did theirs. They assumed that if the product works, people will use it. How can this be tested without a quality product? They made a video demonstrating the software's functionality. Who knows how much functionality existed?

So I tested my biggest assumption. Users want schema references. How can I test if users want to reference another schema? I'd love this. Recall taught me that wanting something doesn't mean others do.

I made a landing page that collects emails. It describes the product briefly: a reference library. Anyone who submits an email wants a reference and is interested in the product. There are few other reasons to sign up.

Header and footer were skipped. No name or logo. DbSchemaLibrary is a name I thought of after the fact. 5-minute logo. I expected a flop. Recall has no users after months of labor. What could happen to a 2-day project?

I didn't compromise on validated learning, though. How many visitors sign up? To draw a conclusion, I must track these results.

Posting Time

Now that the build was done, it was time to gauge interest. The next morning, I posted on all my channels. I didn't want to be spammy, so it took more time.

I made sure each channel had at least some people who might want this product. I also answered people's questions in the channel.

My list stinks. Several channels wouldn't work because the product's target market isn't there, and posting there would waste everyone's time. This taught me to choose marketing channels based on my target persona.

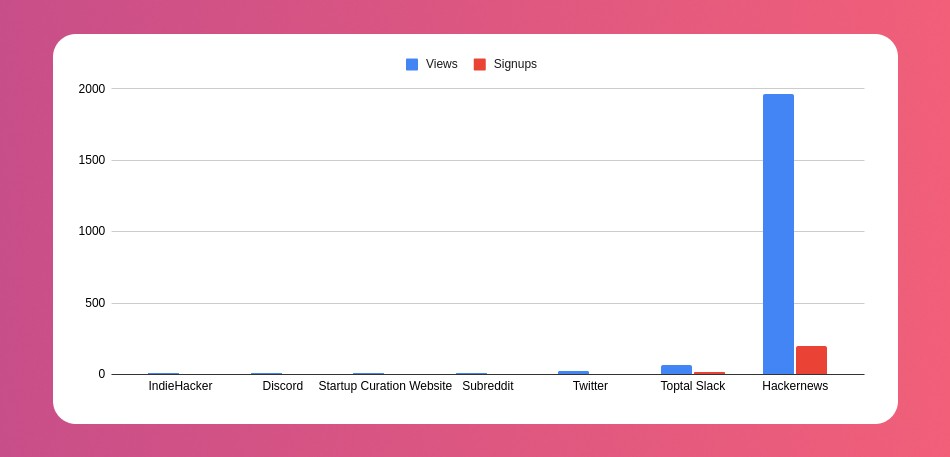

Statistics! What actually happened

My favorite part! 23 channels received the link.

I stopped posting to Discord despite its high conversion rate. I eliminated some channels because they didn't fit. According to the numbers, some users like it. Most users think it's spam.

I was skeptical. And 12 people viewed it.

I didn't expect much attention on a startup subreddit. I'll likely examine Reddit further in the future. As I have enough info, I didn't post much. Time for the next validated learning

No comment. The post had few views, therefore the numbers are low.

The targeted people come next.

I'm a Toptal freelancer. There's a member-only Slack channel. Most people can't use this marketing channel, but you should! It's not as spectacular as discord's 27% conversion rate. But I think the users here are better.

I don’t really have a following anywhere so this isn’t something I can leverage.

The best yet. 10% is converted. With more data, I expect to attain a 10% conversion rate from other channels. Stable number.

This number required some work. Did you know that people use many different clients to read HN?

Unknowns

Untrackable views and signups abound. 1136 views and 135 signups are untraceable. That's about 12%. I bet much of that came from Hackernews.

Overall Statistics

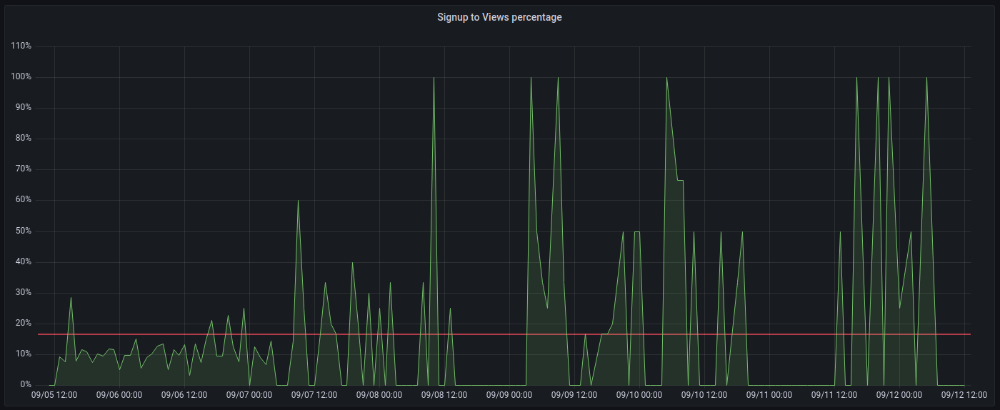

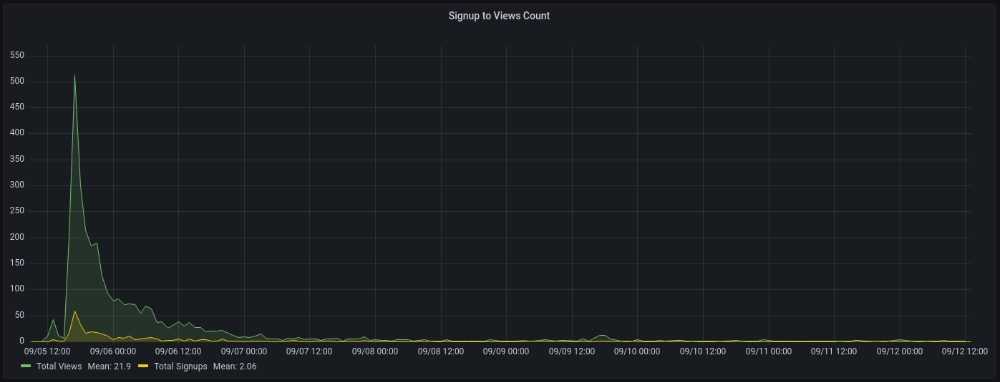

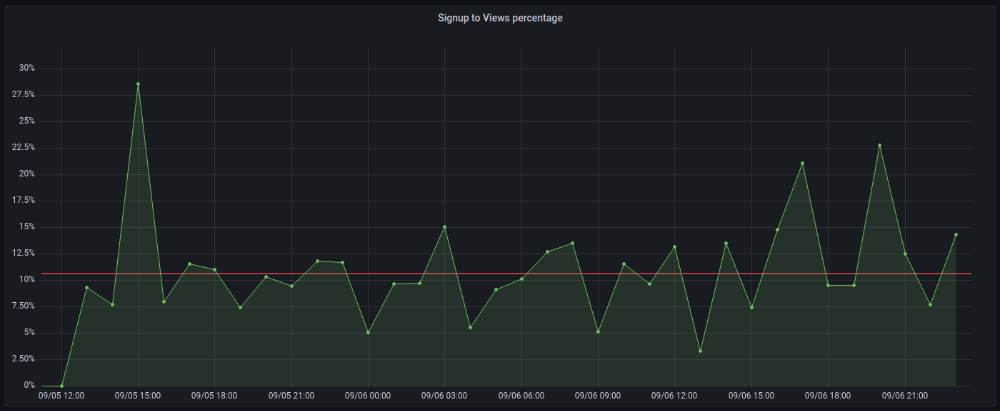

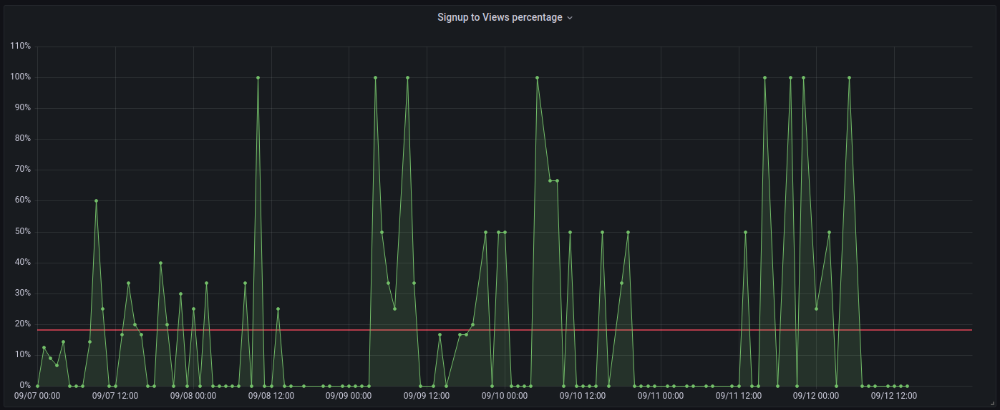

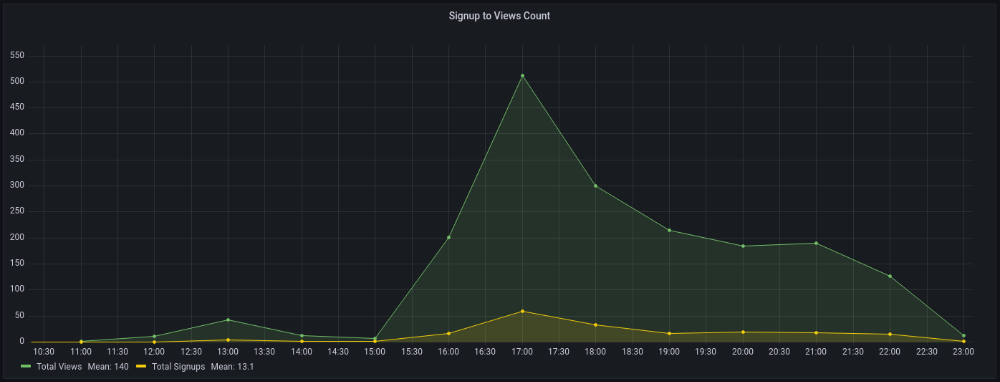

The 7-day signup-to-visit ratio was 17%. (Hourly data points)

First-day percentages were lower, which is noteworthy. Initially, it was a little above 10%. The HN post started getting views then.

When traffic drops, the number rises to around 20%. The people who still arrive are more interested in the link. hn.algolia.com sent 2 visitors. This means people are searching and finding my post.

Interesting discoveries

1. HN post struggled till the US woke up.

11am UTC. After an hour, it lost popularity. It seemed over. 7 signups, a 13% conversion rate. Not amazing, but more than I would have expected.

After 4pm UTC, traffic grew again. 4pm UTC is 9am PDT. US awakened. 10am PDT saw 512 views.
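The time-zone arithmetic here is easy to double-check with a short sketch. The calendar date below is a made-up placeholder (the post doesn't give one), and PDT is modeled as a fixed UTC-7 offset on the assumption that daylight saving time was in effect.

```python
from datetime import datetime, timedelta, timezone

# PDT modeled as a fixed UTC-7 offset (assumption: daylight saving time applied)
PDT = timezone(timedelta(hours=-7), name="PDT")


def pdt_hour(hour_utc: int) -> int:
    """Return the PDT clock hour (0-23) for a given UTC clock hour."""
    # The date is a hypothetical placeholder; only the hour matters here.
    moment = datetime(2020, 6, 1, hour_utc, tzinfo=timezone.utc)
    return moment.astimezone(PDT).hour


if __name__ == "__main__":
    print(pdt_hour(11))  # the 11am UTC mark mentioned in the post
    print(pdt_hour(16))  # the 4pm UTC mark, when traffic grew again
```

Seen this way, the quiet first hours fall in the middle of the night on the US West Coast, which fits the observation that the post only picked up once the US woke up.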

2. The product was highlighted in a newsletter.

I found Revue references when gathering data. Newsletter platform. Someone posted the newsletter link. 37 views and 3 registrations.

3. HN numbers are extremely reliable

I don't have a time-lapse graph (yet). The statistics were constant all day.

At 2717 views, 272 signups, or 10.01%

At 2856 views, 293 signups, or 10.26%

At 306 signups at 2965 views, 10.32%
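The percentages in this post are all the same calculation: signups divided by views. A minimal sketch of that arithmetic, using only the view and signup counts quoted in the post (the function name `conversion_rate` is my own placeholder):

```python
def conversion_rate(signups: int, views: int) -> float:
    """Return signups as a percentage of views."""
    if views <= 0:
        raise ValueError("views must be positive")
    return 100.0 * signups / views


# (signups, views) snapshots of the HN traffic quoted in the post
hn_snapshots = [(272, 2717), (293, 2856), (306, 2965)]

if __name__ == "__main__":
    for signups, views in hn_snapshots:
        print(f"{signups}/{views} = {conversion_rate(signups, views):.2f}%")

    # Untracked traffic quoted in the post: 135 signups from 1136 views
    print(f"untracked = {conversion_rate(135, 1136):.1f}%")
```

Tracking the ratio at several points in time, rather than only the final totals, is what makes it possible to say the HN number stayed stable throughout the day.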

Learnings

1. My initial estimations were wildly inaccurate

I wrote 30% conversion. After reading some articles, it looks like 10% is a good number to aim for.

2. Paying attention to what matters rather than vanity metrics

The Lean Startup discourages vanity metrics. Feel-good metrics that don't measure growth or traction. Considering the proportion instead of the total visitors made me realize there was something here.

What’s next?

There is a lot of work to do. Data aggregation, display, website development, marketing, legal issues. Fun! It's satisfying to solve an issue rather than investigate its cause.

In the meantime, I’ve already written the first project update in another post. Continue reading it if you’d like to know more about the project itself! Shifting from Quantity to Quality — DbSchemaLibrary

obimy.app

3 years ago

How TikTok helped us grow to 6 million users

It led obimy to a new audience.

Hi! This is obimy's official account. Here, we'll write for app developers and marketers. In 2022, our downloads increased dramatically, so we'll share what we learned.

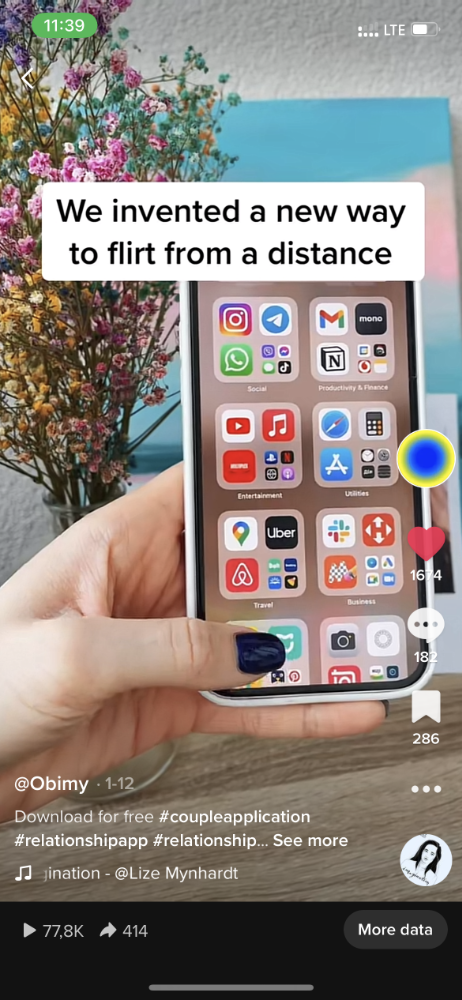

obimy is what we call a ‘senseger’. It's a new method to communicate digitally. Instead of text, obimy users connect through senses and moods. Feeling playful? Flirt with your partner, pat a pal, or dump water on a classmate. Each feeling is an interactive animation with vibration. It's a wordless app. App Store and Google Play have obimy.

We had 20,000 users in 2022. Two to five thousand of them opened the app monthly. Our DAU metric was 500.

We have 6 million users after 6 months. 500,000 individuals use obimy daily. obimy was the top lifestyle app this week in the U.S.

And TikTok helped.

TikTok fuels obimy's growth. It's why our app exploded. How and what did we learn? Our Head of Marketing, Anastasia Avramenko, knows.

Our actions prior to TikTok

We wanted to achieve product-market fit through organic expansion. Quora, Reddit, Facebook Groups, Facebook Ads, Google Ads, Apple Search Ads, and social media activity were tested. Nothing worked. Our CPI was sometimes $4, so unit economics didn't work.

We studied our markets and made audience hypotheses. We promoted our goods and studied our audience through social media quizzes. Our target demographic was Americans in long-distance relationships. I designed quizzes like Test the Strength of Your Relationship to better understand the user base. After each quiz, we encouraged users to download the app to enhance their connection and bridge the distance.

We got 1,000 responses for $50. This helped us understand the audience's pain points and coping strategies (aka our competitors). I based action items on the answers given. If you can't embrace a loved one, use obimy.

We also tried Facebook and Google ads. From the start, we knew it wouldn't work.

We were desperate to discover a free way to get more users.

Our journey to TikTok

TikTok is a great venue for emerging creators. It also helped reach people. Before obimy, my TikTok videos garnered 12 million views without sponsored promotion.

We had to act. TikTok was required.

I wasn't a TikTok user before obimy. Initially, I uploaded promotional content. Calls to action look strange next to dancing challenges and "my money don't jiggle jiggle." So I learned TikTok. "Watch TikTok for an hour" was on my to-do list. What a dream job!

Our most popular videos presented the app alongside text outlining what it does. We started promoting them in Europe and the U.S. and got a 16% CTR and $1 CPI, an improvement over our previous efforts.

Somehow, we were expanding. So we came up with new hypotheses, calls to action, and content.

Four months passed, yet we saw no organic growth.

Russia attacked Ukraine.

Our app aimed to be helpful. For now, we're focusing on our Ukrainian audience. I posted sloppy TikToks illustrating how obimy can help during shelling or air raids.

In two hours, Kostia sent me our visitor count. Our servers crashed.

Initially, we had several thousand daily users. Over 200,000 users joined obimy in a week. They posted obimy videos on TikTok, drawing additional users. We've also resumed U.S. video promotion.

We gained 2,000,000 new members with less than $100 in ads, primarily in the U.S. and U.K.

TikTok helped.

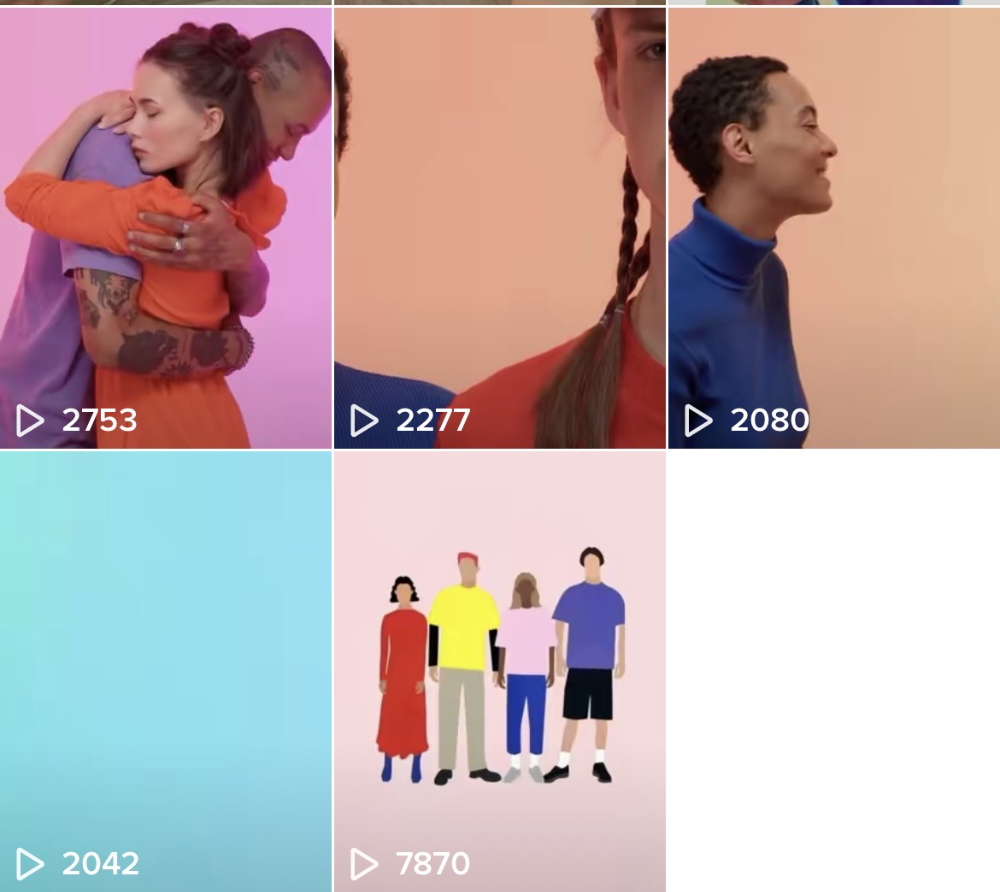

The figures

We were confident we'd chosen the ideal tool for organic growth.

Over 45 million people have viewed our own videos plus a ton of user-generated content with the hashtag #obimy.

About 375 thousand people have liked all of our individual videos.

The number of downloads and the virality of videos are directly correlated.

Where are we now?

TikTok fuels our organic growth. We post 56 videos every week and pay to promote viral content.

We use UGC and influencers. We worked with Universal Music Italy on Eurovision. They offered to promote us through their million-follower TikTok influencers. We thought their followers would improve our audience, but it didn't matter. Integration didn't help us. Users that share obimy videos with their followers can reach several million views, which affects our download rate.

After the dust settled, we determined our key audience was 13-18-year-olds. They want to express themselves, but it's sometimes difficult. We're searching for methods to better engage with our users. We opened a Discord server to discuss anime and video games and gather app and content feedback.

TikTok helps us test product updates and hypotheses. Example: I once thought we might raise MAU by prompting users to add strangers as friends. Instead of asking our team to construct it, I made a TikTok urging users to share invite URLs. Users share links under every video we upload, embracing people worldwide.

Key lessons

Don't direct-sell. TikTok isn't for Instagram, Facebook, or YouTube promo videos. Conventional advertisements don't fit. Most users will swipe up and watch humorous doggos.

More product videos are better. Finally. So what?

Encourage interaction. Tagging friends in comments or making videos with the app promotes it more than any marketing spend.

Be odd and risqué. A user mistakenly sent a French kiss to their mom in one of our most popular videos.

TikTok helps test hypotheses and build your user base. It also helps develop apps. In our upcoming blog, we'll guide you through obimy's design revisions based on TikTok. Follow us on Twitter, Instagram, and TikTok.

You might also like

Sam Warain

3 years ago

Sam Altman, CEO of OpenAI, foresees the next trillion-dollar AI company

“I think if I had time to do something else, I would be so excited to go after this company right now.”

Sam Altman, CEO of OpenAI, recently discussed AI's present and future.

OpenAI is important. They're creating the cyberpunk and sci-fi worlds.

They use the most advanced algorithms and data sets.

GPT-3...sound familiar? OpenAI's models power most AI copywriting software: Peppertype, Jasper AI, Rytr. If you've used any, you'll be shocked by the quality.

OpenAI isn't only GPT-3. They created DALL-E 2 and Whisper (speech recognition software released last week).

What will they do next? What's the next great chance?

Sam Altman, CEO of OpenAI, recently spoke about the next trillion-dollar AI opportunity.

Who is the organization behind OpenAI?

OpenAI first. If you know, skip it.

OpenAI is one of the earliest private AI startups. Sam Altman, Elon Musk, Greg Brockman, Ilya Sutskever, and others founded OpenAI in December 2015.

OpenAI's stated mission since its founding has been to make sure AI benefits all of humanity.

They have scary-good algorithms.

Their GPT-3 natural language processing program is excellent.

The algorithm's exponential growth is astounding. GPT-2 came out in November 2019. May 2020 brought GPT-3.

Massive computation and datasets improved the technique in just a year. New York Times said GPT-3 could write like a human.

Same for DALL-E. DALL-E 2 was announced in April 2022. A few months later, an AI-generated image won a Colorado art contest (that piece was made with Midjourney, a different image generator).

OpenAI's algorithms challenge jobs we thought required human innovation.

So what does Sam Altman think?

The Present Situation and AI's Limitations

During the interview, Sam states that we are still at the tip of the iceberg.

So I think so far, we’ve been in the realm where you can do an incredible copywriting business or you can do an education service or whatever. But I don’t think we’ve yet seen the people go after the trillion dollar take on Google.

He's right that AI can't yet generate net new human knowledge. It can be trained on and synthesize vast amounts of knowledge, but it simply reproduces human work.

“It’s not going to cure cancer. It’s not going to add to the sum total of human scientific knowledge.”

But the key word is yet.

And that is what I think will turn out to be wrong that most surprises the current experts in the field.

Reinforcing his point that massive innovations are yet to come.

But where?

The Next $1 Trillion AI Company

Sam predicts a bio or genomic breakthrough.

There’s been some promising work in genomics, but stuff on a bench top hasn’t really impacted it. I think that’s going to change. And I think this is one of these areas where there will be these new $100 billion to $1 trillion companies started, and those areas are rare.

Avoid human trials since they take time. Bio-materials or simulators are suitable beginning points.

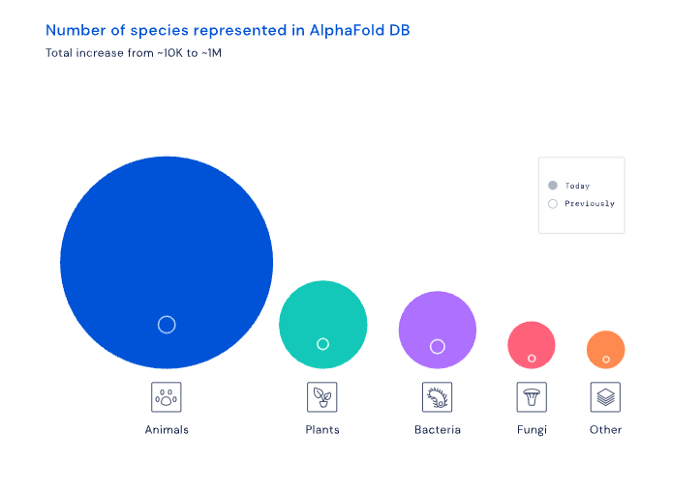

AI may have a breakthrough. DeepMind, an OpenAI competitor, has developed AlphaFold to predict protein 3D structures.

It could change how we see proteins and their function. AlphaFold could provide fresh understanding into how proteins work and diseases originate by revealing their structure. This could lead to Alzheimer's and cancer treatments. AlphaFold could speed up medication development by revealing how proteins interact with medicines.

DeepMind released more than 200 million predicted protein structures for scientists to download, supporting research in areas including sustainability, food insecurity, and neglected diseases.

Being in AI for 4+ years, I'm amazed at the progress. We're past the hype cycle, as evidenced by the collapse of AI startups like C3 AI, and have entered a productive phase.

We'll see innovative enterprises that could replace Google and other trillion-dollar companies.

What happens after AI adoption is scary and unpredictable. How will AGI (Artificial General Intelligence) affect us? OpenAI defines AGI as "highly autonomous systems that outperform humans at most economically valuable work."

My guess is that the things that we’ll have to figure out are how we think about fairly distributing wealth, access to AGI systems, which will be the commodity of the realm, and governance, how we collectively decide what they can do, what they don’t do, things like that. And I think figuring out the answer to those questions is going to just be huge. — Sam Altman CEO

CyberPunkMetalHead

3 years ago

I made ChatGPT copy itself.

OpenAI's ChatGPT is an amazing AI-powered chatbot. It can write poetry, stories, and marketing campaigns, and it's also adept at coding. ChatGPT's coding is so amazing that it replicated itself. What happened:

Me: Hi

Hello! Can I help? I'll answer any inquiries you have.

Me: Build a python and flask AI chatbot that responds to user messages on the frontend.

CGPT:

from flask import Flask, request, jsonify
import openai

openai.api_key = "YOUR_API_KEY"

# Create the Flask application (missing from the generated snippet)
app = Flask(__name__)

@app.route('/chatbot', methods=['POST'])
def chatbot():
    # Read the user's message from the JSON request body
    user_message = request.get_json()['message']
    response = chatbot_response(user_message)
    return jsonify({'response': response})

def chatbot_response(user_message):
    # Legacy Completion endpoint of the pre-1.0 openai package
    response = openai.Completion.create(
        engine="text-davinci-002",
        prompt=user_message,
        max_tokens=1024,
        n=1,
        temperature=0.5
    )
    # Return the text of the first (and only) completion
    return response['choices'][0]['text']

if __name__ == '__main__':
    app.run()

To integrate a chatbot with a frontend, use Flask to serve an HTML page with a form for user messages and the chatbot's answer. AJAX can submit the form to the /chatbot route, and JavaScript can show the chatbot's response.
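The request/response contract of the /chatbot route can be exercised without calling OpenAI at all. The sketch below is only an illustration: it swaps the model call for a hypothetical `fake_reply` stub (my own placeholder, not part of the generated project) and uses Flask's built-in test client in place of the browser's AJAX call.

```python
from flask import Flask, jsonify, request

app = Flask(__name__)


def fake_reply(user_message: str) -> str:
    """Hypothetical stand-in for the OpenAI call; it just echoes the message."""
    return f"You said: {user_message}"


@app.route("/chatbot", methods=["POST"])
def chatbot():
    # Same contract as the generated route: JSON in, JSON out
    user_message = request.get_json()["message"]
    return jsonify({"response": fake_reply(user_message)})


if __name__ == "__main__":
    # Post a JSON body the way the frontend's AJAX call would
    client = app.test_client()
    result = client.post("/chatbot", json={"message": "Hi"})
    print(result.get_json())
```

Keeping the model call behind a single function makes it possible to check the route and the frontend wiring first and plug the real API call in afterwards.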

I imported code snippets at the top of the file.

Let's add a frontend interface, I said.

CGPT:

<!DOCTYPE html>
<html>
<head>
  <title>AI Chatbot</title>
</head>
<body>
  <h1>AI Chatbot</h1>
  <form id="chatbot-form">
    <label for="message">Message:</label><br>
    <input type="text" id="message" name="message"><br>
    <input type="submit" value="Submit">
  </form>
  <div id="chatbot-response"></div>
  <script src="https://ajax.googleapis.com/ajax/libs/jquery/3.5.1/jquery.min.js"></script>
  <script>
    $(document).ready(function() {
      $("#chatbot-form").submit(function(e) {
        e.preventDefault();
        var userMessage = $("#message").val();
        $.ajax({
          type: "POST",
          url: "/chatbot",
          data: JSON.stringify({message: userMessage}),
          contentType: "application/json; charset=utf-8",
          dataType: "json",
          success: function(response) {
            $("#chatbot-response").text(response.response);
          }
        });
      });
    });
  </script>
</body>
</html>

To serve this HTML page using Flask, build a new route that returns the page as a GET response.
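Serving the page from Flask could look roughly like the sketch below. It is not the code ChatGPT produced: the `CHATBOT_PAGE` constant is a trimmed-down placeholder for the markup above, and the `/` path and `index` function name are my own assumptions.

```python
from flask import Flask

app = Flask(__name__)

# Trimmed-down placeholder for the chatbot page; the full markup is shown above
CHATBOT_PAGE = """<!DOCTYPE html>
<html>
<head><title>AI Chatbot</title></head>
<body>
<form id="chatbot-form">
<input type="text" id="message" name="message">
<input type="submit" value="Submit">
</form>
<div id="chatbot-response"></div>
</body>
</html>"""


@app.route("/", methods=["GET"])
def index():
    # Flask sends a returned string to the browser as an HTML response
    return CHATBOT_PAGE


if __name__ == "__main__":
    # Fetch the page with Flask's test client instead of starting a server
    response = app.test_client().get("/")
    print(response.status_code)
```

In a real project the markup would more likely live in a template file and be returned with Flask's `render_template`; the inline string just keeps the sketch self-contained.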

So far, so good.

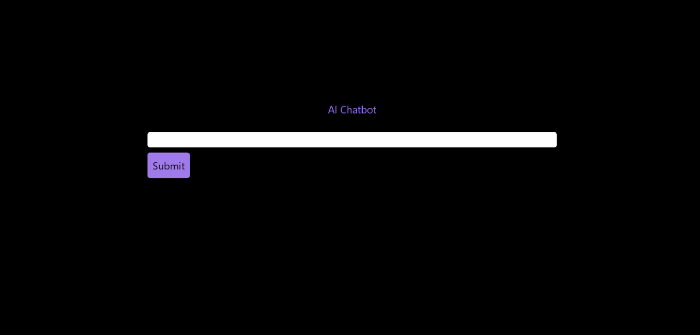

It looked quite basic, so I got ChatGPT to add some Tailwind to the project.

Me: Tailwind the chatbot.html page, please. Give it a futuristic style with purple and neon

After a few more prompts, ChatGPT aligned the form to the center, changed some colors, and added padding.

CGPT:

<!DOCTYPE html>
<html>
<head>
  <title>AI Chatbot</title>
  <link href="https://unpkg.com/tailwindcss@^1.0/dist/tailwind.min.css" rel="stylesheet">
</head>
<body class="bg-black flex flex-col justify-center items-center">
  <h1 class="sm:text-center text-purple-500">AI Chatbot</h1>
  <form id="chatbot-form" class="border-purple-500 bg-purple-800 rounded">
    <label for="message">Message:</label><br>
    <input type="text" id="message" name="message" class="min-w-30 rounded"><br>
    <input type="submit" value="Submit" class="bg-purple-500 border-red-500 transform hover:scale-110 rounded">
  </form>
  <div class="flex justify-center">
    <div id="chatbot-response" class="text-white rounded"></div>
  </div>
  <script src="https://ajax.googleapis.com/ajax/libs/jquery/3.5.1/jquery.min.js"></script>
  <script>
    var colors = ['red', 'orange', 'yellow', 'green', 'blue', 'indigo', 'violet'];
    var colorIndex = 0;
    $(document).ready(function() {
      setInterval(function() {
        $("#chatbot-form").css('border-color', colors[colorIndex]);
        colorIndex = (colorIndex + 1) % colors.length;
      }, 1000);
      $("#chatbot-form").submit(function(e) {
        e.preventDefault();
        var userMessage = $("#message").val();
        $.ajax({
          type: "POST",
          url: "/chatbot",
          data: JSON.stringify({message: userMessage}),
          contentType: "application/json; charset=utf-8",
          dataType: "json",
          success: function(response) {
            $("#chatbot-response").text(response.response);
          }
        });
      });
    });
  </script>
</body>
</html>

Improved:

A few more prompts like “Add more vertical and horizontal padding to the submit button and vertical margin, and vertically align this file's body.”

The result:

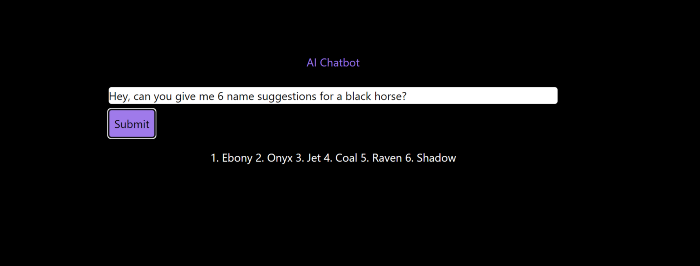

Does it work? Now, I'll chat with ChatGPT's AI Chatbot. I intervened minimally throughout construction. ChatGPT created numerous files, connected and interacted with its own API, and presented the response to the user's request on the front end by itself.

Me to AI Chatbot: Hey, can you give me 6 name suggestions for a black horse?

Me to AI Chatbot: Write a poem in the style of Jim Morrison. Make sure to mention cacti and black holes

ChatGPT's Chatbot:

In the desert night, I am confronted by cacti,

Their arms reaching out, As if to grab me and take me away.

The stars in the sky, Beckon me to come,

To a world of black holes, Where I can be free.

I am in a trance, My body and mind,

In a state of limbo, As I wander the night.

I hear a voice, Calling me to the unknown,

I cannot resist, The lure of the black hole.

The cacti watch, As I take my leave,

To a place of mystery, Where I can find peace.

Of course, the next question I gave it was to build a chatbot!

Me: Build a python and flask AI chatbot that responds to user messages on the frontend.

ChatGPT's Chatbot:

import os
from flask import Flask, request, jsonify
from chatterbot import ChatBot
from chatterbot.trainers import ListTrainer

# Create a new chatbot
bot = ChatBot(
    "My Bot",
    storage_adapter="chatterbot.storage.SQLStorageAdapter",
    logic_adapters=[
        "chatterbot.logic.BestMatch",
        {
            "import_path": "chatterbot.logic.BestMatch",
            "statement_comparison_function": "chatterbot.comparisons.levenshtein_distance",
            "response_selection_method": "chatterbot.response_selection.get_first_response"
        }
    ],
    database_uri="sqlite:///database.sqlite3"
)

# Train the chatbot with a few sample conversations
conversation = [
    "Hello",
    "Hi there!",
    "How are you doing?",
    "I'm doing great.",
    "That is good to hear",
    "Thank you.",
    "You're welcome."
]
trainer = ListTrainer(bot)
trainer.train(conversation)

# Create a Flask app
app = Flask(__name__)

# Define a route for the default URL
@app.route("/")
def index():
    return "<h1>Chatbot</h1>"

# Define a route for the /get_response URL
@app.route("/get_response", methods=["POST"])
def get_response():
    # Get the user's message
    user_message = request.form["user_message"]
    # Get the bot's response
    bot_response = bot.get_response(user_message)
    # Return the bot's response
    return str(bot_response)

# Run the Flask app
if __name__ == "__main__":
    app.run()

No matter how many times I tried, the second generation always used chatterbot instead of the ChatGPT API. Even when I told it to use the ChatGPT API, it didn't.

ChatGPT's ability to reproduce or construct other machine learning algorithms is interesting and possibly terrifying. Nothing prevents ChatGPT from replicating itself ad infinitum throughout the Internet other than a lack of desire. This may be the first time a machine has replicated itself, so I've preserved the project as a reference. Adding a requirements.txt file and a Python virtual environment for easier deployment is the only change to the code.

I hope you enjoyed this.

Jay Peters

3 years ago

Apple AR/VR headset

Apple is said to have opted for a standalone AR/VR headset over a more powerful tethered model.

It has had a tumultuous history.

Apple's alleged mixed reality headset appears to be the worst-kept secret in tech, and a fresh story from The Information is jam-packed with details regarding the device's rocky development.

Apple's decision to go with a standalone headset is one of the most notable aspects of the story. Apple had been undecided between a more powerful VR headset paired with a base station and a standalone headset. According to The Information, Apple officials chose the standalone product over the version with the base station, which had a processor that later arrived as the M1 Ultra. In 2020, Bloomberg published similar information.

That decision appears to have had a long-term impact on the headset's development. "The device's many processors had already been in development for several years by the time the choice was taken, making it impossible to go back to the drawing board and construct, say, a single chip to handle all the headset's responsibilities," The Information stated. "Other difficulties, such as putting 14 cameras on the headset, have given hardware and algorithm engineers stress."

Jony Ive remained to consult on the project's design even after his official departure from Apple, according to the story. Ive "prefers" a wearable battery, such as that offered by Magic Leap. Other prototypes, according to The Information, placed the battery in the headset's headband, and it's unknown which will be used in the final design.

The headset was purportedly shown to Apple's board of directors last week, indicating that a public unveiling is imminent. However, it is possible that it will not be introduced until later this year, and it may not hit shop shelves until 2023, so we may have to wait a bit to try it.

Further down the line, Apple is working on a pair of AR glasses that look like Ray-Ban Wayfarer sunglasses, but according to The Information, they're "still several years away from release." (I'm interested to see how they compare to Meta and Ray-Ban's actual Wayfarer-style glasses.)