More on Technology

Jay Peters

3 years ago

Apple AR/VR heaset

Apple is said to have opted for a standalone AR/VR headset over a more powerful tethered model.

It has had a tumultuous history.

Apple's alleged mixed reality headset appears to be the worst-kept secret in tech, and a fresh story from The Information is jam-packed with details regarding the device's rocky development.

Apple's decision to use a separate headgear is one of the most notable aspects of the story. Apple had yet to determine whether to pursue a more powerful VR headset that would be linked with a base station or a standalone headset. According to The Information, Apple officials chose the standalone product over the version with the base station, which had a processor that later arrived as the M1 Ultra. In 2020, Bloomberg published similar information.

That decision appears to have had a long-term impact on the headset's development. "The device's many processors had already been in development for several years by the time the choice was taken, making it impossible to go back to the drawing board and construct, say, a single chip to handle all the headset's responsibilities," The Information stated. "Other difficulties, such as putting 14 cameras on the headset, have given hardware and algorithm engineers stress."

Jony Ive remained to consult on the project's design even after his official departure from Apple, according to the story. Ive "prefers" a wearable battery, such as that offered by Magic Leap. Other prototypes, according to The Information, placed the battery in the headset's headband, and it's unknown which will be used in the final design.

The headset was purportedly shown to Apple's board of directors last week, indicating that a public unveiling is imminent. However, it is possible that it will not be introduced until later this year, and it may not hit shop shelves until 2023, so we may have to wait a bit to try it.

For further down the line, Apple is working on a pair of AR spectacles that appear like Ray-Ban wayfarer sunglasses, but according to The Information, they're "still several years away from release." (I'm interested to see how they compare to Meta and Ray-Bans' true wayfarer-style glasses.)

Stephen Moore

3 years ago

A Meta-Reversal: Zuckerberg's $71 Billion Loss

The company's epidemic gains are gone.

Mark Zuckerberg was in line behind Jeff Bezos and Bill Gates less than two years ago. His wealth soared to $142 billion. Facebook's shares reached $382 in September 2021.

What comes next is either the start of something truly innovative or the beginning of an epic rise and fall story.

In order to start over (and avoid Facebook's PR issues), he renamed the firm Meta. Along with the new logo, he announced a turn into unexplored territory, the Metaverse, as the next chapter for the internet after mobile. Or, Zuckerberg believed Facebook's death was near, so he decided to build a bigger, better, cooler ship. Then we saw his vision (read: dystopian nightmare) in a polished demo that showed Zuckerberg in a luxury home and on a spaceship with aliens. Initially, it looked entertaining. A problem was obvious, though. He might claim this was the future and show us using the Metaverse for business, play, and more, but when I took off my headset, I'd realize none of it was genuine.

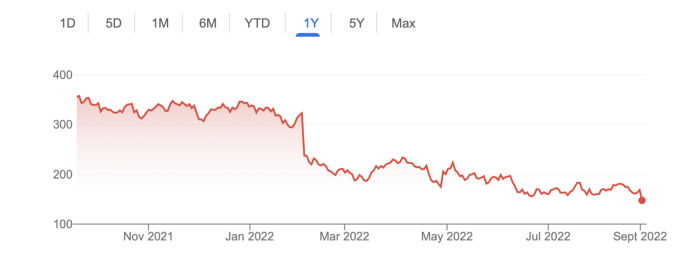

The stock price is almost as low as January 2019, when Facebook was dealing with the aftermath of the Cambridge Analytica crisis.

Irony surrounded the technology's aim. Zuckerberg says the Metaverse connects people. Despite some potential uses, this is another step away from physical touch with people. Metaverse worlds can cause melancholy, addiction, and mental illness. But forget all the cool stuff you can't afford. (It may be too expensive online, too.)

Metaverse activity slowed for a while. In early February 2022, we got an earnings call update. Not good. Reality Labs lost $10 billion on Oculus and Zuckerberg's Metaverse. Zuckerberg expects losses to rise. Meta's value dropped 20% in 11 minutes after markets closed.

It was a sign of things to come.

The corporation has failed to create interest in Metaverse, and there is evidence the public has lost interest. Meta still relies on Facebook's ad revenue machine, which is also struggling. In July, the company announced a decrease in revenue and missed practically all its forecasts, ending a decade of exceptional growth and relentless revenue. They blamed a dismal advertising demand climate, and Apple's monitoring changes smashed Meta's ad model. Throw in whistleblowers, leaked data revealing the firm knows Instagram negatively affects teens' mental health, the current Capital Hill probe, and the fact TikTok is eating its breakfast, lunch, and dinner, and 2022 might be the corporation's worst year ever.

After a rocky start, tech saw unprecedented growth during the pandemic. It was a tech bubble and then some.

The gains reversed after the dust settled and stock markets adjusted. Meta's year-to-date decline is 60%. Apple Inc is down 14%, Amazon is down 26%, and Alphabet Inc is down 29%. At the time of writing, Facebook's stock price is almost as low as January 2019, when the Cambridge Analytica scandal broke. Zuckerberg owns 350 million Meta shares. This drop costs him $71 billion.

The company's problems are growing, and solutions won't be easy.

Facebook's period of unabated expansion and exorbitant ad revenue is ended, and the company's impact is dwindling as it continues to be the program that only your parents use. Because of the decreased ad spending and stagnant user growth, Zuckerberg will have less time to create his vision for the Metaverse because of the declining stock value and decreasing ad spending.

Instagram is progressively dying in its attempt to resemble TikTok, alienating its user base and further driving users away from Meta-products.

And now that the corporation has shifted its focus to the Metaverse, it is clear that, in its eagerness to improve its image, it fired the launch gun too early. You're fighting a lost battle when you announce an idea and then claim it won't happen for 10-15 years. When the idea is still years away from becoming a reality, the public is already starting to lose interest.

So, as I questioned earlier, is it the beginning of a technological revolution that will take this firm to stratospheric growth and success, or are we witnessing the end of Meta and Zuckerberg himself?

Mark Schaefer

3 years ago

20 Fun Uses for ChatGPT

Our RISE community is stoked on ChatGPT. ChatGPT has countless uses.

Early on. Companies are figuring out the legal and ethical implications of AI's content revolution. Using AI for everyday tasks is cool.

So I challenged RISE friends... Let's have fun and share non-obvious uses.

Onward!

1. Tweet

Jim MacLeod requested a design-themed Twitter thread from ChatGPT.

2. Novelize

Giuseppe Fratoni writes novels.

"This is amazing!" Impotent. I asked about writing, mystery novels, and jazz.

"Develop a mystery novel plot in which a disillusioned homicide detective hunts a serial killer who targets jazz musicians who play Autumn Leaves."

"I have:

Detective James Thompson is a seasoned veteran of the Homicide department, but lately he’s been feeling burnt out and disillusioned. His latest case, the murder of a young jazz pianist who was found brutally killed in his home, only serves to deepen his sense of despair … (much more provided)

3. Question-and-answer sessions

Bliss recommends:

You have a guest speaker and want to ask audience questions.

Pre-generate questions with ChatGPT. "And here's a question from our AI?"

The conference is about Western water scarcity. Water management expert. My AI question was...

Which desalination-related questions should I ask an expert?

ChatGPT said...

How is desalination currently being used around the world, and in what types of locations is it most effective?

What are the potential advantages and disadvantages of using desalination to provide fresh water?

What are some of the key challenges and limitations of desalination technology, and how are they being addressed?

4. Game-making

Richard's a board game expert. He suggests using ChatGPT to plan games. Note: Some people have started getting help solving crossword puzzle clues.

5. Matchmaking

Tanya Thompson thinks it helps dating. ChatGPT:

Here are some flirty first-message ideas for dating apps:

I noticed you from afar. I had to say hi and see if we clicked.

Your profile picture is gorgeous. I'm obsessed with your [Feature] and want to know you better.

I don't usually message first, but I had to. You're [Attractive Quality] and I can't wait to talk.”

Hello. Your profile intimidates me. I'm starstruck by your [Attractiveness]. (Many instances)

5. Solitude/anxiety

ChatGPT excels at one-on-one conversations.

PepperBrooks: "I'm sad and lonely."

ChatGPT: I’m sorry to hear that you’re feeling sad and lonely. It’s natural to feel down sometimes, but it’s important to remember that you’re not alone. There are many people who care about you and want to help you. It can be helpful to talk to someone about your feelings, whether it’s a close friend, family member, or mental health professional.

Sometimes, just expressing your thoughts and emotions to someone else can help to alleviate your sadness and loneliness. It’s also important to take care of yourself and do things that make you happy, such as spending time with loved ones, pursuing a hobby, or exercising. These things can help to improve your mood and make you feel more connected to others. Remember that you are strong and capable, and that you can get through this difficult time.

Daniel Nestle asked ChatGPT to generate journal prompts to help him deal with anxiety.

Write down the things that are causing you anxiety at the moment.

Describe a situation in which you felt anxious and how you coped with it.

Reflect on how your thoughts and beliefs contribute to your anxiety.

Write a letter to your anxiety, expressing your feelings and concerns

Imagine yourself in a calm and peaceful place, and describe it in detail.

(many more items followed)

6. Names

Continued Daniel:

Also, naming ideas.

Me: Name this drink. Japanese gin, unsweetened tonic, lime, Szechuan pepper, grapefruit.

ChatGPT: How about “Sakura Sunrise?” The name incorporates the Japanese gin and the citrus flavors of grapefruit and lime, and the word “sunrise” suggests a fresh and invigorating start to the day. Alternatively, you could go with something like “Peppered Grapefruit Gin Fizz” to emphasize the unique flavor combination of Szechuan pepper and grapefruit.

7. Translation on the go

Monks recommends: ChatGPT is a perfect mobile translation tool. Say you need help in Italy.

ME: Translate to Italian

Where is the nearest gas station?

ChatGPT: “La mia auto sta per esaurire il carburante, mi puoi dire dove si trova la stazione di servizio più vicina?”

8. Fitness

Monks added:

ME: In 3 months, I want to run 5km. Plan out how I can do this.

ChatGPT: Here's a 3-month plan to run 5km.

Start by walking for 15–20 minutes each day to build up your endurance and get your body used to regular exercise.

After a week of walking, begin incorporating short jogs into your routine. Start by jogging for 1–2 minutes at a time, with walking breaks in between. Gradually increase the length of your jogs and reduce the amount of time you spend walking.

Once you can jog for 10 minutes without stopping, start increasing the distance of your runs. Aim to run 1km without stopping and then gradually increase the distance over time.

In addition to your regular runs, incorporate strength training and stretching into your routine to improve your overall fitness and reduce the risk of injury.

(more details continued on)

9. Integrations and coding

10. Teacher lesson plans

Spencer Crandall saved time.

This morning, I wondered who has the hardest job. Teachers.

Many students, little time. ChatGPT could handle lesson planning. I tried it and got this.

Lesson Title: The History and Impact of Artificial Intelligence

Students will understand the history and development of AI and the potential positive and negative impacts on society.

AI timeline AI technologies and applications

Overview:

Introduce the topic of artificial intelligence, and explain that it refers to the development of computer systems and algorithms that can simulate human intelligence and behavior.

Show the timeline of significant events in the history of AI, and discuss the key milestones and developments in the field.

Show examples of AI technologies and applications, and explain how they are used in various industries and fields.

Discuss the potential positive and negative impacts of AI on society, including its potential to improve productivity, efficiency, and safety, as well as its potential to create new challenges and concerns, such as job displacement and ethical issues.

(many more details followed)

11. Find podcast guests.

Joeri Billast suggested:

Who should be on my Web3 podcast?

ChatGPT: Web3 marketing experts, influencers, and thought leaders make great podcast guests. These include Web3 marketers, content creators, business owners, entrepreneurs, and technologists. You could invite Web3 startups and organizations to your podcast.

Please give examples.

ChatGPT: Ideal Web3 marketing podcast guests include:

Tim Berner-Lee, inventor of the World Wide Web

Chris Dixon, general partner at Andreessen Horowitz

Vitalik Buterin, founder of Ethereum

Joe Lubin, founder of ConsenSys

Susan Fowler, author of Whistleblower

(many other suggestions)

12. Comments and reviews on social media

Lisa Monk thinks ChatGPT can help retail staff with social media.

She asked ChatGPT to "review this restaurant"

POSITIVE CUSTOMER REVIEW: I absolutely love this place. We eat out a lot however this place has topped it all. Perfect meals, amazing staff and the timing for it all was great! Special thanks to Sophie B (you rock) From your guests all the way from the Gold Coast, THANK YOU

ChatGPT REPLY: Thank you so much for your kind words and for taking the time to write a review. We are delighted to hear that you had such a great experience at our restaurant. We are thrilled that you enjoyed your meal and the service provided by Sophie. It’s always a pleasure to serve guests visiting from the Gold Coast and we are grateful for your support. We look forward to welcoming you back again soon. Thank you again for your positive review.

CUSTOMER REVIEW: Most average food, there is a nice view, But that’s about it

ChatGPT: Thank you for your review. We apologize if your dining experience was not up to your expectations. We strive to provide top-quality food and service to all of our customers, and we will take your feedback into consideration as we continue to improve. Thank you for letting us know about your experience.

13. Crime-fighting

Zack Seipert said, "I researched some famous unsolved murders, and ChatGPT laid out the crime, suspects, clues, and more." I bet experienced detectives could solve some of these cold cases.

14. Reduce bias

Joanne Taylor struggles to remove biases from her writing. She asked ChatGPT for ideas.

I asked ChatGPT to check a text for racial bias, and it did well.

15. Furnishings

I designed a small, unique table using ChatGPT and MidJourney. I edited ChatGPT's idea for clarity and brevity before entering it in MidJourney.

Results:

16. Serialize a novel

Scott Scowcoft led ChatCPT through iterations of a story text to create serialized novel frames. You can view this here.

17. Interview preparation

I was on a sales podcast. Although this is marketing-related, it's not my forte. I asked ChatGPT for help with a sales interview. Response:

Here are some interview questions about the future of sales and how to answer them.

How will technology affect sales in the future?

Technology will impact sales in the coming years. Automation and AI could be used to handle routine tasks, and data analysis and CRM could become more important. E-commerce and online sales channels are also reshaping the sales landscape.

How will the salesperson's role change?

Future sales roles may change in response to changing customer needs and expectations. Salespeople must be knowledgeable and consultative to help customers navigate complex products and services. Increasing importance of data analysis and CRM tools will require salespeople to understand and use technology effectively.

Future sales professionals will need what skills?

Future salespeople will need many skills. Sales professionals must have strong communication and interpersonal skills, as well as product and service knowledge and the ability to use data analysis and CRM tools effectively. Adapting to changing customer needs and staying current on industry trends will also be important for sales success.

18. Gift ideas

I need Christmas gift ideas for my wife. I asked:

Suggest a gift idea under $200 for a middle-aged woman who loves The Eagles.

A middle-aged woman who loves The Eagles might like a vinyl record of "Hotel California" or "The Long Run." Many music fans enjoy the unique sound and experience of vinyl records.

Pair the record with a high-quality record player for a complete gift. The vinyl record and record player should fit within your $200 budget for an Eagles fan.

19. Explaining complex concepts

I entered: Explain quantum physics to me as if I were 10 years old.

Quantum physics is a branch of science that studies how tiny particles, like atoms and subatomic particles, behave. These particles are so small that they don’t always follow the same rules as the things we can see and touch every day.

For example, sometimes they can be in two places at the same time, or they can be friends with each other even if they are far apart. This might sound strange, but it’s what scientists have learned from studying these tiny particles. Quantum physics helps us understand how the world works at a very small scale, and it can also help us make new technologies, like computers that are much faster than the ones we have today.

20. AP-style editing

Blogs, journals, and books follow AP Style. I asked ChatGPT to "edit using AP Style" and it did a great job.

And then... "Write a catchy headline for a blog post about 16 ChatGPT uses"

Here are some possible blog post titles for 20 creative ChatGPT uses:

“20 Fun and Creative Ways to Use ChatGPT: From Games to Quizzes and More”

“The Ultimate Guide to Using ChatGPT for Entertainment and Creativity”

“20 Reasons to Use ChatGPT: How it Can Enhance Your Gaming, Storytelling, and More”

20 Entertaining Uses for ChatGPT You Never Knew Were Possible”

“Unleash Your Imagination with ChatGPT: 16 Creative and Fun Ways to Use it”

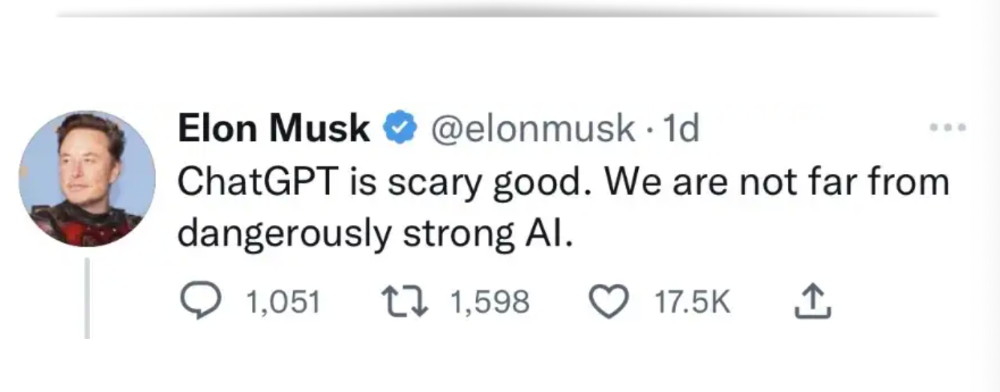

In a previous post, I said ChatGPT will change marketing careers forever. I have never made a statement like that in my life. Even in the early days of the internet, people were cautious.

This technology was just released two weeks ago, and over a million people are already using it. This is the fastest technology adoption in history.

Today's post offers inventive and entertaining ideas, but it's just the beginning. ChatGPT writes code, music, and papers.

You might also like

Michael Le

3 years ago

Union LA x Air Jordan 2 “Future Is Now” PREVIEW

With the help of Virgil Abloh and Union LA‘s Chris Gibbs, it's now clear that Jordan Brand intended to bring the Air Jordan 2 back in 2022.

The “Future Is Now” collection includes two colorways of MJ's second signature as well as an extensive range of apparel and accessories.

“We wanted to juxtapose what some futuristic gear might look like after being worn and patina'd,”

Union stated on the collaboration's landing page.

“You often see people's future visions that are crisp and sterile. We thought it would be cool to wear it in and make it organic...”

The classic co-branding appears on short-sleeve tees, hoodies, and sweat shorts/sweat pants, all lightly distressed at the hems and seams.

Also, a filtered black-and-white photo of MJ graces the adjacent long sleeves, labels stitch into the socks, and the Jumpman logo adorns the four caps.

Liner jackets and flight pants will also be available, adding reimagined militaria to a civilian ensemble.

The Union LA x Air Jordan 2 (Grey Fog and Rattan) shares many of the same beats. Vintage suedes show age, while perforations and detailing reimagine Bruce Kilgore's design for the future.

The “UN/LA” tag across the modified eye stays, the leather patch across the tongue, and the label that wraps over the lateral side of the collar complete the look.

The footwear will also include a Crater Slide in the “Grey Fog” color scheme.

BUYING

On 4/9 and 4/10 from 9am-3pm, Union LA will be giving away a pair of Air Jordan 2s at their La Brea storefront (110 S. LA BREA AVE. LA, CA 90036). The raffle is only open to LA County residents with a valid CA ID. You must enter by 11:59pm on 4/10 to win. Winners will be notified via email.

Rachel Greenberg

3 years ago

The Unsettling Fact VC-Backed Entrepreneurs Don't Want You to Know

What they'll do is scarier.

My acquaintance recently joined a VC-funded startup. Money, equity, and upside possibilities were nice, but he had a nagging dread.

They just secured a $40M round and are hiring like crazy to prepare for their IPO in two years. All signals pointed to this startup's (a B2B IT business in a stable industry) success, and its equity-holding workers wouldn't pass that up.

Five months after starting the work, my friend struggled with leaving. We might overlook the awful culture and long hours at the proper price. This price plus the company's fate and survival abilities sent my friend departing in an unpleasant unplanned resignation before jumping on yet another sinking ship.

This affects founders. This affects VC-backed companies (and all businesses). This affects anyone starting, buying, or running a business.

Here's the under-the-table approach that's draining VC capital, leaving staff terrified (or jobless), founders rattled, and investors upset. How to recognize, solve, and avoid it

The unsettling reality behind door #1

You can't raise money off just your looks, right? If "looks" means your founding team's expertise, then maybe. In my friend's case, the founding team's strong qualifications and track records won over investors before talking figures.

They're hardly the only startup to raise money without a profitable customer acquisition strategy. Another firm raised money for an expensive sleep product because it's eco-friendly. They were off to the races with a few keywords and key players.

Both companies, along with numerous others, elected to invest on product development first. Company A employed all the tech, then courted half their market (they’re a tech marketplace that connects two parties). Company B spent millions on R&D to create a palatable product, then flooded the world with marketing.

My friend is on Company B's financial team, and he's seen where they've gone wrong. It's terrible.

Company A (tech market): Growing? Not quite. To achieve the ambitious expansion they (and their investors) demand, they've poured much of their little capital into salespeople: Cold-calling commission and salary salesmen. Is it working? Considering attrition and companies' dwindling capital, I don't think so.

Company B (green sleep) has been hiring, digital marketing, and opening new stores like crazy. Growing expenses should result in growing revenues and a favorable return on investment; if you grow too rapidly, you may neglect to check that ROI.

Once Company A cut headcount and Company B declared “going concerned”, my friend realized both startups had the same ailment and didn't recognize it.

I shouldn't have to ask a friend to verify a company's cash reserves and profitability to spot a financial problem. It happened anyhow.

The frightening part isn't that investors were willing to invest millions without product-market fit, CAC, or LTV estimates. That's alarming, but not as scary as the fact that startups aren't understanding the problem until VC rounds have dried up.

When they question consultants if their company will be around in 6 months. It’s a red flag. How will they stretch $20M through a 2-year recession with a $3M/month burn rate and no profitability? Alarms go off.

Who's in danger?

In a word, everyone who raised money without a profitable client acquisition strategy or enough resources to ride out dry spells.

Money mismanagement and poor priorities affect every industry (like sinking all your capital into your product, team, or tech, at the expense of probing what customer acquisition really takes and looks like).

This isn't about tech, real estate, or recession-proof luxury products. Fast, cheap, easy money flows into flashy-looking teams with buzzwords, trending industries, and attractive credentials.

If these companies can't show progress or get a profitable CAC, they can't raise more money. They die if they can't raise more money (or slash headcount and find shoestring budget solutions until they solve the real problem).

The kiss of death (and how to avoid it)

If you're running a startup and think raising VC is the answer, pause and evaluate. Do you need the money now?

I'm not saying VC is terrible or has no role. Founders have used it as a Band-Aid for larger, pervasive problems. Venture cash isn't a crutch for recruiting consumers profitably; it's rocket fuel to get you what and who you need.

Pay-to-play isn't a way to throw money at the wall and hope for a return. Pay-to-play works until you run out of money, and if you haven't mastered client acquisition, your cash will diminish quickly.

How can you avoid this bottomless pit? Tips:

Understand your burn rate

Keep an eye on your growth or profitability.

Analyze each and every marketing channel and initiative.

Make lucrative customer acquisition strategies and satisfied customers your top two priorities. not brand-new products. not stellar hires. avoid the fundraising rollercoaster to save time. If you succeed in these two tasks, investors will approach you with their thirsty offers rather than the other way around, and your cash reserves won't diminish as a result.

Not as much as your grandfather

My family friend always justified expensive, impractical expenditures by saying it was only monopoly money. In business, startups, and especially with money from investors expecting a return, that's not true.

More founders could understand that there isn't always another round if they viewed VC money as their own limited pool. When the well runs dry, you must refill it or save the day.

Venture financing isn't your grandpa's money. A discerning investor has entrusted you with dry powder in the hope that you'll use it wisely, strategically, and thoughtfully. Use it well.

Joe Procopio

3 years ago

Provide a product roadmap that can withstand startup velocities

This is how to build a car while driving.

Building a high-growth startup is compared to building a car while it's speeding down the highway.

How to plan without going crazy? Or, without losing team, board, and investor buy-in?

I just delivered our company's product roadmap for the rest of the year. Complete. Thorough. Page-long. I'm optimistic about its chances of surviving as everything around us changes, from internal priorities to the global economy.

It's tricky. This isn't the first time I've created a startup roadmap. I didn't invent a document. It took time to deliver a document that will be relevant for months.

Goals matter.

Although they never change, goals are rarely understood.

This is the third in a series about a startup's unique roadmapping needs. Velocity is the intensity at which a startup must produce to survive.

A high-growth startup moves at breakneck speed, which I alluded to when I said priorities and economic factors can change daily or weekly.

At that speed, a startup's roadmap must be flexible, bend but not break, and be brief and to the point. I can't tell you how many startups and large companies develop a product roadmap every quarter and then tuck it away.

Big, wealthy companies can do this. It's suicide for a startup.

The drawer thing happens because startup product roadmaps are often valid for a short time. The roadmap is a random list of features prioritized by different company factions and unrelated to company goals.

It's not because the goals changed that a roadmap is shelved or ignored. Because the company's goals were never communicated or documented in the context of its product.

In the previous post, I discussed how to turn company goals into a product roadmap. In this post, I'll show you how to make a one-page startup roadmap.

In a future post, I'll show you how to follow this roadmap. This roadmap helps you track company goals, something a roadmap must do.

Be vague for growth, but direct for execution.

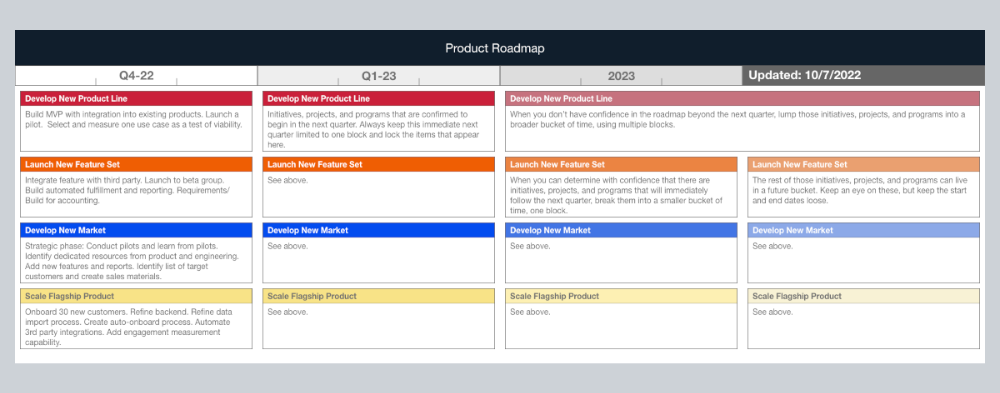

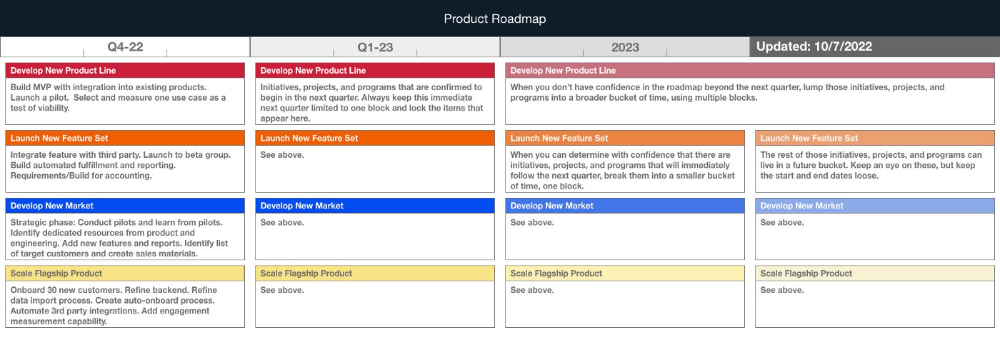

Here's my plan. The real one has more entries and more content in each.

Let's discuss smaller boxes.

Product developers and engineers know that the further out they predict, the more wrong they'll be. When developing the product roadmap, this rule is ignored. Then it bites us three, six, or nine months later when we haven't even started.

Why do we put everything in a product roadmap like a project plan?

Yes, I know. We use it when the product roadmap isn't goal-based.

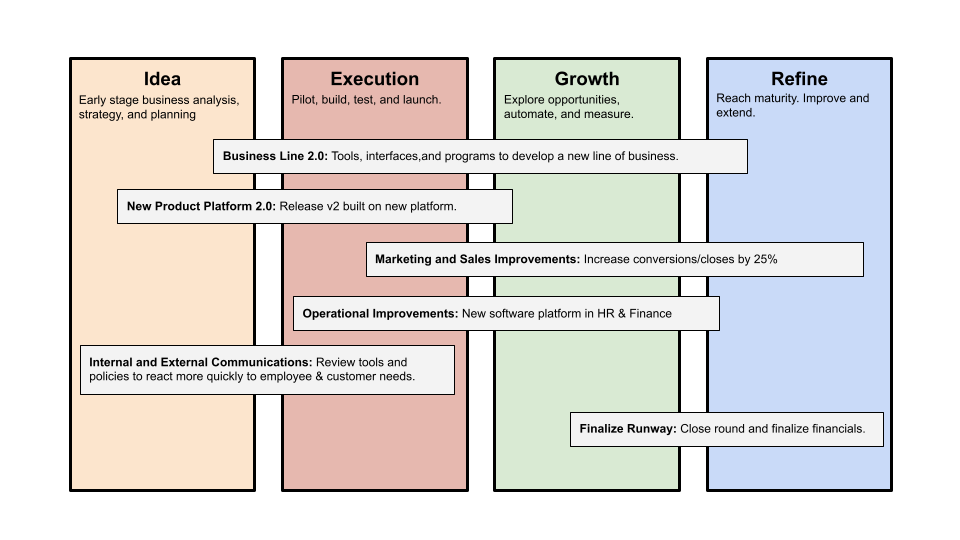

A goal-based roadmap begins with a document that outlines each goal's idea, execution, growth, and refinement.

Once the goals are broken down into epics, initiatives, projects, and programs, only the idea and execution phases should be modeled. Any goal growth or refinement items should be vague and loosely mapped.

Why? First, any idea or execution-phase goal will result in growth initiatives that are unimaginable today. Second, internal priorities and external factors will change, but the goals won't. Locking items into calendar slots reduces flexibility and forces deviation from the single source of truth.

No soothsayers. Predicting the future is pointless; just prepare.

A map is useless if you don't know where you're going.

As we speed down the road, the car and the road will change. Goals define the destination.

This quarter and next quarter's roadmap should be set. After that, you should track destination milestones, not how to get there.

When you do that, even the most critical investors will understand the roadmap and buy in. When you track progress at the end of the quarter and revise your roadmap, the destination won't change.