More on Technology

Gajus Kuizinas

3 years ago

How a few lines of code were able to eliminate a few million queries from the database

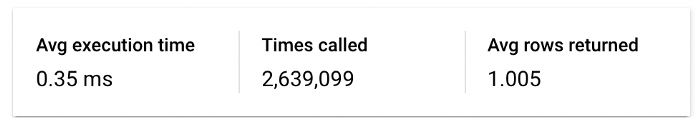

I was inserting tens of millions of records per hour when I first published the Slonik PostgreSQL client for Node.js. The data being inserted was usually flat, making it straightforward to use the INSERT INTO ... SELECT * FROM unnest() pattern. I advocated the unnest approach for inserting rows in batches (that was part I).

However, today I’ve found a better way: jsonb_to_recordset.

jsonb_to_recordset expands the top-level JSON array of objects to a set of rows having the composite type defined by an AS clause.

jsonb_to_recordset allows us to query and insert records from arbitrary JSON, much as unnest does for arrays. Because we pass JSON to PostgreSQL rather than one array per column, the input format is more expressive and powerful.

    SELECT *
    FROM jsonb_to_recordset('[{"name":"John","tags":["foo","bar"]},{"name":"Jane","tags":["baz"]}]')
    AS t1(name text, tags text[]);

     name |   tags
    ------+-----------
     John | {foo,bar}
     Jane | {baz}
    (2 rows)

Let’s demonstrate how you would use it to insert data.

Inserting data using jsonb_to_recordset

Say you need to insert a list of people with attributes into the database.

    const persons = [
      {
        name: 'John',
        tags: ['foo', 'bar']
      },
      {
        name: 'Jane',
        tags: ['baz']
      }
    ];

You may be tempted to traverse the array and insert each record separately, e.g.

    for (const person of persons) {
      await pool.query(sql`
        INSERT INTO person (name, tags)
        VALUES (
          ${person.name},
          ${sql.array(person.tags, 'text')}
        )
      `);
    }

This is easier to read and understand when you are working with a few records. However, if you are like me and troubleshoot 2M+ insert queries per day, batching inserts may be beneficial.

That is what prompted the search for better alternatives.

Inserting using unnest pattern might look like this:

    await pool.query(sql`
      INSERT INTO public.person (name, tags)
      SELECT t1.name, t1.tags::text[]
      FROM unnest(
        ${sql.array(['John', 'Jane'], 'text')},
        ${sql.array(['{foo,bar}', '{baz}'], 'text')}
      ) AS t1(name, tags);
    `);

This requires converting each array into a PostgreSQL array string and passing it as a text argument, which is unsightly. Iterating over the records to build a separate array for each column is equally unattractive.
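To make that overhead concrete, here is a minimal sketch of the reshaping the unnest approach needs before the query can run. The helper names (quoteArrayElement, toArrayLiteral, toColumns) are hypothetical and not part of Slonik, and the sketch assumes every value is a non-null string.

```javascript
// Hypothetical helper (not part of Slonik): quote one value for a
// PostgreSQL array literal, escaping backslashes and double quotes.
// Assumes the value is a non-null string.
const quoteArrayElement = (value) => {
  return '"' + String(value).replace(/\\/g, '\\\\').replace(/"/g, '\\"') + '"';
};

// Hypothetical helper: ['foo', 'bar'] becomes the text '{"foo","bar"}'.
const toArrayLiteral = (values) => {
  return '{' + values.map(quoteArrayElement).join(',') + '}';
};

// Pivot row objects into one array per column, which is the shape
// that unnest() expects as its arguments.
const toColumns = (rows) => {
  return {
    names: rows.map((row) => row.name),
    tags: rows.map((row) => toArrayLiteral(row.tags)),
  };
};

const persons = [
  { name: 'John', tags: ['foo', 'bar'] },
  { name: 'Jane', tags: ['baz'] },
];

const columns = toColumns(persons);

console.log(columns.names); // [ 'John', 'Jane' ]
console.log(columns.tags);  // [ '{"foo","bar"}', '{"baz"}' ]
```

Each of the two resulting arrays would then be passed to its own sql.array(..., 'text') call. Every extra column means another pivoted array, and every nested value means more escaping rules to get right.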

However, with jsonb_to_recordset, we can:

    await pool.query(sql`
      INSERT INTO person (name, tags)
      SELECT *
      FROM jsonb_to_recordset(${sql.jsonb(persons)}) AS t(name text, tags text[])
    `);

In contrast to the unnest approach, using jsonb_to_recordset we can easily insert complex nested data structures, and we can pass the original JSON document to the query without needing to manipulate it.

In terms of performance, the two approaches are also exactly the same. As such, my current recommendation is to prefer jsonb_to_recordset whenever inserting lots of rows or nested data structures.

Tom Smykowski

3 years ago

CSS Scroll-linked Animations Will Transform The Web's User Experience

We may never tap again in ten years.

I discussed styling websites and web apps on smartwatches in my earlier article on W3C standardization.

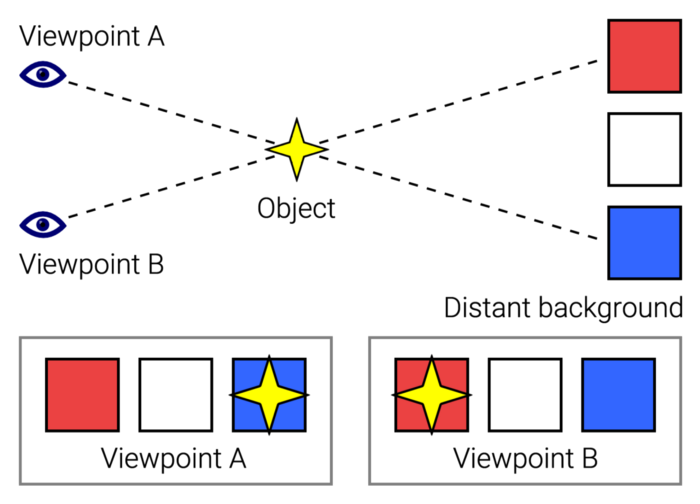

The Parallax Chronicles

Section containing examples and flying objects

Another intriguing Working Draft I found applies to all devices, including smartphones.

These pages may have something intriguing. Take your time. Return after scrolling:

What connects these three pages?

JustinWick at English Wikipedia • CC-BY-SA-3.0

Scroll-linked animation, commonly called parallax, is the effect.

WordPress theme developers' quick setup and low-code tools made the effect popular around 2014.

Parallax: Why Designers Love It

The chapter that your designer shouldn't read

Ten years ago, playing a video online required searching, scrolling, and clicking. Four years ago, it took only scrolling and clicking.

Some video sites let you swipe to autoplay the next video from an endless list.

UI designers create scrollable pages and apps to accommodate the behavioral change.

Web interactivity used to be mouse-based. Clicking a button opened a help drawer, and hovering animated it.

However, a large page with more material requires fewer buttons and less interactiveness.

Designers choose scroll-based effects. Designers and frontend developers can fight the trend, but should prepare for the worst.

How to Create Parallax

The component that you might want to show the designer

JavaScript-based effects track page scrolling and apply animations.

JavaScript libraries like lax.js simplify it.

Using it still requires a lot of manual mathematical and physical calculation.
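The arithmetic behind a basic scroll-driven effect can be sketched in a few lines. This is an illustration under stated assumptions, not how lax.js works internally: the function names, the .parallax-layer class, and the 0.5 speed factor are all hypothetical.

```javascript
// Hypothetical helper: how far (in px) to shift a layer for a given scroll
// position. speed = 1 keeps the layer moving with the page; 0 < speed < 1
// makes it lag behind, so it appears farther away.
const parallaxOffset = (scrollY, speed) => {
  return scrollY * (1 - speed);
};

// Hypothetical helper: scroll position as a fraction between 0 and 1.
const scrollProgress = (scrollY, maxScroll) => {
  if (maxScroll <= 0) {
    return 0;
  }
  return Math.min(1, Math.max(0, scrollY / maxScroll));
};

// Browser-only wiring; skipped where there is no DOM (for example in Node.js).
if (typeof window !== 'undefined') {
  const layer = document.querySelector('.parallax-layer'); // hypothetical class
  window.addEventListener('scroll', () => {
    if (layer) {
      layer.style.transform = `translateY(${parallaxOffset(window.scrollY, 0.5)}px)`;
    }
  }, { passive: true });
}

console.log(parallaxOffset(400, 0.5));  // 200
console.log(scrollProgress(250, 1000)); // 0.25
```

Even this toy version has to be repeated and tuned per layer and per screen size, which is where the manual effort comes from.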

Your asset library must also be prepared to display your website on a laptop, television, smartphone, tablet, foldable smartphone, and possibly even a microwave.

Overall, scroll-based animations deserve a better solution.

CSS Scroll-linked Animations

CSS makes sense since it's presentational. A Working Draft has been laying the groundwork for the next generation of interactiveness.

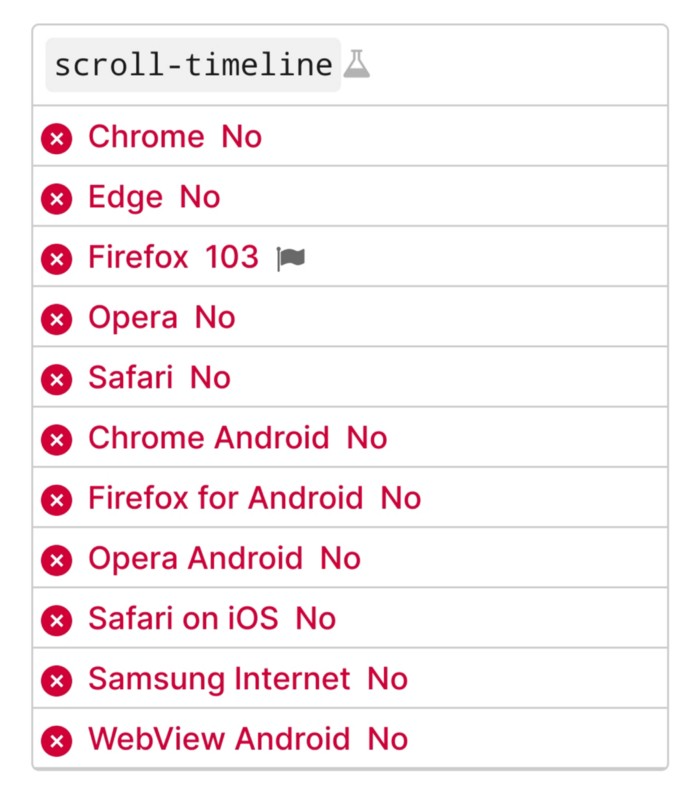

The new CSS property scroll-timeline powers the feature, which MDN describes well.

Before testing it, you should realize it is poorly supported:

Firefox 103 currently supports it.

There is also a polyfill, with some demo examples to explore.
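For experimenting from script, the same draft also defines a ScrollTimeline interface that can be handed to the Web Animations API. The following is a minimal sketch, assuming a browser (or polyfill) that exposes ScrollTimeline with the source and axis options. The .hero selector and the keyframe values are hypothetical.

```javascript
// Keyframes for a simple fade-and-rise effect (values chosen for illustration).
const fadeInKeyframes = () => [
  { opacity: 0, transform: 'translateY(40px)' },
  { opacity: 1, transform: 'translateY(0)' },
];

// Tie the keyframes to scroll progress instead of wall-clock time.
// Returns the Animation, or null where ScrollTimeline is unavailable.
const animateOnScroll = (element) => {
  if (typeof ScrollTimeline === 'undefined') {
    return null; // unsupported environment: leave the element static
  }
  const timeline = new ScrollTimeline({
    source: document.documentElement, // the scroll container driving the animation
    axis: 'block',
  });
  return element.animate(fadeInKeyframes(), { timeline, fill: 'both' });
};

// Browser-only wiring; skipped where there is no DOM.
if (typeof document !== 'undefined') {
  const hero = document.querySelector('.hero'); // hypothetical selector
  if (hero) {
    animateOnScroll(hero);
  }
}
```

The feature check means the page simply stays static where the API is missing, which matters given the limited support.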

Summary

Web design has come a long way. It started with pages that had static background images and scrollable text. With a CSS API for scroll-based animation, artists and designers may completely revamp our web experience.

It's a promising frontier. This post may attract a future scrollable web designer.

P.S. I have created flashcards for HTML, JavaScript, etc. Check them out!

Christianlauer

3 years ago

Looker Studio Pro is now generally available, according to Google.

Great News about the new Google Business Intelligence Solution

Google has renamed Data Studio to Looker Studio and Looker Studio Pro.

Now, Google releases Looker Studio Pro. Similar to the move from Data Studio to Looker Studio, Looker Studio Pro is basically what Looker was previously, but both solutions will merge. Google says the Pro edition will acquire new enterprise management features, team collaboration capabilities, and SLAs.

![Dashboard Example in Looker Studio Pro — Image Source: Google[2]](https://storage.googleapis.com/int3grity/posts/m9yb4IqJCm7D/images/ZuJudlWT6GUeTKKNVduA5)

Beyond Google's announcements and sales strategy, the new features include:

Looker Studio assets can now have organizational ownership. Customers can link Looker Studio to a Google Cloud project and migrate existing assets once. This provides:

Your users' created Looker Studio assets are all kept in a Google Cloud project.

When the users who own assets leave your organization, the assets won't be removed.

Using IAM, you can grant project-level permissions on every Looker Studio asset in your organization.

Other Cloud services can access Looker Studio assets that are owned by a Google Cloud project.

Looker Studio Pro clients may now manage report and data source access at scale using team workspaces.

It is interesting that Google is announcing these features for the Pro version. Both products will likely converge, but in the future Google may release many features only in the premium version. Microsoft already does this with the free and premium variants of Power BI.

Sources and Further Readings

Google, Release Notes (2022)

Google, Looker (2022)

You might also like

Suzie Glassman

3 years ago

How I Stay Fit Despite Eating Fast Food and Drinking Alcohol

Here's me. Perfectionism is unnecessary.

This post isn't for people who gag at the prospect of eating french fries. I've been ridiculed for stating you can lose weight eating carbs and six-pack abs aren't good.

My family eats frozen processed meals and fast food most weeks (sometimes more). Clean eaters may think I'm unqualified to give fitness advice. I get it.

Hear me out, though. I’m a 44-year-old raising two busy kids with a weekly-traveling husband. Tutoring, dance, and guitar classes fill weeknights. I'm also juggling my job and freelancing.

I'm as worried and tired as my clients. I wish I ate only kale smoothies and salads. I can’t. Despite my mistakes, I'm fit. I won't promise you something just because it worked for me. But here’s a look at how I manage.

What I largely get right about eating

I have a flexible diet and track my daily intake. I count protein, fat, and carbs. Only on vacation or exceptional occasions do I not track.

My protein goal is 1 g per lb. I consume a lot of chicken breasts, eggs, turkey, and lean ground beef. I also occasionally drink protein shakes.

I eat 220–240 grams of carbs daily. My carb count depends on training volume and goals. I'm trying to lose weight slowly. If I want to lose weight faster, I cut carbs to 150-180.

My carbs include white rice, Dave's Killer Bread, fruit, pasta, and veggies. I don't eat enough vegetables, so I take Athletic Greens. Also, V8.

Fat grams over 50 help me control my hormones. Recently, I've reached 70-80 grams. I cook with olive oil. I eat dark chocolate daily. Eggs, butter, milk, and cheese contribute the rest.

Those frozen meals? What can I say? Stouffer’s lasagna is sometimes needed. I order the healthiest fast food I can find (although I can never bring myself to order the salad). That's a chicken sandwich or a kid's hamburger. I rarely order fries. I eat slowly and savor each bite to feel full.

Potato chips and sugary cereals are in the pantry, but I'm not tempted. My kids eat them because I'd rather teach them moderation than total avoidance. If I eat them, I only eat one portion.

If you're not hungry and eating enough protein and fat, you won't want to eat everything in sight.

I drink once or twice a week. As a result, I rarely overdo it.

Food tracking is tedious and frustrating for many. Taking breaks and using estimates when eating out help. Not perfect, but realistic.

I practice an extended fast to improve metabolic flexibility

Metabolic flexibility is the ability to switch between fuel sources (fat and carbs) based on activity intensity and time since eating. At rest or during low to moderate exertion, your body burns fat. Your body burns carbs after eating and during intense exercise.

Our metabolic flexibility can be hampered by lack of exercise, overeating, and stress. Our bodies become lousy fat burners, making weight loss difficult.

Once a week, I fast from one dinner to the next (usually around 24 hours). Extended fasting teaches my body to burn fat. It also gives me one low-calorie day a week (I break the fast with a normal-sized dinner).

Fasting day helps me maintain my weight on weekends, when I typically overeat and drink.

Try an extended fast slowly. Delay breakfast by two hours. Next week, add two hours, etc. It takes practice to go that long without biting off your arm. I also suggest consulting your doctor.

I stay active.

I've always been active. As a child, I danced many nights a week, was on the high school dance team, and ran marathons in my 20s.

Often, I feel driven by an internal engine. Working from home makes it easy to exercise. If that’s not you, I get it. Everyone can benefit from raising their baseline.

After taking the kids to school, I walk two miles around the neighborhood. When I need to think, I switch off podcasts. First thing in the morning, I go for a walk.

I lift weights Monday, Wednesday, and Friday. 45 minutes is typical. I run 45-90 minutes on Tuesday and Thursday. I'm slow but reliable. On Saturdays and Sundays, I walk and add a short spin class if I'm not too tired.

I almost never forgo sleep.

I rarely stay up past 10 p.m., much to my night-owl husband's dismay. My 7-8-hour nights help me recover from workouts and handle stress. Without it, I'm grumpy.

I suppose sleep duration matters more than bedtime. Some people just can't fall asleep early. Internal clock and genetics determine sleep and wake hours.

Prioritize sleep.

Last thoughts

Fitness and diet advice is often useless. Some of the advice is inaccurate, dangerous, or difficult to follow if you have a life. I want to throw a shoe at my screen when I see headlines promising to speed up my metabolism or help me lose fat.

I studied exercise physiology for years. No shortcuts exist. No medications or cleanses reset metabolism. I play the hand I'm dealt. I realize that just because something works for me, it won't necessarily work for you.

If I wanted 15% body fat and ripped abs, I'd have to be stricter. I occasionally think I’d like to get there. But then I remember I’m happy with my life. I like fast food and beer. Pizza and margaritas are favorites (not every day).

You can get it mostly right and live a healthy life.

Ben Chino

3 years ago

100-day SaaS buildout.

We're opening up Maki through a series of Medium posts. We'll describe what Maki is building and how. We'll explain how we built a SaaS in 100 days. This isn't a step-by-step guide to starting a business, but a product philosophy to help you build quickly.

Focus on end-users.

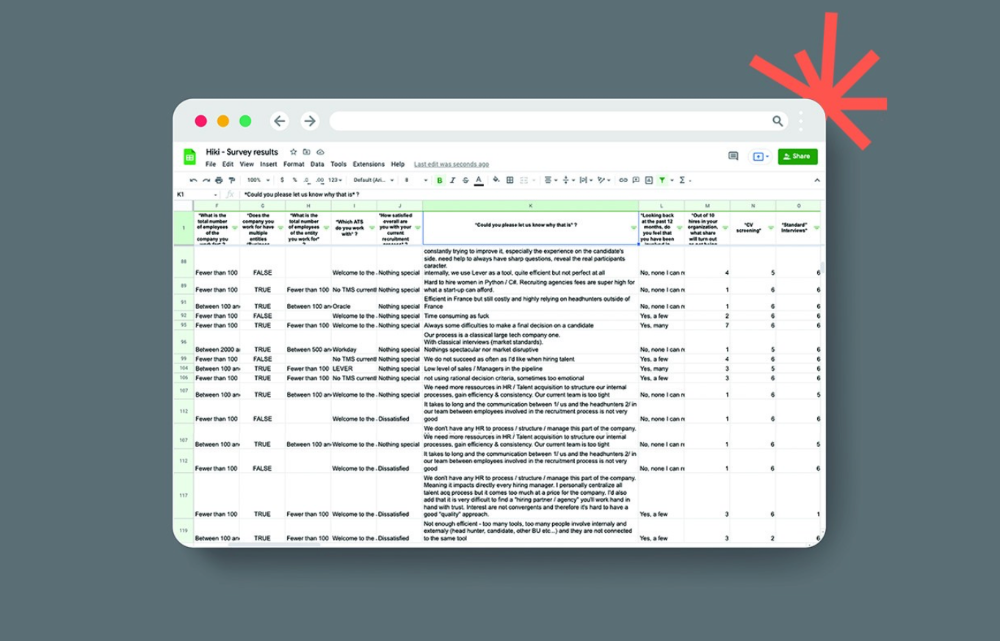

This may seem obvious, but it's important to talk to users first. When we started thinking about Maki, we interviewed 100 HR directors from SMBs, Next40 scale-ups, and major enterprises to understand their concerns. We initially thought about the future of employment, but most of their worries centered on recruitment: "We don't have a clear recruiting process", "it's time-consuming", "we recruit clones", "we don't support diversity", and so on. And as hiring managers, we couldn't help but agree.

Co-create your product with your end-users.

We went to the drawing board, read as many books as possible (here, here, and here), and when we started getting a sense for a solution, we questioned 100 more operational HR specialists to corroborate the idea and get a feel for our potential answer. This confirmed our direction to help hire more objectively and efficiently.

Back to the drawing board, we designed our first flows and screens. We organized sessions with certain survey respondents to show them our early work and get comments. We got great input that helped us build Maki, and we met some consumers. Obsess about users and execute alongside them.

Don’t shoot for the moon, yet. Make pragmatic choices first.

Once we were convinced, we began building. To launch a SaaS in 100 days, we needed an operating principle that allowed us to accelerate while still providing a reliable, secure, scalable experience. We focused on adding value and outsourced everything else. Example:

Concentrate on adding value. Reuse existing bricks.

When determining which technology to use, we looked at our strengths and the future to see what would last: Node.js for the backend and React for the frontend, both with TypeScript. We thought this stack would scale well since it would attract more talent and the surrounding mature ecosystem would help us go quicker.

We looked for ways to bootstrap services while laying down strong foundations that might support millions of users. We built our backend services on NestJS so we could extend into microservices later. Hasura, a GraphQL API engine, automatically exposes our Postgres data through a GraphQL layer. MUI's ready-to-use components powered our design system. We used well-maintained open-source projects to speed up certain tasks.

We outsourced important components of our platform (Auth0 for authentication, Stripe for billing, SendGrid for notifications) because, let's face it, we couldn't do better. We chose to host our complete infrastructure (SQL, Cloud Run, Logs, Monitoring) on GCP to avoid splitting our work across numerous providers.

Focus on your business, use existing bricks for the rest. For the curious, we'll shortly publish articles detailing each stage.

Most importantly, empower people and step back.

We couldn't have done this without the incredible people who have supported us from the start. Since Powership is one of our key values, we provided our staff the power to make autonomous decisions from day one. Because we believe our firm is its people, we hired smart builders and let them build.

Nicolas left Spendesk to create scalable interfaces using react-router, react-queries, and MUI. JD joined from Swile and chose Hasura as our GraphQL engine. Jérôme chose NestJS to build our backend services. Since then, Justin, Ben, Anas, Yann, Benoit, and others have followed suit.

If you consider your team a collective brain, you should let them make decisions instead of telling them what to do. You'll make mistakes, but you'll go faster and learn faster overall.

Invest in great talent and develop a strong culture from the start. That's how you build a SaaS in 100 days.

Robert Kim

4 years ago

Crypto Legislation Might Progress Beyond Talk in 2022

Financial regulators have for years attempted to apply existing laws to the multitude of issues created by digital assets. In 2021, leading federal regulators and members of Congress have begun to call for legislation to address these issues. As a result, 2022 may be the year when federal legislation finally addresses digital asset issues that have been growing since the mining of the first Bitcoin block in 2009.

Digital Asset Regulation in the Absence of Legislation

So far, Congress has left the task of addressing issues created by digital assets to regulatory agencies. Although a Congressional Blockchain Caucus formed in 2016, House and Senate members introduced few bills addressing digital assets until 2018. As of October 2021, Congress has not amended federal laws on financial regulation, which were last significantly revised by the Dodd-Frank Act in 2010, to address digital asset issues.

In the absence of legislation, issues that do not fit well into existing statutes have created problems. An example is the legal status of digital assets, which can be considered to be either securities or commodities, and can even shift from one to the other over time. Years after the SEC’s 2017 report applying the definition of a security to digital tokens, the SEC and the CFTC have yet to clarify the distinction between securities and commodities for the thousands of digital assets in existence.

SEC Chair Gary Gensler has called for Congress to act, stating in August, “We need additional Congressional authorities to prevent transactions, products, and platforms from falling between regulatory cracks.” Gensler has reached out to Sen. Elizabeth Warren (D-Ma.), who has expressed her own concerns about the need for legislation.

Legislation on Digital Assets in 2021

While regulators and members of Congress talked about the need for legislation, and the debate over cryptocurrency tax reporting in the 2021 infrastructure bill generated headlines, House and Senate bills proposing specific solutions to various issues quietly started to emerge.

Digital Token Sales

Several House bills attempt to address securities law barriers to digital token sales—some of them by building on ideas proposed by regulators in past years.

Exclusion from the definition of a security. Congressional Blockchain Caucus members have been introducing bills to exclude digital tokens from the definition of a security since 2018, and they have revived those bills in 2021. They include the Token Taxonomy Act of 2021 (H.R. 1628), successor to identically named bills in 2018 and 2019, and the Securities Clarity Act (H.R. 4451), successor to a 2020 namesake.

Safe harbor. SEC Commissioner Hester Peirce proposed a regulatory safe harbor for token sales in 2020, and two 2021 bills have proposed statutory safe harbors. Rep. Patrick McHenry (R-N.C.), Republican leader of the House Financial Services Committee, introduced a Clarity for Digital Tokens Act of 2021 (H.R. 5496) that would amend the Securities Act to create a safe harbor providing a grace period of exemption from Securities Act registration requirements. The Digital Asset Market Structure and Investor Protection Act (H.R. 4741) from Rep. Don Beyer (D-Va.) would amend the Securities Exchange Act to define a new type of security—a “digital asset security”—and add issuers of digital asset securities to an existing provision for delayed registration of securities.

Stablecoins

Stablecoins—digital currencies linked to the value of the U.S. dollar or other fiat currencies—have not yet been the subject of regulatory action, although Treasury Secretary Janet Yellen and Federal Reserve Chair Jerome Powell have each underscored the need to create a regulatory framework for them. The Beyer bill proposes to create a regulatory regime for stablecoins by amending Title 31 of the U.S. Code. Treasury Department approval would be required for any “digital asset fiat-based stablecoin” to be issued or used, under an application process to be established by Treasury in consultation with the Federal Reserve, the SEC, and the CFTC.

Serious consideration for any of these proposals in the current session of Congress may be unlikely. A spate of autumn bills on crypto ransom payments (S. 2666, S. 2923, S. 2926, H.R. 5501) shows that Congress is more inclined to pay attention first to issues that are more spectacular and less arcane. Moreover, the arcaneness of digital asset regulatory issues is likely only to increase further, now that major industry players such as Coinbase and Andreessen Horowitz are starting to roll out their own regulatory proposals.

Digital Dollar vs. Digital Yuan

Impetus to pass legislation on another type of digital asset, a central bank digital currency (CBDC), may come from a different source: rivalry with China.

China established itself as a world leader in developing a CBDC with a pilot project launched in 2020, and in 2021, the People’s Bank of China announced that its CBDC will be used at the Beijing Winter Olympics in February 2022. Republican Senators responded by calling for the U.S. Olympic Committee to forbid use of China’s CBDC by U.S. athletes in Beijing and introducing a bill (S. 2543) to require a study of its national security implications.

The Beijing Olympics could motivate a legislative mandate to accelerate implementation of a U.S. digital dollar, which the Federal Reserve has been in the process of considering in 2021. Antecedents to such legislation already exist. A House bill sponsored by 46 Republicans (H.R. 4792) has a provision that would require the Treasury Department to assess China’s CBDC project and report on the status of Federal Reserve work on a CBDC, and the Beyer bill includes a provision amending the Federal Reserve Act to authorize issuing a digital dollar.

Both parties are likely to support creating a digital dollar. The Covid-19 pandemic made a digital dollar for delivery of relief payments a popular idea in 2020, and House Democrats introduced bills with provisions for creating one in 2020 and 2021. Bipartisan support for a bill on a digital dollar, based on concerns both foreign and domestic in nature, could result.

International rivalry and bipartisan support may make the digital dollar a gateway issue for digital asset legislation in 2022. Legislative work on a digital dollar may open the door for considering further digital asset issues—including the regulatory issues that have been emerging for years—in 2022 and beyond.